In a multi-cloud environment, security logs are often scattered across different cloud platforms, making unified threat detection and incident response difficult. Security Center's Agentic SOC feature lets you centrally import and analyze security logs from Huawei Cloud products — including Web Application Firewall (WAF) and Cloud Firewall (CFW) — so you can manage security across all your cloud environments from one place.

This guide walks you through the following steps:

Send Huawei Cloud WAF or CFW logs to Log Tank Service (LTS).

Choose an import method: Kafka (DMS) or OBS.

Configure the data channel and import task.

Set up ingestion policies and threat detection rules to start analyzing logs.

How it works

Logs flow from Huawei Cloud to Security Center through the following stages:

Log aggregation: WAF and CFW logs are collected into Huawei Cloud's Log Tank Service (LTS).

Data export: LTS exports log data to Distributed Message Service (DMS) for Kafka or Object Storage Service (OBS), which act as relay points for cross-cloud transfer.

Cross-cloud import: Agentic SOC subscribes to and pulls log data from DMS for Kafka (using the Kafka protocol) or OBS (using the S3 protocol), then ingests it into a specified data source.

Ingestion and standardization: Inside Agentic SOC, an ingestion policy applies standardization rules to parse and normalize the raw logs before storing them in the data warehouse.

Supported log types

Agentic SOC supports importing the following log types from Huawei Cloud:

Web Application Firewall (WAF) alert logs

Cloud Firewall (CFW) alert logs

Step 1: Send logs to LTS

Before importing logs, send all security product logs from Huawei Cloud to LTS.

Web Application Firewall

For detailed instructions, see the Huawei Cloud documentation: Using LTS to Record WAF Logs.

Log in to the Web Application Firewall console. In the upper-left corner, select a region or project, then click Events in the left navigation pane.

On the Log Settings tab, click Connect to LTS and configure the following parameters:

| Parameter | Value |
|---|---|
| Log Types | WAF access logs and WAF attack logs |
| Log Group | Select the log group where you want to store the logs. Click Create Log Group to create a new one. |
| WAF Access Log Stream | If you selected WAF access logs, select a WAF access log stream. Click Create Log Stream to create a new one. |
| WAF Attack Log Stream | If you selected WAF attack logs, select a WAF attack log stream. Click Create Log Stream to create a new one. |

Important: The configuration takes about 10 minutes to take effect.

Cloud Firewall

For detailed instructions, see the Huawei Cloud documentation: Ingesting CFW Logs to LTS.

Create a log group and log stream.

Log in to the Cloud Log Service console. On the Log Ingestion page, click Create Log Group.

On the Create Log Group page, set Log Group Name and Log Retention Period (Days).

Note: Add the suffix -cfw to the log group name (for example, mylog-cfw) for easier identification.

After the log group is created, find it in the list and click Create Log Stream under the icon.

On the Create Log Stream page, set Log Stream Name and Log Storage Duration (Days).

Note: Use suffixes like -attack, -access, and -flow for attack event logs, access control logs, and traffic logs, respectively.

CFW supports three log stream types:

| Log type | Description |
|---|---|
| Attack logs | Records attack alerts, including event type, protection rule, action, 5-tuple, attack payload, and other details. |
| Access logs | Records traffic that matches ACL policies, including hit time, 5-tuple, response action, access control rule, and other details. |
| Traffic logs | Records all traffic passing through the Cloud Firewall, including start time, end time, 5-tuple, byte count, packet count, and other details. |

Set up LTS synchronization.

Log in to the Cloud Firewall console. In the upper-left corner, select the region and firewall instance, then choose Log Audit > Log Management in the left navigation pane.

On the Log Management page, click Configure LTS Synchronization. Set Log Group and Log Source to the log group and log stream you created.
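The naming suggestions above can be captured in a small helper so that every firewall instance gets consistently named LTS resources. This is only a sketch: the function name `cfw_lts_names` and the idea of deriving all names from one base string are assumptions made for illustration, not part of either console.

```python
# Suffixes suggested in this guide; the helper itself is hypothetical.
CFW_GROUP_SUFFIX = "-cfw"
CFW_STREAM_SUFFIXES = {
    "attack": "-attack",  # attack event logs
    "access": "-access",  # access control logs
    "flow": "-flow",      # traffic logs
}


def cfw_lts_names(base: str) -> dict:
    """Return one log group name and one log stream name per CFW log type."""
    if not base:
        raise ValueError("base name must not be empty")
    return {
        "log_group": base + CFW_GROUP_SUFFIX,
        "log_streams": {
            kind: base + suffix for kind, suffix in CFW_STREAM_SUFFIXES.items()
        },
    }


if __name__ == "__main__":
    names = cfw_lts_names("mylog")
    print(names["log_group"])
    for kind, stream in names["log_streams"].items():
        print(kind, stream)
```

Keeping the suffixes in one place makes it easier to match each log stream to the right transfer task later in Step 3.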

Step 2: Choose an import method

Two methods are available for importing Huawei Cloud LTS logs into Security Center. Choose based on your real-time requirements, cost constraints, and configuration complexity.

| Aspect | Kafka (DMS) | OBS |
|---|---|---|
| Real-time performance | Near-real-time (real-time transfer can be configured) | Minute-level latency |
| Configuration complexity | Higher. Requires configuring a Kafka instance, Elastic IP Addresses (EIPs), security groups, and more. | Lower. Only requires configuring a transfer task. |
| Cost (Huawei Cloud) | Kafka instance, EIP and traffic, Log Service | OBS storage, Log Service |
| Cost (Alibaba Cloud) | Agentic SOC log ingestion traffic | Agentic SOC log ingestion traffic |
| Best for | Scenarios requiring near-real-time log analysis, such as stream-based security computing or rapid alert response | Scenarios where real-time performance is not critical, focusing on cost-effectiveness, log archiving, or batch offline analysis |

Step 3: Configure the data import

Follow the instructions for your chosen import method.

Import data using Kafka (DMS)

Prepare the Kafka data channel on Huawei Cloud

Configure a Kafka instance

Create a Kafka instance.

Go to the Buy Kafka Instance page. On the Quick Config tab, complete the basic and network configurations, including instance specifications and a Virtual Private Cloud (VPC).

In the Access Mode area, select Public Network Access and configure the following parameters:

| Parameter | Value |
|---|---|
| Public Network Access | Select Ciphertext Access. |
| Public IP Addresses | Select an accessible Elastic IP Address (EIP). If you don't have enough EIPs, click Create Elastic IP to go to the EIP purchase page. For more information, see the Huawei Cloud documentation: Applying for an EIP. After purchase, click the icon next to Elastic IP Address and select the newly purchased EIPs from the drop-down list. |
| Kafka Security Protocol | SASL_SSL: uses SASL for authentication and SSL certificates for data encryption. SASL_PLAINTEXT: uses SASL for authentication and transmits data in plaintext for better performance. |
| SASL PLAIN Mechanism | If you set Kafka Security Protocol to SASL_PLAINTEXT, select SCRAM-SHA-512. |
| Username / Password | The credentials the client uses to connect to the Kafka instance. The username cannot be changed after encrypted access is enabled. |

Important: Purchase at least three EIPs. Save the username and password. You'll need them later to grant Security Center access to Kafka.

For more information, see the Huawei Cloud documentation: Buying a Kafka Instance.

Create a topic.

Go to the Huawei Cloud - Kafka Management page. In the upper-left corner, select the region where your Kafka instance is located.

In the left navigation pane, click Kafka Instances. Click the name of your target instance to open its details page, then click Topic Management.

Click Create Topic and configure the parameters. The default settings work for most use cases.

For more information, see the Huawei Cloud documentation: Topic Parameter Description.

Configure security group rules. After enabling public access, configure security group rules to allow connections to Kafka.

On the Kafka instance details page, click Overview in the left navigation pane. In the Network section, click the icon next to Security Group.

On the policy configuration page, go to the Inbound Rules tab, click Add Rule, and set the following:

| Field | Value |
|---|---|
| Policy | Allow |
| Type | IPv4 |
| Protocol | Custom TCP |
| Port | 9095 |
| Source | 0.0.0.0/0 |

Note the Kafka connection parameters. On the Kafka instance Overview page, record the Address (Public Network, Ciphertext), the enabled Security Protocol, and the SASL PLAIN Mechanism. You'll need these when connecting Security Center to Kafka.
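Before entering these values in Security Center, it can be useful to collect them in one validated structure and, optionally, test them with a Kafka client of your choice. The sketch below only assembles and checks the settings. The key names follow the keyword arguments used by the third-party kafka-python client (`bootstrap_servers`, `security_protocol`, `sasl_mechanism`, `sasl_plain_username`, `sasl_plain_password`); the function name, the set of accepted values, and the example addresses are assumptions for illustration.

```python
# Values named in this guide; treating them as the only valid options is an assumption.
SECURITY_PROTOCOLS = {"SASL_SSL", "SASL_PLAINTEXT"}
SASL_MECHANISMS = {"PLAIN", "SCRAM-SHA-512"}


def build_kafka_client_config(endpoints, security_protocol, sasl_mechanism,
                              username, password):
    """Validate the recorded connection parameters and return client settings."""
    if security_protocol not in SECURITY_PROTOCOLS:
        raise ValueError(f"unexpected security protocol: {security_protocol!r}")
    if sasl_mechanism not in SASL_MECHANISMS:
        raise ValueError(f"unexpected SASL mechanism: {sasl_mechanism!r}")

    servers = []
    for endpoint in endpoints:
        # Each public ciphertext address is expected in host:port form.
        host, sep, port = endpoint.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"endpoint must look like host:port, got {endpoint!r}")
        servers.append(f"{host}:{int(port)}")
    if not servers:
        raise ValueError("at least one endpoint is required")

    return {
        "bootstrap_servers": servers,
        "security_protocol": security_protocol,
        "sasl_mechanism": sasl_mechanism,
        "sasl_plain_username": username,
        "sasl_plain_password": password,
    }


if __name__ == "__main__":
    # 203.0.113.0/24 is a documentation-only range; replace with your EIPs.
    config = build_kafka_client_config(
        ["203.0.113.10:9095", "203.0.113.11:9095", "203.0.113.12:9095"],
        "SASL_SSL",
        "SCRAM-SHA-512",
        "example-user",
        "example-password",
    )
    print(config["bootstrap_servers"])
```

If kafka-python is installed, a dictionary like this can be passed as `KafkaConsumer("your-topic", **config)` to confirm that the instance is reachable from outside Huawei Cloud. With SASL_SSL you may also need to supply the instance's CA certificate to the client.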

Create a transfer task from LTS to Kafka

For detailed instructions, see the Huawei Cloud documentation: Transferring Logs to DMS.

Log in to the Log Service console. In the left navigation pane, click Log Transfer, then click Configure Log Transfer in the upper-right corner.

Set the following transfer parameters:

| Parameter | Value |
|---|---|
| Transfer Mode | Periodic transfer |
| Transfer Destination | DMS |
| Log Group Name / Log Stream Name | The log group and stream you configured in Step 1 (for example, WAF attack logs) |
| Kafka Instance | The Kafka instance you configured |
| Topic | The topic you created |
| Transfer Interval | Real-time |
| Format | Raw Log Format or JSON |

Configure the Kafka log import on Alibaba Cloud

Grant Security Center access to Kafka

Go to Security Center console > Agentic SOC > Integration Center. In the upper-left corner, select your asset region: Chinese Mainland or Outside Chinese Mainland.

On the Multi-cloud Configuration Management tab, select Multi-cloud Assets, click Grant Permission, and select IDC from the drop-down list. In the panel that appears, set the following:

| Parameter | Value |
|---|---|
| Vendor | Apache |
| Connection Type | Kafka |
| Endpoint | The IPv4 Encrypted Public Endpoint for Kafka you recorded from Huawei Cloud |
| Username / Password | The Kafka credentials you configured on Huawei Cloud |
| Communication Protocol | The security protocol you enabled on Huawei Cloud |
| SASL Authentication Mechanism | The SASL PLAIN Mechanism you configured on Huawei Cloud |

Under Configure synchronization policy, set AK Service Status Check to the interval at which Security Center checks the validity of the Huawei Cloud access key. Select Disable to turn off this check.

Create a data import task

Create a data source for the Huawei Cloud log data. Skip this step if you've already created one.

Go to Security Center console > Agentic SOC > Integration Center. In the upper-left corner, select your asset region.

On the Data Source tab, create a data source for the Huawei Cloud logs. For instructions, see Create a data source: Logs are not ingested into Simple Log Service (SLS).

| Parameter | Value |
|---|---|
| Source Data Source Type | Select User Log Service or Agentic SOC Dedicated Collection Channel. |
| Add Instances | Create a new Logstore to isolate the data. |

On the Data Import tab, click Add Data. In the panel that appears, set the following:

| Parameter | Value |
|---|---|
| Endpoint | The IPv4 Encrypted Public Endpoint for Kafka |
| Topics | The topic you created on Huawei Cloud |
| Value Type | See the mapping below |

Transfer format to value type mapping:

| Transfer format | Value type |
|---|---|
| JSON format | json |
| Raw Log Format | text |

Under Configure the destination data source, set the following:

Data Source Name: Select the data source you created.

Destination Logstore: Logstores under the selected data source are loaded automatically.

Click OK. Security Center begins pulling logs from Huawei Cloud automatically.
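The Value Type setting has to match the Format chosen in the LTS transfer task, because it decides how each Kafka record is interpreted. The sketch below restates the mapping from this section as a lookup and shows what the difference means for a single record. The function names and the sample payloads are hypothetical, and the decoding step only illustrates the idea; it is not how Agentic SOC is implemented.

```python
import json

# Mapping taken from this section: LTS transfer format -> import task value type.
VALUE_TYPE_BY_TRANSFER_FORMAT = {
    "json": "json",
    "json format": "json",
    "raw log format": "text",
}


def value_type_for(transfer_format: str) -> str:
    """Return the Value Type to select for a given LTS transfer format."""
    key = transfer_format.strip().lower()
    try:
        return VALUE_TYPE_BY_TRANSFER_FORMAT[key]
    except KeyError:
        raise ValueError(f"unknown transfer format: {transfer_format!r}") from None


def decode_record(payload: bytes, value_type: str):
    """Decode one record the way the chosen value type implies."""
    text = payload.decode("utf-8")
    if value_type == "json":
        return json.loads(text)  # structured fields
    if value_type == "text":
        return text              # one opaque log line
    raise ValueError(f"unknown value type: {value_type!r}")


if __name__ == "__main__":
    print(value_type_for("Raw Log Format"))
    # Field names below are made up for the example.
    print(decode_record(b'{"action": "block"}', value_type_for("JSON")))
```

A mismatch, such as JSON records imported as text, would leave each record as a single unparsed string and make the standardization rules in Step 4 harder to apply.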

Import data using OBS

Prepare OBS data on Huawei Cloud

Configure LTS to transfer logs to OBS

Create a transfer task.

Log in to the Log Service console. In the left navigation pane, click Log Transfer, then click Configure Log Transfer in the upper-right corner.

Set the following transfer parameters:

| Parameter | Value |
|---|---|
| Transfer Mode | Periodic transfer |
| Transfer Destination | OBS Bucket |
| Log Group Name / Log Stream Name | The log group and stream you configured in Step 1 (for example, WAF access log stream) |
| OBS Bucket | Select an existing OBS bucket or create a new one on the Huawei Cloud - Bucket List page |
| Custom Log Transfer Path | Enabled: set a custom path in the format `/LogTanks/RegionName/%GroupName/%StreamName/<custom_transfer_path>` (default: `lts/%Y/%m/%d`). Disabled: logs go to the default path `LogTanks/RegionName/2019/01/01/<Log_Group>/<Log_Stream>/<log_file_name>`. |
| Compression Format | uncompressed, gzip, or zip |

Note: LTS can transfer logs to OBS buckets that use the Standard or Restored Archive storage class.

Warning: Security Center does not support parsing log files compressed in the snappy format.

For detailed instructions, see the Huawei Cloud documentation: Transferring Logs to OBS.

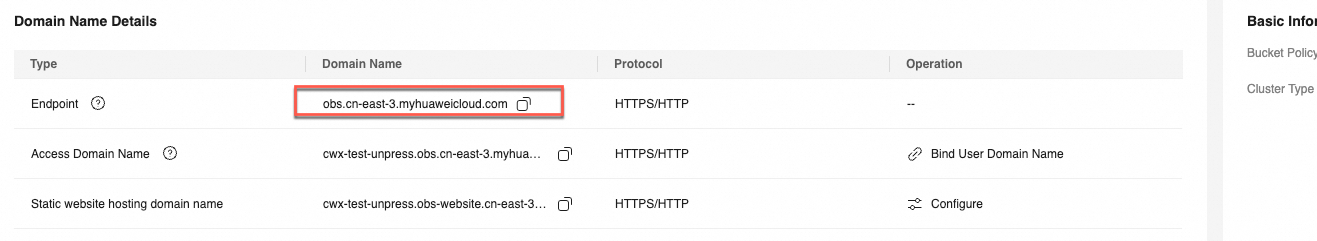

Get the OBS bucket endpoint.

Go to the Huawei Cloud Bucket List page. Locate the OBS bucket you configured for LTS log transfer and open its details page. In the left navigation pane, click Overview.

In the Domain Name area, note the Endpoint. The format is obs.${region}.myhuaweicloud.com.
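The endpoint format and the default transfer path can be expressed as two small string builders, which is handy when checking that objects land where you expect. This is a sketch under assumptions: the function names are hypothetical, `cn-north-4` is only an example region ID, and the path layout is read directly from the default path shown in the transfer parameters (region, then date, then log group and log stream).

```python
from datetime import date


def obs_endpoint(region: str) -> str:
    """Build the bucket endpoint in the obs.${region}.myhuaweicloud.com format."""
    if not region:
        raise ValueError("region must not be empty")
    return f"obs.{region}.myhuaweicloud.com"


def default_transfer_prefix(region: str, day: date,
                            log_group: str, log_stream: str) -> str:
    """Build the object key prefix used when Custom Log Transfer Path is disabled.

    Assumed layout: LogTanks/<region>/<YYYY>/<MM>/<DD>/<log group>/<log stream>/
    """
    return f"LogTanks/{region}/{day:%Y/%m/%d}/{log_group}/{log_stream}/"


if __name__ == "__main__":
    print(obs_endpoint("cn-north-4"))
    print(default_transfer_prefix("cn-north-4", date(2019, 1, 1),
                                  "mylog-cfw", "mylog-attack"))
```

Listing the bucket under a prefix like this is a quick way to confirm that the LTS transfer task is writing files before you configure the import on the Alibaba Cloud side.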

Create an access key

Go to the Huawei Cloud - My Credentials page. In the left navigation pane, click Access Keys.

Click Create Access Key. Either click Download CSV File or copy the Access Key ID and Secret Access Key to a local file for safekeeping. For more information, see Access Keys.

Configure the OBS log import on Alibaba Cloud

Grant Security Center access to Huawei Cloud OBS

Go to Security Center console > Agentic SOC > Integration Center. In the upper-left corner, select your asset region: Chinese Mainland or Outside Chinese Mainland.

On the Multi-cloud Configuration Management tab, select Multi-cloud Assets, click Grant Permission, and select IDC from the drop-down list. In the panel that appears, set the following:

| Parameter | Value |
|---|---|
| Vendor | AWS-S3 |
| Connection Type | S3 |
| Endpoint | The OBS bucket endpoint (format: obs.${region}.myhuaweicloud.com) |
| Access Key ID / Secret Access Key | The access key you created on Huawei Cloud |

Under Configure synchronization policy, set AK Service Status Check to the interval at which Security Center checks the validity of the Huawei Cloud access key. Select Disable to turn off this check.

Create a data import task

Go to Security Center console > Agentic SOC > Integration Center. In the upper-left corner, select your asset region: Chinese Mainland or Outside Chinese Mainland.

On the Data Import tab, click Add Data. In the panel that appears, set the following:

| Parameter | Value |
|---|---|
| Endpoint | The OBS bucket endpoint |
| OBS Bucket | The OBS bucket where LTS transfers logs |

Under Configure the destination data source, set the following:

Data Source Name: Select a custom data source with a normal status (Custom Log Capability or Agentic SOC Dedicated Data Collection Channel). If no suitable data source is available, create one. For instructions, see Data sources.

Destination Logstore: Logstores under the selected data source are loaded automatically.

Click OK. Security Center begins pulling logs from Huawei Cloud automatically.
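If imported OBS data looks empty or unreadable, one thing worth checking is the compression format of the exported objects, since only uncompressed, gzip, and zip are listed as options and snappy is not supported. The sketch below inspects the leading bytes of a downloaded object and decodes it accordingly. It is an illustration rather than the importer's actual logic: the function name is hypothetical, and the snappy check only recognizes the snappy framing format's stream identifier, not raw snappy blocks.

```python
import gzip
import io
import zipfile

GZIP_MAGIC = b"\x1f\x8b"                      # gzip member header
ZIP_MAGIC = b"PK\x03\x04"                     # zip local file header
SNAPPY_FRAME_MAGIC = b"\xff\x06\x00\x00sNaPpY"  # snappy framing stream identifier


def decode_log_object(data: bytes) -> bytes:
    """Return the raw log bytes of one exported object, or raise if unsupported."""
    if data.startswith(SNAPPY_FRAME_MAGIC):
        raise ValueError("snappy-compressed objects are not supported")
    if data.startswith(GZIP_MAGIC):
        return gzip.decompress(data)
    if data.startswith(ZIP_MAGIC):
        with zipfile.ZipFile(io.BytesIO(data)) as archive:
            # Concatenate every member in archive order.
            return b"".join(archive.read(name) for name in archive.namelist())
    # Anything else is treated as an uncompressed log file.
    return data


if __name__ == "__main__":
    sample = b"first log line\nsecond log line\n"
    print(decode_log_object(gzip.compress(sample)) == sample)
```

Running a check like this on one object from the bucket helps separate export problems on the Huawei Cloud side from import problems on the Alibaba Cloud side.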

Step 4: Analyze the imported data

After the data is ingested, set up parsing and detection rules.

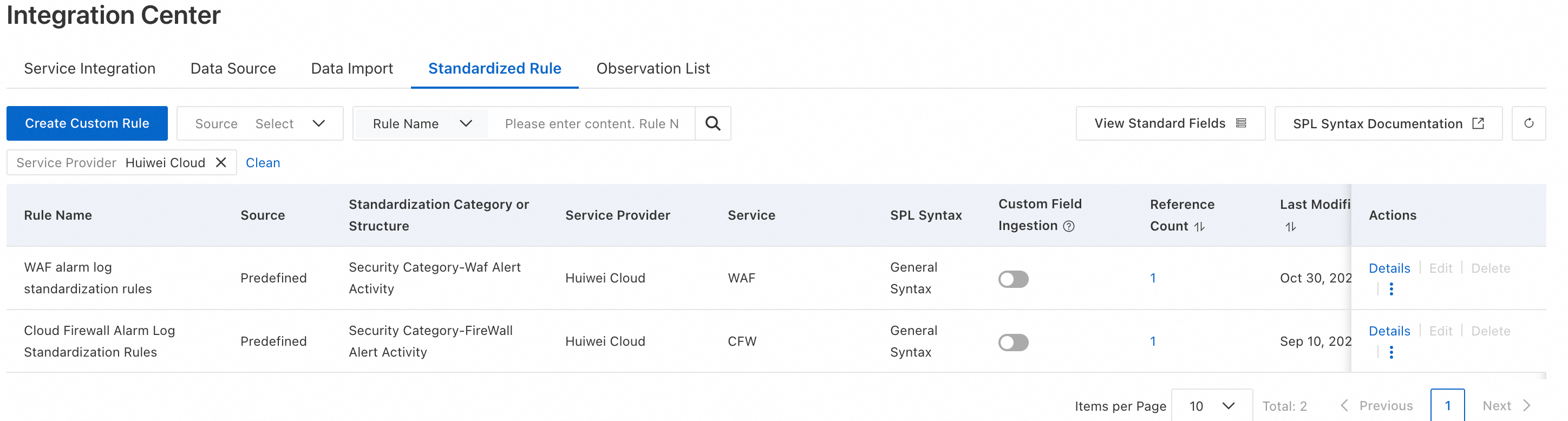

Create an ingestion policy. Follow the instructions in Connect products to Agentic SOC 2.0 to create an ingestion policy with the following settings:

| Parameter | Value |
|---|---|
| Data Source | Select the destination data source you configured in the data import task. |
| Standardized Rule | Select from the built-in standardization rules for Huawei Cloud products. |
| Standardization Method | For alert logs, this is set to Real-time Consumption by default and cannot be changed. |

Configure threat detection rules. Enable or create log detection rules in rule management to analyze logs, generate alerts, and create security events. For instructions, see Configure threat detection rules.

Billing

This solution incurs costs from both cloud platforms. Review the billing documentation for each product before proceeding.

Huawei Cloud costs (data transfer and storage):

| Service | Billable items | Billing documentation |
|---|---|---|
| LTS | Log storage, read/write operations, and more | Huawei Cloud LTS - Billing overview |
| DMS for Kafka | Instance specifications, public network traffic, and more | Huawei Cloud Kafka - Billing overview |
| OBS | Storage capacity, number of requests, public network traffic, and more | Huawei Cloud OBS - Billing overview |

Alibaba Cloud costs (depend on the data storage method you choose):

For Agentic SOC billing, see Billing details and Pay-as-you-go billing for Threat Analysis and Response. For Simple Log Service (SLS) billing, see SLS billing overview.

| Data source type | Agentic SOC billable items | SLS billable items | Notes |
|---|---|---|---|
| Agentic SOC Dedicated Collection Channel | Log ingestion fee + log storage and write fees (both consume Log Ingestion Traffic) | Fees for items other than log storage and writes (such as public network traffic) | Agentic SOC creates and manages the SLS resources. Log storage and write fees are billed through Agentic SOC. |
| User Log Service | Log ingestion fee (consumes Log Ingestion Traffic) | All log-related fees (storage, writes, public network traffic, and more) | All log resources are managed by SLS. All log-related fees are billed through SLS. |

FAQ

No log data appears in SLS after creating a data import task

Check in this order:

Huawei Cloud side: Log in to the Huawei Cloud console and confirm that logs are generated and delivered to the configured LTS log stream, Kafka topic, or OBS bucket.

Credentials: In Security Center, go to the Multi-cloud Assets page and confirm the authorization status is normal and the access key is valid.

Network connectivity (Kafka method only): Confirm that public access is enabled for the Kafka service and that the security group rules allow inbound traffic from Security Center's service IP addresses.

Data import task: Go to the Data Import page in Security Center to review task status and error logs, then make corrections.
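For the network connectivity check, a plain TCP connection attempt from a machine outside Huawei Cloud can show whether the public endpoint and the security group rule on port 9095 are working. The following is a minimal sketch using only the Python standard library; the function name and the example address are assumptions, and a successful TCP connection does not prove that SASL authentication will succeed.

```python
import socket


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # 203.0.113.10 is a documentation-only address; use one of your Kafka EIPs.
    host, port = "203.0.113.10", 9095
    state = "reachable" if tcp_reachable(host, port) else "not reachable"
    print(f"{host}:{port} is {state}")
```

If the port is not reachable, revisit the public access setting and the inbound rule before looking at credentials or the import task itself.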

Why select `Apache` or `AWS-S3` instead of `Huawei Cloud` when granting permission?

The log import feature uses standard, protocol-compatible interfaces rather than vendor-specific APIs.

IDC is the drop-down value that represents the protocol vendor.

`Apache` represents the Kafka protocol, and `AWS-S3` represents the S3-compatible object storage protocol.

Authorizing Huawei Cloud as a vendor enables Agentic SOC to coordinate security event responses with Huawei Cloud, such as blocking an IP address using threat detection rules, but does not enable log import.

What's next

Configure threat detection rules — Create detection rules to analyze the imported logs and generate security alerts.

Connect products to Agentic SOC 2.0 — Set up additional ingestion policies for other log sources.

Data sources — Manage your Agentic SOC data sources and Logstores.