Dirty data introduced during extract, transform, load (ETL) processing can silently propagate through your pipeline, corrupting downstream reports and ML models before anyone notices. A data quality monitoring node runs scheduled checks on a table and blocks downstream tasks when a critical threshold is breached, stopping bad data before it spreads. This guide walks you through creating a node, configuring monitoring rules, defining exception-handling policies, and deploying the node to run on a schedule.

Prerequisites

Before you begin, make sure that:

- A computing resource is associated with your workspace, and the table you want to monitor exists in that computing resource. See Associate a computing resource and Node development.

- A serverless resource group is available. Data quality monitoring nodes run only on serverless resource groups. See Resource group management.

- (Required for RAM users) The RAM user is added to the workspace with the Development or Workspace Administrator role. The Workspace Administrator role has extensive permissions; assign it only when necessary. See Add members to a workspace.

Limitations

| Limitation | Details |
|---|---|
| Supported data sources | MaxCompute, E-MapReduce (EMR), Hologres, Cloudera's Distribution Including Apache Hadoop (CDH) Hive, AnalyticDB for PostgreSQL, AnalyticDB for MySQL, and StarRocks |
| Tables per node | Each node monitors exactly one table. To monitor multiple tables, create one node per table. |
| Monitored tables scope | You can monitor only tables from data sources that are added to the workspace to which the current data quality monitoring node belongs. |
| Non-partitioned table | All data in the table is checked by default. No additional configuration is needed. |
| Partitioned table | You must specify a partition filter expression to select which partition to check. |
| Rule management scope | Rules created in Data Studio can only be run, modified, and managed in Data Studio. In DataWorks Data Quality, you can view them but cannot trigger or manage them. |
| Redeployment behavior | If you modify rules in the node and redeploy, the original rules are replaced. |

Step 1: Create a data quality monitoring node

- Go to the Workspaces page in the DataWorks console. In the top navigation bar, select a region. Find the workspace and choose Shortcuts > Data Studio in the Actions column.

- In the left-side navigation pane of the Data Studio page, click the icon. In the Workspace Directories section of the DATA STUDIO pane, click the icon and choose Create Node > Data Quality > Quality Monitoring. In the Create Node dialog box, set the Path and Name parameters and click OK.

Step 2: Configure monitoring rules

Select a table

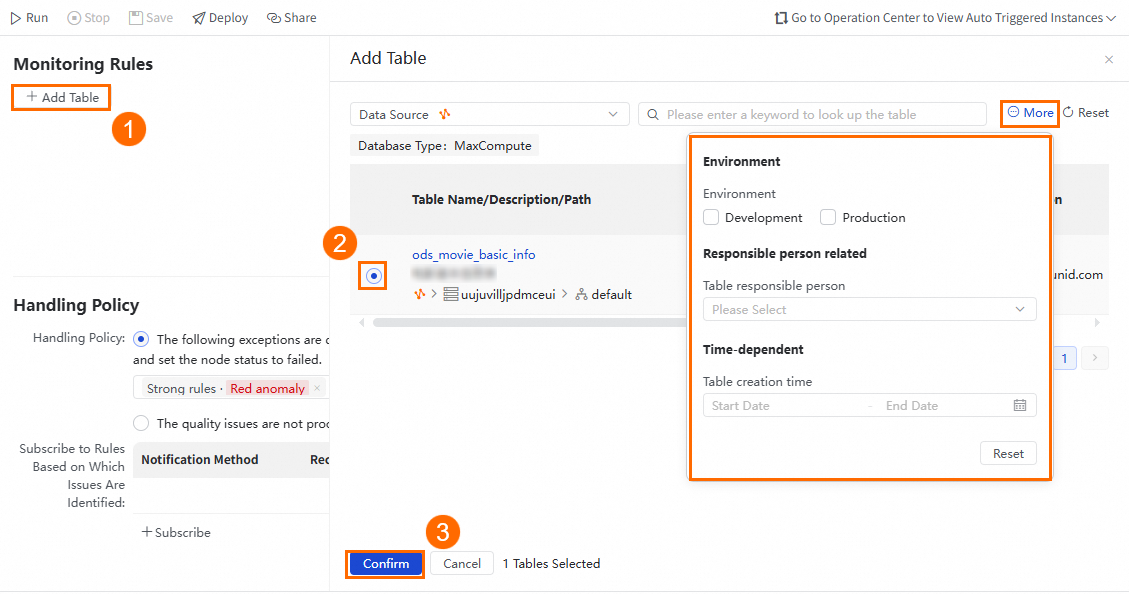

In the Monitoring Rules section of the node's configuration tab, click Add Table. In the Add Table panel, select the table to monitor. Click More to apply filter conditions and locate the table faster.

If the table is not listed, go to Data Map and manually refresh the table's metadata.

Set the data range

- Non-partitioned table: All data is checked by default. No additional configuration is needed.

- Partitioned table: Select the partition to check. Use scheduling parameters to specify the partition dynamically. Click Preview to verify that the partition filter expression resolves as expected.
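
To get a feel for what a dynamic partition filter resolves to before you click Preview, the following minimal sketch expands a date token locally. It assumes that $[yyyymmdd] stands for the run date formatted as yyyyMMdd and that a -N suffix subtracts N days. The function name preview_partition and the offset handling are hypothetical and only illustrate the idea; the Preview button and Appendix 2 remain the authority on how DataWorks resolves expressions.

```python
import re
from datetime import date, timedelta

# Hypothetical pattern: matches $[yyyymmdd] and, as an assumption, $[yyyymmdd-N].
TOKEN = re.compile(r"\$\[yyyymmdd(?:-(\d+))?\]")


def preview_partition(expr: str, run_date: date) -> str:
    """Replace each date token in expr with a concrete yyyyMMdd string."""

    def expand(match: re.Match) -> str:
        # A missing offset means "the run date itself".
        offset_days = int(match.group(1) or 0)
        return (run_date - timedelta(days=offset_days)).strftime("%Y%m%d")

    return TOKEN.sub(expand, expr)


if __name__ == "__main__":
    print(preview_partition("ds=$[yyyymmdd]", date(2024, 3, 1)))    # ds=20240301
    print(preview_partition("ds=$[yyyymmdd-1]", date(2024, 3, 1)))  # ds=20240229
```

If the locally expanded value differs from what Preview shows, trust Preview and adjust the expression.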

Add monitoring rules

Rules define what gets checked and what counts as a pass, a warning, or a failure. By default, all configured rules are enabled.

Starting point: Copilot-based rule recommendation (where available)

DataWorks Copilot can automatically generate monitoring rules based on the table's schema and statistics. Review the suggestions and accept or reject them based on your requirements.

DataWorks Copilot is available for public preview in specific regions only. If it is unavailable in your region, create or import rules manually using one of the methods below.

You can create rules from scratch or import existing ones.

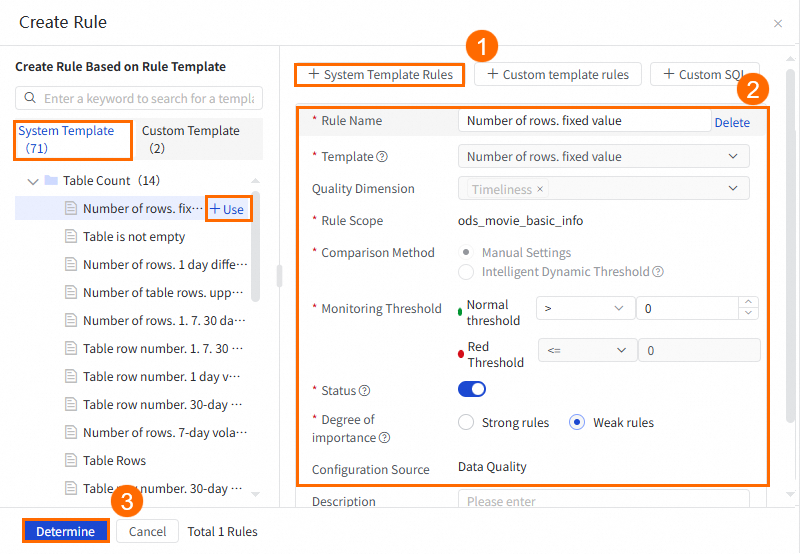

Create a rule from a built-in template

DataWorks provides built-in table-level and field-level rule templates covering common checks such as row count, null values, and value ranges. Click Create Rule and select a template.

You can also browse the template list on the left side of the Create Rule panel and click + Use to apply a template.

Rule parameters

| Parameter | Description |
|---|---|
| Rule name | The name of the monitoring rule. |
| Template | The check type to apply. See View built-in rule templates for the full list. Note: Average value, sum, minimum, and maximum checks are available for numeric fields only. |
| Rule scope | For table-level rules, the scope is the current table. For field-level rules, select the target field. |
| Comparison method | How the check result is evaluated. Choose Manual settings to define fixed thresholds, or Intelligent dynamic threshold to let the system determine thresholds automatically based on historical patterns. Note: Intelligent dynamic threshold is supported only for custom SQL, custom range, and dynamic threshold rule types. |
| Monitoring threshold | The pass/warn/fail boundaries. See Threshold tiers below. |
| Retain problem data | When enabled, the system saves the rows that failed the check to a separate table for review. Available for MaxCompute and Hologres tables on supported rule types. Problematic data is not stored when the rule is disabled. |
| Status | Enable or Disable the rule. A disabled rule is not triggered during test runs or by scheduling nodes. |
| Degree of importance | Controls whether a rule violation blocks the pipeline. Strong rules: if the red threshold is exceeded, the associated scheduling node is blocked by default. Weak rules: if the red threshold is exceeded, the scheduling node is not blocked. |
| Configuration source | The origin of the rule. Defaults to Data Quality. |
| Description | Optional notes about the rule. |

Threshold tiers

DataWorks uses three threshold tiers to differentiate expected, borderline, and critical data quality issues:

| Tier | Meaning | Pipeline effect |
|---|---|---|
| Normal threshold | Check result is within the expected range; the data is healthy. | No action |
| Orange threshold | Result is outside the normal range but not severe enough to block; worth investigating. | No blocking |
| Red threshold | Result indicates a critical issue. | Strong rules trigger pipeline blocking. |

Example: A daily orders table normally has 50,000 to 55,000 rows. A typical configuration sets the normal threshold at >= 48,000 rows, the orange threshold at 40,000 to 47,999 rows, and the red threshold at < 40,000 rows. If the row count drops to 38,000 one day, the red threshold fires and the strong rule blocks downstream jobs before the bad data reaches your reports.
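
The row-count example can be expressed as a small decision function. This is a sketch of the tier logic only, using the example boundaries (48,000 and 40,000); the names RowCountThresholds, classify, and blocks_pipeline are made up for illustration and are not part of DataWorks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RowCountThresholds:
    """Boundaries taken from the daily orders example."""
    normal_min: int = 48_000  # at or above this value: normal
    red_below: int = 40_000   # below this value: red; in between: orange


def classify(row_count: int, t: RowCountThresholds = RowCountThresholds()) -> str:
    """Return the tier ("normal", "orange", or "red") for a row count."""
    if row_count >= t.normal_min:
        return "normal"
    if row_count < t.red_below:
        return "red"
    return "orange"


def blocks_pipeline(tier: str, strong_rule: bool) -> bool:
    """By default, only a strong rule that hits the red tier blocks the pipeline."""
    return strong_rule and tier == "red"


if __name__ == "__main__":
    for count in (52_000, 45_000, 38_000):
        tier = classify(count)
        print(count, tier, blocks_pipeline(tier, strong_rule=True))
```

With the example values, 38,000 rows falls in the red tier, so a strong rule blocks downstream jobs while a weak rule with the same thresholds does not.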

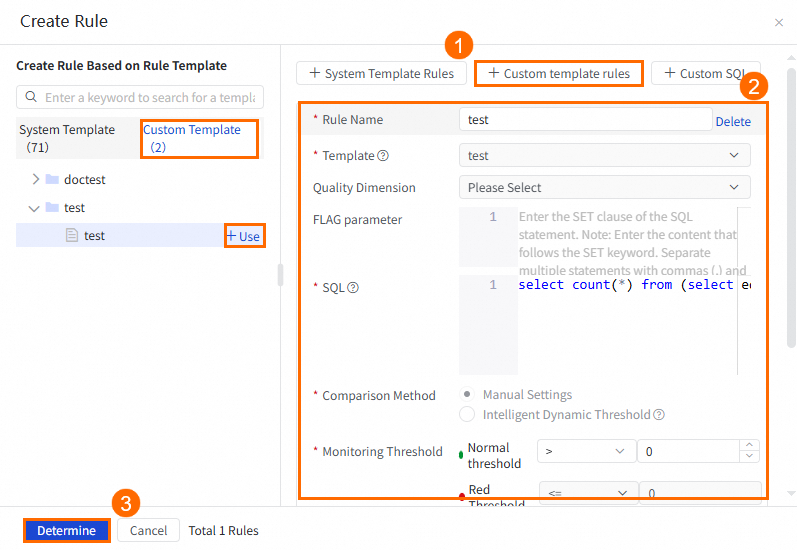

Create a rule from a custom template

Before using this method, create a custom rule template in Data Quality: go to Quality Assets > Rule Template Library, and in the Custom Template Category section click the plus icon to add a template. See Create and manage custom rule templates.

Once the template exists, select it in the Create Rule panel.

You can also click + Use next to the template in the custom template list on the left.

Custom templates share most parameters with built-in templates (see the table above) plus these additional fields:

| Parameter | Description |
|---|---|
| FLAG parameter | A SET statement to execute before the rule's SQL statement runs. |
| SQL | The complete check logic as a SQL statement. The result must be a single numeric value (one row, one column). Enclose the partition filter expression in brackets []. Example: SELECT count(*) FROM ${tableName} WHERE ds=$[yyyymmdd]; In this statement, ${tableName} is automatically replaced with the monitored table name. Once you configure this parameter, the data range setting on the monitor configuration no longer applies. |

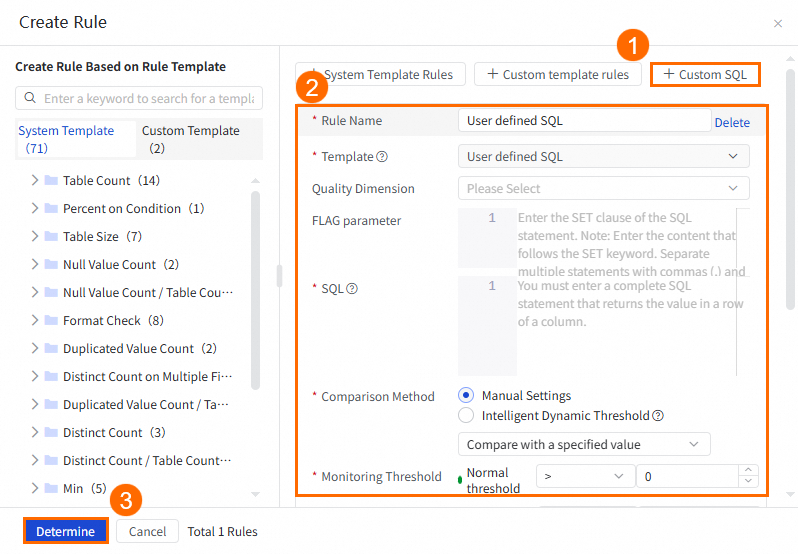
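
To make the ${tableName} substitution concrete, the sketch below mimics it with a plain string replacement. It is an illustration only: render_rule_sql is a hypothetical helper, the table name orders is an example, and the actual substitution is performed by DataWorks when the rule runs. The partition token $[yyyymmdd] is deliberately left untouched here.

```python
# Template SQL as it would be stored in a custom rule template.
TEMPLATE_SQL = "SELECT count(*) FROM ${tableName} WHERE ds=$[yyyymmdd];"


def render_rule_sql(template_sql: str, table_name: str) -> str:
    """Replace the ${tableName} placeholder with the monitored table's name."""
    return template_sql.replace("${tableName}", table_name)


if __name__ == "__main__":
    # "orders" is an example table name, not one defined by this guide.
    print(render_rule_sql(TEMPLATE_SQL, "orders"))
    # SELECT count(*) FROM orders WHERE ds=$[yyyymmdd];
```

Because the table name is injected at run time, one custom template can be reused across nodes that monitor different tables.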

Create a rule using custom SQL

This method lets you write the full check logic as a SQL statement, with no template required.

The parameters are the same as for custom templates (FLAG parameter and SQL), with one difference: the table name is not substituted automatically, so you must replace <table_name> with the actual table name in your SQL statement. Example: SELECT count(*) FROM <table_name> WHERE ds=$[yyyymmdd];

For partition filter expression syntax, see Appendix 2: Built-in partition filter expressions.
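
Because a custom SQL check must return a single numeric value (one row, one column), it can help to test the shape of the result on sample data first. The sketch below does this against an in-memory SQLite database, which is only a local stand-in for the real data source; the orders table, its columns, and the run_single_value_check helper are assumptions made for illustration, and SQL dialect differences still need to be checked in the target engine.

```python
import sqlite3


def run_single_value_check(conn: sqlite3.Connection, sql: str) -> float:
    """Run a check statement and enforce the one-row, one-column, numeric shape."""
    rows = conn.execute(sql).fetchall()
    if len(rows) != 1 or len(rows[0]) != 1:
        raise ValueError("check SQL must return exactly one row and one column")
    value = rows[0][0]
    if not isinstance(value, (int, float)):
        raise ValueError("check SQL must return a numeric value")
    return float(value)


# Sample data standing in for one partitioned table (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, ds TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, "20240301"), (2, "20240301"), (3, "20240229")],
)

if __name__ == "__main__":
    # The partition value is written out literally for the local test.
    print(run_single_value_check(conn, "SELECT count(*) FROM orders WHERE ds = '20240301'"))  # 2.0
```

A statement such as SELECT id, ds FROM orders fails this shape check, which is the kind of mistake worth catching before the rule is attached to a scheduled node.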

Import existing rules

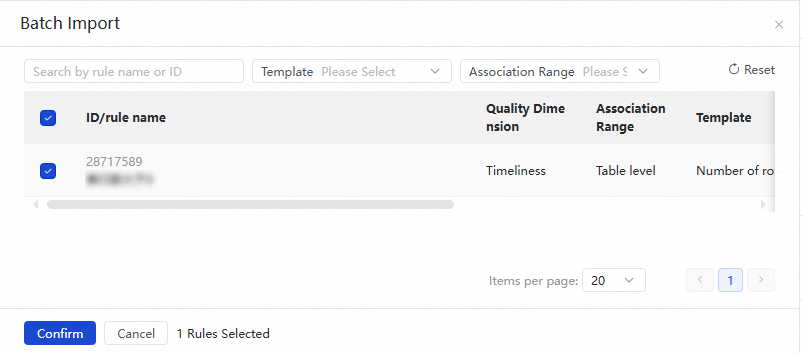

If you have already configured rules for this table in DataWorks Data Quality, import them to reuse the configuration. Click Import Rule to open the Batch Import panel. Filter by rule ID, name, template, or association range, then select the rules to import. The association range specifies whether the rule applies to the entire table or specific fields.

After the node is published, you can view its rules in DataWorks Data Quality, but you cannot modify or delete them there.

Configure runtime resources

Select the data source to use when running the monitoring rules. By default, the data source that the monitored table belongs to is selected. If you select a different data source, make sure it can access the target table.

Step 3: Configure exception handling

In the Handling Policy section of the configuration tab, define what happens when a monitoring rule detects an issue.

Exception categories

| Exception | Description |
|---|---|
| Strong rule: Check failed | The validation task itself failed to run (for example, the monitored partition was not generated, or the monitoring SQL failed). |
| Strong rule: Critical threshold exceeded | The result hit the red threshold, which indicates a critical data issue. The pipeline is blocked by default. |
| Strong rule: Warning threshold exceeded | The result hit the orange threshold. The data is abnormal, but the pipeline is not blocked. |
| Weak rule: Check failed | Same as the strong rule case, but the rule is not pipeline-blocking. |
| Weak rule: Critical threshold exceeded | The result hit the red threshold, but descendant nodes continue to run. |
| Weak rule: Warning threshold exceeded | The result hit the orange threshold for a weak rule. |

Handling policies

For each exception category, select one of the following policies:

- Do not ignore: Stop the current node and mark it as Failed. Descendant nodes do not run, which prevents dirty data from propagating downstream. Use this for exceptions with significant business impact.

- Ignore: Continue running descendant nodes despite the exception.
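
The interaction between exception categories and handling policies can be modeled as a lookup followed by a single decision. The sketch below is a mental model, not DataWorks code: the Policy enum, the category keys, and the example policy assignment are all hypothetical, and the assignment shown is one possible configuration rather than the product defaults.

```python
from enum import Enum
from typing import Dict, Iterable, Tuple


class Policy(Enum):
    IGNORE = "Ignore"
    DO_NOT_IGNORE = "Do not ignore"


# Key: (rule strength, exception type). The values are an example configuration.
Category = Tuple[str, str]
EXAMPLE_POLICIES: Dict[Category, Policy] = {
    ("strong", "check_failed"): Policy.DO_NOT_IGNORE,
    ("strong", "critical"): Policy.DO_NOT_IGNORE,
    ("strong", "warning"): Policy.IGNORE,
    ("weak", "check_failed"): Policy.IGNORE,
    ("weak", "critical"): Policy.IGNORE,
    ("weak", "warning"): Policy.IGNORE,
}


def descendants_run(raised: Iterable[Category], policies: Dict[Category, Policy]) -> bool:
    """Descendant nodes run only if every raised exception is set to Ignore."""
    return all(policies[category] is Policy.IGNORE for category in raised)


if __name__ == "__main__":
    print(descendants_run([("weak", "critical")], EXAMPLE_POLICIES))    # True
    print(descendants_run([("strong", "critical")], EXAMPLE_POLICIES))  # False
```

A single exception set to Do not ignore is enough to stop the node, so it is worth reserving that policy for the categories whose business impact justifies halting the pipeline.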

Notification

Configure who gets notified when an exception occurs.

| Method | Recipient | Setup |
|---|---|---|
| Email | Current account user only | Make sure the account's email address is configured. See View and set alert contacts. |
| Email + SMS | Current account user only | Make sure the account's phone number is configured. See View and set alert contacts. |
| Phone call | Current account user only | Make sure the account's phone number is configured. See View and set alert contacts. |
| Webhook | Custom recipients via webhook URL | Provide the webhook URL. To get a DingTalk webhook URL, see Obtain a webhook URL. |

For high-impact exceptions, such as a strong rule exceeding its critical threshold, use a fast notification method (phone call or webhook) so the right people can investigate before downstream jobs are affected.

Step 4: Configure scheduling

To run the node on a recurring schedule, click Properties in the right-side navigation pane and configure the scheduling properties. See Node scheduling configuration.

Step 5: Debug the node

- (Optional) Configure the resource group and scheduling parameter values for the test run.

  - In the right-side navigation pane, click Run Configuration. On the Debugging Configurations tab, select a resource group for scheduling.

  - If the node uses scheduling parameters, assign test values in the Script Parameters section. See Task debugging process.

- Save and run the node. In the top toolbar, click the icon to save and the icon to run. View the result in the lower panel. If the run fails, check the error message and fix the configuration before proceeding.

Step 6: Deploy the node

After configuration is complete, deploy the node. Deployment also publishes all monitoring rules configured in the node. After deployment, the node runs automatically according to its scheduling properties.

- In the top toolbar, click the icon to save the node.

- Click the icon to deploy.

For deployment details, see Node and workflow deployment.

What's next

- Monitor operations: Click O&M in the upper-right corner of the configuration tab to go to Operation Center, where you can view the node's run history and the details of triggered rules. See Manage auto triggered tasks.

- Review rule results in Data Quality: After the node is published, go to DataWorks Data Quality to view rule details. Rules created in a data quality monitoring node cannot be modified or deleted from Data Quality. See Data Quality.