Data Integration supports real-time data synchronization from single tables in sources such as DataHub, Kafka, and LogHub to MaxCompute. This topic describes how to synchronize real-time data from a single LogHub (SLS) table to MaxCompute.

Prerequisites

You have purchased a Serverless resource group or an exclusive resource group for Data Integration.

You have created a LogHub (SLS) data source and a MaxCompute data source. For more information, see Data Source Configuration.

You have established network connectivity between the resource group and the data sources. For more information, see Overview of network connection solutions.

Limits

Syncing source data to MaxCompute foreign tables is not supported.

Procedure

Step 1: Select a sync task type

Go to the Data Integration page.

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose . On the page that appears, select the desired workspace from the drop-down list and click Go to Data Integration.

In the navigation pane on the left, click Synchronization Task. At the top of the page, click Create Synchronization Task to open the task creation page. Configure the basic information as follows.

Source: LogHub.

Destination: MaxCompute.

Task Name: You can enter a custom name for the sync task.

Task Type: Single Logstore Realtime Sync.

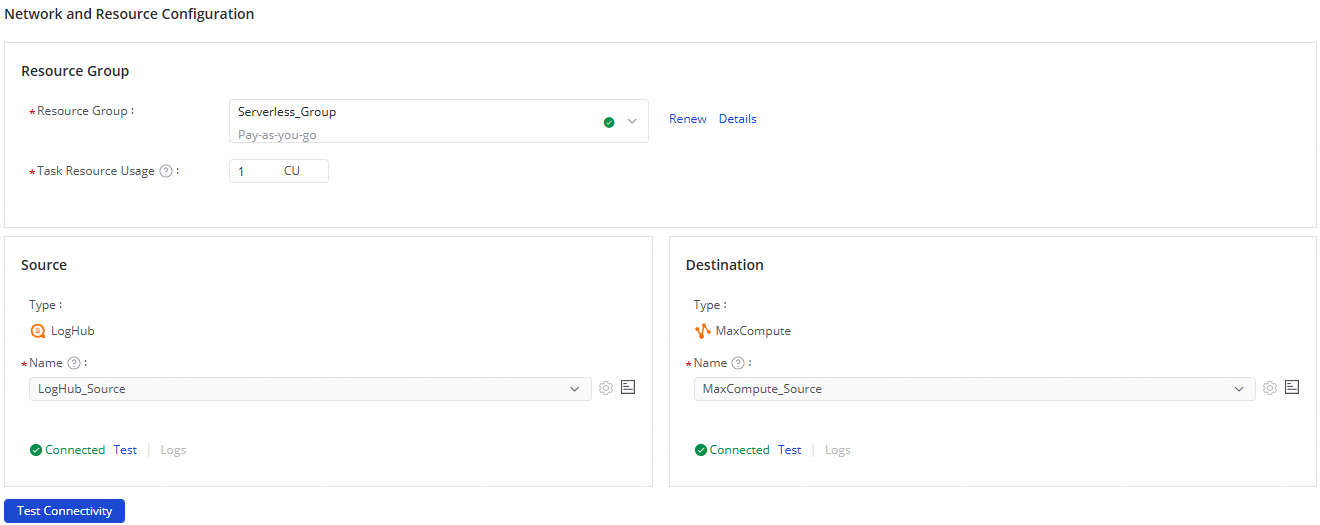

Step 2: Configure network and resources

In the Network and Resource Configuration section, select a Resource Group for the sync task. For Task Resource Usage, allocate CUs based on your requirements.

Set Source to the LogHub data source and Destination to the MaxCompute data source. Then, click Test Connectivity.

After the connectivity test passes for both the source and destination data sources, click Next.

Step 3: Configure the synchronization link

1. Configure the SLS source

At the top of the page, click the SLS source and edit the Source Information.

In the Source Information section, select the logstore in LogHub to synchronize.

In the upper-right corner, click Data Sampling.

In the dialog box that appears, specify the Start Time and Sampled Data Records, and then click Start Collection. This action samples data from the logstore and generates a preview. You can use this preview to configure data processing and visualization in subsequent nodes.

After you select a logstore, its data is automatically loaded into the Configure Output Field section, and the corresponding field names are generated. You can adjust the Data Type, Delete fields, or use the Add Output Field option as needed.

Note: If a configured field does not exist in SLS, the value of that field is output as NULL to the downstream node.
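
The NULL behavior described in the note can be illustrated with a minimal Python sketch. The function name `project_fields` and the sample log entry are hypothetical and are not part of DataWorks. The sketch only assumes what the note states: a configured output field that is absent from a log entry reaches the downstream node as a null value.

```python
from typing import Any, Dict, List, Optional


def project_fields(log_entry: Dict[str, Any], output_fields: List[str]) -> Dict[str, Optional[Any]]:
    """Keep only the configured output fields; a missing field becomes None (NULL)."""
    return {name: log_entry.get(name) for name in output_fields}


# Hypothetical log entry that lacks the configured "user_id" field.
entry = {"request_id": "r-001", "status": "200"}
row = project_fields(entry, ["request_id", "status", "user_id"])
print(row)
```

In this sketch, `user_id` is present in the output row with a null value instead of being silently omitted, so downstream nodes always see the full set of configured fields.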

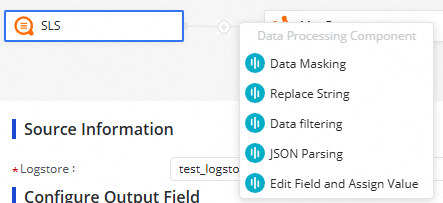

2. Edit the data processing node

You can click the icon to add data processing methods. The following data processing methods are supported: Data Masking, Replace String, Data Filtering, JSON Parsing, and Edit Field and Assign Value. Arrange the methods in the order that your business logic requires. When the synchronization task runs, data is processed in the order that you specify.


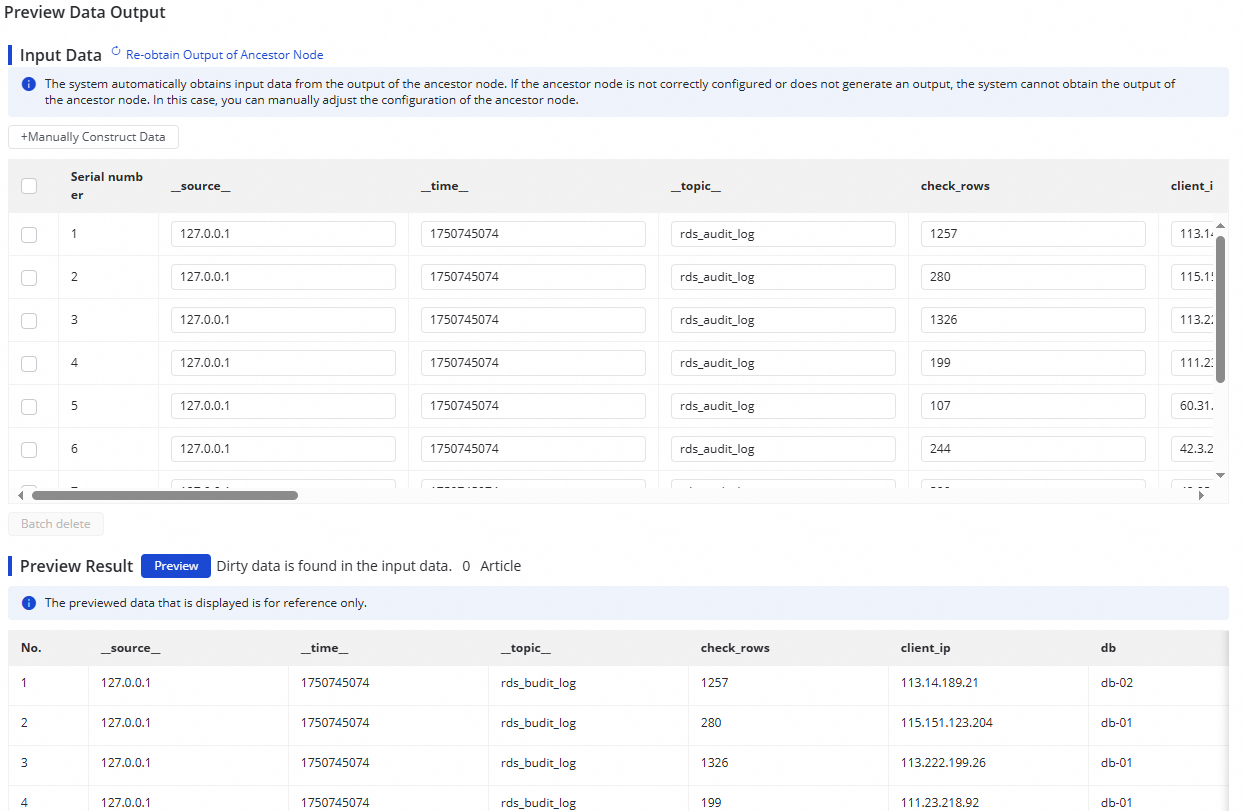

After you configure a data processing node, click the Preview Data Output button in the upper-right corner. In the dialog box that appears, click Re-obtain Output of Ancestor Node to simulate the output that the current node generates from the sampled log data.

The data output preview feature relies on Data Sampling from the LogHub (SLS) source. Therefore, you must complete data sampling on the source form to enable the preview.
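
The effect of the processing order on the data processing node can be sketched in Python. This is an illustration under assumptions, not the DataWorks implementation: the helper names (`parse_json`, `keep_if`, `mask_field`, `run_pipeline`), the masking rule, and the sampled records are all hypothetical. The sketch applies each step to every sampled record in the configured order, which is also roughly what a preview over sampled data would show.

```python
import json
from typing import Callable, Dict, List, Optional

Record = Dict[str, object]
# A step returns the transformed record, or None to drop the record (filtering).
Step = Callable[[Record], Optional[Record]]


def parse_json(field: str) -> Step:
    """JSON parsing: expand a JSON string field into top-level fields."""
    def step(rec: Record) -> Optional[Record]:
        parsed = json.loads(str(rec[field]))
        rest = {k: v for k, v in rec.items() if k != field}
        return {**rest, **parsed}
    return step


def keep_if(predicate: Callable[[Record], bool]) -> Step:
    """Data filtering: keep only the records that satisfy the predicate."""
    def step(rec: Record) -> Optional[Record]:
        return rec if predicate(rec) else None
    return step


def mask_field(field: str, keep: int = 3) -> Step:
    """Data masking: keep the first `keep` characters and replace the rest with '*'."""
    def step(rec: Record) -> Optional[Record]:
        value = str(rec.get(field, ""))
        return {**rec, field: value[:keep] + "*" * max(len(value) - keep, 0)}
    return step


def run_pipeline(records: List[Record], steps: List[Step]) -> List[Record]:
    """Apply the steps to each record in the given order; dropped records are skipped."""
    output: List[Record] = []
    for rec in records:
        current: Optional[Record] = rec
        for step in steps:
            current = step(current)
            if current is None:
                break
        if current is not None:
            output.append(current)
    return output


# Hypothetical sampled log data.
sampled = [
    {"content": '{"phone": "13800001111", "level": "ERROR"}'},
    {"content": '{"phone": "13900002222", "level": "INFO"}'},
]
# JSON parsing runs first because the later steps read the parsed fields.
steps = [parse_json("content"), keep_if(lambda r: r["level"] == "ERROR"), mask_field("phone")]
result = run_pipeline(sampled, steps)
print(result)
```

If the filter or masking step were placed before the JSON parsing step in this sketch, the `level` and `phone` fields would not exist yet when those steps run, which is why the order of the methods matters.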

3. Configure the MaxCompute destination

At the top of the page, click the MaxCompute destination and edit the Destination Information.

In the Destination Information area, select Create tables automatically or Use Existing Table.

If you select Create tables automatically, a table with the same name as the source table is created by default. You can change the name of the destination table as needed.

If you select Use Existing Table, select the destination table from the drop-down list.

(Optional) Modify the schema of a destination table.

If you select Create tables automatically for the Destination Table parameter, click Edit Table Schema. In the dialog box that appears, edit the schema of the destination table that will be automatically created. You can also click Re-generate Table Schema Based on Output Column of Ancestor Node to re-generate a schema based on the output columns of an ancestor node. You can select a column from the generated schema and configure the column as the primary key.

Note: The destination table must have a primary key. Otherwise, the configurations cannot be saved.

Configure mappings between fields in the source and fields in the destination.

After you complete the preceding configuration, the system automatically establishes mappings between fields in the source and fields in the destination based on the Map Fields with Same Name principle. You can modify the mappings based on your business requirements. One field in the source can map to multiple fields in the destination. Multiple fields in the source cannot map to the same field in the destination. If a field in the source has no mapped field in the destination, data in the field in the source is not synchronized to the destination.
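
The mapping rules above can be expressed as a short Python sketch. The function names and field names are hypothetical and only illustrate the rules stated in this step: fields with the same name are mapped by default, one source field may feed several destination fields, several source fields may not feed the same destination field, and unmapped source fields are not synchronized.

```python
from collections import Counter
from typing import Any, Dict, List, Tuple

Mapping = Tuple[str, str]  # (source field, destination field)


def map_same_name(source_fields: List[str], dest_fields: List[str]) -> List[Mapping]:
    """Default mapping: pair each source field with the destination field of the same name."""
    dest = set(dest_fields)
    return [(name, name) for name in source_fields if name in dest]


def validate_mappings(mappings: List[Mapping]) -> None:
    """Raise ValueError if more than one source field maps to the same destination field."""
    counts = Counter(dst for _, dst in mappings)
    duplicated = sorted(dst for dst, n in counts.items() if n > 1)
    if duplicated:
        raise ValueError(f"multiple source fields map to: {duplicated}")


def apply_mappings(record: Dict[str, Any], mappings: List[Mapping]) -> Dict[str, Any]:
    """Build the destination row; source fields without a mapping are dropped."""
    return {dst: record.get(src) for src, dst in mappings}


source_fields = ["request_id", "status", "latency"]
dest_fields = ["request_id", "status", "status_copy"]

mappings = map_same_name(source_fields, dest_fields)
mappings.append(("status", "status_copy"))  # one source field to two destination fields is allowed
validate_mappings(mappings)

row = apply_mappings({"request_id": "r-001", "status": "200", "latency": "35"}, mappings)
print(row)
```

In this sketch, `latency` has no mapped destination field and therefore does not appear in the destination row, while `status` is written to both `status` and `status_copy`.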

Partition settings.

Select a partitioning method. The supported methods are Automatic Time-based Partitioning and Dynamic Partitioning By Field Value.
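
The difference between the two partitioning methods can be sketched in Python. This topic does not specify the partition column names, the partition value format, or which timestamp is used, so everything here is an assumption made for illustration: the partition columns `ds`, `hh`, and `region`, the use of UTC, and the function names are hypothetical.

```python
from datetime import datetime, timezone
from typing import Any, Dict


def time_based_partition(ts_seconds: int) -> Dict[str, str]:
    """Derive partition values from a timestamp (assumed: UTC, day and hour granularity)."""
    t = datetime.fromtimestamp(ts_seconds, tz=timezone.utc)
    return {"ds": t.strftime("%Y%m%d"), "hh": t.strftime("%H")}


def field_value_partition(record: Dict[str, Any], field: str, partition_column: str) -> Dict[str, str]:
    """Derive the partition value from the value of a field in the record."""
    return {partition_column: str(record[field])}


# Hypothetical record; 1700000000 is 2023-11-14 22:13:20 UTC.
record = {"request_id": "r-001", "region": "cn-hangzhou", "ts": 1700000000}

by_time = time_based_partition(record["ts"])
by_field = field_value_partition(record, field="region", partition_column="region")
print(by_time, by_field)
```

With time-based partitioning, the partition is determined by when the data arrives or was produced, so every record in the same time window lands in the same partition. With partitioning by field value, the partition is determined by the data itself, so the number of partitions depends on how many distinct values the field takes.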

4. Configure alert rules

To keep a failure of the synchronization task from delaying business data synchronization, you can configure alert rules for the synchronization task.

In the upper-right corner of the page, click Configure Alert Rule to go to the Alert Rule Configurations for Real-time Synchronization Subnode panel.

In the Configure Alert Rule panel, click Add Alert Rule. In the Add Alert Rule dialog box, configure the parameters of the alert rule.

Note: The alert rules that you configure in this step take effect for the real-time synchronization subtask that will be generated by the synchronization task. After the configuration of the synchronization task is complete, you can refer to Run and manage real-time synchronization tasks to go to the Real-time Synchronization Task page and modify alert rules configured for the real-time synchronization subtask.

Manage alert rules.

You can enable or disable alert rules that are created. You can also specify different alert recipients based on the severity levels of alerts.
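
The relationship between alert rules, their enabled state, and severity-based recipients can be modeled with a small Python sketch. This is not the DataWorks alerting API: the `AlertRule` class, the rule names, the severity names `WARNING` and `CRITICAL`, and the recipient names are all hypothetical and only illustrate the behavior described above.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AlertRule:
    name: str
    severity: str        # hypothetical level name, for example "WARNING" or "CRITICAL"
    enabled: bool = True


def recipients_for(rule: AlertRule, recipients_by_severity: Dict[str, List[str]]) -> List[str]:
    """Return who is notified when the rule fires; a disabled rule notifies nobody."""
    if not rule.enabled:
        return []
    return recipients_by_severity.get(rule.severity, [])


# Hypothetical recipients configured per severity level.
recipients_by_severity = {
    "WARNING": ["oncall-dev"],
    "CRITICAL": ["oncall-dev", "team-lead"],
}

delay_rule = AlertRule(name="business_delay", severity="WARNING")
failure_rule = AlertRule(name="task_failure", severity="CRITICAL", enabled=False)

print(recipients_for(delay_rule, recipients_by_severity))
print(recipients_for(failure_rule, recipients_by_severity))
```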

5. Configure advanced parameters

DataWorks allows you to modify the configurations of specific parameters. You can change the values of these parameters based on your business requirements.

To prevent unexpected errors or data quality issues, we recommend that you understand the meanings of the parameters before you change the values of the parameters.

In the upper-right corner of the configuration page, click Configure Advanced Parameters.

In the Configure Advanced Parameters panel, change the values of the desired parameters.

6. Configure resource groups

You can click Configure Resource Group in the upper-right corner of the page to view and change the resource groups that are used to run the current synchronization task.

7. Perform simulated running

After the preceding configuration is complete, you can click Perform Simulated Running in the upper-right corner of the configuration page to synchronize the sampled data to the destination table. You can then view the synchronization result in the destination table. If a configuration of the synchronization task is invalid, an exception occurs during the test run, or dirty data is generated, the system reports the error in real time. This helps you verify the configuration and confirm early whether the task produces the expected results.

In the dialog box that appears, configure the parameters for data sampling from the specified table, including the Start At and Sampled Data Records parameters.

Click Start Collection to enable the synchronization task to sample data from the source.

Click Preview to enable the synchronization task to synchronize the sampled data to the destination.
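
The idea behind a simulated run can be sketched in Python: a small set of sampled records is pushed through the configured conversions, and records that cannot be converted are reported as dirty data instead of being written. This is a simplified illustration, not how DataWorks implements the feature. The `simulate_run` function, the field names, and the type casts are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]


def simulate_run(
    sampled: List[Record],
    casts: Dict[str, Callable[[Any], Any]],
) -> Tuple[List[Record], List[Tuple[Record, str]]]:
    """Convert each sampled record to the destination types and collect dirty records."""
    good: List[Record] = []
    dirty: List[Tuple[Record, str]] = []
    for rec in sampled:
        try:
            row = {}
            for name, cast in casts.items():
                value = rec.get(name)
                # A field that is missing from the source stays NULL and is not an error.
                row[name] = None if value is None else cast(value)
            good.append(row)
        except (ValueError, TypeError) as exc:
            dirty.append((rec, f"{type(exc).__name__}: {exc}"))
    return good, dirty


# Hypothetical sampled data: the second record has a latency that is not an integer.
sampled = [
    {"request_id": "r-001", "latency": "35"},
    {"request_id": "r-002", "latency": "n/a"},
]
good, dirty = simulate_run(sampled, {"request_id": str, "latency": int})

print("rows to write:", good)
for rec, reason in dirty:
    print("dirty record:", rec, "reason:", reason)
```

Running the check on sampled data first surfaces type mismatches such as the second record before the real-time task is started.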

8. Run the synchronization task

After the configuration of the synchronization task is complete, click Complete in the lower part of the page.

On the synchronization task list page, find the created synchronization task and click Start in the Operation column.

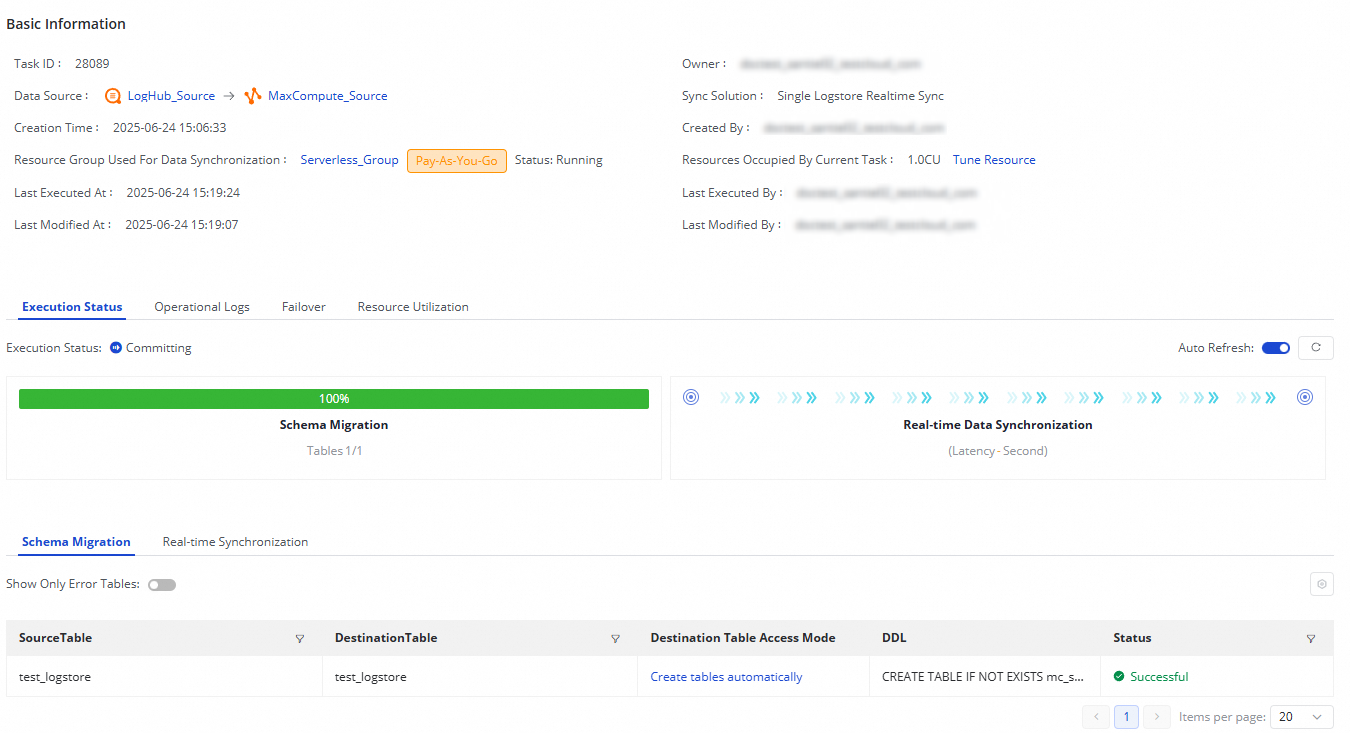

Click the Name or ID of the synchronization task in the Tasks section and view the detailed running process of the synchronization task.

Synchronization task O&M

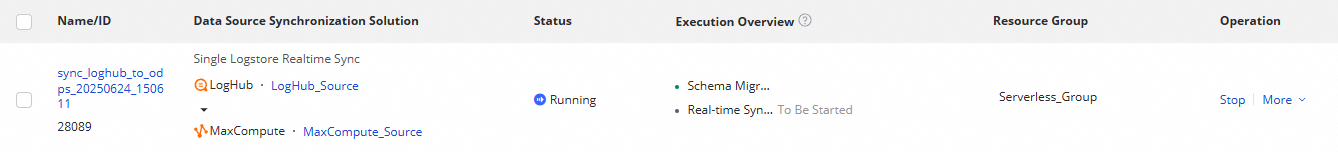

View task running status

After you create a sync task, you can view a list of your sync tasks and their basic information on the Sync Task page.

In the Actions column, you can Start or Stop a sync task. You can also click More to perform other operations, such as Edit and View.

You can view the status of running tasks in the Execution Overview and click a task to view its execution details.

A sync task from LogHub (SLS) to MaxCompute involves two steps: Schema Migration and Real-time Data Synchronization.

Schema Migration: Specifies whether to use an existing destination table or automatically create a new one. If you select automatic table creation, the Data Definition Language (DDL) statement for the table is displayed.

Real-time Data Synchronization: Provides performance statistics, such as real-time runtime information, DDL records, and alert information.

Rerun the synchronization task

If you need to change the fields to synchronize, the fields in a destination table, or the table name, you can click Rerun in the Operation column of the synchronization task. The system then synchronizes the changes to the destination. Data in tables that were already synchronized and were not modified is not synchronized again.

To rerun the synchronization task without changing its configurations, click Rerun directly.

To apply configuration changes, modify the configurations of the synchronization task and click Complete. Then, click Apply Updates in the Operation column of the synchronization task to rerun the task with the latest configurations.