The JSON Parsing Component converts a JSON field from a real-time single-table synchronization source into structured table columns. Add it between the Source and Destination nodes in a Data Integration task to parse leaf nodes, JSON objects, and JSON arrays into fixed or dynamic output fields.

Prerequisites

Before you begin, make sure you have:

- A data source configured in Data Integration. For more information, see Data source management.
- A real-time single-table synchronization task. For more information, see Configure a real-time sync task in Data Integration.

The JSON Parsing Component is only available when the synchronization type is Single-table Real-time. For supported data sources, see Supported data sources and synchronization solutions.

Add the JSON Parsing Component

- In the task canvas, enable the Data Processing option.
- Click +Add Node and select JSON Parsing.
- Enter a name and an optional description for the node.
- Configure the component using either fixed fields or dynamic fields.

Configure fixed fields

Fixed fields map specific JSON paths to named output columns. Each rule explicitly defines which part of the JSON to extract and how to output it.

Get the JSON data structure

From Data Sampling (recommended)

Run Data Sampling on the Source before configuring fixed fields. This is the fastest way to load the JSON structure.

- Run Data Sampling on the Source (for example, Kafka) to capture sample records.
- Click Add Fixed Field For JSON Parsing to open the Fixed Field For JSON Parsing dialog.
- In the Select Field list, choose the source field that contains the JSON data.
- Click Get JSON Data Structure to parse and display the JSON tree.

After loading the structure, use Run Simulation to verify that parsed fields match your expectations.

From manual input

If you have not sampled data or the source data is empty:

- Click Add Fixed Field For JSON Parsing to open the dialog.
- Click Edit JSON Text, paste your JSON content into the editor, and click Return to Selection.

Parse nodes from the JSON tree

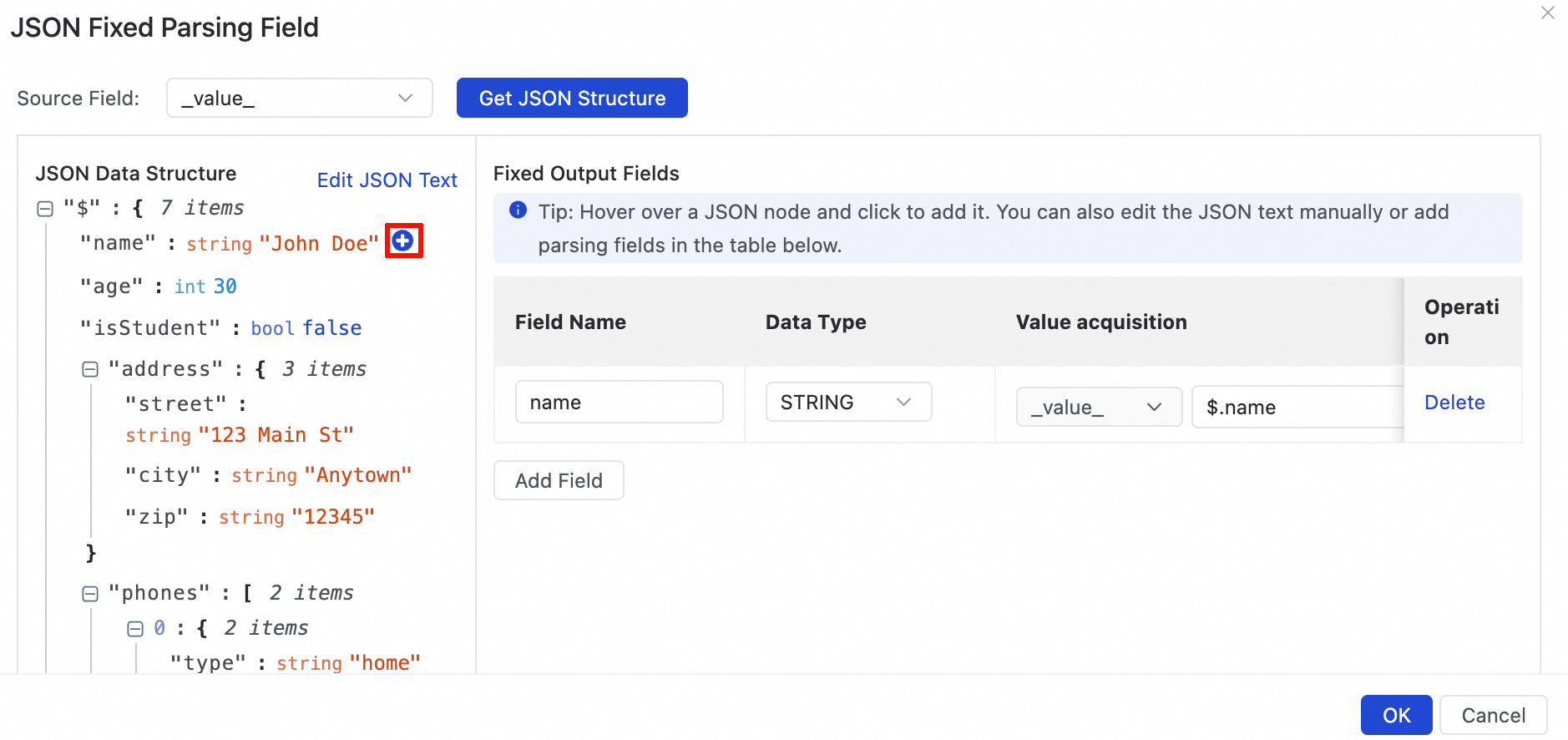

After loading the JSON structure, click the icon next to any field to add a parsing rule to Fixed Output Fields. The behavior depends on the node type.

Leaf nodes

Clicking the icon next to a leaf node automatically adds it as a fixed output field.

Example output from parsing leaf nodes:

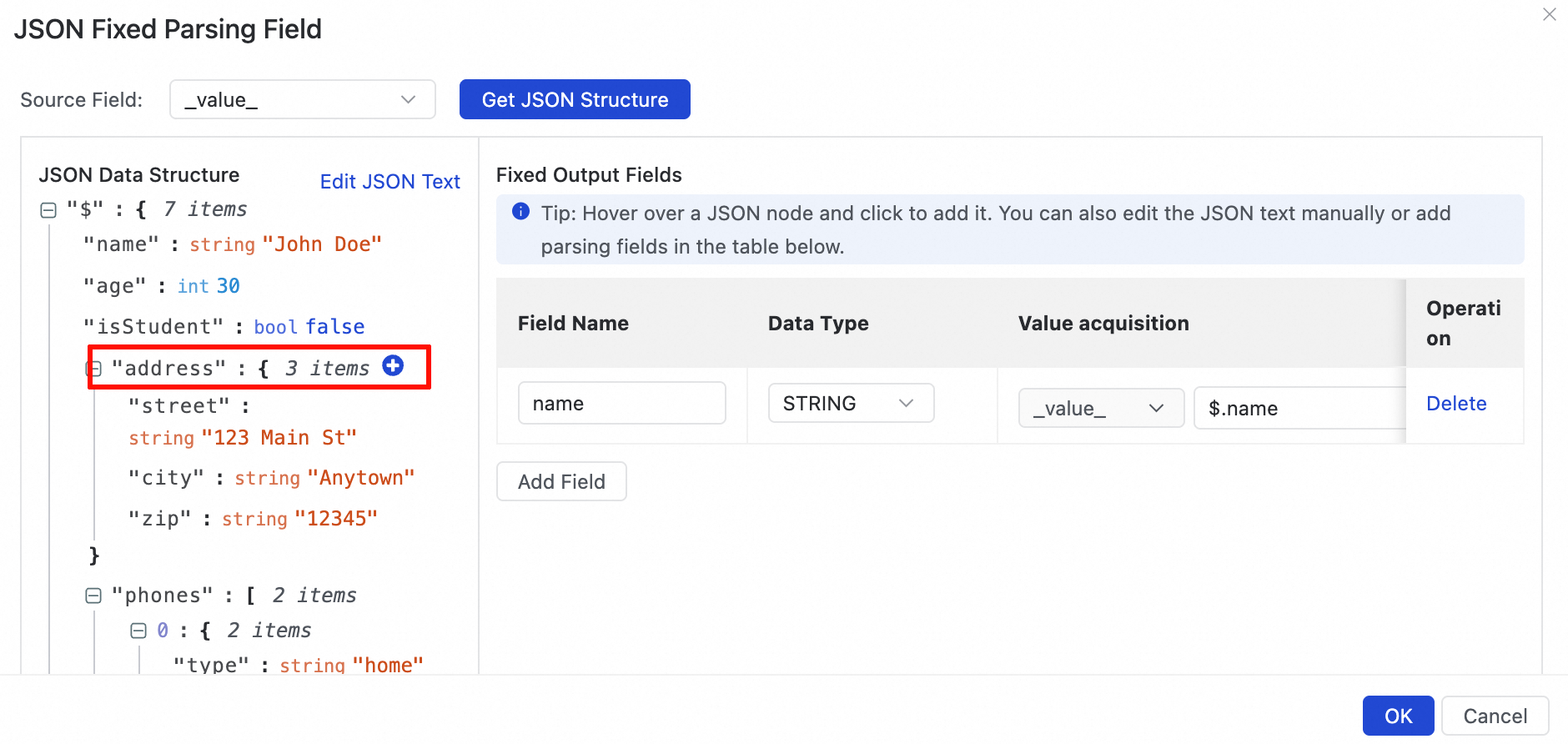

JSON objects

Clicking the icon next to a JSON object (for example, the address field) opens a dialog with two options:

| Option | Output result | Example |
|---|---|---|
| Add each key-value pair in the JSON object as a separate field. The key is used as the field name and is assigned its corresponding value. | The object is flattened into multiple columns. | Input: {"address":{"street":"Main St","city":"Seattle","zip":"98101"}} → Three output columns: street = "Main St", city = "Seattle", zip = "98101" |
| Add the entire JSON object as a single field. The value is the JSON string of the object. | The entire object is stored as a JSON string in one column. | Input: {"address":{"street":"Main St","city":"Seattle","zip":"98101"}} → One output column: address = {"street":"Main St","city":"Seattle","zip":"98101"} |
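To make the difference between the two object options concrete, the following minimal Python sketch reproduces both outputs for the address example. The function names flatten_object and object_as_string are hypothetical, and the compact JSON serialization is an assumption; the component's exact string formatting may differ.

```python
import json


def flatten_object(record, key):
    """Option 1 (illustrative): each key-value pair becomes its own column,
    named after the key."""
    return dict(record[key])


def object_as_string(record, key):
    """Option 2 (illustrative): the whole object becomes one column holding
    its compact JSON text (separator style is an assumption)."""
    return {key: json.dumps(record[key], separators=(",", ":"))}


record = json.loads('{"address":{"street":"Main St","city":"Seattle","zip":"98101"}}')
flat = flatten_object(record, "address")      # three columns: street, city, zip
packed = object_as_string(record, "address")  # one column: address
```

Flattening suits objects with a stable set of keys. Storing the object as a string keeps every key, including keys added later, but leaves the parsing to the destination.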

JSON arrays

Clicking the icon next to a JSON array opens a dialog with two options:

| Option | Output result | Example |
|---|---|---|
| Add the array as a multi-row output. | Each element in the array becomes a separate row. The element is combined with the other fields in the record to form one row per element. | Input: {"id":1,"tags":["a","b","c"]} → Three output rows: (id=1, tags="a"), (id=1, tags="b"), (id=1, tags="c") |
| Add the entire array as a single field. The value is the JSON string of the array. | The entire array is stored as a JSON string in one column. | Input: {"id":1,"tags":["a","b","c"]} → One output column: tags = ["a","b","c"] |
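The multi-row option behaves like an "explode" operation. The following Python sketch illustrates both array options on the tags example; expand_array_rows and array_as_string are hypothetical names, not part of the product, and the output formatting is an assumption.

```python
import json


def expand_array_rows(record, key):
    """Multi-row option (illustrative): emit one row per array element,
    repeating the record's other fields on every row."""
    others = {k: v for k, v in record.items() if k != key}
    return [{**others, key: element} for element in record[key]]


def array_as_string(record, key):
    """Single-field option (illustrative): keep one row and store the whole
    array as compact JSON text."""
    row = {k: v for k, v in record.items() if k != key}
    row[key] = json.dumps(record[key], separators=(",", ":"))
    return row


record = {"id": 1, "tags": ["a", "b", "c"]}
rows = expand_array_rows(record, "tags")   # three rows, id repeated
single = array_as_string(record, "tags")   # one row
```

In this sketch an empty array produces zero rows. This section does not say how the component handles empty arrays, so verify that case with Run Simulation.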

Nested arrays within key-value pairs of the primary array are not recursively parsed.

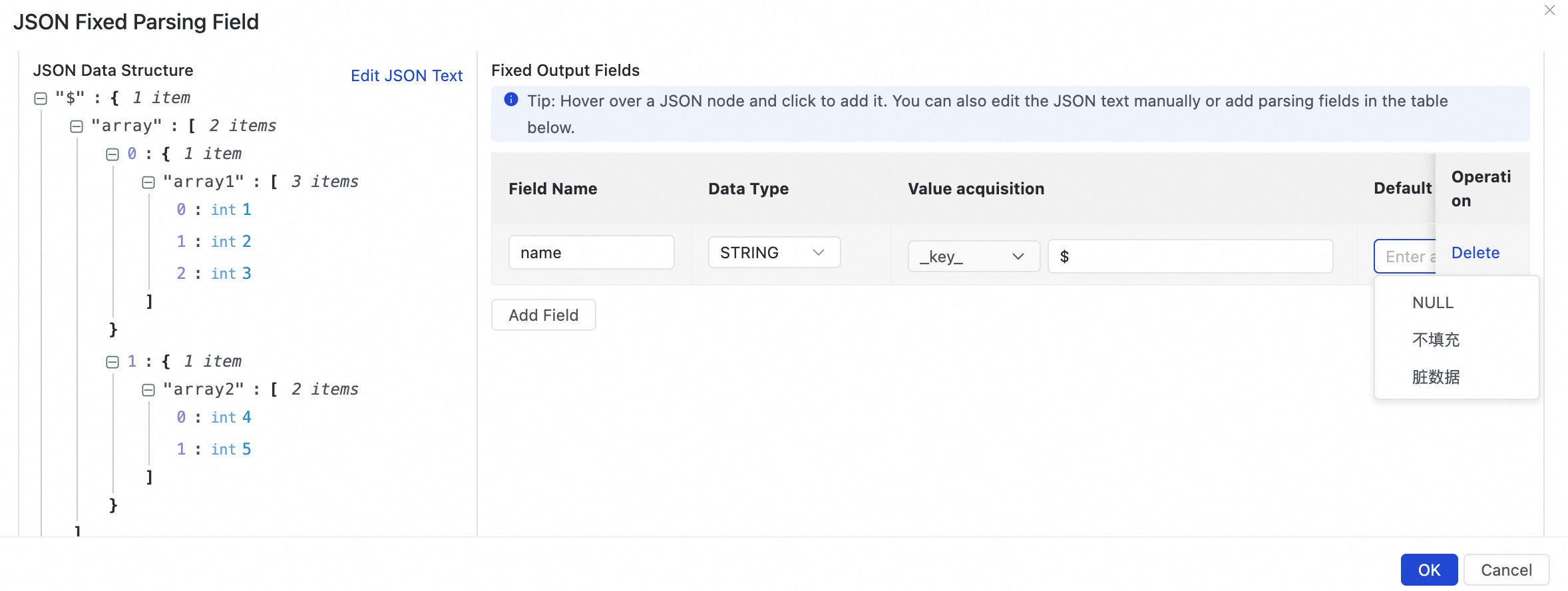

Add a field manually

If you cannot load the JSON structure from Data Sampling or manual input, click Add A Field to define a parsing rule by path.

| Parameter | Description |
|---|---|
| Field Name | The name of the output column referenced by downstream nodes. |
| Value | The JSON path to the value to extract. See JSON path syntax below. |
| Default Value | The behavior when the JSON path cannot be resolved. See Default Value options below. |

JSON path syntax

Use the following syntax to write JSON paths in the Value field:

| Syntax | Description | Example path | Input JSON | Extracted value |
|---|---|---|---|---|
| $ | Root node | $ | {"name":"Alice"} | {"name":"Alice"} (entire document) |
| . | Child node | $.name | {"name":"Alice"} | Alice |
| [number] | Array index, starting from 0 | $.items[0] | {"items":["a","b","c"]} | a |
| [*] | Expand array into multiple rows | $.items[*] | {"items":["a","b","c"]} | Three rows: a, b, c |

Field names in the JSON path can contain only letters, numbers, hyphens (-), and underscores (_).
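The path subset in the table can be modeled with a small evaluator. The following Python sketch is hypothetical (parse_path, evaluate, and PathNotFound are illustrative names, not the component's API). It accepts only $, .name, [number], and [*], enforces the field-name character rule, and returns a list of values so that [*] fans out into one value per output row.

```python
import re

# One path segment: ".name", "[number]", or "[*]".
# Names may contain only letters, digits, hyphens, and underscores.
_SEGMENT = re.compile(r"\.([A-Za-z0-9_-]+)|\[(\d+)\]|\[(\*)\]")


class PathNotFound(Exception):
    """Raised when a path does not resolve against a document (hypothetical)."""


def parse_path(path):
    """Split a path such as "$.items[0]" into tokens: str names, int indexes, "*"."""
    if not path.startswith("$"):
        raise ValueError(f"path must start with '$': {path!r}")
    tokens, pos = [], 1
    while pos < len(path):
        m = _SEGMENT.match(path, pos)
        if m is None:
            raise ValueError(f"invalid segment at offset {pos} in {path!r}")
        name, index, _ = m.groups()
        if name is not None:
            tokens.append(name)
        elif index is not None:
            tokens.append(int(index))
        else:
            tokens.append("*")
        pos = m.end()
    return tokens


def evaluate(doc, path):
    """Return the list of extracted values; [*] yields one value per element."""
    values = [doc]
    for token in parse_path(path):
        resolved = []
        for node in values:
            if token == "*":
                if not isinstance(node, list):
                    raise PathNotFound(path)
                resolved.extend(node)          # fan out: one value per element
            elif isinstance(token, int):
                if not isinstance(node, list) or token >= len(node):
                    raise PathNotFound(path)
                resolved.append(node[token])   # zero-based index
            else:
                if not isinstance(node, dict) or token not in node:
                    raise PathNotFound(path)
                resolved.append(node[token])   # child node by name
        values = resolved
    return values
```

A PathNotFound in this sketch corresponds to the situation that the Default Value option handles.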

Default Value options

These options apply when the JSON path does not exist in a record, for example, after the upstream schema changes.

| Option | Behavior | When to use |
|---|---|---|
| NULL | Sets the field value to NULL. | Use when downstream systems handle NULL explicitly. |
| Do Not Fill | Leaves the field unpopulated. If the destination table has a default value for this column, that default is used instead of NULL. | Use when you want the destination's own default to apply. |
| Dirty Data | Marks the record as dirty data. The task may stop depending on your dirty data tolerance settings. | Use when a missing path indicates a data quality issue that should halt processing. |
| Manually enter a constant | Sets the field to a fixed constant you specify. | Use when a fallback value is known and safe to apply. |
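The four options amount to one decision at write time. The following Python sketch is illustrative only. It assumes that a sentinel MISSING marks an unresolved path, and it models Dirty Data as a hypothetical DirtyDataError exception. How the real task routes dirty records depends on your tolerance settings and is not modeled here.

```python
from enum import Enum

MISSING = object()  # sentinel: the JSON path did not resolve for this record


class DefaultPolicy(Enum):
    NULL = "NULL"
    DO_NOT_FILL = "Do Not Fill"
    DIRTY_DATA = "Dirty Data"
    CONSTANT = "Manually enter a constant"


class DirtyDataError(Exception):
    """Hypothetical signal that the record should be marked as dirty data."""


def fill_field(row, field, value, policy, constant=None):
    """Write value into row[field], applying policy when value is MISSING."""
    if value is not MISSING:
        row[field] = value
    elif policy is DefaultPolicy.NULL:
        row[field] = None           # explicit NULL
    elif policy is DefaultPolicy.DO_NOT_FILL:
        pass                        # column omitted; the destination default may apply
    elif policy is DefaultPolicy.DIRTY_DATA:
        raise DirtyDataError(f"path for {field!r} not found")
    else:
        row[field] = constant       # user-supplied fallback
    return row
```

The sketch shows why Do Not Fill differs from NULL. Do Not Fill omits the column entirely, so the destination's column default can still take effect.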

Configure dynamic fields

Dynamic fields parse all key-value pairs under a specified JSON object path at runtime. Use dynamic fields when the JSON schema changes frequently (for example, when new keys are added to an object) so that the task automatically picks up new fields without reconfiguration.

At runtime, the component processes each key-value pair under the specified JSON object path, using the original key as the field name and converting the value to STRING. New keys added to the object are automatically detected and passed to downstream nodes.

Only the first level of key-value pairs is expanded. Nested objects and arrays are serialized as JSON strings.
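The following Python sketch approximates the runtime behavior described above: only the first level is expanded, and every value is converted to STRING. It is illustrative, not the component's implementation. It assumes that string values pass through unchanged and that every other value (numbers, booleans, nested objects, and arrays) is rendered as compact JSON text. The exact formatting of numbers, booleans, and null is not documented in this section.

```python
import json


def to_string(value):
    """Convert one JSON value to STRING (assumed rule: strings pass through,
    everything else, including nested objects and arrays, becomes compact JSON)."""
    if isinstance(value, str):
        return value
    return json.dumps(value, separators=(",", ":"))


def expand_dynamic(obj):
    """Expand only the first level of key-value pairs; each key becomes a column."""
    return {key: to_string(value) for key, value in obj.items()}


before = json.loads('{"dynamic":{"c1":2,"c2":["a1","b1"]}}')
after = json.loads('{"dynamic":{"c1":2,"c2":["a1","b1"],"c3":{"name":"jack"}}}')
cols_before = expand_dynamic(before["dynamic"])
cols_after = expand_dynamic(after["dynamic"])  # picks up c3 with no config change
```

The second call returns the extra c3 column without any change to the code, which is the behavior dynamic fields are meant to provide.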

Get the JSON data structure

From Data Sampling (recommended)

- Run Data Sampling on the Source.
- Click Add Dynamic Field For JSON Parsing to open the Dynamic Output Field For JSON Parsing dialog.
- Select the source field from the list.
- Click Get JSON Data Structure to load the JSON tree.

From manual input

- Click Add Dynamic Field For JSON Parsing.
- Click Edit JSON Text, enter the JSON, and click Return to Selection.

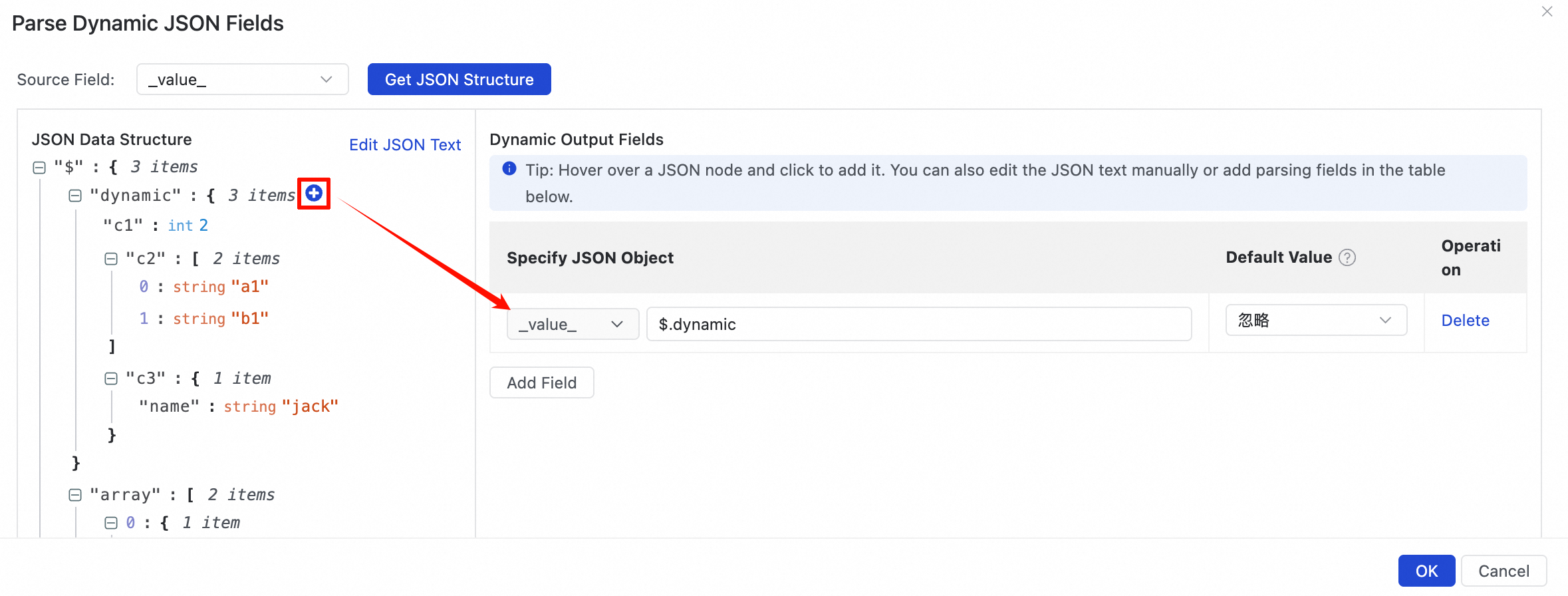

How dynamic parsing works

The following example shows what happens when a new field c3 is added to the dynamic object mid-stream:

| Input JSON | c1 (STRING) | c2 (STRING) | c3 (STRING) |
|---|---|---|---|
| {"dynamic":{"c1":2,"c2":["a1","b1"]}} | 2 | ["a1","b1"] | Not populated |
| {"dynamic":{"c1":2,"c2":["a1","b1"],"c3":{"name":"jack"}}} | 2 | ["a1","b1"] | {"name":"jack"} |

In this example, the dynamic field is configured with the JSON object path $.dynamic.

Add a dynamic field manually

Click Add A Field to specify the JSON object path directly:

| Parameter | Description |
|---|---|
| Specify JSON Object | The path to the JSON object to expand dynamically. Use $ for the root node, . for child nodes, and [number] for array indexes. Field names can contain only letters, numbers, hyphens (-), and underscores (_). |
| Default Value | The behavior when the specified path cannot be resolved. Ignore: skips dynamic parsing for this record. Dirty Data: marks the record as dirty data; the task may stop based on your dirty data tolerance settings. |
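The following Python sketch shows how the two Default Value options for dynamic fields differ. Several parts of it are assumptions. The path is passed as pre-split tokens (for example, ["dynamic"] for $.dynamic, or ["items", 0] for $.items[0]). A value at the path that is not a JSON object is treated as unresolved. Dirty Data is modeled as a hypothetical DirtyDataError. None of these names come from the product.

```python
import json


class DirtyDataError(Exception):
    """Hypothetical signal that a record should be treated as dirty data."""


def locate_object(doc, keys):
    """Follow pre-split path tokens (e.g. ["dynamic"] for $.dynamic).
    Returns the JSON object found there, or None if the path does not resolve."""
    node = doc
    for key in keys:
        if isinstance(key, int):
            if not isinstance(node, list) or not 0 <= key < len(node):
                return None
        elif not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node if isinstance(node, dict) else None


def dynamic_fields(doc, keys, default="Ignore"):
    """Expand the object's first-level pairs to strings, applying the
    Default Value option when the object cannot be found."""
    obj = locate_object(doc, keys)
    if obj is None:
        if default == "Ignore":
            return {}  # skip dynamic parsing for this record
        if default == "Dirty Data":
            raise DirtyDataError(f"object not found at {keys!r}")
        raise ValueError(f"unknown default option: {default!r}")
    return {
        k: v if isinstance(v, str) else json.dumps(v, separators=(",", ":"))
        for k, v in obj.items()
    }
```

With Ignore, the record still flows downstream without any dynamic columns. With Dirty Data, the record is rejected instead.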

Handle duplicate field names

When a dynamically expanded field has the same name as an existing field in the record, choose one of the following policies:

| Policy | Behavior |
|---|---|
| Overwrite | The expanded field's value replaces the existing field's value. |
| Discard | The existing field's value is kept; the expanded field's value is discarded. |
| Error | The task stops and reports an error. |
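The three policies correspond to a simple merge rule. In the following Python sketch, merge_dynamic and DuplicateFieldError are hypothetical names. The Error policy is modeled as an exception that stands in for the task stopping.

```python
class DuplicateFieldError(Exception):
    """Hypothetical: raised under the Error policy, where the task would stop."""


def merge_dynamic(record, expanded, policy):
    """Merge dynamically expanded fields into a copy of the record."""
    merged = dict(record)
    for name, value in expanded.items():
        if name not in merged:
            merged[name] = value        # no conflict: just add the field
        elif policy == "Overwrite":
            merged[name] = value        # expanded value wins
        elif policy == "Discard":
            continue                    # existing value wins
        elif policy == "Error":
            raise DuplicateFieldError(name)
        else:
            raise ValueError(f"unknown policy: {policy!r}")
    return merged


record = {"id": "1", "c1": "old"}
expanded = {"c1": "2", "c2": "x"}
overwritten = merge_dynamic(record, expanded, "Overwrite")
kept = merge_dynamic(record, expanded, "Discard")
```

Non-conflicting fields, such as c2, are added under every policy. The policy only decides what happens to c1.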

What's next

After configuring the Source and JSON Parsing nodes, click Run Simulation to preview the output data and verify that the parsed fields match your expectations.