Data Integration's codeless UI lets you set up periodic batch synchronization tasks without writing code. You select a source and destination, configure field mappings and scheduling properties, then publish — no scripts required. This topic covers the general configuration flow for a single-table batch synchronization task. Settings vary by data source; for source-specific details, see Supported data sources and synchronization solutions.

The codeless UI does not support all advanced features. If you need fine-grained control, you can convert the task to a script and configure it in the code editor at any time. If a data source does not support the codeless UI, the UI displays a message prompting you to switch. See Code editor configuration.

Prerequisites

Before you begin, ensure that you have:

- Data sources configured in Data Source Management in DataWorks. See Data source list for supported sources and configuration steps.

- A resource group of suitable specification purchased and associated with your workspace. See Use a serverless resource group.

- Network connectivity established between the resource group and your data sources. See Configure network connectivity.

Step 1: Create a Data Integration node

Data Studio (new version)

- Log on to the DataWorks console. In the left-side navigation pane, choose Data Development and O&M > Data Studio. Select your workspace from the drop-down list and click Go to Data Studio.

- Create a workflow. See Workflows.

- Create a Data Integration node using one of the following methods:

  - Method 1: In the workflow list, click the icon in the upper-right corner and choose Create Node > Data Integration.

  - Method 2: Double-click the workflow name and drag the Data Integration node from the Data Integration directory to the workflow editor on the right.

- Configure the source and destination types, select Single Table Batch Sync, and click OK.

Data Studio (legacy version)

- Log on to the DataWorks console. In the left-side navigation pane, choose Data Development and O&M > Data Development. Select your workspace from the drop-down list and click Go to Data Development.

- Create a workflow. See Create a workflow.

- Create a batch synchronization node using one of the following methods:

  - Method 1: Expand the workflow, right-click Data Integration, and choose Create Node > Batch Synchronization.

  - Method 2: Double-click the workflow name and drag the Batch Synchronization node from the Data Integration directory to the workflow editor on the right.

- Follow the prompts to finish creating the node.

Step 2: Configure data sources and runtime resources

-

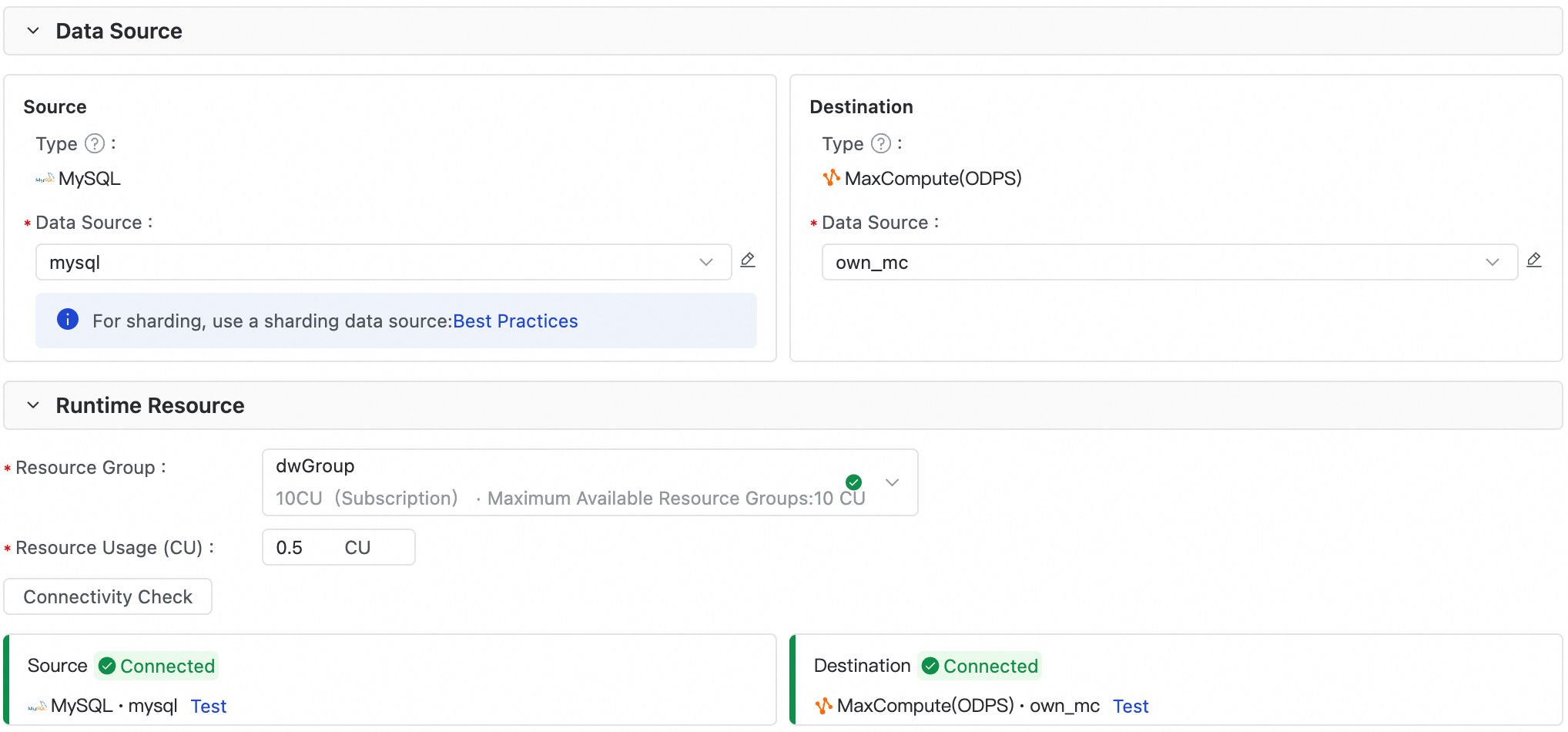

In the Source and Destination sections, select the source and destination objects to read from and write to.

-

In the Runtime Resources section, select a resource group and allocate CUs. If the task fails with an out-of-memory (OOM) error, increase the CU value. For recommended configurations, see Data Integration performance metrics.

-

Verify that both the source and destination pass the connectivity test. If connectivity fails, configure the network connection as prompted or see Configure network connectivity.

If your resource group doesn't appear in the list, confirm it's associated with the workspace. See Use a serverless resource group.

Step 3: Configure the synchronization solution

Plugin configurations vary. The following sections describe common settings. For plugin-specific support and configuration details, see Data source list.

Source

Configure the source table and fill in the required parameters.

| Setting | Required | Description |
|---|---|---|
| Data filtering | No | Filter source rows using a WHERE clause condition (omit the WHERE keyword). Only rows that match the condition are synchronized. If no filter is set, the task synchronizes the full table. Configure a filter when you need incremental synchronization. For example, gmt_create >= '${bizdate}' synchronizes only rows whose gmt_create value is on or after the date assigned to bizdate. Assign a value to the variable in the scheduling properties. See Supported formats of scheduling parameters and Scenario: Configure a batch synchronization task for incremental data. |
| Sharding key | No | The source field to use as the sharding key (splitPk). The task splits data into subtasks based on this key for concurrent, batched reads. Configure a sharding key to improve read throughput on large tables. Use the table's primary key: primary keys are usually distributed evenly, which prevents data skew across shards. The sharding key must be an integer; string, float, date, and other types are not supported. If an unsupported type is specified, DataWorks ignores it and falls back to a single channel. If no sharding key is set, the task uses a single channel. Not all plugins support sharding key configuration; check your plugin's documentation for details. |

The method for configuring incremental synchronization varies by data source and plugin.
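
To illustrate why an evenly distributed integer key suits sharding, the following Python sketch splits an inclusive key range into contiguous shards and turns each shard into a filter condition. This page does not describe how DataWorks computes its splits, so the function names and the splitting strategy here are assumptions made for illustration only.

```python
def split_integer_range(min_key: int, max_key: int, shards: int) -> list[tuple[int, int]]:
    """Split the inclusive range [min_key, max_key] into contiguous, near-equal ranges.

    Hypothetical helper: shows range-based splitting on an integer key,
    not the actual DataWorks splitting algorithm.
    """
    if shards < 1:
        raise ValueError("shards must be at least 1")
    if min_key > max_key:
        raise ValueError("min_key must not exceed max_key")

    total = max_key - min_key + 1
    shards = min(shards, total)          # never produce an empty shard
    base, extra = divmod(total, shards)  # the first `extra` shards get one more key

    ranges = []
    start = min_key
    for index in range(shards):
        size = base + (1 if index < extra else 0)
        end = start + size - 1
        ranges.append((start, end))
        start = end + 1
    return ranges


def shard_conditions(column: str, ranges: list[tuple[int, int]]) -> list[str]:
    """Render one WHERE-style condition per shard (without the WHERE keyword)."""
    return [f"{column} >= {low} AND {column} <= {high}" for low, high in ranges]


if __name__ == "__main__":
    for condition in shard_conditions("id", split_integer_range(1, 10, 3)):
        print(condition)
```

If the key values cluster in a small part of the range, some shards receive far more rows than others, which is the data skew that the primary-key recommendation is meant to avoid.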

Data processing

Data processing is available in the new version of Data Studio. To use it in the legacy version, select Use New Version (with data processing feature) when creating the task. To access all features, upgrade your workspace to the new version. See Data Studio upgrade guide.

Data processing lets you transform source data — using string replacement, AI-assisted processing, or data embedding — before writing it to the destination table.

- Click the switch to enable data processing.

- In the data processing list, click Add Node and select a processing type: Replace String, AI Process, or Data Embedding. Add multiple nodes as needed. DataWorks runs them in sequential order.

- Configure the processing rules as prompted. For details on AI-assisted processing and data embedding, see Data processing.

Data processing consumes additional computing resources, increasing resource overhead and task runtime. Keep processing logic as simple as possible to avoid affecting synchronization throughput.
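
Because processing nodes run in sequence, their order can change the result. The Python sketch below models a chain of string-replacement steps applied to one record. The names replace_string and run_pipeline are hypothetical and do not correspond to any DataWorks API; the sketch only shows the ordering effect.

```python
from typing import Callable

Record = dict[str, str]
Step = Callable[[Record], Record]


def replace_string(field: str, old: str, new: str) -> Step:
    """Build a step that replaces `old` with `new` in one field (hypothetical helper)."""
    def step(record: Record) -> Record:
        updated = dict(record)  # copy so the input record is left untouched
        if field in updated:
            updated[field] = updated[field].replace(old, new)
        return updated
    return step


def run_pipeline(record: Record, steps: list[Step]) -> Record:
    """Apply each step in list order, feeding the output of one into the next."""
    for step in steps:
        record = step(record)
    return record


if __name__ == "__main__":
    expand = replace_string("city", "NYC", "New York")
    qualify = replace_string("city", "New York", "New York City")
    print(run_pipeline({"city": "NYC"}, [expand, qualify]))
    print(run_pipeline({"city": "NYC"}, [qualify, expand]))
```

Running the two steps in the opposite order yields a different value, so it is worth checking the node order whenever one rule depends on the output of another.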

Destination

Configure the destination table and fill in the required parameters.

| Setting | Required | Description |
|---|---|---|
| Pre- and post-synchronization statements | No | Execute SQL statements on the destination before writing data (pre-sync) and after the write completes (post-sync). Configure a pre-sync statement when you need to prepare the destination table before loading. For example, use truncate table tablename in the Pre-import Preparation Statement field to clear existing data before writing new rows. MySQL Writer supports preSql and postSql for this purpose. |
| Write mode | Depends on plugin | Specify how to handle write conflicts, for example, when a primary key already exists in the destination. The available options depend on the destination data source and writer plugin. See your plugin's documentation for details. |
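
The effect of a pre-sync statement and of different write modes can be tried locally. The sketch below uses Python's built-in sqlite3 module as a stand-in destination. The orders table, the load_rows function, and the write mode names are assumptions for illustration. SQLite has no TRUNCATE TABLE statement, so DELETE FROM plays the role of the truncate statement mentioned above, and real writer plugins may offer different conflict options.

```python
import sqlite3

# Hypothetical write-mode names mapped to SQLite conflict handling.
WRITE_MODES = {
    "insert": "INSERT",              # fail on a primary key conflict
    "ignore": "INSERT OR IGNORE",    # keep the existing row
    "replace": "INSERT OR REPLACE",  # overwrite the existing row
}


def load_rows(conn, rows, write_mode="insert", pre_sql=None, post_sql=None):
    """Run pre_sql, write the rows, then run post_sql, all in one transaction."""
    verb = WRITE_MODES[write_mode]
    with conn:  # commits on success, rolls back if any statement fails
        if pre_sql:
            conn.execute(pre_sql)
        conn.executemany(f"{verb} INTO orders (id, status) VALUES (?, ?)", rows)
        if post_sql:
            conn.execute(post_sql)


def snapshot(conn):
    """Return the table contents ordered by primary key."""
    return conn.execute("SELECT id, status FROM orders ORDER BY id").fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
load_rows(conn, [(1, "stale")])

# "ignore" keeps the existing row 1 and adds row 2.
load_rows(conn, [(1, "fresh"), (2, "fresh")], write_mode="ignore")
after_ignore = snapshot(conn)

# "replace" overwrites row 1.
load_rows(conn, [(1, "fresh")], write_mode="replace")
after_replace = snapshot(conn)

# The pre-sync statement clears the table before the new rows are written.
load_rows(conn, [(3, "fresh")], pre_sql="DELETE FROM orders")
after_pre_sql = snapshot(conn)
```

Clearing the table in a pre-sync statement makes a rerun of the same task idempotent, at the cost of rewriting the full table each time.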

Field mapping

After selecting the source and destination, define the mapping between source and destination columns. The task writes data from each source field to its mapped destination field.

- Source fields not mapped to any destination field are not synchronized.

- If the automatic mapping is incorrect, you can adjust it manually.

- To exclude a field, delete the connecting line between the source and destination fields.

Type mismatches between source and destination fields during synchronization can produce dirty data and cause write failures. Set the tolerance for dirty data in Advanced Settings (the next step).

Map fields by name or by row position. Additional options:

- Assign values to destination fields: In the Source Field column, click Add Field to add constants, scheduling parameters (for example, '${scheduling_parameter}'), or built-in variables (for example, '#{built_in_variable}#'). For scheduling parameter syntax, see Supported formats of scheduling parameters.

- Edit source fields: Click Manually Edit Mapping to apply source database functions to fields (for example, Max(id) to synchronize only the maximum value), or to manually add fields that weren't retrieved during auto-mapping.

  Note: MaxCompute Reader does not support source database functions.

The following built-in variables are available for select plugins:

| Built-in variable | Description | Supported plugins |
|---|---|---|
| '#{DATASOURCE_NAME_SRC}#' | Name of the source data source | MySQL Reader, MySQL (Sharded) Reader, PolarDB Reader, PolarDB (Sharded) Reader, PostgreSQL Reader, PolarDB-O Reader, PolarDB-O (Sharded) Reader |
| '#{DB_NAME_SRC}#' | Name of the database where the source table resides | MySQL Reader, MySQL (Sharded) Reader, PolarDB Reader, PolarDB (Sharded) Reader, PostgreSQL Reader, PolarDB-O Reader, PolarDB-O (Sharded) Reader |
| '#{SCHEMA_NAME_SRC}#' | Name of the schema where the source table resides | PolarDB Reader, PolarDB (Sharded) Reader, PostgreSQL Reader, PolarDB-O Reader, PolarDB-O (Sharded) Reader |
| '#{TABLE_NAME_SRC}#' | Name of the source table | MySQL Reader, MySQL (Sharded) Reader, PolarDB Reader, PolarDB (Sharded) Reader, PostgreSQL Reader, PolarDB-O Reader, PolarDB-O (Sharded) Reader |

Step 4: Configure advanced settings

Advanced Settings was called Channel Control in earlier versions.

Use advanced settings to control synchronization behavior. For parameter details, see Relationship between concurrency and throttling for batch synchronization.

| Parameter | Required | Description |
|---|---|---|
| Expected maximum concurrency | No | The maximum number of threads used to concurrently read from the source or write to the destination. Due to resource constraints, actual runtime concurrency may not reach this value. Fees for the debugging resource group are based on actual concurrency, not the configured value. Task scheduling fees are based on the number of batch synchronization tasks, not concurrency. See Performance metrics. |
| Synchronization rate | No | Controls data transfer speed. Throttling caps the extraction rate to protect the source database from excessive load; the minimum speed limit is 1 MB/s. No throttling lets the task run at the maximum transfer rate the hardware supports, within the configured concurrency limit. The traffic metric reflects throughput within Data Integration itself, not actual network interface card (NIC) traffic. NIC traffic is typically one to two times the channel traffic, depending on the serialization overhead of the data source's transfer protocol. |
| Policy for dirty data records | No | Dirty data refers to data records that could not be written to the destination due to exceptions, such as type conflicts or constraint violations. By default, dirty data is allowed and does not affect task execution. Set the tolerance to 0 to fail the task if any dirty data is generated. Set a threshold to allow dirty data up to that limit: records within the threshold are skipped and not written to the destination; if the limit is exceeded, the task fails. Excessive dirty data can slow overall synchronization. |
| Distributed execution | No | Splits task slices into multiple processes for concurrent execution, breaking the single-process bottleneck and improving throughput. Enable this option when you have high throughput requirements and sufficient resources. Requires concurrency of 8 or greater. Disable this option if an OOM error occurs at runtime. |
| Time zone | No | Set the source time zone to ensure correct timestamp conversion when synchronizing data across time zones. |

Overall synchronization speed depends not only on the settings above, but also on source database performance and network conditions. For tuning guidance, see Accelerate or rate-limit batch synchronization tasks.
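
The three dirty data policies described in the table (unlimited, zero tolerance, and a threshold) can be modeled with a short Python sketch. The function write_with_tolerance and the exception class are hypothetical names, and treating TypeError and ValueError as dirty records is an assumption standing in for the type conflicts and constraint violations a real writer would report.

```python
from typing import Callable, Iterable, Optional


class DirtyDataLimitExceeded(RuntimeError):
    """Raised when the number of dirty records goes over the allowed limit."""


def write_with_tolerance(
    records: Iterable[str],
    write: Callable[[str], object],
    max_dirty: Optional[int] = None,
) -> tuple[int, int]:
    """Write records one by one and return (written, dirty).

    max_dirty=None allows any number of dirty records, 0 fails on the first
    one, and N skips up to N dirty records before failing.
    """
    written = dirty = 0
    for record in records:
        try:
            write(record)
            written += 1
        except (TypeError, ValueError):
            dirty += 1  # the record is skipped and not written
            if max_dirty is not None and dirty > max_dirty:
                raise DirtyDataLimitExceeded(
                    f"{dirty} dirty records exceed the limit of {max_dirty}"
                )
    return written, dirty


if __name__ == "__main__":
    sink: list[int] = []
    # int() raises ValueError for "x", which this sketch counts as dirty data.
    print(write_with_tolerance(["1", "x", "2"], lambda r: sink.append(int(r)), max_dirty=1))
    print(sink)
```

A threshold lets a run finish despite a few bad rows, but the skipped rows are missing from the destination, so the dirty count should still be reviewed after each run.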

Step 5: Configure scheduling properties

For a periodically scheduled task, click Scheduling in the right-side panel to configure scheduling parameters, scheduling policy, schedule time, and scheduling dependencies.

- For the new version of Data Studio, see Node scheduling (new version).

- For the legacy version, see Node scheduling configuration (legacy version).

For scheduling parameter usage examples, see Typical scenarios of using scheduling parameters in Data Integration.

Step 6: Test and publish the task

Configure debugging parameters

On the task configuration page, click Run Configuration in the right-side panel.

| Parameter | Description |
|---|---|
| Resource group | Select a resource group connected to the data source. |
| Script parameters | Assign values to placeholder parameters. For example, if the task uses ${bizdate}, enter a date in yyyymmdd format. |
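
To preview what a filter condition looks like once a debugging value is assigned, the following Python sketch validates a yyyymmdd value and substitutes it into a ${name} placeholder. The helper names are hypothetical and the substitution is a local approximation; DataWorks performs the real replacement at run time.

```python
import re
from datetime import datetime

PLACEHOLDER = re.compile(r"\$\{(\w+)\}")


def validate_yyyymmdd(value: str) -> str:
    """Return value unchanged if it is a real calendar date in yyyymmdd format."""
    if len(value) != 8 or not value.isdigit():
        raise ValueError(f"expected 8 digits in yyyymmdd format, got {value!r}")
    datetime.strptime(value, "%Y%m%d")  # raises ValueError for dates such as 20240230
    return value


def fill_placeholders(template: str, values: dict[str, str]) -> str:
    """Replace every ${name} in template with its assigned value."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value assigned to ${{{name}}}")
        return values[name]
    return PLACEHOLDER.sub(substitute, template)


if __name__ == "__main__":
    condition = "gmt_create >= '${bizdate}'"
    print(fill_placeholders(condition, {"bizdate": validate_yyyymmdd("20240115")}))
```

Failing fast on an unassigned placeholder mirrors the situation to avoid in a debug run: a task that starts with a literal ${bizdate} left in its filter.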

Run the task

Click the Run icon in the toolbar to run and debug the task. After it completes, query the destination table to verify the data was synchronized correctly.

Publish the task

After the task runs successfully, if it needs to be scheduled periodically, click the icon on the node configuration page to publish it to the production environment. See Publish tasks.

Limitations

- Single-table batch synchronization tasks can only be configured in Data Development.

- Some data sources do not support the codeless UI. If a message indicates that the codeless UI is not supported after you select a data source, click the icon in the toolbar to switch to the code editor. See Code editor configuration.

- The codeless UI does not support all advanced features. For fine-grained control, convert the task to a script and configure it in the code editor.

Next steps

After publishing the task, go to Operation Center to monitor its schedule and execution. For task management, status monitoring, and resource group O&M, see O&M for batch synchronization tasks.