Channel control settings let you tune how a data synchronization node reads from a source and writes to a destination. Configuring these settings correctly keeps your source and destination stable, improves throughput, and reduces unexpected failures.

This topic covers five settings:

Parallelism — the number of parallel threads the node runs

Data transmission rate — the per-node bandwidth cap

Distributed execution — whether slices run across multiple Elastic Compute Service (ECS) instances

Dirty data limit — how many write failures the node tolerates before stopping

Connection quotas — the maximum number of source and destination connections

Parallelism

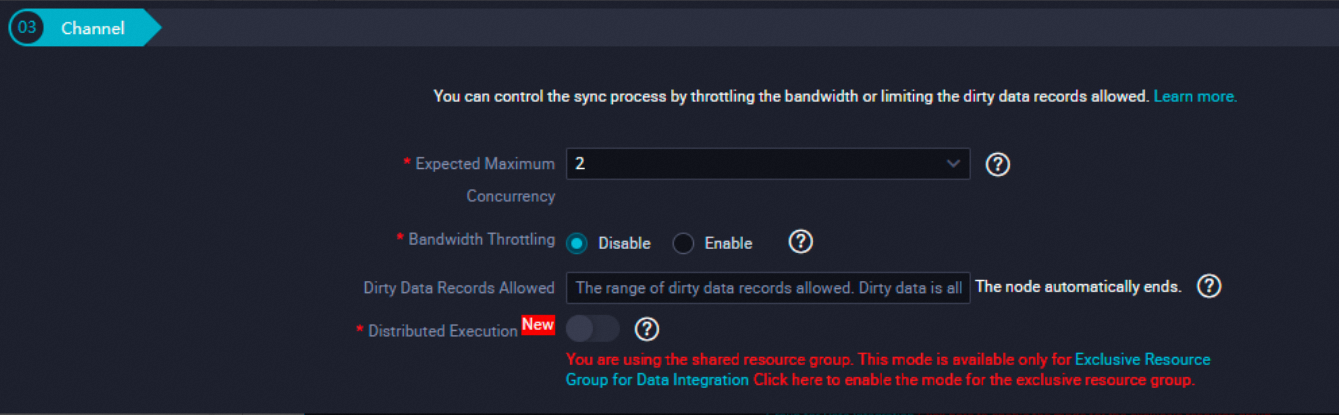

Parallelism sets the maximum number of parallel threads that read from a source or write to a destination when a node runs. The Expected Maximum Concurrency parameter in the codeless UI specifies this maximum.

Data Integration tries to match the actual thread count to the configured maximum, but the actual count can be lower depending on the source type, data distribution, and resource group capacity.

When actual threads are fewer than configured

| Scenario | Why threads are limited |
|---|---|
| Relational database (MySQL, PolarDB, SQL Server, PostgreSQL, Oracle) with no shard key or an invalid shard key | Without a valid shard key, the node cannot split data into shards, so only one thread reads the data. Use an integer-type field as the shard key. Oracle also accepts a time-type field. |
| PolarDB for Xscale (PolarDB-X) | The node shards data based on the physical topology of logical tables. If the number of physical table shards is less than the configured maximum, fewer threads run. |
| File-based sources (Object Storage Service (OSS), FTP, Hadoop Distributed File System (HDFS), AWS S3) | The node reads one file per thread. If the number of files is less than the configured maximum, fewer threads run. |
| Extremely uneven data distribution | After high-volume shards finish, fewer shards remain active. In the later stages of a run, the active thread count drops below the configured maximum. |

If you use the shared resource group for Data Integration (debugging), fees are charged based on the actual number of threads run, not the configured maximum.
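The first three scenarios in the table share one rule of thumb: the thread count cannot exceed the number of independent pieces the source can be split into. The following is a minimal sketch of that rule, not Data Integration's actual scheduling logic. The function name `effective_threads` and its parameters are hypothetical and exist only for illustration.

```python
def effective_threads(configured_max: int, available_splits: int) -> int:
    """Estimate an upper bound on the threads a node can actually run.

    configured_max   -- the Expected Maximum Concurrency value
    available_splits -- hypothetical count of independent pieces: files for a
                        file-based source, physical table shards for PolarDB-X,
                        or 1 for a relational source without a valid shard key
    """
    if configured_max < 1 or available_splits < 1:
        raise ValueError("both arguments must be at least 1")
    # A thread needs its own piece of data to read, so the smaller value wins.
    return min(configured_max, available_splits)


# Eight threads configured, but the source directory holds only three files.
threads_for_three_files = effective_threads(configured_max=8, available_splits=3)
```

Under these assumptions, a node configured for eight threads that reads three files runs at most three threads, and on the shared resource group it is billed for the threads that actually run.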

Best practices

Match parallelism to your data volume. For small datasets, configure a low thread count. A lower setting requires fewer resources, which lets the node acquire fragment resources faster and keeps run durations predictable.

Avoid running multiple nodes on the same data source in parallel. Stagger the schedule or serialize the runs to distribute read load and keep resource group utilization balanced.

Resource allocation follows first in, first out (FIFO). Nodes committed earlier get resources first. A high thread count increases resource consumption and can delay other nodes waiting in the queue.

Data transmission rate

The data transmission rate setting caps the bandwidth a node uses. Used together with parallelism, it keeps read and write workloads on the source and destination within safe limits.

How throttling works

When you enable throttling and set a maximum transmission rate, Data Integration calculates the per-slice rate as follows:

Rate per slice = ceil(maximum transmission rate / maximum parallel threads)

Minimum rate per slice = 1 MB/s

Actual maximum rate = actual threads run × rate per slice

A *slice* is the portion of a data synchronization node assigned to one parallel thread.

Examples (maximum parallel threads = 5):

| Maximum rate | Rate per slice | Actual threads = 5 | Actual threads = 1 |
|---|---|---|---|
| 5 MB/s | ceil(5 / 5) = 1 MB/s | 5 MB/s (matches config) | 1 MB/s (below config) |
| 3 MB/s | ceil(3 / 5) = 1 MB/s | 5 MB/s (exceeds config) | 1 MB/s (below config) |
| 10 MB/s | ceil(10 / 5) = 2 MB/s | 10 MB/s (matches config) | 2 MB/s (below config) |

Because the per-slice rate is rounded up to a whole number and cannot fall below 1 MB/s, the actual total rate can exceed the configured maximum. For example, a 3 MB/s limit with 5 threads gives each slice 1 MB/s, so the node can reach 5 MB/s if all 5 threads run at full capacity.
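The throttling arithmetic can be reproduced with a short calculation. This is a sketch that follows the formulas given in this section. The function and constant names are hypothetical and are not part of any Data Integration API.

```python
import math

# Minimum rate per slice stated in this topic, in MB/s.
MIN_RATE_PER_SLICE_MBPS = 1


def rate_per_slice(max_rate_mbps: float, max_threads: int) -> int:
    """Per-slice rate: the configured rate divided by the configured threads,
    rounded up, and never below the 1 MB/s minimum."""
    return max(MIN_RATE_PER_SLICE_MBPS, math.ceil(max_rate_mbps / max_threads))


def actual_max_rate(max_rate_mbps: float, max_threads: int, actual_threads: int) -> int:
    """Total rate the node can reach when every running thread is at full speed."""
    return actual_threads * rate_per_slice(max_rate_mbps, max_threads)


# Reproduce the example rows above (maximum parallel threads = 5).
for configured in (5, 3, 10):
    print(configured, actual_max_rate(configured, 5, 5), actual_max_rate(configured, 5, 1))
```

Running the loop gives the same figures as the examples table, including the 3 MB/s case where five running threads reach 5 MB/s in total.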

When throttling is disabled

Without throttling, each slice runs at the maximum rate the hardware and configurations support. The actual rate depends on the source and destination specifications, network capacity, thread count, and memory allocation.

Distributed execution

By default, all parallel threads for a node run on a single ECS instance. In distributed execution mode, Data Integration splits the node into slices and distributes them across multiple ECS instances, using fragment resources that would otherwise sit idle.

Enable distributed execution when you have high throughput requirements and your exclusive resource group contains multiple ECS instances.

Limits

Distributed execution requires a maximum parallel thread count of 8 or more.

If your exclusive resource group has only one ECS instance, distributed execution provides no benefit because all slices still run on the same machine.

For small datasets, use a low thread count on a single ECS instance instead. Distributed execution adds coordination overhead that is not justified for low-volume runs.

A large number of threads in distributed mode generates a proportionally high number of access requests to the source. Before enabling distributed execution, assess whether the source can handle the additional load.

Maximum number of dirty data records

A dirty data record is a single data record that fails to write to the destination. The dirty data limit controls how many write failures a node tolerates before stopping.

Why dirty data matters

When you synchronize data to a relational database, the node writes records in batches by default. If a write failure occurs, the node switches from batch writes to record-by-record writes to isolate the failing record and let the rest succeed. This mode change significantly reduces throughput.

For example, if a destination table has a primary key constraint but the source does not, duplicate rows from the source cause primary key violations on the destination. Each violation is a dirty data record. If violations occur in large numbers, the one-by-one write mode can slow synchronization to the point where the run takes far longer than expected.
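The fallback from batch writes to record-by-record writes can be illustrated with a small, self-contained example. This is a sketch of the general pattern, assuming a local SQLite database as a stand-in destination. It is not the writer code that Data Integration runs, and the table name `target` and the function `write_batch` are hypothetical.

```python
import sqlite3


def write_batch(conn: sqlite3.Connection, rows: list) -> list:
    """Write rows as one batch; if the batch fails, retry row by row.

    Returns the rows that could not be written (the dirty data records).
    """
    insert_sql = "INSERT INTO target (id, name) VALUES (?, ?)"
    dirty = []
    try:
        # "with conn" commits on success and rolls back the whole batch on error.
        with conn:
            conn.executemany(insert_sql, rows)
    except sqlite3.IntegrityError:
        # Slow path: one statement and one transaction per record, so that only
        # the failing records are rejected and the rest still succeed.
        for row in rows:
            try:
                with conn:
                    conn.execute(insert_sql, row)
            except sqlite3.IntegrityError:
                dirty.append(row)
    return dirty


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE target (id INTEGER PRIMARY KEY, name TEXT)")

# The third row repeats primary key 1, as a source without a primary key might.
dirty_rows = write_batch(conn, [(1, "a"), (2, "b"), (1, "duplicate")])
print(dirty_rows)
```

In this sketch a single duplicate key turns one batch statement into three individual statements, which is the same kind of slowdown the paragraph above describes at a much larger scale.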

Dirty data settings

| Setting | Behavior |
|---|---|
| Blank (not configured) | Dirty data records are allowed; the node continues running regardless of the number of write failures |
| 0 | No dirty data is allowed; the node fails immediately when the first write failure occurs |
| Positive integer N | Up to N dirty data records are allowed; the node fails if the count exceeds N |
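The three settings reduce to a single comparison. The following sketch encodes the behavior described in the table, with `None` standing in for a blank setting. The function name `should_fail` is hypothetical.

```python
from typing import Optional


def should_fail(dirty_count: int, limit: Optional[int]) -> bool:
    """Return True if a node with this many dirty records should stop.

    limit is None -- blank setting: any number of dirty records is tolerated
    limit == 0    -- the first dirty record fails the node
    limit == N    -- the node fails once the count exceeds N
    """
    if limit is None:
        return False
    return dirty_count > limit


print(should_fail(1, None), should_fail(1, 0), should_fail(10, 10), should_fail(11, 10))
```

Note that with a limit of N the node still succeeds at exactly N dirty records and fails at N + 1.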

Best practices

Set the limit to `0` for data-critical destinations such as relational databases (MySQL, SQL Server, PostgreSQL, Oracle, PolarDB, PolarDB-X), Hologres, ClickHouse, and AnalyticDB for MySQL. This surfaces data quality issues immediately rather than letting them accumulate silently.

Leave the limit blank for destinations with low data-quality requirements. This reduces the operational overhead of handling occasional dirty records.

Configure retry on error for nodes that support it. This prevents transient environment issues from permanently blocking a run.

Set up alert rules on node failure or delay for key nodes to detect issues quickly.

Quota for the number of connections

Connection quotas limit how many concurrent source and destination connections a data synchronization solution can use.

Parameters

| Parameter | Default | Maximum | Description |
|---|---|---|---|
| Maximum connections for data write to destination | 3 | 32 | Maximum parallel threads for writing data to a destination in a real-time synchronization node. Set this based on the resource group specifications and the destination's write capacity. |
| Maximum connections for data read from source (Java Database Connectivity (JDBC)) | 15 | - | Upper limit on JDBC connections to the source when batch synchronization nodes run full data reads. This prevents parallel node runs from exhausting the source's connection pool. Set this based on the source's available resources. |

Troubleshooting: nodes stuck in Submit state

If batch synchronization nodes generated by a data synchronization solution are stuck in the Submit state, the JDBC connection limit is likely too low to serve all parallel node runs.

To resolve this:

Adjust the scheduling time of other nodes that use the same data source to reduce concurrent connection demand.

Increase the maximum number of connections for data read from the source.
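To estimate whether the read quota is the bottleneck, you can compare the connections that concurrently scheduled nodes need against the quota. The following is a deliberately simplified model, not how Data Integration admits nodes. It assumes each node needs a fixed number of JDBC connections and that nodes are considered in submission order. The function name `nodes_left_waiting` is hypothetical.

```python
def nodes_left_waiting(requested_connections: list, quota: int = 15) -> list:
    """Return the indexes of nodes that cannot get connections under the quota.

    requested_connections -- connections each node needs, in submission order
    quota                 -- read connection limit (15 is the default in the table above)
    """
    in_use = 0
    waiting = []
    for index, needed in enumerate(requested_connections):
        if in_use + needed <= quota:
            in_use += needed       # the node fits within the remaining quota
        else:
            waiting.append(index)  # the node has to wait for connections
    return waiting


# Three nodes scheduled at the same time, needing 8, 6, and 4 connections.
print(nodes_left_waiting([8, 6, 4]))            # default quota of 15
print(nodes_left_waiting([8, 6, 4], quota=20))  # after raising the quota
```

In this model the third node waits under the default quota and runs once the quota is raised to 20. Staggering its schedule so that it starts after the first node finishes would have the same effect.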