Configure a batch synchronization node to synchronize only incremental data

By default, an offline sync task transfers every record from the source table on each run, which is a full sync. For large tables, this wastes compute resources and time. To reduce cost and run time, configure a filter condition that selects only the records that changed since the last run. Combine the filter with a scheduling parameter such as $bizdate so that the time range shifts automatically on each scheduled run, which enables incremental synchronization without manual updates.

Limitations

Some data sources, such as HBase and OTSStream, do not support incremental synchronization.

The parameter name for the filter condition varies by Reader plugin. Check the documentation for your specific Reader plugin before configuring. For a full list of supported data sources and plugins, see Supported data sources and read/write plugins.

| Database type | Incremental sync parameter | Supported syntax |
| --- | --- | --- |
| MySQL Reader | where (codeless UI: Data filtering) | Database syntax. Combine with scheduling parameters to read data from a specific time range each day. |
| MongoDB Reader | query (codeless UI: Retrieve query condition) | Same as database syntax. Combine with scheduling parameters to read data from a specific time range each day. |
| OSS Reader | Object | Specify a path. Combine with scheduling parameters to read from a specific file each day. |
| ... | ... | ... |

How it works

Incremental synchronization uses a filter condition as a cursor: only records whose field value (typically a timestamp) is newer than the cursor are transferred. A scheduling parameter such as $bizdate supplies the cursor value. At runtime, DataWorks replaces the parameter placeholder with the actual business date, so the filter window shifts automatically on each run.
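The substitution can be pictured with a minimal Python sketch. It is an illustration only, not how DataWorks implements parameter resolution. It assumes that $bizdate resolves to the day before the scheduled run in yyyymmdd format, and the column name update_day is hypothetical.

```python
from datetime import date, timedelta
from string import Template


def resolve_bizdate(run_date: date) -> str:
    """Return the business date as yyyymmdd.

    Assumption: the business date is the day before the scheduled run.
    """
    return (run_date - timedelta(days=1)).strftime("%Y%m%d")


def render_filter(template: str, run_date: date) -> str:
    """Replace the $bizdate placeholder with the resolved business date."""
    return Template(template).substitute(bizdate=resolve_bizdate(run_date))


# Hypothetical filter condition; update_day is an illustrative column name.
FILTER_TEMPLATE = "update_day = '$bizdate'"

if __name__ == "__main__":
    # The same template yields a different filter on each scheduled run.
    for run in (date(2024, 3, 1), date(2024, 3, 2)):
        print(run.isoformat(), "->", render_filter(FILTER_TEMPLATE, run))
```

The filter text is written once; only the resolved value changes from run to run, which is what makes the cursor advance without manual edits.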

For details on scheduling parameter expressions, see Configure and use scheduling parameters.

Configure incremental synchronization

In an offline Data Integration sync task, use scheduling parameters to specify data paths and timestamp ranges for source and destination tables. The configuration method is the same as for other task types and has no special restrictions.

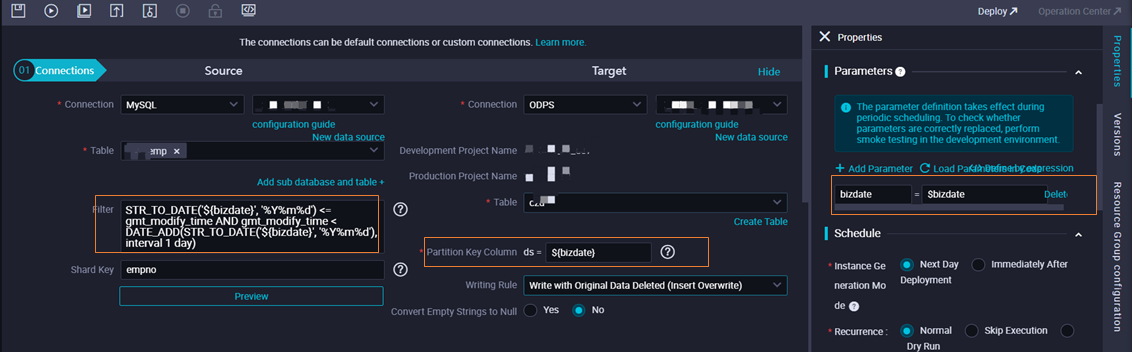

The following example uses MySQL data synchronization:

If Data filtering is not configured, the task syncs all data to the destination table by default.

If Data filtering is configured, the task syncs only records that match the filter condition.

To write results to a specific MaxCompute partition, specify the partition name using a scheduling parameter. The $bizdate parameter represents the data timestamp. When the task runs, DataWorks replaces the partition expression with the resolved value of the scheduling parameter.
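As a rough illustration of how the source filter and the destination partition can share one resolved value, the following Python sketch renders both from the same business date. The key name where comes from the MySQL Reader parameter mentioned earlier; the key name partition, the column update_day, and the partition column pt are assumptions made for this example, and the dictionary is not the actual task configuration schema.

```python
from string import Template

# Hypothetical templates. "partition", "update_day", and "pt" are
# illustrative names, not confirmed DataWorks configuration keys.
TASK_TEMPLATES = {
    "where": "update_day = '${bizdate}'",  # source-side filter condition
    "partition": "pt=${bizdate}",          # destination partition expression
}


def resolve_settings(bizdate: str) -> dict[str, str]:
    """Substitute one business date into every template."""
    return {
        key: Template(value).substitute(bizdate=bizdate)
        for key, value in TASK_TEMPLATES.items()
    }


if __name__ == "__main__":
    for key, value in resolve_settings("20240229").items():
        print(f"{key}: {value}")
```

Because both expressions resolve from the same parameter, each day's increment lands in the partition that carries the same date.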

Time-based incremental fields

For timestamp or date fields, use scheduling parameters to build a dynamic filter. DataWorks automatically replaces the parameter with the business date at scheduling time.
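For a timestamp column, the resolved filter usually needs to cover one full day. The Python sketch below shows one way to express that window, assuming the business date arrives as a yyyymmdd string. The column name gmt_modified is hypothetical, and the half-open range (start inclusive, end exclusive) is a design choice of this example rather than something DataWorks prescribes.

```python
from datetime import datetime, timedelta


def day_window_filter(column: str, bizdate: str) -> str:
    """Build a filter that selects rows modified during the business date.

    bizdate is assumed to be a yyyymmdd string such as "20240229".
    """
    start = datetime.strptime(bizdate, "%Y%m%d")
    end = start + timedelta(days=1)
    fmt = "%Y-%m-%d %H:%M:%S"
    # Half-open interval: includes midnight at the start of the day and
    # excludes midnight at the start of the next day.
    return (
        f"{column} >= '{start.strftime(fmt)}' "
        f"AND {column} < '{end.strftime(fmt)}'"
    )


if __name__ == "__main__":
    print(day_window_filter("gmt_modified", "20240229"))
```

A half-open range avoids reading the same record twice on consecutive runs, because a row stamped exactly at midnight belongs to only one window.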

For supported scheduling parameter formats, see Supported formats of scheduling parameters.

Non-time-based incremental fields

For fields such as incrementing IDs or status flags, use an assignment node to convert the field value to the required data type, then pass it to Data Integration for synchronization.
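The conversion step can be sketched in Python as follows. This is only an illustration of the idea: it assumes an upstream step hands over the last synchronized ID as text, and the function name, the column name id, and the sample value are all hypothetical. How an assignment node actually passes its output to a downstream task is not shown here.

```python
def build_id_filter(column: str, upstream_value: str) -> str:
    """Convert an upstream text value to an integer cursor and build a filter.

    Raises ValueError if the value is not a valid integer, so a malformed
    cursor never silently turns into a full sync.
    """
    try:
        last_id = int(upstream_value)  # int() tolerates surrounding whitespace
    except ValueError as exc:
        raise ValueError(
            f"cursor value is not an integer: {upstream_value!r}"
        ) from exc
    return f"{column} > {last_id}"


if __name__ == "__main__":
    # Hypothetical value produced by an upstream step.
    print(build_id_filter("id", " 10432\n"))
```

Validating the type before building the filter keeps an unexpected upstream value from producing an invalid or overly broad condition.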

For details, see Assignment node.

Use cases

Sync historical data: To sync historical incremental data to the corresponding time-based partitions of a destination table, use the data backfill feature in Operation Center. See O&M for data backfill instances.

End-to-end example: Incremental data synchronization from RDS to MaxCompute