By Huang Zhong, AnySQL

Logs are an important part of a database, as logs record all operations on the database in a sequential incremental manner. The design of the log module is very important for the reliability, stability and performance of the database. In terms of reliability, after a basic full backup of a data file, for a running database, the log file is more important than the data file, as long as the operation is recorded in the log and the disk write is completed, the operation is completed without having to wait for the data file to be written to disk. The incremental, real-time backup of the database, including the standby database, is made possible due to the sequential and incremental logging. You can also protect all the data by protecting the log using asynchronous, synchronous, Raft consensus, and other means.

In terms of stability, the incremental nature of the log reduces the amount of data that needs to be written; the sequence of logs is friendly to IO operations, and it can fully save path-finding time (mechanical hard disk) and write caching, so that the log's write operations can be very smooth, and so that intense jitter does not easily occur during high-concurrency transactions, which results in high stability and performance.

The log organization can be divided into physical log and logical log. The physical log describes the changes in a way that is more partial to the underlying data block operation. Logical logs tend to describe changes in the form of a record mirror or SQL statement. Transaction engines typically use the mode of the physical log to log the underlying operation of a transaction, while non-transaction engines generally use logical logs.

Using programming language as a metaphor, the physical log is equivalent to using the assembly language to record operations, logical logs, on the other hand, are equivalent to logging operations in Go/Python languages. Physical logs have higher reliability, stability and performance than logical logs. Looking back at the history of the database, commercial databases have only supported physical logs, never logical logs. Because of MySQL's upper and lower layer (SQL and engine layers) design, the resulting transaction storage engine layer must have separate physical logs, and due to multi-engine support, logical logs must be designed at the SQL layer to make transparent the support for different storage engines (primary and backups can use different engines), creating dual-log existing scenario that brings a lot of difficulties and challenges to the stability and performance of MySQL.

The physical log, due to its relatively low-level format, is difficult to create a read-only instances and switching from a read-only instance to a read-write instance takes a long time. Looking at the historical development of the Oracle database, it has never supported read-only standby database. It takes a relatively long period of time to change the standby database into the primary database. It is difficult to automate, and it is not easy to meet the demand for Internet read throughput expansion.

With logical logs, it is very easy to create read-only instances because of their higher-level format. Converting from a read-only instance to a read-write instance can be done in seconds, and has an entire suite for incremental data subscription consumption. MySQL is also subject to some of the limitations of logical replication while enjoying the benefits of logical replication.

It is difficult to generate logical logs directly from the storage engine layer. For data consistency, the XA (2 PC) mechanism is introduced between the physical log and the logical log, resulting in major limitations and challenges to stability and performance. This results in a large gap in terms of transaction handling performance compared to traditional business databases, while based on the physical log, the gap is extremely small.

The MySQL logical log for the same transaction needs to be written in sequence, so larger transaction operations cannot be supported. Too large a transaction can cause the operation to fail. Based on physical logs, logs for the same operation can be split into separate (transaction start, operation 1, operation 2, transaction commit) writes so that large transaction operations can be supported.

The existing logical log of MySQL holds the before and after images of the entire record. The result is a large increase in IO pressure due to logical log writes, which is liable to result in reduced performance and jitter. The physical log records only the changed fields in a compact format to reduce the total log volume, with better IO performance. It is not liable to cause performance degradation and jitter, and definitely offers higher performance and stability.

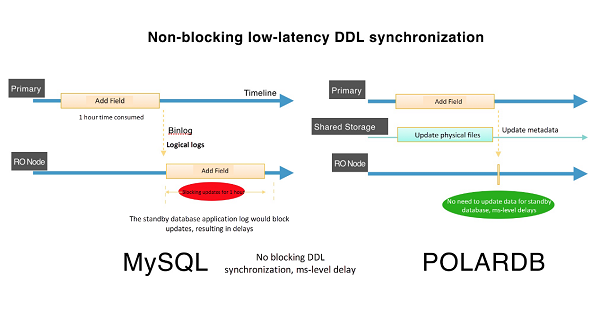

MySQL logical log needs to re-pass the SQL Layer Code upon playback; with a relatively long execution path and with difficulty in parallel processing, it is liable to cause standby database delays - that is, the logical log generation speed exceeds the playback speed; the physical log contains complete transaction information, better facilitates transaction consistency for parallel playback, can greatly improve the recovery speed of the standby database, achieving ms-level delay under high pressure for primary and backups. As shown in the following figure:

MySQL logical log, does not contain transaction information, cannot support continuity test, can begin recovery from any point. Non-familiar unprofessional operation is liable to cause problems; the physical log contains complete transaction information, supports continuity test. It will automatically identify the last breakpoint, reducing manual judgment in operation, can effectively prevent human errors in operation.

Therefore, MySQL, based on logical replication, offers a poor experience for operations to add fields, and indexes to large tables. PolarDB, fully recognizing the advantages and disadvantages of MySQL logical replication, chose to implement physical copies as the basis for realizing duplicate nodes (Replica), thus enhancing the efficiency and experience of primary and backup replication. It provides customers with stable, reliable and high performance read-only nodes, leading the development of the next generation of replication technologies.

ApsaraDB - October 24, 2022

ApsaraDB - May 29, 2025

ApsaraDB - January 16, 2026

ApsaraDB - January 17, 2025

ApsaraDB - October 17, 2024

Alibaba Clouder - April 8, 2018

ApsaraDB RDS for MySQL

ApsaraDB RDS for MySQL

An on-demand database hosting service for MySQL with automated monitoring, backup and disaster recovery capabilities

Learn More Simple Log Service

Simple Log Service

An all-in-one service for log-type data

Learn More ApsaraDB RDS for PostgreSQL

ApsaraDB RDS for PostgreSQL

An on-demand database hosting service for PostgreSQL with automated monitoring, backup and disaster recovery capabilities

Learn More ApsaraDB RDS for MariaDB

ApsaraDB RDS for MariaDB

ApsaraDB RDS for MariaDB supports multiple storage engines, including MySQL InnoDB to meet different user requirements.

Learn MoreMore Posts by ApsaraDB