By Wuzang

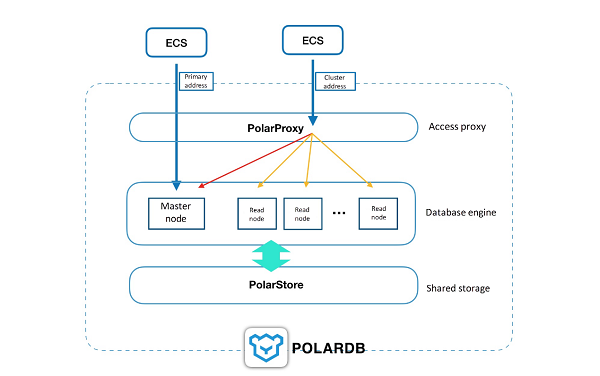

As we know, PolarDB is a database cluster consisting of multiple nodes, a master node and multiple read nodes. Two addresses are provided externally by default, one is the cluster address and the other is the primary address. It is recommended to use the cluster address because it provides a read/write splitting function that integrates the resources of all nodes to provide services externally.

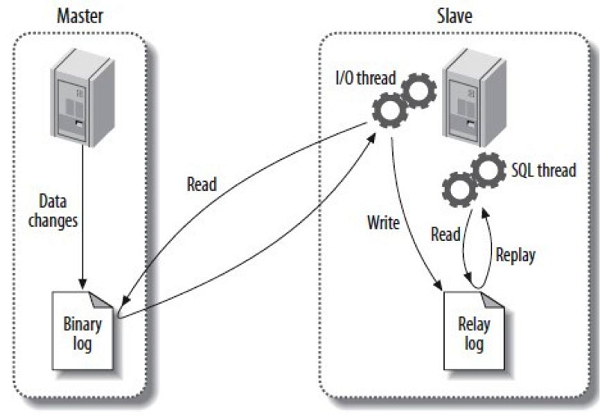

Anyone who has used MySQL knows that MySQL master-slave replication is simple to use, and very popular. Through the asynchronous transfer of the BINLOG of the primary database to the standby database and the real-time application, it can achieve high availability on one hand and provide for queries using the standby database on the other hand, relieving pressure on the primary database.

Although the standby database can allow for queries, there are two problems. First, the primary database and the standby database generally provide two different access addresses, so the application side needs to choose which one to use, intruding over onto the application. Second, MySQL replication is asynchronous, even semi-synchronous can not achieve 100%-strong synchronization, therefore, the standby database data is not the latest and has delay, which does not guarantee the consistency of the queries.

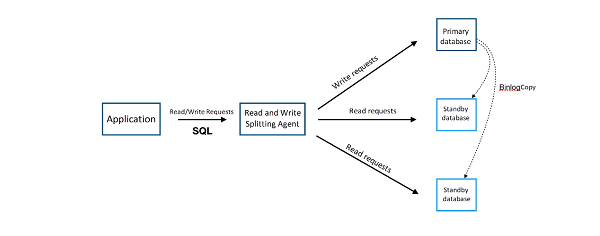

To solve the first problem, we introduced the Read and Write splittingagent, as shown in the following figure, very friendly to the application. The general reality is that the agent will pretend to be MySQL, establishes a connection with the application, parses every SQL sent in, if it is an UPDATE, DELETE, INSERT, CREATE, and other operation, it is sent directly to the primary database, if it is SELECT, it is sent to the standby database.

But the second problem - delay causing the query to be inconsistent - was not resolved. When used, it is inevitable that the standby database SELECT query data can be inconsistent (because the master-standby has delay). When the MySQL load is low, the latency can be controlled to within 5 seconds, but when the load is high, especially for large tables (such as add-on fields) or large batch inserts, delays can be very serious.

PolarDB is a read/write splitting structure. Traditional splitting of read and write only provides a guarantee of eventual consistency, master-slave replication delays result in different query results from different node. For example, the following QUERY is executed continuously within a session:

INSERT INTO t1(id, price) VALUES(111, 96);

UPDATE t1 SET price = 100 WHERE id=111;

SELECT price FROM t1;Under read write splitting, the result of the last query is uncertain, because read will be sent to the read-only database, and whether or not the update before the time the SELECT is synchronized to the read-only database is uncertain. Therefore, the result is also uncertain; because of this problem, the application is required to adapt to the eventual consistency. The general solution is: the business is split and requests with high consistency requirements are connected directly to the primary database, while those where eventual consistency is acceptable take the read-write splitting route; this obviously increases the burden of application development, it will also increase the pressure on the primary database, and impact the performance of read/write splitting;

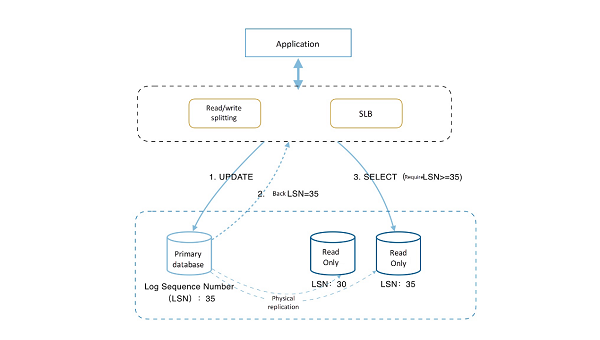

To solve this problem in PolarDB, we provide a guarantee of session consistency or causal consistency. Session consistency guarantees that within the same session, subsequent requests will definitely be able to see data version generated by the previous update, or newer version, ensuring monotonicity, which is a very good way to solve the problem in the example above;

While reading and writing splitting is carried out in the PolarDB link intermediate layer, the intermediate layer would track the redolog position (LSN) that has been applied to each node. At the same time, every update would record this updated position as Session LSN. When a new request arrives, we compare the Session LSN with the current LSNs of the nodes. The request is sent only to the node with LSN >= Session LSN, thus ensuring the session consistency; on the surface, this solution may appear to put significant pressure on the primary database, but because PolarDB is physically replicated, as described previously, the speed is extremely fast. In the above scenario, when the update is completed, replication is synchronized when the client results are returned. When the next read request arrives, it is very likely that the master-slave replication has already been completed. Therefore, most of the application scenarios are more reads and fewer writes. It has been verified that session consistency is guaranteed under this mechanism, it also guarantees load balance with read and write separation.

ApsaraDB - October 26, 2023

ApsaraDB - April 30, 2019

ApsaraDB - May 29, 2025

ApsaraDB - January 16, 2026

ApsaraDB - April 1, 2019

ApsaraDB - October 17, 2024

ApsaraDB RDS for MySQL

ApsaraDB RDS for MySQL

An on-demand database hosting service for MySQL with automated monitoring, backup and disaster recovery capabilities

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn MoreMore Posts by ApsaraDB