By Wang Wei (Zhanyi)

The Auto Analyze feature released this time has addressed problems that arose during the use of the AnalyticDB for PostgreSQL instance. The main problem is that the degradation of the cost-based optimizer (CBO) in generating plans due to the failure to execute analyze statement in time to collect statistics, resulting in a business analysis slowdown.

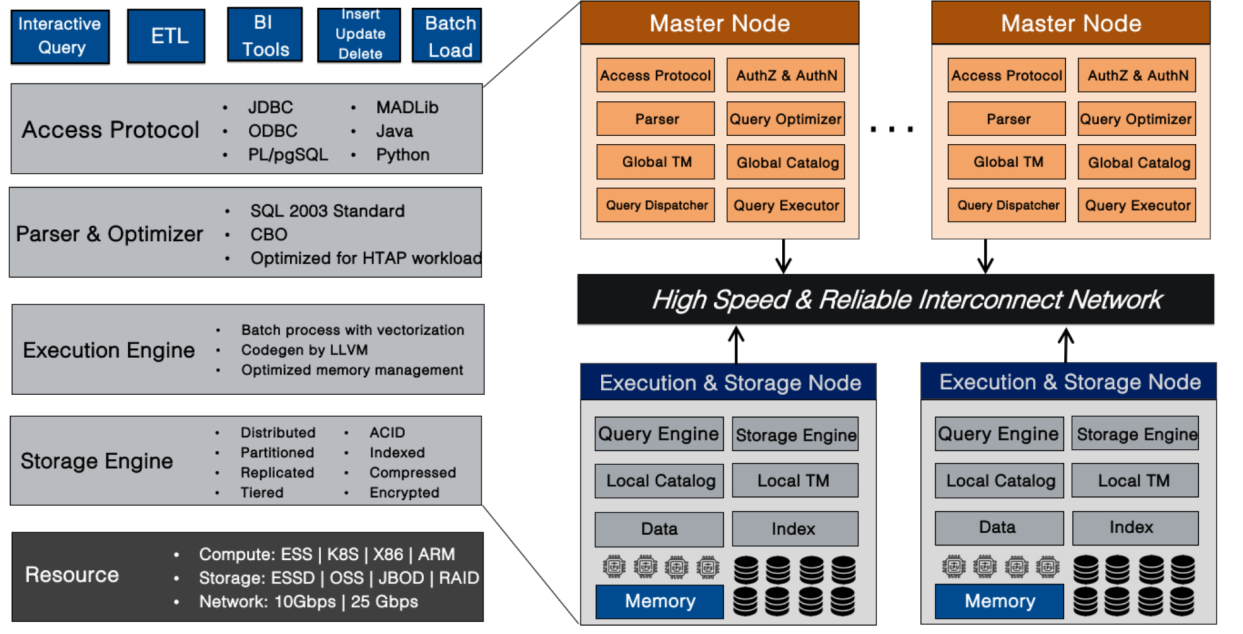

AnalyticDB for PostgreSQL is a data warehouse service with massive-parallel processing architecture on Alibaba Cloud. It is compatible with PostgreSQL and Greenplum, and is highly compatible with Oracle syntax ecology, with PostgreSQL engine as its kernel, supporting standard SQL 2003.

Moreover, AnalyticDB for PostgreSQL features storage and computing separation and smooth online scaling, providing supports for online analysis and exploration in any dimension as well as high-performance offline data processing. So, it is a competitive data warehouse solution for the industries such as the internet, finance, securities, insurance, banking, digital government and new retail.

With the MPP architecture, the instance in AnalyticDB for PostgreSQL is composed of multiple compute nodes. Its storage capacity scales linearly with the number of nodes and the query response time remains constant.

The CBO optimizer of AnalyticDB for PostgreSQL selects the best plan for a query based on table statistics. The Auto Analyze feature released this time has addressed problems that arose during the use of the AnalyticDB for PostgreSQL instance. The main problem is that the degradation of the cost-based optimizer (CBO) in generating plans due to the failure to execute analyze statement in time to collect statistics, resulting in a business analysis slowdown.

The current cost-based optimizer (CBO) of AnalyticDB for PostgreSQL relies on the evaluation of the cost used to measure each candidate plan, which in turn relies on the statistical information collected. The relationship between CBO and statistical information is very close and complementary. If CBO is good enough but without sufficient information, then it is like making bricks without straw. Statistical information is collected to provide CBO with enough reasonable information so that CBO can make reasonable decisions based on this statistical information. For example, there is e a table t and idx_t_z shown below.

create table t(i int , j int, z int);

create index idx_t_z on t(z);

insert into t select i, i, i from generate_series(1, 2) i; -- 1

insert into t select i, i, i from generate_series(1, 3333333) i; -- 2

insert into t select i, i, 20181218 from generate_series(1, 10) i;The first insert here triggers the AnalyticDB for PostgreSQL AutoStats mechanism, which will then perform an analyze statement on table t and collect the relevant statistics. Then, use explain analyze to execute a simple query and output the execution plan of the query.

tmp=# explain analyze select * from t where z = 20181218;

Gather Motion 3:1 (slice1; segments: 3) (cost=0.00..2.02 rows=1 width=12) (actual time=287.952..743.833 rows=10 loops=1)

-> Seq Scan on t (cost=0.00..2.02 rows=1 width=12) (actual ti me=287.428..287.430 rows=5 loops=1)

Filter: (z = 20181218)

Planning time: 1.242 ms

(slice0) Executor memory: 59K bytes.

(slice1) Executor memory: 42K bytes avg x 3 workers, 42K byt es max (seg0).

Memory used: 128000kB

Optimizer: Postgres query optimizer

Execution time: 744.675 msIt can be seen that since analyze was triggered only at the first insert after table t was created, the statistics information was not updated in time after the data was updated so that the statistics information seen by the optimizer at the time of optimization recorded that the total number of rows in table t was 2. As a result, the CBO mistakenly believe that SeqScan was more efficient than IndexScan at this time. Here, analyze can be executed manually as follows:

tmp=# ANALYZE t;

ANALYZE

tmp=# explain analyze select * from t where z = 20181218;

Gather Motion 3:1 (slice1; segments: 3) (cost=0.18..8.20 rows=1 width=12) (actual time=0.429..0.439 rows=10 loops=1)

-> Index Scan using idx_t_z on t (cost=0.18..8.20 rows=1 widt h=12) (actual time=0.014..0.016 rows=5 loops=1)

Index Cond: (z = 20181218)

Planning time: 1.305 ms

(slice0) Executor memory: 92K bytes.

(slice1) Executor memory: 60K bytes avg x 3 workers, 60K byt es max (seg0).

Memory used: 128000kB

Optimizer: Postgres query optimizer

Execution time: 1.322 msAs shown in the figure, the CBO uses more accurate statistics to generate a better execution plan, reducing the query execution time from 700 milliseconds to 1 millisecond.

More accurate statistics can not only help the optimizer generate more efficient execution plans but also make the multi-dimensional sorting released recently by AnalyticDB for PostgreSQL obtain better sorting results. The better the sorting, the more significant the acceleration of the query.

As mentioned above, because of the importance of analyze, AnalyticDB for PostgreSQL introduces the AutoStats mechanism to improve the user experience. The AutoStats mechanism has the following three modes of operation, which are controlled by the gp_autostats_mode configuration.

ON_NO_STATS. This means that after a user performs the DML operation such as insert, update, or delete, AnalyticDB for PostgreSQL will query the status of the DML target table before the DML operation. If the target table before the DML operation is empty, then after the DML operation, AnalyticDB for PostgreSQL will trigger an analyze statement on the target table within the same transaction at the same time. This is also the default configuration on the AnalyticDB for PostgreSQL online.ON_CHANGE. This means that after performing a DML operation, AnalyticDB for PostgreSQL will determine the number of rows affected by this DML operation. If the number exceeds a certain threshold, the analyze statement will be triggered on the target table.NONE. This means that the AutoStats system is shut down.Since AutoStats determines whether to trigger an analyze statement on the target table based only on the result of the most recent DML operation, AutoStats is more suitable for extract-transform-load (ETL) business.

However, with the improvement of hybrid transactional/analytical processing (HTAP) capability of AnalyticDB for PostgreSQL and the connection with the peripheral ecosystem, more and more users tend to import data to AnalyticDB for PostgreSQL in stream mode, for example, Alibaba Cloud data transmission service. AutoStats, therefore, is becoming increasingly ineffective.

As can be seen from the above example, AutoStats only triggers an analyze statement once after the first insert, so that the statistics of table t after the second and third insert operations are completely inconsistent with the actual situation. As a result, the CBO could not generate better execution plans.

AnalyticDB for PostgreSQL has developed an Auto Analyze function that is more applicable to wider scenarios and friendly to stream insertion. Auto Analyze records for each table the cumulative value of all operations such as insert, update and delete affected rows since performing the last analyze statement. Then it decides whether to perform an analyze statement on the table based on this value and the size of the table itself.

In addition, the system can perform the analyze statement asynchronously. Compared with the synchronous execution of the analyze statement by AutoStats in a user business transaction, the asynchronous execution of the analyze statement is basically insensitive to the user business, and prevents any other problems that may be caused by synchronous analyze, such as deadlocks. Moreover, adhering to the open-source ideas of embracing, giving back, integrating and rewarding, the Auto Analyze feature of AnalyticDB for PostgreSQL has also contributed to and incorporated into the Greenplum master branch.

With an Auto Analyze function enabled, execute the example given at the beginning again. As shown below, after executing the third insert, the system also triggers an analyze on table t.

tmp=# select objid::regclass, staactionname, stasubtype from pg_stat_last_operation where objid = 't'::regclass order by statime desc;

objid | staactionname | stasubtype

-------+---------------+------------

t | ANALYZE | AUTO

t | CREATE | TABLE

(2 rows)In this case, users no longer need to execute analyze statement manually, which also allows CBO to generate a better execution plan.

Gather Motion 3:1 (slice1; segments: 3) (cost=0.18..8.20 rows=1 width=12) (actual time=0.765..0.773 rows=10 loops=1)

-> Index Scan using idx_t_z on t (cost=0.18..8.20 rows=1 width=12) (actual time=0.013..0.015 rows=5 loops=1)

Index Cond: (z = 20181218)

Planning time: 1.034 ms

(slice0) Executor memory: 92K bytes.

(slice1) Executor memory: 60K bytes avg x 3 workers, 60K bytes max (seg0).

Memory used: 128000kB

Optimizer: Postgres query optimizer

Execution time: 1.647 msBefore we discuss the implementation of Auto Analyze of AnalyticDB for PostgreSQL, here is a look at how auto analyze is implemented in PostgreSQL,. Auto Analyze implementation on PostgreSQL relies on two components that are statistics collector and auto vacuum. The auto vacuum, in short, traverses each database periodically. It can determine whether to trigger the analyze or vacuum for each table based on the relevant statistics of the table in the statistics collector. The statistics collector is responsible for collecting, storing, and persisting various metric information generated during the running of PostgreSQL, such as the number of rows added, deleted, and changed in tables.

All the information collected by the statistics collector is stored in memory. When the statistics collector process is closed, statistics in memory are persisted to disk files. When the statistics collector process starts, the previously persisted statistics from disk files can also be read into memory. Statistics collector is also a user datagram protocol (UDP) server that monitors a specific port. When PostgreSQL is running, the backend will package the metrics it collected into a UDP message and send it to the statistics collector. Take the collection of statistical information in the PgStat_StatTabEntry as an example to demonstrate this process.

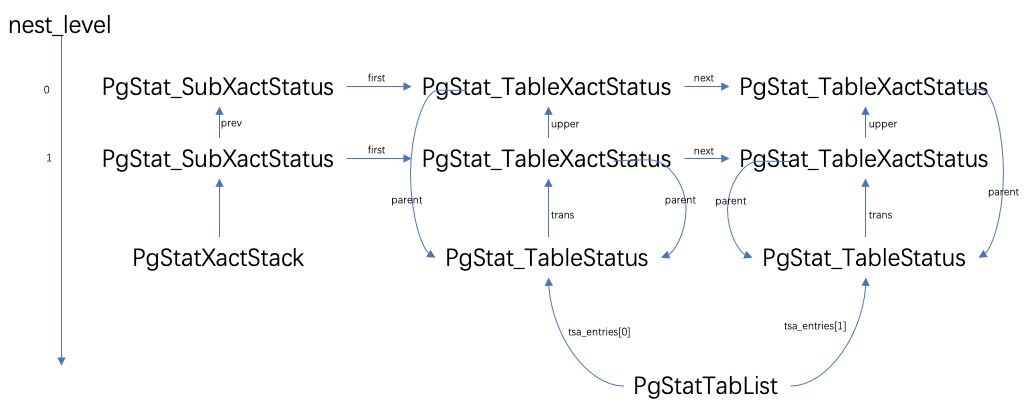

In the process, the pgStatTabList points to the same structure as linkedList. Each backend stores the metrics that are collected at the table level into the corresponding PgStat_TableStatus array.

The structure to which the PgStat_TableStatus::trans points is equivalent to vec, which stores the statistics of the current table at each transaction level through PgStat_TableXactStatus. The PgStat_SubXactStatus structure stores all PgStat_TableXactStatus structures within a specific transaction level, and stores all the statistics of occurrence within that transaction level. PgStatXactStack always points to the PgStat_SubXactStatus structure that corresponds to the current transaction level.

When the backend opens a table at a certain transaction level ready to perform operations such as insert, update and delete, a PgStat_TableStatus element will be assigned to RelationData::pgstat_info from the array pointed to by pgStatTabList. After that, when executing the insert, update or delete operation, the backend will update the PgStat_TableXactStatus structure for the specific table at the current transaction level. Whenever a child transaction commits or rolls back, all PgStat_TableXactStatus statistics within the transaction at that level are merged into the parent transaction.

In addition, PgStat_TableXactStatus statistics are merged into PgStat_TableStatus.t_counts when the top-level transaction commits or rolls back. Finally, whenever the backend enters the idle state or exits, all valid PgStat_TableStatus in pgStatTabList will be packaged into a UDP message and sent to the statistics collector, after which the statistics in the pgStatTabList will be cleared.

Therefore, the main implementation of Auto Analyze on AnalyticDB for PostgreSQL is to collect the number of rows affected by insert, update or delete operations in each node after the execution, and add them up to get the total number of rows. This information is then recorded in the corresponding PgStat_TableXactStatus structure, as is done in the PostgreSQL statistics collector.

Finally, the system will send the information to the statistics collector process of the master node of the AnalyticDB for PostgreSQL in due course. For more details, please refer to the pull request submitted when contributing AnalyticDB for PostgreSQL Auto Analyze to the community

The introduction of the Auto Analyze function allows users to collect statistical information when using AnalyticDB for PostgreSQL instances on time. This also enables users to achieve better execution plans for their business analysis, and the execution performance of business analysis with SQL will no longer degrade drastically due to outdated information.

In addition, based on the infrastructure built by the Auto Analyze feature and problems encountered by online users in using AnalyticDB for PostgreSQL, this goal has been set as well, that is, achieve auto vacuum. As with analyze, vacuum plays an important role in AnalyticDB for PostgreSQL, and the introduction of auto vacuum is expected to further the user experience.

How ADB for PG Provide Data Analysis for Lake-Warehouse Integration

ApsaraDB - July 26, 2023

ApsaraDB - August 12, 2020

ApsaraDB - December 21, 2023

ApsaraDB - February 7, 2025

ApsaraDB - July 11, 2023

ApsaraDB - August 12, 2020

PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More Database for FinTech Solution

Database for FinTech Solution

Leverage cloud-native database solutions dedicated for FinTech.

Learn More Oracle Database Migration Solution

Oracle Database Migration Solution

Migrate your legacy Oracle databases to Alibaba Cloud to save on long-term costs and take advantage of improved scalability, reliability, robust security, high performance, and cloud-native features.

Learn More Database Migration Solution

Database Migration Solution

Migrating to fully managed cloud databases brings a host of benefits including scalability, reliability, and cost efficiency.

Learn MoreMore Posts by ApsaraDB