With the popularity of cloud computing and the increasing demand for data analysis, data analysis for data lake and data warehouse integration has become the core capabilities of the next-generation data analysis systems. Compared with data warehouses, data lakes have obvious advantages in terms of cost, flexibility, and multi-source data analysis. Three of the ten predictions for the cloud market in China for 2021 released by International Data Corporation (IDC) are related to data lake analysis. It is foreseeable that cross-system integration, data control, and more comprehensive data driving capabilities will be important competitive areas for data analysis systems in the future.

AnalyticDB for PostgreSQL (ADB for PG) is a cloud-native data warehouse product developed by the Alibaba Cloud database team based on the PostgreSQL. In scenarios such as real-time interactive data analysis with petabyte-level, hybrid transaction/analytical processing (HTAP), extract-transform-load (ETL), and business intelligence (BI) report generation, ADB for PG has unique technical advantages. As a data warehouse product, how does ADB for PG provide data analysis for lake-warehouse integration? This article describes how to use ADB for PG to achieve data lake analysis based on the foreign table object type of PostgreSQL.

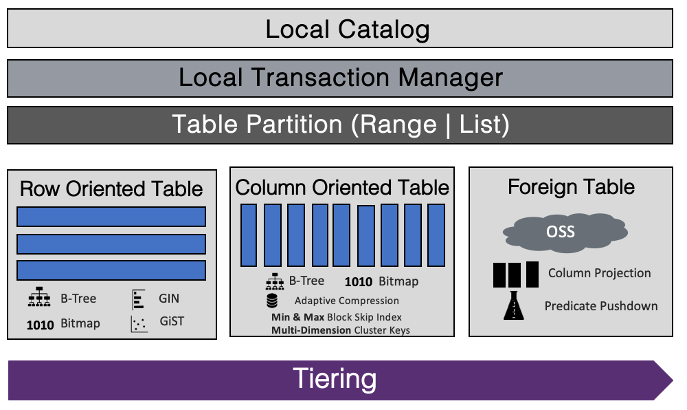

ADB for PG inherits the foreign table feature of PostgreSQL. Currently, the lake-warehouse integration of ADB for PG is mainly built based on the foreign table. With the foreign table, ADB for PG can query and write data from other data analysis systems. Compatible with multiple data sources, the advantages of the original optimizer and execution engine of ADB for PG can be used as well.

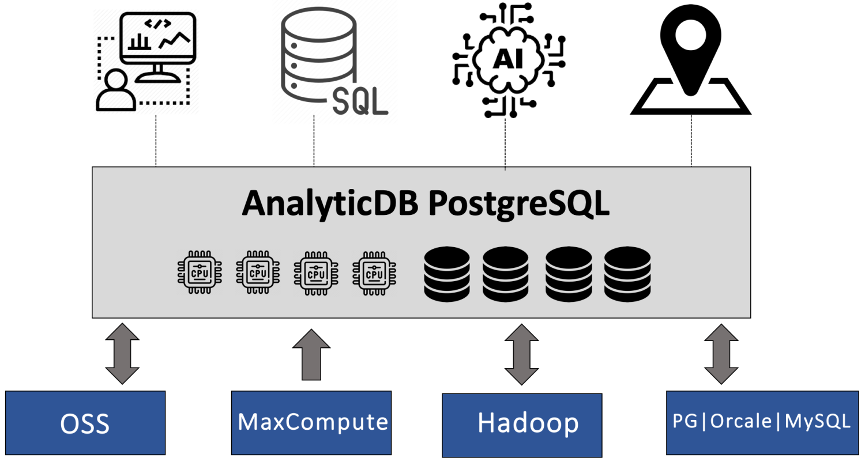

The lake-warehouse integrated analysis of ADB for PG currently supports analysis and writing for multiple data sources, such as Object Storage Service (OSS), MaxCompute, Hadoop, RDS for PG, Oracle, and RDS for MySQL. Users can flexibly apply this service to different scenarios, such as data storage, interactive analysis, and ETL, to achieve multiple data analysis features in a single instance. It means users can use ADB for PG to complete the core process of data analysis or as one of the numerous links to build data links.

However, the data analysis of the foreign table relies on the external software development kit (SDK) and network input/output (I/O) to realize data reading and writing. Due to the huge differences between the features of the network itself and the local disk, a different performance optimization solution is needed at the technical level than for local storage This article takes external data reading and writing in OSS as an example to describe the major problems that ADB for PG encounters when achieving lake-warehouse integrated analysis and their solutions.

The kernel of ADB for PG is divided into the optimizer, execution engine, and storage engine. During the data analysis of the foreign table, the original optimizer and core part of the execution engine of ADB for PG can be reused with minimal modification. The main extension is the transformation of the storage engine layer, that is, data in the foreign table is read and written through the external interface. The foreign table data is stored in another distributed system and needs to be connected to ADB for PG through the network, which is the core difference with reading local files. Different remote access interfaces are provided for different foreign table data, which must be compatible in projects, for example, the data reading interfaces for OSS and MaxCompute are different. On the other hand, accessing data on remote machines over the network has certain commonalities, such as network latency, network amplification, bandwidth limitations, and network stability issues.

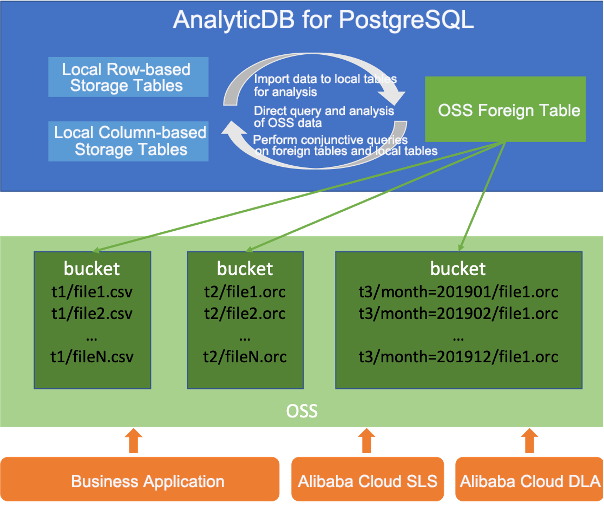

Based on these challenges above, this article describes some important techniques used by ADB for PG appearance analysis in support of OSS data analysis. OSS is a low-cost distributed storage system launched by Alibaba Cloud, which stores a large amount of cold and hot data and has a large demand for data analysis. To help developers expand the system, OSS provides SDKs based on mainstream development languages such as Java, Go, C/C++, and Python. ADB for PG uses the OSS SDK for C for development. Currently, ADB for PG fully supports various features of OSS analysis of foreign tables. Except for the different table creation statements, users can access the OSS foreign tables in the same way as if local tables. Moreover, the ADB for PG supports concurrent reading and writing, and common data formats such as CSV, ORC, and Parquet as well.

The following are some core technical problems that ADB for PG solved when developing OSS foreign table analysis based on the OSS SDK for C.

In scenarios of AnalyticDB, column-based storage is generally believed to be superior to row-based storage in terms of I/O performance. The reason is that column-based storage only scans specific columns when scanning data, while row-based storage scans full data. So column-based storage can save some I/O resources. However, in the development process, the team found that in some scenarios, for example, scanning a large wide table with many fields, the column-based storage format with high scanning performance is surprisingly worse than the row-based storage with CSV format. Later, after the positioning, it was found that on the one hand, when the files are scanned in the ORC/PARQUET format, the number of interactions between the client and OSS server was too frequent. On the other hand, the amount of data requested by ADB for PG to OSS in a single request was relatively small. These two reasons cause significant performance problems.

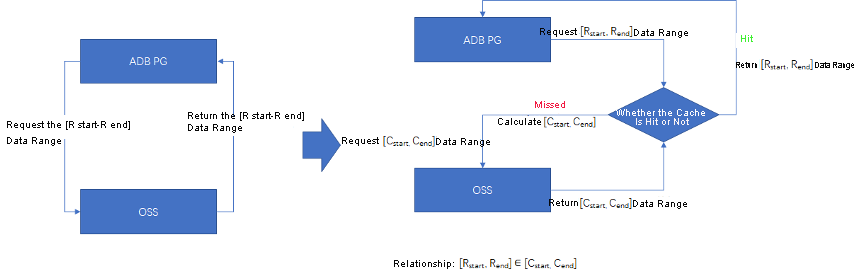

Compared to local disk I/O, the round-trip latency generated by the network I/O can often be magnified by several orders of magnitude. Therefore, if the network requests are treated as local disk requests when some column-based storage formats, such as ORC or PARQUET, are parsed, the reduction in network bandwidth usage due to high compression ratios is not sufficient to offset the amplification of round-trip latency because of fragmented requests. As a result, the performance test results are lower than expected. The solution to this problem is to reduce fragmented network requests through caching. Each time ADB for PG preloads enough data and cashes it when scanning the OSS data, and determines whether the cache is hit or not when it is requested. If there is a hit, ADB for PG will directly return the cache. Otherwise, the next round of preload will be performed, reducing the number of network requests and improving the efficiency of a single request. The default size of the preload cache is one megabyte.

Since the I/O performance of the network is often lower than that of the local storage, it is important to reduce the consumption of I/O bandwidth resources when scanning foreign table data. For this reason, ADB for PG adopts the column filtering and predicate pushdown to process files in the ORC or Parquet format.

Column filtering, which means that only the data columns required by the SQL query are requested on the foreign table and the unnecessary data columns are excluded. Both ORC and Parquet are formats with column-based storage, therefore, when a network request is initiated for the foreign table, only the data range of the requested column is required. This greatly reduces the network I/O, and ORC and Parquet will compress the column data, further reducing I/O at the same time.

Predicate pushdown is to move the upper-layer filter conditions in the execution plan, such as those in the where clause, to the lower-layer scanning node in the foreign table. By doing so, the network I/O will be reduced by filtering out the data blocks that do not match the query conditions when the foreign table is scanned for network requests. In the files with ORC or Parquet format, statistics such as mini-max sum of each column of data in each block are stored in the header of the block. When the foreign table is scanned, the block's header statistics are read first, then compared with the push-down query conditions. If the column statistics do not meet the query conditions, the column data can be skipped.

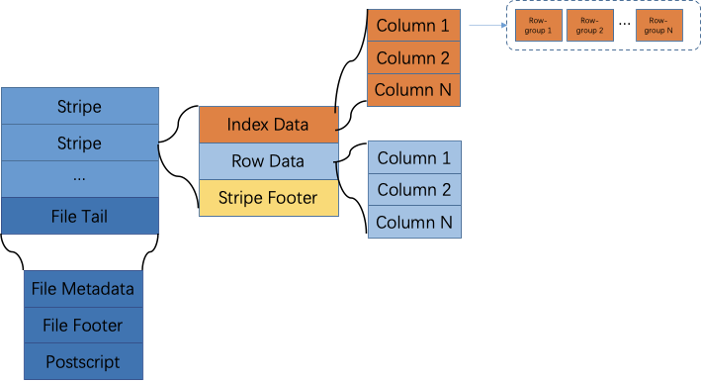

Here is a brief introduction to the implementation of predicate pushdown for the foreign table in ORC format. An ORC file is divided into several stripes based on the several rows of data with column-based storage. Each stripe is divided into several row groups, and every 10,000 rows of all columns make up a row group. The details are shown below.

The ORC file stores three levels of statistics. File-level and stripe-level statistics are stored at the footer of the ORC file, and row group-level statistics are stored at the header of each stripe block. The ORC foreign table can implement filtering with file-level, stripe-level and row group-level by the three levels of statistics above. Specifically, when scanning a new ORC file, the file-level statistics in the footer of the file will be read first. If it does not meet the query conditions, the scanning of the entire file will be skipped. Then read all stripe-level statistics at the footer of the file to filter out non-conforming stripe blocks. For each eligible stripe block, the row group-level statistics at the block header will be read to filter out unnecessary data.

OSS SDK for C defines a type of error code to indicate exceptions. "996" is the error code -996 defined in OSS SDK for C. Similar error codes include -998, -995, and -992. This type of error is usually caused by network anomalies that cause the OSS foreign table import and export failures. -996 is the most common.

The OSS SDK for C uses curl to interact with the OSS server over a network. The common curl error codes include curl 56, that is, connection reset by peer, and curl 52. The curl error codes are usually caused by the automatic dropping connections from clients it regards as "inactive" when the load on the OSS server is high. In other words, when large scale OSS data needs to be imported or exported, the client cannot hold the connection for a long time for continuous communication because the client is in different stages of an execution plan. So the client will be closed by the OSS server as "inactivity".

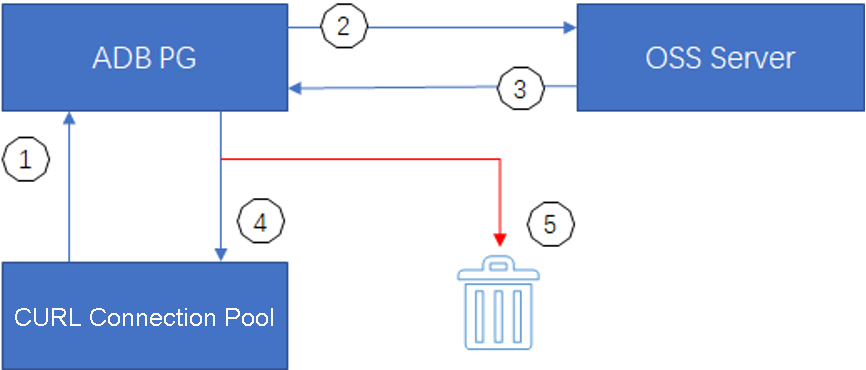

Typically, for this case, the client needs to retry. However, during the actual development, it is found that the exception cannot be improved even if an automatic retry mechanism is added to the client interface. After careful positioning, it was noticed that the OSS SDK for C added a connection pool of curl handles to improve the connection efficiency, while these handles with network anomalies are also stored in the pool. For this reason, even if the request is retried, the abnormal curl handles are still used for communication that the problem of 996 cannot be improved.

Now that the root cause is clear, the solution will be simple and intuitive then. In the recycling interface for curl handles, add a status check for curl handles, and destroy abnormal curl handles instead of adding them back to the connection pool. By doing so, the presence of invalid curl hands in the connection pool is avoided. When the client interface retries, just select a valid client interface or create a new curl connection to communicate with the client interface again. Of course, the automatic retry mechanism can only be used for those cases that can be resolved by retrying.

(1) When ADB for PG accesses the OSS foreign table, it first obtains the connection from the curl connection pool, or creates a new one if it does not exist.

(2) ADB for PG uses the curl connection handle to request communication with the OSS server.

(3) The OSS server returns the communication result by curl connection handles.

(4) The normally returned curl connection handles are added back to the connection pool for the next use.

(5) The abnormal curl connection handles are destroyed.

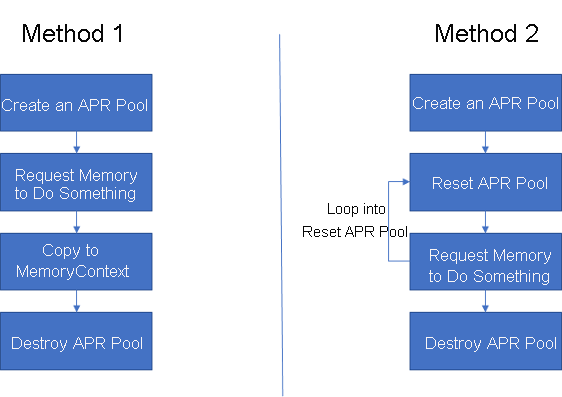

ADB for PG is built on the PostgreSQL kernel and inherits the memory management mechanism of PostgreSQL. The memory management of PostgreSQL adopts the memory context with process security, while OSS SDK for C adopts APR pools with thread safety. Under the memory context, every allocated memory can be explicitly called for free release, and memory context will be used to defragment the memory. However, in the APR pool, only operations such as creating memory pools, applying for memory, and destroying memory pools are visible, but no explicit release interface for memory is available.

The situation mentioned above means that a clear understanding of the lifecycle of the memory held by the OSS SDK for C interface is required, otherwise, problems such as memory leaks and access to already released memory can easily occur. Typically, there are two methods to request memory for the APR Pool.

The incompatibility between ADB for PG and OSS SDK for C in terms of memory management can be well solved through these two methods.

Most data in OSS is in formats such as CSV, ORC, and Parquet. The underlying storage encoding of data in formats like ORC or Parquet is not consistent with the data encoding of ADB for PG. Therefore, data type conversion is essential when scanning the foreign table. Type conversion is essentially the process of changing data from one encoding to another. For example, ORC is represented differently to ADB for PG in the decimal type. In ORC, the Decimal64 consists of an int64 that holds the numerical values of the data, followed by the number of digits and decimal places represented by the precision and scale. While in ADB for PG, the decimal type consists of an int16 that holds the numerical values of the data. The format conversion algorithm needs to perform cyclic division and modulo operations on each data, which is very CPU-intensive.

In order to reduce the CPU consumption caused by type conversions and further optimize the query performance of the foreign table, ADB for PG skips the type conversion step when exporting data through the foreign table, and writes ADB for PG data directly to the foreign table files in binary form. By doing so, there is also no need to perform any data type conversions when querying the foreign table. For example, when exporting an ORC foreign table, the table can directly write any data type as the binary type of ORC. The binary data stored in the ORC are encoded according to the corresponding data type in ADB for PG. Therefore, when querying the ORC foreign table, the type conversion can be omitted directly, thus reducing the CPU consumption. According to the test results with the TPC-H standard, the overall query performance can be improved by 15% to 20%.

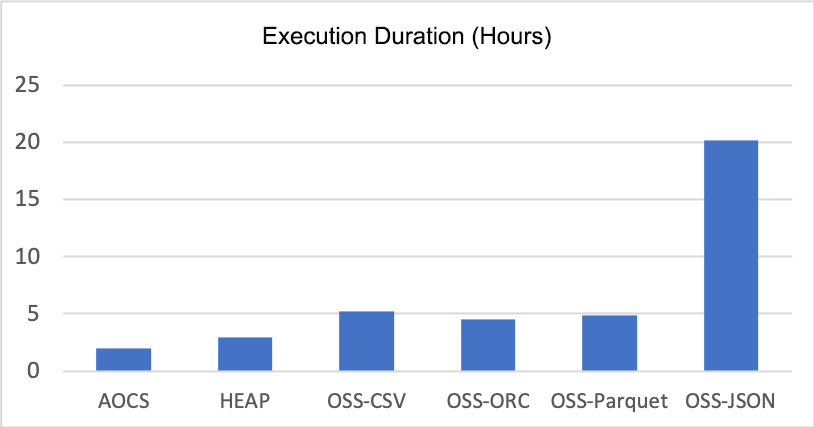

For more information about how to use the analysis function for foreign tables in ADB for PG, please see the Alibaba Cloud product manual: Use OSS foreign tables to access OSS data. Except for the different table creation statements, there is almost no difference between the operations on the foreign table and those on the local table, so the learning difficulty is very low. Here, compare the performance issues of the OSS foreign table analysis scenarios with those of the local table analysis scenarios.

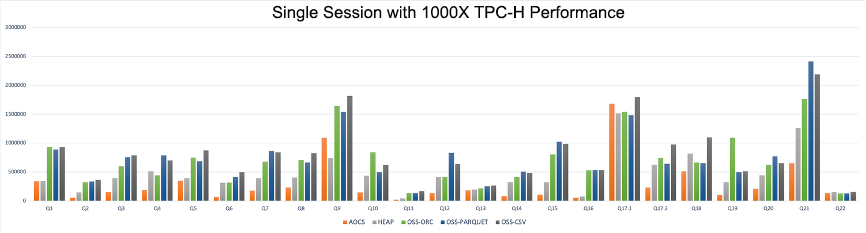

The environment configuration the test applied is the Alibaba Cloud ECS d1ne.4xlarge with 16 Intel Xeon E5-2682v4 as its kernel and 64 gigabytes of memory. Each elastic compute service (ECS) instance is configured with four HDDs and each disk has a read-write speed of about 200 megabytes per second. In the test, four ECS instances are used, two are used as master nodes and four are used as segment nodes with a total of 16 segments are deployed. This test uses a TPC-H query standard and a 1 terabyte dataset generated by official tools.

For local tables, the compressed column-based storage (AOCS) table and HEAP table. For OSS foreign tables, four formats of CSV, ORC, Parquet and JSON have been tested. The following table shows the total execution time of the 22 queries with TPC-H. The test data indicates that the query performance of the AOCS table is slightly better than that of the HEAP table. In terms of the foreign tables, the query performance in formats of CSV, ORC, and Parquet is slightly slower than that of local tables, with a gap of about 50%. The query performance of foreign tables in JSON format is significantly slower than that in other formats, which is mainly due to the slow parsing speed of the JSON format itself, not related to foreign tables.

The following figure shows the detailed time of 22 queries. The performance gap between local tables and foreign tables varies on different queries. Considering the advantages of foreign table analysis in terms of storage cost, flexibility and scalability, ADB for PG has great potential in application scenarios.

Lake-warehouse integration is an important capability of the next generation of data warehouse products. ADB for PG, as a powerful and scalable data warehouse product, has achieved the analysis and writing capabilities for various data sources based on the PostgreSQL foreign table object type, and has integrated with many performance optimization technologies. In the future, ADB for PG will continue to exert its efforts in product functions, cost-effectiveness, cloud-native capabilities, and lake-warehouse integration to provide users with more functions, performance, and cost optimization.

Making SQL Optimizer More Accurate: AnalyticDB for PostgreSQL Auto Analyze Feature

Cloud-Native Database 2.0: All-in-One Comprehensive Data Management and Services

ApsaraDB - April 28, 2020

ApsaraDB - July 23, 2021

Alibaba EMR - July 9, 2021

harold c - July 24, 2023

ApsaraDB - May 19, 2023

Michael Peng - September 24, 2019

Data Lake Formation

Data Lake Formation

An end-to-end solution to efficiently build a secure data lake

Learn More Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Data Lake Storage Solution

Data Lake Storage Solution

Build a Data Lake with Alibaba Cloud Object Storage Service (OSS) with 99.9999999999% (12 9s) availability, 99.995% SLA, and high scalability

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn MoreMore Posts by ApsaraDB