By Yanxian (Cai Chang)

The importance of data assets is becoming increasingly prominent today. Enterprises have accumulated a large amount of historical data during their development. Although the access frequency of this data is low, the data is of great value for trend analysis, compliance audit, model training, and customer behavior research. It is the cornerstone for enterprises to build data-driven decision-making capabilities. Currently, archived data management faces three major challenges. First, the storage cost is high, requiring high-capacity and low-cost solutions. Second, access performance requirements must be met. The solution must support point queries and possess certain analysis capabilities. Third, management complexity is an issue. Users expect a lightweight cold and hot data management method to avoid manual archiving or maintaining multiple architectures.

The cloud-native database PolarDB previously launched a row-oriented table solution based on the X-Engine engine. This solution adopts the Log-Structured Merge-Tree (LSM-Tree) architecture and zstd high-efficiency compression. In standard scenarios, this solution can compress the storage space by 60% compared with InnoDB, and the performance decreases by 10% to 30%. This solution achieves a preliminary balance between cost and performance. However, the problem is not completely solved. On the one hand, row-oriented tables use high-performance SSD storage, so the cost of long-term retention (LTR) of cold data is still high. On the other hand, row-oriented storage cannot meet the requirements of high-performance analysis. Therefore, PolarDB X-Engine continues to evolve and launches a columnar table solution to break through the bottleneck from the two dimensions of storage architecture and computing capability:

• Lower cost: Column store is introduced. High-efficiency encoding and general compression are combined to compress data to 1/10 of the raw data. The ultimate cost-effectiveness of Object Storage Service (OSS) is utilized to significantly reduce long-term storage fees.

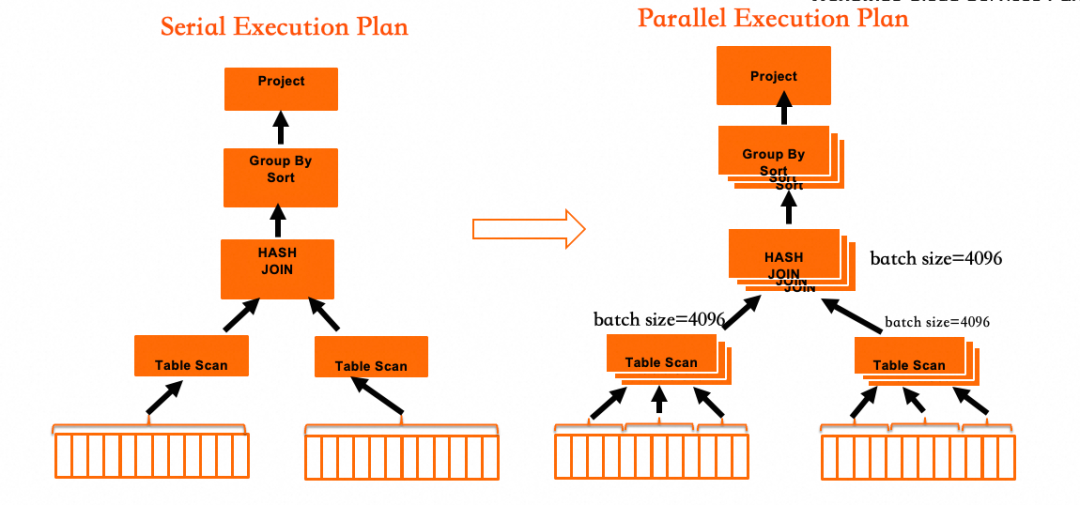

• Stronger analysis: Parallel calculation and batch processing technologies are utilized to improve the analysis performance of archived data by an order of magnitude.

Today, PolarDB has implemented fully automatic cold and hot data layer management. No manual intervention is required throughout the process. This provides a low-cost, high-performance, and easy-to-manage integrated solution for massive historical Data. This helps enterprises fully release the value of data assets and promotes intelligent upgrades.

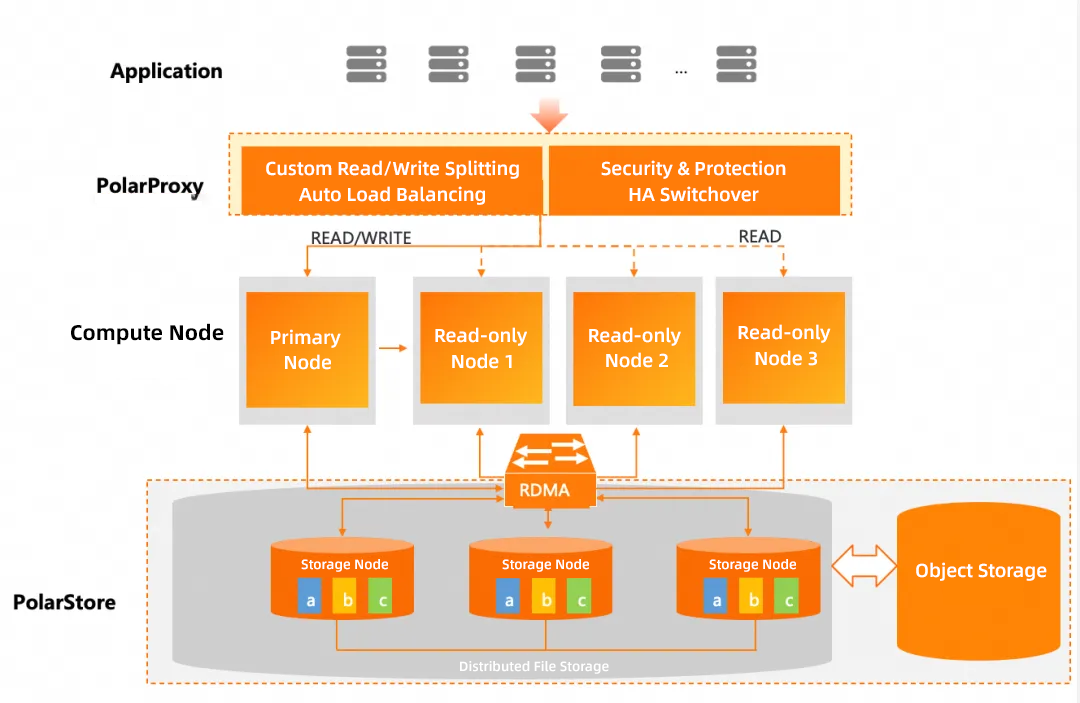

PolarDB adopts a storage-compute decoupled architecture. The bottom layer is the distributed storage tier. Multi-replica consistency is ensured through the ParallelRaft protocol. OSS is transparently supported. A compute node consists of one read-write (RW) node and one or more read-only (RO) nodes. The InnoDB and X-Engine engines are supported simultaneously. PolarProxy serves as a bridge between applications and compute nodes to provide read/write splitting and load balancing capabilities.

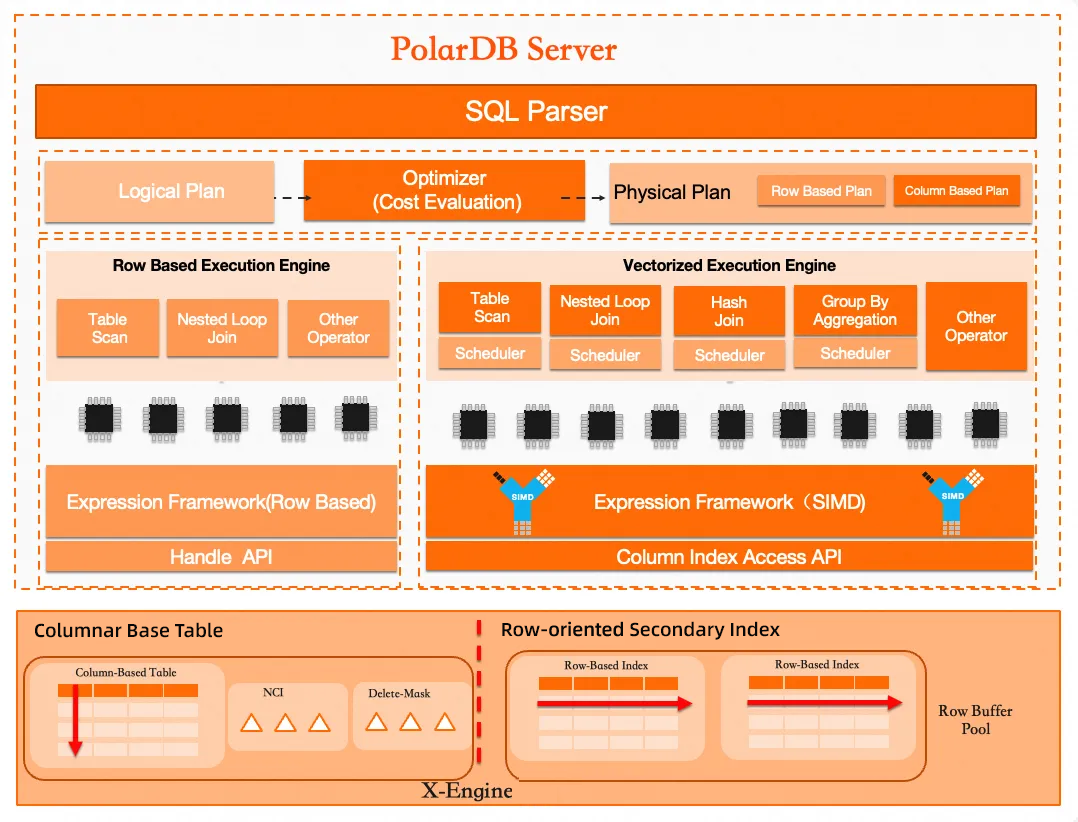

The core features of the PolarDB columnar table are mainly reflected in the compute nodes, including the optimizer, execution operator, and storage engine layers.

• Optimizer: The cost-based optimizer is designed for row-column hybrid storage. The optimizer can automatically select row store or column store to execute query requests based on the cost.

• Execution engine: The vectorized parallel operator is designed for column store. It Supports extremely fast single table and multi-table queries.

• Storage engine: Support for column store is added for real-time transaction updates. The primary table of the column store adopts column store. Rapid update capabilities are provided through the NCI component (primary key index). The delete-mask component is used to mark deletion to provide high-efficiency parallel query capabilities. The row-oriented secondary index provides the ability to quickly filter invalid data.

Based on 100% compatibility with the MySQL protocol, the PolarDB columnar table implements low-cost cold data archiving storage and brings an order-of-magnitude improvement in query performance.

• Storage-compute decoupled architecture: The storage-compute decoupled architecture is adopted. Compute and storage are extended independently to provide PB-level storage capacity.

• 100% compatible with MySQL: The columnar table has a data type system consistent with MySQL. It supports flexible type transformation and is 100% compatible with the MySQL protocol.

• Open column store: Based on the open-standard ORC columnar storage file format, this feature provides a compression ratio of 5 to 10 times to further reduce storage costs.

• Support for OSS: This feature supports storing table data on OSS. It also supports snapshot backup and restore, which is transparent to users.

• High-performance real-time analysis: You can perform analysis queries immediately after data is written. The system uses parallel, vectorized, and Massively Parallel Processing (MPP) technologies to provide high-performance analysis.

• Ease of use: One system replaces the original two systems for transactional processing (TP) and analytical processing (AP). This achieves the integration of online, archiving, and analysis.

• Large wide table: The system supports large wide table storage and supports a maximum of 10,000 columns.

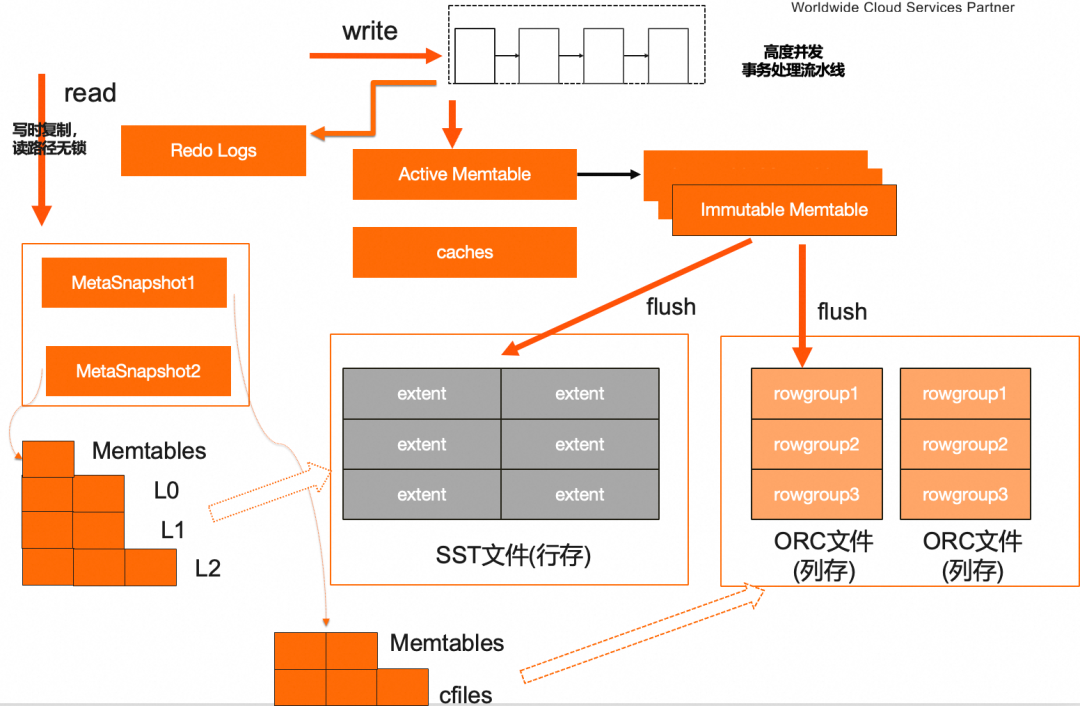

The X-Engine engine uses the LSM-tree structure. Data is written to memory by using the append-only write method and is periodically materialized to disks. Data in memory exists in the form of memtables. Data on disks is divided into row-oriented tables and columnar tables based on the data organization form.

• X-Engine row-oriented tables are stored by row. When memtables are flushed to disks, the memtables are written to disks in the SST file format. Data on disks is stored in layers. The data includes a total of three layers: L0, L1, and L2. Data in each layer is organized in order by block.

• X-Engine columnar tables use column store. When memtables are flushed to disks, the memtables are written to disks in the open ORC format. ORC files use column store. Combined with attributes such as encoding and compression, the raw data is compressed by 5 to 10 times. In scenarios with obvious data features, a higher compression ratio can be obtained.

X-Engine columnar tables use row-column hybrid storage. Data in the primary table is stored in the column format. Primary key indexes and common secondary indexes are stored in the row store format. Data in the primary table is in the insertion order by default. Users can define the order_key property to specify the data order. Primary key indexes store the mapping from the primary key (PK) to the rowid. The purpose is to facilitate the fast location of specified records based on the primary keys. This supports fast point queries and modification actions. Records in columnar tables are organized by rowgroup. Each column in a rowgroup is stored separately. Combined with the data features of columns, encoding and compression can be performed to reduce storage space.

The column-oriented primary table uses non-primary key order storage. To support fast point queries and point query-based updates and deletes, we introduced the Non-Clustered Index (NCI) component to store the mapping between primary keys and records. This is actually the primary key index. In column store, the column-oriented primary table and the primary key index are two components of the system. The core difference is that one uses the column format and the other uses the row store format for data flushed to disks.

For the traditional LSM-tree structure, data is written to memtables by using the "append-only write" method. The benefit is high write performance. The cost is that read operations need to "merge" multiple versions. This is a write-friendly storage architecture. The main scenario of column store is to accelerate analysis, which imposes higher requirements on read performance. Therefore, our update operations use the Insert/Delete separation model. Insert data is written to the column-oriented primary table, and Delete operations are written to the Delete-mask component. After optimization, multiple threads can scan IMCI files in parallel. Each thread "merges" the corresponding Delete-mask information to finally obtain the query result. This execution method can fully utilize the advantages of multi-core and batch processing of modern computers. This significantly improves the throughput and response performance of complex analysis queries.

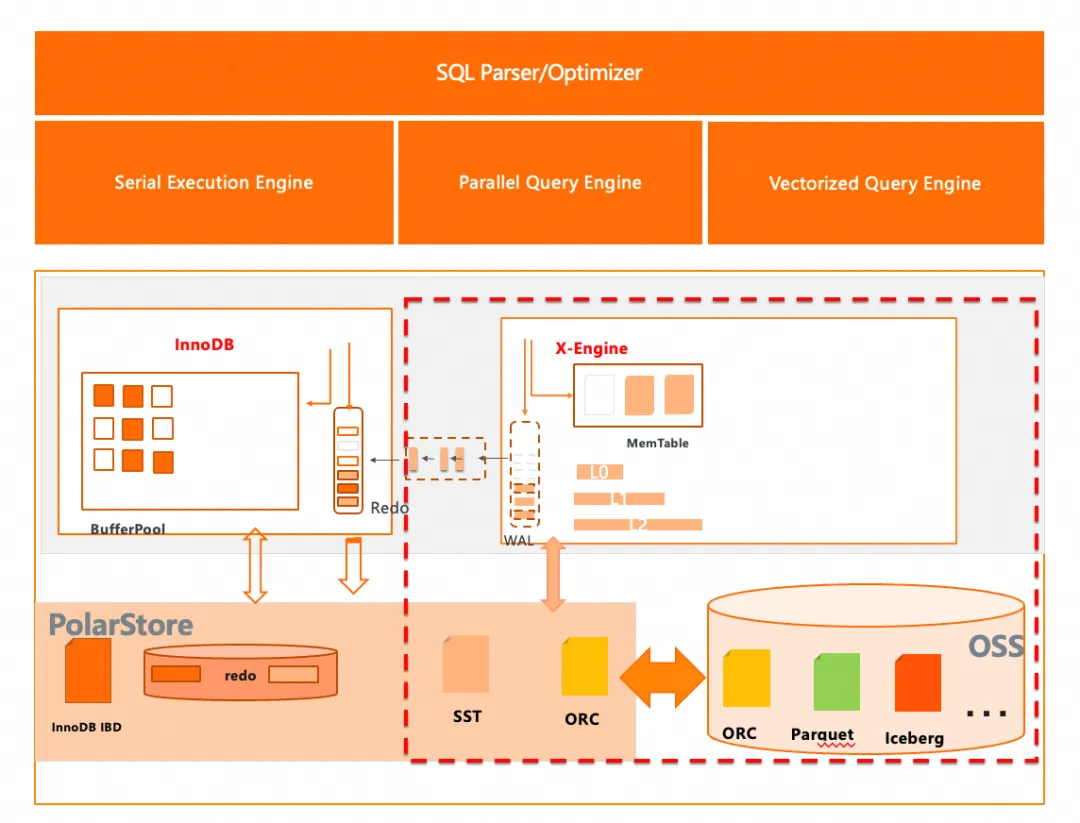

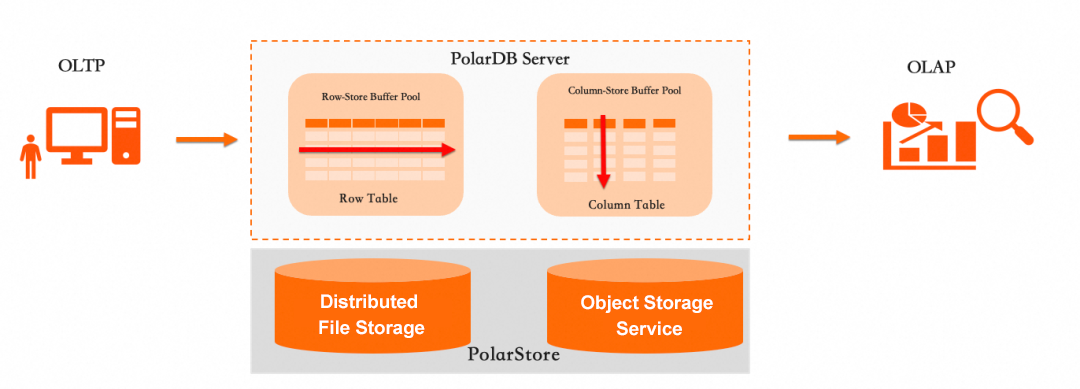

As more and more data is accumulated in the database, users expect to retain historical data at a lower storage cost. Combined with the InnoDB engine and X-Engine, the system can provide customers with an integrated hot and cold storage solution. For online hot data, the InnoDB engine is used for storage. For warm data and cold data, the X-Engine engine can be used for storage. The X-Engine engine supports both row store and column store formats. The row store format uses general compression algorithms for compression. In general scenarios, a compression ratio of 2 to 5 can be achieved. Data in the column store format is organized by column. Combined with encoding and general compression algorithms, a compression ratio of 5 to 10 can be achieved. With the underlying distributed storage capabilities, the X-Engine engine supports both PolarStore and OSS. For cold data, the use of OSS can further reduce the storage cost to 1/10. A table in PolarDB can support multiple storage engines simultaneously. For example, some partitions use InnoDB partitions and other partitions use X-Engine partitions. This achieves the storage of hot and cold data in the same table and is completely transparent to business access. The hybrid partitioned table can also fully support DML operations and DDL operations. Table operations are not restricted because of cold data partitions.

The X-Engine engine supports row-oriented and column-oriented storage. For row-oriented storage, X-Engine supports creating indexes to accelerate queries and alleviate the issue of slow access to cold data. Because the X-Engine engine and the InnoDB engine run on the same PolarDB instance, you can perform hybrid queries by using a single SQL statement to access both cold data and hot data simultaneously. The query SQL ensures 100% compatibility with MySQL. For column-oriented storage, the X-Engine engine adopts the open ORC format. Combined with the IMCI feature of PolarDB, the X-Engine engine can significantly enhance the analysis capabilities of archived tables. In addition, the IMCI column-oriented executor has the capability to analyze open formats such as ORC and Parquet. For data that is already stored in OSS, the parallel scan capability of the IMCI executor can fully utilize OSS bandwidth and increase CPU utilization through parallel computing and vectorization technology. For scenarios that require joint analysis, the IMCI execution engine can access X-Engine tables and OSS external tables simultaneously in a single SQL statement.

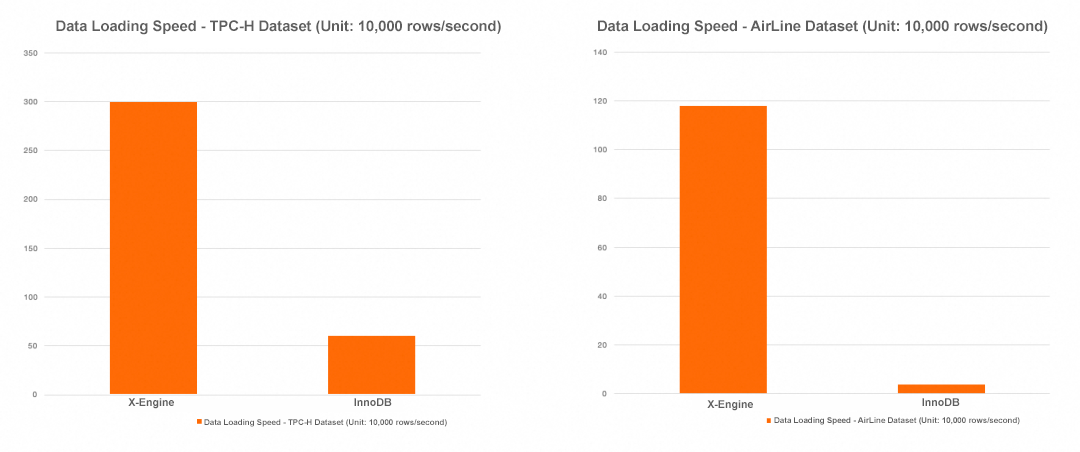

The core feature of a relational database is the support for transaction atomicity, consistency, isolation, and durability (ACID). Each transaction write requires operations such as locking, writing redo/undo logs, and writing data pages. For scenarios with UNIQUE constraints, uniqueness validation is also required. These heavy actions result in low write efficiency and critically affect data import performance. To accelerate data loading speed, PolarDB columnar tables support the bypass import feature, which performs extreme optimization on data loading. After the optimization, the loading speed with 64 concurrent threads can reach 3 million rows per second.

PolarDB columnar tables significantly extend the boundaries of PolarDB. On one hand, they utilize the columnar format and object storage to further reduce the storage costs of massive data archiving. On the other hand, they utilize the column-oriented execution engine to perform efficient aggregation and analysis on data. Combined with the capability to access open format external tables, PolarDB columnar tables can also perform federated analysis on offline data and online data. This truly achieves the goal of handling online data transaction processing and data analysis within a single database, eliminating the burden of maintaining multiple computing architectures for applications.

• Massive data archiving scenario: Data is archived from data sources such as PolarDB and RDS to PolarDB (X-Engine) to reduce archiving storage costs.

• Real-time data warehouse scenario: Relying on the massive data storage capability of PolarStore, the system aggregates multiple upstream data sources and performs real-time aggregation and analysis, using the database as a dedicated data warehouse.

• Federated query and analysis scenario: Relying on the capability of PolarDB to access open format files via external tables, PolarDB can directly analyze offline data in external tables or perform federated analysis on online data and offline data.

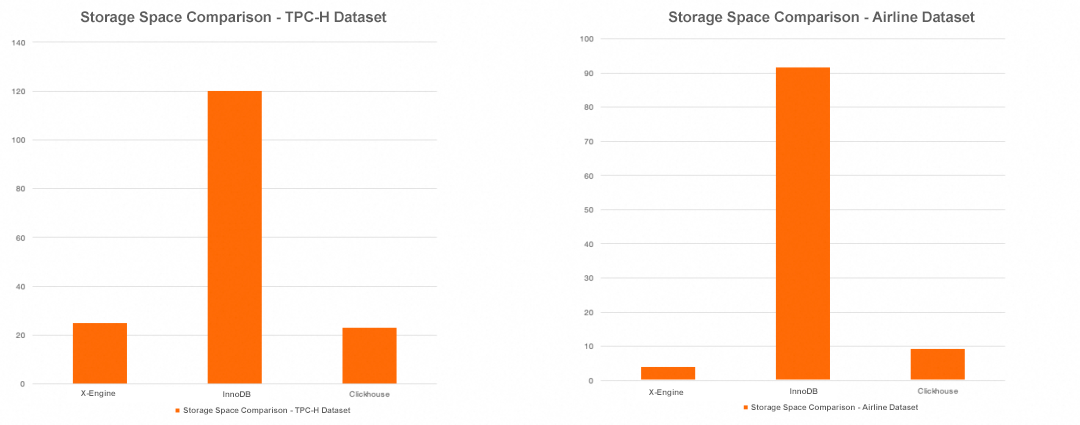

PolarDB columnar tables provide excellent data compression and analysis performance. In the TPC-H testing scenario, compared with the InnoDB engine, the storage space of columnar tables is compressed to 1/5 of the raw data, and the loading performance is increased by 5 times. In the Airline dataset, the storage space of columnar tables is compressed to 1/20 compared with the InnoDB engine, and the data loading performance is increased by 30 times.

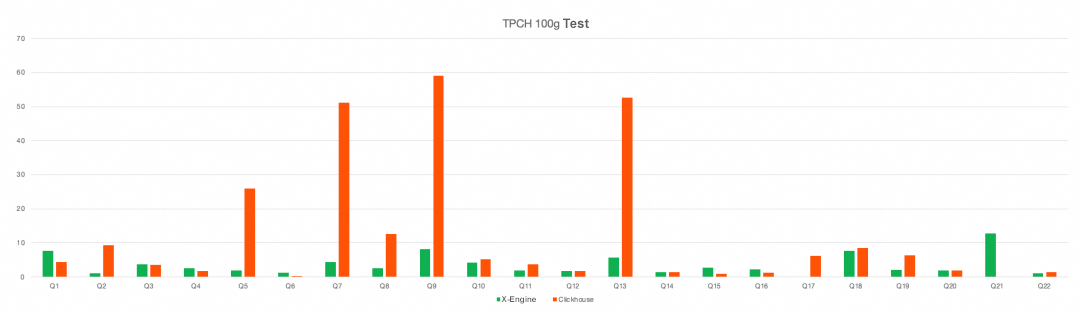

In terms of query performance, for the 22 SQL queries in TPC-H, the performance of most SQL queries on columnar tables is better than that on ClickHouse. At the same time, columnar tables support multi-node massively parallel processing (MPP) analysis, and the performance can increase linearly as the number of nodes increases.

Query Performance of TPC-H 100 GB

1. Unified catalog management to support richer open lake formats. The PolarDB columnar table engine cannot only efficiently analyze local data but also access data in the data lake by utilizing the powerful computing engine. Currently, the engine supports open ORC and Parquet file formats. In the future, the engine will fully support table formats such as Paimon, Iceberg, Hudi, and Delta Lake to achieve integrated analysis of data lakes and data warehouses.

2. Multi-master and multi-write. PolarDB columnar tables support single-node write and multi-node read, and improve write capabilities through vertical scaling. To handle larger-scale data loading and streaming writes, multi-master capabilities will be implemented in the future to achieve horizontally scalable write capabilities.

Alibaba Cloud ApsaraDB Data Agent Achieves Dual Breakthroughs in Performance and Experience

Lindorm Tops VectorDBBench Performance, Defining New Heights for Vector Retrieval

ApsaraDB - March 20, 2026

ApsaraDB - November 28, 2022

ApsaraDB - November 18, 2025

ApsaraDB - January 9, 2023

Apache Flink Community - July 28, 2025

Alibaba EMR - September 13, 2022

PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More PolarDB for MySQL

PolarDB for MySQL

Alibaba Cloud PolarDB for MySQL is a cloud-native relational database service 100% compatible with MySQL.

Learn More Backup and Archive Solution

Backup and Archive Solution

Alibaba Cloud provides products and services to help you properly plan and execute data backup, massive data archiving, and storage-level disaster recovery.

Learn MoreMore Posts by ApsaraDB