As LLM applications and search, advertising, and recommendation systems evolve, enterprise demand for vector databases goes beyond simple "TopK retrieval". In production, how do you maintain ultra-low latency over massive data? How do you sustain high throughput under complex scalar filter conditions? How do you keep indexes fresh and fast when data is updated frequently? The answers to these questions are key to business success.

Lindorm, Alibaba Cloud's cloud-native multi-model database, recently upgraded its Vector Retrieval Service, which now tops the performance rankings of the industry-recognized VectorDBBench benchmark. The results validate Lindorm's ability to handle massive-scale, high-concurrency, and complex hybrid retrieval scenarios.

The test uses the industry-standard Cohere dataset. It compares Lindorm against mainstream vector databases in a real cloud environment.

Tests were conducted at two scales: 10 million vectors (Cohere-10M) and 1 million vectors (Cohere-1M). Notably, Lindorm's speed does not come at the cost of accuracy: on both datasets, it maintains a recall rate above 99%.

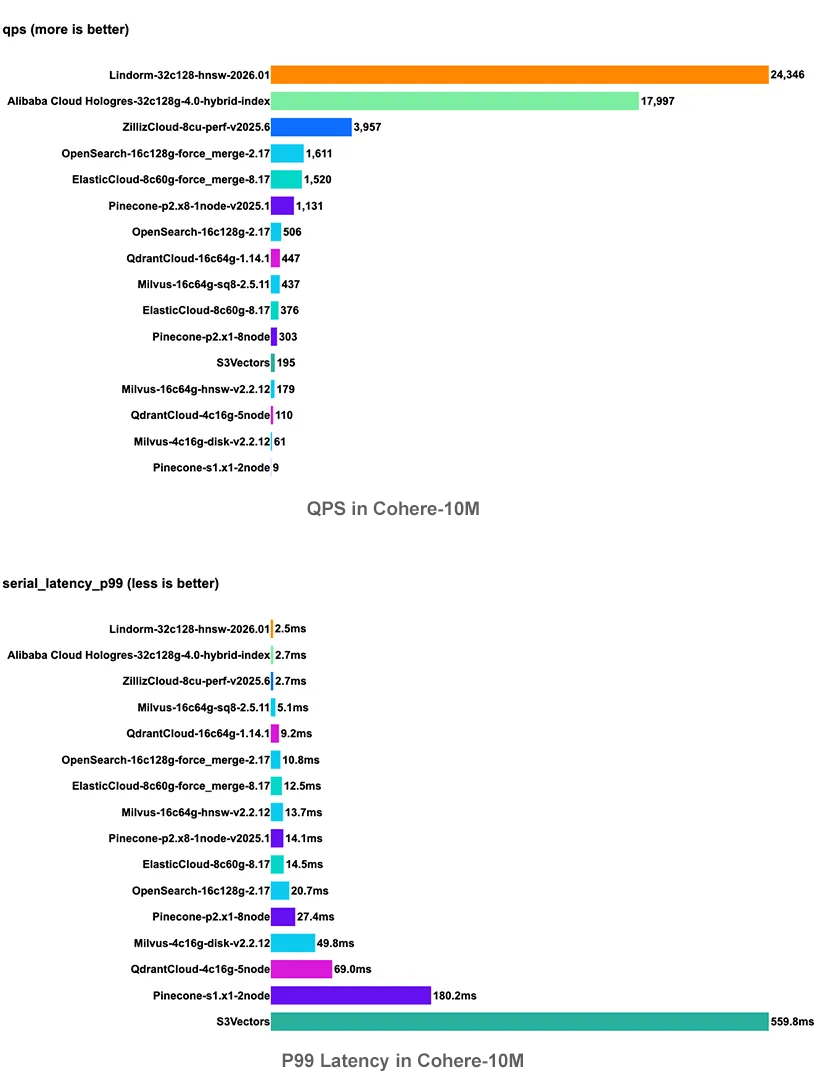
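Recall here is the usual approximate-nearest-neighbor metric: the share of the exact top-k neighbors that the index actually returns. The following is a minimal sketch of recall@k, assuming each query has a precomputed exact ground-truth list; the function names are hypothetical, and VectorDBBench's own computation may differ in details.

```python
def recall_at_k(retrieved, ground_truth, k):
    """Share of the exact top-k neighbors that appear in the returned top-k."""
    true_top_k = set(ground_truth[:k])
    if not true_top_k:
        raise ValueError("ground truth must contain at least one neighbor")
    hits = len(true_top_k.intersection(retrieved[:k]))
    return hits / len(true_top_k)


def mean_recall(results, truths, k):
    """Average recall@k over a batch of queries."""
    scores = [recall_at_k(r, t, k) for r, t in zip(results, truths)]
    return sum(scores) / len(scores)


# Three of the four true neighbors were returned.
print(recall_at_k([1, 2, 3, 9], [1, 2, 3, 4], k=4))  # 0.75
```

A recall above 99% therefore means that, averaged over the query set, fewer than 1 in 100 true nearest neighbors is missed.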

At the 10-million scale, we compared Lindorm (a single 32-core node) with the top cloud services on the VectorDBBench leaderboard:

• Top QPS: Lindorm reached 24,346 QPS, far surpassing Zilliz Cloud (3,957) and the previous SOTA record (18,000).

• Ultra-low latency: Even at this throughput, Lindorm's P99 latency stays stable at 2.5 ms. By contrast, competitors' latency is generally around 10 ms and in some cases exceeds 100 ms.

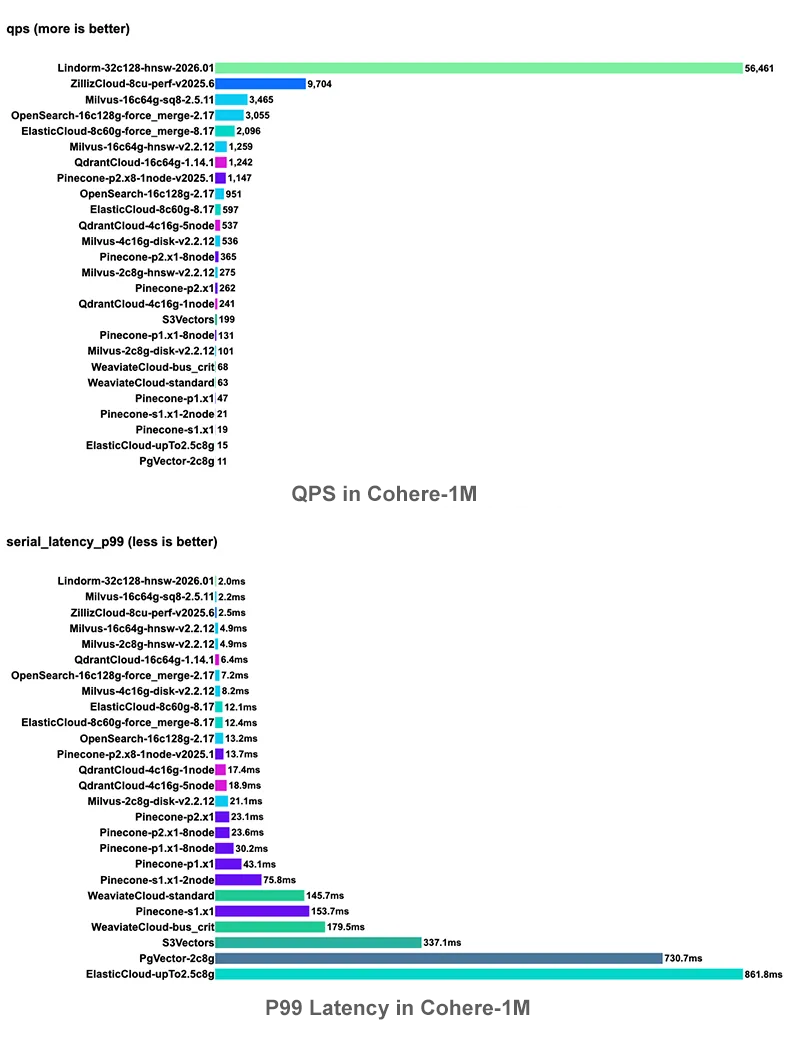
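P99 latency is the value below which 99% of request latencies fall. A short sketch using the nearest-rank definition follows; the function name and sample data are hypothetical, and benchmarking tools may instead interpolate between samples (NumPy's `percentile`, for example, interpolates linearly by default), so small differences are expected.

```python
import math


def percentile_nearest_rank(samples_ms, p):
    """p-th percentile of latency samples by the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank into the sorted list
    return ordered[rank - 1]


latencies = [float(ms) for ms in range(1, 101)]  # 100 hypothetical samples: 1..100 ms
print(percentile_nearest_rank(latencies, 99))  # 99.0
```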

At the 1-million scale, Lindorm shows an overwhelming performance advantage: it exceeds 56,000 QPS while keeping latency at 2 ms. By comparison, mainstream open source products such as Milvus and OpenSearch generally reach around 3,000 QPS.

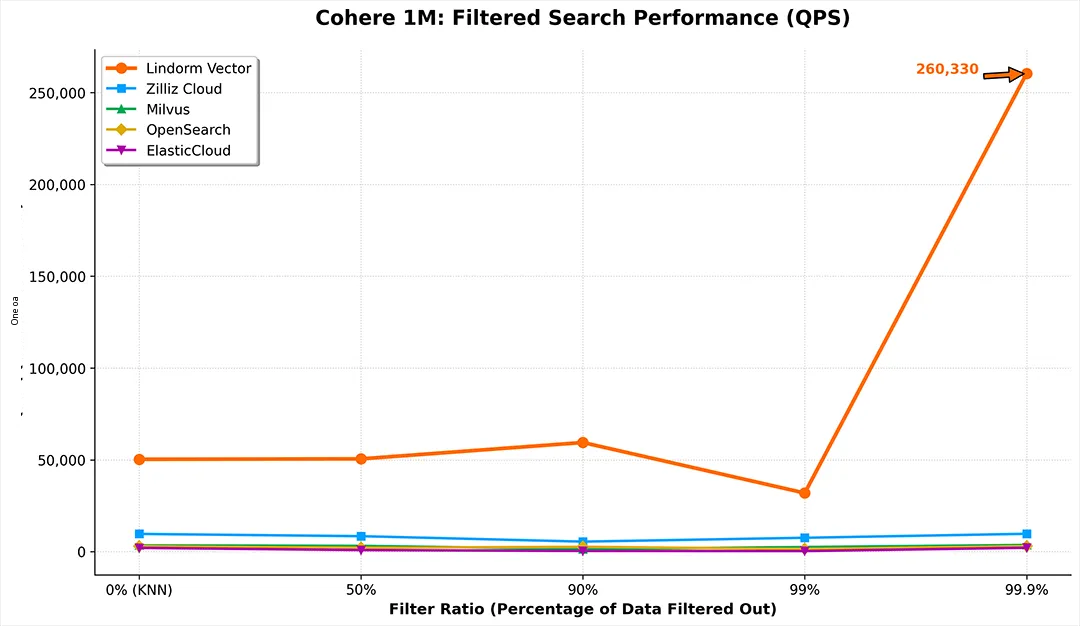

Hybrid search is the real test of a production environment: in business scenarios, 80% of queries involve complex scalar filter conditions. The traditional "filter then retrieve" and "retrieve then filter" patterns often suffer a sharp performance collapse at certain filtering ratios.
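To make that failure mode concrete, here is a toy sketch of both classic patterns. It uses an exact scan as a stand-in for an ANN index (an assumption for brevity), and the function names are hypothetical, not a Lindorm API. With a filter that keeps only 1% of rows, "retrieve then filter" fetches 100 candidates but only one survives, so the query returns far fewer than k results. "Filter then retrieve" returns all k here, but only because it ranks every surviving row, which degrades to brute force when the filter is loose.

```python
def l2_sq(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def retrieve_then_filter(query, vectors, predicate, k, candidates):
    """Post-filtering: take the nearest `candidates` rows, then apply the filter."""
    # Exact scan stands in for an unfiltered ANN index here.
    nearest = sorted(range(len(vectors)), key=lambda i: l2_sq(query, vectors[i]))[:candidates]
    return [i for i in nearest if predicate(i)][:k]  # may return fewer than k


def filter_then_retrieve(query, vectors, predicate, k):
    """Pre-filtering: apply the filter, then rank every surviving row."""
    allowed = [i for i in range(len(vectors)) if predicate(i)]
    # Cost grows with len(allowed): close to brute force when the filter is loose.
    return sorted(allowed, key=lambda i: l2_sq(query, vectors[i]))[:k]


def keep_one_percent(row_id):
    """A strict scalar filter: 20 of 2,000 rows pass."""
    return row_id % 100 == 0


vectors = [[float(i), 0.0] for i in range(2000)]
query = [0.0, 0.0]
print(retrieve_then_filter(query, vectors, keep_one_percent, k=10, candidates=100))  # [0]
print(filter_then_retrieve(query, vectors, keep_one_percent, k=10))
# [0, 100, 200, 300, 400, 500, 600, 700, 800, 900]
```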

Thanks to its hybrid cost-based/rule-based optimizer (CBO/RBO) and adaptive hybrid index architecture, Lindorm intelligently routes execution plans across the full range of filtering ratios. It maintains high performance while keeping recall above 90% on every execution branch:

• Low filtering ratio (vector-driven): When the filtered result set is large, the optimizer chooses a vector-first navigation strategy. Using cross-pipeline technology, it applies scalar filters in parallel during graph traversal and sustains over 50,000 QPS, comparable to pure vector retrieval.

• High filtering ratio (scalar-driven): When filter conditions are strict, the system automatically switches to scalar-driven mode. Using bitmap and inverted indexes, QPS soars to over 260,000, completely avoiding the performance pitfalls that traditional solutions hit when the result set is sparse.
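The routing decision above can be sketched as a function of filter statistics. This is a simplified rule-based stand-in, not Lindorm's optimizer: the `FilterStats` fields, the 1% cutoff, and the plan names are all hypothetical, and a real CBO would compare the estimated cost of each plan rather than apply one fixed threshold. Note that a high filtering ratio corresponds to a small fraction of rows passing the filter.

```python
from dataclasses import dataclass


@dataclass
class FilterStats:
    """Hypothetical statistics an optimizer might keep for one predicate."""
    total_rows: int
    matching_rows: int  # e.g. the cardinality of the predicate's bitmap

    @property
    def pass_fraction(self) -> float:
        return self.matching_rows / self.total_rows if self.total_rows else 0.0


def choose_plan(stats: FilterStats, k: int, scalar_cutoff: float = 0.01) -> str:
    """Illustrative rule: route on how many rows survive the scalar filter."""
    if stats.matching_rows == 0:
        return "empty"  # nothing can match, so skip the vector search entirely
    if stats.matching_rows <= k or stats.pass_fraction <= scalar_cutoff:
        # High filtering ratio: enumerate survivors from the bitmap/inverted
        # index and score them exactly; no graph traversal needed.
        return "scalar-driven"
    # Low filtering ratio: walk the vector graph and test the predicate inline.
    return "vector-driven"


print(choose_plan(FilterStats(10_000_000, 8_000_000), k=10))  # vector-driven
print(choose_plan(FilterStats(10_000_000, 20_000), k=10))     # scalar-driven
```

A fixed cutoff is the simplest possible rule; the point of a cost-based optimizer is that the crossover depends on data distribution, index layout, and k, which is why the statistics are gathered in the first place.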

To ensure fairness and reproducibility, the tests used standard industry hardware specifications and an open source testing framework. All values are actual measurements taken with the query cache disabled.

• Test environment: The Lindorm instance has 32 cores and 128 GB of memory (32C128G), a typical configuration for cloud production environments.

• Software version: The Lindorm vector engine version is 3.10.16 or later.

• Test tools: We used the authoritative VectorDBBench for stress testing. To support the Lindorm protocol, we submitted adaptation code to the official VectorDBBench repository as a pull request, which developers can use to reproduce these results directly:

🔗https://github.com/zilliztech/VectorDBBench/pull/718

• Comparison data: Competitor figures are taken from the VectorDBBench Leaderboard and public evaluation reports.

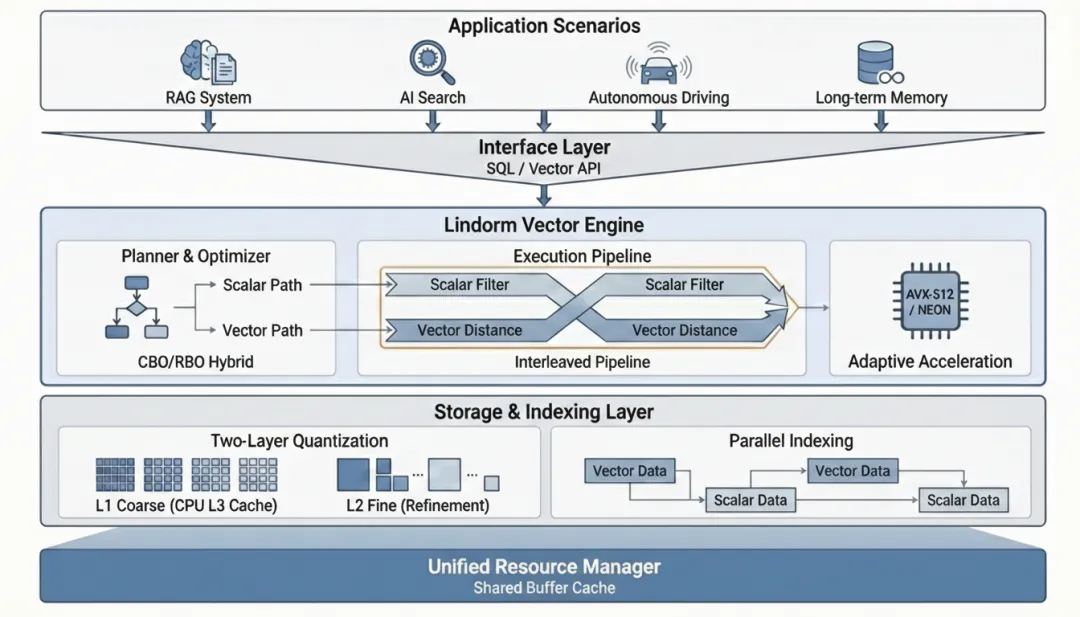

The breakthrough in Lindorm AISearch performance does not rely on optimizing a single algorithm. It stems from a deep restructuring of the database system architecture. We evolved AISearch from an "external index" to a "native database system."

To handle the memory-intensive access patterns of AISearch, Lindorm deeply integrates clustering, graph indexing, and two-layer quantization:

• **Clustering and graph indexing:**

Clustering indexes provide stable space partitioning, quickly locating target regions and significantly reducing wasted search. Graph indexes build navigation graphs on top of these partitions for rapid nearest-neighbor convergence.

• **Two-layer quantization:**

Layer 1 (coarse ranking) uses high-compression quantization, which keeps the index in the CPU L3 cache and relieves memory pressure. Layer 2 (fine ranking) re-ranks only the key candidate set at original precision.

This "fast then precise" strategy lets Lindorm achieve a qualitative leap in AISearch throughput while maintaining high recall.
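The combination of partitioning and "fast then precise" ranking can be illustrated with a toy pipeline. This is a minimal sketch under stated assumptions, not Lindorm's implementation: it uses inverted-file (IVF) partitioning as the clustering index with hand-picked centroids (normally trained with k-means), substitutes 8-bit scalar quantization for the unspecified high-compression quantizer, and omits the graph layer entirely. All function names are hypothetical.

```python
def l2_sq(a, b):
    """Squared Euclidean distance between two float vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def build_ivf(vectors, centroids):
    """Assign each vector id to the partition of its nearest centroid."""
    lists = {c: [] for c in range(len(centroids))}
    for i, v in enumerate(vectors):
        best = min(range(len(centroids)), key=lambda c: l2_sq(v, centroids[c]))
        lists[best].append(i)
    return lists


def train_sq(vectors):
    """Fit a global min/max 8-bit scalar quantizer (illustrative choice)."""
    lo = min(min(v) for v in vectors)
    hi = max(max(v) for v in vectors)
    scale = (hi - lo) / 255 or 1.0  # guard against constant data
    return lo, scale


def quantize(v, lo, scale):
    """Map each float to one byte: 1 byte per dimension instead of 4 for float32."""
    return bytes(min(255, max(0, round((x - lo) / scale))) for x in v)


def search(query, vectors, codes, centroids, lists, lo, scale, k, nprobe, rerank):
    # 1) Clustering: visit only the nprobe partitions closest to the query.
    probe = sorted(range(len(centroids)), key=lambda c: l2_sq(query, centroids[c]))[:nprobe]
    candidates = [i for c in probe for i in lists[c]]
    # 2) Coarse ranking: cheap integer distance on the compact 8-bit codes.
    q = quantize(query, lo, scale)
    coarse = sorted(candidates, key=lambda i: sum((a - b) ** 2 for a, b in zip(q, codes[i])))
    shortlist = coarse[:rerank]
    # 3) Fine ranking: exact float distance, but only for the shortlist.
    return sorted(shortlist, key=lambda i: l2_sq(query, vectors[i]))[:k]


vectors = [[float(i), float(i)] for i in range(20)] + [[100.0 + i, 100.0 + i] for i in range(20)]
centroids = [[10.0, 10.0], [110.0, 110.0]]  # would normally come from k-means
lists = build_ivf(vectors, centroids)
lo, scale = train_sq(vectors)
codes = [quantize(v, lo, scale) for v in vectors]
print(search([10.2, 10.2], vectors, codes, centroids, lists, lo, scale, k=2, nprobe=1, rerank=5))
# [10, 11]
```

The design choice this illustrates is that the expensive full-precision distance runs only `rerank` times per query, while the bulk of the scan touches small codes that are far more cache-friendly.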

The deepest change in the Lindorm vector engine is how it handles vectors and scalars together. Unlike the "patchwork" approach of traditional solutions, which simply splice together separate vector and scalar indexes, Lindorm treats vectors and scalars as a unified abstract entity.

• Unified architecture: We redesigned the storage structure, optimizer, and executor with vector data as the anchor. In this architecture, scalar properties are no longer accessories to vectors. Instead, they interweave closely with vector features to eliminate data transfer losses.

• Smart routing: The system features a built-in hybrid CBO/RBO optimizer. It automatically selects the optimal path (such as vector-driven, scalar-driven, or parallel pipeline) based on real-time statistics. This ensures high performance even under complex filter conditions and avoids the performance cliffs of traditional solutions.

• Hardware acceleration: The engine detects the underlying platform at runtime and automatically enables x86 (AVX-512) or ARM (NEON) instruction sets to maximize performance on different hardware.

• Graph structure evolution: A background reorganization mechanism fixes the data drift caused by incremental writes. It continuously monitors structure quality and performs gradual repairs and optimizations without affecting online services, keeping the index in an ideal state.

• Production-grade dynamic capabilities: Vector and scalar data can be updated in real time, with changes taking effect within seconds, and online schema evolution lets you change fields flexibly without reindexing.
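The hardware-acceleration point in the list above amounts to choosing a distance kernel once, at startup, based on what the CPU supports. The sketch below shows only that selection logic, under stated assumptions: it reads x86 feature flags from Linux's `/proc/cpuinfo`, the kernel labels are hypothetical, and a real engine would make this decision in native code (for example via CPUID) rather than in Python.

```python
import platform


def pick_distance_kernel(machine: str, cpu_flags: set) -> str:
    """Choose a SIMD kernel label from the CPU architecture and feature flags."""
    arch = machine.lower()
    if arch in ("x86_64", "amd64") and "avx512f" in cpu_flags:
        return "avx512"  # AVX-512 Foundation is available
    if arch in ("aarch64", "arm64"):
        return "neon"  # Advanced SIMD (NEON) is standard on 64-bit ARMv8-A
    return "scalar"  # portable fallback


def read_linux_cpu_flags(path: str = "/proc/cpuinfo") -> set:
    """Return the x86 'flags' set from /proc/cpuinfo, or an empty set."""
    try:
        with open(path) as f:
            for line in f:
                if line.startswith("flags"):
                    return set(line.split(":", 1)[1].split())
    except OSError:
        pass  # not Linux, or the file is unreadable
    return set()


if __name__ == "__main__":
    print(pick_distance_kernel(platform.machine(), read_linux_cpu_flags()))
```

Keeping the decision in a pure function separate from detection makes the routing easy to test on any machine, regardless of the hardware it actually runs on.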

The Lindorm vector service is more than a faster retrieval index: it rebuilds the underlying logic of the vector database. By combining high-performance retrieval acceleration, full-architecture hardware adaptation, and database-level query optimization, Lindorm provides a solid performance foundation for large-scale AI applications. Whether you need retrieval augmentation for large language models (LLMs) with trillions of parameters or product recommendations under ultra-high QPS and constant real-time changes, Lindorm is ready to support your business.

Lindorm is a cloud-native database developed by Alibaba Cloud for the AI era. It supports data models such as vector, wide table, search, column store, and time series. Lindorm provides one-stop data storage and processing capabilities for enterprises.

