Disclaimer: This is a translated work of Qinxia's 漫谈分布式系统. All rights reserved to the original author.

As we discussed in the first article of this series, distributed systems achieve horizontal scale-out by splitting data storage and computation across multiple nodes.

In theory, the power of scale-out is unlimited: the more nodes we add, the more storage and computing power we get.

From this perspective, distributed systems have the potential to become decentralized systems. However, because of architectural design choices, most distributed systems, at least in the big data field, are not decentralized. Instead, they have centers.

Specifically, many distributed systems adopt the master-slave architecture for different reasons.

Let's take HDFS and YARN, the systems mentioned most often in previous articles, as examples to see the problems this design causes.

HDFS uses the master-slave architecture to maintain metadata in a unified manner.

Let's review the process of HDFS reading and writing data (as described in the third article in this series).

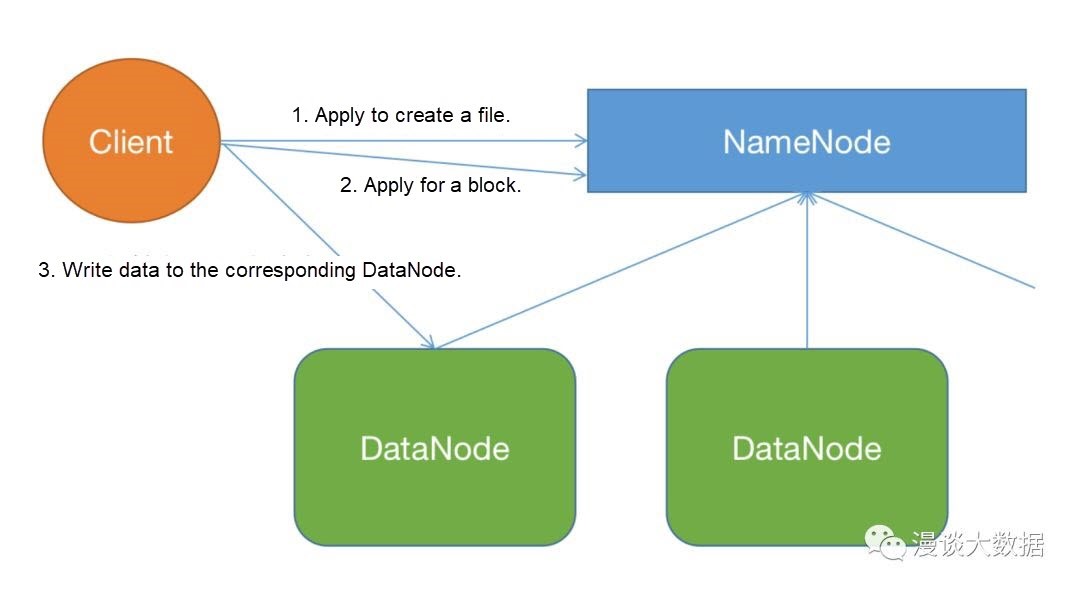

The following is a simplified flowchart of writing data:

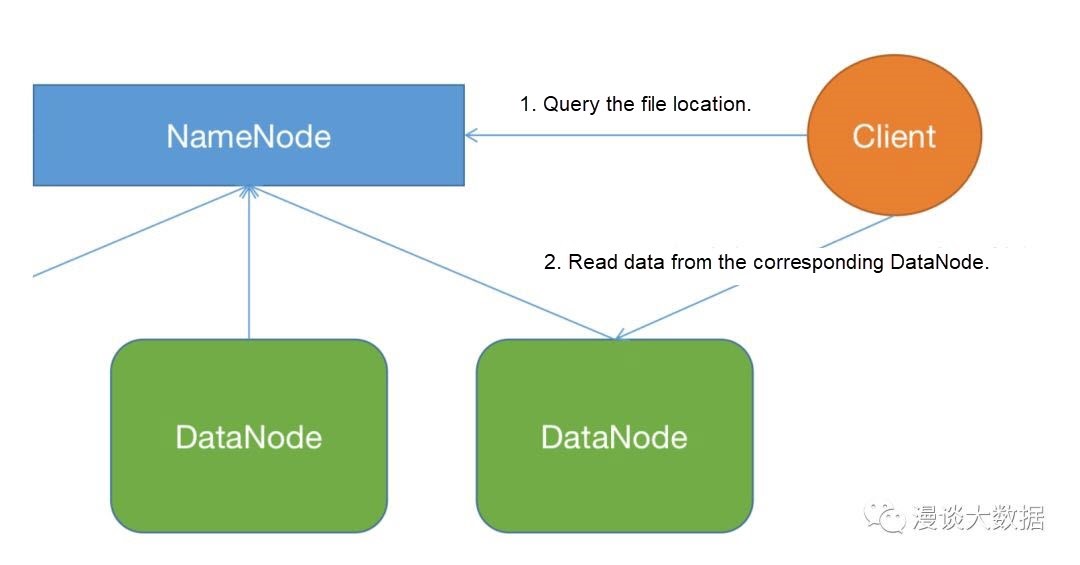

The following is a simplified flowchart of reading data:

Both diagrams are schematic and omit details (such as pipelined writes and packet splitting), but they are enough to illustrate the problem.

As shown in these two figures, whether we read or write data, we must first go to the NameNode (NN) to access metadata.

From the previous articles on data consistency, the benefit of unified metadata management is clear: it guarantees data consistency.

This matters. When data is split into blocks and scattered across many machines, inconsistent metadata means the blocks can no longer be reassembled into usable files, even though the data itself is still there.

However, metadata is data too, and it consumes resources. The NN is a single point (HA only adds a standby instance, not extra capacity), so its resources are limited, and continual scale-out will hit a bottleneck sooner or later.

There are two main components to the memory overhead of NN:

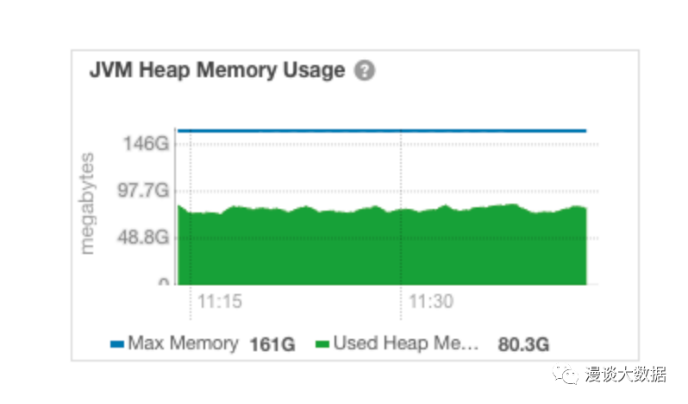

Each file or block object takes roughly 150 bytes of memory, so we can calculate the actual overhead of the first part and reserve some headroom for the second part. The commonly recommended setting is 1 GB of memory per 1 million blocks, so 100 million files or block objects need about 100 GB of memory.
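The arithmetic above can be sketched in a few lines of Python. This is only a back-of-the-envelope estimate: the 150-byte figure and the "1 GB per million" rule of thumb come from the text, while the function names are hypothetical.

```python
# Back-of-the-envelope NameNode heap sizing (illustrative only).
BYTES_PER_OBJECT = 150            # approx. heap cost of one file or block object
OBJECTS_PER_GB_RULE = 1_000_000   # rule of thumb: 1 GB of heap per million objects


def raw_object_bytes(num_objects: int) -> int:
    """Heap used by the namespace objects themselves (the first part)."""
    return num_objects * BYTES_PER_OBJECT


def recommended_heap_gb(num_objects: int) -> float:
    """Recommended heap in GB, which includes headroom for the second part."""
    return num_objects / OBJECTS_PER_GB_RULE


if __name__ == "__main__":
    for n in (100_000_000, 150_000_000):
        raw_gb = raw_object_bytes(n) / 10**9
        print(f"{n:,} objects: ~{raw_gb:.1f} GB raw, ~{recommended_heap_gb(n):.0f} GB recommended")
```

For 100 million objects, the objects themselves take only about 15 GB; the rest of the recommended 100 GB is headroom.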

The following figure is a screenshot of NN memory usage that I captured on a production cluster some time ago. At that point, the total number of files plus block objects was about 150 million.

As the amount of data keeps growing, the NN's memory requirement will eventually exceed the server's physical memory. The NN then becomes the performance bottleneck of the cluster, or can even bring down the whole cluster.

Hadoop also uses the master-slave architecture for computing, so that computing resources and tasks are scheduled uniformly.

Scheduling computing resources aims to improve overall utilization, and scheduling tasks aims to organize the task execution process.

Unlike the HDFS NN, the scheduler does not have to store large amounts of data, so the pressure on resource and task scheduling shows up mainly in processing capacity. Externally, it appears as processing latency.

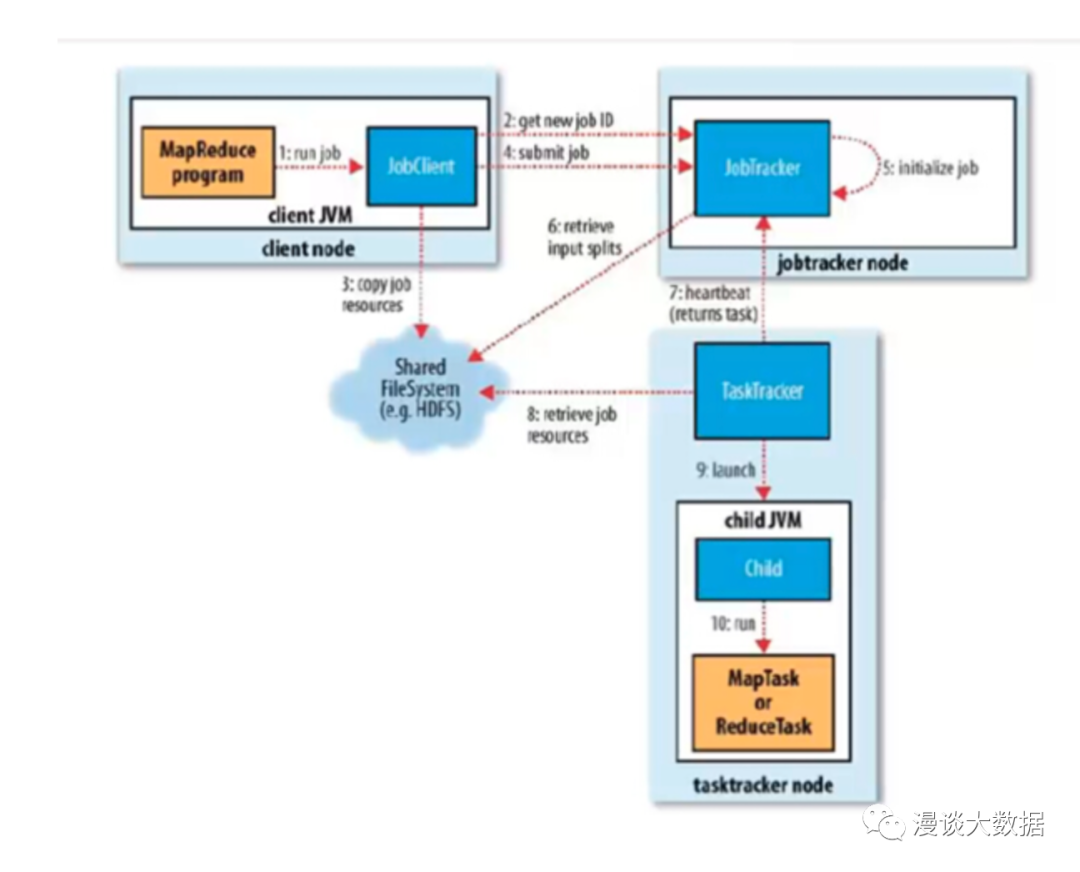

The following is the architecture diagram of MRv1, Hadoop's first-generation computing resource scheduling framework.

It shows that the JobTracker is responsible for both resource scheduling and task scheduling.

As the cluster grows, there are more computing resources to schedule, and as data volume and the number of business programs grow, there are more tasks to schedule. Given the limited resources of a single server, the JobTracker eventually becomes the bottleneck of the cluster.

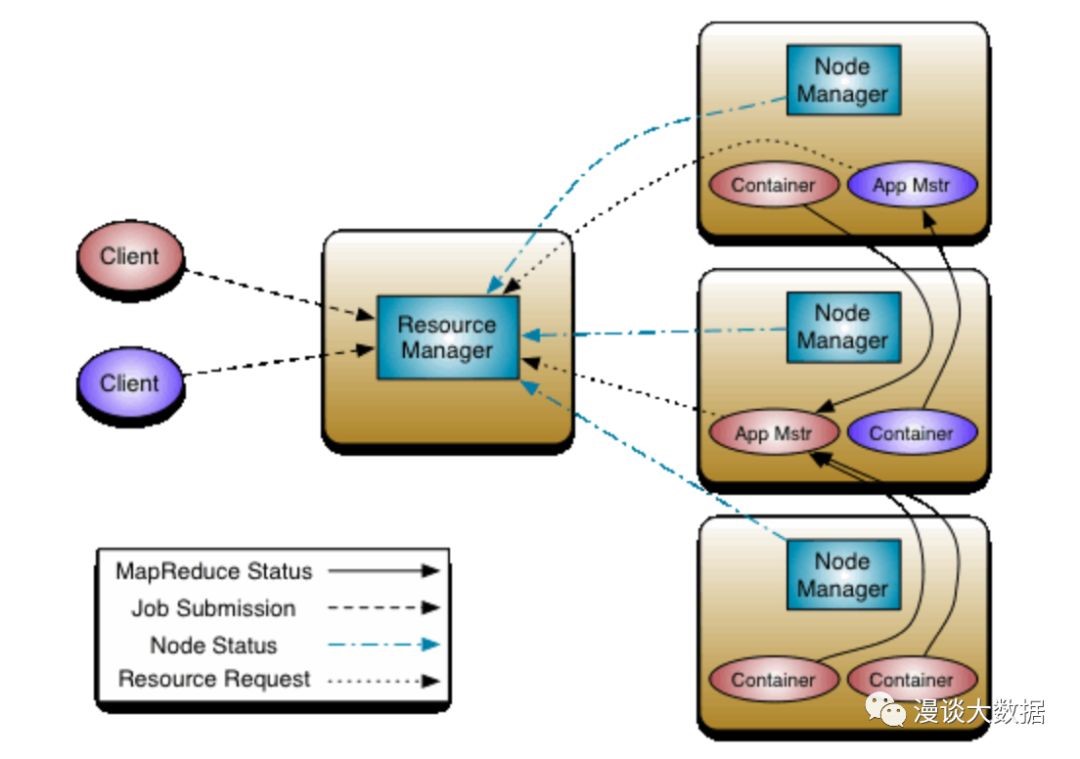

The cost of task scheduling grows much faster than that of resource scheduling; after all, adding a new program is much cheaper and faster than adding a new node. So the following MRv2 (YARN) architecture was introduced, and it is now the mainstream architecture at most companies.

The responsibilities of the JobTracker are divided into two parts: resource scheduling stays with the ResourceManager (RM), and task scheduling moves to the ApplicationMaster (AM). Each application gets its own AM, which accompanies it for its whole lifetime, so task scheduling is spread across many AMs and no longer hits a central bottleneck.

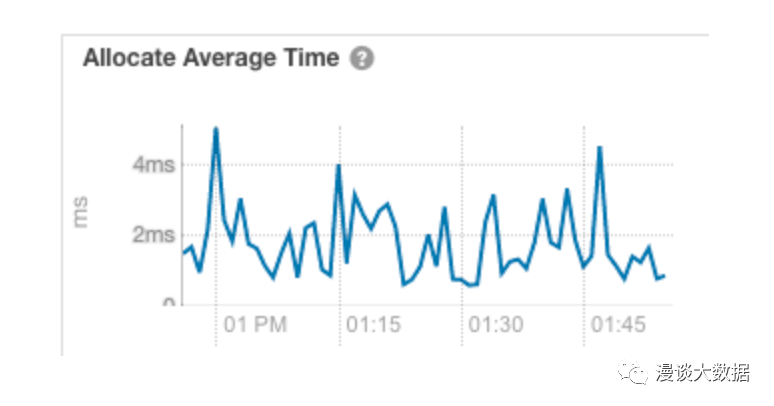

However, this is not enough: resource scheduling can still become a performance bottleneck.

The preceding figure is taken from a production system. As it shows, the average resource scheduling time is about 2 ms. However, as the number of tasks grows, this number will keep increasing until it drags down the entire cluster.
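To see why a couple of milliseconds matter, here is a rough, hypothetical model. It assumes the RM makes allocation decisions strictly one at a time, which is a simplification of the real scheduler; only the 2 ms figure comes from the text, and the request rate is made up for illustration.

```python
def max_decisions_per_second(avg_schedule_ms: float) -> float:
    """Upper bound on scheduling decisions per second if they run strictly one at a time."""
    return 1000.0 / avg_schedule_ms


def backlog_after(seconds: float, requests_per_second: float, avg_schedule_ms: float) -> float:
    """Requests still waiting after `seconds` when demand exceeds serial capacity."""
    capacity = max_decisions_per_second(avg_schedule_ms)
    return max(0.0, (requests_per_second - capacity) * seconds)


if __name__ == "__main__":
    print(max_decisions_per_second(2.0))  # 500.0 decisions/s at 2 ms each
    print(backlog_after(60, 600, 2.0))    # hypothetical 600 req/s: 6000.0 waiting after a minute
    print(max_decisions_per_second(4.0))  # 250.0: capacity halves if scheduling slows to 4 ms
```

Under this assumption, as the per-decision time creeps up, capacity falls and any excess demand piles up as a backlog, which is how scheduling latency ends up dragging down the whole cluster.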

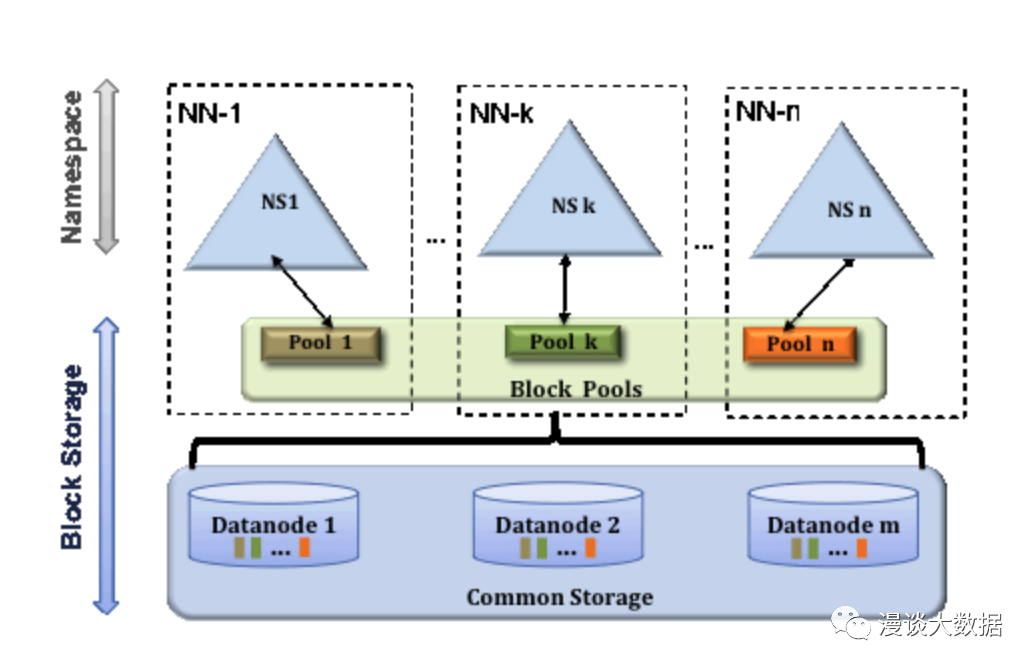

Given the previous articles on partitioning, the natural solution to the centralization problem of the HDFS NN is splitting.

Run more NNs, each responsible for only part of the metadata, so that NNs can scale out continuously.

How can we split it?

HDFS is a file system, and the file system is organized in the form of a directory tree. Therefore, it is natural to think of splitting according to directories.

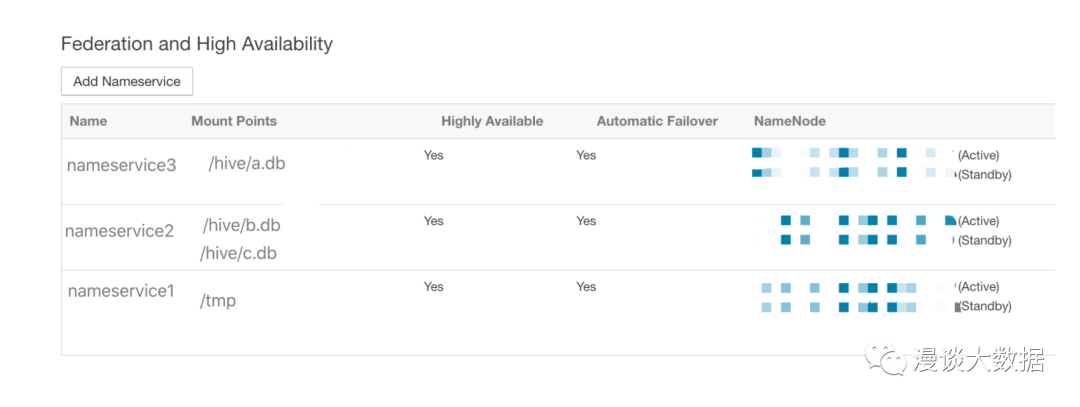

Following this idea, the community proposed HDFS Federation. After the directory tree is split, each part is assigned to a different Nameservice as a mount point (as in Unix systems), and that Nameservice serves it.

Each DataNode still reports to all NameNodes but keeps a separate block pool for each Nameservice. Physically, the storage resources are still shared by all NNs; the split is mainly logical.

For example, in the following figure, the namespace is split into three Nameservices (NSs), each mounting different directories.

This completes the internal split: each NS serves its own directories independently and completely, without interacting with the others or even knowing they exist.
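The toy model below, a hypothetical sketch rather than actual DataNode code, illustrates the shared-storage, separate-pool idea: one DataNode has a single pool of physical capacity used by every Nameservice, but keeps blocks in a separate logical block pool per Nameservice and reports each pool only to its owner. The pool IDs and sizes are made up.

```python
from collections import defaultdict


class ToyDataNode:
    """Hypothetical DataNode model: shared physical capacity, one block pool per Nameservice."""

    def __init__(self, capacity_bytes: int) -> None:
        self.capacity_bytes = capacity_bytes  # physical disk space shared by all pools
        self.used_bytes = 0
        # block pool ID -> {block ID: block size}; block IDs only need to be unique within a pool
        self.block_pools: defaultdict[str, dict[int, int]] = defaultdict(dict)

    def store_block(self, pool_id: str, block_id: int, size: int) -> None:
        pool = self.block_pools[pool_id]
        if block_id in pool:
            raise ValueError(f"block {block_id} already exists in {pool_id}")
        if self.used_bytes + size > self.capacity_bytes:
            raise OSError("no space left on this DataNode")
        pool[block_id] = size
        self.used_bytes += size

    def block_report(self, pool_id: str) -> list[int]:
        """Block IDs reported only to the NameNode that owns `pool_id`."""
        return sorted(self.block_pools.get(pool_id, {}))
```

Two Nameservices can both use block ID 1 without clashing, because the IDs live in different pools, yet every block consumes the same shared disk capacity.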

What remains is to present a unified view to the outside.

HDFS is a relatively complex system. To minimize changes and avoid affecting stability, the community proposed a solution that builds the unified view on the client side, called ViewFS Federation.

```xml
<!-- Client-side core-site.xml: ViewFS mount table for the cluster "clusterDemo" -->
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>viewfs://clusterDemo</value>
  </property>
  <property>
    <name>fs.viewfs.mounttable.clusterDemo.link./tmp</name>
    <value>hdfs://nameservice1/tmp</value>
  </property>
  <property>
    <name>fs.viewfs.mounttable.clusterDemo.link./hive/b.db</name>
    <value>hdfs://nameservice2/hive/b.db</value>
  </property>
  <property>
    <name>fs.viewfs.mounttable.clusterDemo.link./hive/c.db</name>
    <value>hdfs://nameservice2/hive/c.db</value>
  </property>
  <property>
    <name>fs.viewfs.mounttable.clusterDemo.link./hive/a.db</name>
    <value>hdfs://nameservice3/hive/a.db</value>
  </property>
</configuration>
```

Configuring the client in this format is all it takes: file operations on each directory are automatically forwarded to the corresponding Nameservice.
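Conceptually, the client resolves each path against this mount table. The sketch below mimics that with a longest-prefix match on whole path components; it is a simplified model of the idea, not ViewFS's actual code, and `resolve` is a hypothetical helper. The mount entries are copied from the configuration above.

```python
MOUNT_TABLE = {
    "/tmp": "hdfs://nameservice1/tmp",
    "/hive/b.db": "hdfs://nameservice2/hive/b.db",
    "/hive/c.db": "hdfs://nameservice2/hive/c.db",
    "/hive/a.db": "hdfs://nameservice3/hive/a.db",
}


def resolve(path: str, table: dict[str, str] = MOUNT_TABLE) -> str:
    """Map a viewfs path to its target URI using the longest matching mount point."""
    parts = path.rstrip("/").split("/")
    # Try the longest prefix first; matching whole components means "/hive/a.dbx"
    # does not accidentally match the "/hive/a.db" link.
    for i in range(len(parts), 0, -1):
        prefix = "/".join(parts[:i]) or "/"
        if prefix in table:
            rest = "/".join(parts[i:])
            return table[prefix] + ("/" + rest if rest else "")
    raise FileNotFoundError(f"no mount point for {path}")
```

For example, `resolve("/hive/a.db/t1/part-0")` yields `hdfs://nameservice3/hive/a.db/t1/part-0`. Because this table lives in every client's configuration, changing it means updating every client.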

Although ViewFS Federation requires only small changes, it has the following disadvantages:

These drawbacks are to be expected, because ViewFS splits on the client side. So it's natural to think of splitting on the server side instead.

This approach is called Router-Based Federation (RBF).

We set up a proxy layer in front of the Nameservices that receives client requests and forwards them to the corresponding Nameservice. The Router plays this proxy role.

Mount table configuration is kept centrally in the State Store, which currently has two implementations: one file-based and one ZooKeeper-based.

Because its state lives in the State Store, the Router itself is completely stateless and can be deployed behind a load balancer.

Besides serving client requests, the Router monitors the liveness, load, and other information of the NNs and reports it to the State Store as heartbeats.

The community also recommends having multiple Routers monitor each NN, so that HA and Nameservice changes are synchronized to the State Store promptly (another data consistency scenario). Multiple reporters can produce conflicting data, but there is no need to worry: the quorum approach discussed in the previous article resolves it.
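As a rough illustration of the quorum idea, the sketch below takes the reports several Routers send about the same Nameservice (for example, which NN each one currently sees as active) and accepts a value only if a strict majority agree. This is a hypothetical simplification; how Hadoop's State Store actually reconciles these reports may differ, and the NN names are made up.

```python
from collections import Counter
from typing import Optional


def quorum_state(reports: list[str]) -> Optional[str]:
    """Return the value reported by a strict majority of Routers, or None if there is none."""
    if not reports:
        return None
    value, count = Counter(reports).most_common(1)[0]
    return value if count > len(reports) // 2 else None
```

With three Routers reporting `["nn1", "nn1", "nn2"]`, the answer is `"nn1"`; with a 1-to-1 split there is no majority, so the caller should keep the last agreed state rather than trust either report.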

With this proxy layer, the client and the server are completely decoupled. We can make changes freely on the server side (such as adding subclusters, adjusting mount tables, and moving data between subclusters to rebalance them), and these changes remain transparent to the client.
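A minimal sketch of why a stateless Router makes such changes transparent: every Router instance reads the same shared State Store, so updating the mount table there (for example, after moving a directory to another subcluster) changes routing for all Routers at once, while clients keep using the same path. The `StateStore` and `Router` classes are hypothetical stand-ins, and they resolve a path only to a Nameservice name.

```python
class StateStore:
    """Hypothetical shared store; in practice it is file-based or ZooKeeper-based."""

    def __init__(self) -> None:
        self.mount_table: dict[str, str] = {}  # mount point -> Nameservice


class Router:
    """Stateless proxy: keeps no routing data of its own and consults the store on every call."""

    def __init__(self, store: StateStore) -> None:
        self.store = store

    def route(self, path: str) -> str:
        """Return the Nameservice owning `path`, using the longest matching mount point."""
        matches = [m for m in self.store.mount_table
                   if path == m or path.startswith(m + "/")]
        if not matches:
            raise FileNotFoundError(path)
        return self.store.mount_table[max(matches, key=len)]


if __name__ == "__main__":
    store = StateStore()
    store.mount_table.update({"/tmp": "nameservice1", "/hive/a.db": "nameservice3"})
    router_1, router_2 = Router(store), Router(store)
    print(router_1.route("/hive/a.db/t1"))  # nameservice3
    # Rebalance: after the data is copied, an admin repoints the mount entry.
    store.mount_table["/hive/a.db"] = "nameservice1"
    print(router_2.route("/hive/a.db/t1"))  # nameservice1, same client path
```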

Because RBF was released later (in Hadoop 2.9 and 3.0) and needed time to stabilize, most companies in the industry adopted the ViewFS solution. However, more and more companies have started deploying RBF, and some couldn't wait and implemented their own private versions similar to RBF.

HDFS and YARN belong to Hadoop. YARN only needs to copy HDFS to solve the problem of centralization.

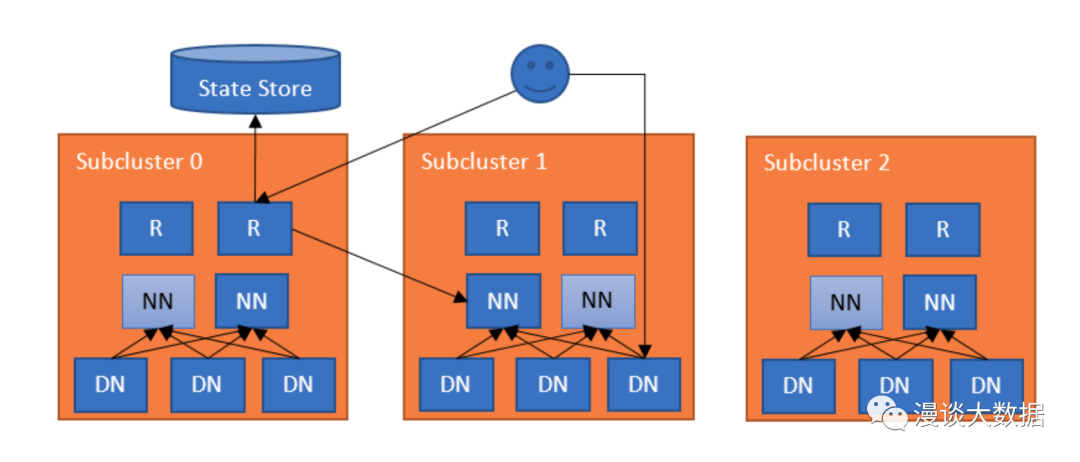

It is the YARN Federation program.

The architecture is a copy of HDFS RBF. A client request is sent to Router. After Router queries the sub-cluster status from the State Store, it forwards the request to the corresponding cluster.
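For the forwarding step, the Router needs some policy to pick a sub-cluster from the status held in the State Store. The sketch below uses a deliberately simple, hypothetical policy (pick the active sub-cluster with the most free memory); YARN Federation has its own configurable policies, which this does not reproduce, and the `SubClusterInfo` fields are assumptions.

```python
from dataclasses import dataclass


@dataclass
class SubClusterInfo:
    """Hypothetical heartbeat record that a sub-cluster's RM publishes to the State Store."""
    sub_cluster_id: str
    active: bool
    available_mb: int


def pick_home_sub_cluster(infos: list[SubClusterInfo]) -> str:
    """Choose the active sub-cluster with the most free memory (a toy policy)."""
    candidates = [info for info in infos if info.active]
    if not candidates:
        raise RuntimeError("no active sub-cluster")
    return max(candidates, key=lambda info: info.available_mb).sub_cluster_id
```

Because the Router reads this status from the State Store on each request, it stays stateless, just like the HDFS Router.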

However, there are still some differences in implementation.

In HDFS, all Nameservices share all DataNodes, and the split is based on logical directories.

What about YARN?

Computing resources are allocated dynamically: they are handed out when needed and reclaimed when no longer used, so there is no logical split point, and the split can only be physical. Under YARN Federation, the RMs do not share all NodeManagers (NMs). Instead, the NMs are divided into groups (sub-clusters), each managed by its own RM.

However, this introduces a problem. Originally, all NMs formed a single pool, and resources could be scheduled as a whole. Now they are split, and if each RM schedules only its own group without sharing resources, overall utilization drops.

It is necessary to support cross-cluster resource scheduling.

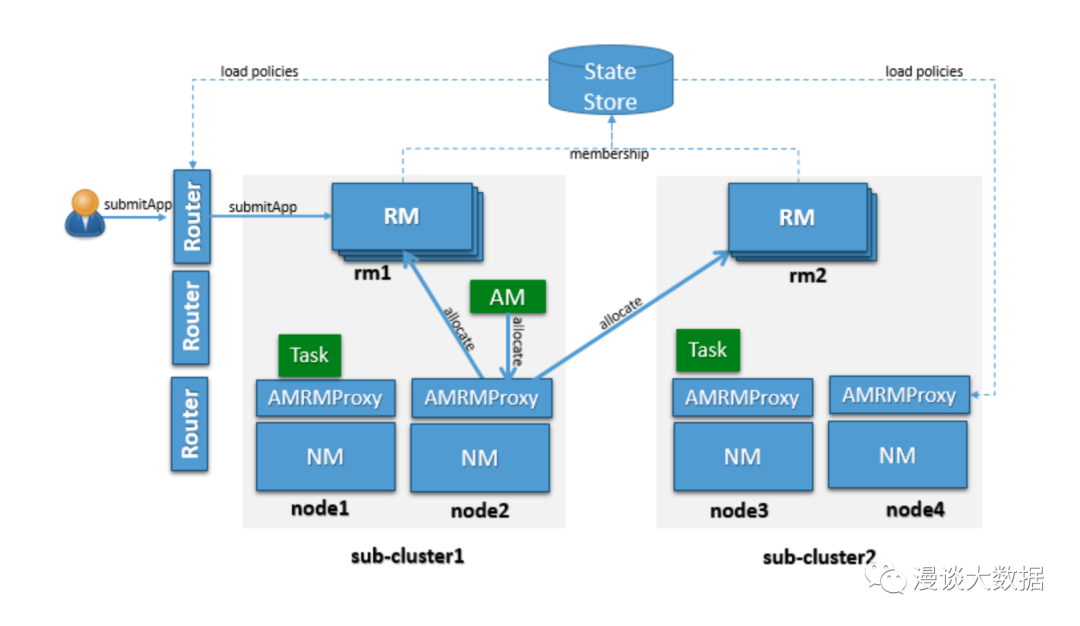

Therefore, a new role, AMRMProxy, is introduced and deployed on every NM machine. As the name implies, AMRMProxy sits between the ApplicationMaster and the RM and acts as the RM's proxy for the ApplicationMaster.
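To make cross-cluster scheduling concrete, here is a hypothetical, much simplified version of the kind of splitting step AMRMProxy performs: it divides one container request among sub-clusters in proportion to integer weights (say, each sub-cluster's share of free capacity). The real AMRMProxy delegates this decision to pluggable federation policies that can also take locality into account; the function and weights below are illustrative.

```python
def split_request(num_containers: int, weights: dict[str, int]) -> dict[str, int]:
    """Split a container request across sub-clusters in proportion to integer weights.

    Uses the largest-remainder method, so the parts always sum to num_containers.
    """
    total = sum(weights.values())
    if total <= 0 or any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative and sum to a positive number")
    alloc: dict[str, int] = {}
    remainder: dict[str, int] = {}
    for sub_cluster, w in weights.items():
        alloc[sub_cluster], remainder[sub_cluster] = divmod(num_containers * w, total)
    leftover = num_containers - sum(alloc.values())
    # Hand the leftover containers to the sub-clusters with the largest remainders.
    for sub_cluster in sorted(remainder, key=remainder.get, reverse=True)[:leftover]:
        alloc[sub_cluster] += 1
    return alloc
```

For example, `split_request(10, {"home": 5, "sc2": 3, "sc3": 2})` sends 5, 3, and 2 containers to the three sub-clusters. Integer weights keep the arithmetic exact, so rounding never loses or duplicates a container.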

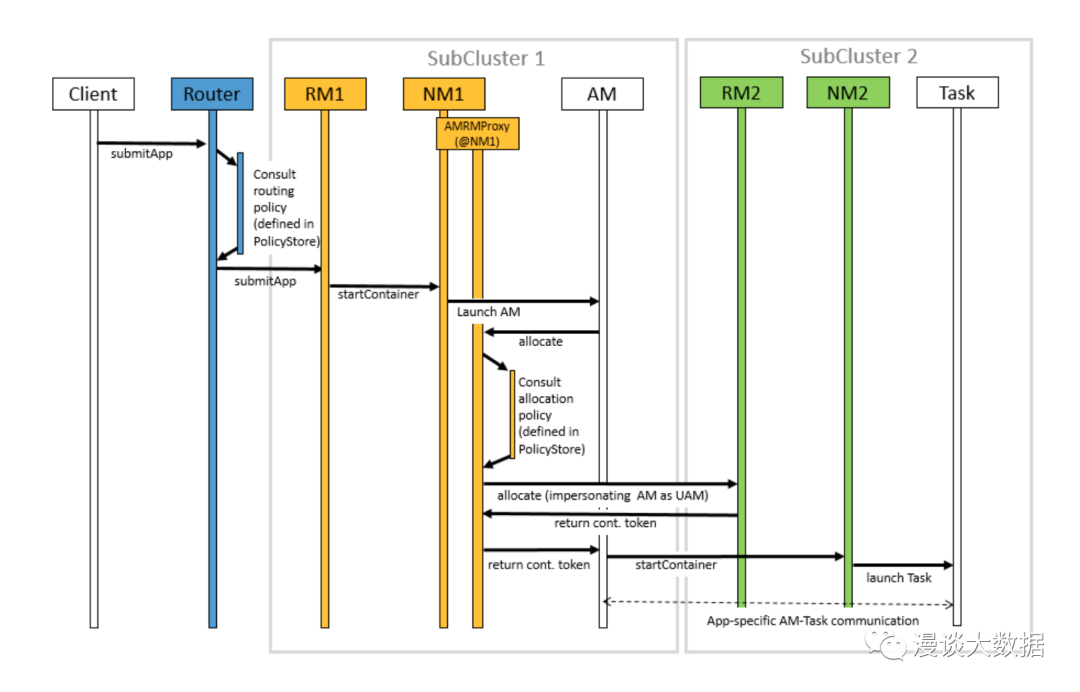

The preceding figure shows the main process of task submission and execution under the YARN Federation architecture. There are two key points:

As mentioned earlier, distributed systems such as HDFS and YARN have centers because they adopt the master-slave architecture.

Approaches like Federation can alleviate this problem to a large extent.

Is there another way? Let's take a step back: if the problem never existed in the first place, there would be nothing to solve.

Let's abandon the master-slave architecture!

Let's think about it carefully. In essence, the master has the following two main functions:

Do these two functions have to be implemented with a centralized architecture? Not necessarily; it depends.

Dynamo, the storage system discussed in the twelfth article of this series, is a good example. Dynamo locates data on the client side through consistent hashing, so there is no need to store metadata centrally.
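Here is a minimal consistent-hashing sketch showing how a client can locate data without asking a central metadata server. It follows the general technique (a hash ring with virtual nodes) rather than Dynamo's actual code; the MD5-based hash, the count of 100 virtual nodes, and the node names are illustrative assumptions.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    """Map a string to a point on the ring (first 8 bytes of its MD5 digest)."""
    return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")


class HashRing:
    """Consistent-hash ring with virtual nodes; any client can compute placement locally."""

    def __init__(self, nodes: list[str], vnodes: int = 100) -> None:
        if not nodes:
            raise ValueError("need at least one node")
        # Each physical node owns several points on the ring to even out the load.
        self._ring = sorted((_hash(f"{node}#{i}"), node) for node in nodes for i in range(vnodes))
        self._points = [point for point, _ in self._ring]

    def node_for(self, key: str) -> str:
        """Walk clockwise from the key's hash to the first virtual node (wrapping around)."""
        idx = bisect.bisect(self._points, _hash(key)) % len(self._ring)
        return self._ring[idx][1]
```

Because every client builds the same ring from the same node list, they all agree on where a key lives. Adding a node only moves the keys that now fall on the new node's ring positions; all other keys stay where they were.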

Not every system can be decentralized the way Dynamo is. For example, the scheduling of computing resources is hard to decentralize. Still, Dynamo offers another way of thinking that is worth learning from.

This article showed that distributed systems are not always completely distributed. Because of the master-slave architecture, once the master runs into performance problems, it can drag down or even bring down the entire cluster.

We also discussed some typical solutions to the centralization problem. With the performance problems of masters addressed, what about slaves? Can they have performance problems too?

In the next article, let's look at whether slaves have performance problems, or at least whether their performance has room for improvement.

This is a carefully conceived series of 20-30 articles. I hope to give everyone a core grasp of the distributed system in a storytelling way. Stay tuned for the next one!
