By Li Xiang, Senior Technical Expert at Alibaba and TOC Member of CNCF

Kubernetes is an industrial-grade container orchestration platform. The name Kubernetes comes from Greek and means "helmsman" or "pilot." K8s (written as "ks" in some articles) is an abbreviation formed by replacing the eight letters "ubernete" with "8." If you are wondering why a name meaning "helmsman" was chosen, take a look at the following figure.

This is a ship carrying a pile of containers, sailing across the sea to deliver them to their destinations. In English, the word "container" also refers to the boxes used to pack goods for shipping. Taking its cue from this meaning, Kubernetes aims to be the ship that carries containers; in other words, it helps manage them. That is why the name "Kubernetes" was chosen for this project. More specifically, Kubernetes is an automated container orchestration platform responsible for deploying, elastically scaling, and managing container-based applications.

Service Discovery and Load Balancing

The following sections describe the key capabilities of Kubernetes in detail using three examples.

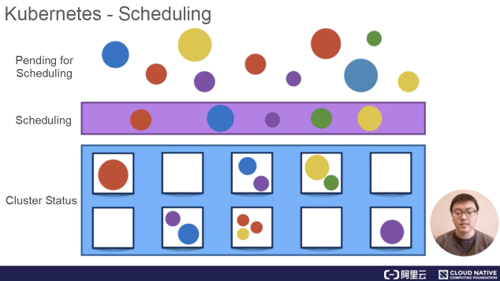

In Kubernetes, a container submitted by a user is deployed onto a node in a cluster managed by Kubernetes. The component that implements this capability is the Kubernetes scheduler. It examines the resource requirements of the container being scheduled: for example, it considers the required CPU and memory and then places the container onto a relatively idle node in the cluster. In this example, the scheduler may place the red container onto the second node, which is idle, to complete the scheduling task.
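The scheduling step above can be modeled as two phases: filter out nodes that cannot fit the container, then score the remaining nodes and pick the best one. The sketch below is a simplified illustration, not the kube-scheduler's code. It assumes that only CPU and memory requests matter, and the `Node` class, the `schedule` function, and the "most idle node wins" score are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    cpu_capacity: float          # CPU cores on the node
    mem_capacity: float          # memory on the node, in GB
    cpu_requested: float = 0.0   # cores already promised to placed containers
    mem_requested: float = 0.0   # GB already promised to placed containers

    def fits(self, cpu: float, mem: float) -> bool:
        # Filter phase: the node needs enough unrequested CPU and memory.
        return (self.cpu_requested + cpu <= self.cpu_capacity
                and self.mem_requested + mem <= self.mem_capacity)

    def idle_score(self, cpu: float, mem: float) -> float:
        # Score phase (hypothetical): average share of CPU and memory that
        # would remain free after placement; higher means more idle.
        cpu_free = (self.cpu_capacity - self.cpu_requested - cpu) / self.cpu_capacity
        mem_free = (self.mem_capacity - self.mem_requested - mem) / self.mem_capacity
        return (cpu_free + mem_free) / 2


def schedule(nodes: List[Node], cpu: float, mem: float) -> Optional[str]:
    """Place a container needing `cpu` cores and `mem` GB; return the node name."""
    candidates = [n for n in nodes if n.fits(cpu, mem)]
    if not candidates:
        return None  # nothing fits; a real scheduler would leave the pod pending
    best = max(candidates, key=lambda n: n.idle_score(cpu, mem))
    best.cpu_requested += cpu
    best.mem_requested += mem
    return best.name
```

With a busy first node and a mostly idle second node, `schedule` picks the second node, mirroring the red container in the figure.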

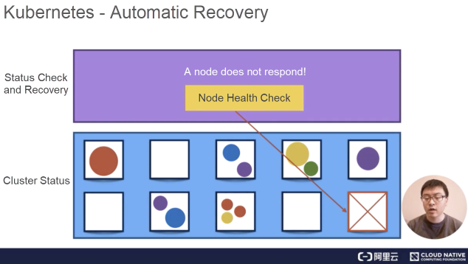

Kubernetes provides node health checks, which allow the system to monitor all hosts in the cluster. When a host or software failure occurs, Kubernetes detects the fault and migrates the containers running on the failed node to a healthy host, recovering them automatically.
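A toy model of this detect-and-recover loop follows. It assumes failures are detected through missing heartbeats and, for simplicity, "moves" pod names to the healthy node with the fewest pods. In a real cluster, pods on a failed node are evicted and their controllers create replacements elsewhere. All names and the grace period are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical value chosen for illustration: seconds a node may go
# without a heartbeat before it is treated as failed.
GRACE_PERIOD = 40.0


@dataclass
class NodeState:
    name: str
    last_heartbeat: float                          # time of the last heartbeat
    pods: List[str] = field(default_factory=list)


def is_healthy(node: NodeState, now: float, grace: float = GRACE_PERIOD) -> bool:
    return now - node.last_heartbeat <= grace


def recover(nodes: List[NodeState], now: float) -> Dict[str, str]:
    """Reassign pods from failed nodes to the least-loaded healthy node.

    Returns a mapping of pod name -> new node name.
    """
    healthy = [n for n in nodes if is_healthy(n, now)]
    moved: Dict[str, str] = {}
    if not healthy:
        return moved  # no healthy node to recover onto
    for node in nodes:
        if is_healthy(node, now):
            continue
        for pod in node.pods:
            target = min(healthy, key=lambda n: len(n.pods))
            target.pods.append(pod)
            moved[pod] = target.name
        node.pods.clear()
    return moved
```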

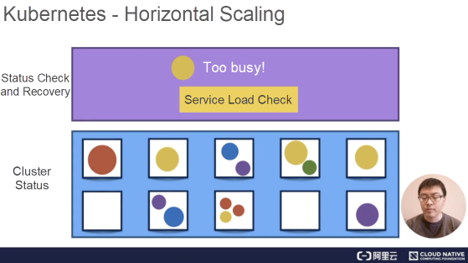

Kubernetes can check service loads through monitoring. If a service's CPU usage is too high or its responses are too slow, Kubernetes scales out that service accordingly. In the following example, the first yellow node is too busy, so Kubernetes splits its load into three parts: through load balancing, it evenly distributes the original load of the first yellow node across three yellow nodes, including the first one, to improve response speed.
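The Horizontal Pod Autoscaler documentation describes the core scaling rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with no change when the ratio stays within a tolerance (0.1 by default). A minimal Python rendering of that formula follows; the numbers in the example are illustrative and not taken from the figure, and the function name is hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """HPA-style replica count (simplified: no min/max bounds or stabilization)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: leave replicas unchanged
    return math.ceil(current_replicas * ratio)


# One busy instance at 240% CPU against an 80% target scales out to three.
print(desired_replicas(1, 240, 80))
```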

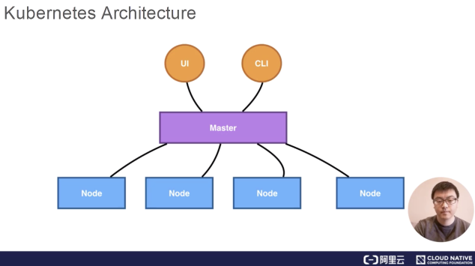

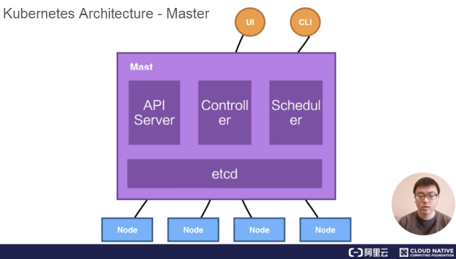

The Kubernetes architecture is a typical two-layer server-client architecture. As the central control node, the master connects to multiple nodes. All UI clients and user-side components are connected to the master to send desired states or to-be-executed commands to the master. Then, the master sends these commands or states to corresponding nodes for final execution.

In Kubernetes, the master runs four key components: the API server, the controller, the scheduler, and etcd. The following figure shows the detailed Kubernetes architecture.

The API server can be scaled horizontally by deploying multiple instances. The controller, by contrast, is deployed with hot standby: several instances may run, but only one is active at a time. The scheduler works the same way, with one active instance and the others on hot standby.
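Hot standby of this kind is usually achieved with leader election: every instance competes for a time-limited lock, and only the holder does any work. Kubernetes components do this through a lease object stored via the API server; the in-memory `Lease` class below is a hypothetical model of the idea, with an assumed 15-second lease duration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lease:
    holder: Optional[str] = None
    renew_time: float = 0.0
    duration: float = 15.0  # assumed lease length in seconds

    def try_acquire(self, candidate: str, now: float) -> bool:
        """Acquire or renew the lease; True means `candidate` is the active leader."""
        expired = self.holder is None or now - self.renew_time > self.duration
        if self.holder == candidate or expired:
            self.holder = candidate
            self.renew_time = now
            return True
        return False  # another instance holds a live lease: stay on standby
```

A standby instance takes over only after the active one has stopped renewing for longer than `duration`.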

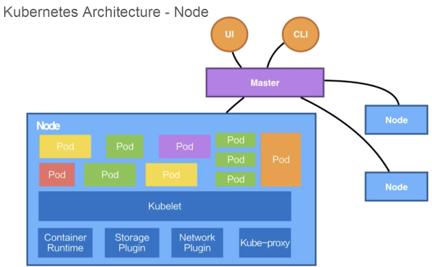

In Kubernetes, service loads actually run on nodes, with each piece of service load running as a pod. (The concept of a pod is introduced later.) A pod runs one or more containers. The component that actually runs these pods is the kubelet, the most critical component on the node. It receives the desired state of pods from the API server and passes it to the container runtime component shown in the following figure.

Creating the runtime environment on the OS for a container, and ultimately running the container or pod, requires managing storage and networking. Kubernetes does not perform storage or network operations directly; instead, it relies on storage plug-ins and network plug-ins to do so. Usually, users or cloud vendors develop these plug-ins to carry out the actual storage or network operations.

Kubernetes also provides a service network. (The concept of a service is introduced later.) The component that actually implements service networking is kube-proxy, which sets up the cluster network by leveraging iptables. So far, you have learned about the four components on a node. A node does not interact with users directly; users deliver messages to a node through the master. Every node in Kubernetes runs all four of these components.

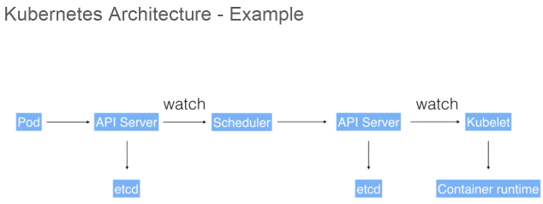

The following example demonstrates how these components interact with each other in the Kubernetes architecture.

A user may submit a pod deployment request to Kubernetes through the UI or CLI. The request first goes to the API server, which writes the request information into the etcd store. Next, the scheduler learns of this information through the watch (notification) mechanism of the API server: the information indicates that the pod needs to be scheduled.

The scheduler then makes a scheduling decision based on its in-memory view of the cluster state. After completing the scheduling, it reports to the API server: "OK! This pod should be scheduled to a certain node."

After receiving this report, the API server writes the scheduling result into etcd and then notifies the corresponding node to start the pod. After receiving this notification, the kubelet on that node communicates with the container runtime to actually start the container and configure its running environment. The kubelet also calls the storage plug-in to configure storage and the network plug-in to configure the network. This example shows how these components communicate and work together to complete the scheduling of a pod.
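The flow above is driven by watches rather than direct calls between components. The toy simulation below makes heavy simplifying assumptions (a dict instead of etcd, synchronous callbacks instead of HTTP watch streams, and a scheduler that always picks a single hypothetical node), but it shows how one write to the API server triggers the scheduler, whose write in turn triggers the kubelet. All class and function names are hypothetical.

```python
from typing import Callable, Dict, List

Pod = Dict[str, object]
Watcher = Callable[[Pod], None]


class ToyAPIServer:
    """In-memory stand-in for the API server; `store` plays the role of etcd."""

    def __init__(self) -> None:
        self.store: Dict[str, Pod] = {}
        self.watchers: List[Watcher] = []

    def watch(self, callback: Watcher) -> None:
        self.watchers.append(callback)

    def write(self, pod: Pod) -> None:
        self.store[str(pod["name"])] = pod   # persist first (the "etcd" write)
        for notify in list(self.watchers):   # then notify every watcher
            notify(pod)


def make_scheduler(api: ToyAPIServer, node: str) -> Watcher:
    def on_event(pod: Pod) -> None:
        if pod.get("nodeName") is None:           # pod has not been scheduled yet
            api.write({**pod, "nodeName": node})  # report the decision back
    return on_event


def make_kubelet(node: str, started: List[str]) -> Watcher:
    def on_event(pod: Pod) -> None:
        if pod.get("nodeName") == node and pod["name"] not in started:
            # A real kubelet would now call the container runtime and the
            # storage and network plug-ins.
            started.append(str(pod["name"]))
    return on_event


api = ToyAPIServer()
started: List[str] = []
api.watch(make_scheduler(api, "node-1"))
api.watch(make_kubelet("node-1", started))
api.write({"name": "nginx"})   # the user's request via UI or CLI
print(started, api.store["nginx"]["nodeName"])
```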

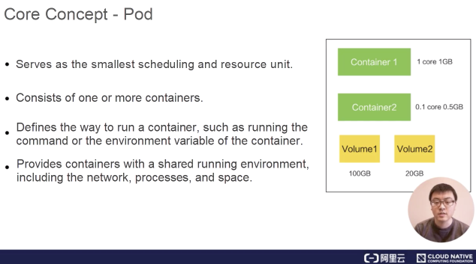

A pod is the smallest scheduling and resource unit in Kubernetes. A user creates a pod through the Pod API of Kubernetes so that Kubernetes schedules the pod. Specifically, the pod is placed and runs on a node managed by Kubernetes. In short, a pod is the abstraction of a group of containers, containing one or more containers.

As shown in the following figure, the pod contains two containers, and each container specifies the resources it requires, such as 1 GB of memory and 1 CPU core, or 0.5 GB of memory and 0.5 CPU core.

The pod can also include other resources it needs, such as storage resources called volumes, for example 100 GB or 20 GB of storage.
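Because all containers in a pod run on the same node, the scheduler has to find a node that can hold the pod's combined request. The simplified sketch below sums the per-container figures described above. It ignores init containers and pod overhead, which real scheduling also accounts for, and the container names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Container:
    name: str
    cpu: float     # requested CPU cores
    mem_gb: float  # requested memory in GB


def pod_request(containers: Iterable[Container]) -> Tuple[float, float]:
    """Total (CPU cores, memory GB) that one node must supply for the whole pod."""
    containers = list(containers)
    return (sum(c.cpu for c in containers), sum(c.mem_gb for c in containers))


# The two containers from the figure: 1 core / 1 GB and 0.5 core / 0.5 GB.
print(pod_request([Container("app", 1, 1), Container("sidecar", 0.5, 0.5)]))
```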

A pod also defines how its containers run, such as each container's command and environment variables. In addition, the pod provides a shared runtime environment for the containers within it: they share a network environment and can reach each other directly through localhost. Pods, in turn, are isolated from each other.

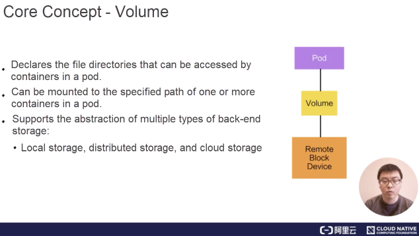

In Kubernetes, a volume is used to manage storage and declare the file directories that are accessed by containers in a pod. A volume can be mounted to the specified path of one or more containers in a pod.

The volume itself is an abstraction that supports many types of backend storage through storage plug-ins, including local storage, distributed storage such as Ceph and GlusterFS, and cloud storage such as disks from Alibaba Cloud, AWS, and Google Cloud.

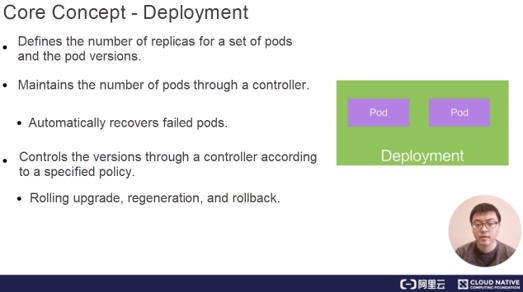

A deployment is an abstraction on top of pods that defines the number of replicas and the version of a set of pods. Deployments are generally what you use to manage applications, with pods as the smallest units that make them up. In Kubernetes, a controller maintains the number of pods in a deployment and automatically recovers failed pods. For example, suppose a deployment contains two pods. If one pod fails, the controller detects the failure and restores the pod count from one to two by creating a new pod. Controllers also let us implement release strategies, such as rolling upgrades, recreate upgrades, and version rollbacks.
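The controller behavior described above is a reconcile loop: compare the desired state with the actual state, then act to close the gap. The minimal sketch below works on pod names only and is hypothetical; a real Deployment manages ReplicaSets, which in turn manage pods.

```python
import itertools
from typing import Iterator, List


def reconcile(desired: int, running: List[str], new_names: Iterator[str]) -> List[str]:
    """One pass of a replica control loop: converge `running` toward `desired`."""
    pods = list(running)
    while len(pods) < desired:
        pods.append(next(new_names))  # create a replacement pod
    del pods[desired:]                # delete surplus pods, if any
    return pods


names = (f"nginx-{i}" for i in itertools.count(3))
# Two replicas are desired, but one pod has failed and disappeared.
print(reconcile(2, ["nginx-1"], names))
```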

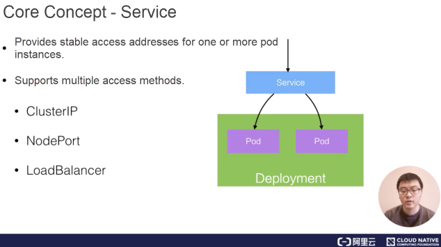

A service provides a stable IP address for one or more pod instances. In the preceding example, a deployment may include two or more identical pods. For an external user, accessing any of these pods gives the same result, so load balancing across them is desirable. To achieve this, the user wants to access a single static virtual IP (VIP) address instead of knowing the IP address of every pod. As mentioned earlier, a pod may terminate, in which case it may be replaced by a new pod with a different IP address.

If an external user were given the specific IP addresses of multiple pods, the user would have to keep updating them as pods fail and restart. The abstraction that avoids this in Kubernetes is called a service: it places access to all the pods behind a single stable IP address. Kubernetes supports multiple access modes for services, including ClusterIP, NodePort, and LoadBalancer, and kube-proxy implements this service networking on each node.
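The key property of a service is that its virtual IP stays fixed while the pods behind it change. The hypothetical `ToyService` class below models this with a round-robin picker. It is a stand-in for illustration only, since kube-proxy's actual backend selection depends on its mode (for example, iptables or IPVS), and the IP addresses are made up.

```python
import itertools
from typing import List


class ToyService:
    """A fixed virtual IP in front of a changing set of pod IPs (hypothetical model)."""

    def __init__(self, vip: str, endpoints: List[str]) -> None:
        self.vip = vip
        self.set_endpoints(endpoints)

    def set_endpoints(self, endpoints: List[str]) -> None:
        # Called whenever pods are replaced; clients keep using self.vip.
        self.endpoints = list(endpoints)
        self._next = itertools.cycle(self.endpoints)

    def route(self) -> str:
        """Pick the backend pod IP for the next request (round-robin)."""
        if not self.endpoints:
            raise RuntimeError(f"service {self.vip} has no ready endpoints")
        return next(self._next)


svc = ToyService("10.96.0.10", ["10.244.1.5", "10.244.2.7"])
print([svc.route() for _ in range(3)])
svc.set_endpoints(["10.244.1.5", "10.244.3.9"])  # one pod was replaced
print(svc.vip, svc.route())
```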

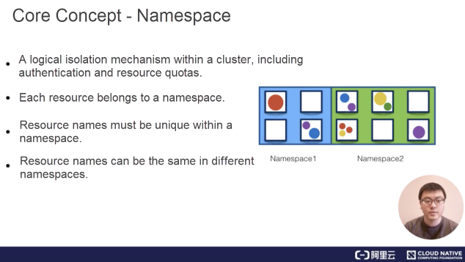

A namespace provides logical isolation within a cluster, covering authentication and resource management. Each resource in Kubernetes, such as a pod, deployment, or service, belongs to a namespace. Resource names of a given kind must be unique within a namespace, but the same name can be used in different namespaces. Here is a use case: Alibaba has many business units (BUs). To give each BU an isolated view of the cluster, with separate authentication and resource quotas, we use namespaces to provide each BU with its own isolation mechanism.
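The naming rule can be pictured as a store whose key includes the namespace. In the hypothetical sketch below, two BUs can each own a pod called nginx, but creating a duplicate inside one namespace fails. The key also includes the resource kind, because names only need to be unique per kind within a namespace.

```python
from typing import Dict, List, Tuple


class NamespacedStore:
    """Hypothetical object store keyed by (kind, namespace, name)."""

    def __init__(self) -> None:
        self._objects: Dict[Tuple[str, str, str], dict] = {}

    def create(self, kind: str, namespace: str, name: str, obj: dict) -> None:
        key = (kind, namespace, name)
        if key in self._objects:
            raise ValueError(f'{kind} "{name}" already exists in namespace "{namespace}"')
        self._objects[key] = obj

    def names(self, kind: str, namespace: str) -> List[str]:
        """List object names of one kind, as seen from inside one namespace."""
        return sorted(n for (k, ns, n) in self._objects if k == kind and ns == namespace)
```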

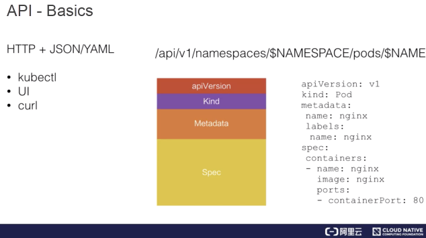

The following diagram describes the basics of the Kubernetes API. At a high level, the Kubernetes API is based on HTTP and JSON: users access the API over HTTP, and the content exchanged is in JSON format. In Kubernetes, the kubectl command-line tool, the Kubernetes UI, or sometimes curl communicates with Kubernetes directly over HTTP and JSON. In the following example, the HTTP access path of a pod consists of these parts: api, the API version (v1), the namespace, pods, and the pod name, that is, /api/v1/namespaces/<namespace>/pods/<pod-name>.

When we submit or get a pod, the content of the pod is expressed in JSON or YAML format. The preceding figure shows a YAML example, in which the description of the pod consists of several parts.

The first part is generally the API version, which is v1 in this example. The second part is the kind of resource being worked on, in this case a pod. The pod's name (nginx in this example) is written in the metadata, where we can also add labels. The concept of a label is described later. In the metadata, we sometimes also write annotations to describe the resource further from the user's perspective.

Another important part is the spec, which describes the desired state of the pod: for example, the containers to run in the pod, the image of the Nginx container, and the number of the exposed port.
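To connect the access path with the manifest, the sketch below builds the request path for a pod and expresses the same apiVersion, kind, metadata, and spec structure as a Python dictionary serialized to JSON, which is what travels over HTTP. The namespace, label, and image tag are assumptions for illustration, and actually sending the request (for example, with kubectl or curl) is left out.

```python
import json


def pod_path(namespace: str, name: str) -> str:
    """HTTP path of a pod in the core (v1) API group."""
    return f"/api/v1/namespaces/{namespace}/pods/{name}"


pod = {
    "apiVersion": "v1",                      # API version
    "kind": "Pod",                           # kind of resource
    "metadata": {
        "name": "nginx",
        "labels": {"app": "nginx"},          # hypothetical label
    },
    "spec": {                                # desired state
        "containers": [{
            "name": "nginx",
            "image": "nginx:1.7.9",          # hypothetical image tag
            "ports": [{"containerPort": 80}],
        }],
    },
}

print(pod_path("default", "nginx"))
print(json.dumps(pod, indent=2))
```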

When we retrieve this resource through the Kubernetes API, the spec is usually accompanied by a field named status, which indicates the current state of the resource. For example, a pod may be in the scheduling, running, or terminated state; the terminated state means that the pod has finished running.

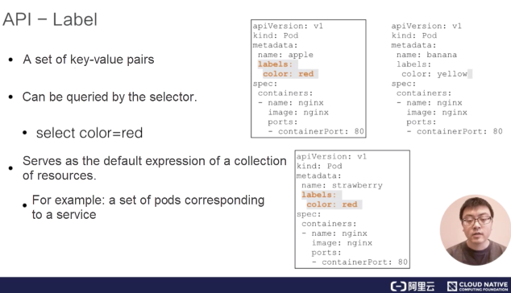

While describing the API, we mentioned an interesting metadata element called "label." A label is a key-value pair. For example, the first pod in the following figure may have the label color=red, which indicates that the pod is red. You can add other labels as well, such as size=big, which indicates that its size is big, so a pod can carry a whole set of labels. These labels can be queried with a selector, a capability very similar to an SQL SELECT statement. For example, when you select color=red from the three pods shown in the following figure, only two pods are selected, because only their labels indicate red. The label of the third pod says that its color is yellow, so that pod is not selected.
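The selection in the figure can be modeled as an equality-based selector: an object matches when it carries every key-value pair the selector asks for. The pod names and the `matches` and `select` helpers are hypothetical, and Kubernetes also supports set-based selectors (such as `in` and `notin`), which this sketch omits.

```python
from typing import Dict, List

Labels = Dict[str, str]


def matches(selector: Labels, labels: Labels) -> bool:
    """Equality-based match: every key in the selector must have the same value."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(selector: Labels, objects: Dict[str, Labels]) -> List[str]:
    return [name for name, labels in objects.items() if matches(selector, labels)]


pods = {
    "pod-1": {"color": "red", "size": "big"},
    "pod-2": {"color": "red"},
    "pod-3": {"color": "yellow"},
}
print(select({"color": "red"}, pods))  # like: SELECT name FROM pods WHERE color = 'red'
```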

Labels allow the Kubernetes API to filter resources, and label selection is also the default way to express a collection of resources in Kubernetes.

For example, a deployment represents, or abstracts, a set of pods, and that set is specified by a label selector. As described previously, a service gives centralized access to one or more pods, and that set of pods is also chosen by a label selector.

Therefore, the label is a core concept of the Kubernetes API. In subsequent tutorials, we will dive into the concepts of the label and how to make full use of labels.

Lastly, let's try out a Kubernetes cluster demo. Before that, install a local Kubernetes sandbox environment by performing the following steps:

Before installing, download VirtualBox from https://www.virtualbox.org/wiki/Downloads

Then start the minikube sandbox with the startup command minikube start --vm-driver virtualbox. Note: If your computer is not running macOS, refer to the minikube documentation to learn how to install the minikube sandbox environment on other operating systems.

After completing the installation, implement a use case as follows.

1) Submit an Nginx deployment: kubectl apply -f https://k8s.io/examples/application/deployment.yaml

2) Update the Nginx deployment: kubectl apply -f https://k8s.io/examples/application/deployment-update.yaml

3) Scale out the Nginx deployment: kubectl apply -f https://k8s.io/examples/application/deployment-scale.yaml
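If you prefer to script these steps, the hypothetical helper below prints each kubectl command from the list above, plus the cleanup step used at the end of the demo, and runs them only when `execute=True`, so you can review them first. It assumes kubectl is on your PATH and pointed at the minikube cluster. Running the steps back to back skips the pauses in which the demo inspects each intermediate state.

```python
import shlex
import subprocess
from typing import List

BASE = "https://k8s.io/examples/application"

# The demo's commands, in order; the last one cleans up.
DEMO_STEPS: List[List[str]] = [
    ["kubectl", "apply", "-f", f"{BASE}/deployment.yaml"],         # 1) submit
    ["kubectl", "apply", "-f", f"{BASE}/deployment-update.yaml"],  # 2) update
    ["kubectl", "apply", "-f", f"{BASE}/deployment-scale.yaml"],   # 3) scale out
    ["kubectl", "delete", "deployment", "nginx-deployment"],       # clean up
]


def run_demo(execute: bool = False) -> List[str]:
    """Print each step; run it with kubectl only when execute=True."""
    lines = []
    for cmd in DEMO_STEPS:
        line = shlex.join(cmd)
        print("$", line)
        lines.append(line)
        if execute:
            subprocess.run(cmd, check=True)  # stop at the first failing step
    return lines


if __name__ == "__main__":
    run_demo(execute=False)
```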

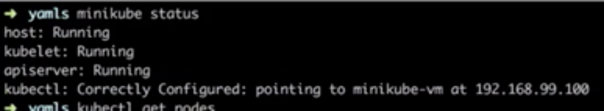

To summarize: first submit an Nginx deployment, then upgrade it to change the version of its pods, and finally scale the deployment out horizontally. Now let's perform these three operations. First, check the status of minikube. The output shows that the kubelet and the master are running and that kubectl is correctly configured.

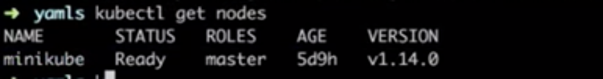

Then, check the status of the nodes in this cluster through kubectl. The output shows that the master is running.

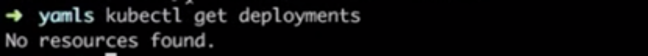

Next, let's check the deployments in the cluster.

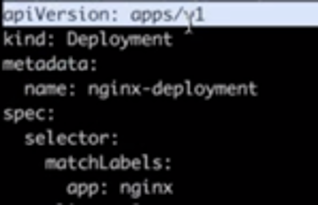

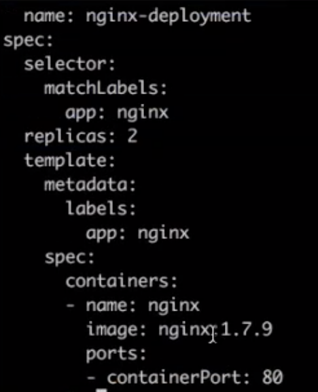

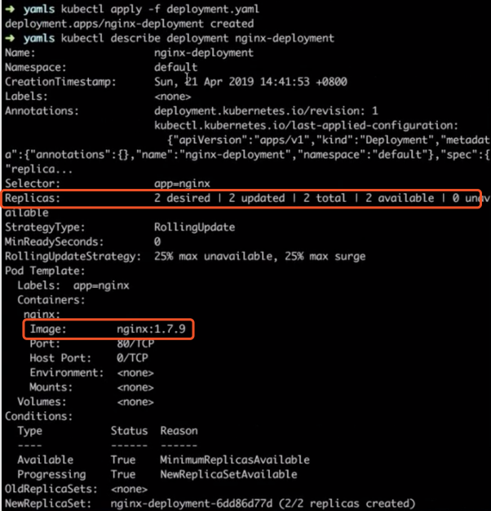

The output shows that the cluster does not include any deployments yet. To view changes to deployments as they happen, use the watch semantics (for example, kubectl get deployments --watch in a separate window). Now we are ready to perform the three operations. First, create a deployment. The first figure below shows the content of the API object: the kind is Deployment, and the name is nginx-deployment. As shown in the second figure, the number of replicas is 2, and the image version is 1.7.9.

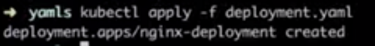

Now, run the kubectl command to create the deployment. This single command is enough for the deployment to start creating its replicas.

The number of replicas is 2. The deployment named nginx-deployment did not exist before. Now let's describe the current state of this deployment.

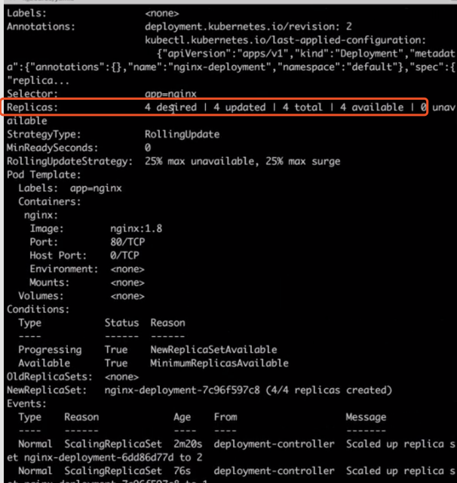

In the following figure, note that a deployment named nginx-deployment has been created. The number of replicas is as desired, the selector matches, and the image version is 1.7.9. You can also see that the controller named deployment-controller is managing the creation of the replicas.

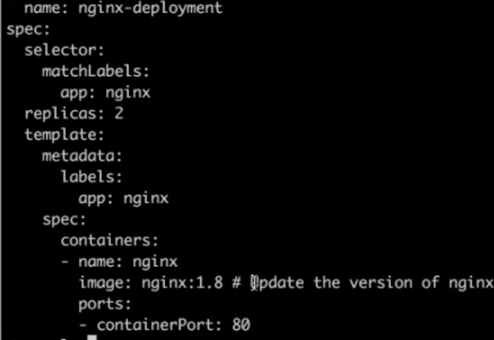

Next, let's update the version of this deployment. First, download another YAML file named deployment-update.yaml. Also, note that the image version in this file is updated from 1.7.9 to 1.8.

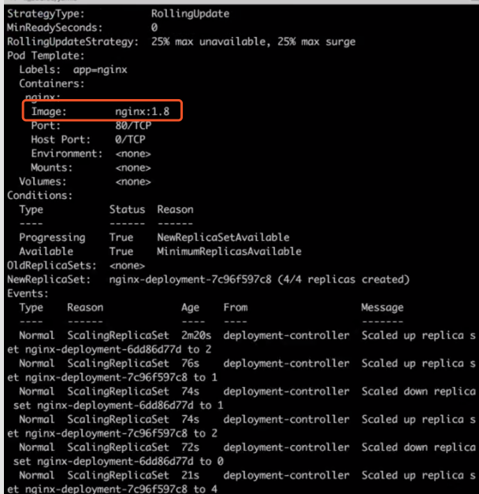

Then, apply the new deployment-update.yaml file. In the other window, the operations of this deployment update appear, and the UP-TO-DATE value changes from 0 to 2, indicating that all containers and pods are up to date. Run the describe command to check whether all pods have been updated. The output shows that the image version has been updated from 1.7.9 to 1.8.

In addition, note that the controller subsequently performs several new operations to maintain the state of the deployment and its pods.
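How many pods can exist during such an update is governed by the RollingUpdate strategy's `maxSurge` and `maxUnavailable` settings. The Kubernetes documentation gives both a default of 25%, with `maxSurge` rounded up and `maxUnavailable` rounded down when expressed as percentages. Assuming the demo manifest leaves them at these defaults, the arithmetic for 2 replicas works out as follows (the helper is hypothetical):

```python
import math
from typing import Tuple


def rolling_update_bounds(replicas: int, max_surge: float = 0.25,
                          max_unavailable: float = 0.25) -> Tuple[int, int]:
    """Return (maximum total pods, minimum available pods) during the update."""
    surge = math.ceil(replicas * max_surge)                # percentage rounded up
    unavailable = math.floor(replicas * max_unavailable)   # percentage rounded down
    return replicas + surge, replicas - unavailable


print(rolling_update_bounds(2))  # 2 replicas: at most 3 pods, at least 2 available
```

With two replicas, the controller can add only one new pod at a time and cannot remove an old pod until a new one is available.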

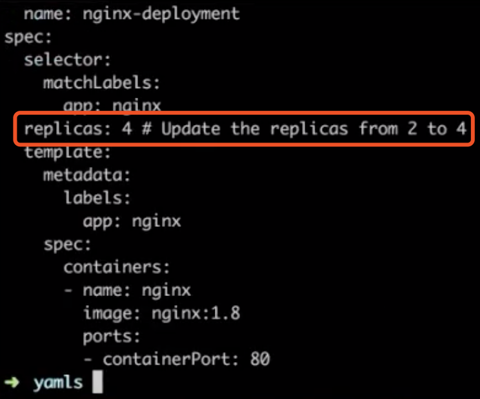

Now, let's horizontally scale out the deployment by downloading another deployment-scale.yaml file. This file shows that the number of replicas has been changed from 2 to 4.

Return to the initial window and use kubectl to apply the new deployment-scale.yaml file. In the other window, note that the number of pods changes from 2 to 4 after the file is applied. Again, describe the deployment in the current cluster. The output shows that the number of replicas has increased from 2 to 4 and that the controller has performed several new operations, indicating that the scale-out is complete.

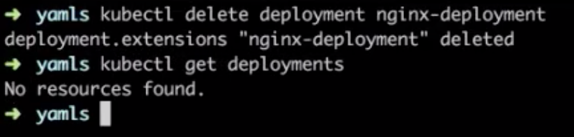

Lastly, let's delete the deployment we created. As shown in the following figure, run kubectl delete deployment followed by the name of the original deployment. Once the deletion completes, the deployment no longer exists and the cluster returns to its original clean state.

This article has focused on the core concepts and architectural design of Kubernetes.
