In this article, Alibaba technical expert Aohai introduces the basic concepts and architecture of a recommender system: what a recommender system is and what an enterprise-level recommender system architecture looks like.

1) Common Recommendation Scenarios

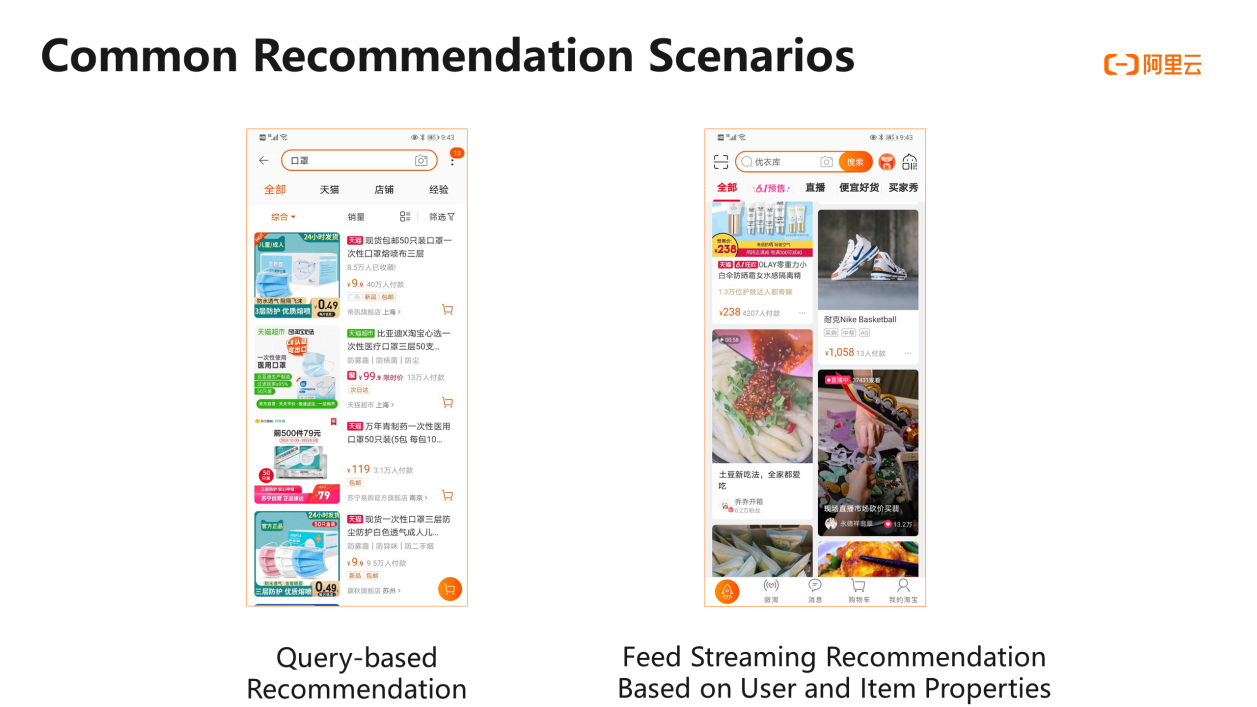

Let's start with what a recommender system is and why it is needed. As Internet technologies develop, people have access to more and more information. The Taobao website, for example, features a huge range of items, so Taobao faces the challenge of connecting consumers with the items that are right for them. A recommender system addresses this information-matching problem by matching user data with item data. You have probably seen a variety of recommendation scenarios when using smartphone apps. Two are especially common: query-based recommendations, and feed streaming recommendations based on user and item properties.

The left part of the following figure shows what a query-based recommendation is. For example, if I search for masks, the displayed items must be related to masks. There may be more than 110,000 mask-related items, so a recommender system is required to determine which items are ranked near the top and which are ranked lower. The system needs to rank the items according to users' properties, such as their favorite colors and price preferences. If a user prefers luxury goods, the system will likely rank expensive, high-quality masks at the top for this user. If a user is price-sensitive, the system may rank cheaper masks that offer better value for money at the top. To sum up, a query-based recommendation is the matching between users' purchase preferences and item properties.
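
The idea of ranking the same query results differently for different users can be sketched as follows. This is only an illustration, not the method used by any real platform: the `Item` fields, the `quality` score, the `price_sensitivity` parameter, and the linear scoring rule are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Item:
    item_id: int
    title: str
    price: float
    quality: float  # hypothetical quality score in [0, 1]


def rank_for_query(items, query, price_sensitivity):
    """Rank the items that match a query for one user.

    price_sensitivity is a hypothetical user property in [0, 1]:
    0 means the user favors premium items, 1 means the user favors cheap items.
    """
    # Step 1: keep only items related to the query (naive substring match).
    candidates = [it for it in items if query.lower() in it.title.lower()]
    if not candidates:
        return []
    max_price = max(it.price for it in candidates)

    # Step 2: blend "cheapness" and quality according to the user's preference.
    def score(it):
        cheapness = 1.0 - it.price / max_price
        return price_sensitivity * cheapness + (1.0 - price_sensitivity) * it.quality

    return sorted(candidates, key=score, reverse=True)


catalog = [
    Item(1, "Basic mask 50-pack", 5.0, 0.4),
    Item(2, "Premium N95 mask", 40.0, 0.95),
    Item(3, "Cotton mask", 12.0, 0.6),
    Item(4, "Phone case", 8.0, 0.7),
]

luxury_view = rank_for_query(catalog, "mask", price_sensitivity=0.1)
budget_view = rank_for_query(catalog, "mask", price_sensitivity=0.9)
print([it.item_id for it in luxury_view])  # [2, 3, 1]
print([it.item_id for it in budget_view])  # [1, 3, 2]
```

The same three mask items come back for both users, but the order flips: the premium mask leads for the user who favors quality, and the cheapest mask leads for the price-sensitive user.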

The right part of the following figure shows feed streaming recommendations, which have increasingly become a major interaction mode between many apps and their users. If you open apps such as Hupu and Toutiao, you will find that the news feeds on their home pages are recommended according to your daily preferences. For example, if you love basketball-related news, more sports-related content may be recommended to you. For feed streaming recommendations, we use machine learning to train a recommendation model on user and item data. The model is expected to learn both user preferences and item properties. The recommender system architecture introduced today focuses on this scenario: how to implement feed streaming recommendations by matching user properties with item properties.

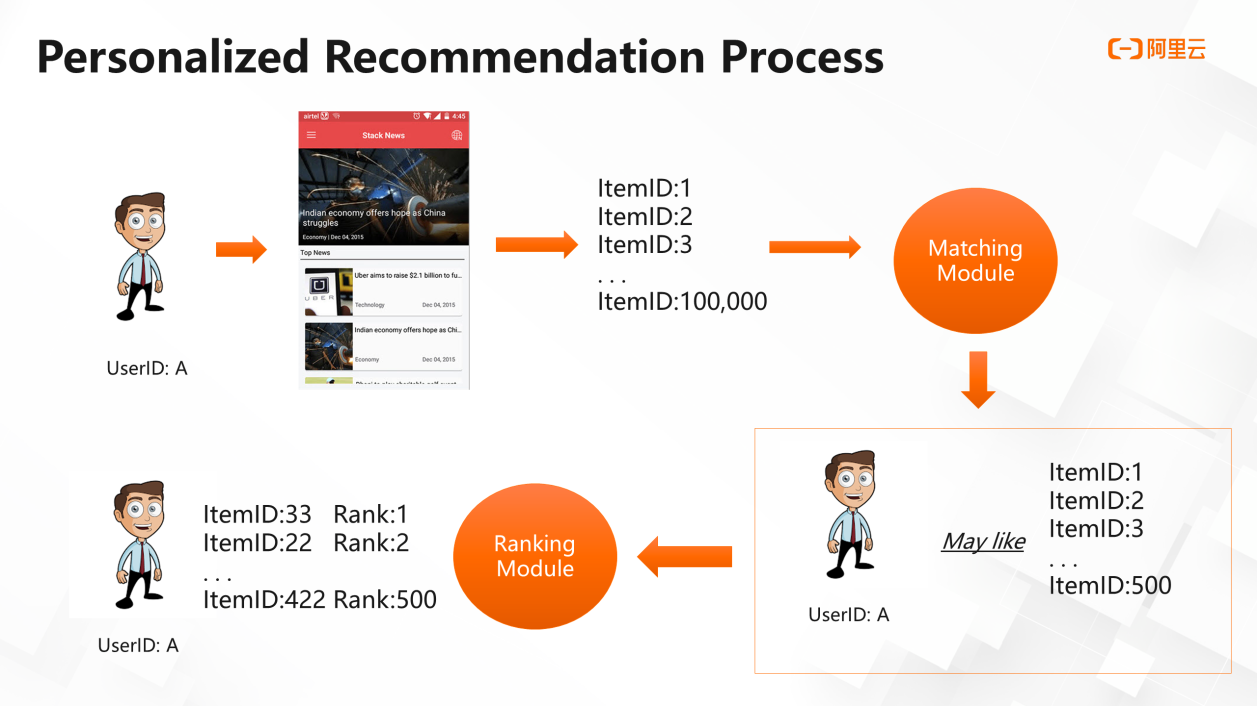

2) Personalized Recommendation Process

First, I would like to illustrate the whole recommendation service by using the following schematic diagram. Suppose we have a news platform, and user A with an ID visits the platform. This platform has 100,000 news pieces, and we call each piece of news an item. Each item has an ID, such as 1, 2, or 3. Now we need to select user A's favorite items from the total of 100,000 items. Which modules are needed in the underlying architecture to implement the recommendation? A typical recommender system usually has two modules: a matching module and a ranking module. The matching module performs a preliminary filtering of the 100,000 items to select those that user A may like. For example, if 500 items have been shortlisted, we only know that user A may like these 500 items, but we do not know which ones are user A's favorite and second favorite. The ranking module ranks the 500 items based on user A's preferences to create the final item list to be delivered to user A. Therefore, in the recommendation service, the matching module provides preliminary filtering to determine the general scope. This reduces the number of items that the ranking module has to score, which speeds up ranking and makes recommendation feedback more efficient for users. A professional recommender system must be able to return recommendations within tens of milliseconds after it receives a user request. When a user refreshes a feed, the system has only tens of milliseconds before it must show the newly recommended items. This is the logic behind the recommendation service.
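
The two-stage flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the synthetic catalog, the tag-overlap matching rule, and the `popularity`-based score are all hypothetical stand-ins for real matching and ranking models.

```python
import heapq
from itertools import islice

TOPICS = ["basketball", "tech", "finance", "travel", "food"]


def build_catalog(n):
    """Create n synthetic news items (hypothetical data, for illustration only)."""
    return [
        {
            "id": i,
            "tags": {TOPICS[i % 5], TOPICS[(i // 5) % 5]},
            "popularity": (i % 100) / 100,
        }
        for i in range(n)
    ]


def match(interests, items, k):
    """Matching stage: a cheap filter that keeps up to k items sharing a tag with the user."""
    hits = (item for item in items if interests & item["tags"])
    return list(islice(hits, k))


def score(interests, item):
    """Ranking score: tag overlap plus popularity (a stand-in for a trained model)."""
    return len(interests & item["tags"]) + item["popularity"]


def rank(interests, candidates, n):
    """Ranking stage: score only the shortlisted candidates and return the top n."""
    return heapq.nlargest(n, candidates, key=lambda item: score(interests, item))


catalog = build_catalog(100_000)          # the full item pool
user_a = {"basketball", "tech"}           # user A's interests
shortlist = match(user_a, catalog, k=500)  # 100,000 items -> 500 candidates
top_items = rank(user_a, shortlist, n=10)  # 500 candidates -> final list
print(len(shortlist), [item["id"] for item in top_items])
```

The design point is that the more expensive `score` function runs on only 500 items rather than 100,000, which is what keeps the response within a tight latency budget.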

The recommender system can be understood as the sum of recommendation algorithms and system engineering, that is, recommender system = recommendation algorithms + system engineering. When recommender systems are discussed, many books and online documents focus on how algorithms are implemented, and many papers are about the latest recommendation algorithms. However, if you get down to building such a recommender system, especially when you try to deploy it on the cloud, you will find that it is actually a systems engineering project. Even if you know which algorithms a recommender service requires, you will face many problems, such as performance and data storage problems. Therefore, this article focuses on both algorithms and system engineering, which together form a complete recommender system.

This article introduces the ranking algorithms and training architectures of a recommender system: the ranking module, ranking algorithms, and online and offline training architectures for a ranking model.

An Introduction to Ranking Algorithms

This section describes the types of ranking algorithms, the training process of a ranking model, and training architectures. With the development of deep learning, ranking algorithms have gradually been integrated with deep learning. The following figure shows typical ranking algorithms. The first is logistic regression (LR), the most classic linear binary classification algorithm in the industry and still widely used. It is easy to use, requires little computing power, and produces models that are easy to interpret. The second is Factorization Machine (FM), which has been applied to a large number of customer scenarios over the past one or two years and has performed well. It models interactions between features as inner products of latent vectors, which enhances feature representation. The third is GBDT+LR, which uses gradient boosted decision trees (GBDTs) to encode features before they are fed to LR, enhancing the interpretability of data features. The fourth is DeepFM, a widely used algorithm that combines deep learning with classic machine learning. If you are building a recommender system for the first time, we recommend that you start with simple algorithms and move to more complex ones later. The preceding algorithms are built into Machine Learning Platform for AI (PAI) and can be used as plug-ins.
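
To make the relationship between LR and FM concrete, here is a small sketch of the standard second-order FM scoring function, which adds inner-product interaction terms to a linear model. It is not the PAI implementation; the weights, the feature vector, and the function names are made up for illustration, and the model is not trained here.

```python
import math
from itertools import combinations


def fm_score(x, w0, w, V):
    """Second-order FM score: w0 + sum_i w_i x_i + sum_{i<j} <V_i, V_j> x_i x_j.

    Uses the usual O(k * n) identity for the pairwise term:
    0.5 * sum_f [ (sum_i V[i][f] x_i)^2 - sum_i (V[i][f] x_i)^2 ].
    """
    n, k = len(x), len(V[0])
    linear = w0 + sum(w[i] * x[i] for i in range(n))
    pairwise = 0.0
    for f in range(k):
        s = sum(V[i][f] * x[i] for i in range(n))
        s_sq = sum((V[i][f] * x[i]) ** 2 for i in range(n))
        pairwise += 0.5 * (s * s - s_sq)
    return linear + pairwise


def fm_score_naive(x, w0, w, V):
    """Same score computed directly over all feature pairs (for checking)."""
    n = len(x)
    linear = w0 + sum(w[i] * x[i] for i in range(n))
    pairwise = sum(
        sum(a * b for a, b in zip(V[i], V[j])) * x[i] * x[j]
        for i, j in combinations(range(n), 2)
    )
    return linear + pairwise


def sigmoid(z):
    """Map a raw score to a probability, as in logistic regression."""
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical parameters: 3 features, latent dimension k = 2.
x = [1.0, 0.0, 1.0]
w0, w = -1.0, [0.5, -0.2, 0.3]
V = [[0.1, 0.2], [0.3, -0.1], [-0.2, 0.4]]

click_probability = sigmoid(fm_score(x, w0, w, V))
print(click_probability)
```

If every latent vector in `V` is zero, the pairwise term vanishes and the score is exactly the LR score, which is one way to see FM as an extension of LR.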

This article introduces the matching algorithms and architecture of a recommender system: the matching module, matching algorithms, collaborative filtering, and the vector matching architecture.

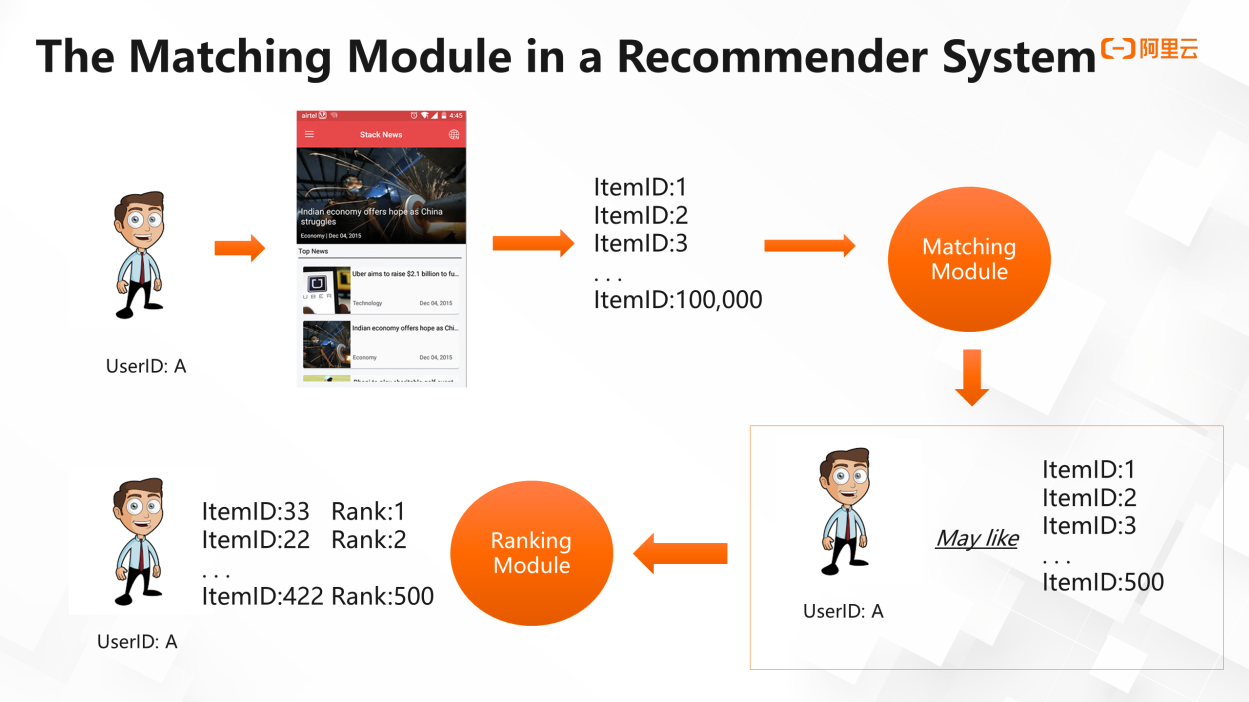

1) The Matching Module in a Recommender System

In the first article of the series for building an enterprise-level recommender system, we introduced the recommender system architecture, its modules, and the application of cloud services in each module. In this article, we will focus on the matching algorithms in a recommender system and how you can build a matching architecture. First, let's review the matching module in a recommender system. The matching module is used for preliminary filtering. When user A visits a platform, the matching module selects the items that user A may like from a huge number of items. For example, the platform has 100,000 items, and the matching module selects 500 items that user A may like. Then, the ranking module ranks these items based on user A's preferences.
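
Collaborative filtering is one of the matching algorithms named in this series. A minimal sketch of item-based collaborative filtering used as a matching step might look like the following. The interaction history is invented, and cosine similarity over binary interactions is just one common choice, assumed here for illustration.

```python
import math
from collections import defaultdict
from itertools import combinations


def item_similarity(user_items):
    """Cosine similarity between items on binary interactions:
    sim(i, j) = co_occurrences(i, j) / sqrt(count(i) * count(j))."""
    count = defaultdict(int)
    co = defaultdict(int)
    for items in user_items.values():
        for i in items:
            count[i] += 1
        for i, j in combinations(sorted(items), 2):
            co[(i, j)] += 1
            co[(j, i)] += 1
    return {
        (i, j): c / math.sqrt(count[i] * count[j])
        for (i, j), c in co.items()
    }


def match_candidates(user, user_items, sim, k):
    """Return up to k unseen items most similar to what the user already interacted with."""
    seen = user_items[user]
    scores = defaultdict(float)
    for (i, j), s in sim.items():
        if i in seen and j not in seen:
            scores[j] += s
    # Sort by score (descending), then by item ID for a stable order.
    return sorted(scores, key=lambda j: (-scores[j], j))[:k]


# Hypothetical interaction history: user -> set of item IDs.
history = {
    "A": {1, 2},
    "B": {1, 2, 3},
    "C": {2, 3, 4},
    "D": {1, 5},
}

sim = item_similarity(history)
candidates = match_candidates("A", history, sim, k=3)
print(candidates)
```

Item 3 comes first for user A because it co-occurs with both items that user A has already interacted with. In a real system, the similarity table would be computed offline and the matching step would only look up neighbors at request time.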

This article introduces online service orchestration and architecture: the online inference service architecture and online multi-goal implementation.

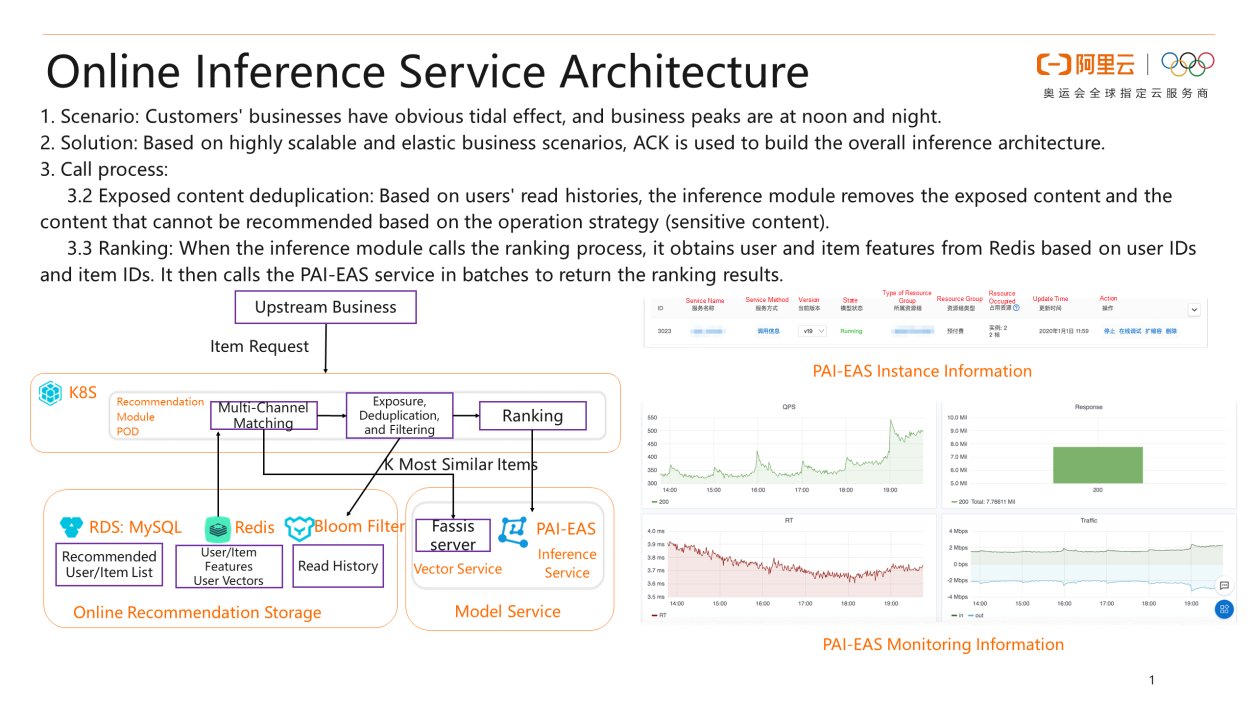

1) Online Inference Service Architecture

In the first two articles of the series for building an enterprise-level recommender system, we introduced the algorithms and architectures of the matching and ranking modules. In this article, we will describe how you can orchestrate the results of the matching and ranking algorithms and apply the trained model to live business scenarios. First, let's take a look at the entire framework. Business traffic, especially for Internet recommendation services, typically peaks at noon and at night. Therefore, you need an elastic mechanism to reduce resource consumption. For example, you need 10 servers at peak hours and only one server at off-peak hours. Without an elastic mechanism, you have to purchase 10 on-premises servers to meet the peak traffic requirements. However, if you use cloud services, you can implement elastic scaling. Costs can be reduced substantially because you no longer need to keep 10 servers running all the time. For this reason, in the service orchestration phase, a cloud architecture is typically used to cope with the tidal pattern of business traffic.
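
The saving from elastic scaling can be estimated with simple arithmetic. The sketch below compares server-hours for fixed provisioning and for scaling with traffic. The hourly traffic profile and the per-server capacity of 100 QPS are hypothetical numbers chosen to reproduce the "10 servers at peak, one off-peak" example.

```python
import math


def replicas_needed(qps, qps_per_replica, min_replicas=1):
    """Number of servers required to serve the given load."""
    return max(min_replicas, math.ceil(qps / qps_per_replica))


# Hypothetical daily profile (24 hourly values): a 2-hour peak around noon
# and a 3-hour peak at night, with low traffic the rest of the day.
hourly_qps = [100] * 11 + [1000] * 2 + [100] * 6 + [1000] * 3 + [100] * 2
QPS_PER_REPLICA = 100  # assumed capacity of one server

per_hour = [replicas_needed(q, QPS_PER_REPLICA) for q in hourly_qps]
fixed_server_hours = max(per_hour) * len(hourly_qps)  # always provisioned for the peak
elastic_server_hours = sum(per_hour)                  # scaled hour by hour

print(fixed_server_hours, elastic_server_hours)  # 240 69
```

Under these assumptions, elastic scaling uses 69 server-hours per day instead of 240. The real saving depends on the shape of your traffic and on how quickly replicas can be added and removed.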

To implement the whole process of matching and ranking, we use Alibaba Cloud Container Service for Kubernetes (ACK) to build an inference architecture that is highly scalable and elastic. The process includes the following steps: (1) Multi-channel matching: The inference module uses item-based collaborative filtering, semantic matching, hot matching, and operation strategy matching to retrieve thousands of candidates. (2) Exposed content deduplication: Based on users' read history, the inference module removes content that has already been exposed to the user and content that cannot be recommended according to the operation strategy. (3) Ranking: When the inference module calls the ranking process, it obtains user and item features based on user IDs and item IDs. It then calls the Elastic Algorithm Service (EAS) provided by Machine Learning Platform for AI (PAI) in batches to return the ranking results. In the following figure, the right part shows the online monitoring capability of the PAI-EAS online inference service. You need to dynamically scale the ranking model out or in based on its metrics, so that the response time (RT) does not become too high and the queries per second (QPS) that the service can handle continue to meet your business requirements. This is the entire online service orchestration framework.
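
The three steps above can be sketched as a small orchestration function. This is an illustration only: the channel names and item IDs are invented, and `fake_score_batch` is a local stand-in for the remote ranking model, not the PAI-EAS API.

```python
def merge_channels(channels):
    """Step 1: union the candidates from all matching channels, keeping first-seen order."""
    seen, merged = set(), []
    for item_ids in channels.values():
        for item_id in item_ids:
            if item_id not in seen:
                seen.add(item_id)
                merged.append(item_id)
    return merged


def drop_exposed(candidates, exposed, blocked):
    """Step 2: remove items the user has already seen and items blocked by operation strategy."""
    return [c for c in candidates if c not in exposed and c not in blocked]


def batched(seq, size):
    """Split a list into consecutive batches of at most `size` elements."""
    for start in range(0, len(seq), size):
        yield seq[start:start + size]


def rank(user_id, candidates, score_batch, batch_size=2):
    """Step 3: score candidates in batches and sort by score, highest first."""
    scored = []
    for batch in batched(candidates, batch_size):
        scored.extend(zip(batch, score_batch(user_id, batch)))
    return [item_id for item_id, _ in sorted(scored, key=lambda p: p[1], reverse=True)]


def fake_score_batch(user_id, item_ids):
    """Hypothetical scorer standing in for a call to the deployed ranking model."""
    return [1.0 / item_id for item_id in item_ids]


# Hypothetical output of four matching channels for one user.
channels = {
    "item_cf": [3, 7, 9],
    "semantic": [7, 12],
    "hot": [1, 3],
    "operations": [20],
}

merged = merge_channels(channels)                        # [3, 7, 9, 12, 1, 20]
fresh = drop_exposed(merged, exposed={9}, blocked={20})  # [3, 7, 12, 1]
final = rank("user_a", fresh, fake_score_batch)
print(final)  # [1, 3, 7, 12]
```

Passing the scorer in as a function keeps the orchestration logic independent of how the model is served, so the stand-in can later be replaced by a client for the real inference service.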

This blog describes how to build a simple recommender system on Machine Learning Platform for AI (PAI) within 10 minutes. It covers four parts: the personalized recommendation process, the collaborative filtering algorithm, the architecture of the recommender system, and practices.

Machine Learning Platform for AI provides end-to-end machine learning services, including data processing, feature engineering, model training, model prediction, and model evaluation. Machine Learning Platform for AI combines all of these services to make AI more accessible than ever.

Alibaba Cloud Container Service for Kubernetes (ACK) integrates virtualization, storage, networking, and security capabilities. ACK allows you to deploy applications in high-performance and scalable containers and provides full lifecycle management of enterprise-class containerized applications.

Alibaba Cloud was one of the first vendors to pass the Kubernetes conformance certification tests globally. Alibaba Cloud offers professional support and services.
