By Ru Man

As AI applications develop rapidly, more and more developers are choosing Dify as their platform for building AI applications. In practice, accessing models is rarely as simple as "calling an API": developers often need to connect to multiple model providers and face a series of engineering challenges in production environments:

● Complex Multi-Model Management: Even within a single application and scenario, ensuring output quality and availability often requires connecting to multiple providers. However, most existing model plugins target a single provider, so users must implement integration and switching across providers themselves.

● Lack of Unified Governance: There are currently no out-of-the-box implementations of traffic protection, throttling, circuit breaking, intelligent routing, or context compression for model calls; these require additional infrastructure.

● Fine-Grained Authorization Requirements: API Keys provide baseline security for accessing model services, but production environments often need finer-grained access control, such as separating permissions and quotas by application and caller, and applying different throttling and auditing policies to different consumers.

To address these issues, the Higress AI gateway offers a unified Model API that proxies various model providers and self-hosted inference services, along with production-grade capabilities such as high-availability governance, security authorization, and observability.

Previously, we provided a solution for connecting to the Higress AI gateway through Dify's OpenAI-compatible plugin, but users ran into several limitations in practice: it supported only scenarios compatible with the OpenAI protocol and could not cover multimodal scenarios, image generation, or other non-OpenAI native protocols; some models had compatibility issues when accessed through the Higress AI gateway; and it offered only one consumer authorization method. These shortcomings in usability and compatibility called for a dedicated model plugin for the Higress gateway.

To make the Higress AI gateway easier for Dify users to access and use, Higress has officially launched the Dify Model Proxy plugin, which is now available in the Dify plugin marketplace.

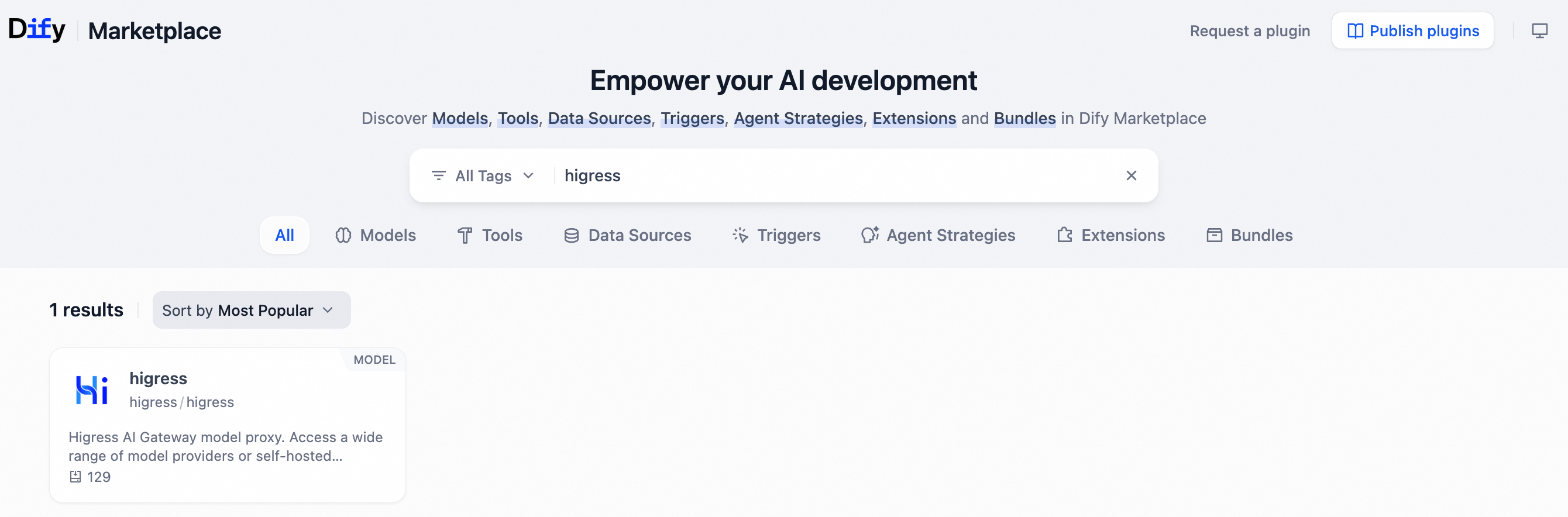

Developers can search for "higress" in the Dify plugin marketplace to find and install the plugin.

The plugin's core value is a simple bridge between Dify and the Higress AI gateway: users only need to configure the gateway route, protocol, and authorization method in Dify to access model services through the Higress AI gateway and use the rich capabilities the gateway provides.

In this way, Dify handles the orchestration and construction of AI applications, while Higress proxies and manages model traffic, so each component does the job it is best at.

The plugin works with both open-source, self-hosted Higress and Alibaba Cloud Native AI Gateway (the commercial version).

Currently, the Higress model plugin supports the following Higress Model API scenarios and protocols, with API Key and HMAC (AK/SK) available as authorization methods.

| Scenario | Protocol | Authorization Methods |
|---|---|---|
| Text Generation | OpenAI Compatible | API Key, HMAC |
| Image Generation | Alibaba Cloud Bailian Image Generation | API Key, HMAC |
| Vector Embedding | OpenAI Compatible | API Key, HMAC |
| Text Ranking | Alibaba Cloud Bailian Text Ranking | API Key, HMAC |
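The table lists API Key and HMAC (AK/SK) as authorization options. Roughly, an API Key is sent with every request as-is, while HMAC signs each request with a secret key (SK) that never leaves the client; only the access key (AK) and the signature travel over the wire. The sketch below is a generic illustration of that idea, not the Higress signing scheme: the string-to-sign layout and the header names `X-Access-Key` and `X-Signature` are hypothetical, and the Bearer header is just one common API Key convention.

```python
import base64
import hashlib
import hmac
from email.utils import formatdate
from typing import Dict, Optional


def api_key_headers(api_key: str) -> Dict[str, str]:
    # Bearer-style API Key; many gateways let you configure the header name.
    return {"Authorization": f"Bearer {api_key}"}


def sign_request(
    method: str,
    path: str,
    access_key: str,
    secret_key: str,
    date: Optional[str] = None,
) -> Dict[str, str]:
    """Build illustrative HMAC-SHA256 headers (hypothetical scheme)."""
    date = date or formatdate(usegmt=True)
    # String to sign: method, path and date, one per line.
    string_to_sign = "\n".join([method.upper(), path, date])
    digest = hmac.new(
        secret_key.encode("utf-8"),
        string_to_sign.encode("utf-8"),
        hashlib.sha256,
    ).digest()
    return {
        "Date": date,
        "X-Access-Key": access_key,  # hypothetical header name
        "X-Signature": base64.b64encode(digest).decode("ascii"),  # hypothetical
    }


headers = sign_request("POST", "/v1/chat/completions", "my-ak", "my-sk")
```

The server side recomputes the same HMAC from the request and its stored copy of the SK, then compares signatures; a real gateway would also bound the `Date` skew to limit replay.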

For text generation, the plugin supports thinking mode, tool calling, streaming, and structured output, and lets users configure sampling and generation parameters such as temperature and Top P to customize model calls for their needs.
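To show where these options live on the wire, here is a minimal sketch of an OpenAI-compatible Chat Completions request body with temperature, Top P, streaming, structured (JSON) output and a function tool. The model name `qwen-plus` and the `get_weather` tool are hypothetical placeholders, and thinking mode is omitted because its request field is provider-specific.

```python
import json
from typing import Any, Dict, List, Optional


def build_chat_request(
    model: str,
    prompt: str,
    temperature: float = 0.7,
    top_p: float = 0.9,
    stream: bool = False,
    json_output: bool = False,
    tools: Optional[List[Dict[str, Any]]] = None,
) -> Dict[str, Any]:
    """Assemble an OpenAI-compatible Chat Completions request body."""
    body: Dict[str, Any] = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,  # sampling randomness
        "top_p": top_p,              # nucleus sampling cutoff
        "stream": stream,            # stream tokens as server-sent events
    }
    if json_output:
        # Ask the model for structured JSON output.
        body["response_format"] = {"type": "json_object"}
    if tools:
        # Function tools the model may decide to call.
        body["tools"] = tools
    return body


# Hypothetical tool definition in the OpenAI function-calling format.
weather_tool = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

request = build_chat_request("qwen-plus", "Will it rain tomorrow?", stream=True, tools=[weather_tool])
print(json.dumps(request, indent=2))
```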

The Higress plugin is under continuous development. If you are interested or have requirements, visit https://github.com/higress-group/higress-dify-plugin to open an issue or contribute directly.

Once the plugin is installed, Dify applications that access models through the Higress gateway automatically inherit all governance capabilities configured on the gateway side:

● Traffic Protection: Token throttling, model fallback, circuit breaking, timeouts, and other policies that keep model calls stable

● Load Balancing: Intelligent traffic distribution across multiple backend models and instances

● Observability: Unified call logs, metrics monitoring, and tracing

● Security Protection: Consumer authorization, IP allowlists and blocklists, and other access controls

● Plugin Extension: Higress's rich plugin ecosystem, which lets you add more AI capabilities as needed

These capabilities require no additional development on the Dify side; you enable them simply by configuring them in the Higress gateway console.
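Token throttling is configured on the gateway, not in Dify. To make the idea concrete, here is a minimal token-bucket sketch that meters LLM tokens separately for each consumer, in the spirit of the per-application quotas described earlier. It illustrates the general technique only and is not how Higress implements its throttling; the class names are hypothetical.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Refills `rate` tokens per second, up to `capacity` tokens."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def try_consume(self, amount: float) -> bool:
        now = self.clock()
        # Credit tokens earned since the last check, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= amount:
            self.tokens -= amount
            return True
        return False


class ConsumerLimiter:
    """One bucket per consumer, so each caller has its own LLM-token quota."""

    def __init__(self, capacity: float, rate: float, clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, consumer: str, llm_tokens: int) -> bool:
        bucket = self.buckets.get(consumer)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.rate, self.clock)
            self.buckets[consumer] = bucket
        return bucket.try_consume(llm_tokens)
```

Keying buckets by consumer is what makes the quota "differentiated": one noisy application exhausts only its own bucket. A production gateway would typically keep these counters in shared storage so that all gateway replicas see the same quota.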

Next, we demonstrate two classic scenarios built in Dify that access model services through the AI gateway via the Higress plugin.

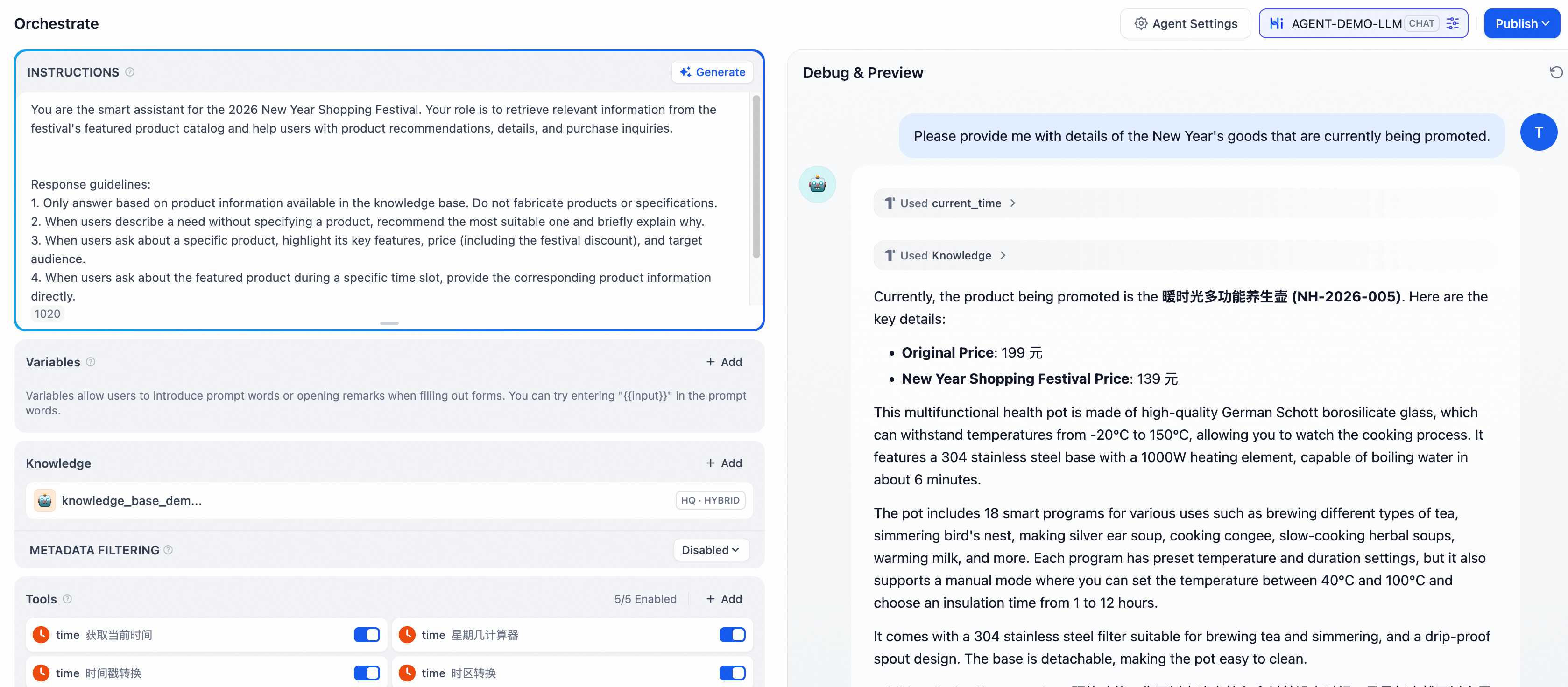

Build an enterprise intelligent customer service Agent in Dify. When a user asks a question, the Agent retrieves relevant documents from the knowledge base, generates an answer based on the search results, and calls tools to complete operations when necessary.

In this process, the Dify application accesses the LLM, Embedding, and Rerank models through the Higress plugin and the Higress AI gateway proxy. The construction and operation steps are outlined below.

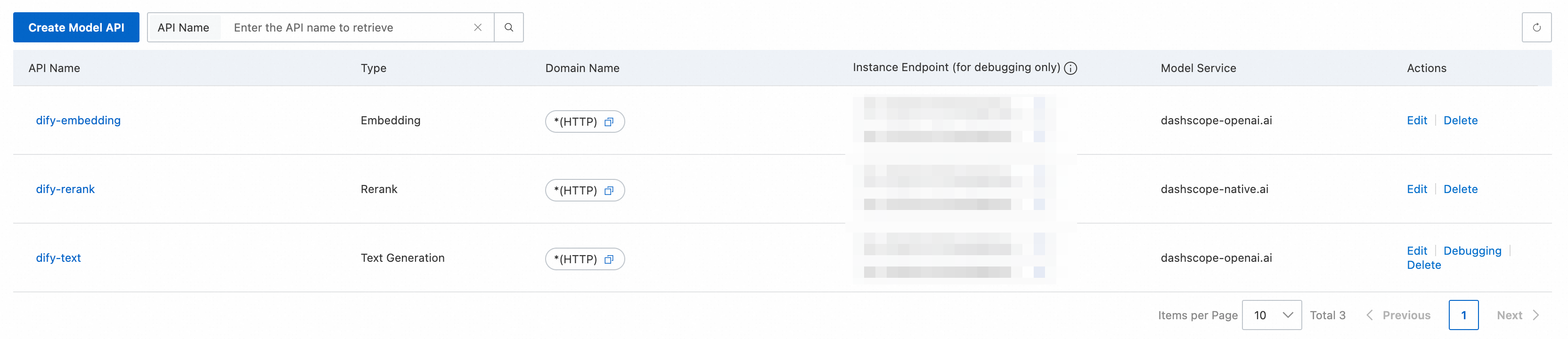

Using Alibaba Cloud Native AI Gateway (the commercial version of Higress) as an example, create three routes for the LLM, Embedding, and Rerank models, configuring protocols, models, and routing strategies as needed.

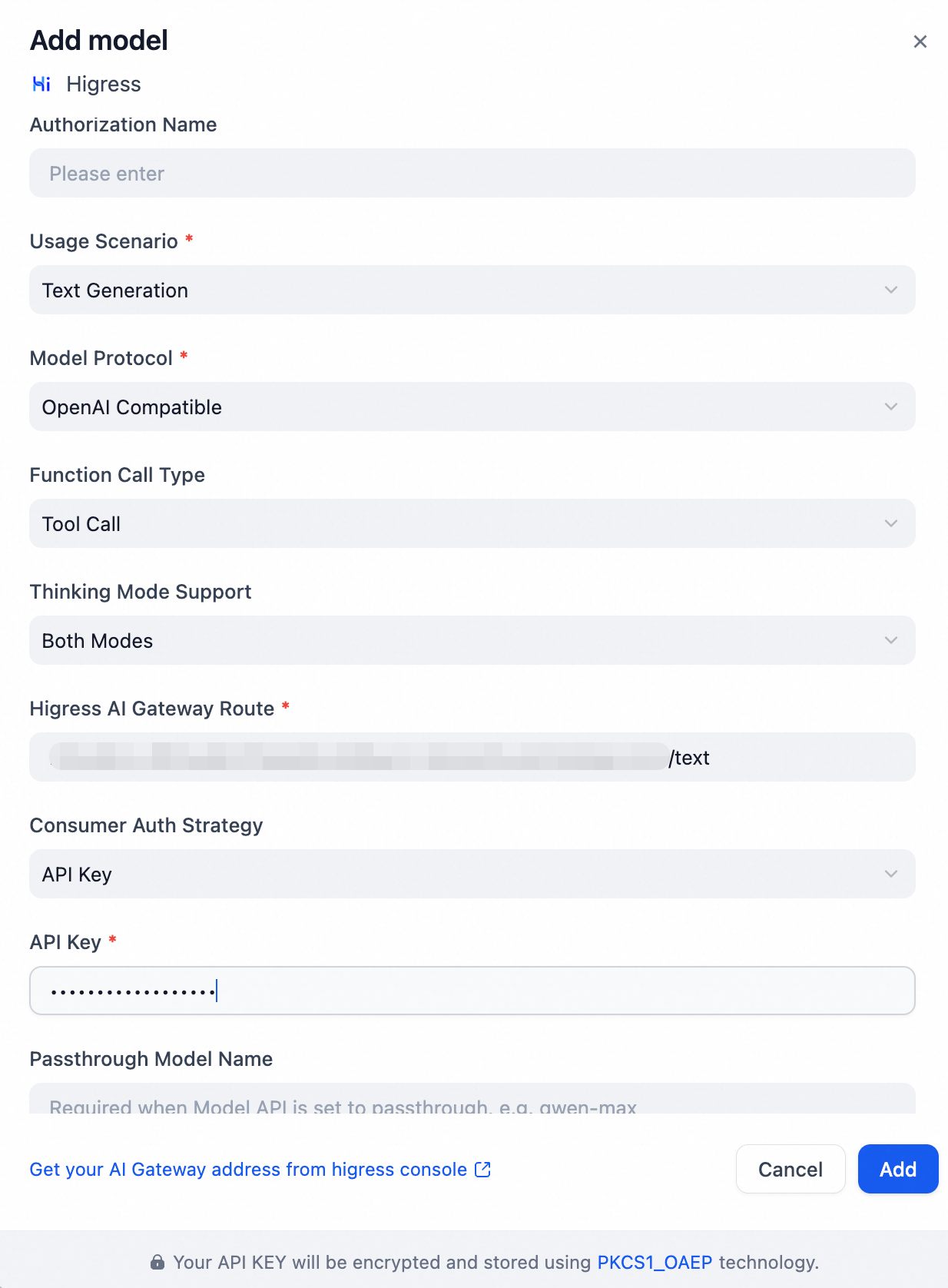

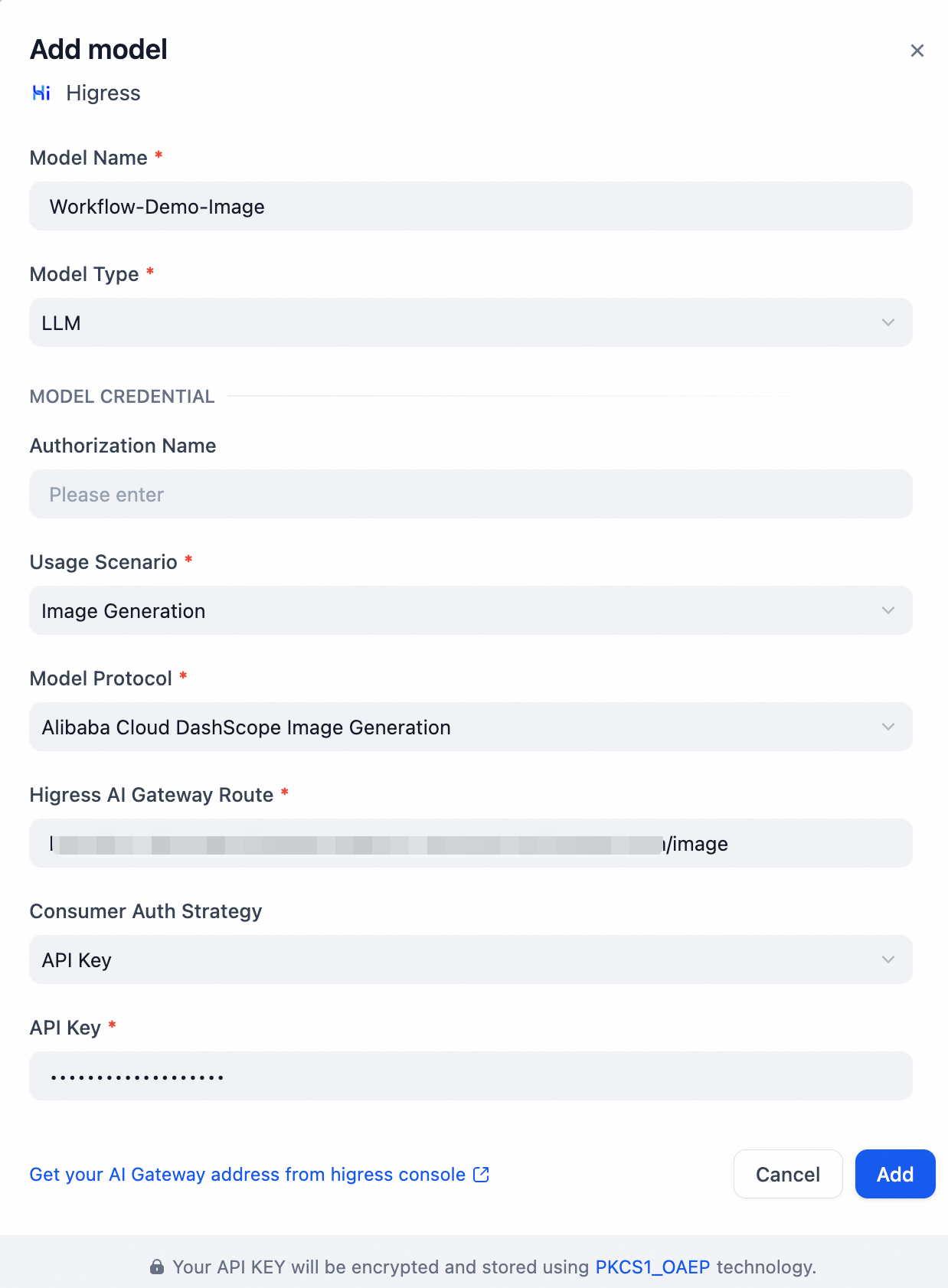
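Once the routes exist, each one is simply an HTTP endpoint on the gateway. The sketch below builds, but does not send, an OpenAI-compatible embeddings request against such a route. The gateway address, the `/v1/embeddings` path on your route, the model name `text-embedding-v3` and the Bearer-style API Key are all assumptions to adapt to your own configuration.

```python
import json
import urllib.request
from typing import List

GATEWAY = "http://my-gateway.example.com"  # hypothetical gateway address


def embedding_request(texts: List[str], model: str, api_key: str, base_url: str = GATEWAY) -> urllib.request.Request:
    """Build an OpenAI-compatible embeddings request aimed at a gateway route."""
    payload = {"model": model, "input": texts}
    return urllib.request.Request(
        url=f"{base_url}/v1/embeddings",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = embedding_request(["New Year promotion"], "text-embedding-v3", "sk-demo")
# urllib.request.urlopen(req) would send it; not called here because the
# gateway address above is a placeholder.
```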

Search for the higress model plugin in the plugin marketplace and install it. After installation, go to the model provider settings and create LLM, Embedding, and Rerank models that point to the three Model APIs created in the Higress AI gateway earlier. The following image shows an example configuration for the LLM model.

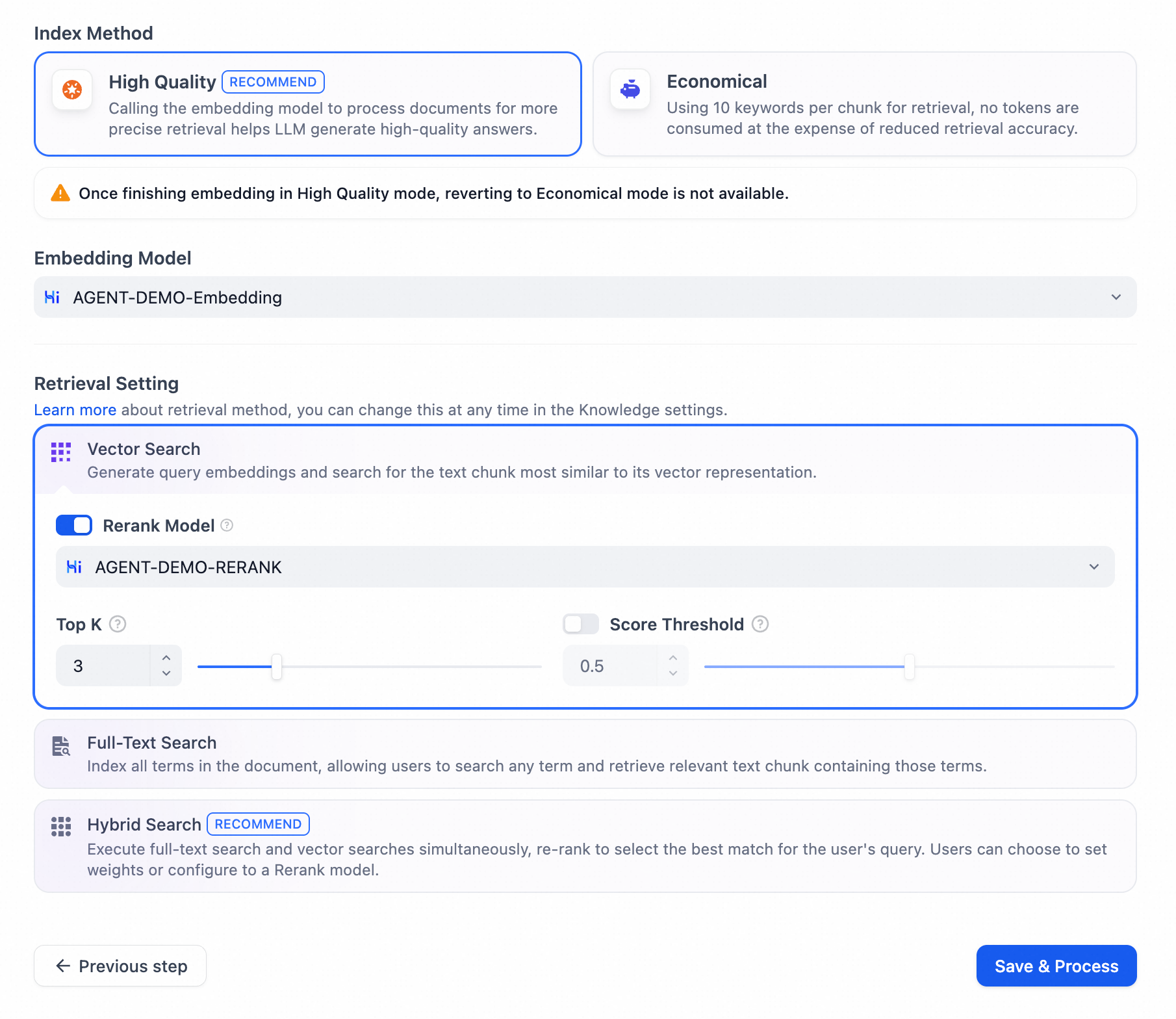

For this demo, we simulate a knowledge base of products promoted during a New Year shopping festival. It records the featured product for each two-hour window, along with the corresponding product descriptions.

When creating the knowledge base, select the Embedding and Rerank models created through the higress plugin in the previous step.
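Dify performs retrieval internally, but the two stages it delegates to these models can be sketched in plain Python: the Embedding model recalls candidate documents by vector similarity, and the Rerank model then reorders those candidates by relevance to the query. In this sketch the vectors are toy values and `overlap_score` is a hypothetical stand-in for the Rerank model's relevance score.

```python
import math
from typing import Callable, List, Sequence, Tuple

Doc = Tuple[str, Sequence[float]]  # (document text, embedding vector)


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def retrieve_then_rerank(
    query: str,
    query_vec: Sequence[float],
    docs: List[Doc],
    rerank_score: Callable[[str, str], float],
    top_k: int = 3,
    top_n: int = 1,
) -> List[str]:
    # Stage 1 (Embedding): keep the top_k documents by cosine similarity.
    candidates = sorted(docs, key=lambda d: cosine(query_vec, d[1]), reverse=True)[:top_k]
    # Stage 2 (Rerank): reorder candidates by query/document relevance.
    reranked = sorted(candidates, key=lambda d: rerank_score(query, d[0]), reverse=True)
    return [text for text, _ in reranked[:top_n]]


def overlap_score(query: str, text: str) -> float:
    # Hypothetical stand-in for a Rerank model: shared-word count.
    return float(len(set(query.lower().split()) & set(text.lower().split())))


docs = [
    ("Red lantern gift box", [0.9, 0.1]),
    ("Spring couplet set", [0.8, 0.3]),
    ("Office chair", [0.1, 0.9]),
]
best = retrieve_then_rerank("couplet set for new year", [1.0, 0.2], docs, overlap_score, top_k=2)
```

Here the embedding stage keeps the lantern and couplet entries (the chair is far away in vector space), and the rerank stage promotes "Spring couplet set" because it matches the query wording. The cheap vector search narrows the field; the more expensive reranker only scores a handful of candidates.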

When creating the Agent, select the LLM model created through the higress plugin, then configure the prompt, the knowledge base created above, and the tools the Agent needs.

In the debugging window, ask the Agent to recommend the New Year products currently on promotion. The Agent uses its tools to determine the current time, retrieves the matching promoted products from the knowledge base, and provides detailed information about them.

Throughout this process, the Agent's LLM calls, as well as the Embedding and Rerank calls made during agentic knowledge retrieval, all go through the higress plugin to the Higress AI gateway.
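The time lookup in this demo is an ordinary tool call. With the OpenAI-compatible protocol, the model returns a `tool_calls` entry whose `arguments` field is a JSON string; the application runs the tool and sends the result back as a `tool` message. Below is a minimal dispatcher, assuming a hypothetical `get_current_time` tool with UTC+8 as its default offset (Dify's built-in time tool may be named and shaped differently).

```python
import json
from datetime import datetime, timedelta, timezone
from typing import Any, Callable, Dict


def get_current_time(utc_offset_hours: int = 8) -> str:
    """Hypothetical tool: current time in the given UTC offset."""
    tz = timezone(timedelta(hours=utc_offset_hours))
    return datetime.now(tz).strftime("%Y-%m-%d %H:%M")


TOOLS: Dict[str, Callable[..., str]] = {"get_current_time": get_current_time}


def run_tool_call(tool_call: Dict[str, Any]) -> Dict[str, str]:
    """Execute one OpenAI-style tool call and build the reply message."""
    fn = tool_call["function"]
    # `arguments` arrives as a JSON-encoded string, possibly empty.
    args = json.loads(fn.get("arguments") or "{}")
    result = TOOLS[fn["name"]](**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": result}


reply = run_tool_call({
    "id": "call_1",
    "type": "function",
    "function": {"name": "get_current_time", "arguments": '{"utc_offset_hours": 0}'},
})
```

The model then receives `reply` in the next request and can use the time to pick the matching two-hour promotion window from the knowledge base.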

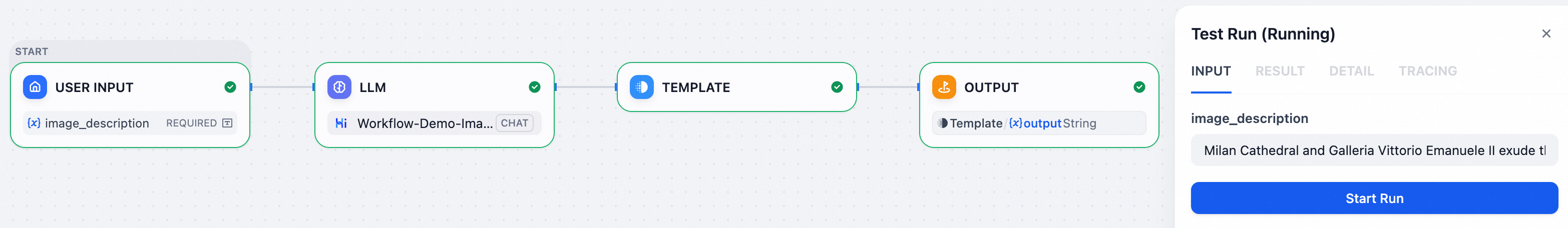

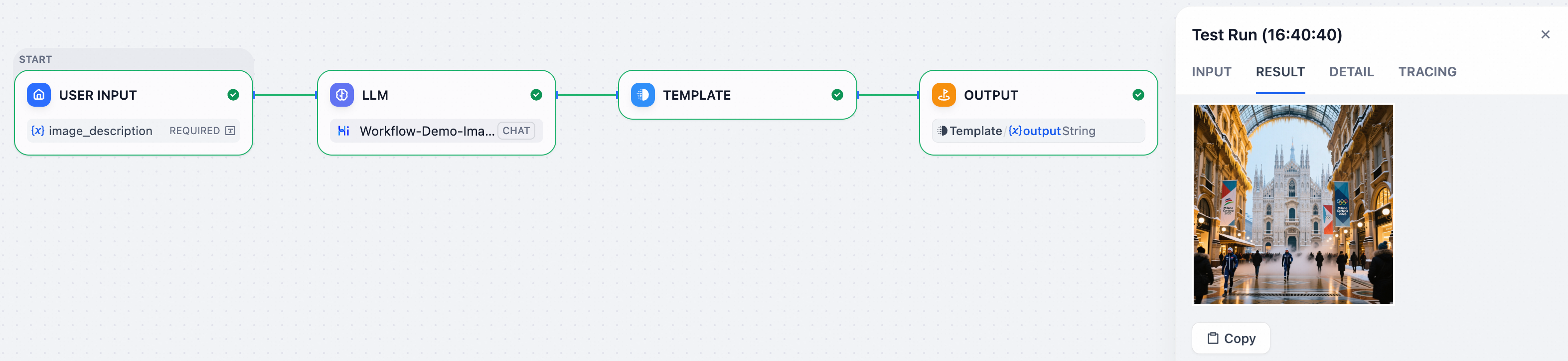

Build a simple image generation workflow in Dify that generates and returns an image matching the user's description.

In this process, the Dify application accesses the image generation model through the Higress plugin and the Higress AI gateway proxy. The construction and operation steps are outlined below.

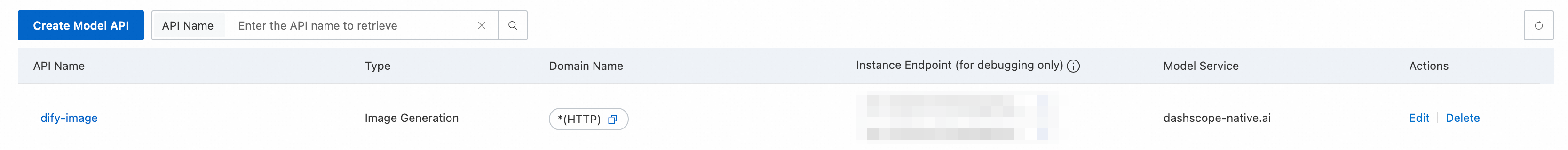

Using Alibaba Cloud Native AI Gateway (the commercial version of Higress) as an example, create an image generation route to access the image generation model, configuring protocols, models, and routing strategies as needed.

The configuration example is shown in the diagram below.

The workflow for this demo is shown in the diagram below. The model node uses the model created in the previous step, and the user's image description serves as the model node's user prompt. The model node returns the generated image URL in its text output, and a template conversion node converts that result into Markdown so that the output node can display the image.
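In Dify the template conversion node is written in Jinja2, but the same transformation in Python shows what the node does: find the image URL in the model node's text and wrap it in Markdown image syntax. This sketch assumes the first http(s) URL in the output is the image link; `to_markdown_image` is a hypothetical helper name.

```python
import re
from typing import Optional

# An http(s) URL up to whitespace, a closing parenthesis, quotes or angle brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\"'<>]+")


def to_markdown_image(model_text: str, alt: str = "generated image") -> Optional[str]:
    """Return the first URL in the model output as a Markdown image, or None."""
    match = URL_PATTERN.search(model_text)
    if match is None:
        return None
    # Drop sentence punctuation that often trails a URL in prose.
    url = match.group(0).rstrip(".,;")
    return f"![{alt}]({url})"
```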

The test input and output of this workflow are shown in the diagram below.

The Higress plugin makes it easy to connect Dify with the Higress AI gateway: after configuring gateway routing and authorization in Dify, users can access text generation, image generation, vector embedding, text ranking, and other model services through the gateway, while reusing the gateway's traffic governance, security authorization, and observability capabilities. The plugin supports both open-source Higress and Alibaba Cloud Native AI Gateway.

The Higress plugin currently covers the mainstream usage scenarios and model protocols. Developers are welcome to search for higress in the Dify plugin marketplace to install and use it. We plan to continue iterating in the following directions:

The plugin code is fully open source at https://github.com/higress-group/higress-dify-plugin. Developers are welcome to report requirements or problems through Issues, and direct contributions to plugin development are also encouraged.
