By Yi Li (Weiyuan)

Kubernetes has become important infrastructure for the next-generation cloud IT architectures of enterprises. However, deploying and maintaining Kubernetes clusters remains complex and challenging. Alibaba Cloud Container Service has evolved along with its customers and the community since its launch in 2015. It currently hosts tens of thousands of Kubernetes clusters for customers all over the world. In this article, I analyze some common problems in cluster planning and provide corresponding solutions.

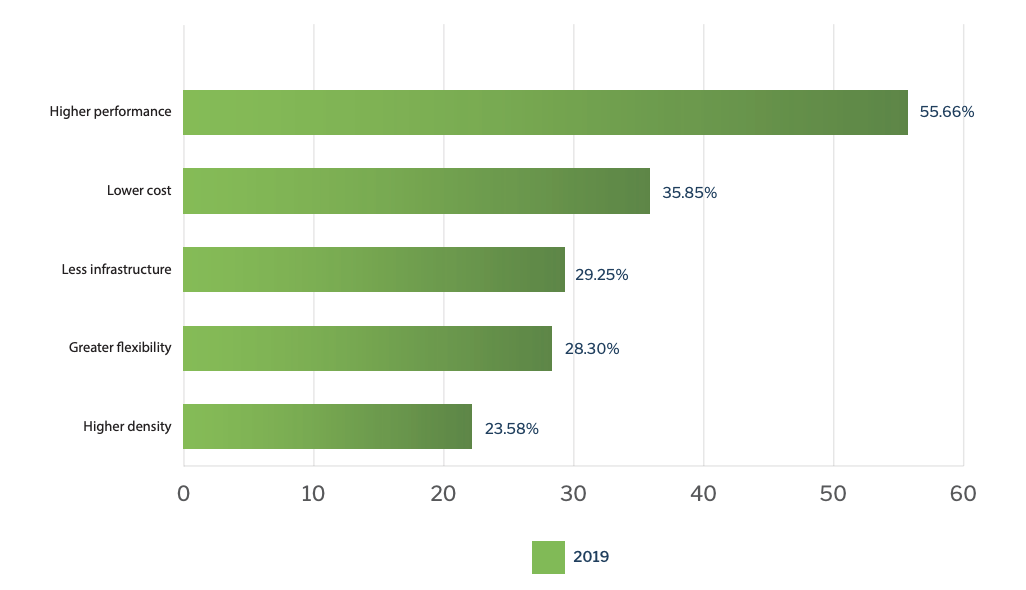

Diamanti's 2019 container survey report analyzed the main reasons why private cloud users select bare metal instances to run containers.

1) The main reason that most users (over 55%) chose bare metal instances was that traditional virtualization technologies result in high I/O loss. For I/O-intensive applications, bare metal instances provide better performance than traditional virtual machines (VMs).

2) In addition, nearly 36% of respondents believed that bare metal instances reduce costs. Most enterprises initially run containers on VMs. However, for large-scale production deployments, users want to run containers on bare metal instances to avoid the licensing costs of virtualization technologies, often referred to as the "VMware tax".

3) Nearly 30% of respondents said they chose bare metal instances to reduce additional resource overhead, such as virtualization management and VM operating systems. Nearly 24% of customers chose bare metal instances because their higher deployment density may reduce infrastructure costs.

4) More than 28% of respondents believed that physical hosts would give them more flexibility when choosing network, storage, and other device and software application environments.

But the critical question is: what should public cloud users choose?

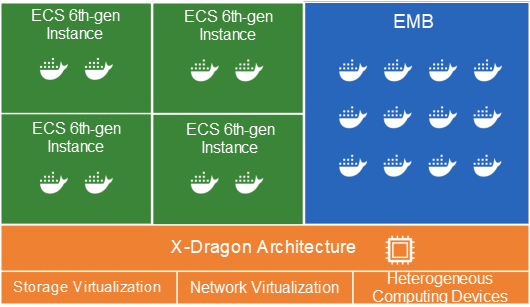

In October 2017, Alibaba Cloud's X-Dragon architecture came into being. ECS Bare Metal Instance (EBM) is an innovative computing product that combines the elasticity of VMs with the performance and characteristics of physical machines. It provides highly stable computing capabilities without any virtualization overhead. In August 2019, Alibaba Cloud released the sixth generation of enterprise-level instances for elastic computing, upgrading their virtualization capabilities based on the X-Dragon architecture.

| ECS instance type | 6th-gen EBM | 6th-gen VM |
|---|---|---|
| CPU | 80 or 104 (currently available specifications) | 2 to 104 |
| CPU-to-memory ratio | 1:1.8 to 1:7.4 | 1:2 to 1:8 |
| Virtualization resource overhead | Zero | Extremely low |
| GPU support | Yes (for some models) | Yes (for some models) |
| Intel SGX support | Yes (for some models) | No |
| Sandboxed container support (such as Kata Containers and Alibaba Cloud sandboxed containers) | Yes | No |
| Support for license-hardware binding | Yes | Yes (DDH support) |

General Recommendations

Alibaba Cloud ACK clusters support multiple node scaling groups (AutoScalingGroups), and different scaling groups can use different instance types. In practice, we divide a Kubernetes cluster into a static resource pool and an elastic resource pool. Generally, the static resource pool can use EBM or VM instances as needed. We recommend that you use VM instances whose specifications match the application load to minimize costs, avoid waste, and improve the supply of elastic capacity.

In addition, EBM instances typically have a large number of CPU cores, and large-capacity instances bring their own challenges. This is an additional concern when selecting instance sizes. The following table compares a few high-specification instances with many low-specification instances.

| A few high-specification instances | Many low-specification instances | Remarks |
|---|---|---|
| Relatively low | Relatively high | |
| Relatively low | Relatively high | |
| Relatively high | Relatively low | |
| Relatively high | Relatively low | After the deployment density increases, more rational resource scheduling is required to ensure the application SLA. |
| Relatively low | Relatively high | As the deployment density increases, node stability decreases. |
| Relatively large | Relatively small | If a large instance fails, more application containers will be affected. You also need to reserve more resources for downtime migration. |
| Relatively low | Relatively high | The number of worker nodes is one of the factors that affect the capacity planning and stability of master nodes. The NodeLease feature introduced in Kubernetes 1.13 greatly reduces the pressure that the number of nodes puts on the master components. |
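The remark about reserving resources for downtime migration can be made concrete with a little arithmetic. The following is a minimal sketch, assuming identical nodes and evenly spread workloads; the function names and the 416-vCPU demand figure are hypothetical and serve only as illustration.

```python
import math


def nodes_needed(total_vcpus: int, vcpus_per_node: int,
                 tolerated_failures: int = 1) -> int:
    """Nodes required to serve the demand and still survive node failures.

    Simplification: every node is the same size and any pod fits anywhere.
    """
    if total_vcpus <= 0 or vcpus_per_node <= 0:
        raise ValueError("vCPU counts must be positive")
    return math.ceil(total_vcpus / vcpus_per_node) + tolerated_failures


def failover_headroom(node_count: int, tolerated_failures: int = 1) -> float:
    """Fraction of cluster capacity that must stay free for failover."""
    if node_count <= tolerated_failures:
        raise ValueError("need more nodes than tolerated failures")
    return tolerated_failures / node_count


if __name__ == "__main__":
    demand = 416  # hypothetical total vCPU demand
    for size in (104, 8):
        n = nodes_needed(demand, size)
        print(f"{size}-vCPU nodes: {n} nodes, "
              f"{failover_headroom(n):.1%} of capacity reserved")
```

Under these assumptions, a handful of large nodes has to keep a far larger share of the cluster idle than many small nodes to survive the same single-node failure.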

By default, the kubelet uses CFS quotas to enforce CPU limits for pods. When many CPU-intensive applications run on a node, workloads may be migrated between CPU cores, and their performance can suffer from lost CPU cache affinity and added scheduling latency. High-specification instance types have a large number of CPUs, and the performance of existing applications, such as Java and Golang applications, can decrease significantly when they share many CPUs. For high-specification instances, configure a CPU management policy and use cpusets to allocate CPUs.
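To illustrate the two mechanisms mentioned here, the sketch below converts a CPU limit into a CFS quota and checks whether a container would be a candidate for exclusive cores under the kubelet's static CPU manager policy. It reflects my understanding of the upstream behavior (100 ms default period, exclusive cores only for Guaranteed pods with whole-CPU requests) and simplifies the QoS check to a single container; the function names are hypothetical.

```python
CFS_PERIOD_US = 100_000  # default CFS period: 100 ms
MIN_QUOTA_US = 1_000     # smallest quota that is applied: 1 ms


def cpu_limit_to_cfs_quota(milli_cpu: int,
                           period_us: int = CFS_PERIOD_US) -> int:
    """CPU time (in microseconds) a container may use per CFS period.

    A limit of 0 means "no limit", so no quota is set.
    """
    if milli_cpu <= 0:
        return 0
    quota = milli_cpu * period_us // 1000
    return max(quota, MIN_QUOTA_US)


def gets_exclusive_cores(cpu_request_milli: int, cpu_limit_milli: int,
                         mem_request: int, mem_limit: int) -> bool:
    """Whether the static CPU policy could pin this container to its own cores.

    Simplification: treats the container as the only one in the pod, so
    requests == limits is taken to mean Guaranteed QoS.
    """
    guaranteed = (cpu_request_milli == cpu_limit_milli > 0
                  and mem_request == mem_limit > 0)
    whole_cpus = cpu_request_milli % 1000 == 0
    return guaranteed and whole_cpus


if __name__ == "__main__":
    print(cpu_limit_to_cfs_quota(500))    # half a CPU per period
    print(gets_exclusive_cores(2000, 2000, 4096, 4096))
```

A container limited to 500m therefore runs for at most half of each period and is throttled for the rest, while a Guaranteed container requesting whole CPUs can be taken out of the shared pool entirely.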

Another important concern is NUMA support. On an EBM or high-specification instance with a NUMA architecture, memory access throughput may be 30% lower than with NUMA-optimized placement. The Kubernetes Topology Manager enables NUMA awareness; see the Kubernetes documentation for details. However, Kubernetes provides only basic support for NUMA and cannot fully utilize its performance advantages.

Alibaba Cloud Container Service provides CGroup controllers to schedule and reschedule NUMA architectures with higher flexibility.

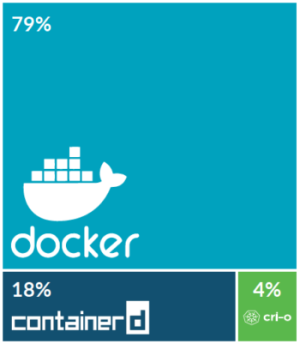

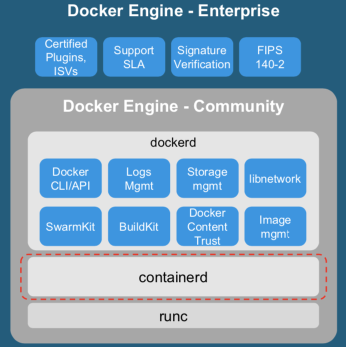

The Sysdig 2019 Container Usage Report shows that Docker accounts for 79% of all container runtimes in use. Containerd is an open source container runtime that Docker contributed to the CNCF community. It holds a modest share of the market but is widely supported by vendors. CRI-O, launched by Red Hat, is a lightweight Kubernetes container runtime that supports the OCI specifications. So far, it is still in the early stage of development.

Many users are concerned about the relationship between Containerd and Docker and whether Containerd can replace Docker. The underlying container lifecycle management of Docker Engine is also implemented based on Containerd. However, Docker Engine includes more developer toolchains, such as for image building. It also provides Docker's built-in logging, storage, network, and Swarm orchestration capabilities. In addition, the vast majority of container ecosystem vendors, including security, monitoring, logging, and development vendors, provide comprehensive support for Docker Engine and are gradually improving their Containerd support. Therefore, in the Kubernetes runtime environment, users who care more about security, efficiency, and customization may choose Containerd as their container runtime environment. For most developers, it is a good choice to continue using Docker Engine as the container runtime environment.

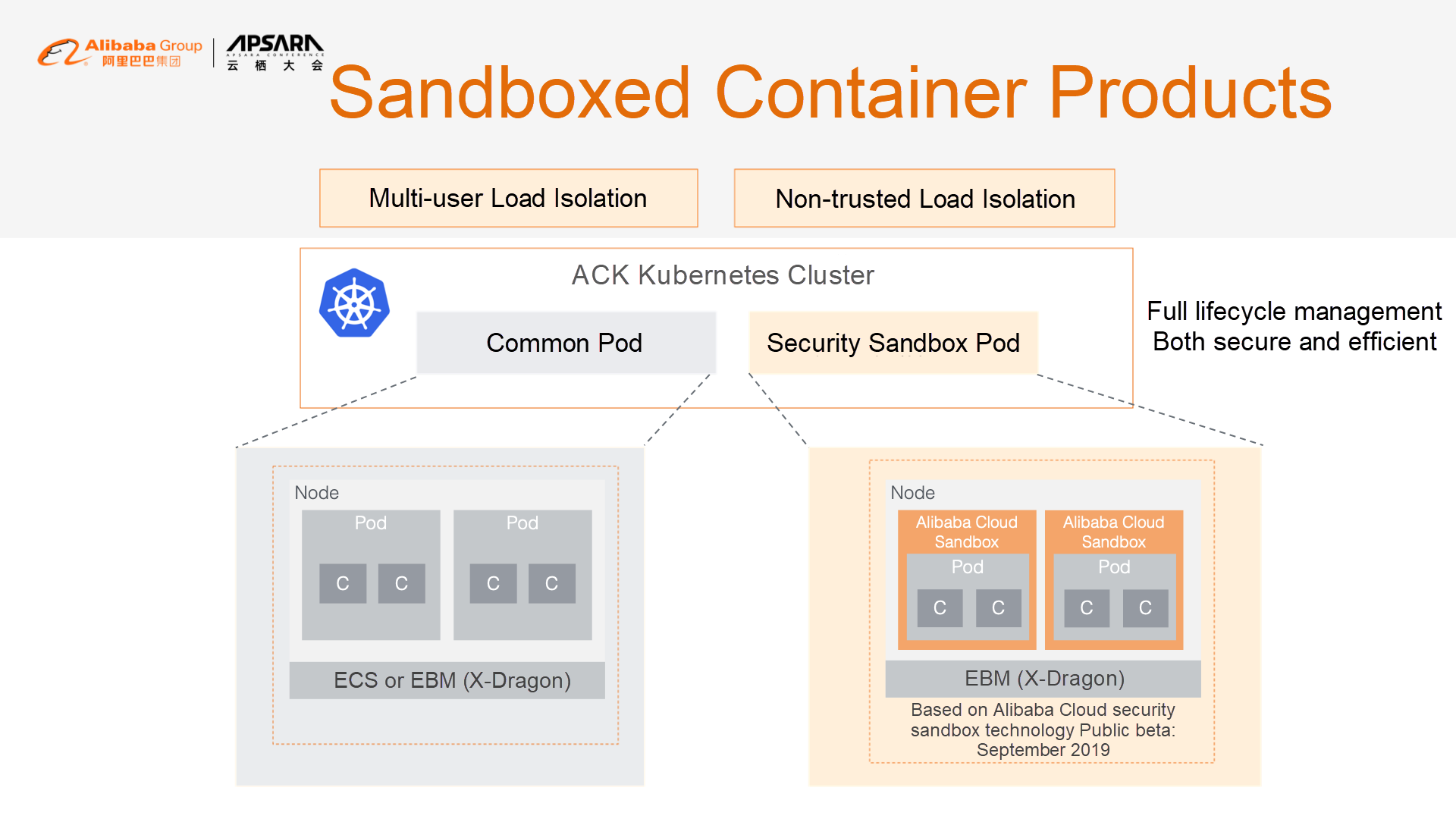

In addition, traditional Docker RunC containers share the kernel with the host's Linux system and isolate resources through CGroups and namespaces. However, as the kernel of the operating system presents a large target to attackers, once a malicious container exploits a kernel vulnerability, it may affect all the containers on the host.

More and more enterprise customers are concerned about container security. To improve security isolation, Alibaba Cloud and the Ant Financial team are working together to introduce sandboxed container technology. In September, we released the RunV security sandbox based on lightweight virtualization technology. Unlike RunC containers, each RunV container has an independent kernel. This means that even if the kernel of one container is compromised, other containers are not affected. These containers are suitable for running untrusted third-party applications and for providing better security isolation in multi-tenant scenarios.

Alibaba Cloud sandboxed containers have been significantly optimized and can achieve 90% of the performance of native RunC containers.

ACK also provides the same user experience for sandboxed containers and RunC containers, including logging, monitoring, and elasticity. In addition, ACK co-deploys RunC and RunV containers on EBM instances, allowing you to choose the containers that are most suitable for your business.

At the same time, sandboxed containers do have some limitations. Many existing tools, such as logging, monitoring, and security tools, do not support security sandboxes with independent kernels. In this case, they need to be deployed as sidecars within the security sandbox.

If you require multi-tenant isolation, use a security sandbox with network policies. Alternatively, run the applications of different tenants on different VMs or elastic container instances and use virtualization technology for isolation.
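As a rough illustration of combining a security sandbox with network policies, the sketch below builds two Kubernetes manifests as plain Python dictionaries: a pod that selects a sandboxed runtime through `runtimeClassName`, and a default-deny ingress `NetworkPolicy` for the tenant namespace. The runtime class name `runv`, the helper names, and the example image and namespace are assumptions for illustration; check which RuntimeClass your cluster actually exposes.

```python
import json


def sandboxed_pod(name: str, namespace: str, image: str,
                  runtime_class: str = "runv") -> dict:
    """Pod manifest that asks for a sandboxed runtime.

    `runtime_class` is an assumed RuntimeClass name, not a verified default.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "runtimeClassName": runtime_class,
            "containers": [{"name": name, "image": image}],
        },
    }


def default_deny_ingress(namespace: str) -> dict:
    """NetworkPolicy that blocks all ingress to every pod in the namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector matches all pods
            "policyTypes": ["Ingress"],
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, so the output can be applied as is.
    manifests = [
        sandboxed_pod("tenant-app", "tenant-a", "nginx:stable"),
        default_deny_ingress("tenant-a"),
    ]
    print(json.dumps(manifests, indent=2))
```

Starting from default deny and then adding explicit allow rules per tenant keeps the network side of the isolation as strict as the kernel side.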

Note: Currently, security sandboxes run only on bare metal instances, so the cost is high when container applications require fewer resources. For more information, see the relevant Serverless Kubernetes section.

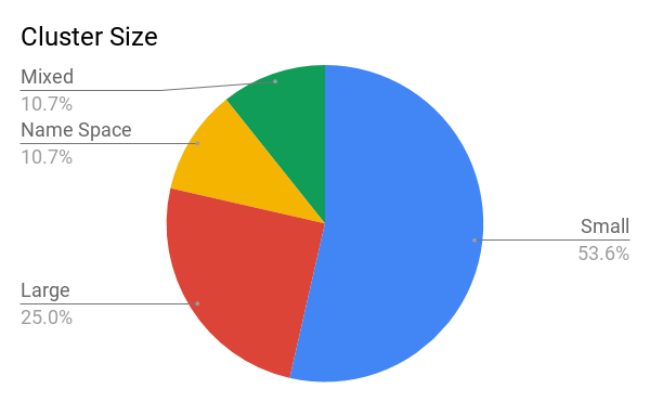

In terms of production practices, companies often wonder if they should use one or multiple Kubernetes clusters.

On Twitter, Rob Hirschfeld conducted a survey, asking whether people preferred a single large cluster or multiple small clusters:

https://thenewstack.io/the-optimal-kubernetes-cluster-size-lets-look-at-the-data/

Most users chose the second option. This solution is typically applied in the following scenarios:

Based on the user feedback, the main reason for using multiple small clusters was that the impact scope of a failure is relatively small, efficiently improving the availability of the overall system. At the same time, different clusters isolate resources better. The increased complexity of management and O&M is the disadvantage of using multiple small clusters. However, in a public cloud, managed Kubernetes services (such as Alibaba Cloud ACK) provide an easy way to create Kubernetes clusters and manage their lifecycles, effectively solving this problem.

Let's compare these two options.

| | Single large cluster | Multiple application-centric clusters |
|---|---|---|
| Failure impact scope | Large | Small |
| Hard multi-tenancy (strong security and resource isolation) | Complex | Simple |
| Hybrid scheduling of various resources (such as GPUs) | Complex | Simple |
| Cluster management complexity | Low | High (self-built)/Low (with a managed Kubernetes service) |
| Cluster lifecycle flexibility | Difficult | Simple (different clusters can have different versions and scaling policies) |
| Complexity of large-scale introduction | Complex | Simple |

Drawing on Google Borg, Kubernetes seeks to become a data center operating system. Kubernetes also provides RBAC, namespace, and other management capabilities, allowing multiple users to share a cluster and imposing resource limits. However, these features only provide soft multi-tenancy and cannot achieve strong isolation between tenants. Among the best practices of multi-tenancy, we recommend the following:

Currently, Kubernetes' support for hard isolation has many limitations. At the same time, the community is actively exploring new ways to improve support, such as the Virtual Cluster Proposal of the Alibaba container team. However, these technologies are not yet mature.

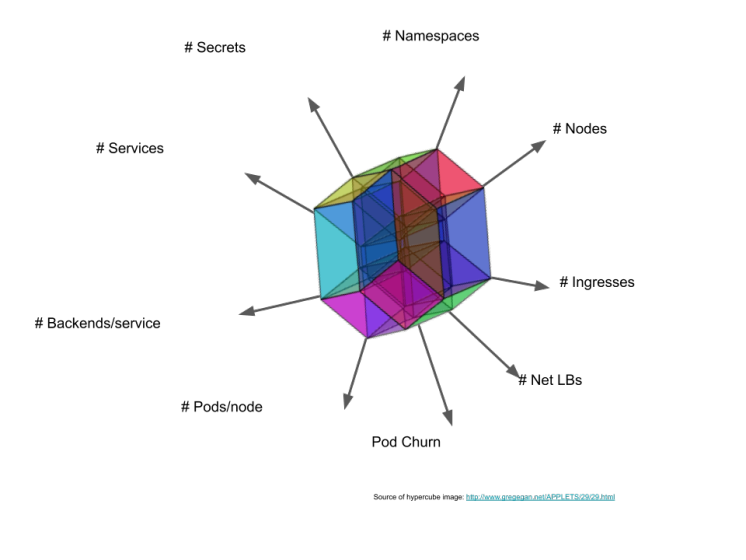

Another factor to consider is the scalability of Kubernetes itself. To ensure stability, the scale of a Kubernetes cluster is limited along multiple dimensions. Generally, a Kubernetes cluster cannot have more than 5,000 nodes. Clusters that run on Alibaba Cloud are also limited by cloud product quotas. The Alibaba economy has extensive experience in using Kubernetes on a large scale, but for the vast majority of users, the complex O&M and customization necessary for super-large clusters are impractical.
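A simple way to sanity-check a cluster plan against these dimensions is sketched below. The thresholds are the ones I recall from the upstream Kubernetes guidance for large clusters (5,000 nodes, 150,000 pods, 300,000 containers, 110 pods per node); they are not Alibaba Cloud quotas, and the `ClusterPlan` type and function name are hypothetical.

```python
from dataclasses import dataclass
from typing import List

# Upstream scalability thresholds as recalled from the Kubernetes docs;
# verify them against the version you run.
UPSTREAM_LIMITS = {
    "nodes": 5_000,
    "pods": 150_000,
    "containers": 300_000,
    "pods_per_node": 110,
}


@dataclass
class ClusterPlan:
    nodes: int
    pods: int
    containers_per_pod: float = 1.0  # average, including sidecars


def exceeded_limits(plan: ClusterPlan) -> List[str]:
    """Names of the dimensions in which the plan exceeds upstream limits."""
    if plan.nodes <= 0:
        raise ValueError("a cluster plan needs at least one node")
    actual = {
        "nodes": plan.nodes,
        "pods": plan.pods,
        "containers": plan.pods * plan.containers_per_pod,
        "pods_per_node": plan.pods / plan.nodes,
    }
    return [name for name, limit in UPSTREAM_LIMITS.items()
            if actual[name] > limit]


if __name__ == "__main__":
    print(exceeded_limits(ClusterPlan(nodes=100, pods=12_000)))
```

A plan can stay far below the node limit and still be too dense per node, which is why the check looks at every dimension rather than only the node count.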

We recommend that public cloud users select an appropriate cluster size based on their business scenarios.

For centrally managing the applications of multiple clusters, consider the following:

All the relevant surveys show that the complexity of Kubernetes and the lack of relevant skills are the main reasons that enterprises do not adopt Kubernetes. Operating a Kubernetes production cluster in an IDC remains a very challenging task. Alibaba Cloud's Kubernetes service simplifies Kubernetes cluster lifecycle management and hosts clusters' master nodes. However, you still need to maintain worker nodes, including security patching, and plan the capacity based on your usage needs.

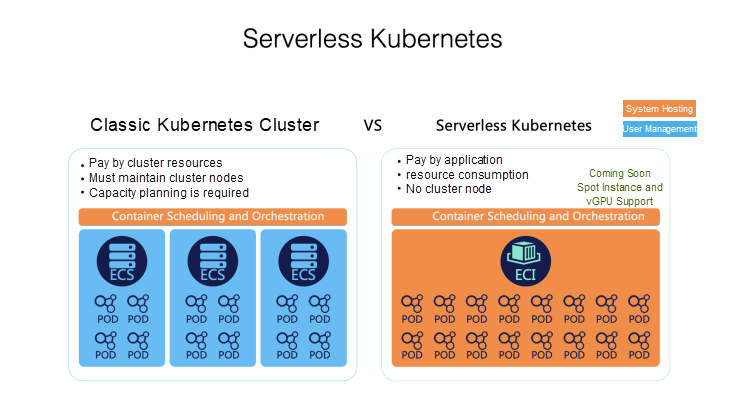

To address the complex O&M of Kubernetes clusters, Alibaba Cloud launched Container Service for Serverless Kubernetes (ASK).

This service is fully compatible with existing Kubernetes container applications, but all the container infrastructure is hosted by Alibaba Cloud, allowing you to focus on your own applications. ASK provides several key features:

In ASK, applications run on Elastic Container Instances (ECIs), which implement application security isolation based on sandbox environments provided by lightweight VMs. This environment is fully compatible with Kubernetes pod semantics. In ASK, we push the complexity of Kubernetes down into the infrastructure, greatly reducing the O&M burden. This improves the user experience, makes Kubernetes simpler, and allows developers to focus more on the applications themselves. In addition to managing nodes and master components, we implement DNS, Ingress, and other capabilities through the native capabilities of Alibaba Cloud products, providing a simple but fully functional Kubernetes application runtime environment.

Serverless Kubernetes greatly reduces management complexity, and its design copes well with applications that are prone to load bursts, such as CI/CD and batch computing. For example, a typical online education customer may deploy teaching applications on demand based on teaching needs and release resources automatically after courses are completed. As a result, total computing costs are only a third of the cost of using monthly subscription nodes.

At the orchestration and scheduling layer, we use the Virtual-Kubelet implementation developed by the CNCF community and have made in-depth extensions to it. Virtual-Kubelet provides an abstract controller model to simulate a virtual Kubernetes node. When a pod is scheduled to a virtual node, the controller uses the ECI service to create an ECI to run the pod.

Add virtual nodes to an ACK Kubernetes cluster to seamlessly control whether applications are deployed on common or virtual nodes. Note that ASK and ECI pods are nodeless, so they do not support many of the node-related capabilities of Kubernetes. For example, they do not support the NodePort concept. In addition, log and monitoring components are often deployed on Kubernetes nodes as DaemonSets, so they must be converted to sidecars in ASK and ECI.
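The sketch below shows one way this placement decision could look in practice. It patches a pod manifest so that it targets a virtual node, and flags manifests that rely on node-level features. The node label `type: virtual-kubelet` and the taint key `virtual-kubelet.io/provider` follow the common Virtual Kubelet convention as I understand it; treat both, along with the provider value and helper names, as assumptions to verify against your cluster.

```python
import copy

VIRTUAL_NODE_LABEL = {"type": "virtual-kubelet"}    # assumed node label
PROVIDER_TAINT_KEY = "virtual-kubelet.io/provider"  # assumed taint key


def schedule_on_virtual_node(pod: dict,
                             provider: str = "alibabacloud") -> dict:
    """Return a copy of the pod manifest that targets a virtual node."""
    patched = copy.deepcopy(pod)
    spec = patched.setdefault("spec", {})
    spec.setdefault("nodeSelector", {}).update(VIRTUAL_NODE_LABEL)
    spec.setdefault("tolerations", []).append({
        "key": PROVIDER_TAINT_KEY,
        "operator": "Equal",
        "value": provider,
        "effect": "NoSchedule",
    })
    return patched


def depends_on_real_nodes(manifest: dict) -> bool:
    """True for objects that assume real nodes (DaemonSets, NodePort)."""
    kind = manifest.get("kind")
    if kind == "DaemonSet":
        return True
    if kind == "Service":
        return manifest.get("spec", {}).get("type") == "NodePort"
    return False


if __name__ == "__main__":
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "batch-job"},
        "spec": {"containers": [{"name": "job", "image": "busybox"}]},
    }
    print(schedule_on_virtual_node(pod)["spec"]["nodeSelector"])
```

Leaving the selector and toleration off keeps a workload on common nodes, so the same manifest can be steered either way without changing the application itself.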

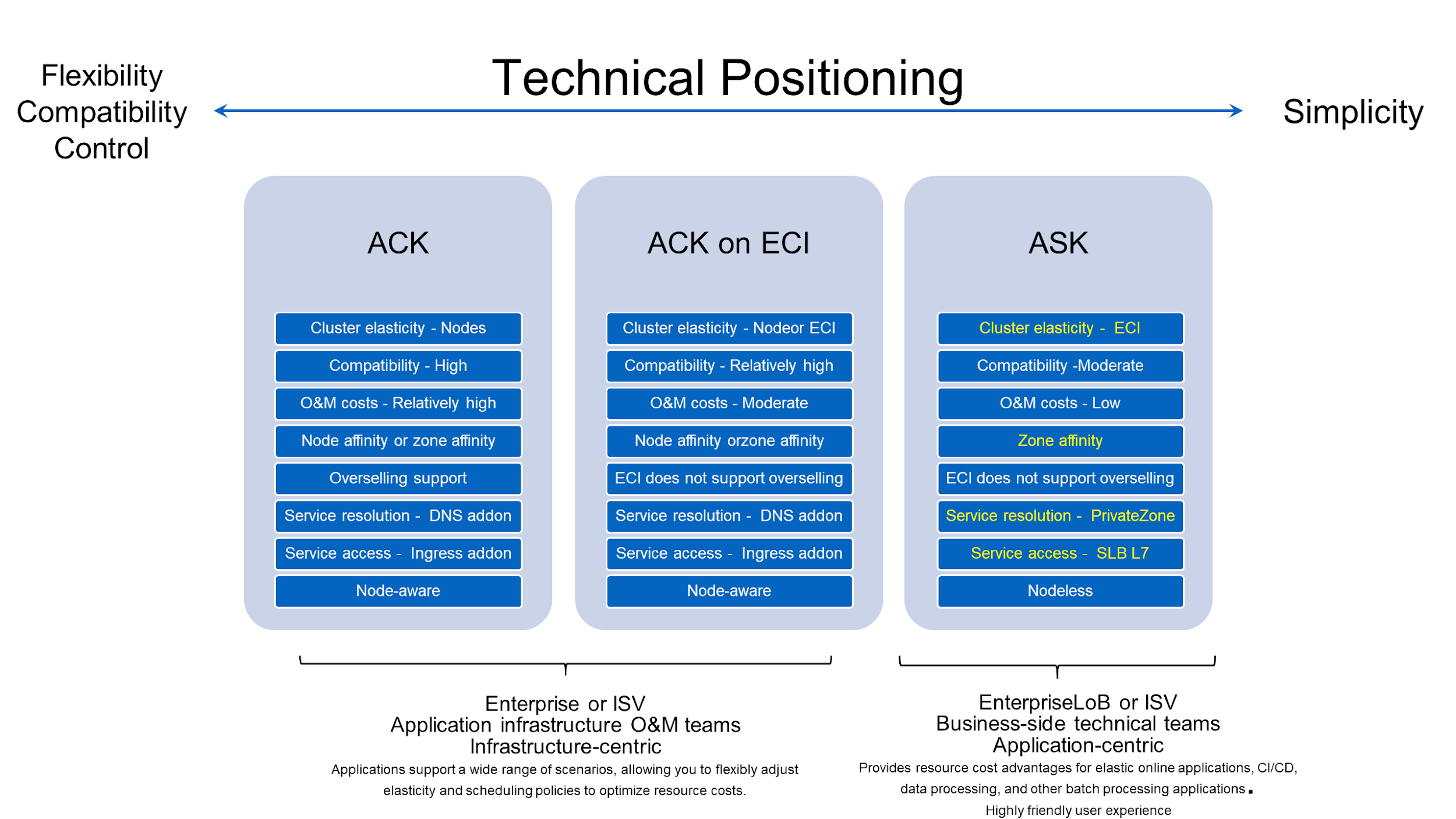

It's important to understand how to decide between ACK and ASK. ACK is designed for infrastructure O&M teams and features high Kubernetes compatibility and flexible control. ASK is intended for business-side technical teams or developers. Users can manage and deploy Kubernetes applications without the need for Kubernetes management and O&M capabilities. ACK on ECI allows user loads to run on both ECS instances and ECIs, giving users flexible control over their workloads.

ACK on ECI and ASK can run elastic loads through ECI. This approach is suitable for several typical scenarios:

ACK on ECI also works with serverless application frameworks, such as Knative, to allow the development of serverless applications.

By reasonably planning Kubernetes clusters, we can effectively avoid stability issues in subsequent O&M and reduce usage costs. I look forward to discussing my practical experiences in using Kubernetes on Alibaba Cloud with you all.
