By Jeremy Pedersen

Welcome back for the 17th installment in our weekly blog series! Once again we're taking a look at your questions from past online events and training sessions. Let's jump in! We'll be talking about Kubernetes a lot this week, so be aware that I often refer to it as "K8s", for short.

Actually, it's not as complicated as it looks!

"ASK" is a type of Kubernetes cluster offered by the ACK service. As you can see on the ACK product page, there are three types of Kubernetes clusters on offer: Dedicated Kubernetes, Managed Kubernetes, and Serverless Kubernetes (ASK).

So what's the difference?

With Dedicated Kubernetes, you need to set up both the Master and Worker nodes yourself (though we install K8s for you). This gives you the highest level of control over your K8s cluster: you can directly SSH into the Master or Workers, install customizations, make operating system level changes, and more. You can even run the Master and Workers on EBM (Elastic Bare Metal) ECS instances, which do not have a virtualization layer, meaning you can run your containers directly on the host operating system for an extra speed boost. Drawbacks: You are responsible for ops & maintenance for both the Master and Worker nodes. Also, you need to do your own capacity planning to make sure you have enough Workers to handle your workload!

With Managed Kubernetes, the Master node is managed by Alibaba Cloud. This means you don't need to pay for a Master node ECS instance to be running 24/7 like you do with Dedicated Kubernetes, and it also saves some of the ops & maintenance hassles associated with maintaining the Master node. However, you still need to set up your own Worker nodes on ECS, so again you are responsible for making sure those Workers are functional, and you have to do your own capacity planning to make sure you don't run out of places to schedule Pods!

Then, there's Serverless Kubernetes, or as I like to call it: "hands-free Kubernetes". There are no nodes of any kind for you to manage! The Master is managed by Alibaba Cloud, and the "Worker" node is a so-called "Virtual Node" that scales up and down seamlessly by simply scheduling your Pods to run on Alibaba Cloud's ECI service. There is nothing to manage and no scaling to worry about: you just create K8s objects and pass them to the Serverless K8s cluster, then it does the rest!

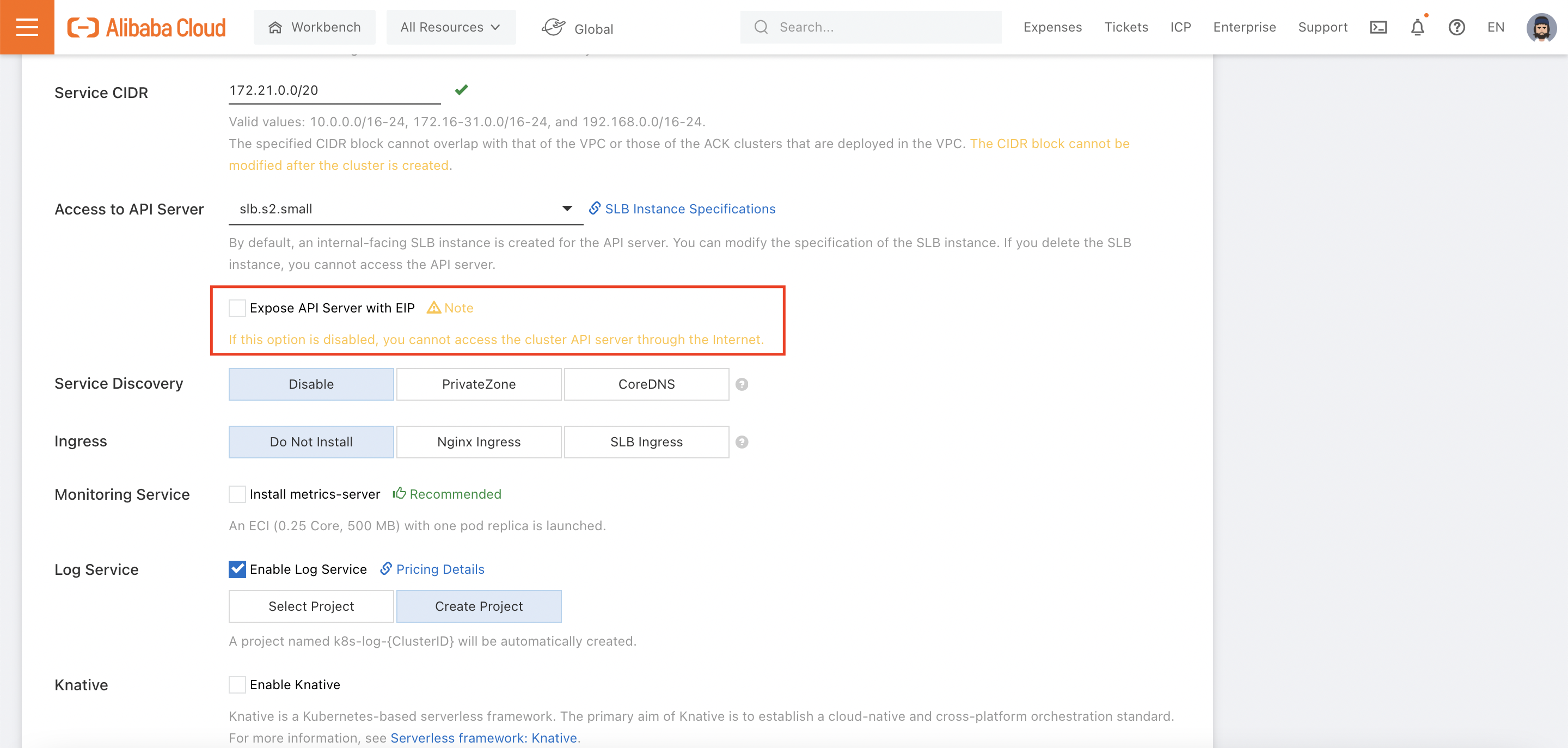

Good question! When you are setting up the cluster, look for this option:

If you do not check this box, you won't be able to use kubectl to connect to your cluster over the Internet (though you could still connect from within the Alibaba Cloud VPC hosting the cluster).

If the ECS instances acting as your Worker nodes don't have public IP addresses, you'll have to give them access to the Internet via the following 3-step process:

Instructions are here.

Yes. You can mount an OSS volume as a statically provisioned volume or by creating a PV and PVC(s). See this document for details.
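To make the PV and PVC route a little more concrete, here is a rough sketch that writes a PV/PVC pair to a local file. Treat all of it as an assumption to check against the linked document: the names (pv-oss, pvc-oss, oss-secret, my-bucket), the endpoint, and the sizes are placeholders I made up, and the CSI driver name and volumeAttributes keys are what I recall from Alibaba Cloud's CSI plugin, so they may differ for your cluster type or version.

```shell
#!/bin/sh
# Sketch only: write a hypothetical PV + PVC pair for an OSS bucket to a file.
# Placeholders (not from the original post): pv-oss, pvc-oss, oss-secret,
# my-bucket, the endpoint URL, and the 5Gi size.
# Assumption: the cluster uses the Alibaba Cloud CSI OSS plugin and a Secret
# named oss-secret already holds the AccessKey pair.
cat > oss-volume.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss
  labels:
    alicloud-pvname: pv-oss
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com   # assumed driver name
    volumeHandle: pv-oss
    nodePublishSecretRef:
      name: oss-secret
      namespace: default
    volumeAttributes:
      bucket: "my-bucket"
      url: "oss-cn-hangzhou.aliyuncs.com"
      path: "/"
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-oss
spec:
  storageClassName: ""        # bind to the static PV, not a default StorageClass
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 5Gi
  selector:
    matchLabels:
      alicloud-pvname: pv-oss
EOF

echo "Wrote oss-volume.yaml; review it, then run: kubectl apply -f oss-volume.yaml"
```

A Pod would then reference pvc-oss under spec.volumes with a persistentVolumeClaim entry, the same way it would for any other PVC.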

There could be multiple causes, but I'm going to assume you're seeing an error message like this in your ECI or ACK container logs:

    standard_init_linux.go:228: exec user process caused: exec format error

What this means is that your container won't start, because it was built for a different processor architecture than ACK or ECI use.

So what happened? In my case, I ran docker build on a new M1 MacBook Pro. This Mac uses Apple's new M1 processor, which is ARM-based, so by default docker build produces images for ARM rather than for AMD or Intel (x86-64) processors. Both ACK and ECI run on servers with Intel processors in them, and an image built for ARM can't run there. So what can we do?

Luckily, Docker includes a buildx command that will let you build Docker images for different processor architectures, regardless of what hardware you've got installed locally. That means on my M1 MacBook, I can build a Docker image for an Intel or AMD processor using this command:

    docker buildx build --platform linux/amd64 -t mynewimage .

Of course, an even easier workaround would be to use Alibaba Cloud Container Registry's built-in build service. Just upload your Dockerfile and source code to GitHub, Bitbucket, or GitLab, and let Alibaba Cloud Container Registry pull the code from there (via webhook) and build your image for you.
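If you do stick with local builds, a small pre-build check can catch the architecture mismatch before you push anything. The sketch below is only an illustration: platform_for_arch is a helper name I made up, and the linux/amd64 target is an assumption based on the Intel servers mentioned above.

```shell
#!/bin/sh
# Map a machine name (as printed by `uname -m`) to a Docker platform string.
# platform_for_arch is a hypothetical helper, not part of Docker.
platform_for_arch() {
  case "$1" in
    x86_64|amd64)  echo "linux/amd64" ;;
    arm64|aarch64) echo "linux/arm64" ;;
    *)             echo "unknown" ;;
  esac
}

# Assumption: the cluster runs on x86-64 hosts, so images must target linux/amd64.
TARGET_PLATFORM="linux/amd64"
LOCAL_PLATFORM="$(platform_for_arch "$(uname -m)")"

if [ "$LOCAL_PLATFORM" != "$TARGET_PLATFORM" ]; then
  echo "Local platform is $LOCAL_PLATFORM; build with:"
  echo "  docker buildx build --platform $TARGET_PLATFORM -t mynewimage ."
else
  echo "Local platform matches $TARGET_PLATFORM; a plain docker build should work."
fi
```

After building, you can double-check the result with docker image inspect --format '{{.Os}}/{{.Architecture}}' mynewimage, which prints the operating system and architecture the image was built for.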


Yes! Despite not having any fixed Worker nodes, Serverless K8s clusters can still use GPU hardware (say, to run a TensorFlow job).

Since Alibaba Cloud ASK clusters don't have fixed Worker nodes (they rely on the ECI service instead), you need to set an annotation when you create your Pods to indicate what type of GPU your Pod needs access to. The scheduler will then make sure your Pod runs on an ECI instance that has the appropriate GPU hardware.

You can follow along with this document to see how this is done. It even includes an example (which I reproduce here) of what your Pod's YAML file should look like. This code will schedule the Pod on a host that has a P4 GPU available:

    apiVersion: v1
    kind: Pod
    metadata:
      name: tensorflow
      annotations:
        k8s.aliyun.com/eci-gpu-type: "P4"
    spec:
      containers:
      - image: registry-vpc.cn-hangzhou.aliyuncs.com/ack-serverless/tensorflow
        name: tensorflow
        command:
        - "sh"
        - "-c"
        - "python models/tutorials/image/imagenet/classify_image.py"
        resources:
          limits:
            nvidia.com/gpu: "1"
      restartPolicy: OnFailure

Great! Reach out to me at jierui.pjr@alibabacloud.com and I'll do my best to answer in a future Friday Q&A blog.

You can also follow the Alibaba Cloud Academy LinkedIn Page. We'll re-post these blogs there each Friday.
