In a Kubernetes environment, the Sidecar mode is ideal for log collection scenarios that require fine-grained control over application logs, multi-tenant isolation, or a tight coupling with the application lifecycle. This mode injects a LoongCollector (Logtail) container into an application pod to provide dedicated log collection for that pod, offering robust flexibility and isolation.

How it works

In sidecar mode, an application container and a LoongCollector (Logtail) log collection container run side-by-side within your application pod. They work together using shared volumes and lifecycle synchronization mechanisms.

Log sharing: The application container writes its log files to a shared volume, typically an emptyDir. The LoongCollector (Logtail) container mounts the same volume to read and collect these log files in real time.

Configuration association: Each LoongCollector (Logtail) sidecar container declares its identity by setting a custom identifier (the ALIYUN_LOGTAIL_USER_DEFINED_ID environment variable). In the Simple Log Service (SLS) console, you create a machine group that uses the same identifier. All sidecar instances with that identifier then automatically apply the collection configurations bound to that machine group.

Lifecycle synchronization: To prevent log loss when a pod terminates, the application container and the LoongCollector (Logtail) container communicate through two signal files, cornerstone and tombstone, in a shared volume. LoongCollector creates cornerstone when it is ready, and the application waits for this file before it starts its work. When the application stops, it creates tombstone; LoongCollector then finishes sending all remaining logs and exits. This mechanism works together with the pod's graceful termination period (terminationGracePeriodSeconds), which must be long enough for the final upload to complete.
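The ordering of the handshake can be tried locally without a cluster. The following bash sketch is an illustration only: a temporary directory stands in for the /tasksite volume, both "containers" are background shell functions, polling is shortened to 0.1 seconds, and the drain step is a placeholder echo rather than a real upload.

```bash
#!/usr/bin/env bash
# Local simulation of the cornerstone/tombstone handshake (illustration only).
# A temporary directory stands in for the shared /tasksite emptyDir volume.
tasksite=$(mktemp -d)
events="$tasksite/events.log"   # both "containers" append their steps here

collector() {
  # Sidecar: announce readiness, then block until the application is done.
  echo "collector: ready" >> "$events"
  touch "$tasksite/cornerstone"
  until [[ -f "$tasksite/tombstone" ]]; do sleep 0.1; done
  echo "collector: draining remaining logs" >> "$events"   # stands in for "sleep 30"
  echo "collector: stopped" >> "$events"
}

application() {
  # Application: do not start the business logic before the collector is ready.
  until [[ -f "$tasksite/cornerstone" ]]; do sleep 0.1; done
  echo "app: writing logs" >> "$events"
  echo "app: done" >> "$events"
  touch "$tasksite/tombstone"
}

application &    # started first on purpose: it has to wait for the cornerstone
app_pid=$!
sleep 0.3
collector &
collector_pid=$!
wait "$app_pid" "$collector_pid"

order=$(cat "$events")
rm -rf "$tasksite"
echo "$order"
```

Whichever container starts first, the printed order is always the same, because each side only proceeds after the other side's file exists.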

Prerequisites

To collect logs, you must first create a Project and a Logstore for log management and storage. If you already have these resources, you can skip this step and proceed to Step 1: Inject the LoongCollector sidecar container.

Project: A resource management unit in Simple Log Service that isolates and manages logs for different projects or services.

Logstore: A unit for storing logs.

Create a Project

Create a Logstore

Step 1: Inject the LoongCollector sidecar container

Inject a LoongCollector sidecar container into your application Pod and configure a shared volume for log collection. If you have not deployed your application or are just testing, you can use the Appendix: YAML example to quickly verify the deployment.

1. Modify the Pod YAML configuration

Define shared volumes

In spec.template.spec.volumes, add three shared volumes at the same level as containers:

    volumes:
      # Shared log directory (written by the application container, read by the sidecar)
      - name: ${shared_volume_name}   # <-- The name must match the name in volumeMounts.
        emptyDir: {}
      # Signal directory for inter-container communication (for graceful startup and shutdown)
      - name: tasksite
        emptyDir:
          medium: Memory              # Use memory for better performance.
          sizeLimit: "50Mi"
      # Shared host time zone configuration: synchronizes the time zone for all containers in the Pod.
      - name: tz-config               # <-- The name must match the name in volumeMounts.
        hostPath:
          path: /usr/share/zoneinfo/Asia/Shanghai   # Modify the time zone as needed.

Configure volume mounts for the application container

In the volumeMounts section of your application container, such as your-business-app-container, add the following volume mounts. Ensure that your application container writes logs to the ${shared_volume_path} directory so that LoongCollector can collect them.

    volumeMounts:
      # Mount the shared log volume to the application log output directory.
      - name: ${shared_volume_name}
        mountPath: ${shared_volume_path}   # Example: /var/log/app
      # Mount the communication directory shared with the LoongCollector container.
      - name: tasksite
        mountPath: /tasksite
      # Mount the time zone file.
      - name: tz-config
        mountPath: /etc/localtime
        readOnly: true

Inject the LoongCollector sidecar container

In the spec.template.spec.containers array, append the following sidecar container definition:

    - name: loongcollector
      image: aliyun-observability-release-registry.cn-shenzhen.cr.aliyuncs.com/loongcollector/loongcollector:v3.1.1.0-20fa5eb-aliyun
      command: ["/bin/bash", "-c"]
      args:
        - |
          echo "[$(date)] LoongCollector: Starting initialization"
          # Start the LoongCollector service.
          /etc/init.d/loongcollectord start
          # Wait for the configuration to be downloaded and the service to be ready.
          sleep 15
          # Verify the service status.
          if /etc/init.d/loongcollectord status; then
            echo "[$(date)] LoongCollector: Service started successfully"
            touch /tasksite/cornerstone
          else
            echo "[$(date)] LoongCollector: Failed to start service"
            exit 1
          fi
          # Wait for the application container to complete (signaled by the tombstone file).
          echo "[$(date)] LoongCollector: Waiting for business container to complete"
          until [[ -f /tasksite/tombstone ]]; do
            sleep 2
          done
          # Allow time to upload remaining logs.
          echo "[$(date)] LoongCollector: Business completed, waiting for log transmission"
          sleep 30
          # Stop the service.
          echo "[$(date)] LoongCollector: Stopping service"
          /etc/init.d/loongcollectord stop
          echo "[$(date)] LoongCollector: Shutdown complete"
      # Health check
      livenessProbe:
        exec:
          command: ["/etc/init.d/loongcollectord", "status"]
        initialDelaySeconds: 30
        periodSeconds: 10
        timeoutSeconds: 5
        failureThreshold: 3
      # Resource configuration
      resources:
        requests:
          cpu: "100m"
          memory: "128Mi"
        limits:
          cpu: "2000m"
          memory: "2048Mi"
      # Environment variable configuration
      env:
        - name: ALIYUN_LOGTAIL_USER_ID
          value: "${your_aliyun_user_id}"
        - name: ALIYUN_LOGTAIL_USER_DEFINED_ID
          value: "${your_machine_group_user_defined_id}"
        - name: ALIYUN_LOGTAIL_CONFIG
          value: "/etc/ilogtail/conf/${your_region_config}/ilogtail_config.json"
        # Enable full drain mode to ensure all logs are sent before the Pod terminates.
        - name: enable_full_drain_mode
          value: "true"
        # Append Pod environment information as log tags.
        - name: ALIYUN_LOG_ENV_TAGS
          value: "_pod_name_|_pod_ip_|_namespace_|_node_name_|_node_ip_"
        # Automatically inject Pod and Node metadata as log tags.
        - name: "_pod_name_"
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: "_pod_ip_"
          valueFrom:
            fieldRef:
              fieldPath: status.podIP
        - name: "_namespace_"
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: "_node_name_"
          valueFrom:
            fieldRef:
              fieldPath: spec.nodeName
        - name: "_node_ip_"
          valueFrom:
            fieldRef:
              fieldPath: status.hostIP
      # Volume mounts (shared with the application container)
      volumeMounts:
        # Mount the application log directory in read-only mode.
        - name: ${shared_volume_name}              # <-- Name of the shared log directory.
          mountPath: ${dir_containing_your_files}  # <-- Mount path of the shared directory in the sidecar container.
          readOnly: true
        # Mount the communication directory.
        - name: tasksite
          mountPath: /tasksite
        # Mount the time zone file.
        - name: tz-config
          mountPath: /etc/localtime
          readOnly: true
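In the env section above, ALIYUN_LOG_ENV_TAGS holds a |-separated list of variable names, and the fieldRef entries fill those variables through the Kubernetes Downward API. The bash sketch below shows how such a list maps to tag key-value pairs. It assumes that each listed variable becomes a tag named after the variable and prints it in the __tag__:<name> form used in the default-field table in Step 5; env_tags is a hypothetical helper, and the pod and namespace values are made up.

```bash
#!/usr/bin/env bash
# Hypothetical helper: expand a |-separated list of variable names
# (the ALIYUN_LOG_ENV_TAGS format) into "__tag__:<name>: <value>" lines.
env_tags() {
  local list=$1 name
  local -a names
  IFS='|' read -ra names <<< "$list"
  for name in "${names[@]}"; do
    # ${!name} is bash indirect expansion: the value of the variable called $name.
    printf '__tag__:%s: %s\n' "$name" "${!name}"
  done
}

# Example values that the Downward API fieldRefs would normally provide.
_pod_name_=nginx-demo-7d9f
_namespace_=default
env_tags "_pod_name_|_namespace_"
```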

2. Adapt the application container's lifecycle logic

Based on your workload type, you must adapt your application container to coordinate its shutdown with the sidecar container:

Short-lived tasks (Job/CronJob)

    # 1. Wait for LoongCollector to be ready.
    echo "[$(date)] Business: Waiting for LoongCollector to be ready..."
    until [[ -f /tasksite/cornerstone ]]; do
      sleep 1
    done
    echo "[$(date)] Business: LoongCollector is ready, starting business logic"

    # 2. Execute the core business logic (ensure logs are written to the shared directory).
    echo "Hello, World!" >> /app/logs/business.log

    # 3. Save the exit code.
    retcode=$?
    echo "[$(date)] Business: Task completed with exit code: $retcode"

    # 4. Notify LoongCollector that the business task is complete.
    touch /tasksite/tombstone
    echo "[$(date)] Business: Tombstone created, exiting"
    exit $retcode

Long-lived services (Deployment/StatefulSet)

    # Define the signal handler function.
    _term_handler() {
      echo "[$(date)] [nginx-demo] Caught SIGTERM, starting graceful shutdown..."
      # Default exit code in case the signal arrives before Nginx has started.
      EXIT_CODE=0
      # Send a QUIT signal to Nginx for a graceful stop.
      if [ -n "$NGINX_PID" ]; then
        kill -QUIT "$NGINX_PID" 2>/dev/null || true
        echo "[$(date)] [nginx-demo] Sent SIGQUIT to Nginx PID: $NGINX_PID"
        # Wait for Nginx to stop gracefully.
        wait "$NGINX_PID"
        EXIT_CODE=$?
        echo "[$(date)] [nginx-demo] Nginx stopped with exit code: $EXIT_CODE"
      fi
      # Notify LoongCollector that the application container has stopped.
      echo "[$(date)] [nginx-demo] Writing tombstone file"
      touch /tasksite/tombstone
      exit "$EXIT_CODE"
    }

    # Register the signal handler.
    trap _term_handler SIGTERM SIGINT SIGQUIT

    # Wait for LoongCollector to be ready.
    echo "[$(date)] [nginx-demo]: Waiting for LoongCollector to be ready..."
    until [[ -f /tasksite/cornerstone ]]; do
      sleep 1
    done
    echo "[$(date)] [nginx-demo]: LoongCollector is ready, starting business logic"

    # Start Nginx in the background so that this shell can handle signals.
    echo "[$(date)] [nginx-demo] Starting Nginx..."
    nginx -g 'daemon off;' &
    NGINX_PID=$!
    echo "[$(date)] [nginx-demo] Nginx started with PID: $NGINX_PID"

    # Wait for the Nginx process.
    wait "$NGINX_PID"
    EXIT_CODE=$?

    # Also notify LoongCollector if Nginx exited without a termination signal.
    if [ ! -f /tasksite/tombstone ]; then
      echo "[$(date)] [nginx-demo] Unexpected exit, writing tombstone"
      touch /tasksite/tombstone
    fi

    exit "$EXIT_CODE"

3. Set the graceful termination period

In spec.template.spec, set a sufficient termination grace period to ensure LoongCollector has enough time to upload any remaining logs.

    spec:
      # ... Your other existing spec configurations ...
      template:
        spec:
          terminationGracePeriodSeconds: 600  # A 10-minute graceful shutdown period.

4. Variables

| Parameter | Description |
| --- | --- |
| ${your_aliyun_user_id} | Set this to your main account ID. For more information, see Configure user identifiers. |
| ${your_machine_group_user_defined_id} | Set a custom identifier that will be used to create the machine group. Important: Ensure that this identifier is unique within the region of your Project. |
| ${your_region_config} | Set this value based on the region of your Log Service Project and the network access type. For information about regions, see Service regions. Example: If your Project is in the China (Hangzhou) region, use … |
| ${shared_volume_name} | Set a custom name for the volume. Important: The name must match in volumes and in the volumeMounts of both containers. |
| ${dir_containing_your_files} | Set the mount path. This is the directory in the container that contains the text logs. |
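The grace period must cover the sidecar's whole shutdown path: after the application writes the tombstone, the sidecar script notices it within one 2-second poll, sleeps 30 seconds, and then stops the service. The bash sketch below is a rough check of that budget. check_grace_budget is hypothetical, the 10-second margin for loongcollectord stop is an assumption, and the application's own shutdown time is something you need to measure. With the Kubernetes default grace period of 30 seconds, the 30-second drain sleep alone leaves no headroom, which is why step 3 raises the value.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check (all values in seconds): does
# terminationGracePeriodSeconds cover the sidecar's shutdown path?
#   grace    terminationGracePeriodSeconds
#   app_stop time the application needs to exit after SIGTERM (measure it)
#   poll     tombstone poll interval in the sidecar script ("sleep 2")
#   drain    wait after the tombstone appears ("sleep 30")
#   margin   assumed headroom for "loongcollectord stop" and slow uploads
check_grace_budget() {
  local grace=$1 app_stop=$2 poll=${3:-2} drain=${4:-30} margin=${5:-10}
  local needed=$(( app_stop + poll + drain + margin ))
  if (( grace >= needed )); then
    echo "ok: need ${needed}s, have ${grace}s"
  else
    echo "too short: need ${needed}s, have ${grace}s"
    return 1
  fi
}

check_grace_budget 600 20   # ok: need 62s, have 600s
check_grace_budget 30 0 || echo "increase terminationGracePeriodSeconds"
```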

5. Apply and verify the configuration

Run the following command to deploy the changes:

    kubectl apply -f <YOUR-YAML>

Check the Pod status to confirm that the LoongCollector container was successfully injected:

    kubectl describe pod <YOUR-POD-NAME>

If you see two containers (your application container and loongcollector) and both are in the Running state, the injection was successful.

Step 2: Create a custom identifier machine group

This step registers the LoongCollector sidecar instances injected into pods with Simple Log Service (SLS). This lets you centrally manage and apply collection configurations.

Procedure

Create a machine group

In the target project, click  in the left-side navigation pane.

On the Machine Groups page, click .

Configure the machine group

Configure the following parameters and click OK:

Name: The name of the machine group. This cannot be changed after creation. The naming conventions are as follows:

Must contain only lowercase letters, digits, hyphens (-), and underscores (_).

Must start and end with a lowercase letter or a digit.

Must be 2 to 128 characters in length.

Machine Group Identifier: Select Custom Identifier.

Custom Identifier: Enter the value of the ALIYUN_LOGTAIL_USER_DEFINED_ID environment variable that you set for the LoongCollector container in the YAML file in Step 1. The value must match exactly; otherwise, the association fails.
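Because the machine group name cannot be changed after creation, it can be worth validating it before you submit the form. The bash function below is a sketch that encodes the three naming rules listed above as one POSIX extended regex: the first and last characters are checked separately, and the middle allows 0 to 126 characters, which gives 2 to 128 in total. It is not an SLS API.

```bash
#!/usr/bin/env bash
# Sketch of the machine group naming rules listed above (not an SLS API):
# lowercase letters, digits, "-" and "_"; must start and end with a lowercase
# letter or digit; 2 to 128 characters long.
is_valid_group_name() {
  local re='^[[:lower:][:digit:]][[:lower:][:digit:]_-]{0,126}[[:lower:][:digit:]]$'
  [[ $1 =~ $re ]]
}

for name in nginx-sidecar-prod a Prod-Group app_ -logs; do
  if is_valid_group_name "$name"; then echo "valid:   $name"; else echo "invalid: $name"; fi
done
```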

Check the machine group heartbeat status

After creating the machine group, click its name and check the heartbeat status in the Machine Group Status section.

OK: LoongCollector has successfully connected to SLS and the machine group is registered.

FAIL:

The configuration might not have taken effect. The configuration takes approximately 2 minutes to apply. Please refresh the page and try again later.

If the status is still FAIL after 2 minutes, refer to Troubleshoot Logtail machine group issues for diagnostic steps.

Each pod corresponds to a separate LoongCollector instance. Use different custom identifiers for different services or environments for fine-grained management.

Step 3: Create a collection configuration

This step defines which log files LoongCollector collects, how to parse their structure and filter content, and binds the collection configuration to the machine group.

Procedure

On the Logstores page, click the  icon to the left of the target Logstore name to expand it.

Click the  icon next to Data Ingestion. In the Quick Data Import dialog box, find the Kubernetes - File card and click Ingest Now.

In the Machine Group Configuration step, complete the following settings and click Next:

Use Case: Select K8s Clusters.

Deployment Method: Select Sidecar.

Select Machine Group: In the Source Machine Groups list, select the machine group with a custom identifier that you created in Step 2, and click  to add it to the Applied Machine Groups list.

On the Logtail Configuration page, configure the Logtail collection rules as follows.

1. Global and input configurations

This section defines the name, log source, and collection scope for the collection configuration.

Global Configurations:

Configuration Name: A custom name for the collection configuration. This name must be unique within the project and cannot be changed after it is created. Naming conventions:

Can contain only lowercase letters, digits, hyphens (-), and underscores (_).

Must start and end with a lowercase letter or a digit.

Input Configuration:

Type: Text Log.

Logtail Deployment Mode: Select Sidecar.

File Path Type:

Container Path: Collect log files from within a container.

Host Path: Collect logs from local services on the host machine.

File Path: The path from which to collect logs.

Linux: The path must start with a forward slash (/). For example, /data/mylogs/**/*.log specifies all files with the .log extension in the /data/mylogs directory and its subdirectories.

Windows: The path must start with a drive letter, such as C:\Program Files\Intel\**\*.Log.

Maximum Directory Monitoring Depth: The maximum directory depth that the ** wildcard in the File Path can match. The default value is 0, which means only the current directory is monitored.

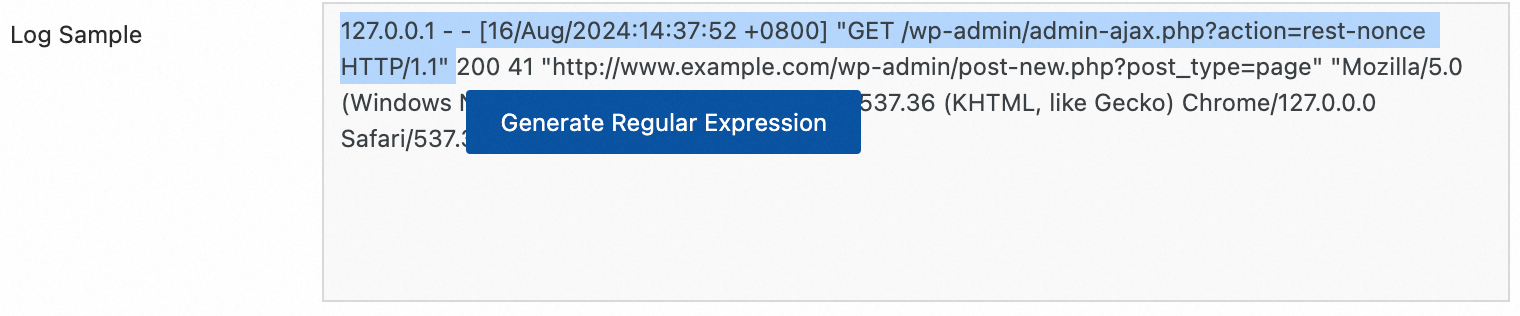
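To make the depth rule concrete, the bash sketch below decides whether a file is covered by a pattern of the form <base>/**/<file-glob> at a given Maximum Directory Monitoring Depth. It assumes that depth counts directory levels below <base>, with 0 meaning files directly in <base>, which is how the description above reads. in_scope is hypothetical and does not reproduce LoongCollector's matcher.

```bash
#!/usr/bin/env bash
# Hypothetical helper: is "$file" collected by "<base>/**/<glob>" at depth "$max"?
in_scope() {
  local base=$1 glob=$2 max=$3 file=$4
  local dir=${file%/*} name=${file##*/}
  # The file must live in base itself or somewhere below it.
  [[ $dir == "$base" || $dir == "$base"/* ]] || return 1
  # Count the directory levels between base and the file's directory.
  local rel=${dir#"$base"} depth=0 part
  local -a parts
  IFS=/ read -ra parts <<< "$rel"
  for part in "${parts[@]}"; do
    [[ -n $part ]] && depth=$(( depth + 1 ))
  done
  (( depth <= max )) || return 1
  # Unquoted $glob on the right of == is matched as a shell pattern.
  [[ $name == $glob ]]
}

for f in /data/mylogs/app.log /data/mylogs/a/app.log; do
  if in_scope /data/mylogs '*.log' 0 "$f"; then echo "collected: $f"; else echo "skipped:   $f"; fi
done
```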

2. Log processing and structuring

Configure log processing rules to transform raw, unstructured logs into structured, searchable data. This improves the efficiency of log queries and analysis. We recommend that you first add a log sample:

In the Processor Configurations section of the Logtail Configuration page, click Add Sample Log and enter the log content to be collected. The system identifies the log format based on the sample and helps generate regular expressions and parsing rules, which simplifies the configuration.

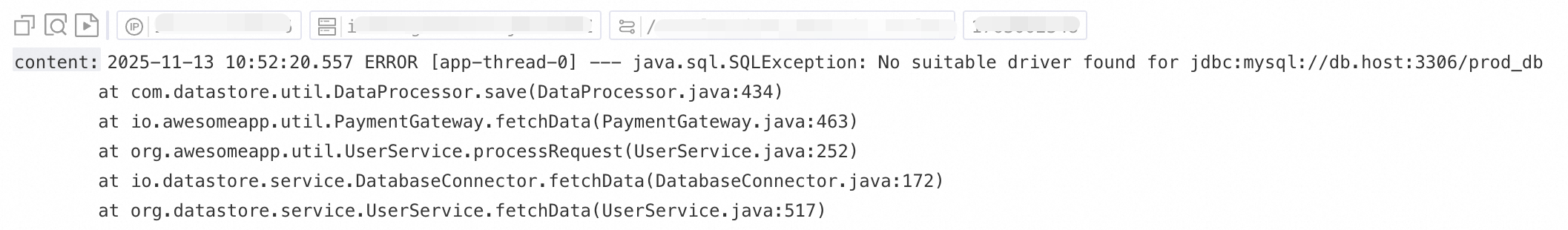

Use case 1: Process multiline logs (such as Java stack logs)

Because logs such as Java exception stacks and JSON objects often span multiple lines, the default collection mode splits them into multiple incomplete records, which causes a loss of context. To prevent this, enable multiline mode and configure a Regex to Match First Line to merge consecutive lines of the same log into a single, complete log.

Example:

| Raw log without any processing | Default collection mode: each line is a separate log, breaking the stack trace and losing context | Multiline mode: a Regex to Match First Line identifies the complete log, preserving its full semantic structure |
| --- | --- | --- |

Procedure: In the Processor Configurations section of the Logtail Configuration page, enable Multi-line Mode:

For Type, select Custom or Multi-line JSON.

Custom: For raw logs with a variable format, configure a Regex to Match First Line to identify the starting line of each log.

Regex to Match First Line: Automatically generate or manually enter a regular expression that matches the entire first line of each log. For example, the regular expression for the preceding example is:

    \[\d+-\d+-\w+:\d+:\d+,\d+]\s\[\w+]\s.*

Automatic generation: Click Generate. Then, in the Log Sample text box, select the log content that you want to extract and click Automatically Generate.

Manual entry: Click Manually Enter Regular Expression. After you enter the expression, click Validate.

Multi-line JSON: SLS automatically handles line breaks within a single raw log if the log is in standard JSON format.

Processing Method If Splitting Fails:

Discard: Discards a text segment if it does not match the start-of-line rule.

Retain Single Line: Retains unmatched text on separate lines.
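The grouping logic can be sketched in bash. This is an illustration, not LoongCollector's implementation: it rewrites the example regex in POSIX ERE (bash's [[ =~ ]] has no \d, \w, or \s), it treats lines that arrive before any first line as the case where splitting fails, and the Java stack trace used as input is made up.

```bash
#!/usr/bin/env bash
# Sketch of first-line-regex splitting (illustration, not LoongCollector code).
# The document's example regex rewritten for POSIX ERE, which lacks \d, \w, \s.
first_line_re='^\[[0-9]+-[0-9]+-[[:alnum:]_]+:[0-9]+:[0-9]+,[0-9]+] \[[[:alnum:]_]+] .*'

# Reads lines on stdin and prints each log followed by a "---" line.
# $1 is "retain" (default) or "discard" and decides what happens to lines that
# arrive before any first line, i.e. text that cannot be attached to a log.
split_multiline() {
  local mode=${1:-retain} line record="" started=0
  while IFS= read -r line; do
    if [[ $line =~ $first_line_re ]]; then
      # A new log starts here: flush the previous one.
      if (( started )); then printf '%s\n---\n' "$record"; fi
      record=$line
      started=1
    elif (( started )); then
      record+=$'\n'"$line"          # continuation line, e.g. a stack frame
    elif [[ $mode == retain ]]; then
      printf '%s\n---\n' "$line"    # unmatched line kept as its own log
    fi
  done
  if (( started )); then printf '%s\n---\n' "$record"; fi
  return 0
}

# Made-up sample input: one Java stack trace followed by a single-line log.
sample='[2023-10-01T10:30:01,000] [ERROR] java.lang.Exception: exception happened
    at TestPrintStackTrace.f(TestPrintStackTrace.java:3)
    at TestPrintStackTrace.main(TestPrintStackTrace.java:7)
[2023-10-01T10:31:01,000] [INFO] request finished'
split_multiline <<< "$sample"
```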

Use case 2: Structured logs

When raw logs are unstructured or semi-structured text, such as NGINX access logs or application output logs, direct querying and analysis are often inefficient. SLS provides various data parsing plugins that can automatically convert raw logs of different formats into structured data. This provides a solid data foundation for subsequent analysis, monitoring, and alerting.

Example:

| Raw log | Structured log |
| --- | --- |

Procedure: In the Processor Configurations section of the Logtail Configuration page:

Add a parsing plugin: Click Add Processor Plugin and configure a plugin for regular expression, delimiter, or JSON parsing based on your log format. This example collects NGINX logs by selecting .

NGINX Log Configuration: Copy the entire log_format definition from your Nginx server's configuration file (nginx.conf) and paste it into this text box. Example:

    log_format main '$remote_addr - $remote_user [$time_local] "$request" '
                    '$request_time $request_length '
                    '$status $body_bytes_sent "$http_referer" '
                    '"$http_user_agent"';

Important: The format definition here must exactly match the format that generates the logs on the server. Otherwise, log parsing will fail.

General configuration parameters: The following parameters appear in multiple data parsing plugins and have consistent functions and usage.

Original Field: Specifies the source field to parse. The default is content, which represents the entire collected log entry.

Keep Original Field on Parse Failure: We recommend enabling this option. If a log cannot be parsed by the plugin (for example, due to a format mismatch), this option ensures that the raw log content is retained in the specified original field.

Keep Original Field on Parse Success: If selected, the raw log content is retained even if the log is parsed successfully.
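To illustrate the kind of key-value output to expect, the bash sketch below parses one line written with the log_format main shown above. The regex is hand-written for that one format (the NGINX plugin derives its parser from the log_format you paste), the field names simply reuse the NGINX variable names without $, and the sample line is made up. A line that does not match is kept whole under content, loosely mirroring Keep Original Field on Parse Failure.

```bash
#!/usr/bin/env bash
# Illustration only: a hand-written regex for the log_format "main" above.
nginx_re='^([^ ]+) - ([^ ]+) \[([^]]+)] "([^"]*)" ([^ ]+) ([^ ]+) ([0-9]+) ([0-9]+) "([^"]*)" "([^"]*)"$'
fields=(remote_addr remote_user time_local request request_time request_length
        status body_bytes_sent http_referer http_user_agent)

# Prints one "key: value" line per field; on a mismatch, keeps the raw line
# under "content" instead of dropping it.
parse_nginx() {
  local line=$1 i
  if [[ $line =~ $nginx_re ]]; then
    for i in "${!fields[@]}"; do
      printf '%s: %s\n' "${fields[i]}" "${BASH_REMATCH[i+1]}"
    done
  else
    printf 'content: %s\n' "$line"
  fi
}

parse_nginx '192.168.1.10 - - [10/Oct/2025:13:55:36 +0800] "GET /index.html HTTP/1.1" 0.003 512 200 615 "-" "curl/8.5.0"'
```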

3. Log filtering

Indiscriminately collecting large volumes of low-value or irrelevant logs, such as those at the DEBUG or INFO level, can waste storage, increase costs, impair query performance, and introduce data security risks. To avoid these issues, you can use fine-grained filtering policies for efficient and secure log collection.

Content filtering

Filter fields based on log content, such as collecting only logs where the level is WARNING or ERROR.

Example:

| Raw log without any processing | Collect only WARNING or ERROR logs |
| --- | --- |

Procedure: In the Processor Configurations section of the Logtail Configuration page:

Click Add Processor and select :

Field Name: The log field to use for filtering.

Field Value: The regular expression used for filtering. Only full matches are supported, not partial keyword matches.
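Full-match semantics are easy to get wrong, so here is a small bash sketch. It assumes that a field such as level has already been extracted by a parsing plugin; keep_log is hypothetical. Wrapping the pattern in ^( and )$ is what turns a substring search into a full match, which is why WARN and ERROR_COUNT are dropped by the pattern WARNING|ERROR.

```bash
#!/usr/bin/env bash
# Sketch of a full-match content filter (not the plugin's code): a log is kept
# only if the whole field value matches the regex.
keep_log() {
  local value=$1 pattern=$2
  local re="^(${pattern})\$"   # anchor both ends: full match, not substring
  [[ $value =~ $re ]]
}

for level in WARNING ERROR INFO WARN ERROR_COUNT; do
  if keep_log "$level" 'WARNING|ERROR'; then echo "keep: $level"; else echo "drop: $level"; fi
done
```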

Collection blacklist

Use a blacklist to exclude specified directories or files, which prevents irrelevant or sensitive logs from being uploaded.

Procedure: In the section of the Logtail Configuration page, enable Collection Blacklist and click Add.

Supports full and wildcard matching for directories and filenames. The only supported wildcard characters are the asterisk (*) and the question mark (?).

File Path Blacklist: Specifies the file paths to exclude. Examples:

/home/admin/private*.log: Ignores all files in the /home/admin/ directory that start with private and end with .log.

/home/admin/private*/*_inner.log: Ignores files that end with _inner.log within directories that start with private under the /home/admin/ directory.

File Blacklist: A list of filenames to ignore during collection. Example:

app_inner.log: Ignores all files named app_inner.log during collection.

Directory Blacklist: Directory paths cannot end with a forward slash (/). Examples:

/home/admin/dir1/: The directory blacklist will not take effect.

/home/admin/dir*: Ignores files in all subdirectories that start with dir under the /home/admin/ directory during collection.

/home/admin/*/dir: Ignores all files in subdirectories named dir at the second level of the /home/admin/ directory. For example, files in the /home/admin/a/dir directory are ignored, but files in the /home/admin/a/b/dir directory are collected.
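The examples above imply that * and ? never match across a /. The bash sketch below encodes that assumption by matching path segments one by one, and it reproduces the listed examples. It is not LoongCollector's implementation, and the directory check looks only at the file's immediate parent directory, which is a simplification.

```bash
#!/usr/bin/env bash
# Sketch of blacklist matching, assuming * and ? never match across "/".

# True if path ($1) and pattern ($2) have the same number of "/"-separated
# segments and every segment matches its glob.
segment_match() {
  local -a p s
  local i
  IFS=/ read -ra p <<< "$1"
  IFS=/ read -ra s <<< "$2"
  (( ${#p[@]} == ${#s[@]} )) || return 1
  for i in "${!p[@]}"; do
    [[ ${p[i]} == ${s[i]} ]] || return 1   # unquoted right side = glob
  done
}

# File Path Blacklist: the whole file path must match.
file_path_blacklisted() { segment_match "$1" "$2"; }

# File Blacklist: only the file name is compared.
file_name_blacklisted() { [[ ${1##*/} == $2 ]]; }

# Directory Blacklist: a pattern ending in "/" never takes effect; otherwise
# the file's parent directory is compared segment by segment.
dir_blacklisted() {
  local file=$1 pattern=$2
  [[ $pattern == */ ]] && return 1
  segment_match "${file%/*}" "$pattern"
}

for f in /home/admin/a/dir/app.log /home/admin/a/b/dir/app.log; do
  if dir_blacklisted "$f" '/home/admin/*/dir'; then echo "ignored:   $f"; else echo "collected: $f"; fi
done
```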

Container filtering

Set collection conditions based on container metadata, such as environment variables, pod labels, namespaces, and container names, to precisely control which container logs are collected.

Procedure: In the Input Configuration section of the Logtail Configuration page, enable Container Filtering and click Add.

Multiple conditions are combined with a logical AND. All regular expression matching uses Go's RE2 engine, which has some limitations compared to engines like PCRE. Follow the guidelines in Appendix: Regular expression usage limitations (Container Filtering) when writing regular expressions.

Environment variable blacklist/whitelist: Specify conditions for the environment variables of the containers to collect logs from.

K8s pod label blacklist/whitelist: Specify conditions for the labels of the pods that contain the containers to collect logs from.

K8s Pod Name Regex Match: Specify the containers to collect logs from by pod name.

K8s Namespace Regex Match: Specify the containers to collect logs from by namespace name.

K8s Container Name Regex Match: Specify the containers to collect logs from by container name.

Container label blacklist/whitelist: Collect logs from containers whose labels meet specified conditions. This is used for Docker scenarios and is not recommended for Kubernetes scenarios.

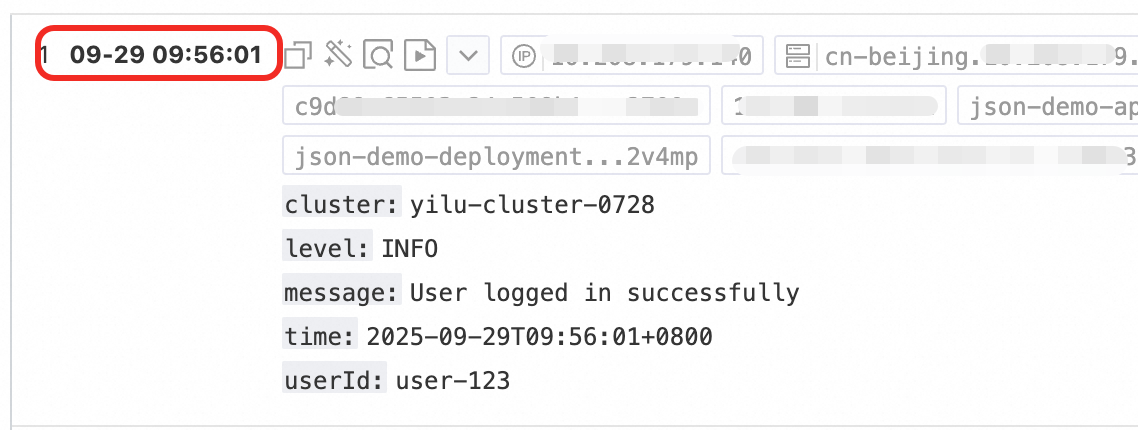

4. Log classification

In scenarios where multiple applications or instances share the same log format, it can be difficult to distinguish the log source. This can lead to a lack of context during queries and reduce analysis efficiency. To solve this, you can configure topics and log tags to automatically associate context and logically classify logs.

Topics

When multiple applications or instances have the same log format but different paths (such as /apps/app-A/run.log and /apps/app-B/run.log), it is difficult to distinguish the source of the collected logs. You can generate topics based on machine groups, custom names, or file path extraction to flexibly distinguish logs from different business services or source paths.

Procedure: : Select a method for generating topics. The following three types are supported:

Machine Group Topic: When a collection configuration is applied to multiple machine groups, LoongCollector automatically uses the name of the server's machine group as the __topic__ field for upload. This is suitable for use cases where logs are divided by host.

Custom: Uses the format customized://<custom_topic_name>, such as customized://app-login. This format is suitable for static topic use cases with fixed business identifiers.

File Path Extraction: Extract key information from the full path of the log file to dynamically mark the log source. This is suitable for situations where multiple users or applications share the same log filename but have different paths. For example, when multiple users or services write logs to different top-level directories but the sub-paths and filenames are identical, the source cannot be distinguished by filename alone:

    /data/logs
    ├── userA
    │   └── serviceA
    │       └── service.log
    ├── userB
    │   └── serviceA
    │       └── service.log
    └── userC
        └── serviceA
            └── service.log

Configure File Path Extraction and use a regular expression to extract key information from the full path. The matched result is then uploaded to the Logstore as the topic.

File path extraction rule: Based on regular expression capturing groups

When you configure a regular expression, the system automatically determines the output field format based on the number and naming of capturing groups. The rules are as follows:

In the regular expression for a file path, you must escape the forward slash (/).

| Capturing group type | Use case | Generated field | Regex example | Matching path example | Generated field example |
| --- | --- | --- | --- | --- | --- |
| Single capturing group (only one (.*?)) | Only one dimension is needed to distinguish the source (such as username or environment) | Generates the __topic__ field | \/logs\/(.*?)\/app\.log | /logs/userA/app.log | __topic__: userA |
| Multiple capturing groups, unnamed (multiple (.*?)) | Multiple dimensions are needed to distinguish the source, but no semantic tags are required | Generates tag fields named __tag__:__topic_{i}__, where {i} is the ordinal number of the capturing group | \/logs\/(.*?)\/(.*?)\/app\.log | /logs/userA/svcA/app.log | __tag__:__topic_1__: userA; __tag__:__topic_2__: svcA |
| Multiple capturing groups, named (using (?P<name>.*?)) | Multiple dimensions are needed to distinguish the source, and the field meanings should be clear for easy querying and analysis | Generates tag fields named __tag__:{name} | \/logs\/(?P<user>.*?)\/(?P<service>.*?)\/app\.log | /logs/userA/svcA/app.log | __tag__:user: userA; __tag__:service: svcA |
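The three capturing-group rules in the table can be emulated in bash to preview which fields a regex would produce. This is a sketch under two stated substitutions: bash ERE has neither lazy (.*?) nor named groups, so it uses ([^/]+) per path segment and takes optional group names as extra arguments, and it does not need the \/ escaping that SLS requires. extract_topic is a hypothetical helper, not an SLS API.

```bash
#!/usr/bin/env bash
# Emulates the capturing-group rules with bash ERE (hypothetical helper).
# Usage: extract_topic REGEX PATH [GROUP_NAME...]
extract_topic() {
  local re=$1 path=$2
  shift 2
  local -a names=("$@")
  [[ $path =~ $re ]] || return 1
  local n=$(( ${#BASH_REMATCH[@]} - 1 )) i
  if (( ${#names[@]} > 0 )); then
    # Named groups: one tag per group, named by the caller.
    for (( i = 1; i <= n; i++ )); do
      printf '__tag__:%s: %s\n' "${names[i-1]}" "${BASH_REMATCH[i]}"
    done
  elif (( n == 1 )); then
    # Single group: becomes the topic.
    printf '__topic__: %s\n' "${BASH_REMATCH[1]}"
  else
    # Several unnamed groups: numbered topic tags.
    for (( i = 1; i <= n; i++ )); do
      printf '__tag__:__topic_%d__: %s\n' "$i" "${BASH_REMATCH[i]}"
    done
  fi
}

extract_topic '^/logs/([^/]+)/app\.log$' /logs/userA/app.log
extract_topic '^/logs/([^/]+)/([^/]+)/app\.log$' /logs/userA/svcA/app.log
extract_topic '^/logs/([^/]+)/([^/]+)/app\.log$' /logs/userA/svcA/app.log user service
extract_topic '^/data/logs/([^/]+)/[^/]+/service\.log$' /data/logs/userB/serviceA/service.log
```

The last call applies a single capturing group to the /data/logs directory tree shown earlier, so each user's service.log gets its own topic.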

Log tagging

Enable the Log Tag Enrichment feature to extract key information from container environment variables or Kubernetes pod labels and add them as tags. This enables fine-grained grouping of logs.

Procedure: In the Input Configuration section of the Logtail Configuration page, enable Log Tag Enrichment and click Add.

Environment Variables: Configure an environment variable name and a tag name. The value of the environment variable is stored as the value of the specified tag.

Environment Variable Name: The name of the environment variable to extract.

Tag Name: The name for the new tag.

Pod Labels: Configure a pod label name and a tag name. The value of the pod label is stored as the value of the specified tag.

Pod Label Name: The name of the Kubernetes pod label to extract.

Tag Name: The name for the new tag.

5. Output configuration

By default, LoongCollector sends all logs to the current Logstore using lz4 compression. If you need to distribute logs from the same source to different Logstores, follow these steps:

Dynamic multi-destination distribution

Multi-destination output is available only for LoongCollector 3.0.0 and later. Logtail does not support this feature.

You can configure up to five output destinations.

After you configure multiple output destinations, this collection configuration will no longer appear in the collection configuration list of the current Logstore. To view, modify, or delete a multi-destination distribution configuration, see How do I manage a multi-destination distribution configuration?

Procedure: In the Output Configuration section of the Logtail Configuration page:

Click  to expand the output configuration.

Click Add Output Destination and complete the following configuration:

Logstore: Select the destination Logstore.

Compression Method: Supported methods are lz4 and zstd.

Routing Configuration: Route and distribute logs based on their tag fields. Logs that match the routing rules are uploaded to the destination Logstore. If the routing configuration is empty, all collected logs are uploaded to the destination Logstore.

Tag Name: Specifies the name of the tag field used for routing. Enter the field name directly, for example, __path__, without the __tag__: prefix. For more information about tags, see Manage LoongCollector collection tags. Tag fields fall into two categories:

Agent-related: Related to the collection agent itself and independent of plugins. Examples include __hostname__ and __user_defined_id__.

Input plugin-related: Dependent on input plugins, which provide and enrich logs with relevant information. Examples include __path__ for file collection and _pod_name_ or _container_name_ for Kubernetes collection.

Tag Value: The value the tag must match. Logs with a matching tag value are sent to the destination Logstore.

Discard This Tag Field: If enabled, the uploaded logs do not include this tag field.
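The routing rules can be summarized with a small bash sketch. It is a model of the behavior described above, not LoongCollector code: each destination carries at most one tag rule, an empty tag name stands for an empty routing configuration (every log goes there), and the Logstore names and tag values are made up.

```bash
#!/usr/bin/env bash
# Sketch of the routing rules (not LoongCollector code). Each destination is
# "logstore|tag_name|tag_value|discard"; an empty tag_name means the routing
# configuration is empty, so every log is sent to that Logstore.
destinations=(
  "all-logs|||false"
  "nginx-logs|__path__|/var/log/nginx/access.log|true"
)

# route_log NAME=VALUE...: prints every Logstore that receives the log and
# notes when the routing tag is discarded before upload.
route_log() {
  local dest store name want discard tag matched
  for dest in "${destinations[@]}"; do
    IFS='|' read -r store name want discard <<< "$dest"
    matched=false
    if [[ -z $name ]]; then
      matched=true
    else
      for tag in "$@"; do
        [[ $tag == "$name=$want" ]] && matched=true
      done
    fi
    if [[ $matched == true ]]; then
      if [[ -n $name && $discard == true ]]; then echo "$store (without $name)"; else echo "$store"; fi
    fi
  done
}

route_log __path__=/var/log/nginx/access.log __hostname__=node-1
```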

Step 4: Query and analysis

After you configure log processing and plugins, click Next to go to the Query and Analysis Configurations page:

Full-text index is enabled by default, which supports keyword searches on raw log content.

For precise queries by field, wait for the Preview Data to load, and then click Automatic Index Generation. SLS generates a field index based on the first entry in the preview data.

After the configuration is complete, click Next to finish setting up the entire collection process.

Step 5: View uploaded logs

After you apply a collection configuration to a machine group, the system automatically deploys the configuration and starts collecting incremental logs.

Verify new log entries: LoongCollector collects only incremental logs. Run tail -f /path/to/your/log/file and trigger an action in your application to confirm that new logs are being written to the file.

Query the logs: Go to the Search & Analyze page of the target Logstore and click Search & Analyze to check for new log entries. By default, the time range is set to the last 15 minutes. The following table describes the default fields for text logs from containers.

| Parameter | Description |
| --- | --- |
| __tag__:__hostname__ | The name of the container host. |
| __tag__:__path__ | The path of the log file within the container. |
| __tag__:_container_ip_ | The IP address of the container. |
| __tag__:_image_name_ | The name of the image used by the container. Note: If multiple images share the same hash but have different names or tags, the collection configuration selects one of the names based on the hash. The selected name is not guaranteed to match the one specified in your YAML file. |
| __tag__:_pod_name_ | The name of the pod. |
| __tag__:_namespace_ | The pod's namespace. |
| __tag__:_pod_uid_ | The pod's unique identifier (UID). |

Key configurations for log integrity

To ensure the integrity and reliability of your log data, properly configure the following LoongCollector Sidecar parameters.

LoongCollector resource configuration

In high-volume scenarios, proper resource configuration is essential for reliable collection. Key parameters include:

    # Configure CPU and memory resources based on the log generation rate
    resources:
      limits:
        cpu: "2000m"
        memory: "2Gi"
    # Parameters that affect collection performance
    env:
      - name: cpu_usage_limit
        value: "2"
      - name: mem_usage_limit
        value: "2048"
      - name: max_bytes_per_sec
        value: "209715200"
      - name: process_thread_count
        value: "8"
      - name: send_request_concurrency
        value: "20"

For information about configuring resources based on data volume, see Logtail network types, startup parameters, and configuration files.
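The example keeps the agent's own limits in line with the container limits: 2000m CPU pairs with cpu_usage_limit 2, and 2Gi pairs with mem_usage_limit 2048. The bash sketch below checks that relationship before you deploy. It assumes that cpu_usage_limit is in cores, mem_usage_limit is in MB (treated as MiB, as the 2Gi/2048 pairing suggests), and max_bytes_per_sec is in bytes per second; check_limits and its helpers are hypothetical and only handle the m, Gi, and Mi suffixes.

```bash
#!/usr/bin/env bash
# Hypothetical consistency check between container limits and the
# LoongCollector parameters above (units are assumptions, see lead-in).

# Kubernetes CPU quantity ("2", "1.5", "2000m") -> millicores.
cpu_to_millicores() {
  case $1 in
    *m) echo "${1%m}" ;;
    *)  awk -v c="$1" 'BEGIN { printf "%.0f\n", c * 1000 }' ;;
  esac
}

# Kubernetes memory quantity ("2Gi", "2048Mi") -> MiB.
mem_to_mib() {
  case $1 in
    *Gi) echo $(( ${1%Gi} * 1024 )) ;;
    *Mi) echo "${1%Mi}" ;;
    *)   echo "unsupported unit: $1" >&2; return 1 ;;
  esac
}

# check_limits CPU_LIMIT MEM_LIMIT CPU_USAGE_LIMIT MEM_USAGE_LIMIT
check_limits() {
  local cpu_limit=$1 mem_limit=$2 cpu_usage_limit=$3 mem_usage_limit=$4
  local limit_m limit_mib status=0
  limit_m=$(cpu_to_millicores "$cpu_limit")
  limit_mib=$(mem_to_mib "$mem_limit") || return 1
  # awk handles fractional core values such as "0.4".
  if ! awk -v c="$cpu_usage_limit" -v l="$limit_m" 'BEGIN { exit !(c * 1000 <= l) }'; then
    echo "cpu_usage_limit ${cpu_usage_limit} cores exceeds limits.cpu ${cpu_limit}"
    status=1
  fi
  if (( mem_usage_limit > limit_mib )); then
    echo "mem_usage_limit ${mem_usage_limit} MB exceeds limits.memory ${mem_limit}"
    status=1
  fi
  return "$status"
}

check_limits 2000m 2Gi 2 2048 && echo "limits consistent"
echo "max_bytes_per_sec 209715200 = $(( 209715200 / 1024 / 1024 )) MiB/s"
```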

Server-side quota configuration

Server-side quota limits or network issues can block data transmission, which creates backpressure on the collection agent and affects log integrity. Use CloudLens for SLS to monitor Project resource quotas.

Initial collection configuration

The initial file collection policy at pod startup directly affects data integrity, especially in high-throughput scenarios.

The initial collection size determines where LoongCollector starts reading from a new file. The default initial collection size is 1,024 KB.

If a file is smaller than 1,024 KB, collection starts from the beginning of the file.

If the file is larger than 1,024 KB, collection starts 1,024 KB before the end of the file.

The initial collection size ranges from 0 to 10,485,760 KB.
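The rule for the starting position reduces to a short calculation. The following Python sketch assumes that 1 KB equals 1,024 bytes. It ignores any further adjustment LoongCollector may make, such as aligning to a line boundary. The function name is hypothetical.

```python
DEFAULT_INITIAL_KB = 1024       # default initial collection size
MAX_INITIAL_KB = 10_485_760     # upper bound of the allowed range


def initial_read_offset(file_size_bytes: int, initial_kb: int = DEFAULT_INITIAL_KB) -> int:
    """Return the byte offset at which collection of a newly discovered file starts."""
    if not 0 <= initial_kb <= MAX_INITIAL_KB:
        raise ValueError("initial collection size must be between 0 and 10,485,760 KB")
    window = initial_kb * 1024  # assumption: 1 KB = 1,024 bytes
    # Files smaller than the window are read from the beginning (offset 0);
    # larger files are read starting `window` bytes before the end.
    return max(0, file_size_bytes - window)


print(initial_read_offset(512 * 1024))       # 0: the file is smaller than 1,024 KB
print(initial_read_offset(5 * 1024 * 1024))  # 4194304: only the last 1,024 KB is collected
```

Under this rule, an initial collection size of 0 starts collection at the end of the file, so only data written afterwards is collected.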

enable_full_drain_mode

This parameter is critical for data integrity. It ensures that LoongCollector finishes collecting and sending all data after receiving a SIGTERM signal.

# Parameter that affects collection integrity
env:
  - name: enable_full_drain_mode
    value: "true" # Enable full drain mode
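The following Python toy model shows why this setting matters for a sidecar. When the pod is deleted, its emptyDir volumes are deleted with it, so any data that is still queued when the agent exits cannot be collected later. The model is a simplification for illustration only and is not LoongCollector's implementation. The function and its parameters are hypothetical.

```python
from collections import deque
from typing import Callable, Deque


def shut_down(pending: Deque[str], send: Callable[[str], None], full_drain: bool) -> int:
    """Toy model of agent shutdown after SIGTERM; returns the number of unsent batches."""
    if full_drain:
        # Full drain: keep sending until the queue is empty, then exit.
        while pending:
            send(pending.popleft())
        return 0
    # No full drain (model assumption): the agent exits promptly, and anything still
    # queued is lost once the pod and its emptyDir volume are deleted.
    dropped = len(pending)
    pending.clear()
    return dropped


sent = []
print(shut_down(deque(["batch-1", "batch-2"]), sent.append, full_drain=True), sent)
print(shut_down(deque(["batch-3"]), sent.append, full_drain=False))
```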

FAQ

Manage multi-destination shipping configurations

A multi-destination shipping configuration links to multiple Logstores, so it is managed from the Project-level management page:

Log on to the Log Service console and click the name of the target Project.

On the target Project page, in the navigation pane on the left, click .

Note: This page lets you centrally manage all data collection configurations in the Project, including orphaned configurations whose associated Logstores have been deleted.

Next steps

Data visualization: Monitor key metric trends with a visualization dashboard.

Automated alerting for data anomalies: Set up an alert policy to receive real-time alerts for system anomalies.

SLS collects only incremental logs. To collect historical logs, see Import historical log files.

Appendix: YAML example

This example provides a complete Kubernetes configuration that includes an application container (Nginx) and a LoongCollector sidecar container, suitable for collecting container logs in sidecar mode.

Before you begin, replace the following three placeholders:

Replace ${your_aliyun_user_id} with the UID of your Alibaba Cloud account.

Replace ${your_machine_group_user_defined_id} with the custom identifier of the machine group that you created in Step 3. The identifier must match exactly.

Replace ${your_region_config} with the configuration name that matches the region and network type of your Simple Log Service (SLS) project. For example, if your project is in the China (Hangzhou) region, use cn-hangzhou for internal network access and cn-hangzhou-internet for public network access.
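The following Python sketch derives the value for ${your_region_config} and fills the placeholders in a manifest before you apply it. The "-internet" suffix rule is inferred from the China (Hangzhou) example only, so confirm the configuration name for your region. The function names and sample values are hypothetical.

```python
import re


def region_config_name(region_id: str, public_network: bool) -> str:
    """Return the value for ${your_region_config}."""
    # Assumption inferred from the China (Hangzhou) example: public access appends "-internet".
    return f"{region_id}-internet" if public_network else region_id


def fill_placeholders(manifest: str, values: dict) -> str:
    """Replace ${your_...} placeholders and fail if any are left unreplaced."""
    for name, value in values.items():
        manifest = manifest.replace("${" + name + "}", value)
    # Shell expressions such as $(date) or $NGINX_PID are deliberately left untouched.
    leftover = re.findall(r"\$\{your_[A-Za-z0-9_]+\}", manifest)
    if leftover:
        raise ValueError(f"unreplaced placeholders: {leftover}")
    return manifest


line = 'value: "/etc/ilogtail/conf/${your_region_config}/ilogtail_config.json"'
print(fill_placeholders(line, {"your_region_config": region_config_name("cn-hangzhou", True)}))
```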

Short-lived (Job/CronJob)

apiVersion: batch/v1
kind: Job
metadata:
  name: demo-job
spec:
  backoffLimit: 3
  activeDeadlineSeconds: 3600
  completions: 1
  parallelism: 1
  template:
    spec:
      restartPolicy: Never
      terminationGracePeriodSeconds: 300
      containers:
        # Application container
        - name: demo-job
          image: debian:bookworm-slim
          command: ["/bin/bash", "-c"]
          args:
            - |
              # Wait for LoongCollector to be ready.
              echo "[$(date)] Business: Waiting for LoongCollector to be ready..."
              until [[ -f /tasksite/cornerstone ]]; do
                sleep 1
              done
              echo "[$(date)] Business: LoongCollector is ready, starting business logic"
              # Execute the business logic.
              echo "Hello, World!" >> /app/logs/business.log
              # Save the exit code.
              retcode=$?
              echo "[$(date)] Business: Task completed with exit code: $retcode"
              # Notify LoongCollector that the task is complete.
              touch /tasksite/tombstone
              echo "[$(date)] Business: Tombstone created, exiting"
              exit $retcode
          # Resource limits
          resources:
            requests:
              cpu: "100m"
              memory: "128Mi"
            limits:
              cpu: "500m"
              memory: "512Mi"
          # Volume mounts
          volumeMounts:
            - name: app-logs
              mountPath: /app/logs
            - name: tasksite
              mountPath: /tasksite
        # LoongCollector sidecar container
        - name: loongcollector
          image: aliyun-observability-release-registry.cn-hongkong.cr.aliyuncs.com/loongcollector/loongcollector:v3.1.1.0-20fa5eb-aliyun
          command: ["/bin/bash", "-c"]
          args:
            - |
              echo "[$(date)] LoongCollector: Starting initialization"
              # Start the LoongCollector service.
              /etc/init.d/loongcollectord start
              # Wait for the configuration to be downloaded and the service to be ready.
              sleep 15
              # Verify the service status.
              if /etc/init.d/loongcollectord status; then
                echo "[$(date)] LoongCollector: Service started successfully"
                touch /tasksite/cornerstone
              else
                echo "[$(date)] LoongCollector: Failed to start service"
                exit 1
              fi
              # Wait for the application container to complete.
              echo "[$(date)] LoongCollector: Waiting for application container to complete"
              until [[ -f /tasksite/tombstone ]]; do
                sleep 2
              done
              echo "[$(date)] LoongCollector: Application task completed, waiting for log transmission"
              # Allow sufficient time for remaining logs to be transmitted.
              sleep 30
              echo "[$(date)] LoongCollector: Stopping service"
              /etc/init.d/loongcollectord stop
              echo "[$(date)] LoongCollector: Shutdown complete"
          # Health check
          livenessProbe:
            exec:
              command: ["/etc/init.d/loongcollectord", "status"]
            initialDelaySeconds: 30
            periodSeconds: 10
            timeoutSeconds: 5
            failureThreshold: 3
          # Resource configuration
          resources:
            requests:
              cpu: "100m"
              memory: "128Mi"
            limits:
              cpu: "500m"
              memory: "512Mi"
          # Environment variable configuration
          env:
            - name: ALIYUN_LOGTAIL_USER_ID
              value: "${your_aliyun_user_id}"
            - name: ALIYUN_LOGTAIL_USER_DEFINED_ID
              value: "${your_machine_group_user_defined_id}"
            - name: ALIYUN_LOGTAIL_CONFIG
              value: "/etc/ilogtail/conf/${your_region_config}/ilogtail_config.json"
            - name: ALIYUN_LOG_ENV_TAGS
              value: "_pod_name_|_pod_ip_|_namespace_|_node_name_"
            # Pod information injection
            - name: "_pod_name_"
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: "_pod_ip_"
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: "_namespace_"
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: "_node_name_"
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
          # Volume mounts
          volumeMounts:
            - name: app-logs
              mountPath: /app/logs
              readOnly: true
            - name: tasksite
              mountPath: /tasksite
            - name: tz-config
              mountPath: /etc/localtime
              readOnly: true
      # Volume definitions
      volumes:
        - name: app-logs
          emptyDir: {}
        - name: tasksite
          emptyDir:
            medium: Memory
            sizeLimit: "10Mi"
        - name: tz-config
          hostPath:
            path: /usr/share/zoneinfo/Asia/Shanghai

Long-lived (Deployment/StatefulSet)

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-demo
  namespace: production
  labels:
    app: nginx-demo
    version: v1.0.0
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
      maxSurge: 1
  selector:
    matchLabels:
      app: nginx-demo
  template:
    metadata:
      labels:
        app: nginx-demo
        version: v1.0.0
    spec:
      terminationGracePeriodSeconds: 600 # 10-minute graceful shutdown period.
      containers:
        # Application container - Web application
        - name: nginx-demo
          image: anolis-registry.cn-zhangjiakou.cr.aliyuncs.com/openanolis/nginx:1.14.1-8.6
          # Startup command and signal handling (POSIX sh syntax, because the script runs under /bin/sh)
          command: ["/bin/sh", "-c"]
          args:
            - |
              # Define the signal handler function.
              _term_handler() {
                echo "[$(date)] [nginx-demo] Caught SIGTERM, starting graceful shutdown..."
                EXIT_CODE=0
                # Send a QUIT signal to Nginx for a graceful stop.
                if [ -n "$NGINX_PID" ]; then
                  kill -QUIT "$NGINX_PID" 2>/dev/null || true
                  echo "[$(date)] [nginx-demo] Sent SIGQUIT to Nginx PID: $NGINX_PID"
                  # Wait for Nginx to stop gracefully.
                  wait "$NGINX_PID"
                  EXIT_CODE=$?
                  echo "[$(date)] [nginx-demo] Nginx stopped with exit code: $EXIT_CODE"
                fi
                # Notify LoongCollector that the application container has stopped.
                echo "[$(date)] [nginx-demo] Writing tombstone file"
                touch /tasksite/tombstone
                exit $EXIT_CODE
              }
              # Register the signal handler (POSIX signal names, without the SIG prefix).
              trap _term_handler TERM INT QUIT
              # Wait for LoongCollector to be ready.
              echo "[$(date)] [nginx-demo]: Waiting for LoongCollector to be ready..."
              until [ -f /tasksite/cornerstone ]; do
                sleep 1
              done
              echo "[$(date)] [nginx-demo]: LoongCollector is ready, starting business logic"
              # Start Nginx.
              echo "[$(date)] [nginx-demo] Starting Nginx..."
              nginx -g 'daemon off;' &
              NGINX_PID=$!
              echo "[$(date)] [nginx-demo] Nginx started with PID: $NGINX_PID"
              # Wait for the Nginx process.
              wait $NGINX_PID
              EXIT_CODE=$?
              # Also notify LoongCollector if the exit was not caused by a signal.
              if [ ! -f /tasksite/tombstone ]; then
                echo "[$(date)] [nginx-demo] Unexpected exit, writing tombstone"
                touch /tasksite/tombstone
              fi
              exit $EXIT_CODE
          # Resource configuration
          resources:
            requests:
              cpu: "200m"
              memory: "256Mi"
            limits:
              cpu: "1000m"
              memory: "1Gi"
          # Volume mounts
          volumeMounts:
            - name: nginx-logs
              mountPath: /var/log/nginx
            - name: tasksite
              mountPath: /tasksite
            - name: tz-config
              mountPath: /etc/localtime
              readOnly: true
        # LoongCollector sidecar container
        - name: loongcollector
          image: aliyun-observability-release-registry.cn-shenzhen.cr.aliyuncs.com/loongcollector/loongcollector:v3.1.1.0-20fa5eb-aliyun
          command: ["/bin/bash", "-c"]
          args:
            - |
              echo "[$(date)] LoongCollector: Starting initialization"
              # Start the LoongCollector service.
              /etc/init.d/loongcollectord start
              # Wait for the configuration to be downloaded and the service to be ready.
              sleep 15
              # Verify the service status.
              if /etc/init.d/loongcollectord status; then
                echo "[$(date)] LoongCollector: Service started successfully"
                touch /tasksite/cornerstone
              else
                echo "[$(date)] LoongCollector: Failed to start service"
                exit 1
              fi
              # Wait for the application container to complete.
              echo "[$(date)] LoongCollector: Waiting for application container to complete"
              until [[ -f /tasksite/tombstone ]]; do
                sleep 2
              done
              echo "[$(date)] LoongCollector: Application task completed, waiting for log transmission"
              # Allow sufficient time for remaining logs to be transmitted.
              sleep 30
              echo "[$(date)] LoongCollector: Stopping service"
              /etc/init.d/loongcollectord stop
              echo "[$(date)] LoongCollector: Shutdown complete"
          # Health check
          livenessProbe:
            exec:
              command: ["/etc/init.d/loongcollectord", "status"]
            initialDelaySeconds: 30
            periodSeconds: 10
            timeoutSeconds: 5
            failureThreshold: 3
          # Resource configuration
          resources:
            requests:
              cpu: "100m"
              memory: "128Mi"
            limits:
              cpu: "2000m"
              memory: "2048Mi"
          # Environment variable configuration
          env:
            - name: ALIYUN_LOGTAIL_USER_ID
              value: "${your_aliyun_user_id}"
            - name: ALIYUN_LOGTAIL_USER_DEFINED_ID
              value: "${your_machine_group_user_defined_id}"
            - name: ALIYUN_LOGTAIL_CONFIG
              value: "/etc/ilogtail/conf/${your_region_config}/ilogtail_config.json"
            # Enable full drain mode to send all logs when the pod stops.
            - name: enable_full_drain_mode
              value: "true"
            # Add Pod environment information as log tags.
            - name: "ALIYUN_LOG_ENV_TAGS"
              value: "_pod_name_|_pod_ip_|_namespace_|_node_name_|_node_ip_"
            # Inject Pod and Node information.
            - name: "_pod_name_"
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: "_pod_ip_"
              valueFrom:
                fieldRef:
                  fieldPath: status.podIP
            - name: "_namespace_"
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
            - name: "_node_name_"
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
            - name: "_node_ip_"
              valueFrom:
                fieldRef:
                  fieldPath: status.hostIP
          # Volume mounts
          volumeMounts:
            - name: nginx-logs
              mountPath: /var/log/nginx
              readOnly: true
            - name: tasksite
              mountPath: /tasksite
            - name: tz-config
              mountPath: /etc/localtime
              readOnly: true
      # Volume definitions
      volumes:
        - name: nginx-logs
          emptyDir: {}
        - name: tasksite
          emptyDir:
            medium: Memory
            sizeLimit: "50Mi"
        - name: tz-config
          hostPath:
            path: /usr/share/zoneinfo/Asia/Shanghai
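Both examples use the same handshake through the shared tasksite volume. The sidecar creates cornerstone when it is ready. The application container waits for that file before it starts, and it creates tombstone when it stops. The following Python sketch models the two primitives the shell loops rely on. Unlike the bash loops, it adds a timeout. The timeout is a design choice for this illustration and is not part of the YAML examples. The function names are hypothetical.

```python
import os
import tempfile
import time


def touch(path: str) -> None:
    """Create the signal file if it does not exist (like the shell `touch`)."""
    with open(path, "a"):
        pass


def wait_for_file(path: str, timeout_s: float, poll_s: float = 1.0) -> bool:
    """Poll for `path` like the `until [ -f ... ]` loops; return False on timeout."""
    deadline = time.monotonic() + timeout_s
    while not os.path.exists(path):
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)
    return True


# Simulate the handshake in a temporary directory that stands in for /tasksite.
tasksite = tempfile.mkdtemp()
cornerstone = os.path.join(tasksite, "cornerstone")
print(wait_for_file(cornerstone, timeout_s=0.1, poll_s=0.02))  # False: sidecar not ready yet
touch(cornerstone)  # the sidecar signals readiness
print(wait_for_file(cornerstone, timeout_s=0.1, poll_s=0.02))  # True
```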

Appendix: Native parsing plugins

In the Processor Configurations section of the Logtail Configuration page, add processors to structure raw logs. To add a processing plugin to an existing collection configuration, follow these steps:

In the navigation pane on the left, choose Logstores and find the target logstore.

Click the icon before its name to expand the logstore.

Click Logtail Configuration. In the configuration list, find the target Logtail configuration and click Manage Logtail Configuration in the Actions column.

On the Logtail configuration page, click Edit.

This section introduces only commonly used processing plugins that cover common log processing use cases. For more features, see Extended processors.

Rules for combining plugins (for LoongCollector / Logtail 2.0 and later):

Native and extended processors can be used independently or combined as needed.

Prioritize native processors because they offer better performance and higher stability.

When native features cannot meet your business needs, add extended processors after the configured native ones for supplementary processing.

Order constraint:

All plugins are executed sequentially in the order they are configured, which forms a processing chain. Note: All native processors must precede any extended processors. After you add an extended processor, you cannot add more native processors.

Regular expression parsing

Use a regular expression to extract log fields and parse the log into key-value pairs. Each field can be queried and analyzed independently.

Example:

[Figure: raw log without any processing, and the result after the regular expression parsing plugin is applied]

Procedure: On the Logtail configuration page, in the processor configuration area, click Add Processor, and then select .

Regular expression: Used to match logs. You can generate it automatically or enter it manually.

Automatic generation:

Click Generate Regular Expression.

In the log sample, select the log content to extract.

Click Generate.

Manual entry: Enter a regular expression based on the log format.

After configuring the expression, click Validate to test if it correctly parses the log.

Extracted field: Specify the names (keys) for the extracted log content (values).

For information about other parameters, see the general configuration parameters in Scenario 2: Structuring logs.
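The following Python sketch shows how the capture groups of a regular expression map, in order, to the extracted field names. It uses Python's re module, whose syntax and behavior may differ from the engine used by the native plugin. The sample log, pattern, and field names are hypothetical, and requiring the whole line to match is an assumption.

```python
import re


def parse_with_regex(line: str, pattern: str, keys: list) -> dict:
    """Map the capture groups of `pattern` to `keys`, in order."""
    match = re.fullmatch(pattern, line)  # assumption: the whole line must match
    if match is None:
        raise ValueError("the log line does not match the regular expression")
    if len(match.groups()) != len(keys):
        raise ValueError("each capture group needs exactly one extracted field name")
    return dict(zip(keys, match.groups()))


log = "2024-08-10 14:57:51 INFO user=alice action=login"
fields = parse_with_regex(log, r"(\S+ \S+) (\w+) user=(\w+) action=(\w+)",
                          ["time", "level", "user", "action"])
print(fields)
```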

Delimiter-based parsing

Use a delimiter to structure log content, parsing it into multiple key-value pairs. This method supports both single-character and multi-character delimiters.

Example:

[Figure: raw log without any processing, and the fields split by the specified character]

Procedure: On the Logtail configuration page, in the processor configuration area, click Add Processor, and then select .

Delimiter: The character used to split log content.

Example: For a CSV file, select Custom and enter a comma (,).

Quote: If a field can contain the delimiter, you must specify a quote character to enclose the field and prevent incorrect parsing.

Extracted field: Set a field name (Key) for each column in the order they appear. The following rules apply:

The field name can contain only letters, digits, and underscores (_).

The field name must start with a letter or an underscore (_).

The maximum length is 128 bytes.

For information about other parameters, see the general configuration parameters in Scenario 2: Structuring logs.
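The following Python sketch shows how the delimiter, quote, and field-name rules interact. It parses one line with the standard csv module and checks each field name against the rules above. The csv module supports only single-character delimiters, so this sketch does not cover multi-character delimiters. Treating a column-count mismatch as an error is an assumption, and the sample values are hypothetical.

```python
import csv
import re

# Letters, digits, and underscores; must start with a letter or an underscore.
_KEY_RULE = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def validate_key(key: str) -> None:
    if not _KEY_RULE.fullmatch(key) or len(key.encode("utf-8")) > 128:
        raise ValueError(f"invalid field name: {key!r}")


def parse_delimited(line: str, keys: list, delimiter: str = ",", quote: str = '"') -> dict:
    """Split one log line on a single-character delimiter, honoring the quote character."""
    for key in keys:
        validate_key(key)
    values = next(csv.reader([line], delimiter=delimiter, quotechar=quote))
    if len(values) != len(keys):  # assumption: a column-count mismatch is an error
        raise ValueError(f"expected {len(keys)} columns, got {len(values)}")
    return dict(zip(keys, values))


# The quoted field contains the delimiter but is kept as one value.
print(parse_delimited('127.0.0.1,"GET /a,b HTTP/1.1",200', ["ip", "request", "status"]))
```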

JSON parsing

Parses an object-type JSON log into key-value pairs.

Example:

[Figure: raw log without any processing, and the automatically extracted standard JSON key-value pairs]

Procedure: On the Logtail configuration page, in the processor configuration area, click Add Processor, and then select .

Original field: The name of the field containing the raw log to be parsed. Defaults to content.

For information about other parameters, see the general configuration parameters in Scenario 2: Structuring logs.
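The following Python sketch shows the basic idea of object-type JSON parsing: each top-level key becomes a field. This section does not describe how the native plugin renders non-string values. The sketch assumes that numbers, booleans, and nested objects are kept as compact JSON text. The function name and sample log are hypothetical.

```python
import json


def parse_json_object(raw: str) -> dict:
    """Turn each top-level key of an object-type JSON log into a field."""
    obj = json.loads(raw)
    if not isinstance(obj, dict):
        raise ValueError("only object-type JSON logs are parsed")
    # Assumption: non-string values are kept as compact JSON text.
    return {key: value if isinstance(value, str) else json.dumps(value, separators=(",", ":"))
            for key, value in obj.items()}


print(parse_json_object('{"url": "/index", "status": 200, "tags": {"env": "prod"}}'))
```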

Nested JSON parsing

Parses a nested JSON log into key-value pairs based on a specified expansion depth.

Example:

[Figure: raw log without any processing; result with expansion depth 0; result with expansion depth 1 (the expansion depth is used as a prefix in both results)]

Procedure: On the Logtail configuration page, in the processor configuration area, click Add Processor, and then select .

Original field: The name of the original field to expand, such as content.

JSON expansion depth: The desired expansion level for the JSON object. 0 (default) expands all levels, 1 expands only the top level, and so on.

JSON key connector: The character for joining nested keys into a single field name. Defaults to an underscore (_).

Expanded key prefix: The prefix to add to the field names after JSON expansion.

Expand Array: Enable this option to expand arrays into indexed key-value pairs.

Example: {"k":["a","b"]} is expanded to {"k[0]":"a","k[1]":"b"}.

If you need to rename an expanded field (for example, from prefix_s_key_k1 to new_field_name), you can add a Rename Fields processor to perform the mapping.

For information about other parameters, see the general configuration parameters in Scenario 2: Structuring logs.
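The following Python sketch models how the expansion depth, JSON key connector, expanded key prefix, and Expand Array options combine. It reproduces the array example above. The exact semantics of the native plugin, such as where the prefix is inserted, are assumptions, and the function name is hypothetical.

```python
import json


def _as_text(value) -> str:
    # Assumption: strings stay as-is; everything else becomes compact JSON text.
    return value if isinstance(value, str) else json.dumps(value, separators=(",", ":"))


def expand_json(raw: str, depth: int = 0, connector: str = "_", prefix: str = "",
                expand_array: bool = False) -> dict:
    """Flatten a nested JSON object; depth 0 expands all levels, 1 only the top level."""
    root = json.loads(raw)
    if not isinstance(root, dict):
        raise ValueError("the original field must contain a JSON object")
    result = {}

    def walk(key: str, value, level: int) -> None:
        can_descend = depth == 0 or level < depth
        if isinstance(value, dict) and can_descend:
            for child_key, child in value.items():
                walk(key + connector + child_key, child, level + 1)
        elif isinstance(value, list) and expand_array and can_descend:
            for index, child in enumerate(value):
                walk(f"{key}[{index}]", child, level + 1)
        else:
            # Leaf value, or the depth limit was reached: store it as text.
            result[key] = _as_text(value)

    for key, value in root.items():
        walk(prefix + key, value, 1)  # top-level keys are level 1
    return result


print(expand_json('{"k": ["a", "b"]}', expand_array=True))
print(expand_json('{"a": {"b": {"c": 1}}}', depth=1))
```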

JSON array parsing

Use the json_extract function to extract JSON objects from a JSON array.

Example:

[Figure: raw log without any processing, and the extracted JSON array structure]

Procedure: In the Processor Configurations section of the Logtail Configuration page, switch the Processing Mode to SPL, configure the SPL Statement, and use the json_extract function to extract JSON objects from the JSON array.

Example: Extract elements from the JSON array in the log field content and store the results in new fields json1 and json2.

* | extend json1 = json_extract(content, '$[0]'), json2 = json_extract(content, '$[1]')

Apache log parsing

Parses log content into key-value pairs based on the LogFormat directive in an Apache configuration file.

Example:

[Figure: raw log without any processing, and the result parsed in Apache Common Log Format]

Procedure: On the Logtail configuration page, in the processor configuration area, click Add Processor, and then select .

Log format: combined

Apache configuration field: The system automatically populates this configuration based on the selected log format.

Important: Verify that the auto-populated content exactly matches the LogFormat directive in your server's Apache configuration file (typically /etc/apache2/apache2.conf).

For information about other parameters, see the general configuration parameters in Scenario 2: Structuring logs.
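For reference, the combined format corresponds to the Apache LogFormat string %h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-agent}i\". The following Python sketch parses one combined-format line with an equivalent regular expression. The field names are hypothetical and may not match the keys that the plugin generates. The sample line is the standard example from the Apache documentation.

```python
import re

# Equivalent of: %h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-agent}i\"
COMBINED = re.compile(
    r'(?P<remote_addr>\S+) (?P<remote_ident>\S+) (?P<remote_user>\S+) '
    r'\[(?P<time_local>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<response_size_bytes>\S+) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)"'
)


def parse_combined(line: str) -> dict:
    """Parse one Apache combined-format access log line into named fields."""
    match = COMBINED.fullmatch(line)
    if match is None:
        raise ValueError("the line is not in Apache combined format")
    return match.groupdict()


sample = ('127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] "GET /apache_pb.gif HTTP/1.0" '
          '200 2326 "http://www.example.com/start.html" "Mozilla/4.08 [en] (Win98; I ;Nav)"')
print(parse_combined(sample))
```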

Data masking

Mask sensitive data in logs.

Example:

[Figure: raw log without any processing, and the masking result]

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: The field that contains the log content before parsing.

Data Masking Method:

const: Replaces sensitive content with a constant string.

md5: Replaces sensitive content with its MD5 hash.

Replacement String: If Data Masking Method is set to const, enter a string to replace the sensitive content.

Content Expression that Precedes Replaced Content: The regular expression that matches the content immediately before the sensitive content. The expression must be written in RE2 syntax.

Content Expression to Match Replaced Content: The regular expression used to match sensitive content. The expression must be written in RE2 syntax.
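The following Python sketch shows how the two expressions work together. The preceding expression anchors the match and is kept. Only the content matched by the second expression is replaced, either with a constant string or with its MD5 hash. The sketch uses Python's re module instead of RE2, although the sample patterns are valid in both. The function name and sample log are hypothetical.

```python
import hashlib
import re


def mask(text: str, preceding: str, sensitive: str, method: str = "const",
         replacement: str = "********") -> str:
    """Replace content matched by `sensitive` when it directly follows `preceding`."""
    pattern = re.compile(f"(?:{preceding})(?P<secret>{sensitive})")

    def substitute(match: re.Match) -> str:
        secret = match.group("secret")
        if method == "const":
            new_value = replacement
        elif method == "md5":
            new_value = hashlib.md5(secret.encode("utf-8")).hexdigest()
        else:
            raise ValueError(f"unknown masking method: {method}")
        # Keep the preceding content; only the sensitive part is rewritten.
        kept = match.group(0)[: match.start("secret") - match.start()]
        return kept + new_value

    return pattern.sub(substitute, text)


log = '"account":"1812213231432969","password":"04a23f38"'
print(mask(log, r'"password":"', r'[^"]+'))
print(mask(log, r'"password":"', r'[^"]+', method="md5"))
```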

Time parsing

Parse the time field in the log and set the parsing result as the log's __time__ field.

Example:

[Figure: raw log without any processing, and the time parsing result]

Procedure: In the Processor Configurations section of the Logtail Configuration page, click Add Processor and select :

Original Field: The field that contains the log content before parsing.

Time Format: Set the time format that corresponds to the timestamps in the log.

Time Zone: Select the time zone for the log time field. By default, this is the time zone of the environment where the LoongCollector (Logtail) process is running.
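The following Python sketch converts a log time string to the Unix timestamp that ends up in __time__. It assumes a strftime-style Time Format, such as %Y-%m-%d %H:%M:%S. It also assumes that the configured time zone applies only when the string carries no offset of its own. Both are assumptions about the plugin, and the function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone


def parse_log_time(value: str, time_format: str, tz_offset_hours: float) -> int:
    """Return the Unix timestamp (seconds) for a log time string."""
    parsed = datetime.strptime(value, time_format)
    if parsed.tzinfo is None:
        # Assumption: the configured time zone applies only to timestamps without an offset.
        parsed = parsed.replace(tzinfo=timezone(timedelta(hours=tz_offset_hours)))
    return int(parsed.timestamp())


# The same wall-clock time in UTC+8.
print(parse_log_time("2024-08-10 14:57:51", "%Y-%m-%d %H:%M:%S", 8))
```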

Appendix: Regular expression limitations (container filtering)

The regular expressions for container filtering use the Go RE2 engine, which has syntax limitations compared to engines like PCRE.

1. Named group syntax differences

Go uses the (?P<name>...) syntax for a named group and does not support PCRE's (?<name>...) syntax.

Correct example:

(?P<year>\d{4})

Incorrect example:

(?<year>\d{4})

2. Unsupported regular expression features

RE2 does not support the following common but complex regular expression features:

Assertions: (?=...), (?!...), (?<=...), and (?<!...)

Conditional expressions: (?(condition)true|false)

Recursive matching: (?R) and (?0)

Subroutine references: (?&name) and (?P>name)

Atomic groups: (?>...)

3. Recommendations

To ensure compatibility, select the Golang (RE2) mode in tools like Regex101 when debugging regular expressions. If you use any unsupported syntax, the plugin will fail to parse or match.
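A quick textual scan can catch the constructs listed above before you paste an expression into a container filter. The following Python sketch is a heuristic and not an RE2 parser. It skips parentheses escaped with a backslash, but it can still misreport unusual patterns. The function name is hypothetical. The (?<name>...) check follows the text above. Note that Go added support for that form in Go 1.22, so newer builds may accept it. The (?P<name>...) form works in all Go versions.

```python
import re

# (probe, label) pairs for the RE2-incompatible constructs listed above.
# Each probe is prefixed with (?<!\\) so that escaped parentheses such as \( are skipped.
_PROBES = [
    (r"\(\?[=!]", "lookahead assertion"),
    (r"\(\?<[=!]", "lookbehind assertion"),
    (r"\(\?\(", "conditional expression"),
    (r"\(\?(?:R|\d+)\)", "recursive matching"),
    (r"\(\?(?:&|P>)", "subroutine reference"),
    (r"\(\?>", "atomic group"),
    (r"\(\?<(?![=!])", "PCRE-style named group, use (?P<name>...)"),
]


def find_unsupported(pattern: str) -> list:
    """Return labels for RE2-incompatible constructs found in `pattern`."""
    return [label for probe, label in _PROBES if re.search(r"(?<!\\)" + probe, pattern)]


print(find_unsupported(r"(?P<year>\d{4})"))
print(find_unsupported(r"(?<year>\d{4})-(?=\d)"))
```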
