This topic describes the major updates and bug fixes in the Realtime Compute for Apache Flink release on May 29, 2024.

This version is rolled out incrementally using a canary release strategy. Check the latest announcement on the right side of the management console of Realtime Compute for Apache Flink for the upgrade schedule. New features are available only after the upgrade completes for your account. To request an early upgrade, submit a ticket.

Overview

This release includes platform updates and engine updates in Ververica Runtime (VVR) 8.0.7.

Platform updates

This release improves system stability, operations and maintenance (O&M), and ease of use:

- Cross-zone high availability optimization: Convert the compute units (CUs) of an existing namespace from single-zone to cross-zone directly, without creating a new namespace or migrating deployments.

- Operator-level state TTL: Configure a separate state time-to-live (TTL) value for each operator in expert mode of resource configuration. Supported in VVR 8.0.7 and later for SQL deployments only.

- Visual Studio Code extension: Develop, deploy, and run SQL, JAR, and Python deployments in your local environment. Sync updated deployment configurations from the development console of Realtime Compute for Apache Flink.

The data lineage UI and the Deployments page UI are also optimized.

Engine updates

VVR 8.0.7 is built on Apache Flink 1.17.2 and includes the following updates:

Real-time lakehouse: The Apache Paimon connector SDK is upgraded to support the data lake format used by Apache Paimon 0.9.

SQL optimization:

- *State TTL hints*: Set state TTL values per operator for regular join and group aggregation operators directly in your SQL queries, without modifying deployment configurations.

- *Named parameters in user-defined functions (UDFs)*: Named parameters are supported in UDFs to improve development efficiency and reduce maintenance costs.
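Per-operator state TTL can be pictured as a deployment-wide default that individual operators may override. The Python sketch below models only that lookup and the expiry rule; `TtlPolicy`, the operator names, and the millisecond values are hypothetical and do not correspond to any Realtime Compute for Apache Flink API, hint syntax, or configuration key.

```python
from dataclasses import dataclass, field

DAY_MS = 24 * 60 * 60 * 1000  # one day in milliseconds


@dataclass
class TtlPolicy:
    """Hypothetical model of a deployment-wide TTL with per-operator overrides."""

    default_ttl_ms: int
    per_operator_ms: dict[str, int] = field(default_factory=dict)

    def ttl_for(self, operator: str) -> int:
        # An operator-level TTL, if configured, overrides the deployment-wide value.
        return self.per_operator_ms.get(operator, self.default_ttl_ms)

    def is_expired(self, operator: str, last_update_ms: int, now_ms: int) -> bool:
        # State counts as expired once it has gone without updates for longer than its TTL.
        return now_ms - last_update_ms > self.ttl_for(operator)


# Only the (hypothetical) join operator keeps state for 7 days;
# every other operator falls back to the 1-day default.
policy = TtlPolicy(default_ttl_ms=DAY_MS, per_operator_ms={"regular_join": 7 * DAY_MS})

now = 10 * DAY_MS
last_update = now - 2 * DAY_MS  # state last updated two days ago

print(policy.is_expired("group_agg", last_update, now))     # True
print(policy.is_expired("regular_join", last_update, now))  # False
```

Keeping a long TTL only on the operator that needs it, instead of raising the value for the whole deployment, is what keeps the total state size of the other operators small.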

Connector updates:

- *MongoDB connector*: Now generally available (GA). Supports change data capture (CDC) source tables, dimension tables, and result tables.

- *MySQL connector*: Four improvements in this release:

  - The `op_type` virtual column passes operation types (`+I`, `+U`/`-U`, `-D`) to downstream systems, enabling business logic and data cleaning based on operation type.

  - Read performance is improved for MySQL tables with Decimal-type primary keys, and SourceRecords from large tables are processed in parallel.

  - CDC source reuse: When a deployment contains multiple MySQL CDC source tables with identical configurations (excluding database name, table name, and server ID), the tables are merged into a single connection. This significantly reduces the number of connections and the binlog listening load on the MySQL server.

  - Buffered execution: Configure the `sink.ignore-null-when-update` parameter to enable buffered execution, which improves processing performance several-fold.

- *ApsaraDB for Redis connector*: Two improvements:

  - When you create a dimension table or result table of the HashMap type, different DDL (Data Definition Language) statements are supported for non-primary key columns. This improves code readability.

  - Key prefixes and delimiters are now configurable to meet data governance requirements.

- *ApsaraMQ for RocketMQ connector*: Buffered reading is supported, improving throughput and reducing resource costs.

- *Apache Iceberg connector*: Apache Iceberg 1.5 is now supported.
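The merge rule for CDC source reuse can be illustrated with a small grouping function: source tables whose options are identical once the database name, table name, and server ID are set aside fall into one group, and each group corresponds to one shared connection. This is a hypothetical Python sketch of the rule as stated above, not the connector's implementation. The option keys mirror common MySQL CDC option names, and `reuse_key` and `group_sources` are illustrative helpers.

```python
from collections import defaultdict

# Options that may differ between source tables that still share one connection
# (database name, table name, and server ID, per the release note).
EXCLUDED_OPTIONS = frozenset({"database-name", "table-name", "server-id"})


def reuse_key(options: dict[str, str]) -> tuple[tuple[str, str], ...]:
    """Return the options that must match for two sources to be merged (hypothetical helper)."""
    return tuple(sorted((k, v) for k, v in options.items() if k not in EXCLUDED_OPTIONS))


def group_sources(sources: dict[str, dict[str, str]]) -> list[list[str]]:
    """Group source table names that could share one connection (hypothetical helper)."""
    groups: dict[tuple[tuple[str, str], ...], list[str]] = defaultdict(list)
    for name, options in sources.items():
        groups[reuse_key(options)].append(name)
    # Sort for a deterministic result.
    return sorted(sorted(names) for names in groups.values())


sources = {
    "orders": {"hostname": "db1", "port": "3306", "username": "flink",
               "database-name": "shop", "table-name": "orders", "server-id": "5401"},
    "customers": {"hostname": "db1", "port": "3306", "username": "flink",
                  "database-name": "crm", "table-name": "customers", "server-id": "5402"},
    "audit": {"hostname": "db2", "port": "3306", "username": "flink",
              "database-name": "ops", "table-name": "audit", "server-id": "5403"},
}

print(group_sources(sources))
```

Here `orders` and `customers` differ only in excluded options, so they would be read over one connection, while `audit` targets a different host and keeps its own. The three source tables therefore need two connections instead of three.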

Catalog management: View information is no longer displayed for MySQL catalogs. MySQL views are logical structures that cannot read or write data, and their inclusion previously caused data operation errors.

Security:

- Hadoop 2.x is now supported for Hadoop clusters with Kerberos authentication enabled.

- Sensitive information such as connector configurations is masked in logs.
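The release note does not describe how log masking works. One common approach is to replace the values of options whose names look sensitive before the options are written to a log; the Python sketch below shows that approach under stated assumptions. The `SENSITIVE_FRAGMENTS` list, the `******` mask, and `mask_options` are hypothetical and are not taken from Realtime Compute for Apache Flink.

```python
# Hypothetical name fragments that mark an option as sensitive.
SENSITIVE_FRAGMENTS = ("password", "secret", "accesskey", "token")
MASK = "******"  # hypothetical placeholder written in place of the real value


def _normalize(key: str) -> str:
    # Compare names case-insensitively and ignore common separators,
    # so "accessKeySecret" and "access-key.secret" are treated the same.
    return key.lower().replace("-", "").replace("_", "").replace(".", "")


def mask_options(options: dict[str, str]) -> dict[str, str]:
    """Return a copy of connector options that is safe to write to a log."""
    return {
        key: MASK if any(f in _normalize(key) for f in SENSITIVE_FRAGMENTS) else value
        for key, value in options.items()
    }


options = {
    "connector": "mysql",
    "hostname": "db1",
    "username": "flink",
    "password": "s3cret!",
    "accessKeySecret": "abc123",
}
print(mask_options(options))
```

Matching on option names rather than values keeps the check cheap and predictable, at the cost of missing secrets stored under unexpected names.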

Upgrade notes

After the upgrade completes for your account, upgrade the VVR engine to this version. For instructions, see Upgrade the engine version of a deployment.

Features

|

Feature |

Description |

References |

|

Cross-zone high availability optimization |

The CUs of an existing namespace can be converted between the single-zone type and the cross-zone type. |

|

|

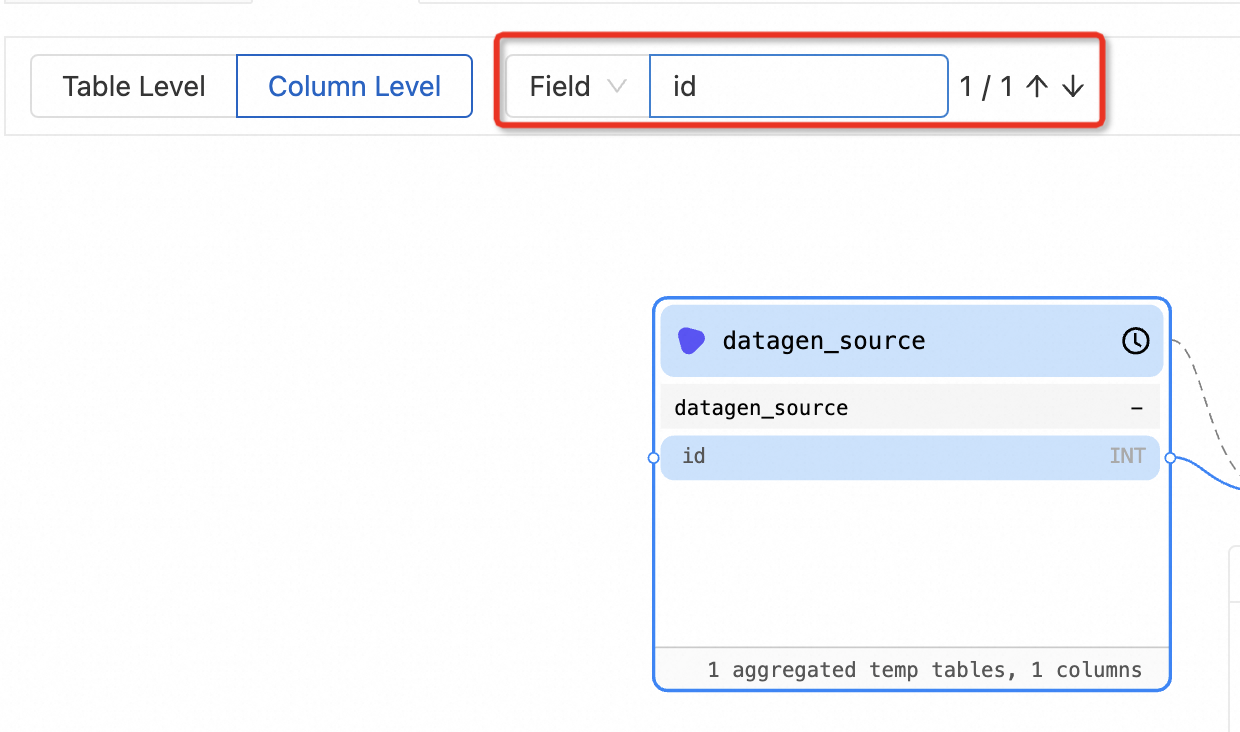

Data lineage optimization |

If multiple matches are found during the search for a field by name, you can press the up and down keys to quickly locate a result to view data lineage information.

|

|

|

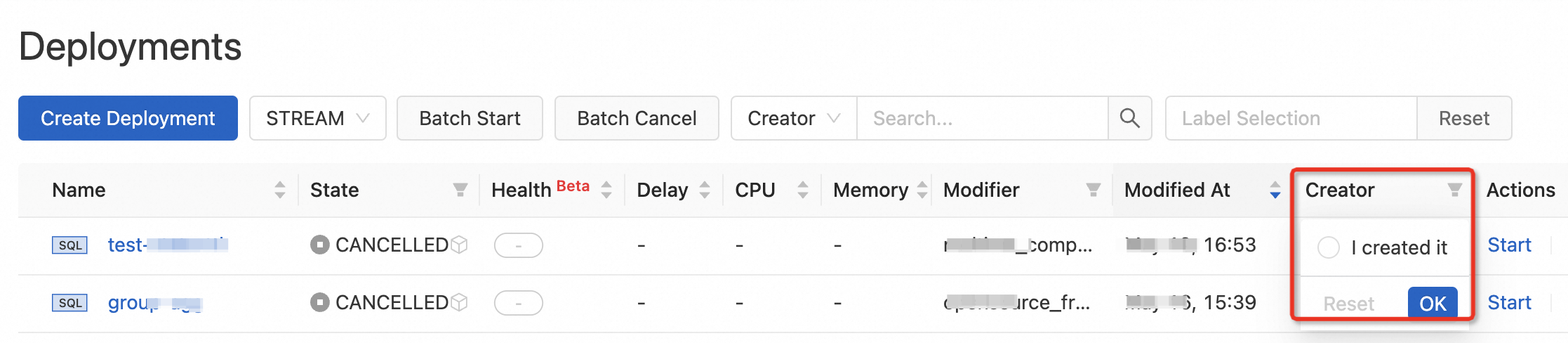

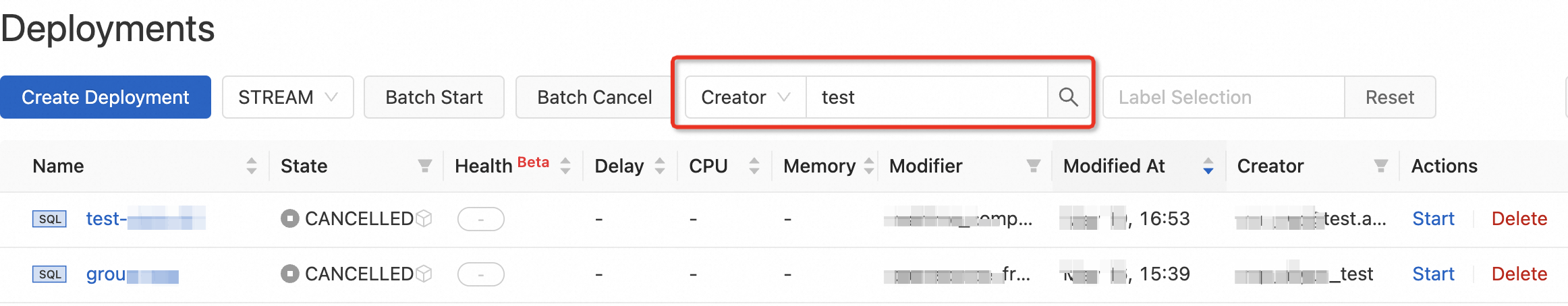

Creator field on the Deployments page |

The Creator field can be displayed on the Deployments page. To display the Creator field, click the

|

N/A |

|

Optimized permission management |

By default, the creator of a workspace, such as an Alibaba Cloud account, a Resource Access Management (RAM) user, or a RAM role, is assigned the owner role in the namespaces that belong to the workspace. |

|

|

Optimized state compatibility check for SQL deployments |

If you start a SQL deployment by resuming from the latest state, the system automatically detects deployment changes. We recommend that you click Click to detect next to State Compatibility to perform a state compatibility check and determine the subsequent actions based on the compatibility result. |

|

|

Visual Studio Code extension |

This extension allows you to develop, deploy, and run SQL, JAR, and Python deployments in the on-premises environment. It also allows you to synchronize updated deployment configurations from the development console of Realtime Compute for Apache Flink. |

|

|

Operator-level state TTL |

This feature is suitable for scenarios in which only specific operators require a large state TTL value. You can use multiple methods to configure a state TTL value for an operator to control its state size. This reduces the resource consumption of large-state deployments.

|

|

|

Named parameters in UDFs |

This feature improves development efficiency and reduces maintenance costs. |

|

|

Enhanced MySQL connector |

|

|

|

Enhanced ApsaraDB for Redis connector |

|

|

|

Buffered reading for ApsaraMQ for RocketMQ instances |

This feature improves processing efficiency and reduces resource costs. |

|

|

Removal of view information in MySQL catalogs |

The view information is no longer displayed for MySQL catalogs because a MySQL view is a logical structure and does not store data. |

|

|

Enhanced compatibility for Hadoop clusters that have Kerberos authentication enabled. |

Hadoop 2.x is supported for Hadoop clusters that have Kerberos authentication enabled. |

Register a Hive cluster that supports Kerberos authentication |

|

Enhanced Apache Iceberg connector |

Apache Iceberg 1.5 is supported. |

Fixed issues

- Hologres connector (VVR 8.0.5 or 8.0.6): WHERE clause pushdown could produce incorrect query results.

- Simple Log Service connector: Data loss could occur during failover because the source table continued committing data at the consumer offset.

- State management: If TTL was configured for a `ValueState` object but not for a `MapState` object, the state stored in the `ValueState` object could be lost.

- Dynamic complex event processing (CEP): Deserialization results for the `WithinType.PREVIOUS_AND_CURRENT` parameter could be inconsistent.

- Monitoring metrics: The `currentEmitEventTimeLag` value displayed on the Realtime Compute for Apache Flink web UI was inconsistent with the value displayed on the monitoring page of the console.

- All issues fixed in Apache Flink 1.17.2. For details, see the Apache Flink 1.17.2 release announcement.