Realtime Compute for Apache Flink supports both stream and batch processing. Use batch processing when your input data is bounded—that is, the complete dataset is known before execution. This is typical for daily ETL jobs, data warehouse layer computations, and historical data reprocessing.

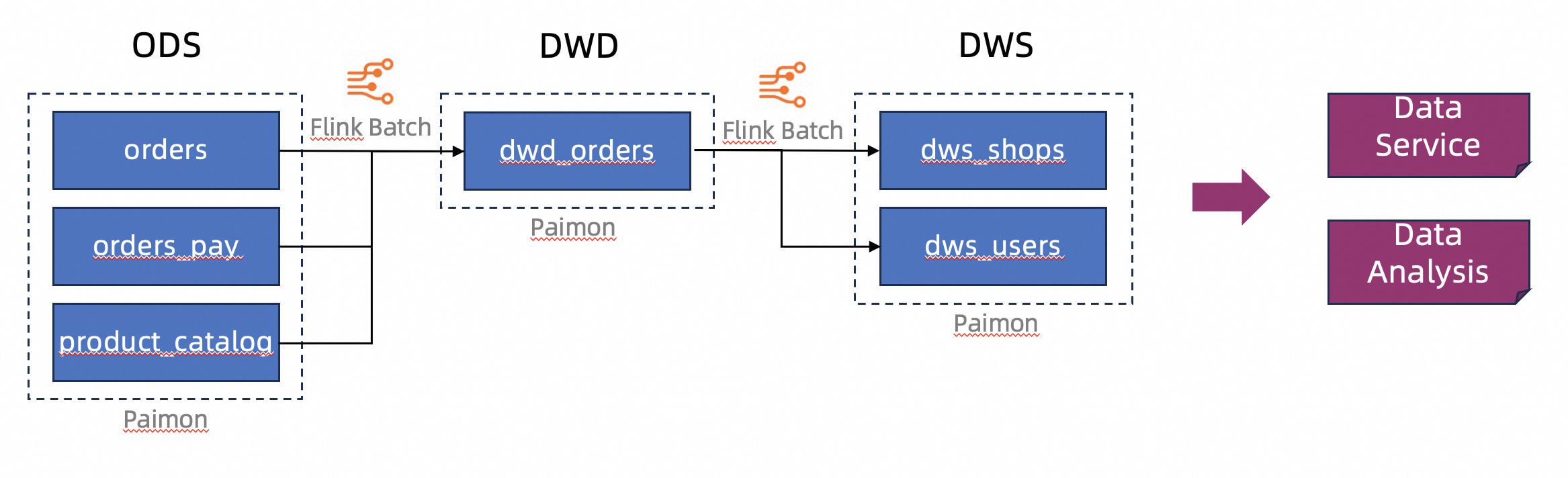

This tutorial walks through an end-to-end example: processing e-commerce order data through a three-layer data warehouse structure (ODS → DWD → DWS) stored in Apache Paimon, then orchestrating the batch deployments into a workflow.

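In this tutorial, you perform the following steps: set up an Apache Paimon catalog, create ODS tables and insert test data, create DWD and DWS tables, create and deploy batch drafts, start the deployments and verify the results, and orchestrate the deployments as a workflow.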

Supported batch processing features

Realtime Compute for Apache Flink supports batch processing across the following features:

SQL draft development: Create batch drafts on the Drafts tab of the SQL Editor page, then deploy and run them as batch deployments. For more information, see Job development map.

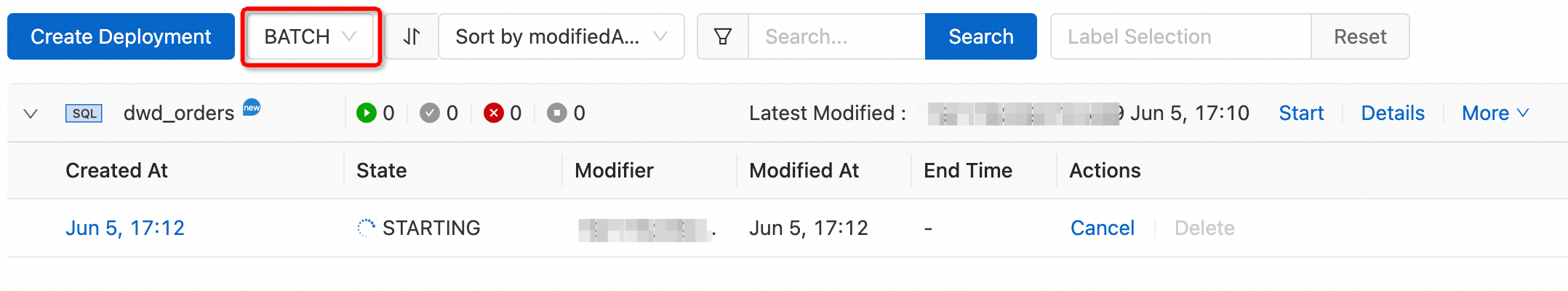

Deployment management: Deploy JAR or Python batch drafts directly from the Deployments page. Select BATCH from the deployment type dropdown to filter batch deployments, then expand a deployment to view its jobs. For more information, see Deployment management.

Scripts: Execute DDL statements or short query statements on the Scripts tab of the SQL Editor page. Statements run in a pre-created session, enabling low-latency queries by reusing session resources. For more information, see Scripts.

Catalogs: Create and view catalogs containing database and table metadata on the Catalogs page or the Catalogs tab of the SQL Editor page. For more information, see Data Management.

Workflows: Configure task dependencies visually on the Workflows page. Tasks are linked to batch deployments and run in dependency order, with support for manual and periodic scheduling. For more information, see Task orchestration (Public preview).

Queue management: Divide workspace resources into queues to prevent resource contention among stream deployments, batch deployments, and deployments of different priorities. For more information, see Manage resource queues.

Prerequisites

Before you begin, ensure that you have:

A Realtime Compute for Apache Flink workspace (see Create a workspace)

Object Storage Service (OSS) activated with a bucket using the Standard storage class (see Get started with the OSS console and Storage class)

Ververica Runtime (VVR) 8.0.5 or later — required for the Apache Paimon integration in this tutorial

Example overview

This tutorial processes business data from an e-commerce platform and stores it in lakehouse format in Apache Paimon. The data flows through a three-layer warehouse structure:

Operational data store (ODS): Raw order data ingested from external sources.

Data warehouse detail (DWD): Cleansed and joined order data.

Data warehouse service (DWS): Aggregated daily totals by shop and user.

Preparations

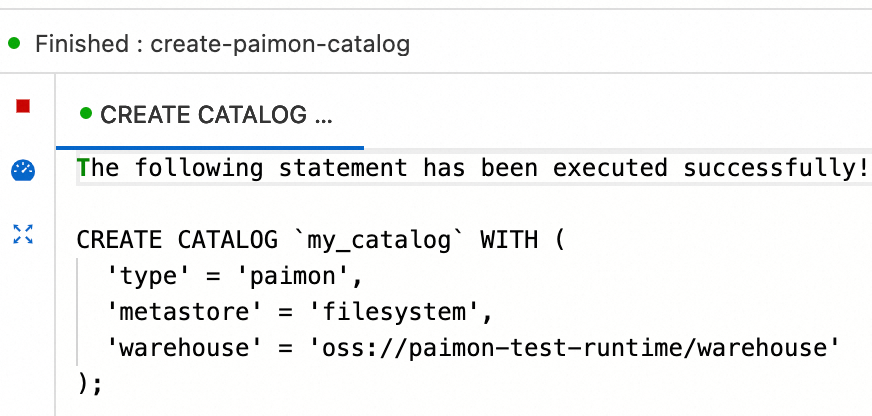

Before running the main steps, set up an Apache Paimon catalog to store all tables.

Create a script on the Scripts tab of the SQL Editor page.

In the script editor, enter the following SQL statement and click Run on the left side of the script editor.

CREATE CATALOG `my_catalog` WITH (
  'type' = 'paimon',
  'metastore' = 'filesystem',  -- stores metadata on the filesystem (not Hive Metastore)
  'warehouse' = '<warehouse>',  -- format: oss://<bucket>/<object>
  'fs.oss.endpoint' = '<fs.oss.endpoint>',
  'fs.oss.accessKeyId' = '<fs.oss.accessKeyId>',
  'fs.oss.accessKeySecret' = '<fs.oss.accessKeySecret>'
);

Replace the placeholders with your actual values:

| Parameter | Description | Required | Remarks |
| --- | --- | --- | --- |
| type | Catalog type | Yes | Set to paimon. |
| metastore | Metadata storage type | Yes | Set to filesystem. For other types, see Manage Paimon catalogs. |
| warehouse | Data warehouse directory in OSS | Yes | Format: oss://<bucket>/<object>. Find your bucket and object path in the OSS console. |
| fs.oss.endpoint | OSS endpoint | No | Required if the OSS bucket is in a different region from your Flink workspace, or belongs to a different Alibaba Cloud account. See Regions and endpoints. |
| fs.oss.accessKeyId | AccessKey ID with read and write permissions on the OSS bucket | No | Required under the same cross-region or cross-account conditions as fs.oss.endpoint. See Create an AccessKey pair. |
| fs.oss.accessKeySecret | AccessKey secret corresponding to the AccessKey ID | No | |

Verify that the catalog was created. If the message "The following statement has been executed successfully!" appears, the catalog is created. View the catalog on the Catalogs page or the Catalogs tab of the SQL Editor page.
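The split between required and optional parameters can be made concrete with a short sketch. The Python helper below is hypothetical (the name build_create_catalog_sql and its arguments are illustrative and not part of any Flink or Paimon API). It renders the CREATE CATALOG statement and includes the fs.oss.* options only when you pass them, on the assumption that the three options are always supplied together.

```python
def build_create_catalog_sql(name, warehouse, endpoint=None,
                             access_key_id=None, access_key_secret=None):
    """Render a CREATE CATALOG statement for a filesystem-backed Paimon catalog.

    Hypothetical helper for illustration; it is not part of any Flink or
    Paimon API and does not escape quotes inside option values.
    """
    if not warehouse.startswith("oss://"):
        raise ValueError("warehouse must use the format oss://<bucket>/<object>")

    # Options marked as required in the parameter table.
    options = {
        "type": "paimon",
        "metastore": "filesystem",
        "warehouse": warehouse,
    }

    # Options that are only needed for cross-region or cross-account buckets.
    optional = {
        "fs.oss.endpoint": endpoint,
        "fs.oss.accessKeyId": access_key_id,
        "fs.oss.accessKeySecret": access_key_secret,
    }
    provided = {key: value for key, value in optional.items() if value is not None}
    # Assumption: the three fs.oss.* options are supplied together or not at all.
    if provided and len(provided) != len(optional):
        raise ValueError("set all three fs.oss.* options or none of them")
    options.update(provided)

    body = ",\n".join(f"  '{key}' = '{value}'" for key, value in options.items())
    return f"CREATE CATALOG `{name}` WITH (\n{body}\n);"


# Same-region, same-account bucket: the fs.oss.* options are left out.
# "my-bucket/warehouse" is a placeholder path, not a real bucket.
print(build_create_catalog_sql("my_catalog", "oss://my-bucket/warehouse"))
```

Paste the generated statement into the script editor as usual. Generating it this way mainly helps when you maintain several catalogs that differ only in the warehouse path.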

Step 1: Create ODS tables and insert test data

In this tutorial, test data is inserted directly into ODS tables to simplify the example. In production, Realtime Compute for Apache Flink uses stream processing to read data from external sources and write it to the data lake as ODS-layer data. For more information, see Get started with Apache Paimon.

In the script editor on the Scripts tab, enter and select the following SQL statements, then click Run on the left side of the script editor.

CREATE DATABASE `my_catalog`.`order_dw`;

USE `my_catalog`.`order_dw`;

-- ODS table: raw orders
CREATE TABLE orders (
    order_id BIGINT,
    user_id STRING,
    shop_id BIGINT,
    product_id BIGINT,
    buy_fee BIGINT,
    create_time TIMESTAMP,
    update_time TIMESTAMP,
    state INT
);

-- ODS table: payment records
CREATE TABLE orders_pay (
    pay_id BIGINT,
    order_id BIGINT,
    pay_platform INT,
    create_time TIMESTAMP
);

-- ODS table: product categories
CREATE TABLE product_catalog (
    product_id BIGINT,
    catalog_name STRING
);

-- Insert test data
INSERT INTO orders VALUES
(100001, 'user_001', 12345, 1, 5000, TO_TIMESTAMP('2023-02-15 16:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100002, 'user_002', 12346, 2, 4000, TO_TIMESTAMP('2023-02-15 15:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100003, 'user_003', 12347, 3, 3000, TO_TIMESTAMP('2023-02-15 14:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100004, 'user_001', 12347, 4, 2000, TO_TIMESTAMP('2023-02-15 13:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100005, 'user_002', 12348, 5, 1000, TO_TIMESTAMP('2023-02-15 12:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100006, 'user_001', 12348, 1, 1000, TO_TIMESTAMP('2023-02-15 11:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100007, 'user_003', 12347, 4, 2000, TO_TIMESTAMP('2023-02-15 10:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1);

INSERT INTO orders_pay VALUES
(2001, 100001, 1, TO_TIMESTAMP('2023-02-15 17:40:56')),
(2002, 100002, 1, TO_TIMESTAMP('2023-02-15 17:40:56')),
(2003, 100003, 0, TO_TIMESTAMP('2023-02-15 17:40:56')),
(2004, 100004, 0, TO_TIMESTAMP('2023-02-15 17:40:56')),
(2005, 100005, 0, TO_TIMESTAMP('2023-02-15 18:40:56')),
(2006, 100006, 0, TO_TIMESTAMP('2023-02-15 18:40:56')),
(2007, 100007, 0, TO_TIMESTAMP('2023-02-15 18:40:56'));

INSERT INTO product_catalog VALUES
(1, 'phone_aaa'),
(2, 'phone_bbb'),
(3, 'phone_ccc'),
(4, 'phone_ddd'),
(5, 'phone_eee');

All three ODS tables are Apache Paimon append-only tables without primary keys. They have better batch write performance than primary key tables, but do not support primary key-based updates.

The execution result contains multiple sub-tabs:

If the message "The following statement has been executed successfully!" appears, the DDL statements ran successfully.

If a job ID is returned, the DML statements (INSERT) created a Realtime Compute for Apache Flink deployment running in the session. Click Flink UI on the left side of the Results tab to view execution status. Wait a few seconds for the statements to complete.

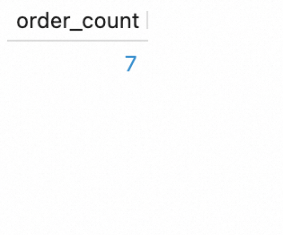

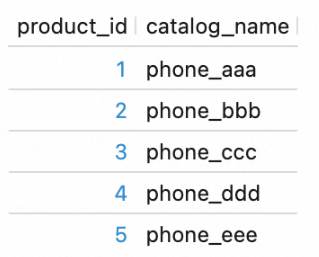

Verify the data by querying the ODS tables. In the script editor, enter and select the following SQL statements, then click Run.

SELECT count(*) as order_count FROM `my_catalog`.`order_dw`.`orders`;
SELECT count(*) as pay_count FROM `my_catalog`.`order_dw`.`orders_pay`;
SELECT * FROM `my_catalog`.`order_dw`.`product_catalog`;

View the results on the Results tab of each query.

Step 2: Create DWD and DWS tables

In the script editor on the Scripts tab, enter and select the following SQL statements, then click Run.

USE `my_catalog`.`order_dw`;

-- DWD table: joined order details
CREATE TABLE dwd_orders (
    order_id BIGINT,
    order_user_id STRING,
    order_shop_id BIGINT,
    order_product_id BIGINT,
    order_product_catalog_name STRING,
    order_fee BIGINT,
    order_create_time TIMESTAMP,
    order_update_time TIMESTAMP,
    order_state INT,
    pay_id BIGINT,
    pay_platform INT COMMENT 'platform 0: phone, 1: pc',
    pay_create_time TIMESTAMP
) WITH (
    'sink.parallelism' = '2'  -- required: Paimon append-only tables do not support auto parallelism inference
);

-- DWS table: daily aggregates by user
CREATE TABLE dws_users (
    user_id STRING,
    ds STRING,
    total_fee BIGINT COMMENT 'Total amount of payment that is complete on the current day'
) WITH (
    'sink.parallelism' = '2'  -- required: same reason as dwd_orders
);

-- DWS table: daily aggregates by shop
CREATE TABLE dws_shops (
    shop_id BIGINT,
    ds STRING,
    total_fee BIGINT COMMENT 'Total amount of payment that is complete on the current day'
) WITH (
    'sink.parallelism' = '2'  -- required: same reason as dwd_orders
);

All three tables are Apache Paimon append-only tables. When using an Apache Paimon append-only table as a Flink sink, sink.parallelism must be explicitly configured. Omitting this parameter may cause an error.

Step 3: Create batch drafts and deploy them

Create one batch draft per layer and deploy each as a batch deployment.

Create and deploy the DWD draft

Go to Development > ETL, then create a blank batch draft named dwd_orders. Select a VVR version with the RECOMMENDED label.

Copy the following SQL into the draft editor.

-- INSERT OVERWRITE rewrites the entire append-only table on each run
INSERT OVERWRITE my_catalog.order_dw.dwd_orders
SELECT
    o.order_id,
    o.user_id,
    o.shop_id,
    o.product_id,
    c.catalog_name,
    o.buy_fee,
    o.create_time,
    o.update_time,
    o.state,
    p.pay_id,
    p.pay_platform,
    p.create_time
FROM
    my_catalog.order_dw.orders as o,
    my_catalog.order_dw.product_catalog as c,
    my_catalog.order_dw.orders_pay as p
WHERE o.product_id = c.product_id AND o.order_id = p.order_id;

In the upper-right corner of the SQL Editor page, click Deploy, then click OK.
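To see what the DWD query produces before you run it, the join can be modeled locally. The following Python sketch is not Flink code. It reproduces the inner-join semantics of the WHERE clause on the Step 1 test rows, reduced to the columns that matter, and assumes that product_id is unique in product_catalog (true for the test data). The function name join_dwd is illustrative.

```python
# ODS test rows from Step 1, reduced to the columns the join needs.
orders = [  # (order_id, user_id, shop_id, product_id, buy_fee)
    (100001, "user_001", 12345, 1, 5000),
    (100002, "user_002", 12346, 2, 4000),
    (100003, "user_003", 12347, 3, 3000),
    (100004, "user_001", 12347, 4, 2000),
    (100005, "user_002", 12348, 5, 1000),
    (100006, "user_001", 12348, 1, 1000),
    (100007, "user_003", 12347, 4, 2000),
]
orders_pay = [  # (pay_id, order_id, pay_platform)
    (2001, 100001, 1), (2002, 100002, 1), (2003, 100003, 0), (2004, 100004, 0),
    (2005, 100005, 0), (2006, 100006, 0), (2007, 100007, 0),
]
# Assumption: product_id is unique, so a dict lookup models the join.
product_catalog = {1: "phone_aaa", 2: "phone_bbb", 3: "phone_ccc",
                   4: "phone_ddd", 5: "phone_eee"}


def join_dwd(orders, orders_pay, product_catalog):
    """Inner-join orders with product names and payments, as the WHERE clause does."""
    pays_by_order = {}
    for pay_id, order_id, platform in orders_pay:
        pays_by_order.setdefault(order_id, []).append((pay_id, platform))

    rows = []
    for order_id, user_id, shop_id, product_id, fee in orders:
        if product_id not in product_catalog:
            continue  # inner join: no matching product, no output row
        # An order with no payment record yields no output rows at all.
        for pay_id, platform in pays_by_order.get(order_id, []):
            rows.append((order_id, user_id, shop_id, product_id,
                         product_catalog[product_id], fee, pay_id, platform))
    return rows


dwd_rows = join_dwd(orders, orders_pay, product_catalog)
print(len(dwd_rows))  # 7: every test order has one product and one payment
```

Because the join is an inner join, an order without a payment record never reaches dwd_orders. Keep this in mind if your production data contains unpaid orders that you want to retain in the detail layer.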

Create and deploy the DWS drafts

Create two blank batch drafts named dws_shops and dws_users, following the same steps as for the DWD draft.

Copy the following SQL into the dws_shops draft editor:

-- INSERT OVERWRITE rewrites the entire append-only table on each run
INSERT OVERWRITE my_catalog.order_dw.dws_shops
SELECT
    order_shop_id,
    DATE_FORMAT(pay_create_time, 'yyyyMMdd') as ds,
    SUM(order_fee) as total_fee
FROM my_catalog.order_dw.dwd_orders
WHERE pay_id IS NOT NULL AND order_fee IS NOT NULL
GROUP BY order_shop_id, DATE_FORMAT(pay_create_time, 'yyyyMMdd');

Copy the following SQL into the dws_users draft editor:

-- INSERT OVERWRITE rewrites the entire append-only table on each run
INSERT OVERWRITE my_catalog.order_dw.dws_users
SELECT
    order_user_id,
    DATE_FORMAT(pay_create_time, 'yyyyMMdd') as ds,
    SUM(order_fee) as total_fee
FROM my_catalog.order_dw.dwd_orders
WHERE pay_id IS NOT NULL AND order_fee IS NOT NULL
GROUP BY order_user_id, DATE_FORMAT(pay_create_time, 'yyyyMMdd');

In the upper-right corner of the SQL Editor page, click Deploy, then click OK to deploy each draft.
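The two DWS queries share the same shape: filter, derive ds from the payment time, then sum order_fee per key. As a rough local check of the totals to expect from the Step 1 test data, the following Python sketch mirrors that logic. It is an illustration only: the function name daily_totals is hypothetical, and the standard-library format %Y%m%d stands in for the 'yyyyMMdd' pattern of DATE_FORMAT.

```python
from collections import defaultdict
from datetime import datetime

# (order_user_id, order_shop_id, order_fee, pay_id, pay_create_time)
# for the seven rows that the DWD join produces from the Step 1 test data.
dwd_orders = [
    ("user_001", 12345, 5000, 2001, "2023-02-15 17:40:56"),
    ("user_002", 12346, 4000, 2002, "2023-02-15 17:40:56"),
    ("user_003", 12347, 3000, 2003, "2023-02-15 17:40:56"),
    ("user_001", 12347, 2000, 2004, "2023-02-15 17:40:56"),
    ("user_002", 12348, 1000, 2005, "2023-02-15 18:40:56"),
    ("user_001", 12348, 1000, 2006, "2023-02-15 18:40:56"),
    ("user_003", 12347, 2000, 2007, "2023-02-15 18:40:56"),
]


def daily_totals(rows, key_index):
    """Sum order_fee per (key, ds), skipping rows with no payment or no fee."""
    totals = defaultdict(int)
    for row in rows:
        fee, pay_id, pay_time = row[2], row[3], row[4]
        if pay_id is None or fee is None:
            continue  # mirrors: WHERE pay_id IS NOT NULL AND order_fee IS NOT NULL
        ds = datetime.strptime(pay_time, "%Y-%m-%d %H:%M:%S").strftime("%Y%m%d")
        totals[(row[key_index], ds)] += fee
    return dict(totals)


dws_users = daily_totals(dwd_orders, key_index=0)
dws_shops = daily_totals(dwd_orders, key_index=1)

for key, total in sorted(dws_shops.items()):
    print(key, total)
# (12345, '20230215') 5000
# (12346, '20230215') 4000
# (12347, '20230215') 7000
# (12348, '20230215') 2000
```

The per-user totals for the same data are 8000 for user_001, 5000 for user_002, and 5000 for user_003. Both tables add up to 18000, the sum of buy_fee over the seven test orders.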

Step 4: Start the deployments and verify results

Start and verify the DWD deployment

Go to O&M > Deployments. Select BATCH from the deployment type dropdown. Find the

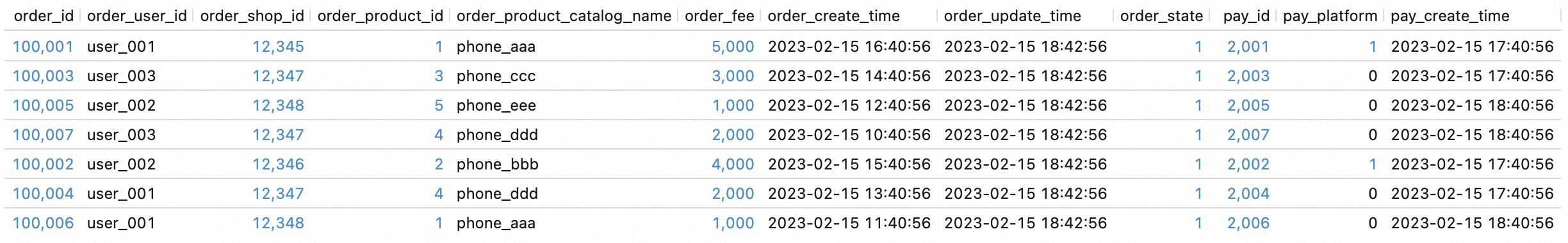

dwd_ordersdeployment and click Start in the Actions column. The deployment enters the STARTING state. When the job state changes to FINISHED, data processing is complete.

Verify the DWD results. In the script editor on the Scripts tab, enter and select the following SQL statement, then click Run.

SELECT * FROM `my_catalog`.`order_dw`.`dwd_orders`;

Start and verify the DWS deployments

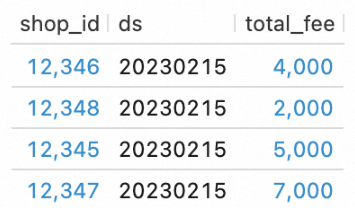

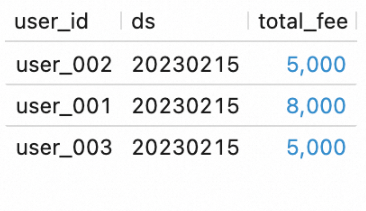

On the O&M > Deployments page, select BATCH from the deployment type dropdown. Find the

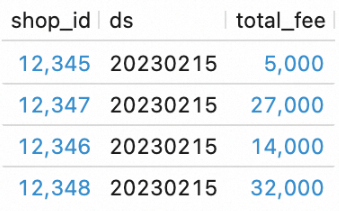

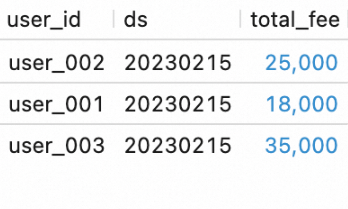

dws_shopsanddws_usersdeployments and click Start in the Actions column of each.Verify the DWS results. In the script editor, enter and select the following SQL statements, then click Run.

SELECT * FROM `my_catalog`.`order_dw`.`dws_shops`; SELECT * FROM `my_catalog`.`order_dw`.`dws_users`;

Step 5: Orchestrate the deployments as a workflow

Orchestrate the three deployments into a single workflow so they run in dependency order automatically.

Create the workflow

In the left-side navigation pane of the Realtime Compute for Apache Flink console, go to O&M > Workflows and click Create Workflow.

In the Create Workflow panel, configure the following settings:

Name: wf_orders

Scheduling Type: Manual Scheduling (default)

Resource Queue: default-queue

Click Create. The workflow editing page opens.

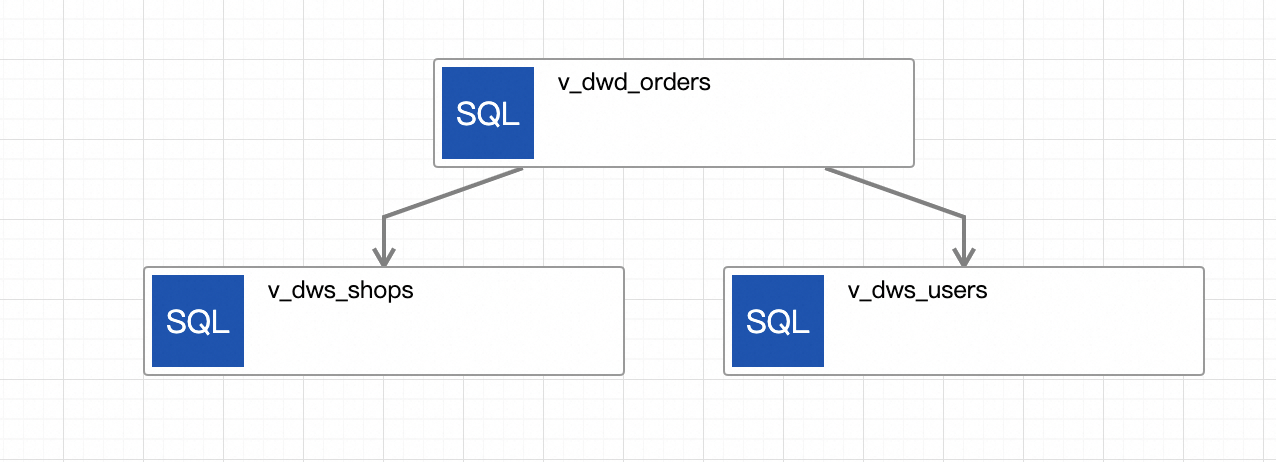

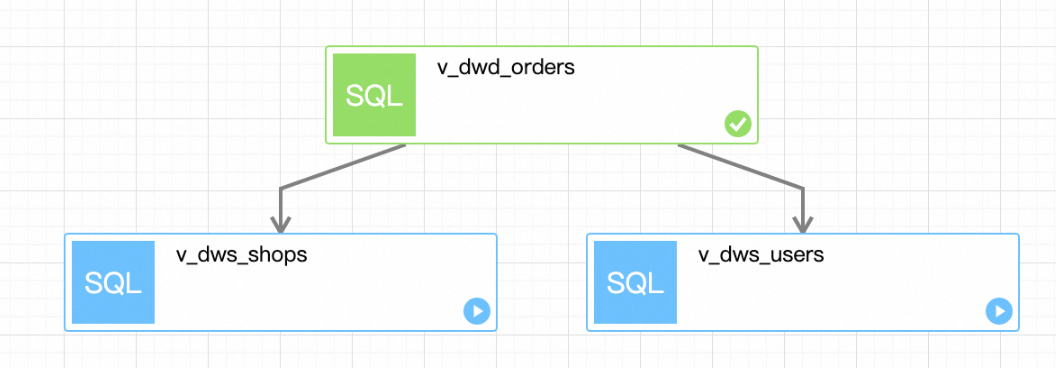

Edit the workflow to define task dependencies. The completed workflow looks like this:

Click the initial task, name it

v_dwd_orders, and select thedwd_ordersdeployment.Click Add Task to create a task named

v_dws_shops. Select thedws_shopsdeployment and setv_dwd_ordersas the upstream task.Click Add Task again to create a task named

v_dws_users. Select thedws_usersdeployment and setv_dwd_ordersas the upstream task.Click Save in the upper-right corner, then click OK.
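The dependency structure you just configured is a small directed acyclic graph. The following Python sketch models it with the standard-library graphlib module (Python 3.9 or later) to show the order that the upstream settings imply. It is a model of the dependencies only and does not call the Workflows feature. The function name execution_waves is illustrative, and the source does not state whether tasks in the same wave actually run in parallel.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of upstream tasks it waits for,
# matching the upstream settings chosen in the workflow editor.
upstream = {
    "v_dwd_orders": set(),
    "v_dws_shops": {"v_dwd_orders"},
    "v_dws_users": {"v_dwd_orders"},
}


def execution_waves(upstream):
    """Group tasks into waves; a wave starts once all earlier waves finish."""
    sorter = TopologicalSorter(upstream)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose upstream tasks are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


print(execution_waves(upstream))
# [['v_dwd_orders'], ['v_dws_shops', 'v_dws_users']]
```

The two DWS tasks land in the same wave because neither depends on the other. Both read from dwd_orders, so they only need the DWD task to finish first.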

Run the workflow

Insert additional test data to verify the workflow produces updated results. In the script editor on the Scripts tab, enter and select the following SQL statements, then click Run.

USE `my_catalog`.`order_dw`;

INSERT INTO orders VALUES
(100008, 'user_001', 12346, 1, 10000, TO_TIMESTAMP('2023-02-15 17:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100009, 'user_002', 12347, 2, 20000, TO_TIMESTAMP('2023-02-15 18:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1),
(100010, 'user_003', 12348, 3, 30000, TO_TIMESTAMP('2023-02-15 19:40:56'), TO_TIMESTAMP('2023-02-15 18:42:56'), 1);

INSERT INTO orders_pay VALUES
(2008, 100008, 1, TO_TIMESTAMP('2023-02-15 20:40:56')),
(2009, 100009, 1, TO_TIMESTAMP('2023-02-15 20:40:56')),
(2010, 100010, 1, TO_TIMESTAMP('2023-02-15 20:40:56'));

Click Flink UI on the left side of the Results tab to monitor deployment status.

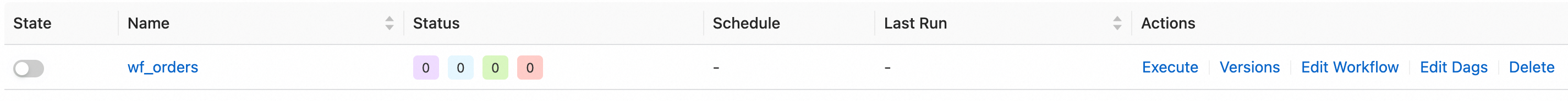

On the O&M > Workflows page, find wf_orders and click Execute in the Actions column. In the dialog box, click OK.

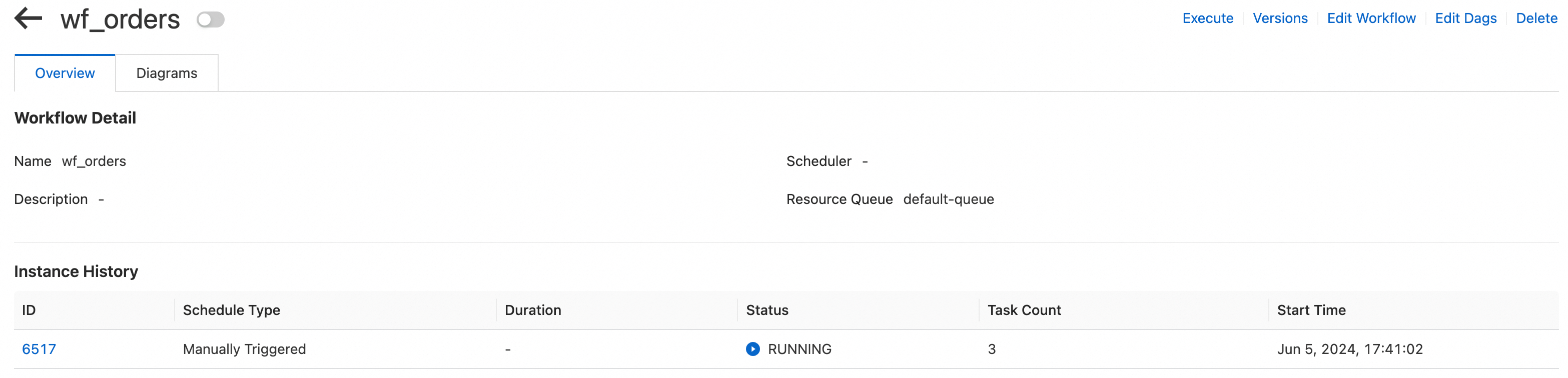

Click the workflow name to open the workflow details page. On the Overview tab, view the task instance list.

Click the ID of a running instance to open the execution details page. View the execution status of each task and wait for the workflow to complete.

Verify the workflow results

In the script editor, enter and select the following SQL statements, then click Run.

SELECT * FROM `my_catalog`.`order_dw`.`dws_shops`;
SELECT * FROM `my_catalog`.`order_dw`.`dws_users`;

The new ODS data is processed and written to the DWS tables.
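To check the output against expected values, you can recompute the totals locally from all ten test orders. The following Python sketch does that, and it also models why each deployment uses INSERT OVERWRITE: replacing the table contents on every run means a rerun of the workflow yields the same rows instead of duplicates. The names recompute_dws and insert_overwrite are hypothetical stand-ins, not Flink APIs. The sketch relies on every test payment being dated 2023-02-15, so ds is a constant.

```python
from collections import Counter

# (user_id, shop_id, buy_fee) for all ten test orders (Step 1 plus the three new rows).
ORDERS = [
    ("user_001", 12345, 5000), ("user_002", 12346, 4000),
    ("user_003", 12347, 3000), ("user_001", 12347, 2000),
    ("user_002", 12348, 1000), ("user_001", 12348, 1000),
    ("user_003", 12347, 2000), ("user_001", 12346, 10000),
    ("user_002", 12347, 20000), ("user_003", 12348, 30000),
]
DS = "20230215"  # every test payment falls on this day


def recompute_dws(orders, key_index):
    """Aggregate buy_fee per (key, ds) over the full input, as the batch job does."""
    totals = Counter()
    for row in orders:
        totals[(row[key_index], DS)] += row[2]
    return dict(totals)


def insert_overwrite(table, new_rows):
    """Model INSERT OVERWRITE: discard the old contents, then write the new rows."""
    table.clear()
    table.update(new_rows)


dws_shops, dws_users = {}, {}
for _ in range(2):  # running the workflow twice gives the same tables
    insert_overwrite(dws_users, recompute_dws(ORDERS, key_index=0))
    insert_overwrite(dws_shops, recompute_dws(ORDERS, key_index=1))

for key, total in sorted(dws_shops.items()):
    print(key, total)
# (12345, '20230215') 5000
# (12346, '20230215') 14000
# (12347, '20230215') 27000
# (12348, '20230215') 32000
```

The corresponding per-user totals are 18000 for user_001, 25000 for user_002, and 35000 for user_003. If the tables in your workspace show different values, check that both INSERT statements for the additional test data finished before you executed the workflow.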

To switch wf_orders to periodic scheduling, go to the Workflows page, find the workflow, and click Edit Workflow in the Actions column. For more information, see Task orchestration (Public preview).

What's next

To optimize the performance of your batch jobs, see Optimize batch job performance.

To build a real-time data lakehouse using Realtime Compute for Apache Flink and Apache Paimon, see Build a streaming data lakehouse with Paimon and StarRocks.

To develop and test Flink jobs locally before deploying to the console, see Develop a plug-in in the on-premises environment by using VSCode.