Serverless Spark Batch nodes let you develop and schedule Spark batch jobs on E-MapReduce (EMR) Serverless Spark clusters directly from DataWorks. You compile your Spark code into a JAR package, reference it in the node, and DataWorks handles the periodic scheduling.

Prerequisites

Before you begin, make sure you have:

- An EMR Serverless Spark compute resource with network connectivity to your resource group.
- A Serverless resource group bound to the workspace. Only Serverless resource groups can run this node type.
- A compiled JAR package. Develop and compile your Spark code in EMR first. See Spark Tutorials.
- (Optional) If you are a Resource Access Management (RAM) user: the Developer or Workspace Administrator role in the workspace. See Add members to a workspace. Alibaba Cloud account users can skip this.

The Workspace Administrator role has extensive permissions. Grant it with caution.

Create a node

See Create a node.

Develop a node

Choose how to provide the JAR package based on its size and storage location:

| Scenario | Method |
|---|---|
| JAR package smaller than 500 MB, stored locally | Option 1: Upload as an EMR JAR resource |
| JAR package 500 MB or larger, or already stored in OSS | Option 2: Reference directly from OSS |
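The decision in the table can be sketched as a small helper. This is only an illustration: the function name is hypothetical, and it assumes that "MB" means 1024 × 1024 bytes, which the table does not specify.

```python
# Assumption: 1 MB = 1024 * 1024 bytes. The table does not define the unit.
MB = 1024 * 1024
UPLOAD_LIMIT_BYTES = 500 * MB


def choose_jar_method(size_bytes: int, already_in_oss: bool) -> str:
    """Return the option from the table that matches the JAR's size and location."""
    # A JAR that is already in OSS, or is 500 MB or larger, is referenced from OSS.
    if already_in_oss or size_bytes >= UPLOAD_LIMIT_BYTES:
        return "Option 2: Reference directly from OSS"
    # A smaller, locally stored JAR can be uploaded as an EMR JAR resource.
    return "Option 1: Upload as an EMR JAR resource"
```

For example, `choose_jar_method(10 * MB, already_in_oss=False)` selects Option 1, while a JAR of exactly 500 MB selects Option 2.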

Option 1: Upload and reference an EMR JAR resource

Upload a JAR package from your local machine to DataWorks as an EMR JAR resource, then reference it in the node.

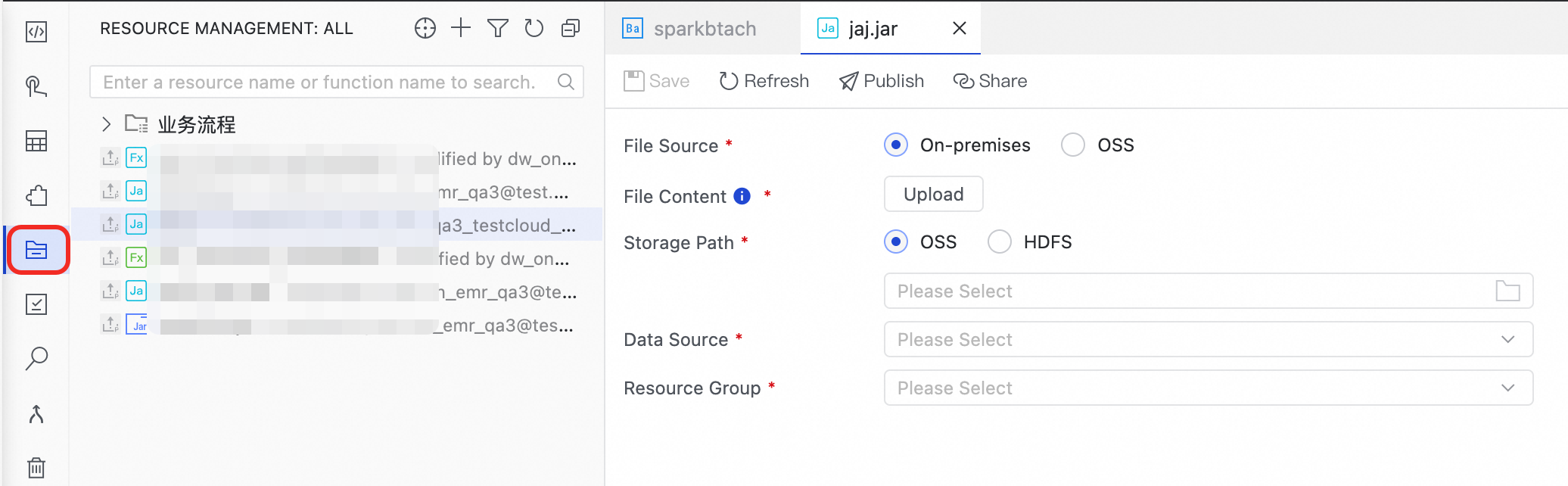

Step 1: Create an EMR JAR resource

- In the navigation pane, click the Resource Management icon to open the Resource Management page.
- Click the icon, select Create Resource > EMR JAR, and enter the name spark-examples_2.11-2.4.0.jar.
- Click Upload to upload spark-examples_2.11-2.4.0.jar.
- Select a Storage Path, Data Source, and Resource Group.

  Important: For Data Source, select the bound Serverless Spark cluster.

- Click Save.

Step 2: Reference the EMR JAR resource

- Open the code editor for the Serverless Spark Batch node.
- In the navigation pane, expand Resource Management. Right-click the resource and select Reference Resource. A reference statement is automatically added to the code editor:

      ##@resource_reference{"spark-examples_2.11-2.4.0.jar"}
      spark-examples_2.11-2.4.0.jar

- Replace the default code with a spark-submit command:

      ##@resource_reference{"spark-examples_2.11-2.4.0.jar"}
      spark-submit --class org.apache.spark.examples.SparkPi spark-examples_2.11-2.4.0.jar 100

  | Parameter | Description |
  |---|---|
  | --class | The main class of the task in the JAR package. In this example: org.apache.spark.examples.SparkPi. |

  For all supported parameters, see Submit a task using spark-submit.

  - The code editor does not support comment statements. Do not add comments; they cause a runtime error.
  - Do not specify the deploy-mode parameter. EMR Serverless Spark supports cluster mode only.
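If you generate node code with a script, the two-line layout and the two restrictions above can be checked before pasting the result into the editor. The following Python sketch is not part of DataWorks, and both function names are hypothetical. It assumes that a comment is any line starting with `#` other than the `##@resource_reference` statement; the editor's actual rules may differ.

```python
RESOURCE_PREFIX = "##@resource_reference"


def build_node_code(jar_name: str, main_class: str, app_args=()) -> str:
    """Compose the node body: the resource reference line, then spark-submit."""
    reference = f'{RESOURCE_PREFIX}{{"{jar_name}"}}'
    command = " ".join(
        ["spark-submit", "--class", main_class, jar_name, *map(str, app_args)]
    )
    return f"{reference}\n{command}"


def find_problems(node_code: str) -> list[str]:
    """Return a list of violations of the two restrictions described above."""
    problems = []
    for number, line in enumerate(node_code.splitlines(), start=1):
        stripped = line.strip()
        # Assumption: lines starting with '#' are comments, except the
        # automatically generated resource reference statement.
        if stripped.startswith("#") and not stripped.startswith(RESOURCE_PREFIX):
            problems.append(f"line {number}: comment statements are not supported")
        # The deploy-mode parameter must not be specified in either form.
        for token in stripped.split():
            if token == "--deploy-mode" or token.startswith("--deploy-mode="):
                problems.append(f"line {number}: do not specify deploy-mode")
    return problems


code = build_node_code(
    "spark-examples_2.11-2.4.0.jar", "org.apache.spark.examples.SparkPi", [100]
)
print(code)
print(find_problems(code))
```

Run against the SparkPi example, `build_node_code` reproduces the two lines shown in the step above and `find_problems` returns an empty list.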

Option 2: Reference directly from OSS

Reference a JAR package stored in OSS. DataWorks downloads it automatically when the node runs. Use this method when the JAR has dependencies or when your Spark task depends on scripts.

Step 1: Upload the JAR to OSS

- Log on to the OSS console and click Buckets in the navigation pane.
- Click the target bucket to open the file management page.
- Click Create Directory to create a folder for the JAR package.
- Go to the folder and upload SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar. This topic uses this file as an example.

Step 2: Reference the JAR in the node

In the code editor for the Serverless Spark Batch node, add a spark-submit command that points to the OSS path:

    spark-submit --class com.aliyun.emr.example.spark.SparkMaxComputeDemo oss://mybucket/emr/SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar

Replace mybucket and emr with your actual bucket name and folder path.

| Parameter | Description |
|---|---|
| --class | The full name of the main class to run. |
| OSS file path | Format: oss://{bucket}/{object}. bucket is a unique container name in OSS (view all buckets in the OSS console). object is the file path within the bucket. |

For all supported parameters, see Submit a task using spark-submit.
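To check that a path follows the oss://{bucket}/{object} format before using it in the command, a minimal Python sketch such as the following may help. The function name is hypothetical, and the check covers only the shape of the path, not whether the bucket or object exists.

```python
def split_oss_path(path: str) -> tuple[str, str]:
    """Split an oss://{bucket}/{object} path into (bucket, object)."""
    prefix = "oss://"
    if not path.startswith(prefix):
        raise ValueError(f"expected a path starting with {prefix!r}, got {path!r}")
    # Everything up to the first '/' is the bucket; the rest is the object path.
    bucket, _, obj = path[len(prefix):].partition("/")
    if not bucket or not obj:
        raise ValueError(f"expected oss://{{bucket}}/{{object}}, got {path!r}")
    return bucket, obj


bucket, obj = split_oss_path(
    "oss://mybucket/emr/SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar"
)
print(bucket)  # mybucket
print(obj)     # emr/SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar
```

A path without an object part, such as oss://mybucket, raises a ValueError in this sketch.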

Debug the node

- In the Run Configuration section, configure the following parameters:

  Configure global Spark parameters at the workspace level to apply them across all modules, with the option to override module-specific parameters. See Configure global Spark parameters.

  | Parameter | Description |
  |---|---|
  | Computing Resource | Select a bound EMR Serverless Spark compute resource. If none are available, select Create Computing Resource from the drop-down list. |
  | Resource Group | Select a resource group bound to the workspace. |
  | Script Parameters | Define variables in ${ParameterName} format in the node code, then specify the Parameter Name and Parameter Value here. DataWorks replaces them with actual values at runtime. See Sources and expressions of scheduling parameters. |
  | ServerlessSpark Node Parameters | Runtime parameters for the Spark program. Two categories are supported: DataWorks custom parameters (see Appendix: DataWorks parameters) and Spark built-in properties (Open-source Spark properties and Custom Spark Conf parameters). Format: spark.eventLog.enabled : false. DataWorks passes them to Serverless Spark as --conf key=value. |

- On the toolbar, click Run.

Before publishing, sync ServerlessSpark Node Parameters from Run Configuration to the ServerlessSpark Node Parameters field under Scheduling.
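The two parameter mechanisms described in Run Configuration can be illustrated with a short Python sketch. It only mimics the documented behavior (replacing ${ParameterName} variables and turning `key : value` entries into `--conf key=value`); it is not how DataWorks implements it. The function names, the variable bizdate, the class Demo, and app.jar are hypothetical.

```python
import re


def render_script_parameters(code: str, values: dict[str, str]) -> str:
    """Replace each ${ParameterName} in the node code with its configured value."""
    def replace(match: re.Match) -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value configured for parameter {name!r}")
        return values[name]

    return re.sub(r"\$\{(\w+)\}", replace, code)


def to_conf_flags(node_parameters: str) -> list[str]:
    """Convert 'key : value' lines into ['--conf', 'key=value', ...]."""
    flags = []
    for line in node_parameters.splitlines():
        if not line.strip():
            continue
        # Split at the first colon only, so values may themselves contain colons.
        key, separator, value = line.partition(":")
        if not separator:
            raise ValueError(f"expected 'key : value', got {line!r}")
        flags += ["--conf", f"{key.strip()}={value.strip()}"]
    return flags


print(render_script_parameters(
    "spark-submit --class Demo app.jar ${bizdate}", {"bizdate": "20240101"}
))
print(to_conf_flags("spark.eventLog.enabled : false"))
```

The second call prints `['--conf', 'spark.eventLog.enabled=false']`, matching the format that the table describes.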

What's next

After development, the typical workflow is: configure scheduling → publish to production → monitor in Operation Center.

- Schedule the node: Set scheduling policies and configure scheduling properties in the Scheduling section on the node page. See Schedule a node.
- Publish the node: Click the icon to publish. A node runs on a schedule only after it is published to the production environment. See Publish a node.
- Monitor the node: After publishing, track auto-triggered runs in Operation Center. See Get started with Operation Center.

References

Appendix: DataWorks parameters

| Parameter | Description |
|---|---|
| SERVERLESS_QUEUE_NAME | The resource queue to submit the task to. By default, tasks go to the Default Resource Queue configured in Cluster Management under Management Center. Add queues for resource isolation. See Manage resource queues. Set this parameter via node parameters or global Spark parameters. |