The EMR Spark node lets you develop and periodically schedule Apache Spark jobs directly within DataWorks, without managing job submission infrastructure separately. This topic describes how to configure an EMR Spark node, submit a Spark job, and set up scheduled runs.

Prerequisites

Before you begin, ensure that you have:

- An Alibaba Cloud E-MapReduce (EMR) cluster created and registered with DataWorks. For more information, see Data Studio: Associate an EMR computing resource.
- (For RAM users) A Resource Access Management (RAM) user added to your workspace with the Developer or Workspace Administrator role. The Workspace Administrator role has extensive permissions, so grant it with caution. For more information, see Add members to a workspace.

  If you are using an Alibaba Cloud account, skip this step.
- (Optional) A custom image built on the official `dataworks_emr_base_task_pod` base image, if your job requires specific libraries, files, or JAR packages. For more information, see Custom images and use the image in Data Development.

Limitations

- Resource group: This node type runs only on a Serverless resource group (recommended) or an exclusive resource group for scheduling. If you use a custom image in Data Development, you must use a Serverless resource group.
- [DataLake and custom clusters] EMR-HOOK required for metadata management: To manage metadata in DataWorks, configure EMR-HOOK on the cluster first. Without it, you cannot view metadata in real time, generate audit logs, display data lineage, or perform EMR-related data governance tasks. For more information, see Configure EMR-HOOK for Spark SQL.
- [EMR on ACK] Data lineage not supported: You cannot view the lineage of Spark clusters deployed on E-MapReduce on Container Service for Kubernetes (EMR on ACK). Lineage is supported for EMR Serverless Spark clusters.
- [EMR on ACK and EMR Serverless Spark] OSS-only resource storage: Reference resources only from Object Storage Service (OSS) using OSS REF, and upload resources only to OSS. Uploading to Hadoop Distributed File System (HDFS) is not supported.
- [DataLake and custom clusters] HDFS supported: You can reference OSS resources via OSS REF, upload resources to OSS, and upload resources to HDFS.

Usage notes

If you enabled Ranger access control for Spark in the EMR cluster bound to your workspace:

- Ranger is available by default when running Spark tasks with the default image.
- To run Spark tasks with a custom image, submit a ticket to upgrade the image to support Ranger.

Develop and package the Spark job

Before scheduling an EMR Spark job in DataWorks, develop the job code in E-MapReduce (EMR), compile it, and generate a JAR package. For more information, see Overview.

To schedule the EMR Spark job, upload the JAR package to DataWorks.

Configure and run an EMR Spark node

Step 1: Choose a JAR storage method and develop the Spark job

The method you use to store and reference your JAR depends on the JAR size and your cluster type. Use the following table to choose the right approach before proceeding.

| Method | When to use | Cluster support |
|---|---|---|
| Upload to DataWorks (Option 1a) | JAR smaller than 500 MB | All cluster types |
| Store in HDFS (Option 1b) | JAR 500 MB or larger | DataLake and custom clusters only (not EMR on ACK or EMR Serverless Spark) |
| Reference from OSS (Option 2) | JAR stored in OSS; EMR job depends on a script | All cluster types |
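The table reduces to a small decision rule. The following shell sketch is illustrative only: the function name `choose_jar_storage` and the cluster-type labels (`datalake`, `custom`, `ack`, `serverless`) are hypothetical names chosen for this example and are not DataWorks identifiers.

```shell
#!/bin/sh
# Hypothetical helper: suggest a JAR storage option based on the table above.
# $1 = JAR size in MB (integer)
# $2 = cluster type label, made up for this sketch: datalake | custom | ack | serverless
choose_jar_storage() {
  size_mb=$1
  cluster=$2
  if [ "$size_mb" -lt 500 ]; then
    # JARs under 500 MB can be uploaded to DataWorks on any cluster type.
    echo "option-1a-dataworks-upload"
    return 0
  fi
  case "$cluster" in
    datalake|custom)
      # Only DataLake and custom clusters support storing resources in HDFS.
      echo "option-1b-hdfs" ;;
    *)
      # EMR on ACK and EMR Serverless Spark must reference the JAR from OSS.
      echo "option-2-oss-ref" ;;
  esac
}

choose_jar_storage 120 ack         # prints option-1a-dataworks-upload
choose_jar_storage 800 datalake    # prints option-1b-hdfs
choose_jar_storage 800 serverless  # prints option-2-oss-ref
```

Option 2 also works for small JARs on every cluster type; the sketch simply prefers the DataWorks upload when the size allows it.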

Do not add comments to your job code — they cause an error when the node runs.

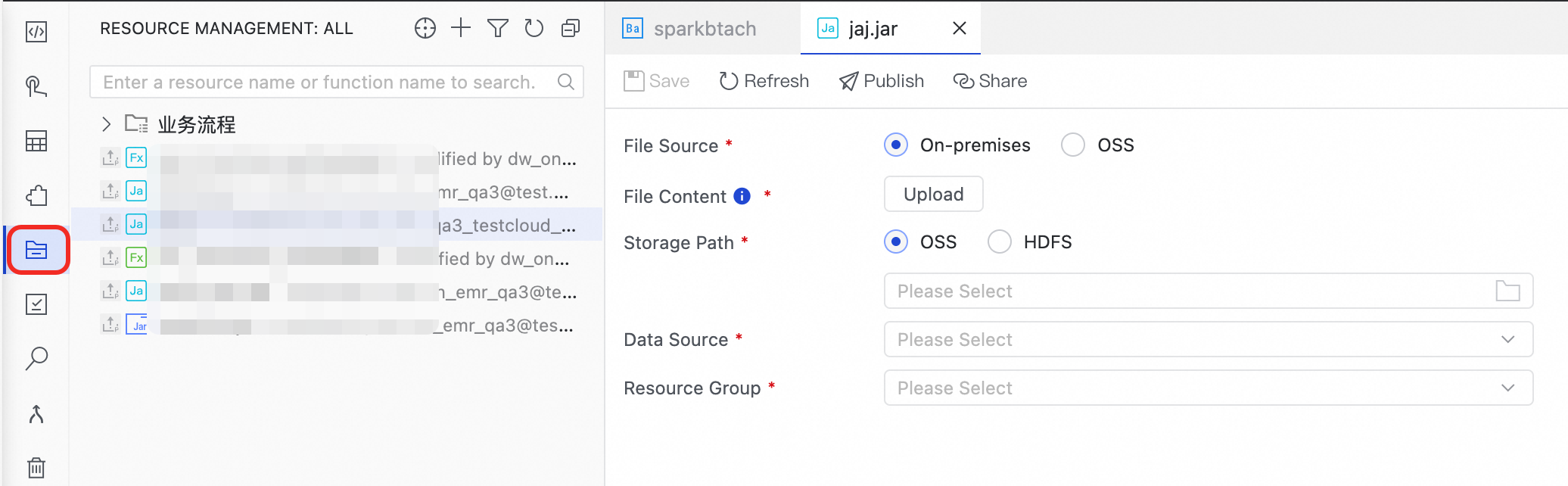

Option 1a: Upload a JAR smaller than 500 MB to DataWorks

- Create an EMR JAR resource. After creating the resource, submit it. For more information, see Create and use an EMR resource.
  - Upload the JAR package from your local machine to the directory where JAR resources are stored. For more information, see Resource management.
  - Click Upload.
  - Select the Storage Path, Data Source, and Resource Group.
  - Click Save.

- Reference the EMR JAR resource in the node.
  - Open the created EMR Spark node to go to the code editor.
  - In the left-side navigation pane, right-click the resource you want to reference and select Reference Resource.
  - A reference is automatically added to the code editor:

    ```shell
    ##@resource_reference{"spark-examples_2.12-1.0.0-SNAPSHOT-shaded.jar"}
    spark-examples_2.12-1.0.0-SNAPSHOT-shaded.jar
    ```
  - Add the `spark-submit` command. For example:

    ```shell
    ##@resource_reference{"spark-examples_2.11-2.4.0.jar"}
    spark-submit --class org.apache.spark.examples.SparkPi --master yarn spark-examples_2.11-2.4.0.jar 100
    ```

    | Parameter | Description |
    |---|---|
    | `org.apache.spark.examples.SparkPi` | The main class of the job in your compiled JAR package |
    | `spark-examples_2.11-2.4.0.jar` | The name of the EMR JAR resource you uploaded |

    Additional notes:

    - EMR Spark nodes only support YARN cluster mode. Set `--deploy-mode` to `cluster`, not `client`. In cluster mode, the Spark driver runs inside the cluster; in client mode, it runs on the submitting machine, which is not supported for scheduled jobs in DataWorks.
    - To add simplified parameters, include them in the command. For example: `--executor-memory 2G`.
    - Run `spark-submit --help` to view all available parameters.
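The notes for Option 1a can be combined into a single command. The sketch below only prints the two lines of node code so that you can review them first; `build_submit_cmd` is a hypothetical helper, and the class and JAR names are taken from the example in this section. Because node code must not contain comments, paste only the printed lines into the code editor, not the helper itself.

```shell
#!/bin/sh
# Hypothetical helper: print node code that references an uploaded EMR JAR
# resource and submits it in YARN cluster mode.
# $1 = main class, $2 = EMR JAR resource name,
# $3 = executor memory (for example 2G), $4 = application arguments
build_submit_cmd() {
  main_class=$1
  jar_name=$2
  executor_memory=$3
  app_args=$4
  # First line: the resource reference that DataWorks adds to the code editor.
  printf '##@resource_reference{"%s"}\n' "$jar_name"
  # Second line: spark-submit with the cluster deploy mode stated explicitly.
  printf 'spark-submit --class %s --master yarn --deploy-mode cluster --executor-memory %s %s %s\n' \
    "$main_class" "$executor_memory" "$jar_name" "$app_args"
}

build_submit_cmd org.apache.spark.examples.SparkPi spark-examples_2.11-2.4.0.jar 2G 100
```

Stating `--deploy-mode cluster` explicitly keeps the command independent of any cluster-side default.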

Option 1b: Reference a JAR 500 MB or larger from HDFS

If the JAR is 500 MB or larger, you cannot upload it from your local machine. Store the JAR in HDFS on EMR and reference it by path instead.

EMR on ACK and EMR Serverless Spark clusters do not support uploading resources to HDFS. Use Option 2 (OSS REF) for those cluster types.

- Create an EMR JAR resource in DataWorks.
  - Upload the JAR package from your local machine to the directory where JAR resources are stored. For more information, see Resource management.
  - Click Upload.
  - Select the Storage Path, Data Source, and Resource Group.
  - Click Save.

- Reference the JAR by its HDFS path.
  - Double-click the created EMR Spark node to open the code editor.
  - Write the `spark-submit` command with the HDFS path:

    ```shell
    spark-submit --master yarn \
      --deploy-mode cluster \
      --name SparkPi \
      --driver-memory 4G \
      --driver-cores 1 \
      --num-executors 5 \
      --executor-memory 4G \
      --executor-cores 1 \
      --class org.apache.spark.examples.JavaSparkPi \
      hdfs:///tmp/jars/spark-examples_2.11-2.4.8.jar 100
    ```

    | Parameter | Description |
    |---|---|
    | `hdfs:///tmp/jars/spark-examples_2.11-2.4.8.jar` | The actual path of the JAR package in HDFS |
    | `org.apache.spark.examples.JavaSparkPi` | The main class of the job in your compiled JAR package |

    Additional notes:

    - Configure other parameters based on your actual cluster settings.
    - EMR Spark nodes only support YARN cluster mode. Set `--deploy-mode` to `cluster`, not `client`.
    - To add simplified parameters, include them in the command. For example: `--executor-memory 2G`.
    - Run `spark-submit --help` to view all available parameters.
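The steps for Option 1b assume the JAR is already at an HDFS path. One way to place it there is the standard `hdfs dfs` client, sketched below. The assumptions are that you run it on a machine with an HDFS client configured for the cluster (for example, the EMR master node) and that `/tmp/jars` is the target directory used in the example above; `run` and `upload_jar_to_hdfs` are hypothetical helper names. The sketch defaults to a dry run that prints the commands instead of executing them.

```shell
#!/bin/sh
# Sketch: copy a large JAR into HDFS so that spark-submit can reference it by path.
# With DRY_RUN=1 (the default here) the commands are printed, not executed.
DRY_RUN=${DRY_RUN:-1}

# Print the command in dry-run mode; otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# $1 = path of the JAR on the local file system
# $2 = target HDFS directory, for example /tmp/jars
upload_jar_to_hdfs() {
  local_jar=$1
  hdfs_dir=$2
  # Create the target directory if it does not exist yet.
  run hdfs dfs -mkdir -p "$hdfs_dir"
  # Upload the JAR, overwriting an older copy with the same name.
  run hdfs dfs -put -f "$local_jar" "$hdfs_dir/"
  # List the directory to confirm that the file is in place.
  run hdfs dfs -ls "$hdfs_dir"
}

upload_jar_to_hdfs ./spark-examples_2.11-2.4.8.jar /tmp/jars
```

Set `DRY_RUN=0` to execute the commands once the printed output looks right.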

Option 2: Reference an OSS resource directly

Reference an OSS resource in the node using OSS REF. When the EMR node runs, DataWorks automatically loads the referenced OSS resource for the job. Use this method when running JAR dependencies in an EMR job or when an EMR job depends on a script.

- Develop the JAR resource.
  - Find the required dependencies at `/usr/lib/emr/spark-current/jars/` on the master node of your EMR cluster. The following example uses Spark 3.4.2. In your IDEA project, add the pom dependencies and reference the plug-ins.

    Add pom dependencies

    ```xml
    <dependencies>
      <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.12</artifactId>
        <version>3.4.2</version>
      </dependency>
      <!-- Apache Spark SQL -->
      <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-sql_2.12</artifactId>
        <version>3.4.2</version>
      </dependency>
    </dependencies>
    ```

    Reference plug-ins

    ```xml
    <build>
      <sourceDirectory>src/main/scala</sourceDirectory>
      <testSourceDirectory>src/test/scala</testSourceDirectory>
      <plugins>
        <plugin>
          <groupId>org.apache.maven.plugins</groupId>
          <artifactId>maven-compiler-plugin</artifactId>
          <version>3.7.0</version>
          <configuration>
            <source>1.8</source>
            <target>1.8</target>
          </configuration>
        </plugin>
        <plugin>
          <artifactId>maven-assembly-plugin</artifactId>
          <configuration>
            <descriptorRefs>
              <descriptorRef>jar-with-dependencies</descriptorRef>
            </descriptorRefs>
          </configuration>
          <executions>
            <execution>
              <id>make-assembly</id>
              <phase>package</phase>
              <goals>
                <goal>single</goal>
              </goals>
            </execution>
          </executions>
        </plugin>
        <plugin>
          <groupId>net.alchim31.maven</groupId>
          <artifactId>scala-maven-plugin</artifactId>
          <version>3.2.2</version>
          <configuration>
            <recompileMode>incremental</recompileMode>
          </configuration>
          <executions>
            <execution>
              <goals>
                <goal>compile</goal>
                <goal>testCompile</goal>
              </goals>
              <configuration>
                <args>
                  <arg>-dependencyfile</arg>
                  <arg>${project.build.directory}/.scala_dependencies</arg>
                </args>
              </configuration>
            </execution>
          </executions>
        </plugin>
      </plugins>
    </build>
    ```
  - Write the Scala job code. The following is an example:

    ```scala
    package com.aliyun.emr.example.spark

    import org.apache.spark.sql.SparkSession

    object SparkMaxComputeDemo {
      def main(args: Array[String]): Unit = {
        // Create a SparkSession.
        val spark = SparkSession.builder()
          .appName("HelloDataWorks")
          .getOrCreate()

        // Print the Spark version.
        println(s"Spark version: ${spark.version}")
      }
    }
    ```
  - Generate a JAR package from the Scala code. The generated JAR package in this example is `SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar`.

- Upload the JAR to OSS.
  - Log on to the OSS console. In the navigation pane, click Bucket List.
  - Click the name of the destination bucket to go to the File Management page. This example uses the `onaliyun-bucket-2` bucket.
  - Click Create Directory. Set the Directory Name to `emr/jars`.
  - Go to the directory and click Upload File. In the Files to Upload section, click Scan Files, select `SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar`, and then click Upload File.

- Reference the JAR resource.
  - On the editing page of the EMR Spark node, write the `spark-submit` command referencing the OSS path:

    ```shell
    spark-submit --class com.aliyun.emr.example.spark.SparkMaxComputeDemo --master yarn ossref://onaliyun-bucket-2/emr/jars/SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar
    ```

    The `ossref://` scheme tells DataWorks to load the resource from OSS before running the job. The format is `ossref://{endpoint}/{bucket}/{object}`.

    | Parameter | Description |
    |---|---|
    | `class` | The full name of the main class to run |
    | `master` | The mode in which the Spark application runs |
    | `endpoint` | The public endpoint for OSS. If left blank, the OSS bucket must be in the same region as the EMR cluster |
    | `bucket` | The OSS bucket name. Log on to the OSS console to view all buckets under your account |
    | `object` | The specific object (file name or path) stored in the bucket |
  - Click the icon and select the Serverless resource group to run the job. After the job completes, record the `applicationId` printed in the console, for example, `application_1730367929285_xxxx`.
  - To view the result, create an EMR Shell node and run:

    ```shell
    yarn logs -applicationId application_1730367929285_xxxx
    ```
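The `ossref://{endpoint}/{bucket}/{object}` format described in Option 2 can be assembled mechanically. The following sketch uses a hypothetical helper, `build_ossref`, and assumes that omitting the endpoint produces the short form used in the example command (bucket in the same region as the EMR cluster).

```shell
#!/bin/sh
# Hypothetical helper: assemble an ossref:// URI in the format described above.
# $1 = bucket name
# $2 = object path inside the bucket
# $3 = optional OSS endpoint (omit it for a bucket in the cluster's region)
build_ossref() {
  bucket=$1
  object=$2
  endpoint=${3:-}
  # Strip one leading slash so the object path joins cleanly.
  object=${object#/}
  if [ -n "$endpoint" ]; then
    echo "ossref://$endpoint/$bucket/$object"
  else
    echo "ossref://$bucket/$object"
  fi
}

build_ossref onaliyun-bucket-2 emr/jars/SparkWorkOSS-1.0-SNAPSHOT-jar-with-dependencies.jar
```

Called with the bucket and object from this section, the helper prints the same URI that appears in the `spark-submit` example.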

Step 2: Configure advanced parameters (optional)

Configure the following parameters in the EMR Node Parameters and DataWorks Parameters sections of the right-side pane. Available parameters vary by cluster type. You can also configure additional open source Spark properties in the EMR Node Parameters and Spark Parameters sections.

DataLake and custom (ECS)

| Parameter | Description |
|---|---|
| `queue` | The scheduling queue for submitting jobs. Default: `default`. If you configured a workspace-level YARN resource queue when registering the EMR cluster: if Prioritize Global Configuration is set to Yes, DataWorks uses the registration-time queue; otherwise, it uses the queue configured in the EMR Spark node. For more information, see Basic queue configurations and Configure a global YARN queue. |
| `priority` | The job priority. Default: 1. |
| `FLOW_SKIP_SQL_ANALYZE` | The SQL execution mode. `true`: run multiple SQL statements at once. `false` (default): run one SQL statement at a time. Applies only to test runs in the data development environment. |
| Others | Add custom Spark parameters, for example, `spark.eventLog.enabled : false`. DataWorks formats them as `--conf key=value` before sending to the cluster. To configure global Spark parameters, see Configure global Spark parameters. Note: To enable Ranger permission control, add |
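The queue rule in the table can be read as a short conditional. The sketch below restates it with a hypothetical function, `effective_queue`; the argument names are invented for this example, and the fallback to `default` when no node-level queue is set is an assumption based on the documented default value.

```shell
#!/bin/sh
# Hypothetical helper mirroring the queue rule described in the table above.
# $1 = value of Prioritize Global Configuration (Yes or No)
# $2 = queue set when the EMR cluster was registered (may be empty)
# $3 = queue set in the EMR Spark node (may be empty)
effective_queue() {
  prioritize_global=$1
  global_queue=$2
  node_queue=$3
  if [ -n "$global_queue" ] && [ "$prioritize_global" = "Yes" ]; then
    # The registration-time queue wins when global configuration is prioritized.
    echo "$global_queue"
  else
    # Otherwise use the node-level queue, or "default" when none is set.
    echo "${node_queue:-default}"
  fi
}

effective_queue Yes etl adhoc
effective_queue No etl adhoc
```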

Spark (ACK)

| Parameter | Description |
|---|---|
| `FLOW_SKIP_SQL_ANALYZE` | The SQL execution mode. `true`: run multiple SQL statements at once. `false`: run one SQL statement at a time. Applies only to test runs in the data development environment. |
| Others | Add custom Spark parameters. DataWorks formats them as `--conf key=value`. To configure global Spark parameters, see Configure global Spark parameters. |

Hadoop (ECS)

| Parameter | Description |
|---|---|
| `queue` | The scheduling queue for submitting jobs. Default: `default`. Same YARN queue logic as DataLake and custom (ECS) clusters. For more information, see Basic queue configuration and Set a global YARN resource queue. |
| `priority` | The job priority. Default: 1. |
| `FLOW_SKIP_SQL_ANALYZE` | The SQL execution mode. `true`: run multiple SQL statements at once. `false`: run one SQL statement at a time. Applies only to test runs in the data development environment. |
| `USE_GATEWAY` | Whether to submit the job through a gateway cluster. `true`: submit via gateway cluster. `false`: submit to the header node (default). If the cluster has no gateway cluster, setting this to `true` causes job submission to fail. |
| Others | Add custom Spark parameters. DataWorks formats them as `--conf key=value`. To enable Ranger, add `spark.hadoop.fs.oss.authorization.method=ranger` in Configure global Spark parameters. |

EMR Serverless Spark

For parameter details, see Set parameters for submitting a Spark job.

| Parameter | Description |
|---|---|
| `queue` | The scheduling queue for submitting jobs. Default: `dev_queue`. |
| `priority` | The job priority. Default: 1. |
| `FLOW_SKIP_SQL_ANALYZE` | The SQL execution mode. `true`: run multiple SQL statements at once. `false`: run one SQL statement at a time. Applies only to test runs in the data development environment. |
| `SERVERLESS_RELEASE_VERSION` | The Spark engine version. By default, uses the Default Engine Version configured in Cluster Management in the Management Center. Override here to use a different version for a specific job. |
| `SERVERLESS_QUEUE_NAME` | The resource queue. By default, uses the Default Resource Queue configured in Cluster Management in the Management Center. For more information, see Manage resource queues. |
| Others | Add custom Spark parameters. DataWorks formats them as `--conf key=value`. To configure global Spark parameters, see Configure global Spark parameters. |
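The Others rows state that custom parameters are formatted as `--conf key=value`. The sketch below imitates that documented result for `key : value` lines so you can preview the arguments; it is not DataWorks code, `to_conf_args` is a hypothetical name, and the second property in the usage line is only sample input.

```shell
#!/bin/sh
# Sketch: turn "key : value" lines into "--conf key=value" arguments,
# the shape the tables above say is sent to the cluster.
# Reads one "key : value" pair per line from standard input.
to_conf_args() {
  while IFS= read -r line; do
    # Skip empty lines.
    [ -z "$line" ] && continue
    # Split on the first colon so that values may contain colons.
    key=${line%%:*}
    value=${line#*:}
    # Trim surrounding spaces from the key and the value.
    key=$(printf '%s' "$key" | sed 's/^ *//; s/ *$//')
    value=$(printf '%s' "$value" | sed 's/^ *//; s/ *$//')
    echo "--conf $key=$value"
  done
}

printf 'spark.eventLog.enabled : false\nspark.executor.memory : 2g\n' | to_conf_args
```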

Step 3: Run the Spark job

- In the Run Configuration section, configure the Compute Resource and DataWorks Resource Group.

  Configure Scheduling CU based on your job's resource requirements. The default is `0.25`. To access data sources in a public network or VPC, use a scheduling resource group with connectivity to the data source. For more information, see Network connectivity solutions.
- In the parameter dialog box on the toolbar, select the data source and click Run.

Step 4: Configure scheduling and publish

- To run the node on a regular basis, configure scheduling based on your requirements. For more information, see Node scheduling configuration.

  To use a custom environment, create a custom `dataworks_emr_base_task_pod` image and use the image in Data Development. For example, replace a Spark JAR package or add dependencies on specific libraries, files, or JAR packages in the custom image.
- Publish the node. For more information, see Node and workflow deployment.
- After publishing, view the status of the scheduled task in the Operations Center. For more information, see Getting started with Operation Center.

FAQ

Why does a connection timeout error occur when I run a node?

Check the network connectivity between the Resource Group and the Cluster. Go to the computing resources page, find the resource, and click Initialize Resource. In the dialog box, click Re-initialize and make sure the initialization succeeds.

For Kerberos-related failures, see Spark-submit failure with Kerberos.