Build and deploy a Spark JAR job on E-MapReduce (EMR) Serverless Spark — from Maven configuration through execution and publishing.

EMR Serverless Spark does not provide an integrated development environment (IDE) for JAR packages. Build and package your Spark application on a local or standalone development platform before uploading it.

Prerequisites

Before you begin, ensure that you have:

A workspace. See Workspace Management.

A JAR file built from your Spark application.

Step 1: Configure Maven dependencies

In the pom.xml of your Maven project, add the Spark dependencies with scope set to provided. The EMR Serverless Spark runtime already includes these libraries, so setting provided prevents duplicate packaging and version conflicts while keeping the dependencies available during compilation and testing.

<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-core_2.12</artifactId>
    <version>3.5.2</version>
    <scope>provided</scope>
</dependency>
<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-sql_2.12</artifactId>
    <version>3.5.2</version>
    <scope>provided</scope>
</dependency>
<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-hive_2.12</artifactId>
    <version>3.5.2</version>
    <scope>provided</scope>
</dependency>

Code examples

The following two examples are used throughout this guide. Each targets a different main class, which you specify when configuring the job.

Example 1: Query a Data Lake Formation (DLF) table

Main class: org.example.HiveTableAccess

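package org.example;

import org.apache.spark.sql.SparkSession;

public class HiveTableAccess {
    public static void main(String[] args) {
        // enableHiveSupport() lets Spark SQL resolve tables through the metastore catalog.
        SparkSession spark = SparkSession.builder()
                .appName("DlfTableAccessExample")
                .enableHiveSupport()
                .getOrCreate();

        // test_table must already exist; replace it with your own table name.
        spark.sql("SELECT * FROM test_table").show();

        spark.stop();
    }
}

Example 2: Calculate the approximate value of pi (π)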

Main class: org.example.JavaSparkPi

package org.example;

import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.sql.SparkSession;

import java.util.ArrayList;
import java.util.List;

/**
 * Computes an approximation to pi
 * Usage: JavaSparkPi [partitions]
 */
public final class JavaSparkPi {

    public static void main(String[] args) throws Exception {
        SparkSession spark = SparkSession
                .builder()
                .appName("JavaSparkPi")
                .getOrCreate();

        JavaSparkContext jsc = new JavaSparkContext(spark.sparkContext());

        // Number of partitions; defaults to 2 when no argument is given.
        int slices = (args.length == 1) ? Integer.parseInt(args[0]) : 2;
        int n = 100000 * slices;
        List<Integer> l = new ArrayList<>(n);
        for (int i = 0; i < n; i++) {
            l.add(i);
        }

        JavaRDD<Integer> dataSet = jsc.parallelize(l, slices);

        // Map each element to 1 if a random point in [-1, 1] x [-1, 1]
        // falls inside the unit circle, then sum the results.
        int count = dataSet.map(integer -> {
            double x = Math.random() * 2 - 1;
            double y = Math.random() * 2 - 1;
            return (x * x + y * y <= 1) ? 1 : 0;
        }).reduce((integer, integer2) -> integer + integer2);

        // The fraction of points inside the circle approximates pi / 4.
        System.out.println("Pi is roughly " + 4.0 * count / n);

        spark.stop();
    }
}

Click SparkExample-1.0-SNAPSHOT.jar to download a prebuilt test JAR package.
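The estimate in Example 2 rests on a simple area argument: a point drawn uniformly from the square [-1, 1] x [-1, 1] lands inside the unit circle with probability π/4, so four times the observed fraction approximates π. The following is a minimal plain-Java sketch of the same calculation that runs without Spark, which can be handy for checking the logic locally. The class name LocalPiSketch, the method estimatePi, and the fixed seed are illustrative assumptions and are not part of the sample JAR.

```java
import java.util.Random;

/** Plain-Java sketch of the Monte Carlo estimate used by JavaSparkPi (hypothetical helper, no Spark required). */
final class LocalPiSketch {

    /**
     * Draws n points uniformly from the square [-1, 1] x [-1, 1] and returns
     * 4 * (number of points inside the unit circle) / n.
     */
    static double estimatePi(int n, long seed) {
        Random random = new Random(seed); // a fixed seed keeps runs repeatable
        int inside = 0;
        for (int i = 0; i < n; i++) {
            double x = random.nextDouble() * 2 - 1;
            double y = random.nextDouble() * 2 - 1;
            if (x * x + y * y <= 1) {
                inside++;
            }
        }
        return 4.0 * inside / n;
    }

    public static void main(String[] args) {
        // Same argument convention as JavaSparkPi: an optional partition count.
        int slices = (args.length == 1) ? Integer.parseInt(args[0]) : 2;
        int n = 100000 * slices;
        System.out.println("Pi is roughly " + estimatePi(n, 42L));
    }
}
```

Unlike the Spark version, this sketch uses a seeded java.util.Random instead of Math.random(), so repeated runs with the same seed give the same estimate.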

Step 2: Upload the JAR package

Log on to the EMR console.

In the left navigation pane, choose EMR Serverless > Spark.

On the Spark page, click the name of your workspace.

In the left navigation pane of the workspace, click Artifacts.

On the Artifacts page, click Upload File.

In the Upload File dialog box, click the upload area to select a local JAR package, or drag the package into the area. This guide uses SparkExample-1.0-SNAPSHOT.jar as an example.
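Before uploading, it can help to confirm that the JAR contains the main class you plan to enter in Step 3 and that the Spark libraries marked as provided in Step 1 were not bundled into it. The following is a minimal sketch of such a check using only the Java standard library. The class JarPreflight and its methods are hypothetical helpers, not part of EMR Serverless Spark or Maven, and the example paths in the comments assume a default Maven layout.

```java
import java.io.IOException;
import java.nio.file.Path;
import java.util.jar.JarFile;

/** Hypothetical pre-upload check; not part of EMR Serverless Spark or its tooling. */
final class JarPreflight {

    /** Returns true if the JAR has a .class entry for the given fully qualified class name. */
    static boolean containsClass(Path jar, String className) throws IOException {
        String entryName = className.replace('.', '/') + ".class";
        try (JarFile jarFile = new JarFile(jar.toFile())) {
            return jarFile.getEntry(entryName) != null;
        }
    }

    /** Counts class entries under org/apache/spark/; expected to be 0 when Spark is scoped as provided. */
    static long countBundledSparkClasses(Path jar) throws IOException {
        try (JarFile jarFile = new JarFile(jar.toFile())) {
            return jarFile.stream()
                    .filter(e -> e.getName().startsWith("org/apache/spark/")
                            && e.getName().endsWith(".class"))
                    .count();
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("Usage: JarPreflight <jar-path> <main-class>");
            return;
        }
        Path jar = Path.of(args[0]);  // assumed example: target/SparkExample-1.0-SNAPSHOT.jar
        String mainClass = args[1];   // for example: org.example.JavaSparkPi
        System.out.println("Main class present: " + containsClass(jar, mainClass));
        System.out.println("Bundled Spark classes: " + countBundledSparkClasses(jar));
    }
}
```

A missing main class or a nonzero count of bundled Spark classes usually points back to the build configuration rather than to the upload itself.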

Step 3: Create and run a job

In the left navigation pane, click Development.

On the Development tab, click the icon to create a new job.

Enter a name, set Type to Application(Batch) > JAR, and click OK.

In the upper-right corner, select a resource queue. For instructions on adding a queue, see Manage resource queues.

Configure the following parameters, leave the remaining settings at their defaults, and click Run.

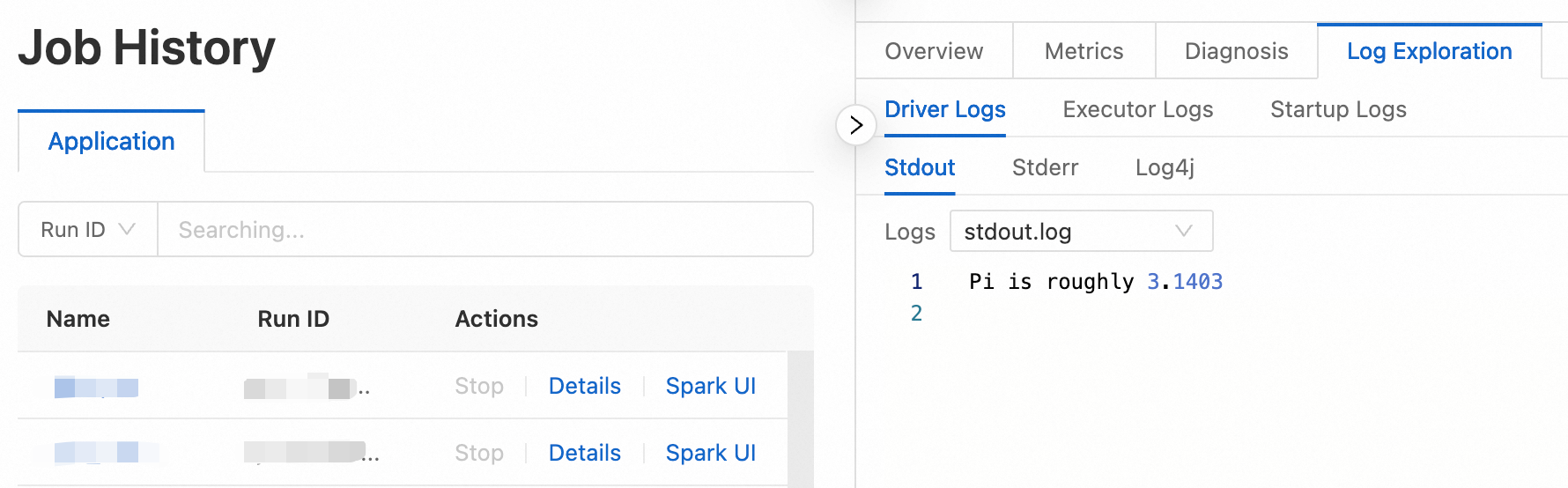

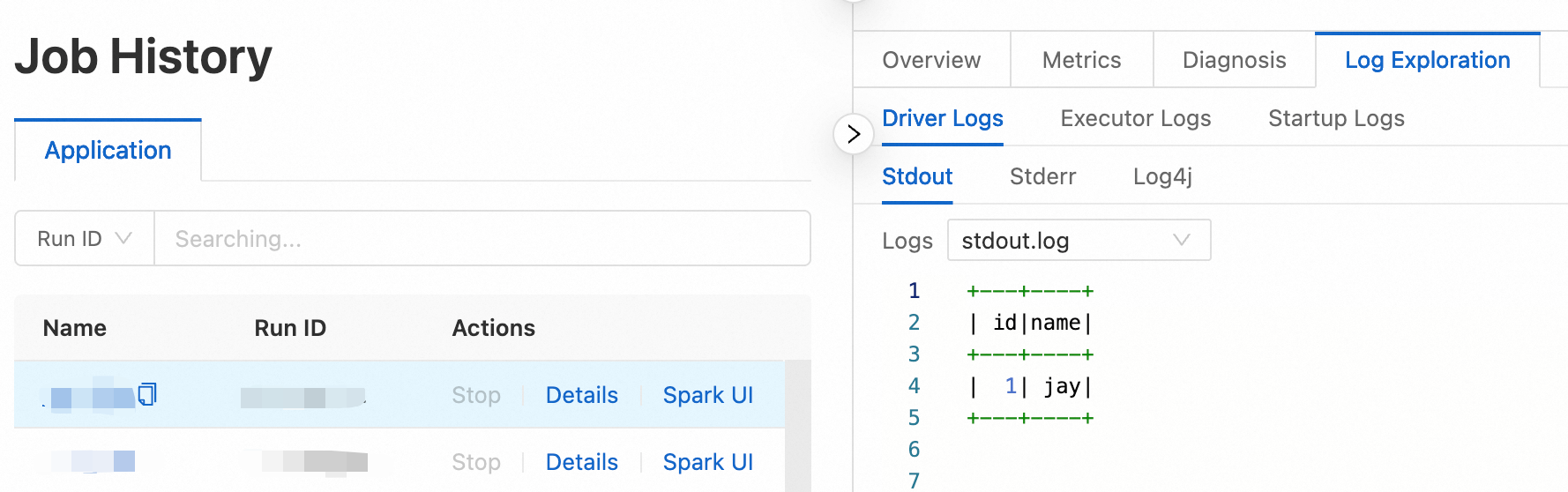

| Parameter | Description |
| --- | --- |
| Main JAR Resource | Select the JAR package uploaded in Step 2. In this example, select SparkExample-1.0-SNAPSHOT.jar. |
| Main Class | The entry point class for your Spark job. Enter org.example.JavaSparkPi for the pi example, or org.example.HiveTableAccess for the DLF table query. |

After the job runs, go to the Execution Records section and click Logs in the Actions column to view the output.
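The Main Class value must be a fully qualified class name that exposes a public static main(String[]) method, because a launcher typically loads the class by name and invokes that method. The following sketch illustrates the idea with standard Java reflection. It is an assumption about how such a lookup can work in general, not a description of the EMR Serverless Spark implementation, and MainClassSketch and resolveMain are hypothetical names.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;
import java.util.Arrays;

/** Sketch of resolving an entry point by class name; not the EMR Serverless Spark implementation. */
final class MainClassSketch {

    /** Loads the class by its fully qualified name and returns its public static main(String[]) method. */
    static Method resolveMain(String className) throws ReflectiveOperationException {
        Class<?> mainClass = Class.forName(className);             // fails if the name is misspelled
        Method main = mainClass.getMethod("main", String[].class); // finds public methods only
        if (!Modifier.isStatic(main.getModifiers())) {
            throw new NoSuchMethodException(className + ".main must be static");
        }
        return main;
    }

    public static void main(String[] args) throws ReflectiveOperationException {
        if (args.length < 1) {
            System.err.println("Usage: MainClassSketch <main-class> [job-args...]");
            return;
        }
        // args[0] is the main class name; the remaining arguments are passed through to it.
        String[] jobArgs = Arrays.copyOfRange(args, 1, args.length);
        resolveMain(args[0]).invoke(null, (Object) jobArgs);
    }
}
```

A typo in the class name or a missing package prefix surfaces here as a ClassNotFoundException, which is why the full name such as org.example.JavaSparkPi is entered rather than JavaSparkPi alone.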

Step 4: Publish the job

Publishing a job makes it available as a node in a workflow.

After the job completes, click Publish in the upper-right corner.

In the dialog box, enter the release information and click OK.

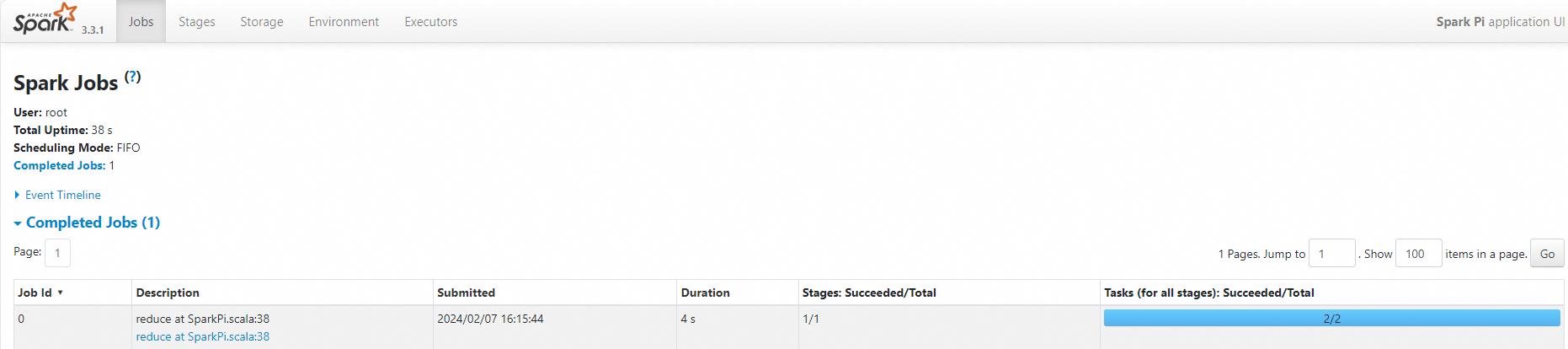

(Optional) Step 5: View the Spark UI

After the job runs successfully, inspect its execution details on the Spark UI.

In the left navigation pane, click Job History.

On the Application page, find your job and click Spark UI in the Actions column.

On the Spark Jobs page, view the job details.

What's next

After publishing, use your job as a scheduled node in a workflow. See Manage workflows for details. For a complete walkthrough of job orchestration, see Get started with SparkSQL development.