Global Spark parameters let you set workspace-level Spark properties for EMR tasks across multiple DataWorks modules, and optionally make those properties take precedence over per-module settings.

How it works

DataWorks provides two ways to configure Spark parameters for scheduling nodes:

| Method | Scope | Where to configure |
|---|---|---|
| Global Spark parameters (this topic) | Workspace level | Management Center > Cluster Management > SPARK Parameters |
| Per-module Spark parameters | Individual node | Scheduling Configurations panel on the node editing page (Data Development / Data Studio only) |

Conflict resolution: When the same Spark parameter is configured in both the DataWorks Management Center and the E-MapReduce (EMR) console, the Management Center configuration takes precedence for tasks submitted from DataWorks.

Priority override: When you enable Global Configuration Has Priority, global Spark properties override any per-module configuration.
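The two rules above can be sketched as a simple layered merge. This is only an illustrative model, not DataWorks code: the function name `resolve_spark_properties` and its arguments are hypothetical, and two behaviors are assumptions that this topic does not state, namely that per-module settings win over global settings when Global Configuration Has Priority is not selected, and that per-module settings also win over EMR console settings.

```python
from typing import Dict


def resolve_spark_properties(
    emr_console: Dict[str, str],
    global_props: Dict[str, str],
    module_props: Dict[str, str],
    global_has_priority: bool,
) -> Dict[str, str]:
    """Return the effective Spark properties under the assumed precedence model."""
    # Lowest precedence: values configured in the EMR console.
    effective = dict(emr_console)
    if global_has_priority:
        # Documented rule: global properties override per-module properties.
        effective.update(module_props)
        effective.update(global_props)
    else:
        # Assumption: without the priority option, per-module values win on conflict.
        effective.update(global_props)
        effective.update(module_props)
    return effective


emr = {"spark.executor.memory": "2g", "spark.executor.cores": "2"}
workspace = {"spark.executor.memory": "4g"}
node = {"spark.executor.memory": "8g"}

print(resolve_spark_properties(emr, workspace, node, global_has_priority=True))
print(resolve_spark_properties(emr, workspace, node, global_has_priority=False))
```

In this model, a property that is set only in the EMR console is still carried through, and only conflicting keys are affected by the priority option.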

Limitations

Only the following roles can configure global Spark parameters:

An Alibaba Cloud account

A Resource Access Management (RAM) user or RAM role with the AliyunDataWorksFullAccess permission

A RAM user with the Workspace Administrator role

Global Spark parameters apply only to the following node types:

Global Spark parameters can only be set for these modules: Data Development (Data Studio), Data Quality, Data Analysis, and Operation Center.

Prerequisites

Before you begin, ensure that you have:

An EMR cluster registered to DataWorks. For more information, see DataStudio (old version): Associate an EMR computing resource.

One of the roles listed in Limitations

Configure global Spark parameters

Log on to the DataWorks console. In the top navigation bar, select the target region.

In the left-side navigation pane, choose More > Management Center. Select the target workspace from the drop-down list, then click Go to Management Center.

In the left-side navigation pane of the SettingCenter page, click Cluster Management.

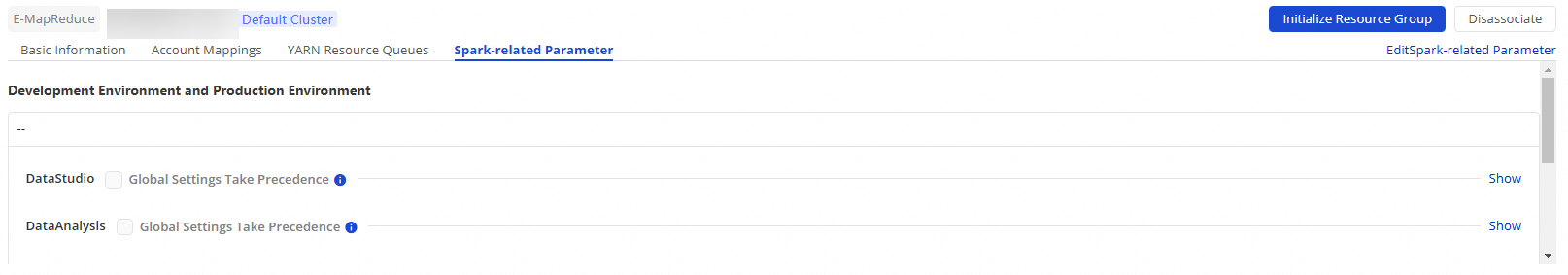

Find the target EMR cluster and click SPARK Parameters.

Click Edit SPARK Parameters in the upper-right corner.

Note: Global Spark parameters apply at the workspace level. Confirm the target workspace before making changes.

Configure the following parameters for each module:

Note: To enable Ranger access control for Spark in DataWorks, add `spark.hadoop.fs.oss.authorization.method` with the value `ranger` as a Spark property to ensure that Ranger access control is enabled.

| Parameter | Description |
|---|---|
| Spark property | The Spark properties (Spark Property Name and Spark Property Value) applied when a module runs EMR tasks. For valid property names and values, see Spark Configurations and Spark Configurations on Kubernetes. |
| Global Configuration Has Priority | If selected, global Spark properties override per-module configurations. In this case, tasks are run based on the globally configured Spark properties. |

Save the configuration.
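Before typing name and value pairs into the console, it can help to draft them and run a quick sanity check. The sketch below is a hypothetical local helper, not part of DataWorks: `check_property_rows` is an invented name, and the `spark.` prefix check reflects Spark's own naming convention rather than any validation DataWorks is known to perform. The Ranger property is the one named in the note in this step.

```python
from typing import Dict, List

# Property from the note in this step: enables Ranger access control for Spark.
RANGER_PROPERTY = {"spark.hadoop.fs.oss.authorization.method": "ranger"}


def check_property_rows(props: Dict[str, str]) -> List[str]:
    """Return a list of problems found in drafted Spark property rows."""
    problems = []
    for name, value in props.items():
        # Spark property names conventionally start with "spark.".
        if not name.startswith("spark."):
            problems.append(f"{name!r}: name does not start with 'spark.'")
        # Stray whitespace is easy to paste in and hard to see in a form field.
        if name != name.strip() or value != value.strip():
            problems.append(f"{name!r}: leading or trailing whitespace")
        if not value.strip():
            problems.append(f"{name!r}: empty value")
    return problems


draft = dict(RANGER_PROPERTY)
draft["spark.executor.memory"] = "4g"

for problem in check_property_rows(draft):
    print(problem)
```

An empty result only means the rows pass these basic checks; valid names and values are still defined by the Spark configuration references cited above.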

What's next

To monitor and manage EMR tasks after configuration, see Operation Center overview.

For the full list of available Spark properties, see the Spark configuration reference.