CDH Hive nodes let you run Apache Hive tasks in DataWorks against a registered Cloudera's Distribution Including Apache Hadoop (CDH) cluster to query data or process it in batches.

Prerequisites

Before you begin, ensure that you have:

A workflow created in DataStudio. All nodes are created inside workflows. See Create a workflow.

A CDH cluster registered to DataWorks. See DataStudio (old version): Associate a CDH computing resource.

(Required for RAM users) The RAM user added to the workspace as a member with the Development or Workspace Administrator role. The Workspace Administrator role grants more permissions than most users need, so assign it with caution. See Add workspace members and assign roles to them.

A serverless resource group purchased and configured, with workspace association and network settings complete. See Create and use a serverless resource group.

Limitations

CDH Hive nodes run on serverless resource groups or old-version exclusive resource groups for scheduling. We recommend that you use a serverless resource group.

Step 1: Create a CDH Hive node

Log on to the DataWorks console. In the top navigation bar, select the target region. In the left-side navigation pane, choose Data Development and O&M > Data Development. Select the target workspace from the drop-down list and click Go to Data Development.

On the DataStudio page, find the target workflow, right-click the workflow name, and choose Create Node > CDH > CDH Hive.

Alternatively, hover over the Create icon at the top of the Scheduled Workflow pane and create a CDH node from there.

In the Create Node dialog box, enter a value for Name and click Confirm.

Step 2: Develop a Hive task

Double-click the node name to open its configuration tab.

Select a CDH compute engine instance (optional)

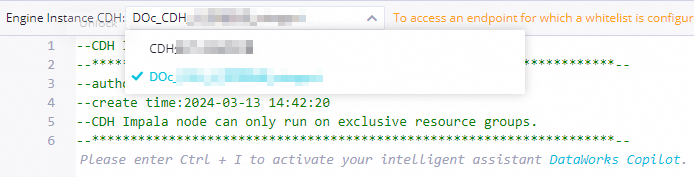

If multiple CDH clusters are registered to the workspace, select the target cluster from the Engine Instance CDH drop-down list. If only one cluster is registered, skip this step.

Write SQL code

Enter your Hive SQL in the SQL editor. Example:

-- List the tables in the current database.
SHOW TABLES;

-- Return all rows from the example table userinfo.
SELECT * FROM userinfo;

Use scheduling parameters

DataWorks scheduling parameters let you inject dynamic values into task code at runtime. Define variables in your SQL using ${Variable} syntax, then assign values in the Scheduling Parameter section of the Properties tab.

SELECT '${var}'; -- ${var} is replaced at runtime with the value assigned to var.

For supported formats, see Supported formats of scheduling parameters.
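To make the runtime replacement concrete, the following Python sketch models only the ${...} substitution step: it lists the variables that a piece of SQL references and replaces each one with an assigned value. It is an illustration, not DataWorks code. The function names find_variables and substitute, the pattern used for variable names, and the choice to raise an error when a variable has no value are assumptions made for this example; DataWorks' own handling of built-in parameters and unassigned variables may differ.

```python
from __future__ import annotations

import re

# Matches ${name} placeholders as written in the SQL code.
# Assumption (for this sketch only): names consist of letters, digits, and underscores.
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def find_variables(sql: str) -> list[str]:
    """Return the distinct variable names referenced in sql, in order of first use."""
    seen: list[str] = []
    for name in _PLACEHOLDER.findall(sql):
        if name not in seen:
            seen.append(name)
    return seen


def substitute(sql: str, assignments: dict[str, str]) -> str:
    """Replace each ${name} with its assigned value.

    Design choice of this sketch (not necessarily DataWorks behavior):
    raise KeyError if any referenced variable has no assigned value.
    """
    missing = [name for name in find_variables(sql) if name not in assignments]
    if missing:
        raise KeyError(f"no value assigned for: {', '.join(missing)}")
    return _PLACEHOLDER.sub(lambda m: assignments[m.group(1)], sql)


if __name__ == "__main__":
    code = "SELECT '${var}';"
    print(find_variables(code))                    # ['var']
    print(substitute(code, {"var": "20240101"}))   # SELECT '20240101';
```

The value 20240101 is a hypothetical test value. In DataWorks you assign values in the Scheduling Parameter section of the Properties tab, or in the Parameters dialog box when debugging.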

Step 3: Configure task scheduling properties

To run the task on a schedule, click Properties in the right-side navigation pane and configure the following:

Basic properties: See Configure basic properties.

Scheduling cycle, rerun properties, and scheduling dependencies: See Configure time properties and Configure same-cycle scheduling dependencies.

Resource properties: See Configure the resource property. If the node is an auto triggered node that needs internet or virtual private cloud (VPC) access, select a resource group for scheduling that has network connectivity. See Network connectivity solutions.

You must configure the Rerun and Parent Nodes parameters on the Properties tab before you commit the task.

Step 4: Debug task code

(Optional) In the top toolbar, click the icon that opens the Parameters dialog box. In the Parameters dialog box, select the resource group to use for debugging. If your code uses scheduling parameters, assign test values to the variables here. See Differences in scheduling parameter value assignment across run modes.

Click the save icon to save the code, then click the run icon to run the SQL statements.

(Optional) Run smoke testing in the development environment during or after the commit step. See Perform smoke testing.

What's next

Commit and deploy the task

Click the save icon to save the task.

Click the commit icon to commit the task.

In the Submit dialog box, enter a Change description and click Confirm.

If the workspace uses standard mode, deploy the task to the production environment after committing: click Deploy in the top navigation bar of the DataStudio page. See Deploy tasks.

View the task in Operation Center

Click Operation Center in the upper-right corner of the node configuration tab to go to Operation Center in the production environment. See View and manage auto triggered tasks.

For a full overview of Operation Center, see Overview.