By Haoran

Check the documentation for Solution 1: Build Your Llama2 LLM Solution with PAI-EAS and AnalyticDB for PostgreSQL.


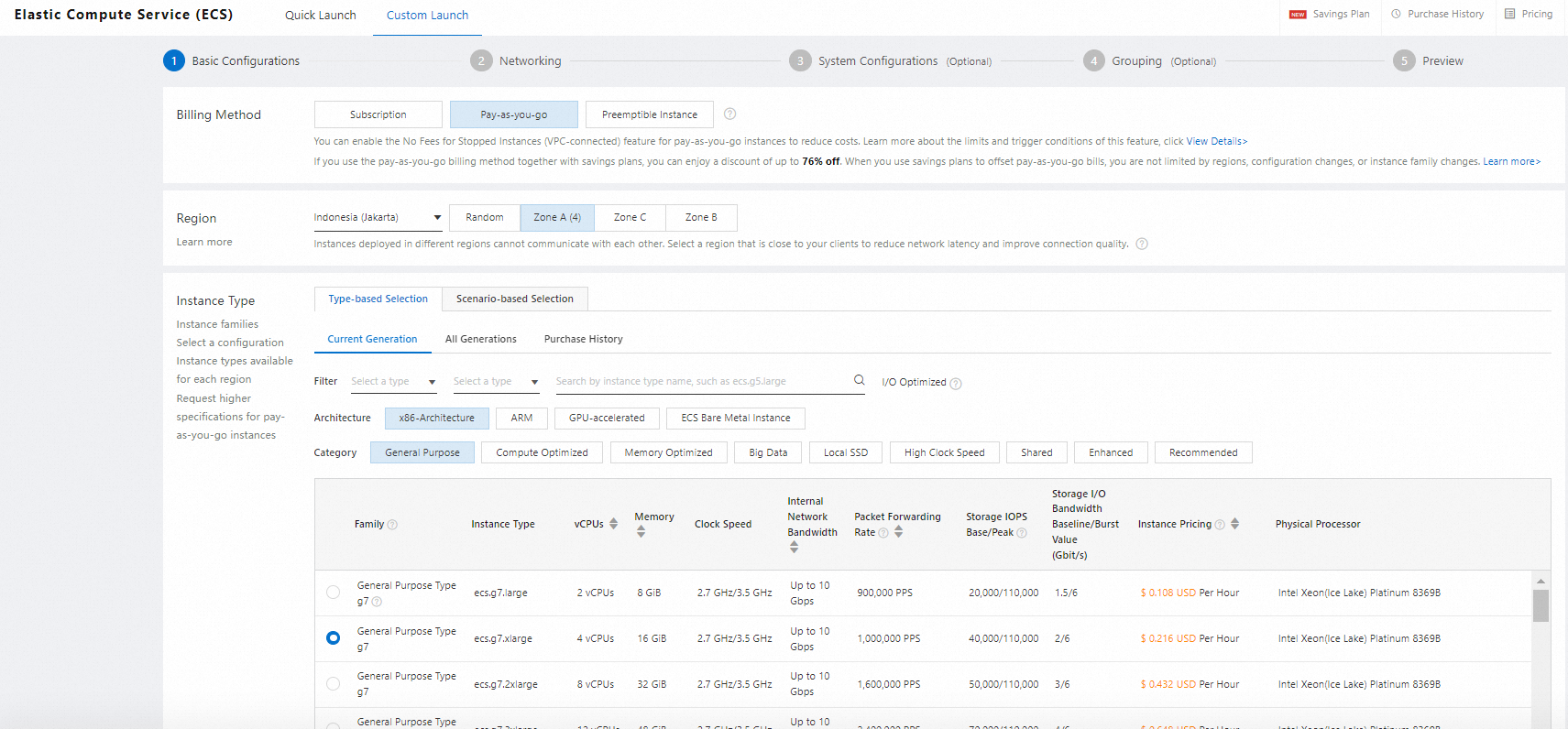

Choose the instance type, e.g., ecs.g7.xlarge (4 vCPUs and 16 GiB of memory), and select Pay-As-You-Go.

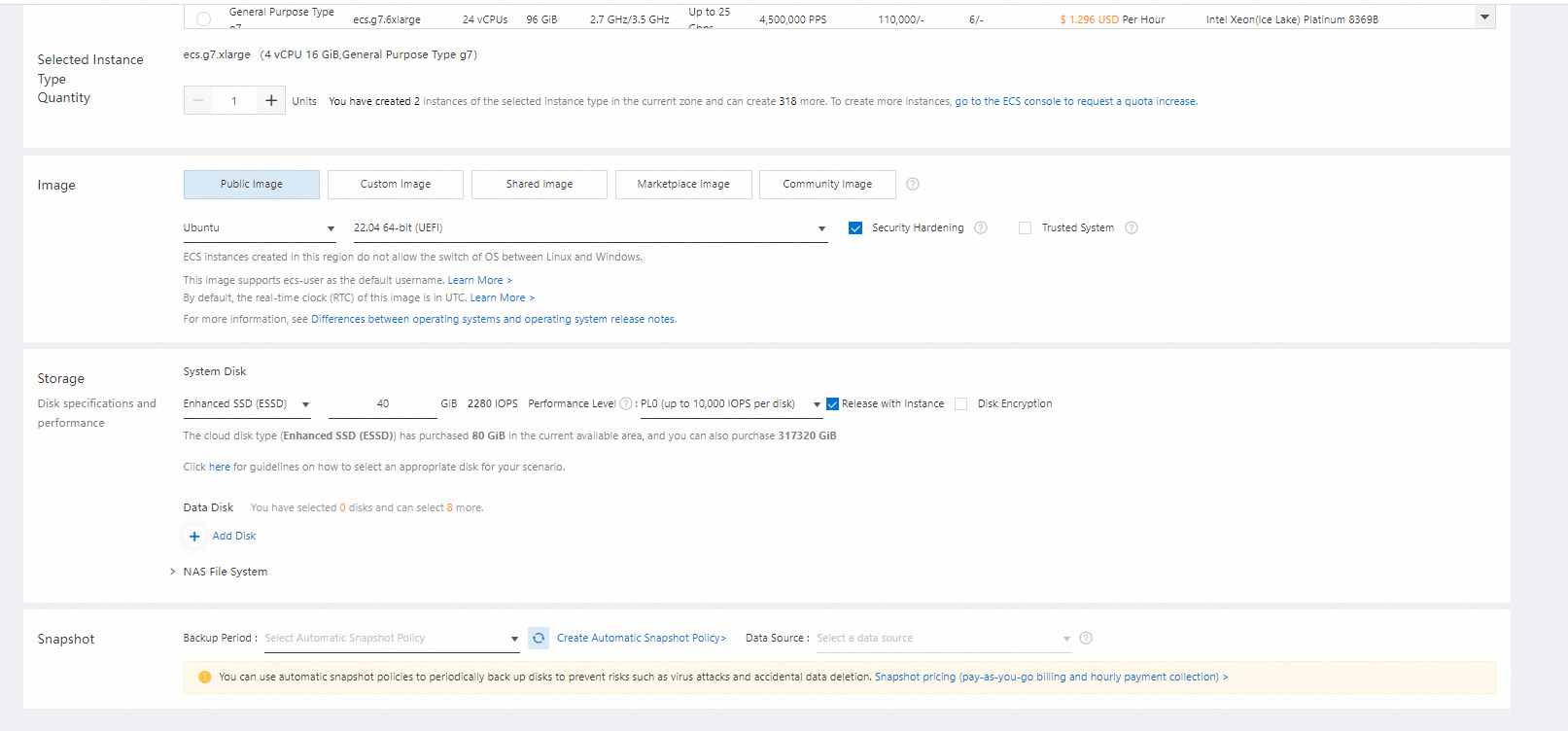

Select Public Image, then choose Ubuntu 22.04 64-bit.

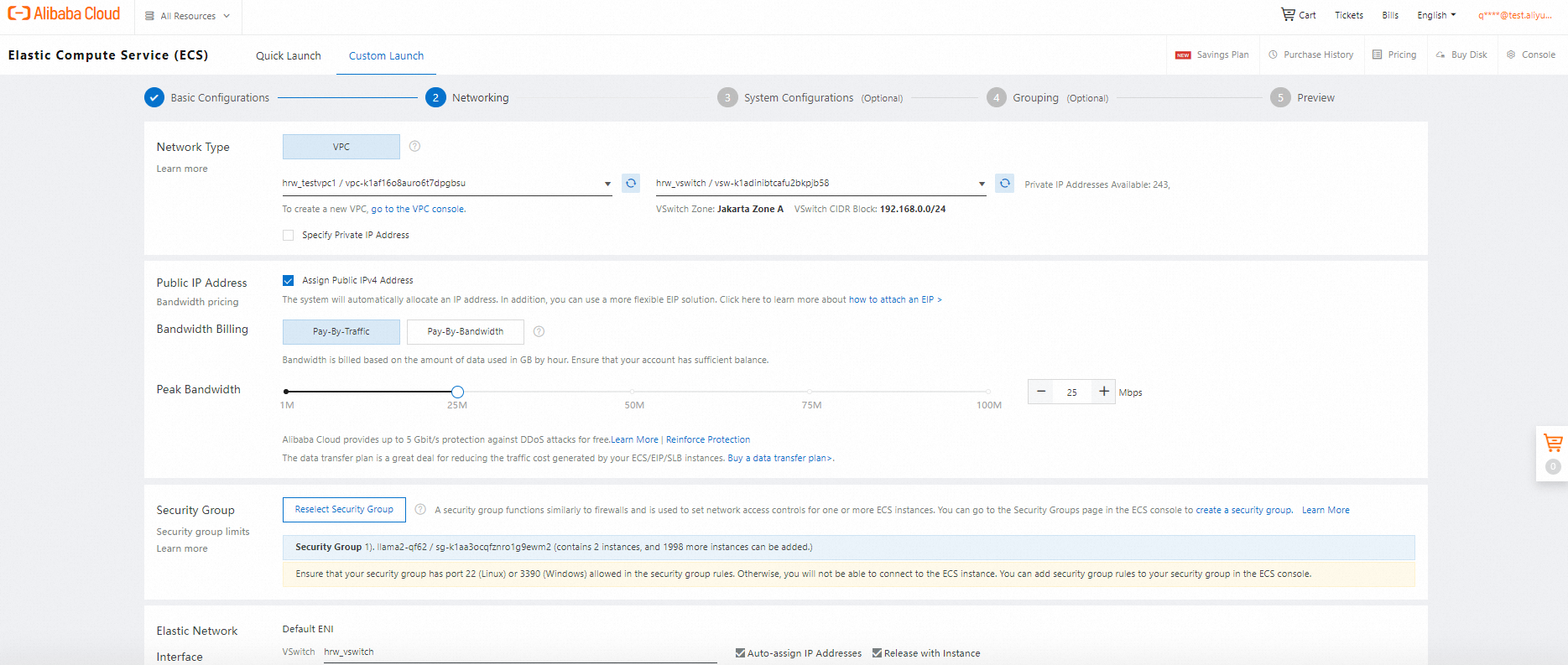

Specify the VPC, vSwitch, and security group. If you have not created them yet, click Go to the VPC Console to create them, then refresh the page and configure them. For the network, select Assign Public IPv4 Address. Do not select ENI this time; otherwise, the coupon cannot be applied to the public network part.

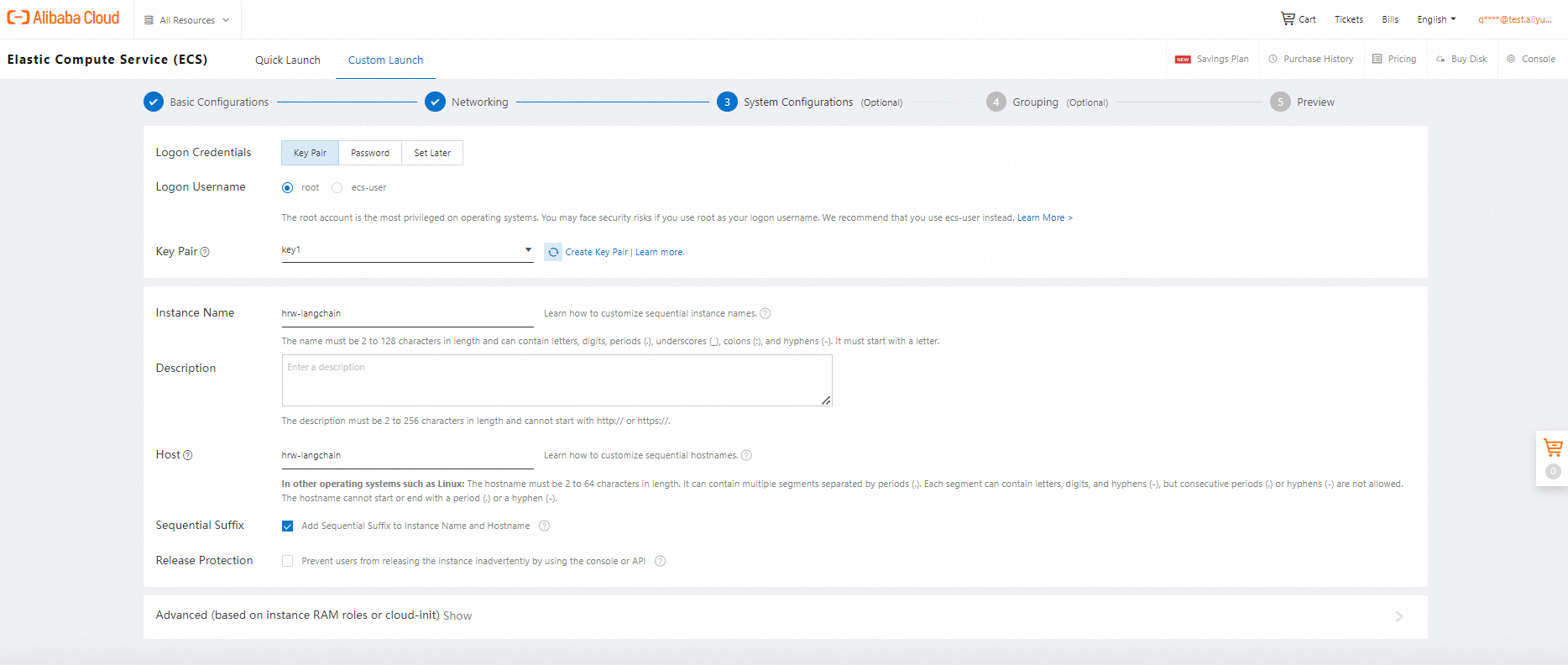

Select Key Pair, then create a key pair and configure it. The private key file you download is your key.pem. You can then change the instance name and hostname, and select Sequential Suffix.

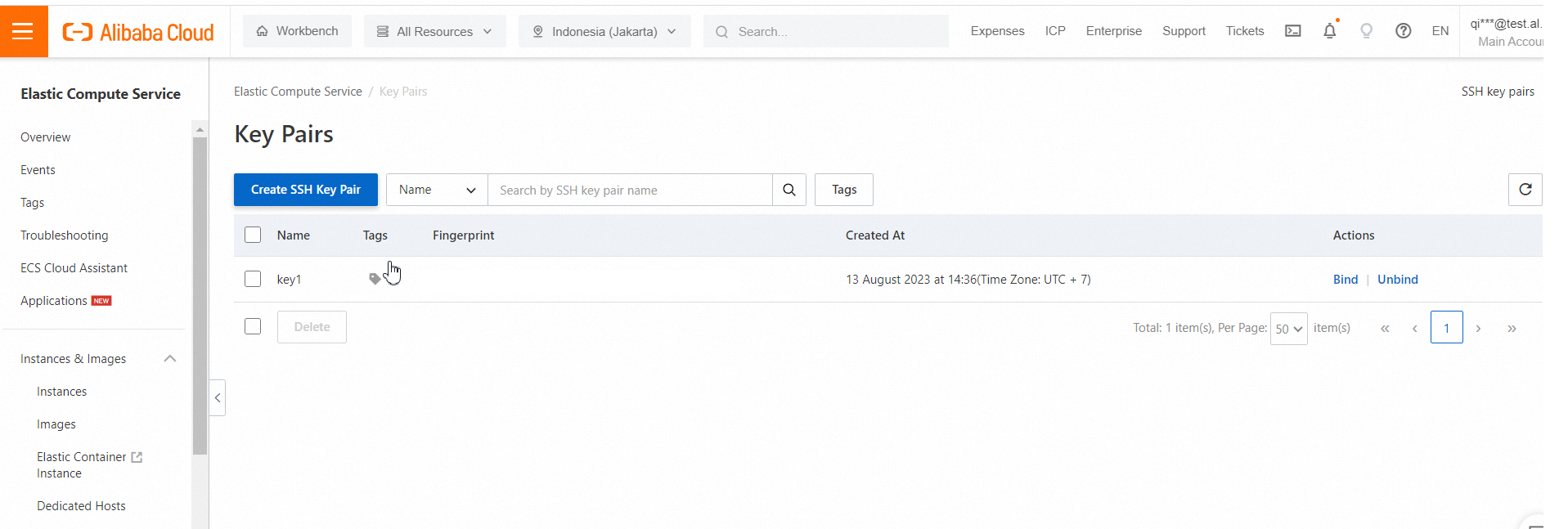

The following screenshot shows how to create a key pair.

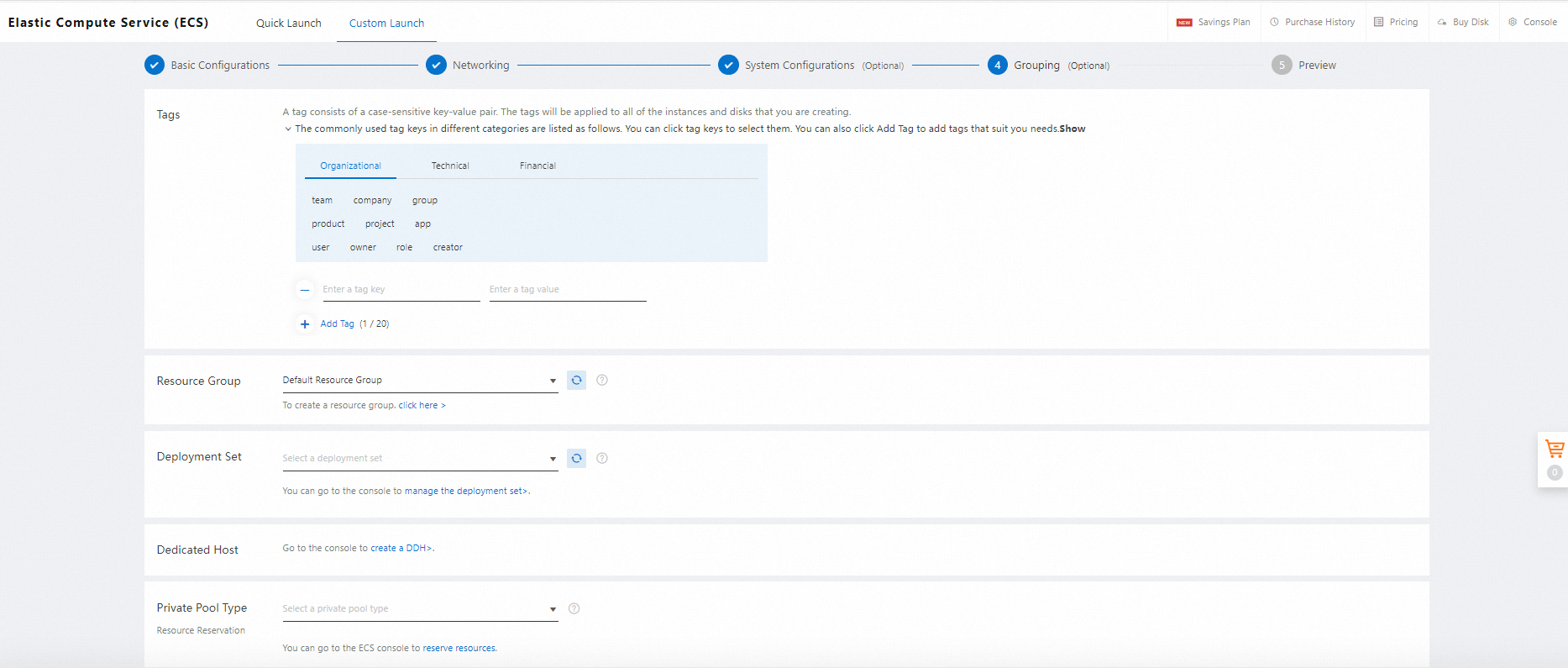

You can then add the instance to the same resource group for easier management.

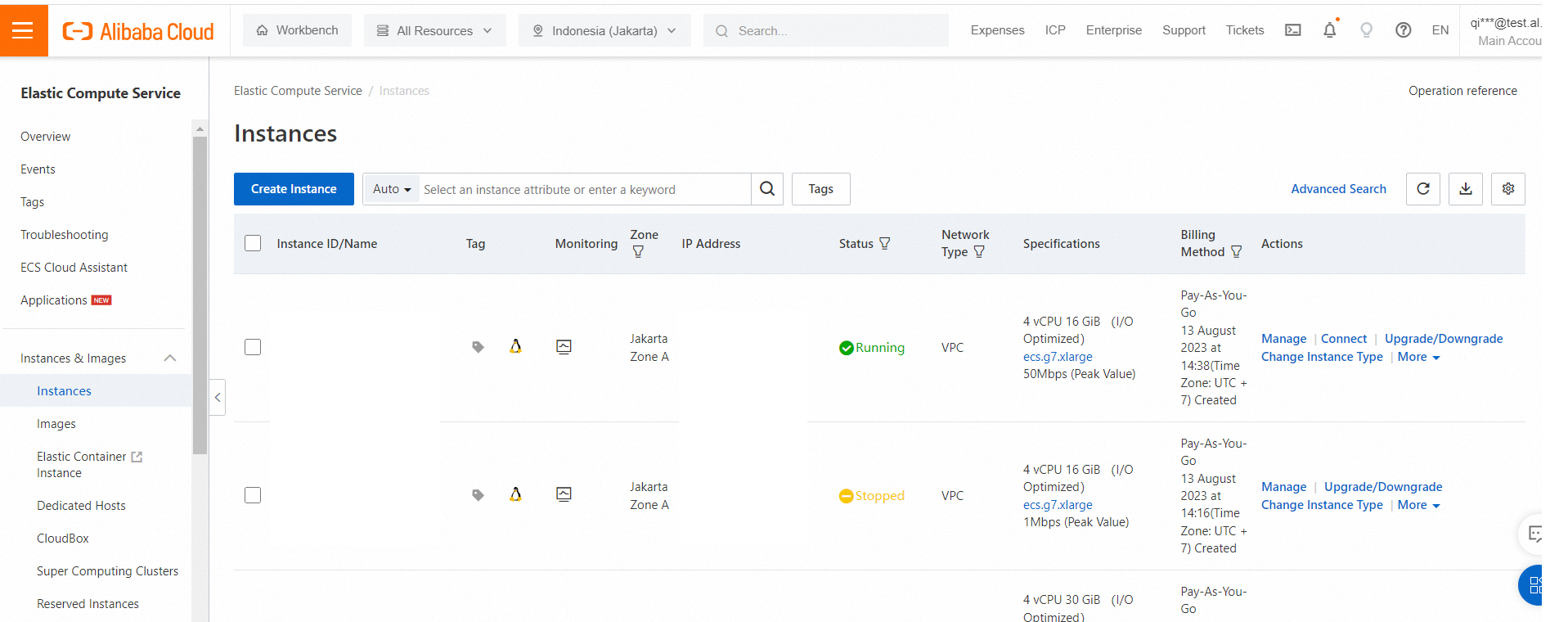

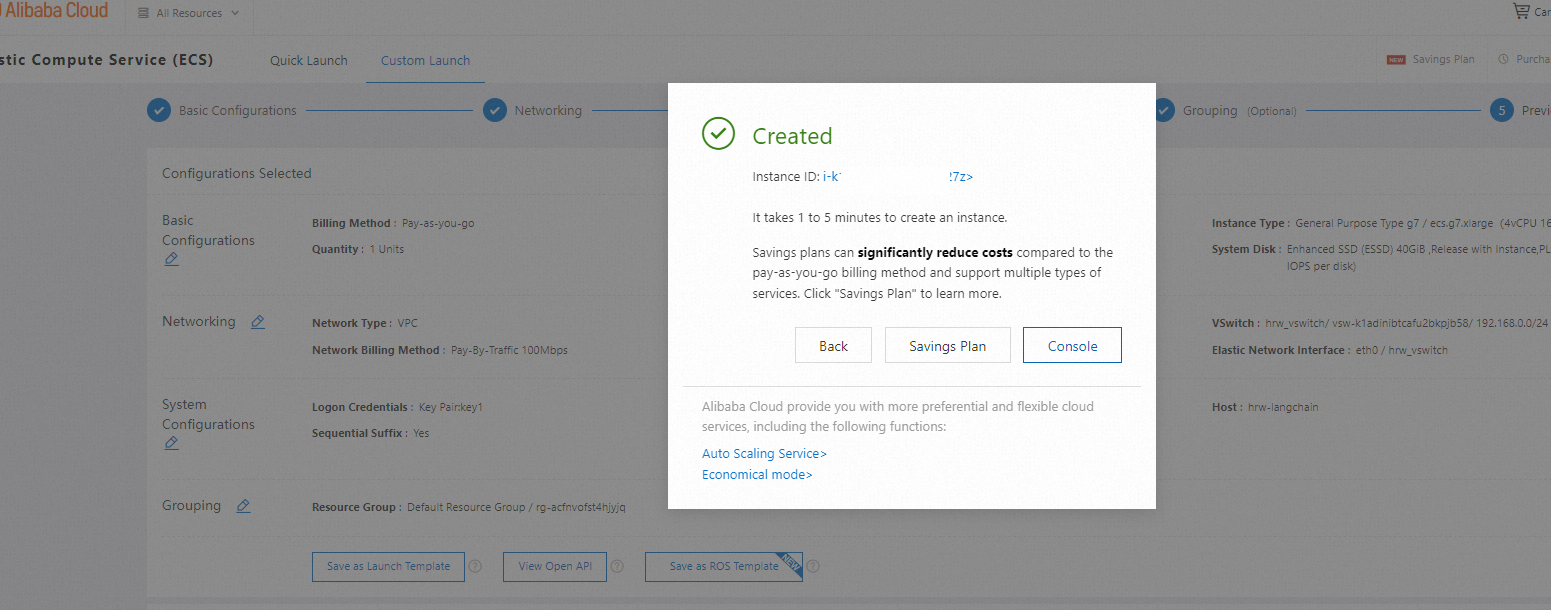

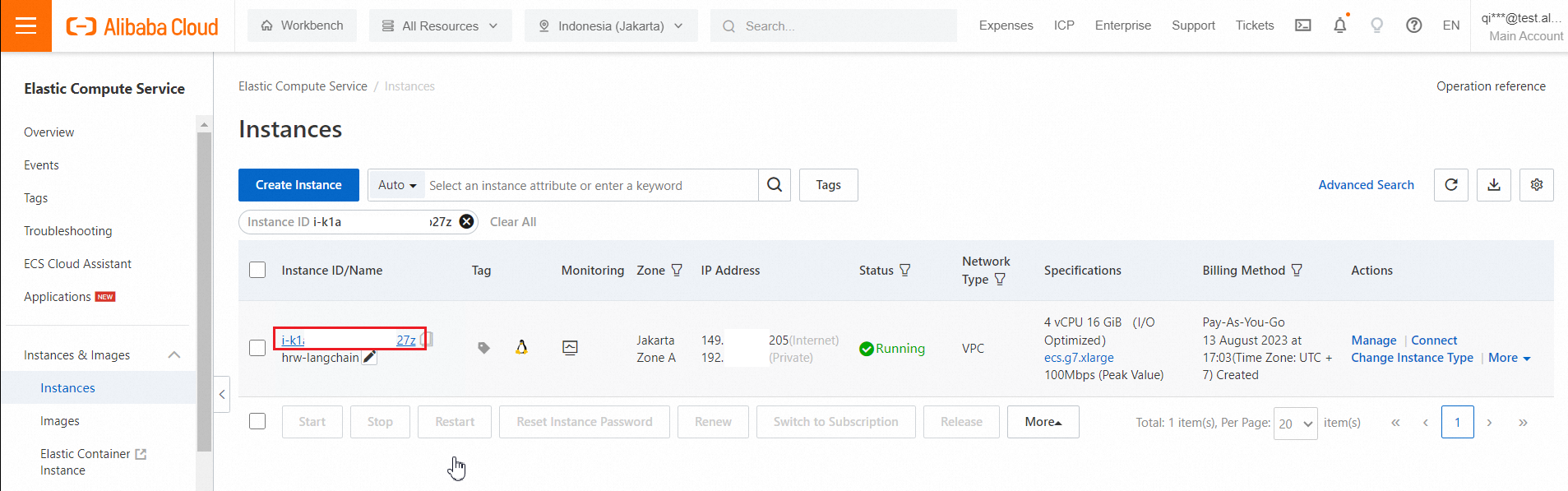

After the final preview, click Create Instance. Once the instance is created, click Console to check it. You do not need to select a savings plan this time.

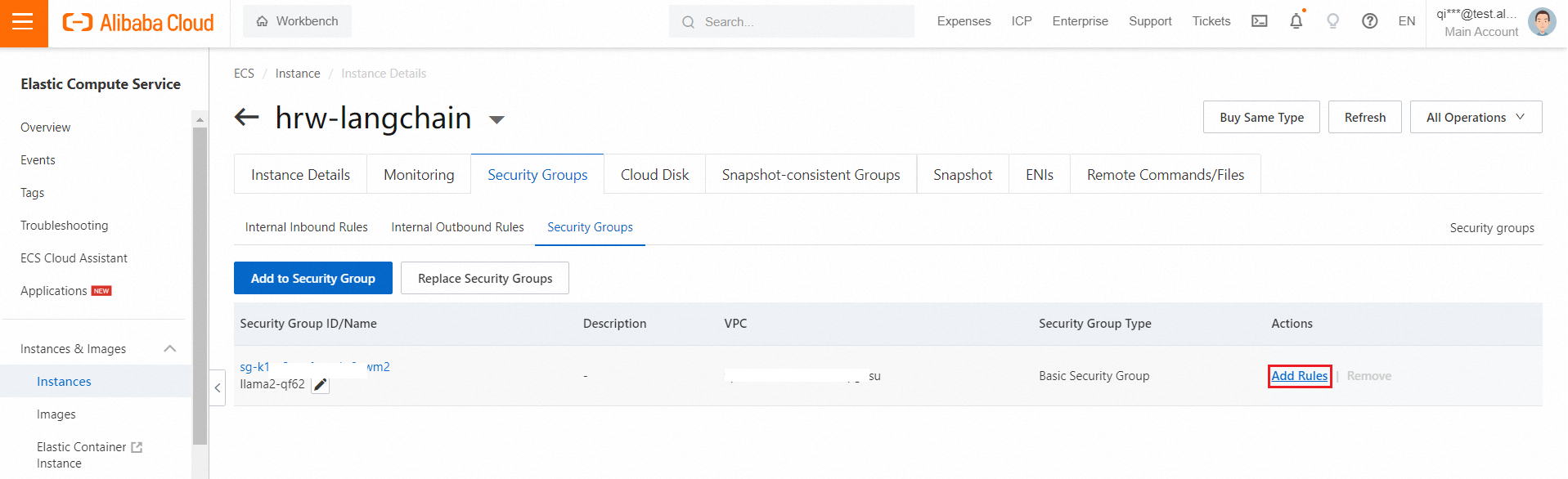

3. Configure the security group

Click the instance to view its details.

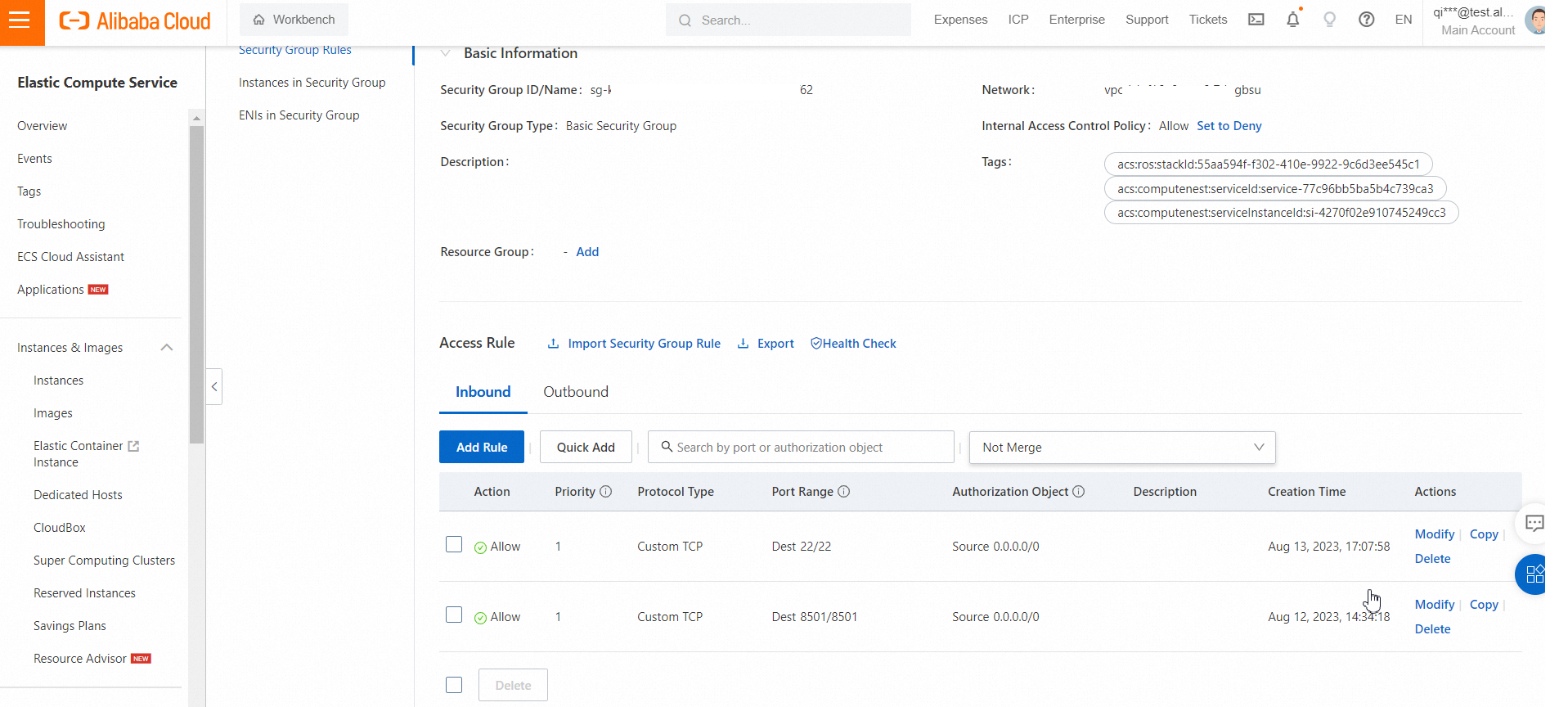

Click Add Rules.

Then click Add Rule to open port 22 for SSH, with 0.0.0.0/0 (any IPv4 address) as the source address.

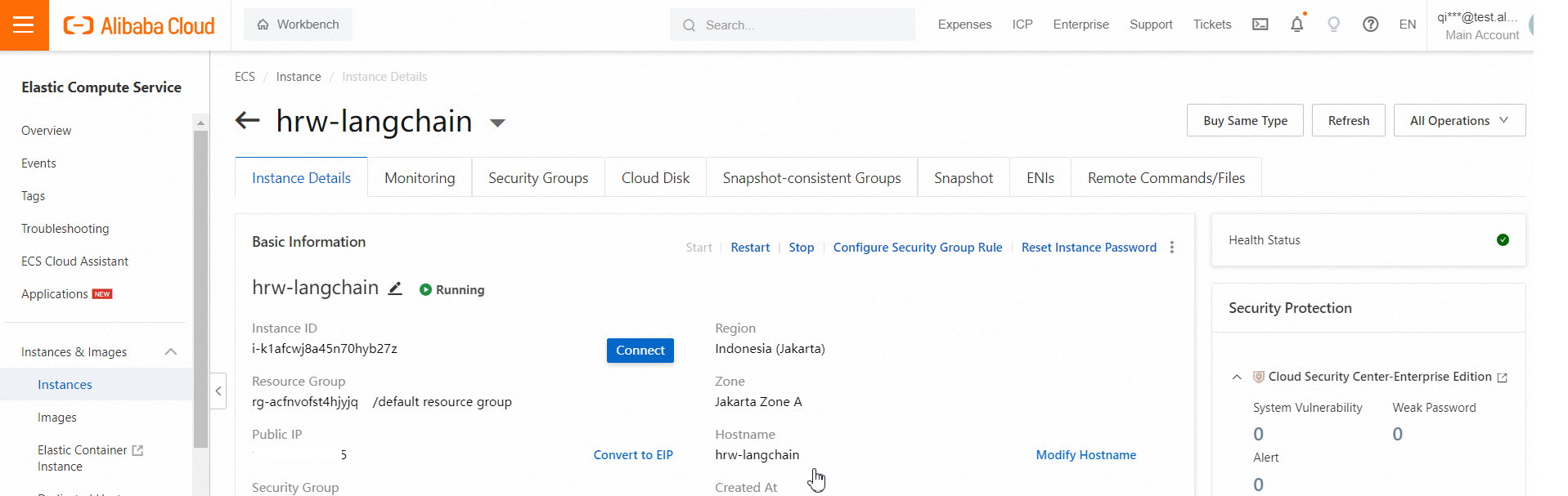

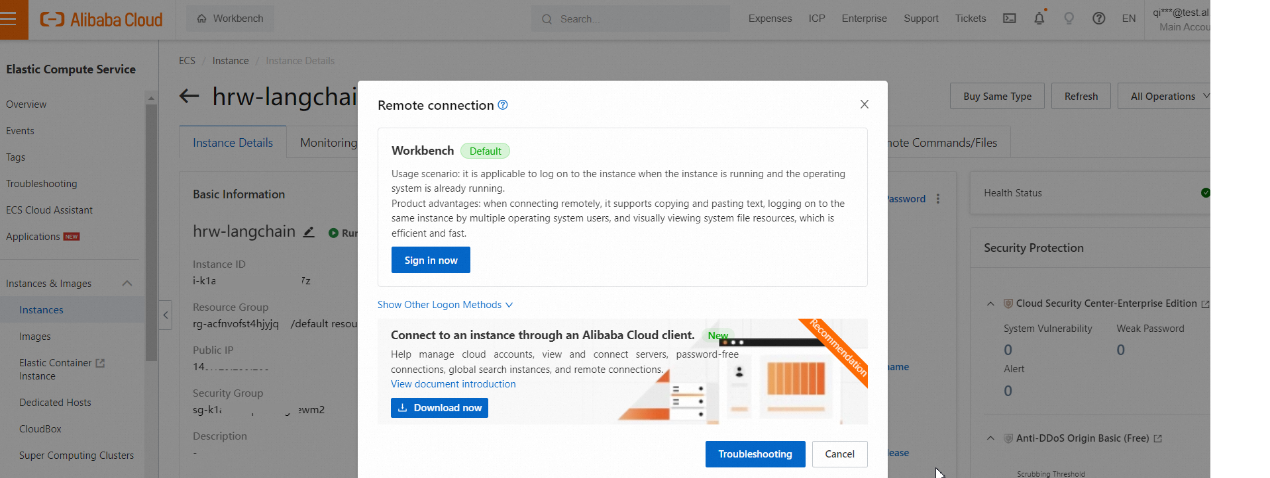
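You may want to confirm the new rule from your own machine before using Workbench. The following Python sketch tries to open a TCP connection to port 22. It only shows whether the port is reachable through the security group (and any OS firewall); it does not test SSH key authentication. The helper name `port_is_open` is hypothetical and not part of any Alibaba Cloud tool. Pass your instance's public IPv4 address as `host`.

```python
import socket


def port_is_open(host: str, port: int = 22, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds.

    Hypothetical helper for illustration: a successful connect only shows that
    the port is reachable; it says nothing about SSH key authentication.
    """
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts (socket.timeout is an OSError
        # subclass), and DNS resolution failures.
        return False
```

For example, `port_is_open("<your-public-ip>")` should return `True` once the rule is in effect. The placeholder address is illustrative.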

4. Test your connection in the web-based Workbench

Click Connect on the instance details page.

Click Sign in now.

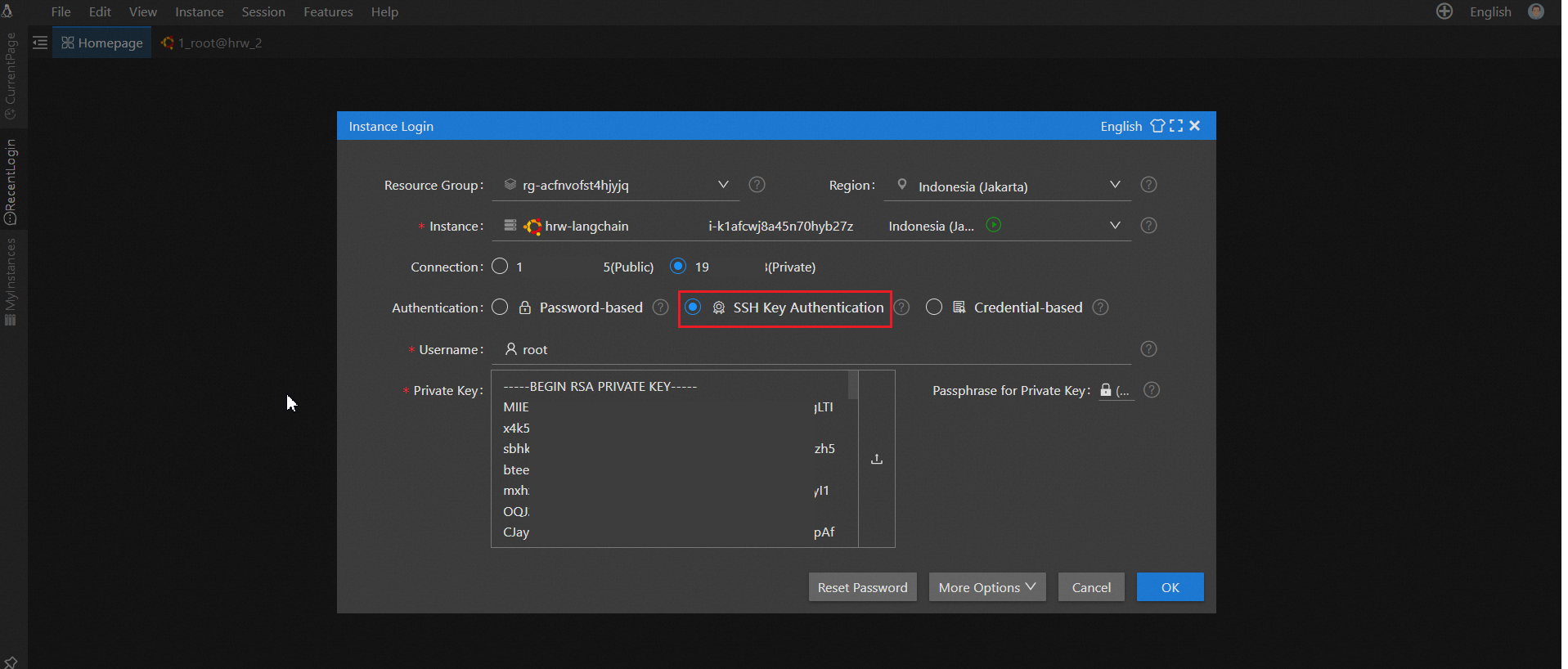

Select SSH Key Authentication to log on to the instance.

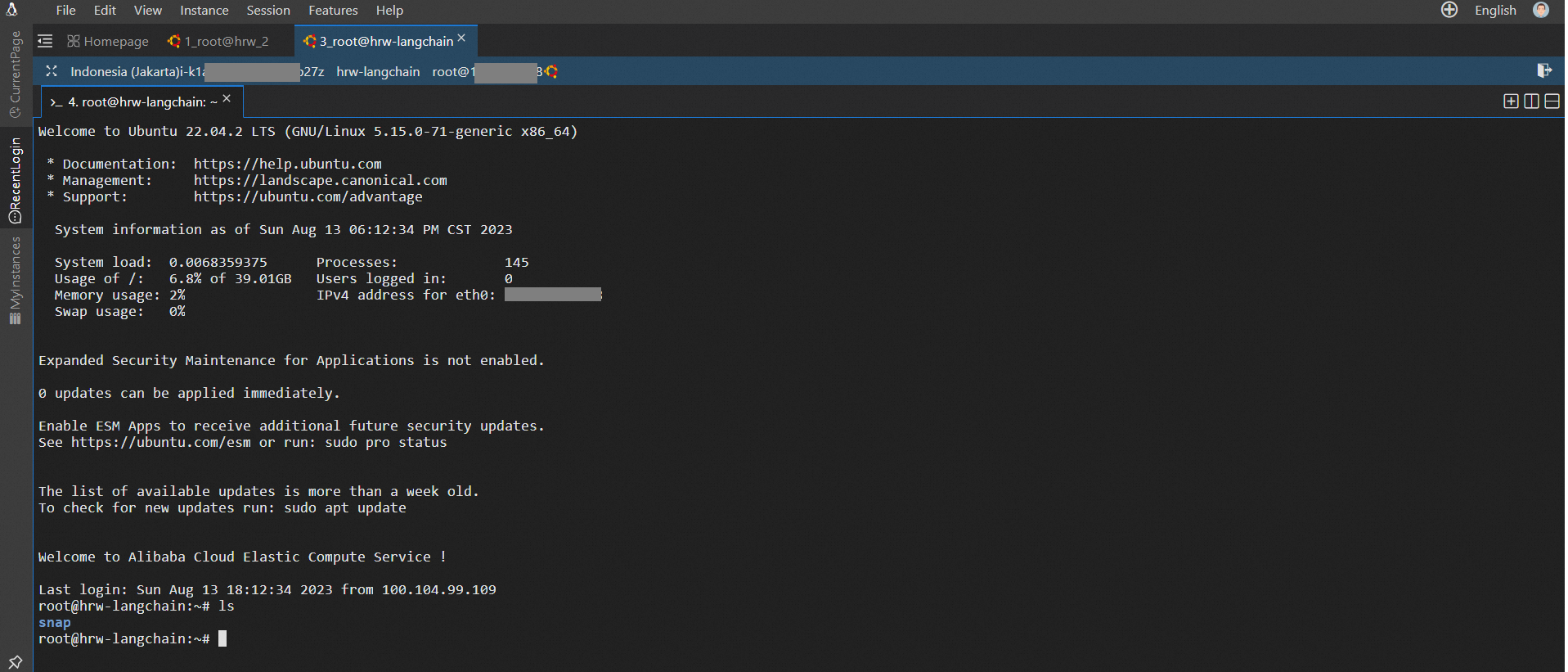

Then you can successfully logon as is shown in the following picture.

1. Install docker.io on ECS instance.

apt-get -y update

apt-get -y install docker.io 2. Start the docker server.

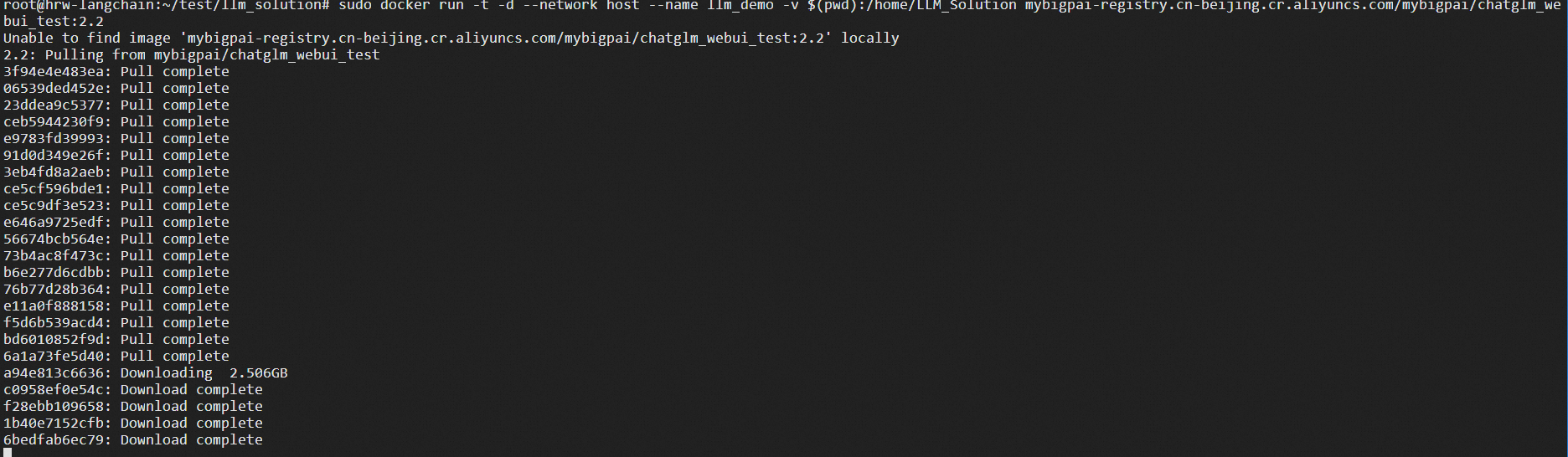

systemctl start docker 3. Run the following command to mount the project to the Docker container and start it.

sudo docker run -t -d --network host --name llm_demo mybigpai-registry.cn-beijing.cr.aliyuncs.com/mybigpai/chatglm_webui_test:2.2

It takes around 15-25 minutes to download, depending on network speed. This image will be available only during the Generative AI Hackathon event period. If you want to use this image, please, feel free to contact us.

4. Enter the Docker container.

```shell
docker exec -it llm_demo bash
```

5. Open the configuration file (config.json).

```shell
vim config.json
```

6. Change the file as follows and save it. Replace the placeholder values in `EASCfg` and `ADBCfg` with your own PAI-EAS service URL and token and your AnalyticDB for PostgreSQL connection details.

```json
{
  "embedding": {
    "model_dir": "/code/",
    "embedding_model": "SGPT-125M-weightedmean-nli-bitfit",
    "embedding_dimension": 768
  },
  "EASCfg": {
    "url": "http://xxxxx.pai-eas.aliyuncs.com/api/predict/llama2_model",
    "token": "xxxxxx"
  },
  "ADBCfg": {
    "PG_HOST": "gp-xxx.gpdbmaster.ap-southeast-5.rds.aliyuncs.com",
    "PG_DATABASE": "postgres",
    "PG_USER": "pg_user",
    "PG_PASSWORD": "password"
  },
  "create_docs": {
    "chunk_size": 200,
    "chunk_overlap": 0,
    "docs_dir": "docs/",
    "glob": "**/*"
  },
  "query_topk": 4,
  "prompt_template": "Answer user questions concisely and professionally based on the following known information. If you cannot get an answer from it, please say \"This question cannot be answered based on known information\" or \"Insufficient relevant information has been provided\", no fabrication is allowed in the answer, please use English for the answer. \n=====\nKnown information:\n{context}\n=====\nUser question:\n{question}"
}
```

7. Run the following command to upload the documents in `docs_dir`.

```shell
python main.py --config config.json --upload true
```

8. Run the following command to make a query.

```shell
python main.py --config config.json --query "what is PAI?"
```
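The following Python sketch makes the `create_docs`, `query_topk`, and `prompt_template` settings more concrete. It shows a simplified version of the retrieval flow they configure:

1. Split documents into chunks.
2. Pick the `query_topk` chunks most similar to the question.
3. Fill `{context}` and `{question}` in the template.

This is an illustration under stated assumptions, not the code in `main.py`:

- The splitter counts characters; the project may use a different splitter.
- Similarity is plain cosine over in-memory vectors, whereas the demo stores embeddings in AnalyticDB for PostgreSQL.
- The helper names `chunk_text`, `cosine`, `top_k`, and `build_prompt` are hypothetical.

```python
import math


def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Split text into windows of at most chunk_size characters.

    Consecutive windows share chunk_overlap characters (0 in the config above).
    Hypothetical character-based splitter, for illustration only.
    """
    if chunk_size <= 0 or not 0 <= chunk_overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= chunk_overlap < chunk_size")
    step = chunk_size - chunk_overlap
    chunks, start = [], 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
        start += step
    return chunks


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: list[float], chunk_vecs: list[list[float]], k: int) -> list[int]:
    """Indices of the k chunk vectors most similar to query_vec, best first."""
    order = sorted(range(len(chunk_vecs)),
                   key=lambda i: cosine(query_vec, chunk_vecs[i]),
                   reverse=True)
    return order[:k]


def build_prompt(template: str, chunks: list[str], question: str) -> str:
    """Fill the {context} and {question} placeholders of prompt_template."""
    return template.format(context="\n".join(chunks), question=question)
```

With `chunk_size: 200` and `chunk_overlap: 0`, this sketch would split a 450-character document into chunks of 200, 200, and 50 characters. `query_topk: 4` would then place the four best-matching chunks into `{context}`. Using `str.format` works here because the template contains no braces other than the two placeholders; literal `{` or `}` would need to be doubled.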
