Let's be real: the old way of doing data is broken. You've got your batch jobs over here, your streaming pipelines over there, and your AI workloads somewhere else entirely. It's a mess of silos, sky-high costs, and constant headaches just to keep things running.

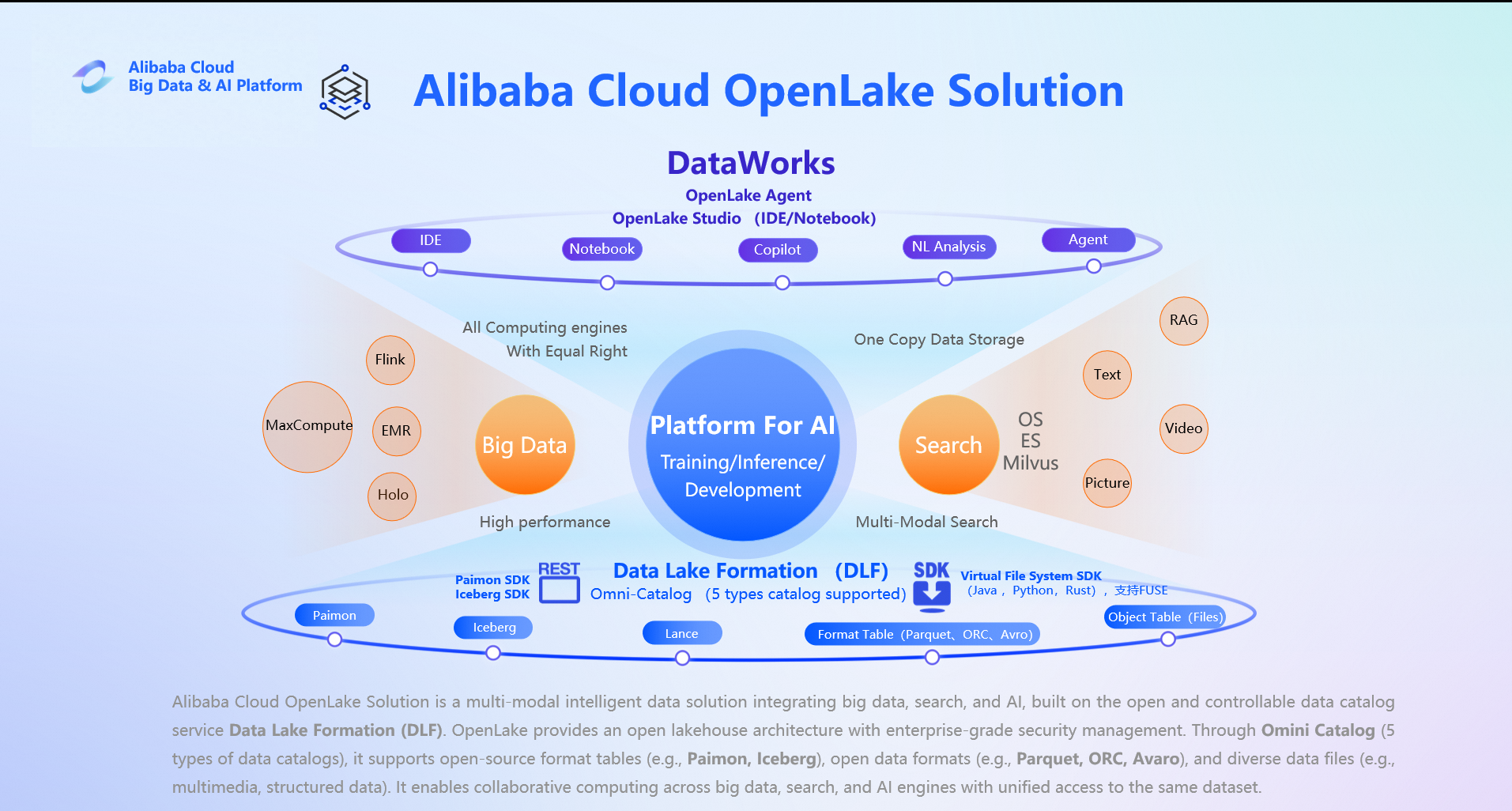

That's where OpenLake comes in. Think of it as Alibaba Cloud's answer to this chaos—a next-gen, open data and AI architecture that finally brings everything together into one powerful, cost-effective platform.

Forget the old "one-size-fits-all" engine trap. OpenLake is built on a smarter idea: use the right tool for the job. Its core superpower is letting different engines—like Spark, Flink, MaxCompute, and Hologres—work together seamlessly on the same data, without locking you into a single vendor.

It all starts with Data Lake Formation (DLF) and its Omni Catalog, your single source of truth for all your data.

This unified layer means no more copying data between systems. Everyone—from your batch ETL jobs to your real-time dashboards to your LLM-powered apps—can access the same, fresh data. And yes, you can even call an LLM directly from your SQL!
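To make the "no more copying" idea concrete, here is a minimal Python sketch. It is a toy model and not the DLF API: `ToyCatalog`, `TableEntry`, `Engine`, and the `oss://example-bucket/...` path are hypothetical names invented for illustration. All it shows is that when every engine resolves a table name through one shared catalog, they all read from the same storage location.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class TableEntry:
    """Metadata for one table: its name and where the data lives."""
    name: str
    location: str  # hypothetical object-storage path


@dataclass
class ToyCatalog:
    """Stand-in for a unified metadata catalog (NOT the real DLF API)."""
    tables: dict = field(default_factory=dict)

    def register(self, entry: TableEntry) -> None:
        self.tables[entry.name] = entry

    def resolve(self, name: str) -> TableEntry:
        return self.tables[name]


@dataclass
class Engine:
    """Stand-in for a compute engine that looks tables up in the catalog."""
    label: str
    catalog: ToyCatalog

    def plan_read(self, table_name: str) -> str:
        entry = self.catalog.resolve(table_name)
        return f"{self.label} reads {entry.location}"


catalog = ToyCatalog()
catalog.register(TableEntry("orders", "oss://example-bucket/warehouse/orders"))

# Three illustrative workloads share one catalog instead of keeping private copies.
engines = [Engine(label, catalog) for label in ("batch", "streaming", "serving")]
plans = [engine.plan_read("orders") for engine in engines]
locations = {engine.catalog.resolve("orders").location for engine in engines}

for plan in plans:
    print(plan)
```

Every plan points at the same path, so `locations` ends up holding a single entry. In a real deployment the catalog would also carry schemas, permissions, and format details, which this sketch leaves out.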

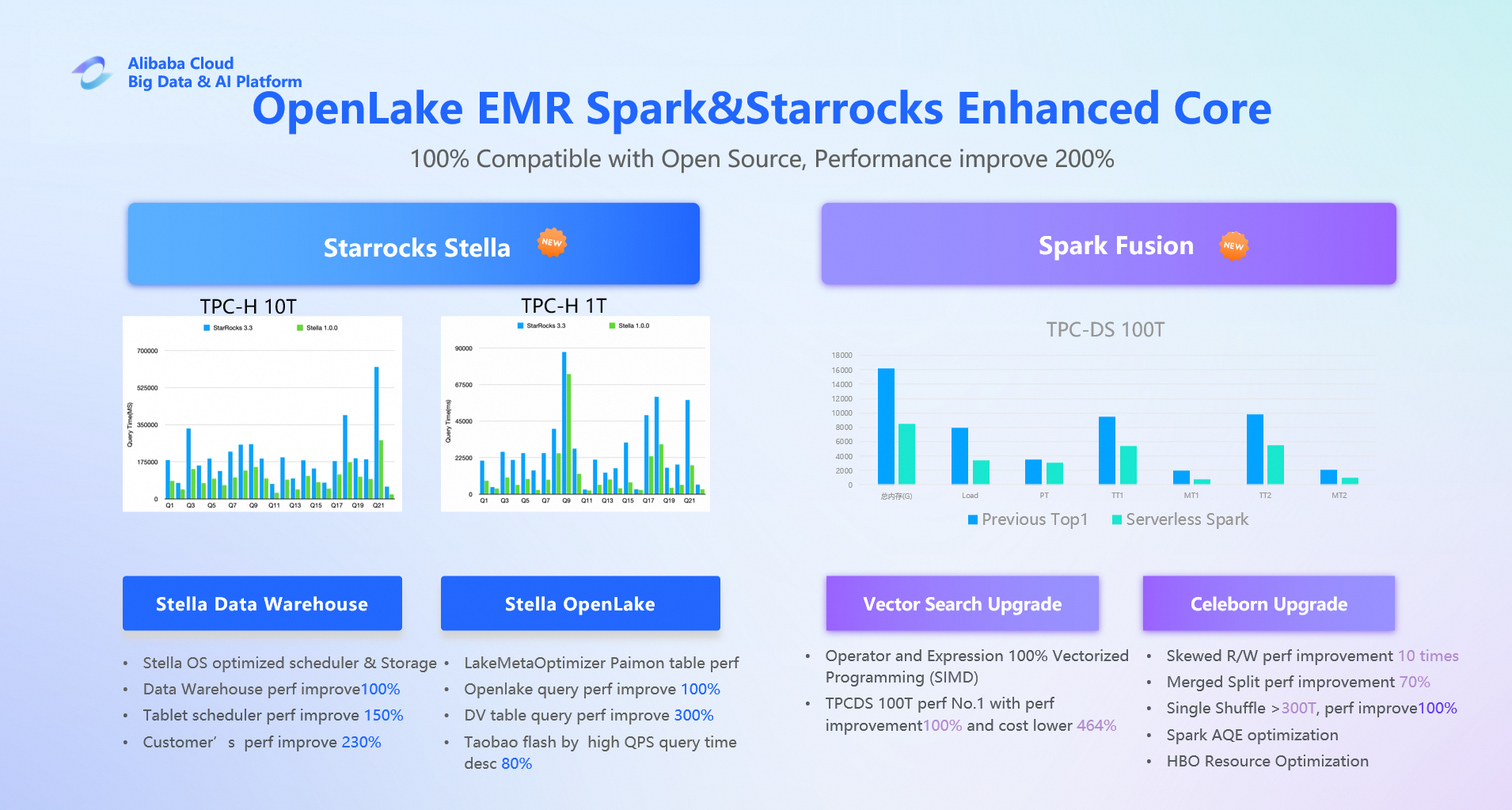

Oh, and about that performance? OpenLake-powered solutions (with EMR Serverless Spark) have hit #1 on the TPC-DS 100TB benchmark at half the cost of the runner-up. So, you get top-tier speed without the top-tier price tag.

Price-Performance That Wins: With OpenLake, you get industry-leading performance at a fraction of the cost of legacy platforms. It's the ultimate "do more with less" setup for your budget.

Truly Integrated Platform: OpenLake isn't just a bunch of engines thrown together. DataWorks and DLF act as the central brain, handling orchestration, governance, and metadata so you get a smooth, single-platform experience.

AI Agents Do the Heavy Lifting: Tired of writing boilerplate code? OpenLake's DataWorks Agents let you describe what you want in plain English, and they'll build, deploy, and manage the entire pipeline for you. The future is "AI-executed tasks," not "human-written SQL."

Built for AI from Day One: OpenLake natively handles multimodal data—structured tables, vectors, images, audio, you name it—all in one place. It's not just a vector store; it's your complete AI-native data fabric.

Building your modern data stack with OpenLake is like assembling Lego blocks. Here's your parts list:

| Layer | Function | OpenLake Solution | Key Advantage |
|---|---|---|---|
| Compute | Batch Processing Engine | EMR Serverless Spark / MaxCompute | Fully managed, pay-as-you-go Spark with industry-leading TPC-DS performance, or MaxCompute for massive-scale enterprise data warehousing. |
| Metadata & Orchestration | Unified Catalog & Workflow Management | Data Lake Formation (DLF) + DataWorks | DLF provides the unified metadata catalog, while DataWorks offers end-to-end orchestration, governance, and a new natural language interface for building pipelines. |
| Real-time Serving | Real-time Analytics Engine | Hologres / StarRocks | Hologres (v4.0) now includes vector search and AI functions. StarRocks delivers top-tier OLAP performance. Both connect directly to DLF. |
| Streaming Compute | Stream Processing Engine | Flink (Real-time Compute) + Fluss | Flink processes streams, while Fluss acts as a real-time storage layer (replacing Kafka), enabling direct querying and seamless dumping into DLF. |
| Vector Store | Vector Database | Hologres / Milvus (on Alibaba Cloud) | Hologres offers integrated real-time analytics and vector search. Milvus provides a dedicated, enterprise-grade vector database for large-scale GenAI applications. |

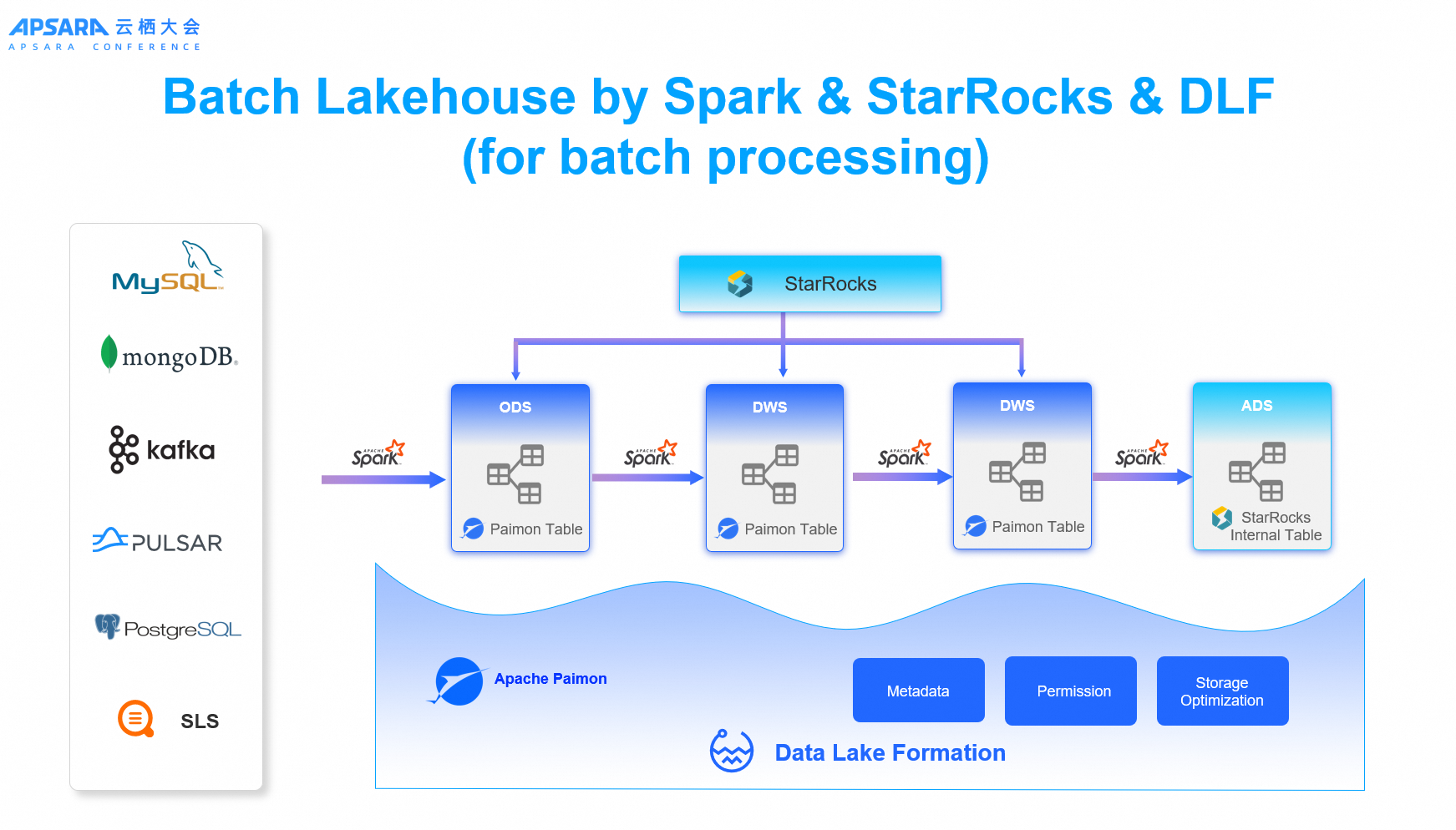

This lakehouse architecture targets cost-sensitive enterprises primarily using hourly or T+1 batch processing while demanding high-performance interactive queries. It delivers a highly cost-effective, fully managed, cloud-native lakehouse platform with zero operational overhead.

Key Components:

Alternatives Replaced: AWS Redshift + Glue, Azure Synapse Serverless, Databricks (batch Lakehouse scenarios), and legacy Hive + Presto/Trino stacks.

Value Proposition: Focuses on T+1 or hourly data refresh cycles—not millisecond real-time—but excels in ultra-fast queries, high concurrency, and strong SQL compatibility. It fills the critical gap between slow traditional Hive warehouses and expensive, operationally complex pure-streaming architectures.

Designed for enterprises requiring both data freshness (seconds to minutes) and high query performance, this architecture supports unified stream-batch processing and lakehouse convergence for real-time analytics.

Key Components:

Alternatives Replaced: AWS Kinesis + Redshift / MSK + Databricks, Azure Stream Analytics + Synapse, Google Cloud Dataflow + BigQuery, and legacy Lambda architectures (e.g., Kafka + Druid/ClickHouse + Hive).

Value Proposition: Delivers real-time and near-real-time data visibility (seconds to minutes) with sub-second query response. Emphasizes native streaming, high throughput, strong SQL support, and deep cloud-native integration, bridging the gap between high-latency batch lakehouses and inefficient generic messaging-based architectures. Ideal for internet, finance, e-commerce, and IoT customers building real-time data pipelines on Alibaba Cloud.
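The split between the two architectures described so far comes down to how fresh the data has to be. The sketch below turns that into a rough rule of thumb in Python. The labels `"batch lakehouse"` and `"real-time lakehouse"` and the 10-minute cut-off are assumptions made for illustration; the text only distinguishes "seconds to minutes" from "hourly or T+1" and does not name an exact boundary.

```python
from datetime import timedelta

# Assumed boundary between "seconds to minutes" and "hourly or T+1".
# The article does not give a precise number; adjust to your own requirements.
REAL_TIME_LIMIT = timedelta(minutes=10)


def suggest_architecture(required_freshness: timedelta) -> str:
    """Map a data-freshness requirement to one of the two architecture styles."""
    if required_freshness <= timedelta(0):
        raise ValueError("required_freshness must be positive")
    if required_freshness <= REAL_TIME_LIMIT:
        return "real-time lakehouse"  # seconds-to-minutes visibility
    return "batch lakehouse"          # hourly or T+1 refresh cycles


for need in (timedelta(seconds=30), timedelta(hours=1), timedelta(days=1)):
    print(need, "->", suggest_architecture(need))
```

Freshness is only one input. Query concurrency, compliance needs, and budget would all feed into a real decision, so treat this as a starting point for the conversation.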

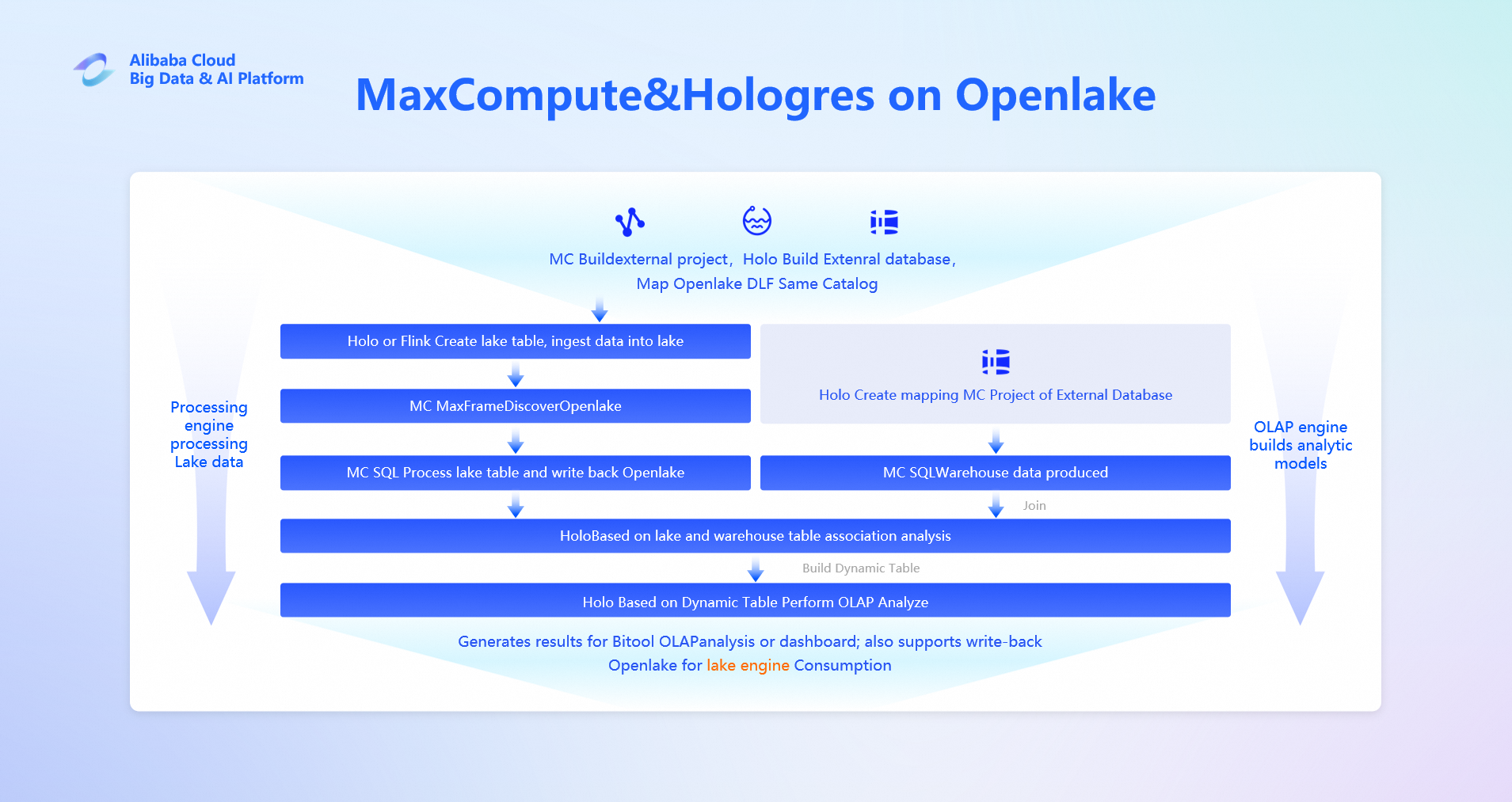

Built for enterprises demanding fully managed, highly reliable, large-scale data processing and real-time querying capabilities, this architecture offers a secure, compliant, and operation-free cloud-native lakehouse platform.

Key Components:

Alternatives Replaced: Snowflake, Databricks Lakehouse, AWS Redshift + S3 + Glue, Microsoft OneLake, and Azure Synapse Analytics.

Value Proposition: Optimized for hybrid T+0 to T+1 workloads—balancing massive batch processing with real-time interactive analytics. Highlights enterprise-grade security, elastic scalability, and deep integration with Alibaba Cloud's ecosystem. Fills the void between complex open-source lakehouse deployments and rigid, costly SaaS data warehouses. Best suited for finance, government, and large retail sectors with stringent requirements on stability, compliance, and analytical efficiency.

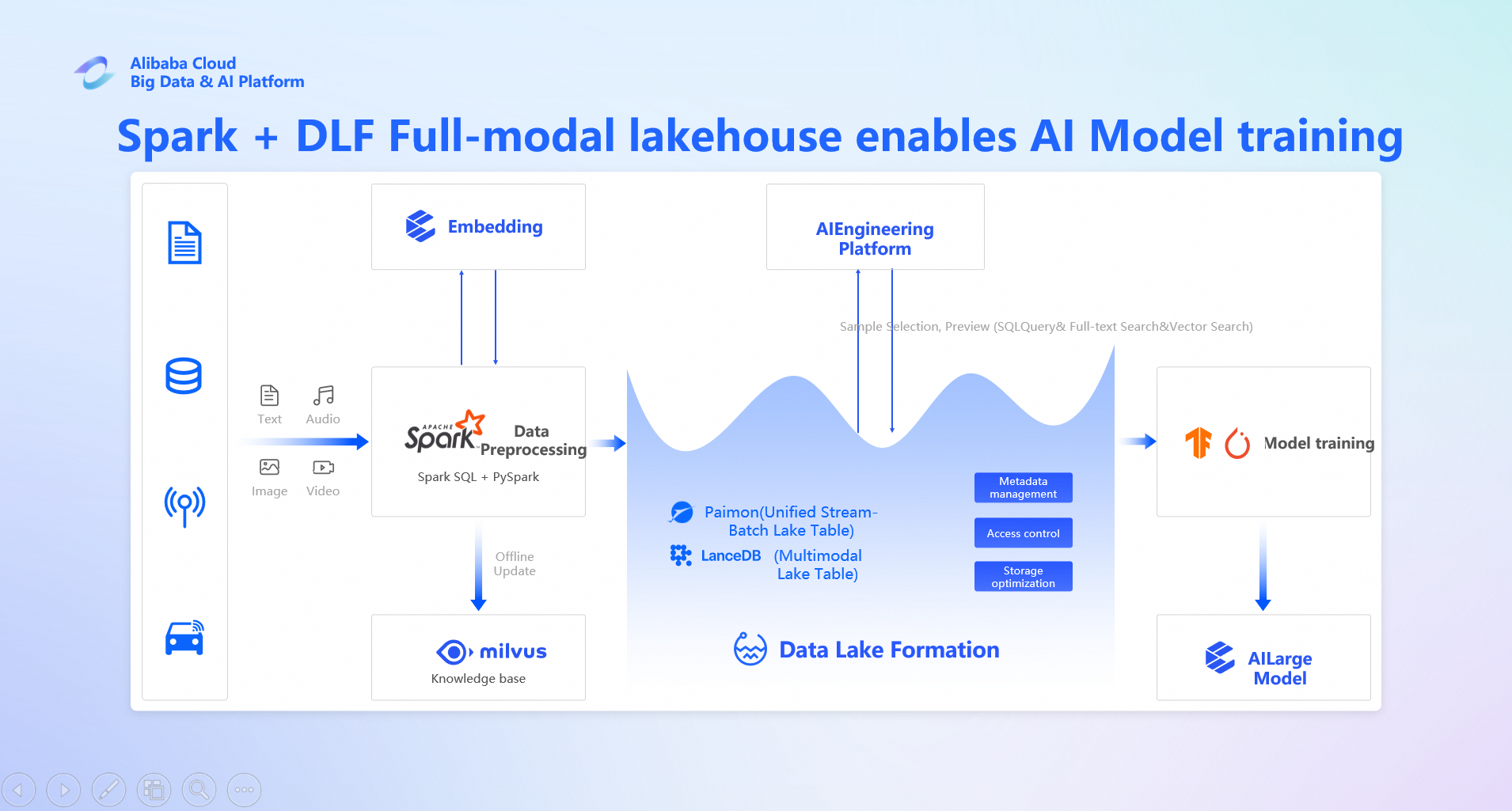

This next-generation, AI-native data platform is designed for enterprises that require unified management of multimodal data (text, images, audio, video), efficient vectorized search, and streamlined provisioning of high-quality training data for AI. It seamlessly integrates structured and unstructured data within a stream-batch unified architecture, bridging the gap between traditional data lakes and modern AI workflows.

Key Components:

Alternatives Replaced:

Ideal For: Internet companies, content recommendation systems, intelligent customer service, autonomous driving, and industrial quality inspection—especially those already running AI training pipelines on Alibaba Cloud.

OpenLake isn't just theory—it's driving real results for customers across the globe, from gaming and fintech to automotive and e-commerce, with strong adoption in Southeast Asia.

Success Story 1: A leading gaming company migrated its data platform to OpenLake, achieving a 38% reduction in total costs, 40-45% lower query latency, and a future-proof architecture for petabyte-scale data.

Success Story 2: An ed-tech firm leveraged OpenLake to unify its data platform, resulting in a 50% cut in operational costs, a 300% boost in query performance, and data freshness improved from T+1 to within 10 minutes.

Success Story 3: A smart EV manufacturer adopted the Multimodal Vector Lakehouse to unify vehicle sensor data, which shortened development cycles, reduced vector query costs by 30%, and cut storage costs by over 40%.
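Percentages like these are easy to misread, so here is a small Python sketch that puts the Success Story 2 figures on a common scale. It rests on two assumptions that the stories do not spell out: a "300% boost" is read as an increase of 300% over the baseline (so 4x, not 3x), and "T+1" is read as a data lag of up to one full day.

```python
def multiplier_from_percent_boost(percent: float) -> float:
    """An increase of N% over a baseline means (1 + N/100) times the baseline."""
    return 1 + percent / 100


def freshness_gain(old_lag_minutes: float, new_lag_minutes: float) -> float:
    """How many times shorter the data lag became."""
    return old_lag_minutes / new_lag_minutes


T_PLUS_1_MINUTES = 24 * 60  # assumption: T+1 means up to one day of lag

query_multiplier = multiplier_from_percent_boost(300)  # 300% boost
lag_reduction = freshness_gain(T_PLUS_1_MINUTES, 10)   # T+1 down to 10 minutes
remaining_cost_share = 1 - 50 / 100                    # 50% cut in operational costs

print(f"Query performance: {query_multiplier:.0f}x the baseline")
print(f"Data lag: {lag_reduction:.0f}x shorter")
print(f"Operational cost: {remaining_cost_share:.0%} of the original")
```

Under those assumptions, the story describes queries running at 4x the baseline, data arriving 144x sooner, and operations costing half as much.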

OpenLake isn't just another buzzword. It's a practical, proven way to unify your data and AI workloads, save serious money, and actually enjoy your data infrastructure.

So, what's next?

Alibaba Cloud E-MapReduce: Serverless Open-Source Big Data Platform

