By Yizheng

This article explains how Table Store, a distributed NoSQL cloud service, implements high data reliability and high service availability. When it comes to distributed NoSQL databases, many names come to mind, such as HBase, Cassandra, and AWS DynamoDB. These databases were designed from the start as distributed systems that support very large data volumes and high concurrency. MongoDB and Redis may also come to mind because they provide clustering functionality as well. These two databases, however, require manual intervention to configure sharding, replica sets, and master-slave replication.

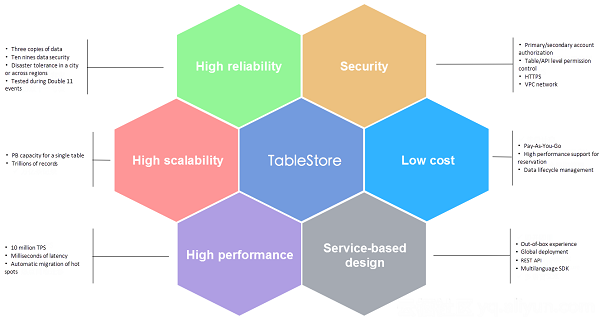

Table Store is a NoSQL service developed by Alibaba. The Bigtable paper from Google was used as the reference for its architecture design. Table Store has some similarities to HBase, Cassandra, and DynamoDB. The following figure shows some advantages of Table Store.

This article focuses on the technologies that Table Store uses to implement high reliability and high availability. Table Store is designed for eleven nines of data reliability and four nines of service availability. As a cloud service, Table Store can provide an SLA with ten nines of reliability and three nines of availability.

Fault tolerance, high reliability, and high availability are interrelated concepts. Fault tolerance is the ability to quickly detect software or hardware failures and quickly recover from them. It is generally implemented through redundancy, which ensures that a failure in one system does not affect other systems. High reliability means that data is not lost or damaged, and high availability refers to system uptime. Generally, high reliability and high availability are expressed as percentages containing several nines.
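To make the "nines" notation concrete, the following minimal sketch converts a number of nines into a fraction and into a yearly downtime budget. The function names are illustrative and are not part of any Table Store API.

```python
# Convert "N nines" into a fraction and a yearly downtime budget.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def nines_to_fraction(nines: int) -> float:
    """Return the success fraction for a number of nines, e.g. 3 -> 0.999."""
    return 1 - 10 ** (-nines)


def downtime_seconds_per_year(nines: int) -> float:
    """Maximum yearly downtime allowed by an availability of `nines` nines."""
    return SECONDS_PER_YEAR * 10 ** (-nines)


for n in (3, 4):
    minutes = downtime_seconds_per_year(n) / 60
    print(f"{n} nines of availability: about {minutes:.1f} minutes of downtime per year")
```

Under this arithmetic, three nines allow roughly 8.8 hours of downtime per year, and four nines allow roughly 53 minutes.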

Redundancy can help implement fault tolerance. In the data layer, there are usually multiple copies of data, so data integrity is not affected if one copy is damaged. In the service layer, there are multiple service nodes, and services can be migrated to another node if one node goes down. High availability of services depends on high reliability of data, because a service cannot be available if the integrity of its data is not guaranteed first.

A fault-tolerant system is not necessarily highly available. High availability is an indicator of how long a system is expected to run. If a system does not support hot upgrades or dynamic scaling, meaning that maintenance requires downtime, the system is not highly available.

To achieve higher availability, two or more systems can be used to form an active-standby system to implement disaster tolerance. A common example is two data centers in the same city, which, combined with automatic switching or fast manual switching, can provide higher system availability. In addition, applications can be designed with an elastic architecture that allows automatic degradation when a service exception occurs, which improves business availability.

In the traditional MySQL primary-secondary synchronization scheme, when the primary database fails, the HA module migrates services to the secondary database to implement high availability. This method has one problem: to guarantee strong data consistency, data must be successfully written to both the primary and the secondary database before the write returns (namely, maximum protection mode). If the secondary database is unavailable, writing to the primary database also fails. Availability and consistency, therefore, cannot be guaranteed at the same time.

If every machine in a distributed system has the same likelihood of failure, the likelihood that at least one machine in a cluster fails increases with cluster size. High availability and high reliability are therefore basic design objectives of distributed systems. In distributed systems, high data reliability is often implemented using replication and distributed consensus algorithms such as Paxos. Implementing high availability usually includes implementing fast failover mechanisms, hot upgrades, and dynamic scaling.
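The relationship between cluster size and failure likelihood can be illustrated with a short calculation. This is a minimal sketch that assumes machine failures are independent and equally likely; the per-machine probability of 0.001 is an arbitrary illustrative value.

```python
def prob_any_failure(p_single: float, nodes: int) -> float:
    """Probability that at least one of `nodes` independent machines fails."""
    return 1 - (1 - p_single) ** nodes


# Illustrative per-machine failure probability over some fixed period.
for n in (1, 10, 100, 1000):
    print(f"{n:>5} nodes: {prob_any_failure(0.001, n):.4f}")
```

With these assumed numbers, a failure somewhere in a 1,000-node cluster is more likely than not, which is why failures must be treated as routine events rather than exceptions.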

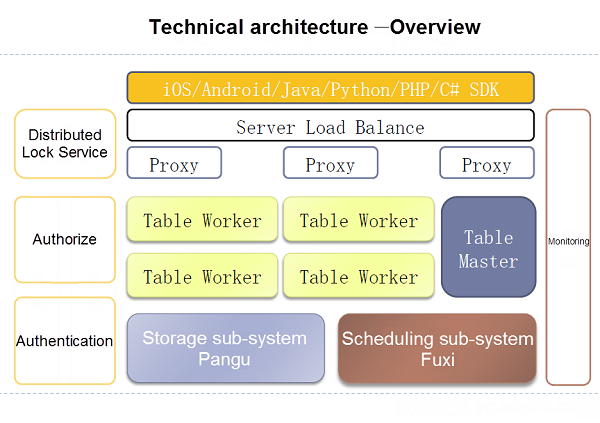

To learn how Table Store implements high reliability and high availability, let us first take a look at the following architecture diagram:

The Table Workers in the diagram represent the back-end service nodes. Under the Table Workers is a system called Pangu, a distributed storage system developed by Alibaba. Pangu serves as shared storage: every Table Worker node has access to all files in the Pangu system. This architecture also separates storage from computing.

Pangu focuses on the problems of distributed storage. Its basic role is to ensure high data reliability and strong consistency. We configure three copies of data when using Pangu, so losing one copy affects neither reads and writes nor data integrity. When writing data, Pangu ensures that at least two copies are successfully written before returning, and it repairs the third copy in the background if that write fails. When reading data, Pangu generally needs to read only one copy.
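The write rule described above (three copies, return after at least two succeed, repair the rest in the background) can be sketched as follows. This is a toy in-memory model, not Pangu's actual implementation; the `Replica` class, `quorum_write`, and `repair` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """Hypothetical in-memory replica; healthy=False simulates a failed write."""
    name: str
    healthy: bool = True
    data: dict = field(default_factory=dict)

    def write(self, key: str, value: str) -> bool:
        if not self.healthy:
            return False
        self.data[key] = value
        return True


def quorum_write(replicas, key, value, min_acks=2):
    """Write to every replica; succeed if at least `min_acks` copies are written.

    Returns (ok, failed), where `failed` lists the replicas to repair later.
    """
    failed = [r for r in replicas if not r.write(key, value)]
    acks = len(replicas) - len(failed)
    return acks >= min_acks, failed


def repair(source, targets, key):
    """Background repair: copy the value from a good replica to the failed ones."""
    for replica in targets:
        replica.healthy = True  # assumption: the replica has recovered by now
        replica.data[key] = source.data[key]


replicas = [Replica("a"), Replica("b"), Replica("c", healthy=False)]
ok, pending = quorum_write(replicas, "row1", "v1")
print(ok, [r.name for r in pending])
repair(replicas[0], pending, "row1")
print(replicas[2].data)
```

The sketch leaves out what a real storage system must also handle, such as rolling back or retrying a write that reaches fewer than two copies.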

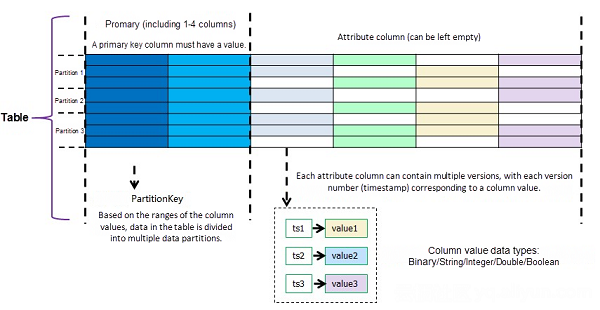

Let us first take a look at the Table Store data model, as shown in the following diagram:

In Table Store, a table consists of a series of rows, and each row contains a primary key and attribute columns. A primary key can have multiple primary key columns. For example, the following primary key has three primary key columns, all of which are strings:

PrimaryKey (pk1: string, pk2: string, pk3: string)

The first primary key column is the partition key. In this example, the partition key is pk1. All the data in a table is sorted by primary key (three primary key columns in this example) and partitioned based on partition key range. As data or access volume increases in a table, a partition is split to dynamically scale. A table containing a large volume of data may have hundreds or even thousands of partitions.
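Partitioning by partition key range can be sketched with a sorted list of split points and a binary search. The split points below are hypothetical, and this is not how Table Store stores its routing metadata; it only illustrates the lookup.

```python
import bisect

# Hypothetical split points on the partition key (pk1). Partition i holds keys in
# [split_points[i - 1], split_points[i]); the first and last partitions are open-ended.
split_points = ["g", "n", "t"]


def partition_for(pk1: str) -> int:
    """Return the index of the partition whose key range contains pk1."""
    return bisect.bisect_right(split_points, pk1)


for key in ("alice", "g", "mike", "zoe"):
    print(key, "-> partition", partition_for(key))
```

Splitting a partition in this model amounts to inserting one more split point into the sorted list, which is why the number of partitions can grow with the table.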

Why is it designed like this? Because all data is sorted by primary key, the entire table is like a large SortedMap, and range queries can be performed on any range of primary keys. In addition, because the first primary key column is the partition key, all rows that share the same value in the first primary key column fall into one partition. This makes it easier to develop features inside partitions, such as auto-incrementing columns, transactions in a partition, and LocalIndex.
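The SortedMap analogy can be illustrated with a small in-memory model. This sketch assumes rows are keyed by `(pk1, pk2, pk3)` tuples and uses Python's tuple ordering to stand in for primary key ordering; the sample rows and `get_range` are hypothetical and do not reflect the Table Store SDK.

```python
import bisect

# Rows keyed by (pk1, pk2, pk3). Python compares tuples column by column,
# which mirrors sorting by the full primary key.
rows = {
    ("user1", "2019", "a"): "row A",
    ("user1", "2020", "b"): "row B",
    ("user2", "2018", "c"): "row C",
    ("user0", "2021", "d"): "row D",
}
sorted_keys = sorted(rows)


def get_range(start, end):
    """Return rows whose primary key is in [start, end), in key order."""
    lo = bisect.bisect_left(sorted_keys, start)
    hi = bisect.bisect_left(sorted_keys, end)
    return [(key, rows[key]) for key in sorted_keys[lo:hi]]


# A key prefix sorts before every full key that starts with it, so this
# selects every row whose partition key is "user1".
for key, value in get_range(("user1",), ("user2",)):
    print(key, value)
```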

Why are partition keys designed as sorted keys instead of hash keys? This is because we use shared storage. With a shared storage system, data does not have to be moved when a partition is split repeatedly, because Pangu has already distributed the data. If shared storage had not been adopted, we would have to deal with data distribution ourselves, for example, by using consistent hashing. In that case, partition keys are usually hash keys, meaning that we could not support range queries on any range of primary keys across the entire table.
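For comparison, here is a minimal sketch of consistent hashing, the hash-based distribution scheme mentioned above. It is a generic textbook ring with virtual nodes, not something Table Store uses; the `HashRing` class and the worker names are hypothetical.

```python
import bisect
import hashlib


def _hash(value: str) -> int:
    """Stable 64-bit hash derived from MD5 (used only for placement, not security)."""
    return int.from_bytes(hashlib.md5(value.encode()).digest()[:8], "big")


class HashRing:
    """Toy consistent-hash ring with virtual nodes."""

    def __init__(self, nodes, vnodes=64):
        self._ring = []  # sorted list of (hash, node) points
        for node in nodes:
            self.add(node, vnodes)

    def add(self, node: str, vnodes: int = 64) -> None:
        for i in range(vnodes):
            bisect.insort(self._ring, (_hash(f"{node}#{i}"), node))

    def node_for(self, key: str) -> str:
        # Linear rebuild of the hash list keeps the sketch short; a real ring would cache it.
        hashes = [h for h, _ in self._ring]
        idx = bisect.bisect_right(hashes, _hash(key)) % len(self._ring)
        return self._ring[idx][1]


ring = HashRing(["w1", "w2", "w3"])
keys = [f"user{i}" for i in range(200)]
before = {k: ring.node_for(k) for k in keys}
ring.add("w4")
after = {k: ring.node_for(k) for k in keys}
moved = [k for k in keys if before[k] != after[k]]
print(f"{len(moved)} of {len(keys)} keys moved, all to the new node")
```

Adding a node moves only the keys that the new node takes over, which limits data movement. The cost is that neighboring keys such as `user1` and `user2` land on unrelated nodes, so a range scan over the whole table cannot be served from a contiguous key range.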

Let us get to the point: How does Table Store implement high service availability? Consider a table containing 100 partitions that are loaded onto different workers. When a worker fails, the partitions on that worker are automatically migrated to other available workers. On the one hand, a failure in one worker affects only some partitions, not all of them. On the other hand, because Pangu is a shared storage system, any worker can load any partition, and computing resources are separated from storage resources. Therefore, failover is very fast and the architecture is simpler.
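The failover behavior can be sketched as a reassignment of partition ownership. Because storage is shared, the sketch moves only metadata and no data. The `fail_over` function and the least-loaded placement policy are assumptions made for illustration; the article does not describe the scheduling policy that Table Store actually uses.

```python
def fail_over(assignment, failed_worker, live_workers):
    """Reassign the partitions of `failed_worker` to the least-loaded live workers.

    `assignment` maps partition id -> worker name. Returns a new mapping.
    """
    if not live_workers:
        raise ValueError("no live workers available")
    new_assignment = dict(assignment)
    load = {w: 0 for w in live_workers}
    for worker in assignment.values():
        if worker in load:
            load[worker] += 1
    for partition, worker in assignment.items():
        if worker == failed_worker:
            target = min(live_workers, key=lambda w: load[w])
            new_assignment[partition] = target
            load[target] += 1
    return new_assignment


workers = ["w1", "w2", "w3", "w4"]
assignment = {p: workers[p % 4] for p in range(100)}  # 100 partitions, 25 per worker
after_failover = fail_over(assignment, "w1", ["w2", "w3", "w4"])
print(sorted(after_failover.values()).count("w2"), "partitions now on w2")
```

Only the partitions that lived on the failed worker change owner; the other 75 partitions in this example are untouched.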

The preceding paragraph focuses on failover. We can also implement flexible load balancing with this architecture. When a partition has excessive write and read traffic, it can be quickly split into two or more partitions, which are loaded onto different workers. When a partition is split, data in the parent partition does not need to be migrated to the child partitions. Instead, the links to files in the parent partition are generated in the child partitions first, and then data in the parent partition is migrated through compaction in the background to eliminate the links. After a partition is split, its child partitions can be split even further. All partitions are evenly loaded onto different workers in the cluster to fully utilize the CPU of the cluster and network resources.
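The split-by-link idea can be sketched with a toy model in which child partitions initially hold only references to the parent's files, and a later compaction rewrites each child's rows into its own file. The `Partition` class, the `storage` dictionary, and the file names are hypothetical and greatly simplify what a real storage engine does.

```python
from dataclasses import dataclass, field

# Toy shared storage: file name -> rows (key -> value).
storage = {"file-1": {"apple": 1, "kiwi": 2, "pear": 3}}


@dataclass
class Partition:
    start: str  # inclusive lower bound of the key range
    end: str    # exclusive upper bound of the key range
    files: list = field(default_factory=list)  # files this partition owns
    links: list = field(default_factory=list)  # links to files of the parent

    def rows(self) -> dict:
        """All rows visible to this partition, filtered to its key range."""
        merged = {}
        for name in self.links + self.files:
            for key, value in storage[name].items():
                if self.start <= key < self.end:
                    merged[key] = value
        return merged


def split(parent: Partition, split_key: str):
    """Create two children that only link to the parent's files (no data copy)."""
    shared = parent.links + parent.files
    left = Partition(parent.start, split_key, links=list(shared))
    right = Partition(split_key, parent.end, links=list(shared))
    return left, right


def compact(child: Partition, new_file_name: str) -> None:
    """Background compaction: rewrite the child's rows into its own file, drop links."""
    storage[new_file_name] = child.rows()
    child.files, child.links = [new_file_name], []


parent = Partition("a", "z", files=["file-1"])
left, right = split(parent, "m")
print(left.rows(), right.rows())
compact(left, "file-2")
print(left.links, storage["file-2"])
```

The split itself touches no row data, which is why it can complete quickly; the copying cost is deferred to compaction.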

As a cloud service, Table Store handles daily maintenance, software upgrades, and hardware upgrades on the service side, all of which are completely transparent to users. These maintenance operations must themselves be designed for high availability. Maintaining distributed systems is complex, and without a cloud service, maintenance is troublesome and highly risky. Servers may often become unavailable when they receive updates or handle various failures. In addition, hardware must be updated. Cloud services can provide users with better performance and continuously update hardware to build better networks. We believe that Table Store, as a cloud database, can improve performance, provide higher availability, and offer a better user experience.

Table Store implements high data reliability through the Pangu shared storage system and achieves high availability through fast failover and flexible load balancing. Our discussion of high reliability and high availability implementation shows that shared storage is enormously beneficial to distributed NoSQL databases and that cloud services provide substantial convenience to users. We summarize these advantages here:

Advantages of Pangu shared storage:

Advantages of the Table Store cloud service:
