By Binbin Yin and Xu Zhou

In recent years, the rapid adoption of new energy vehicles (NEVs) has made technologies such as smart cockpits, Internet of Vehicles (IoV), and advanced driver-assistance systems (ADAS) the key battlegrounds for automakers. These features rely on an edge-cloud collaborative architecture where cloud infrastructure is critical. Whether it's a user streaming music, remotely controlling a vehicle, or an intelligent IoV system uploading sensor data, stable and efficient cloud platforms are the backbone of the operation.

As vehicle connectivity rates rise and AI model capabilities strengthen, the data throughput and computing load of automotive IT systems are growing exponentially. A single test vehicle equipped with autonomous driving capabilities can generate several terabytes of raw data per day. A single over-the-air (OTA) update for millions of users can trigger massive traffic spikes in a short window. Given these characteristics, the stability of cloud infrastructure has become a core factor directly impacting user experience and even driving safety.

Under such business pressure, infrastructure supporting automotive scenarios frequently runs into four typical problems that traditional O&M methods struggle to handle:

During OTA rollouts, morning and evening rush hours, or holiday bursts of remote-control commands, server memory and CPU can become overloaded almost instantly. The result is a "system hang": processes cannot be scheduled and commands stop responding. Even if the system has not fully crashed, the service is effectively unavailable.

Memory leaks, cache bloat, and abnormal VRAM growth develop quietly and are hard to detect early. Over time, however, they exhaust system resources and eventually trigger OOM (Out-Of-Memory) events that terminate critical processes and cause service outages.
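Because slow leaks are easy to miss on point-in-time dashboards, one common way to catch them early is to fit a trend line to periodic memory samples and alert on a sustained positive slope. The sketch below only illustrates that idea and is not the OS Console's detection logic; the sample format, the helper names, and the 50 MB/hour threshold are all hypothetical.

```python
def leak_slope_mb_per_hour(samples):
    """Least-squares slope of memory usage.

    samples: list of (timestamp_seconds, used_mb) pairs (hypothetical format).
    Returns the fitted growth rate in MB per hour.
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_m = sum(m for _, m in samples) / n
    num = sum((t - mean_t) * (m - mean_m) for t, m in samples)
    den = sum((t - mean_t) ** 2 for t, _ in samples)
    if den == 0:
        raise ValueError("need samples at distinct timestamps")
    return num / den * 3600


def looks_like_leak(samples, threshold_mb_per_hour=50.0, min_samples=6):
    """Flag steady growth; too few samples are treated as inconclusive."""
    if len(samples) < min_samples:
        return False
    return leak_slope_mb_per_hour(samples) > threshold_mb_per_hour


# Usage: one sample every 10 minutes, growing by 20 MB per sample.
growing = [(i * 600, 1000 + i * 20) for i in range(12)]
print(round(leak_slope_mb_per_hour(growing)))  # 120
print(looks_like_leak(growing))  # True
```

A fitted slope is less noisy than comparing two snapshots, which matters when usage also fluctuates with load.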

Systems may run normally most of the time but suffer occasional millisecond-level latency spikes that are difficult to reproduce. These issues usually stem from lock contention, high-frequency system calls, or I/O bottlenecks—root causes that traditional monitoring metrics often fail to capture.

In GPU clusters, problems such as abnormal VRAM usage, NCCL communication failures, and task hangs are common. Without full-stack observability from the application layer down to the hardware, troubleshooting cycles are long and depend heavily on manual experience, which severely reduces model training and inference efficiency.

These problems point to a core requirement: the automotive industry needs an intelligent O&M system that spans the application, OS, and hardware layers and delivers early warning, precise root-cause localization, and automatic recovery instead of passive response.

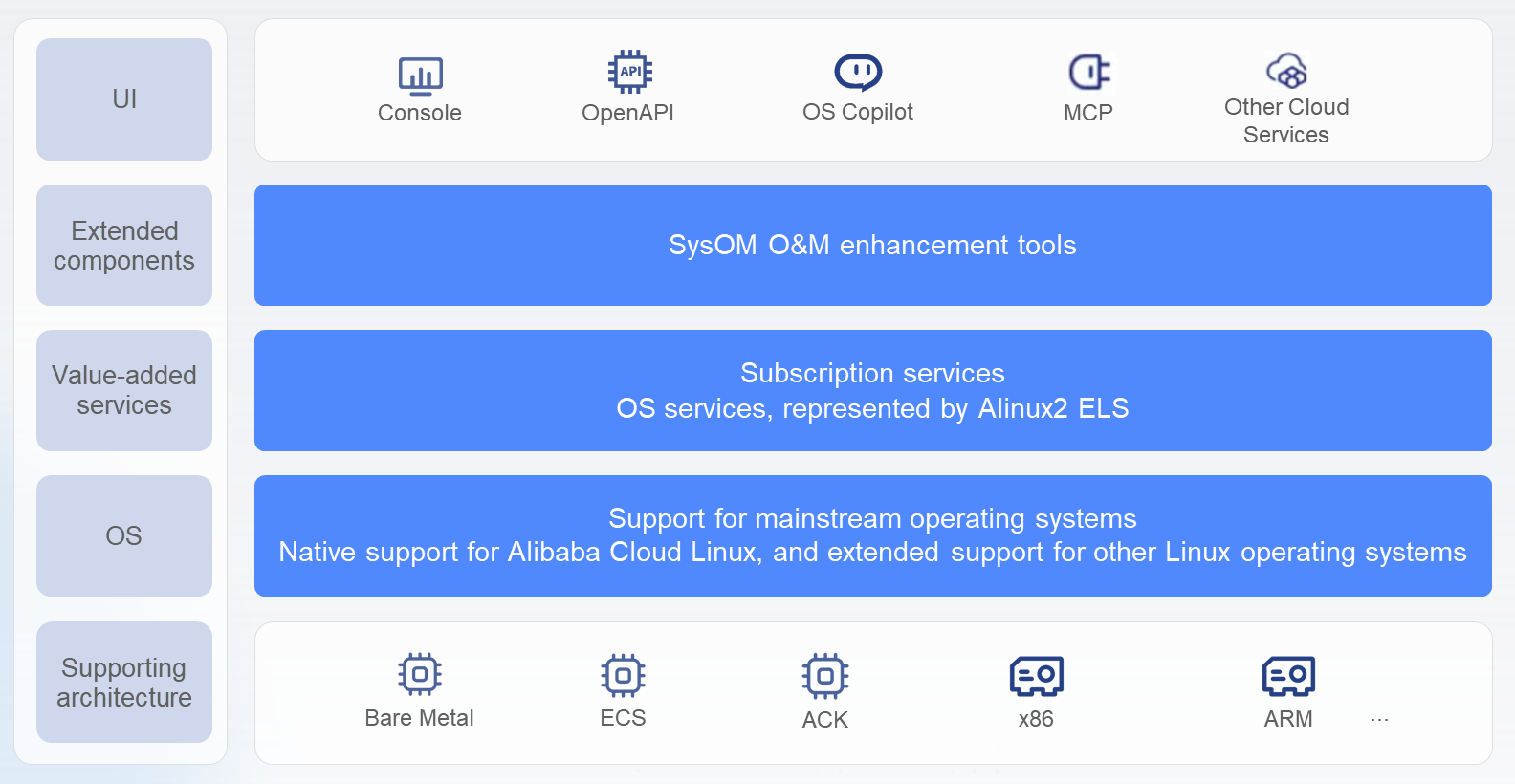

The Operating System Console (OS Console) is Alibaba Cloud's in-house OS management platform. It supports mainstream Linux distributions and provides OS lifecycle management capabilities, including O&M management, the intelligent assistant OS Copilot, and subscriptions, delivered through a GUI, OpenAPI, MCP, CLI, and other channels. The platform aims to lower the technical barrier to operating systems and to close the information gap between customer applications and the cloud platform. Its intelligent O&M capabilities spare users from manually working through complex O&M stacks and analysis chains, giving them a clearer understanding of the root causes of business anomalies and of resource consumption.

OS Console address: https://alinux.console.aliyun.com/

To meet the demanding requirements of smart cockpits and autonomous driving (high concurrency, low latency, and high stability), the OS Console bridges the full application, OS, and hardware stack. For the four pain points described above, it provides targeted diagnostic and observation capabilities covering system hangs, OOM, performance jitter, and AI observability, closing the infrastructure observability gap for automotive enterprises.

Core benefit: Resolves system hang issues caused by periodic business peaks, reducing service lag and anomalies.

Use cases: Transient high-load scenarios such as large-scale OTA rollouts, remote control command floods, and high-concurrency AI model inference.

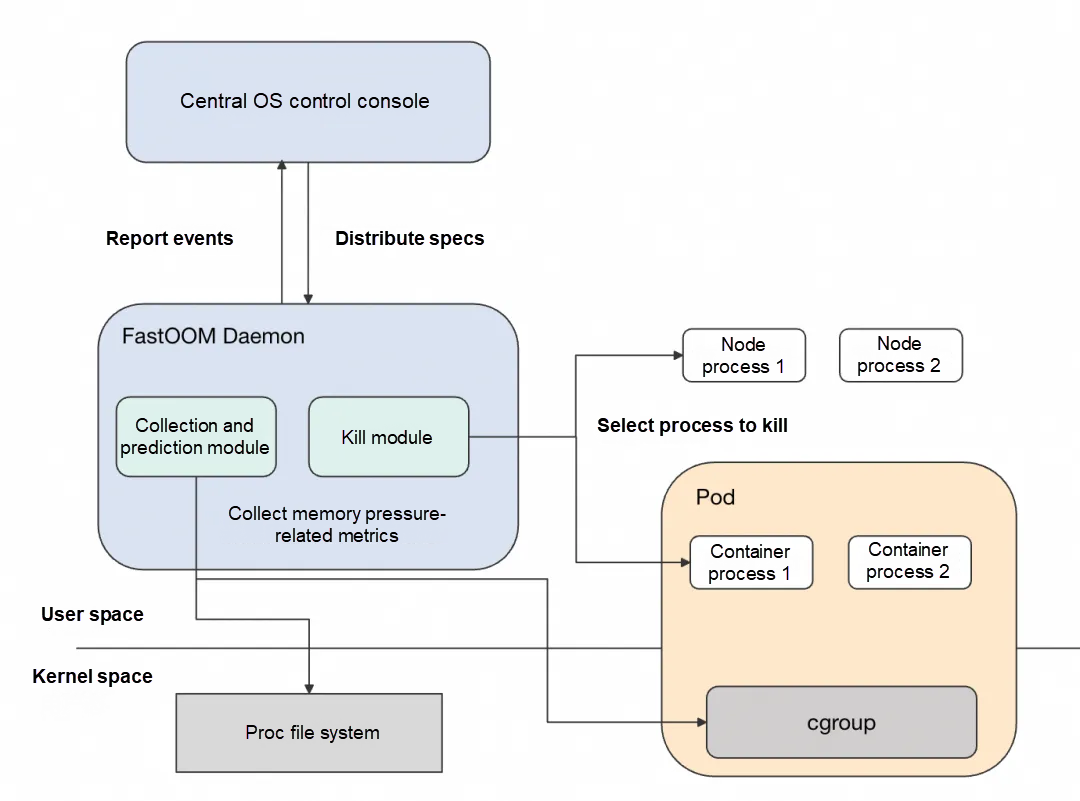

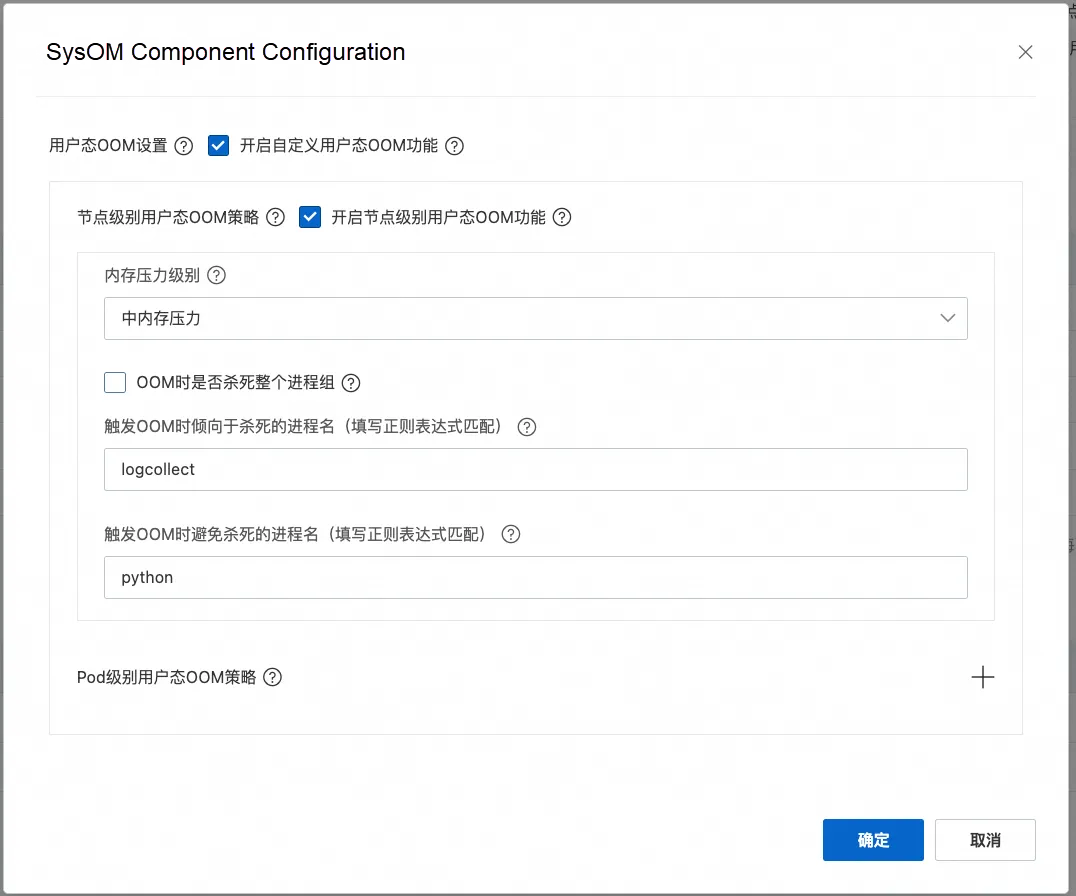

During peak periods, systems often enter a "near-OOM" state as memory is rapidly exhausted. The traditional Linux OOM mechanism reacts late: it often terminates a process only after the system is already frozen or unresponsive, and it can mistakenly kill cache-heavy or I/O-intensive processes, which further increases disk pressure.

FastOOM achieves active protection through the following mechanisms:

• Precise heap memory scoring: Instead of relying on RSS (resident set size), the system focuses on reclaimable heap memory usage to more accurately identify the processes truly responsible for memory pressure.

• Batch termination policy: When killing a single process does not free enough memory, multiple high-memory processes can be terminated at once to release a large amount of memory quickly.

• Multi-level pressure response: Supports low, medium, and high sensitivity configurations to adapt to the latency tolerance of different business types.

• Critical process whitelisting: Explicitly protects key services such as vehicle control and inference via process names or command-line parameters to avoid accidental termination.
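The scoring, whitelisting, and batch-termination ideas above can be illustrated with a small selection routine. This is a minimal sketch under stated assumptions, not FastOOM's actual code: the `Proc` record, its `heap_mb` field (standing in for the heap-memory score), and substring matching for the whitelist are hypothetical simplifications, and the routine only chooses victims rather than sending signals.

```python
from dataclasses import dataclass


@dataclass
class Proc:
    pid: int
    name: str
    cmdline: str
    heap_mb: int  # hypothetical: heap memory that killing this process would free


def is_protected(proc, whitelist):
    """Whitelist match on process name or command-line substring."""
    return any(pat in proc.name or pat in proc.cmdline for pat in whitelist)


def select_victims(procs, need_mb, whitelist):
    """Pick the largest heap users, as a batch, until need_mb would be freed."""
    candidates = sorted(
        (p for p in procs if not is_protected(p, whitelist)),
        key=lambda p: p.heap_mb,
        reverse=True,
    )
    victims, freed = [], 0
    for p in candidates:
        if freed >= need_mb:
            break
        victims.append(p)
        freed += p.heap_mb
    return victims, freed


# Hypothetical processes and sizes, for illustration only.
procs = [
    Proc(101, "vehicle-ctl", "/usr/bin/vehicle-ctl --region cn", 3000),
    Proc(102, "cache-svc", "cache-svc --size 2g", 1500),
    Proc(103, "logagent", "logagent -c /etc/logagent.yaml", 800),
    Proc(104, "batch-job", "batch-job --task export", 1200),
]
victims, freed = select_victims(procs, need_mb=2500, whitelist=["vehicle-ctl"])
print([p.pid for p in victims], freed)  # [102, 104] 2700
```

Sorting by score and stopping once the target is met captures the batch idea: one kill suffices when a single process holds enough memory, and several are taken together otherwise, while the largest process here is skipped because it is whitelisted.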

By intervening early, while memory pressure is still rising, this mechanism effectively prevents system hangs and ensures that critical commands such as remote control and OTA delivery still reach the system and execute on time.
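Detecting rising memory pressure early requires a pressure signal rather than a free-memory figure. One standard Linux source is Pressure Stall Information (PSI), exposed in `/proc/pressure/memory` on kernels 4.20 and later when PSI is enabled. The sketch below assumes PSI as the signal and maps the low, medium, and high sensitivity levels to hypothetical `some avg10` thresholds; this article does not say whether FastOOM uses PSI or which thresholds it applies.

```python
# Hypothetical thresholds: percentage of the last 10 s in which at least one
# task stalled on memory. Higher sensitivity intervenes at lower pressure.
PSI_THRESHOLDS = {"high": 10.0, "medium": 20.0, "low": 40.0}


def parse_psi(text):
    """Parse PSI lines such as 'some avg10=1.00 avg60=0.50 avg300=0.10 total=123'."""
    result = {}
    for line in text.strip().splitlines():
        kind, *fields = line.split()
        result[kind] = {k: float(v) for k, v in (f.split("=", 1) for f in fields)}
    return result


def should_intervene(psi_text, sensitivity="medium"):
    return parse_psi(psi_text)["some"]["avg10"] >= PSI_THRESHOLDS[sensitivity]


def read_memory_pressure(path="/proc/pressure/memory"):
    with open(path) as f:
        return f.read()


# Hypothetical PSI reading.
sample = (
    "some avg10=25.50 avg60=10.00 avg300=3.00 total=123456\n"
    "full avg10=5.00 avg60=2.00 avg300=0.50 total=65432\n"
)
for level in ("high", "medium", "low"):
    print(level, should_intervene(sample, level))
# high True / medium True / low False
```

PSI measures time lost to stalls, so it rises before free memory actually reaches zero, which is what allows intervention before the system freezes.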

Core benefit: Resolves service interruptions caused by resource overcommitment in mobility services, enhancing business continuity.

Use cases: Complex memory issues such as steadily rising memory usage, frequent OOM events, abnormal cache occupancy, and unexplained GPU VRAM growth.

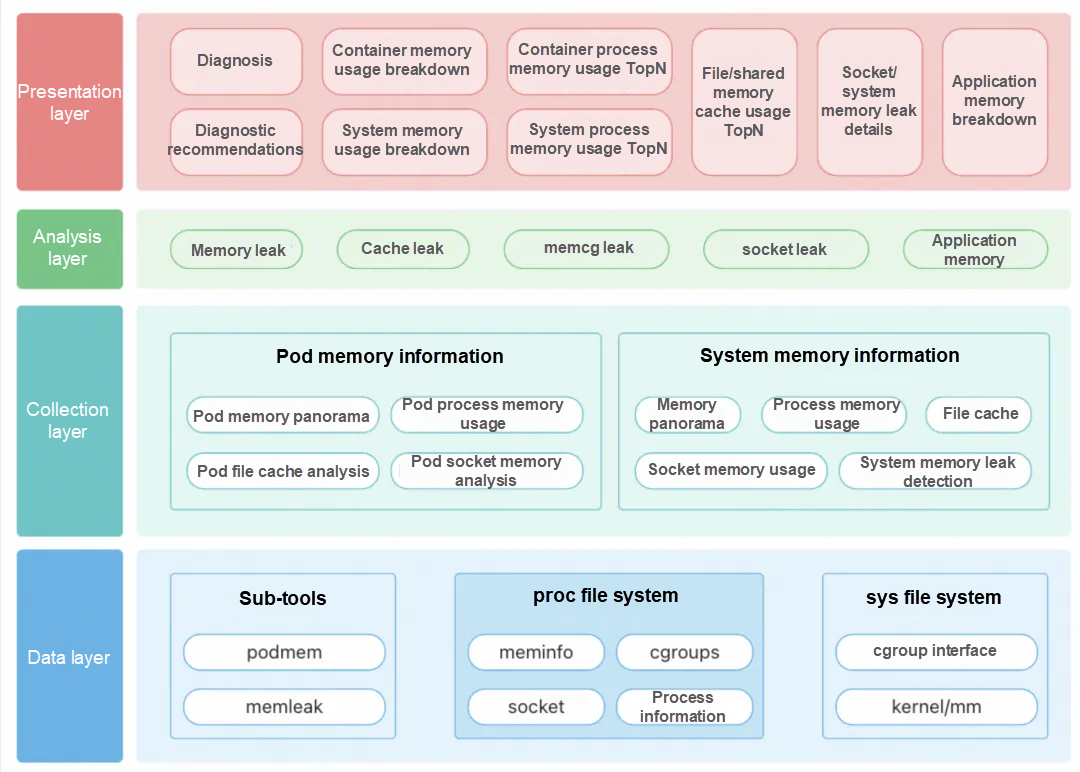

Traditional O&M struggles to answer a basic question: who is actually using the memory? Is it an application leak, accumulated file cache, or hidden usage by drivers or GPUs?

Memory panorama analysis provides a unified, fine-grained view of memory.

• One-click end-to-end reports: Users can generate a complete memory breakdown covering processes, containers, cache, drivers, and GPU VRAM directly from the console, without logging in to the machine.

• Deep application heap analysis: Further breaks down heap objects for Java, Python, and C++ processes to pinpoint specific leak points.

• Mapping page cache to source files: Identifies, for example, that "/ota/firmware_v2.1.bin" occupies 8 GB of page cache, which helps optimize preloading or cleanup strategies.

• Inclusion of GPU and NIC memory: Brings "invisible" memory, such as RDMA buffers and GPU VRAM mapping, into the monitoring scope to eliminate blind spots.
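At the host level, a first approximation of such a breakdown can be computed from `/proc/meminfo`. The sketch below is only that approximation: it groups standard fields into categories and reports the remainder as "Unaccounted", a rough proxy for memory held outside the usual counters (for example huge pages or driver allocations). Per-process heap analysis, per-file cache attribution, and GPU VRAM need other data sources and are out of scope; the sample values are hypothetical.

```python
CATEGORIES = ["MemFree", "AnonPages", "Cached", "Buffers", "Slab", "PageTables", "KernelStack"]


def parse_meminfo(text):
    """Return {field: value}; most fields are in kB (HugePages_* are page counts)."""
    info = {}
    for line in text.strip().splitlines():
        key, rest = line.split(":", 1)
        info[key.strip()] = int(rest.split()[0])
    return info


def memory_breakdown(text):
    info = parse_meminfo(text)
    # Cached already includes Shmem, so Shmem is not added separately.
    parts = {k: info.get(k, 0) for k in CATEGORIES}
    parts["Unaccounted"] = info["MemTotal"] - sum(parts.values())
    return parts


def read_meminfo(path="/proc/meminfo"):
    with open(path) as f:
        return f.read()


# Hypothetical /proc/meminfo excerpt.
sample = """MemTotal:       16000000 kB
MemFree:         2000000 kB
Buffers:          100000 kB
Cached:          6000000 kB
Shmem:            500000 kB
AnonPages:       5000000 kB
Slab:             800000 kB
KernelStack:       20000 kB
PageTables:        80000 kB
HugePages_Total:       0"""
for name, kb in memory_breakdown(sample).items():
    print(f"{name:12s} {kb / 1024 / 1024:6.2f} GiB")
```

A large "Unaccounted" share is a hint to look below the process level, which is exactly the blind spot the panorama view targets.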

Memory panorama analysis transforms the process from "guessing who consumed the memory" to "locating the leak source in seconds," significantly shortening troubleshooting time and supporting capacity planning and resource optimization.

Core benefit: Resolves response latency issues in Internet of Vehicles (IoV) services, improving user experience.

Use cases: Performance issues that are difficult to reproduce, such as occasional lag, sudden CPU or I/O spikes, and millisecond-level latency jumps.

These types of problems often have no fixed reproduction path, and traditional monitoring cannot capture transient call stacks, leaving root causes unresolved for long periods.

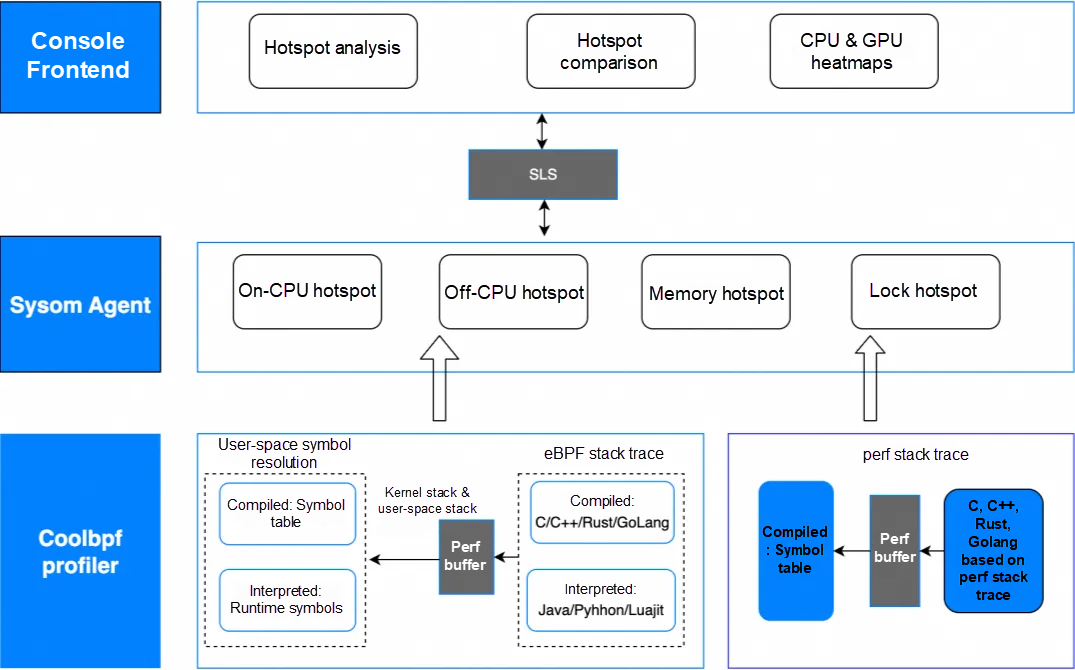

Process hotspot analysis implements lightweight, continuous tracking based on eBPF with the following characteristics:

• Less than 3% performance overhead: Collects function call stacks, context switches, system calls, and similar data non-intrusively, making it suitable for long-term use in production environments.

• Flame graphs + diff comparison: Visually displays CPU hotspot paths and supports performance difference comparisons before and after jitter events or version upgrades, automatically highlighting regression points.

• Smart identification: Uses large language model (LLM) semantic understanding to recognize common performance traps such as high-frequency /proc access, lock contention, and blocking I/O, and provides optimization suggestions.

• Second-level backtracking of jitter events: The system continuously buffers lightweight call stacks, so when an issue occurs it can immediately pinpoint the transient high-load processes and their hotspot functions.
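The flame-graph diff can be reduced to comparing sample counts per call stack. The sketch below assumes the common "folded" stack format (one `frame;frame;frame count` line per stack, as produced by Brendan Gregg's FlameGraph tooling) and normalizes each profile by its total so that profiles of different lengths are comparable. It illustrates the idea only; the OS Console's own diff and highlighting logic is not described here, and the stack names are hypothetical.

```python
from collections import Counter


def parse_folded(text):
    """Parse 'frame1;frame2;frame3 count' lines into a Counter of stacks."""
    counts = Counter()
    for line in text.strip().splitlines():
        stack, _, n = line.rpartition(" ")  # frames may contain spaces
        counts[stack] += int(n)
    return counts


def diff_hotspots(before_text, after_text, top=3):
    """Return stacks whose share of samples grew the most, largest first."""
    before, after = parse_folded(before_text), parse_folded(after_text)
    total_b, total_a = sum(before.values()), sum(after.values())
    deltas = {
        stack: after[stack] / total_a - before[stack] / total_b
        for stack in before.keys() | after.keys()
    }
    ranked = sorted(deltas.items(), key=lambda kv: kv[1], reverse=True)
    return [(stack, delta) for stack, delta in ranked[:top] if delta > 0]


before = "main;serve;handle 90\nmain;serve;read_proc 10"
after = "main;serve;handle 50\nmain;serve;read_proc 50"
for stack, delta in diff_hotspots(before, after):
    print(f"{delta:+.0%}  {stack}")  # +40%  main;serve;read_proc
```

Comparing shares rather than raw counts keeps a longer "after" capture from looking like a regression everywhere.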

Process hotspot analysis solves the "unreproducible" performance puzzle, making occasional lag traceable, explainable, and fixable.
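The second-level backtracking described above depends on keeping recent samples in memory so that a jitter event can be examined after it has happened. A minimal sketch of that idea is a time-bounded ring buffer of (timestamp, pid, stack) samples. The class name, window sizes, and sample format are hypothetical, and in a real deployment the samples would come from an eBPF profiler rather than hand-written calls.

```python
from collections import Counter, deque


class StackRingBuffer:
    """Keep only the last `window_s` seconds of stack samples."""

    def __init__(self, window_s=60.0):
        self.window_s = window_s
        self.samples = deque()  # (timestamp, pid, stack), oldest first

    def add(self, ts, pid, stack):
        self.samples.append((ts, pid, stack))
        # Evict samples older than the retention window.
        while self.samples and ts - self.samples[0][0] > self.window_s:
            self.samples.popleft()

    def around(self, event_ts, before_s=1.0, after_s=1.0):
        lo, hi = event_ts - before_s, event_ts + after_s
        return [s for s in self.samples if lo <= s[0] <= hi]

    def top_processes(self, event_ts, before_s=1.0, after_s=1.0, n=3):
        """Processes with the most samples around a jitter timestamp."""
        hits = Counter(pid for _, pid, _ in self.around(event_ts, before_s, after_s))
        return hits.most_common(n)


buf = StackRingBuffer(window_s=10.0)
for t in range(20):
    buf.add(float(t), 100, "main;idle_loop")
for t in (19.5, 19.6, 19.7):
    buf.add(t, 200, "main;worker;lock_wait")
print(buf.top_processes(19.6, before_s=0.5, after_s=0.5))  # [(200, 3)]
```

Bounding the buffer by time keeps memory use predictable, which is what makes always-on capture acceptable in production.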

Core benefit: Simplifies O&M for GPU workloads in autonomous driving, improving training and inference efficiency and reducing costs.

Use cases: AI-intensive workloads such as autonomous driving model training, large model inference with frameworks such as vLLM, and multi-GPU communication tasks.

AI tasks are highly sensitive to the stability of GPU resources, yet issues like VRAM leaks, XID errors, and communication bottlenecks are often stealthy and difficult to locate.

The OS Console builds a kernel-based continuous tracking system with the following characteristics:

• Minute-level anomaly alerts: Monitors VRAM, SM utilization, temperature, and XID error codes in real time to promptly detect GPUs dropping offline, hardware errors, or task hangs.

• Hour-level problem demarcation: Supports slow node identification, NCCL communication latency analysis, and single-card/full-machine resource bottleneck assessment to quickly narrow down the troubleshooting scope.

• Function-level root cause analysis: Correlates Python-layer calls, framework operators, and CUDA kernels through GPU flame graphs and timeline profiling, visualizing operator execution sequences and wait times.
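Minute-level alerting of this kind amounts to evaluating rules over a short window of per-GPU samples. The sketch below is a hypothetical rule set, not the OS Console's: the `GpuSample` fields mirror the metrics named above, the thresholds are placeholders, and only two XID codes are included (48, a double-bit ECC error, and 79, a GPU that has fallen off the bus), with meanings taken from NVIDIA's XID error documentation. In practice the samples might come from DCGM or NVML.

```python
from dataclasses import dataclass, field

# Subset of NVIDIA XID codes treated as critical in this sketch.
CRITICAL_XIDS = {48: "double-bit ECC error", 79: "GPU has fallen off the bus"}


@dataclass
class GpuSample:
    gpu: int
    mem_used_mb: float
    mem_total_mb: float
    sm_util_pct: float
    temp_c: float
    xids: list = field(default_factory=list)


def gpu_alerts(window, mem_pct_limit=95.0, temp_limit_c=85.0, hang_mem_frac=0.5):
    """Evaluate placeholder rules over one GPU's per-minute samples (oldest first)."""
    latest = window[-1]
    alerts = []
    for s in window:
        for x in s.xids:
            if x in CRITICAL_XIDS:
                alerts.append(f"gpu{s.gpu}: XID {x} ({CRITICAL_XIDS[x]})")
    if 100.0 * latest.mem_used_mb / latest.mem_total_mb >= mem_pct_limit:
        alerts.append(f"gpu{latest.gpu}: VRAM usage above {mem_pct_limit}%")
    if latest.temp_c >= temp_limit_c:
        alerts.append(f"gpu{latest.gpu}: temperature {latest.temp_c} C")
    # Possible hang: VRAM stays allocated while SMs do no work for the whole window.
    if all(s.sm_util_pct == 0 and s.mem_used_mb > hang_mem_frac * s.mem_total_mb
           for s in window):
        alerts.append(f"gpu{latest.gpu}: possible task hang ({len(window)} idle minutes)")
    return alerts


# Hypothetical five-minute window for GPU 0.
window = [GpuSample(0, 70000, 81920, 0.0, 62.0) for _ in range(5)]
window[-1].xids.append(79)
for alert in gpu_alerts(window):
    print(alert)
```

The hang rule looks at the whole window rather than one sample, since a single idle minute is normal between batches.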

This makes AI tasks "visible, explainable, and fixable," preventing training interruptions or inference delays caused by underlying resource anomalies and enhancing the reliability of AI infrastructure.
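Slow-node identification, mentioned under hour-level problem demarcation, can be approximated by comparing each node's typical per-step time with the cluster median. The sketch assumes per-node compute time per step is available: in synchronous data-parallel training, total step times tend to equalize because every rank waits at the collective, so a straggler shows up in time spent before the collective. The input format and the 1.2 ratio are hypothetical.

```python
from statistics import median


def find_slow_nodes(compute_times, ratio=1.2):
    """compute_times: {node: [per-step compute seconds, ...]} (hypothetical format).

    Flags nodes whose median compute time exceeds `ratio` x the cluster median.
    """
    per_node = {node: median(times) for node, times in compute_times.items()}
    cluster = median(per_node.values())
    return sorted(node for node, m in per_node.items() if m > ratio * cluster)


# Hypothetical measurements.
times = {
    "node-a": [1.00, 1.10, 1.00],
    "node-b": [1.00, 1.00, 1.05],
    "node-c": [1.60, 1.70, 1.50],
    "node-d": [1.02, 0.98, 1.00],
}
print(find_slow_nodes(times))  # ['node-c']
```

Medians are used at both levels so that one noisy step, or one slow node, does not shift the baseline it is compared against.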

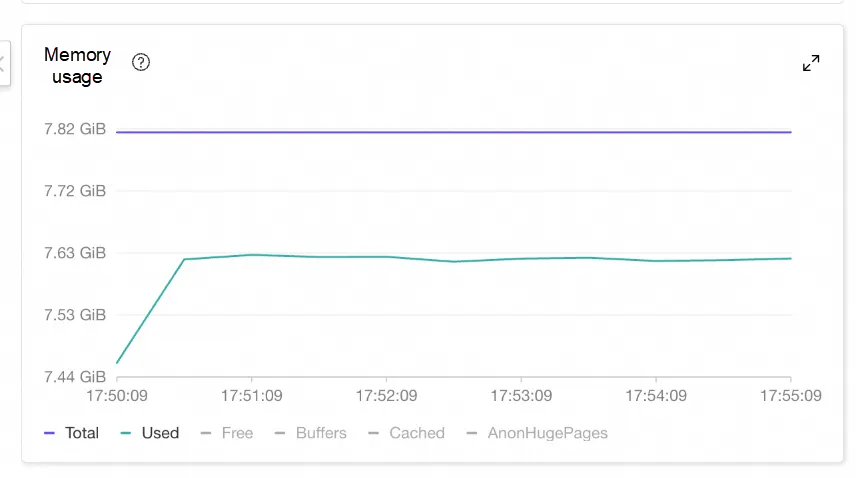

A leading logistics customer experienced unresponsive services and extremely slow instance logins during a holiday period. Monitoring showed that memory usage on the instance surged at a specific point in time and approached total capacity (leaving very little available memory), although it did not exceed total physical memory.

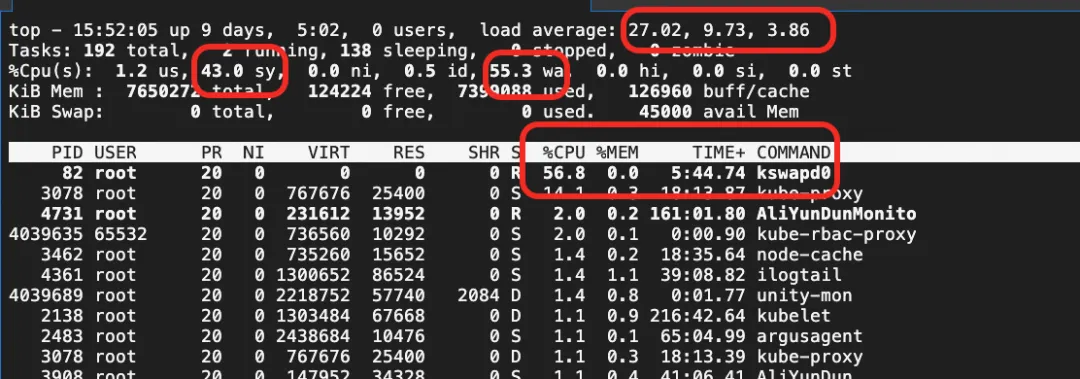

Output from the top command showed consistently high CPU sys utilization, iowait, and overall system load, with the kswapd0 kernel thread consuming excessive CPU cycles on memory reclamation.

To resolve this, the user enabled node-level FastOOM. Because the business was sensitive, the memory pressure sensitivity was set to medium. The core business application, launched via python (its process name contains the substring "python"), was whitelisted so that it would not be killed, while unrelated logging programs were configured to be terminated first.

With FastOOM enabled, when node memory reached a near-OOM state, the user-space mechanism intervened and terminated processes according to the configuration, freeing memory and preventing the system from hanging. Each intervention was recorded in the system overview of the OS Console.

The records show that kube-rbac-proxy and node_exporter, whose oom_score_adj values were set close to 999, were killed first, following the kernel's OOM policy. Because the memory they released was not enough and the system remained near OOM, FastOOM then killed the logcollect process, as specified in the priority configuration.
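The kill order in this case can be modeled as two tiers: processes with a high `oom_score_adj` first (the value ranges from -1000 to 1000, and higher values make a process a likelier OOM victim), then the names in the user's priority list, with whitelisted processes never eligible. The sketch reproduces that order; the 900 cutoff, the memory figures, and the command lines are hypothetical, since the article reports only the sequence of events.

```python
def fastoom_plan(procs, need_mb, whitelist, priority, adj_cutoff=900):
    """Return (names to kill in order, MB freed) under a two-tier policy.

    procs: dicts with name, cmdline, oom_score_adj, rss_mb (hypothetical fields).
    """
    eligible = [
        p for p in procs
        if not any(w in p["name"] or w in p["cmdline"] for w in whitelist)
    ]
    # Tier 1: kernel-style preference, highest oom_score_adj first.
    tier1 = sorted(
        (p for p in eligible if p["oom_score_adj"] >= adj_cutoff),
        key=lambda p: p["oom_score_adj"],
        reverse=True,
    )
    # Tier 2: user-configured priority names, in configured order.
    tier2 = [p for name in priority for p in eligible
             if p["name"] == name and p not in tier1]
    plan, freed = [], 0
    for p in tier1 + tier2:
        if freed >= need_mb:
            break
        plan.append(p["name"])
        freed += p["rss_mb"]
    return plan, freed


procs = [
    {"name": "python", "cmdline": "python serve.py", "oom_score_adj": 0, "rss_mb": 6000},
    {"name": "logcollect", "cmdline": "logcollect --tail", "oom_score_adj": 0, "rss_mb": 900},
    {"name": "kube-rbac-proxy", "cmdline": "kube-rbac-proxy", "oom_score_adj": 999, "rss_mb": 40},
    {"name": "node_exporter", "cmdline": "node_exporter", "oom_score_adj": 999, "rss_mb": 30},
]
print(fastoom_plan(procs, need_mb=500, whitelist=["python"], priority=["logcollect"]))
# (['kube-rbac-proxy', 'node_exporter', 'logcollect'], 970)
```

The small sidecars go first yet free little memory, so the loop continues into the priority tier, matching the escalation described in the case.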

This timely intervention kept the system from getting stuck in prolonged near-OOM jitter.

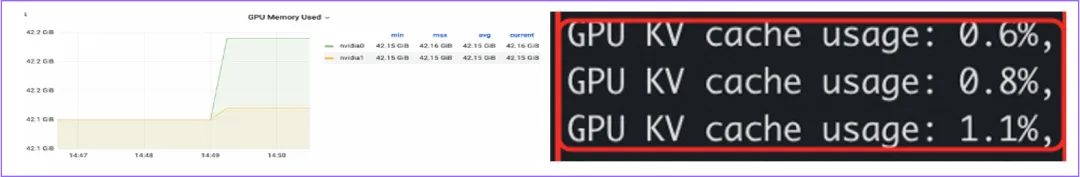

A leading autonomous driving solutions company ran a vLLM online inference service in which KV-Cache utilization was below full capacity, yet GPU monitoring (DCGM) showed a significant increase in VRAM usage. Because vLLM pre-allocates GPU memory at startup, VRAM usage should in theory stay flat while the KV-Cache is not full.

The team ran continuous profiling on the online application and located, on the timeline, the cudaMalloc calls responsible for the VRAM requests. Marking these points let them identify the Python calls involved and recover the call stack responsible for the extra VRAM allocation. Combined with the implementation details of decorate_context(), this showed that the VRAM growth came from PyTorch's internal cache management mechanism. The issue was then mitigated by adjusting vLLM's VRAM pre-allocation or by modifying PyTorch environment variables that control memory cache occupancy.
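The core of this investigation, finding device allocations made after vLLM's startup pre-allocation and attributing them to Python call sites, can be sketched as a filter over profiler timeline events. The event dictionaries (`ts_ms`, `api`, `bytes`, `py_stack`) are a hypothetical export format rather than the output of any specific profiler, and the stack strings are invented for illustration.

```python
from collections import defaultdict


def late_allocations(events, warmup_end_ms):
    """Sum cudaMalloc bytes issued after warm-up, grouped by Python call stack."""
    by_stack = defaultdict(int)
    for e in events:
        if e["api"] == "cudaMalloc" and e["ts_ms"] > warmup_end_ms:
            by_stack[e["py_stack"]] += e["bytes"]
    return sorted(by_stack.items(), key=lambda kv: kv[1], reverse=True)


GiB = 1 << 30
events = [
    {"ts_ms": 100, "api": "cudaMalloc", "bytes": 60 * GiB, "py_stack": "engine.init;profile_run"},
    {"ts_ms": 5000, "api": "cudaLaunchKernel", "bytes": 0, "py_stack": "model.forward"},
    {"ts_ms": 6000, "api": "cudaMalloc", "bytes": GiB, "py_stack": "execute_model;decorate_context"},
    {"ts_ms": 7000, "api": "cudaMalloc", "bytes": GiB // 2, "py_stack": "execute_model;decorate_context"},
    {"ts_ms": 8000, "api": "cudaMalloc", "bytes": GiB // 4, "py_stack": "sampler.sample"},
]
for stack, nbytes in late_allocations(events, warmup_end_ms=1000):
    print(f"{nbytes / GiB:.2f} GiB  {stack}")
```

On the PyTorch side, `torch.cuda.memory_reserved()` exceeding `torch.cuda.memory_allocated()` indicates memory held by the caching allocator, which `PYTORCH_CUDA_ALLOC_CONF` configures, and vLLM's `gpu_memory_utilization` setting governs its pre-allocation. The article does not state which specific settings the team changed.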

In the spring of 2025, a smart electric vehicle brand launched its flagship model globally, backed by a large-scale integrated marketing campaign. Because the launch spanned multiple time zones and the price announcement drew intense interest, total app visits exceeded 8 million within five minutes of the reveal. Peak requests to the online store interfaces reached 120,000 QPS, more than 200 times the daily level. Under this extreme concurrency, end-to-end response times for core interactive interfaces had to stay under 30 ms; any millisecond-level delay could cause white screens, unresponsive operations, or livestream stuttering, seriously damaging the user experience and the brand image. Traditional troubleshooting based on after-the-fact log analysis was completely ineffective under such pressure, putting system stability and real-time observability to an unprecedented test.
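A latency requirement like "under 30 ms" is usually enforced on a tail percentile rather than the average, because the few slow requests are what users experience as white screens. The article does not say which percentile the brand used; the sketch below assumes p99 with the nearest-rank definition, and the latency samples are hypothetical. For scale, 8 million visits in five minutes averages roughly 26,700 visits per second before any peak.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile for p in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def meets_slo(latencies_ms, slo_ms=30.0, p=99):
    return percentile(latencies_ms, p) < slo_ms


latencies = [12.0] * 97 + [18.0, 26.0, 48.0]  # 100 hypothetical samples
print(percentile(latencies, 99), meets_slo(latencies))  # 26.0 True
print(percentile(latencies, 100), meets_slo(latencies, p=100))  # 48.0 False
```

The same data passes at p99 and fails at p100, which is why the chosen percentile has to be stated alongside any latency target.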

The brand relied on a "three-in-one" protection system built via the operating system console:

As smart electric vehicles continue to evolve, the coupling between in-car systems and cloud infrastructure will become even tighter. In the future, cars will not only be tools for transportation but also mobile computing terminals and data nodes. This requires cloud platforms to not only possess greater elasticity, lower latency, and higher reliability, but also to achieve deeper intelligence in resource scheduling, fault self-healing, and performance optimization.

We will continue to hone the OS Console's capabilities around core automotive industry scenarios. We will also further strengthen support for high-concurrency and high-real-time business, optimizing the performance of FastOOM, memory panorama analysis, and process hotspot tracking under typical loads such as OTA peaks and autonomous driving training. At the same time, we will explore the AIOps path by combining large models with real-time observability data to build an AI Agent O&M system equipped with prediction, diagnosis, decision-making, and execution capabilities.
