By Queyue

With the development of deep learning, all areas of our lives are undergoing intelligent transformations. As the team positioned closest to users, the frontend team also wants to use AI capabilities to improve efficiency, reduce labor costs, and provide users with a better experience. Intelligent transformation is seen as an important growth area for the future of the frontend field.

However, the following issues hinder the adoption of intelligent development by the frontend team:

To solve these problems and promote intelligent frontend development, we developed Pipcook. Pipcook uses the frontend-friendly JavaScript (JS) environment, adopts the TensorFlow.js framework for its underlying algorithm capabilities, and encapsulates algorithms for frontend business scenarios. This allows frontend engineers to quickly and easily use machine learning capabilities.

This article describes how Pipcook is integrated with TensorFlow.js and how the underlying models and computing capabilities of tfjs-node are used to build a high-level machine learning pipeline. For more information about Pipcook, visit the official GitHub repository at: https://github.com/alibaba/pipcook

TensorFlow.js is a JS-based machine learning framework released by Google in 2018. Google has since made the relevant code open source. Pipcook uses tfjs-node as the underlying framework for data processing and model training, builds plugins on top of TensorFlow.js, and assembles the plugins into a pipeline. We use TensorFlow.js for the following reasons:

Machine learning requires accessing and processing large amounts of data. In traditional scenarios with small data volumes, we can load all of the data into memory at once. In deep learning scenarios, however, the data volume generally exceeds the memory size, so we need to fetch portions of the data from the data source on demand. The dataset APIs provided by TensorFlow.js encapsulate data access for these scenarios.

In a standard Pipcook pipeline, we will use dataset APIs to encapsulate and process data. The preceding figure shows a typical data flow process.

We can regard a dataset as an iterable sequence of training data, similar to a Stream in Node.js. Each time the next element is requested from the dataset, the internal implementation fetches the data as required and executes the preset data processing function. This abstraction allows a model to train on a large amount of data easily. When we have multiple datasets, they can be grouped and handled through the same abstraction as a single unit.
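The on-demand behavior described above can be sketched in plain JavaScript with generators. This is a conceptual illustration only, not the TensorFlow.js implementation: `LazyDataset` and `readSample` are hypothetical names, the in-memory arrays stand in for a real data source, and the code is synchronous for brevity. The `tf.data` API in TensorFlow.js offers comparable `map` and `batch` methods on its datasets.

```javascript
// Conceptual sketch in plain JavaScript; this is NOT the tf.data implementation.
// LazyDataset and readSample are hypothetical names used for illustration only.
class LazyDataset {
  // `source` is a zero-argument function that returns a fresh iterator,
  // so the dataset can be traversed more than once.
  constructor(source) {
    this.source = source;
  }

  // Wrap an in-memory array (a stand-in for files, a database, and so on).
  static fromArray(items) {
    return new LazyDataset(() => items[Symbol.iterator]());
  }

  // Register a processing function; it runs only when an element is requested.
  map(fn) {
    const source = this.source;
    return new LazyDataset(function* () {
      for (const item of source()) yield fn(item);
    });
  }

  // Group consecutive elements into arrays of at most `size` elements.
  batch(size) {
    const source = this.source;
    return new LazyDataset(function* () {
      let current = [];
      for (const item of source()) {
        current.push(item);
        if (current.length === size) {
          yield current;
          current = [];
        }
      }
      if (current.length > 0) yield current;
    });
  }

  // Chain another dataset after this one so both are consumed as one.
  concatenate(other) {
    const first = this.source;
    const second = other.source;
    return new LazyDataset(function* () {
      yield* first();
      yield* second();
    });
  }

  [Symbol.iterator]() {
    return this.source();
  }
}

let processed = 0; // counts how many times the processing function has run
const readSample = (x) => {
  processed += 1;
  return x * 2;
};

const trainA = LazyDataset.fromArray([1, 2, 3]);
const trainB = LazyDataset.fromArray([4, 5]);
const batches = trainA.concatenate(trainB).map(readSample).batch(2);

const processedBeforeRead = processed;       // 0: building the chain reads nothing
const iterator = batches[Symbol.iterator]();
const firstBatch = iterator.next().value;    // [2, 4]
const processedAfterFirstBatch = processed;  // 2: only the requested elements ran
const remainingBatches = [...iterator];      // [[6, 8], [10]]
```

Building the chain does no work. Elements are read and processed only when the consumer asks for the next batch, which is what lets training proceed over data that does not fit in memory.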

TensorFlow.js provides low-level and high-level APIs. The low-level APIs are derived from deeplearn.js and include the operators required for building models. They handle the mathematical operations in machine learning, such as linear algebra operations. The high-level Layers API encapsulates common machine learning algorithms and allows us to load trained models, such as Keras models.
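To make the difference between the two levels concrete, here is a minimal plain-JavaScript sketch. It does not call TensorFlow.js: the helpers `matMul`, `addBias`, `relu`, and `dense` are hypothetical stand-ins written for this example, and matrices are simple arrays of rows. The point is only that a high-level layer packages a fixed combination of low-level operators behind one call.

```javascript
// Plain-JavaScript illustration; NOT the TensorFlow.js API.
// Matrices are arrays of rows; all helper names here are hypothetical.

// --- Low level: individual mathematical operators ---
function matMul(a, b) {
  // (n x k) times (k x m) gives (n x m)
  return a.map((row) =>
    b[0].map((_, col) => row.reduce((sum, value, k) => sum + value * b[k][col], 0))
  );
}

function addBias(matrix, bias) {
  return matrix.map((row) => row.map((value, col) => value + bias[col]));
}

function relu(matrix) {
  return matrix.map((row) => row.map((value) => Math.max(0, value)));
}

// --- High level: a "layer" that packages the operators behind one call ---
function dense({ weights, bias }) {
  return { apply: (input) => relu(addBias(matMul(input, weights), bias)) };
}

const input = [[1, 2]];               // one sample with two features
const weights = [[1, -1], [0.5, 2]];  // 2 inputs -> 2 units
const bias = [0, -4];

// Low-level style: the caller wires every operator together.
const manual = relu(addBias(matMul(input, weights), bias)); // [[2, 0]]

// High-level style: the same computation behind a single layer object.
const viaLayer = dense({ weights, bias }).apply(input);     // [[2, 0]]
```

Both paths compute the same result. The low-level form gives full control over each operation, while the layer form is shorter and harder to wire incorrectly.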

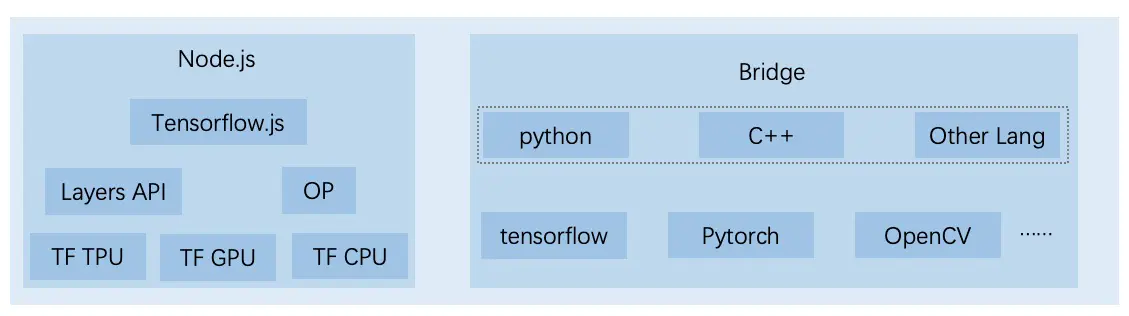

Pipcook uses plugins to develop and run models. Each model load plugin loads a specific model, and most models are implemented on top of TensorFlow.js. tfjs-node also provides features that accelerate model training, such as GPU acceleration. Given the current state of the ecosystem, implementing certain models in TensorFlow.js is still expensive. To solve this problem, Pipcook provides Python bridging and other methods that allow you to call Python to train models from the JS runtime environment. We will describe the bridging details in subsequent articles.
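The idea of assembling plugins into a pipeline can be sketched as follows. This is a conceptual illustration and does not reproduce Pipcook's real plugin interfaces: `dataCollect`, `modelLoad`, `modelTrain`, and `runPipeline` are hypothetical names, the "model" is a one-parameter line rather than a TensorFlow.js model, and the stages are synchronous for brevity.

```javascript
// Conceptual sketch only; these are NOT Pipcook's real plugin interfaces.
// Each hypothetical plugin takes the pipeline context and returns an updated copy.

// Data collect plugin: provides (x, y) samples that follow y = 2 * x.
const dataCollect = (ctx) => ({ ...ctx, samples: [[1, 2], [2, 4], [3, 6]] });

// Model load plugin: supplies a model object (here a one-parameter line y = w * x).
const modelLoad = (ctx) => ({
  ...ctx,
  model: {
    w: 0,
    predict(x) {
      return this.w * x;
    },
  },
});

// Model train plugin: fits w with repeated stochastic gradient descent steps.
const modelTrain = (ctx) => {
  const { model, samples } = ctx;
  const learningRate = 0.05;
  for (let epoch = 0; epoch < 200; epoch += 1) {
    for (const [x, y] of samples) {
      const error = model.predict(x) - y;
      model.w -= learningRate * error * x;
    }
  }
  return { ...ctx, trained: true };
};

// The pipeline runs the plugins in order, threading the context through them.
function runPipeline(plugins, initialContext = {}) {
  return plugins.reduce((ctx, plugin) => plugin(ctx), initialContext);
}

const result = runPipeline([dataCollect, modelLoad, modelTrain]);
const prediction = result.model.predict(10); // close to 20 after training
```

Because every stage has the same shape (context in, context out), a stage can be swapped for another, for example a different model load plugin, without changing the rest of the pipeline.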

An industrial-level machine learning pipeline needs a method to deploy your model after training. This way, the model can serve real businesses. Currently, Pipcook provides the following deployment solutions, which can be implemented using the model deploy plugin.

Our ultimate goal is a mature, industrial-level machine learning pipeline that can apply excellent models to a production environment. To achieve the same goal, Google has released the open-source product TensorFlow Extended (TFX) based on its practices. You may wonder whether Pipcook is any different from TFX. Pipcook is not designed to replace any other framework, especially products based on the Python ecosystem. Pipcook aims to promote intelligent frontend development. Therefore, Pipcook uses a technology stack and a product approach oriented to the frontend.

Based on the preceding design, we are attempting to build a frontend-friendly machine learning environment to meet our expectations and goals.

Pipcook has been open source for about a month. In this period, we have received some user feedback. We hope to leverage the capabilities of the open-source community to optimize Pipcook so it can promote intelligent frontend development. To further develop Pipcook, we plan to:

In the future, we hope to combine the power of Alibaba's intelligent frontend team and the entire open-source community to continuously optimize Pipcook and the push for intelligent frontend capabilities it represents. This way, we can provide inclusive technical solutions for intelligent frontend capabilities, accumulate more competitive samples and models, provide intelligent code generation services with higher accuracy and availability, and improve frontend R&D efficiency. In addition, frontend engineers will no longer have to do simple and repetitive work, giving them more time to focus on challenging work.
