By Tianke

Design-to-Code (D2C) refers to the use of automation technologies to convert designs, such as Sketch files, Photoshop files, and images, into frontend code. The goal is to reduce the workload of frontend engineers, improve their development efficiency, and create business value.

In the process of implementing D2C, the following input formats are used for designs:

Frontend pages that are expressed by designs consist of the following types:

This article explores how AI empowers D2C in mid-end and backend scenarios.

Compared with the client-side, the frontend development of the mid-end and backend systems has the following characteristics:

Given these characteristics, a tool like Sketch2React, which generates large amounts of DIV + CSS code from Sketch designs, is not appropriate. How, then, can we implement D2C for mid-end and backend pages? The solution is to recognize components using AI. Specifically, deep learning extracts component information from design images, and that information is used to generate readable, maintainable, and componentized code.

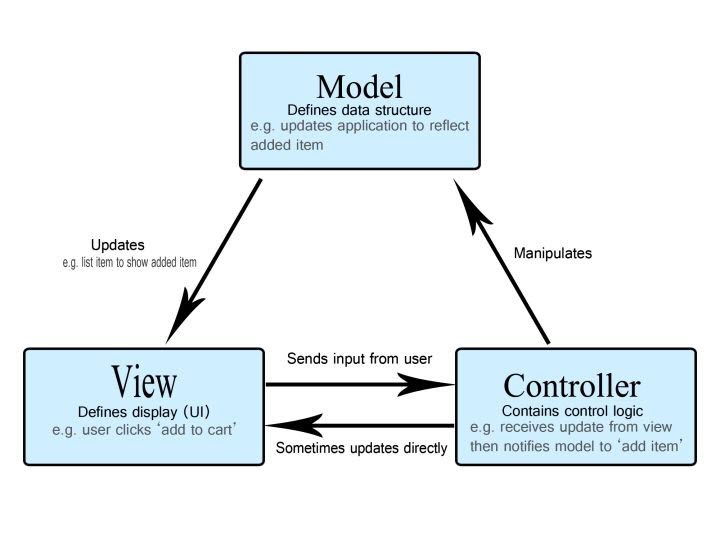

So far, our AI capabilities allow us to extract information from images and generate code for common complex components (such as forms, tables, and description lists) at the model and view layers of the Model-View-Controller (MVC) architecture. Code at the controller layer cannot be generated this way because even a human cannot extract logical information, such as linkage and submission, from an image. In the near future, however, we plan to generate controller-layer code through NL2Code, so that AI can "see," "listen," and help generate code across multiple modalities.
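To make the idea of generating model-layer and view-layer code from extracted component information more concrete, here is a minimal sketch. It is not the team's actual generator: the `RecognizedForm` shape, the function names, and the mapping of control kinds to Ant Design tags are all assumptions made for illustration, and the sketch only handles ASCII labels.

```typescript
// Hypothetical shape of what a recognizer might return for a form screenshot.
interface RecognizedField {
  label: string;                      // text read from the image
  kind: "input" | "select" | "date";  // predicted control type
}

interface RecognizedForm {
  name: string;
  fields: RecognizedField[];
}

// Turn an ASCII label such as "User Name" into an identifier such as "userName".
function toKey(label: string): string {
  const words = label.trim().toLowerCase().split(/[^a-z0-9]+/).filter(Boolean);
  return words
    .map((w, i) => (i === 0 ? w : w.charAt(0).toUpperCase() + w.slice(1)))
    .join("");
}

// Model layer: emit a plain interface describing the form data.
function generateModel(form: RecognizedForm): string {
  const lines = form.fields.map((f) => `  ${toKey(f.label)}: string;`);
  return `export interface ${form.name}Model {\n${lines.join("\n")}\n}`;
}

// Assumed mapping from predicted control type to a component tag.
const TAGS: Record<RecognizedField["kind"], string> = {
  input: "Input",
  select: "Select",
  date: "DatePicker",
};

// View layer: emit componentized JSX text, one form item per recognized field.
function generateView(form: RecognizedForm): string {
  const items = form.fields.map((f) =>
    [
      `  <Form.Item label="${f.label}" name="${toKey(f.label)}">`,
      `    <${TAGS[f.kind]} />`,
      `  </Form.Item>`,
    ].join("\n")
  );
  return ["<Form>", ...items, "</Form>"].join("\n");
}
```

The output is a handful of named components rather than nested DIV + CSS, which is what keeps the generated code readable. The controller layer (linkage, submission) is deliberately absent, since that information is not present in the image.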

In addition to generating code for common complex components from scratch, we can also quickly retrieve and reuse the code that users need from tens of thousands of code snippets. For example:

We would like AI to act as an independent frontend engineer, but that is not yet possible. At its current stage, AI has the following drawbacks:

Therefore, we decided to integrate AI into the current workflow to assist frontend engineers in their development. Even if these problems occur, frontend engineers can correct and supplement the results.

We have created the following two workflows for the mid-end and backend systems:

What do Pro Code and Low Code mean?

The following examples show how to use AI-empowered component recognition for Pro Code and Low Code.

Let's take a look at Pro Code first, using the MID GUI tool of the Frontend Team of the Alibaba Chief Customer Office (CCO) as an example. To generate code and the corresponding live demo, take a screenshot of the form section and press CMD+V to paste it into the AI-empowered component recognition tool. Alternatively, if you already have an image of the form, you can click to upload it or drag and drop it, as shown in the following figure:

If you are satisfied with the AI-generated code, you can click "Download." After entering a component name, you can download the code of the component to the current project, as shown in the following figure:

You can view and use the downloaded code by opening the current project. The code is generated in the aicode/(user-entered component name) directory, which consists of the following files:

You can directly use or modify the AI-generated code, as shown in the following figure:

If you are not satisfied with the recognition result, click Feedback. We will retrain the model as soon as possible. After two hours, the deep learning model will have learned from each bad case. The more bad cases the model learns from, the more intelligent it becomes.

Table

Description Lists

Chart

We have embedded our AI capabilities into the official website of Ant Design. You are welcome to search for icons on this website by uploading a screenshot.
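The article does not describe how screenshot-based icon search works internally. One common approach, shown here purely as an assumption, is to map each icon and the query screenshot to a feature vector and return the nearest icons by cosine similarity. The function names are hypothetical and the vectors are made-up toy values. A real system would obtain them from an image model.

```typescript
type Vec = number[];

// Cosine similarity between two vectors; returns 0 if either has zero length.
function cosine(a: Vec, b: Vec): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    dot += x * (b[i] ?? 0);
    normA += x * x;
  });
  b.forEach((y) => {
    normB += y * y;
  });
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Rank indexed icons by similarity to the query vector and keep the top K names.
function searchIcons(query: Vec, index: Map<string, Vec>, topK = 3): string[] {
  return Array.from(index.entries())
    .map(([name, vec]) => ({ name, score: cosine(query, vec) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((entry) => entry.name);
}

// Toy index: in practice each vector would be an embedding of the icon image.
const iconIndex = new Map<string, Vec>([
  ["SearchOutlined", [1, 0, 0]],
  ["HomeOutlined", [0, 1, 0]],
  ["UserOutlined", [0, 0, 1]],
]);

const bestMatches = searchIcons([0.9, 0.1, 0], iconIndex, 2);
```

The same lookup pattern could plausibly serve the retrieval of reusable code mentioned earlier, with vectors computed from component screenshots instead of icons.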

AI-empowered component recognition improves developer efficiency in Pro Code, and it can also accelerate visual building in Low Code by generating component protocols from images. For example, XForm, developed by the Frontend Team of the Alibaba CCO, was originally used to create forms by dragging and dropping form items. Now, you can upload an image to the AI-empowered component recognition service to generate a protocol, from which the user interface is generated automatically. You can intervene when the result is inaccurate or incomplete.
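As a rough illustration of the image-to-protocol step, the sketch below converts recognized form items into a JSON-like protocol that a visual builder could render. The protocol shape is hypothetical and is not the actual XForm protocol. The `confidence` score and the `needsReview` flag are likewise assumptions, added to show where manual intervention could be requested.

```typescript
// Hypothetical recognizer output for one form item found in an image.
interface DetectedItem {
  label: string;
  widget: "input" | "select" | "checkbox";
  options?: string[];   // choices read from the image, if any
  confidence: number;   // assumed recognizer score in [0, 1]
}

// Hypothetical protocol consumed by a visual builder.
interface ProtocolField {
  title: string;
  widget: string;
  enum?: string[];
  needsReview: boolean; // true when a person should check this field
}

interface FormProtocol {
  type: "form";
  fields: ProtocolField[];
}

// Convert detections into a protocol, flagging low-confidence items for review.
function toProtocol(items: DetectedItem[], reviewThreshold = 0.8): FormProtocol {
  return {
    type: "form",
    fields: items.map((item) => ({
      title: item.label,
      widget: item.widget,
      ...(item.options ? { enum: item.options } : {}),
      needsReview: item.confidence < reviewThreshold,
    })),
  };
}

const protocol = toProtocol([
  { label: "Name", widget: "input", confidence: 0.95 },
  { label: "City", widget: "select", options: ["Hangzhou", "Beijing"], confidence: 0.6 },
]);
```

Because the protocol is plain data, the builder can render it immediately and the user can still adjust any field by dragging and dropping, as before.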

At the Apsara Conference 2019, Daniel Zhang said, "Big data is the petroleum, and computing power is the engine in the era of the digital economy." Componentization has begun to take shape in the frontend industry, so a large number of components can be used as big data. In the meantime, computing power in the industry is constantly improving. AI has the potential to transform the pattern of frontend development. Let's wait and see.
