Performance Testing Service (PTS) runs Apache JMeter tests at scale in the cloud. Upload your .jmx scripts, configure load parameters, and run distributed performance tests -- without provisioning or managing local infrastructure. PTS handles elastic resource scaling and provides real-time monitoring with auto-generated reports.

Prerequisites

Before you begin, make sure that you have:

Access to the PTS console

A JMeter test plan saved as a .jmx file (the file name must not contain spaces)

(Optional) Supporting files such as CSV data files or JAR plugin files

Step 1: Create a scenario

Log on to the PTS console, choose Performance Test > Create Scenario, and click JMeter.

In the Create a JMeter Scenario dialog box, enter a Scenario Name.

In the Scenario Settings section, upload a JMeter test plan (.jmx file). After the upload completes, PTS automatically resolves and installs required plugins. For details, see Automatic completion of JMeter plugins.

Click Upload File to add supporting files, such as CSV data files or JAR plugin packages. Up to 20 non-JMX files can be uploaded per scenario.

Important: Uploading a file with the same name as an existing file overwrites it. To verify whether a file was overwritten, compare the MD5 hash in the Actions column with the hash of your local file.
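The local side of the MD5 comparison can be computed with a few lines of Python. This is a minimal sketch using only the standard library; the function names `md5_hex` and `matches_console_hash` are illustrative, and the console value is assumed to be a plain hexadecimal MD5 string.

```python
import hashlib
from pathlib import Path


def md5_hex(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading in chunks to bound memory use."""
    digest = hashlib.md5()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_console_hash(path, console_md5):
    """Compare a local file's MD5 with the value copied from the Actions column.

    Assumption: the console shows a hex string; case and surrounding
    whitespace are ignored here.
    """
    return md5_hex(path) == console_md5.strip().lower()
```

If `matches_console_hash` returns `False` after an upload, the file stored in the scenario differs from the local copy.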

ImportantDo not rename an

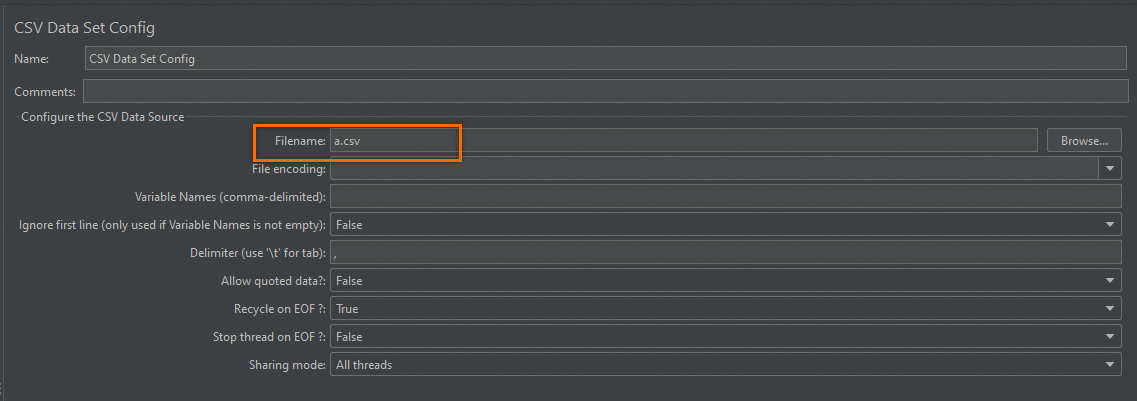

.xlsxfile to.csvby changing the extension. Instead, export to CSV from Excel or Numbers, or generate a CSV file programmatically using a library such as Apache Commons CSV.If a CSV file is referenced in a JMX script, enter only the file name -- not the file path -- in the Filename field of the CSV Data Set Config element. For example, enter

websites.csvinstead of/data/websites.csv. The same rule applies when using the__CSVReadfunction or referencing files in JAR packages.
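As an illustration of generating a CSV file programmatically, the sketch below uses Python's standard `csv` module rather than Apache Commons CSV. The helper names and the sample column names are hypothetical. The second helper shows the file-name-only rule for the Filename field.

```python
import csv
from pathlib import PurePosixPath


def write_csv(path, header, rows):
    """Write rows as real comma-separated text (not a renamed spreadsheet)."""
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        writer.writerows(rows)


def filename_for_jmx(path):
    """Return only the file name, which is what the Filename field expects."""
    return PurePosixPath(path).name


# Example usage (illustrative data):
# write_csv("websites.csv", ["url"], [["https://example.com/"]])
# filename_for_jmx("/data/websites.csv") returns "websites.csv"
```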

File limits:

| File type | Size limit | Notes |
|---|---|---|
| JMX script | 2 MB per file | Multiple JMX files can be uploaded, but only one can be selected per test |
| JAR plugin | 10 MB per file | Debug the JAR in your local JMeter environment before uploading |
| CSV data | 60 MB per file | For larger files, use the OSS data source feature |

If you uploaded multiple JMX files, select the one to use for this test.
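The file limits above can be checked locally before uploading. The following is a rough sketch, not part of PTS. It assumes that 1 MB means 1024 x 1024 bytes and that files are identified by their extension. `check_upload` is a hypothetical helper name.

```python
import os

MB = 1024 * 1024  # assumption: the documented limits are binary megabytes
SIZE_LIMITS = {".jmx": 2 * MB, ".jar": 10 * MB, ".csv": 60 * MB}
MAX_NON_JMX_FILES = 20


def check_upload(files):
    """Return a list of problems for (name, size_in_bytes) pairs; empty if none."""
    problems = []
    non_jmx = 0
    for name, size in files:
        ext = os.path.splitext(name)[1].lower()
        if ext == ".jmx":
            if " " in name:
                problems.append(f"{name}: JMX file name must not contain spaces")
        else:
            non_jmx += 1
        limit = SIZE_LIMITS.get(ext)
        if limit is not None and size > limit:
            problems.append(f"{name}: {size} bytes exceeds the {limit // MB} MB limit")
    if non_jmx > MAX_NON_JMX_FILES:
        problems.append(
            f"{non_jmx} non-JMX files exceeds the limit of {MAX_NON_JMX_FILES}"
        )
    return problems
```

An empty result means none of the documented limits are violated. It does not guarantee that the upload succeeds.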

(Optional) Select Split File for a CSV file to ensure that the data is unique for each load generator. Without this option, every generator uses the same data. For details, see Use CSV parameter files in JMeter.
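To illustrate what unique data per generator means, the sketch below partitions CSV rows into non-overlapping chunks. How PTS actually splits the file is not described here. Contiguous chunking is an assumption made only for this example, and `split_rows` is a hypothetical name.

```python
def split_rows(rows, generators):
    """Partition rows into contiguous, non-overlapping chunks, one per generator.

    Assumption: contiguous chunks; the real PTS split strategy may differ.
    """
    if generators < 1:
        raise ValueError("generators must be at least 1")
    base, extra = divmod(len(rows), generators)
    chunks, start = [], 0
    for index in range(generators):
        # The first `extra` generators receive one additional row.
        size = base + (1 if index < extra else 0)
        chunks.append(rows[start:start + size])
        start += size
    return chunks
```

Without splitting, each generator would read the full `rows` list, so the same value could be used by several generators at once.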

(Optional) Configure distributed adaptation components if your script uses timers or controllers that need coordinated behavior across generators. For details on how these modes distribute load, see Advanced settings. For usage examples, see Constant throughput distributed usage example.

Synchronizing Timer: Set to Global or Single Generator.

Constant Throughput Timer: Set to Global or Single Generator.

Configure the JMeter runtime environment under Use Dependency Environment.

| Option | Behavior |
|---|---|
| Yes | Select an existing managed environment. For details, see JMeter environment management. |
| No | Select a JMeter version. PTS supports Apache JMeter 5.0 with Java 11. |

Step 2: Configure load settings

Set the load model, concurrency level, ramp-up duration, and other parameters.

For details, see Configure load models and levels.

Step 3: Advanced settings (optional)

Advanced settings are disabled by default. When disabled, PTS uses the following defaults:

| Setting | Default value |
|---|---|
| Log sampling rate | 1% |
| DNS cache clear per request cycle | Disabled |
| DNS resolver | Default |
| Distributed timer scope | Single test |

Enable advanced settings to customize these behaviors.

Log sampling rate

The default sampling rate is 1%. To adjust it, enter a value in one of the following ranges:

| Goal | Value range | Example |
|---|---|---|
| Reduce sampling | (0, 1] | 0.5 samples 0.5% of logs |
| Increase sampling | Multiples of 10 in (1, 50] | 20 samples 20% of logs |

Sampling rates above 1% incur additional charges. For example, a 20% rate adds a fee of 20% x VUM. For details, see Pay-as-you-go.
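The two value ranges and the fee example can be expressed as a small check. This is a sketch under stated assumptions: rates are given in percent, the additional charge is `rate% x VUM` as in the 20% example, and both function names are hypothetical. See Pay-as-you-go for the authoritative billing rules.

```python
def is_valid_sampling_rate(rate):
    """Check a rate (in percent) against the two documented ranges."""
    if 0 < rate <= 1:
        return True  # reduced sampling: (0, 1]
    # Increased sampling: multiples of 10 in (1, 50].
    return rate in (10, 20, 30, 40, 50)


def extra_sampling_vum(rate, vum):
    """Additional VUM charged for a rate, following the 20% x VUM example.

    Assumption: rates of 1% or lower incur no additional charge.
    """
    if not is_valid_sampling_rate(rate):
        raise ValueError(f"unsupported sampling rate: {rate}")
    return vum * rate / 100 if rate > 1 else 0.0
```

For example, `extra_sampling_vum(20, 1000)` evaluates to 200.0, which is 20% of 1,000 VUM.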

DNS cache settings

Configure whether to clear the DNS cache on each request. Select either the default DNS resolver or a custom one.

A custom DNS resolver is useful in the following situations:

Internet testing: Bind the public domain name of your service to the IP address of your test environment to isolate test traffic from production traffic.

VPC testing: Bind API domain names to internal Virtual Private Cloud (VPC) addresses. For details, see Performance testing in an Alibaba Cloud VPC.

Distributed adaptation components

If your script includes a Synchronizing Timer or Constant Throughput Timer, configure how each timer distributes load across generators.

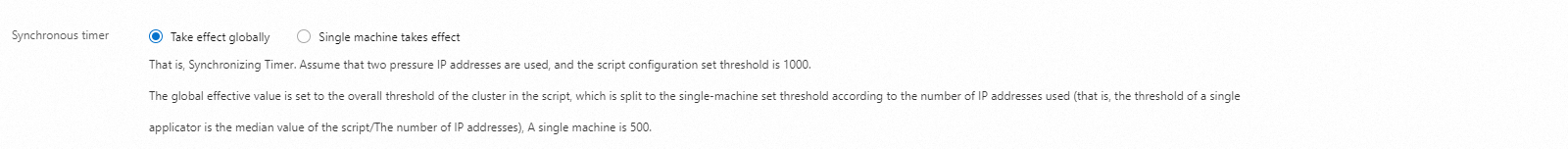

Synchronizing Timer

The mode determines how PTS applies the threshold defined in your script across multiple generators:

Global: Per-generator threshold = script threshold / number of IPs

Single Generator: Each generator uses the full script threshold independently

| Mode | Formula | Example (2 IPs, threshold = 1,000) |
|---|---|---|
| Global | Script threshold / number of IPs | Each generator: 500 |
| Single Generator | Script threshold per generator | Each generator: 1,000 (total: 2,000) |
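The two Synchronizing Timer modes can be written as a short function. This is an illustrative sketch of the formulas in the table, not PTS code. `per_generator_threshold` is a hypothetical name, and the number of IPs is treated as the number of generators.

```python
def per_generator_threshold(script_threshold, generators, mode):
    """Threshold that each generator applies for a Synchronizing Timer."""
    if generators < 1:
        raise ValueError("generators must be at least 1")
    if mode == "Global":
        # The script threshold is shared across all generators.
        return script_threshold / generators
    if mode == "Single Generator":
        # Every generator applies the full script threshold.
        return script_threshold
    raise ValueError(f"unknown mode: {mode}")
```

With 2 generators and a script threshold of 1,000, Global yields 500 per generator, while Single Generator yields 1,000 per generator (2,000 in total).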

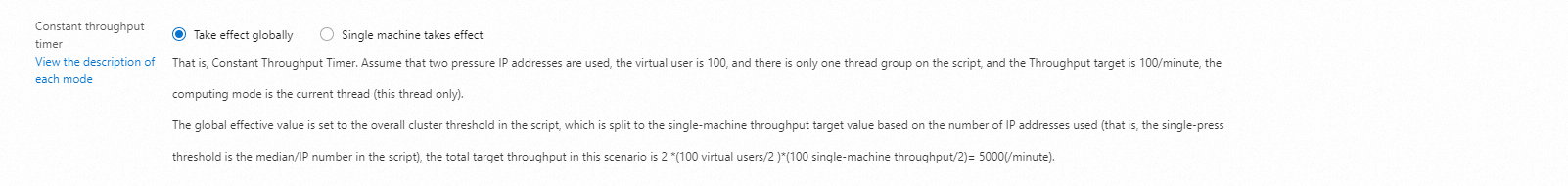

Constant Throughput Timer

The mode determines how PTS distributes the target throughput across generators:

Global: Per-generator throughput = script target / number of IPs

Single Generator: Each generator uses the full script target independently

| Mode | Formula | Example (2 IPs, 100 concurrent users, target = 100 req/min in "This Thread Only" mode) |
|---|---|---|
| Global | (Users / IPs) x (target / IPs) per IP | Total throughput = 2 x (100/2) x (100/2) = 5,000 req/min |
| Single Generator | (Users / IPs) x target per IP | Total throughput = 2 x (100/2) x 100 = 10,000 req/min |
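The Constant Throughput Timer arithmetic in the table can be reproduced as follows. This sketch assumes "This Thread Only" mode, where the target applies per thread, and an even split of users across generators. `total_throughput_per_minute` is a hypothetical name.

```python
def total_throughput_per_minute(users, generators, target, mode):
    """Total requests per minute across all generators ("This Thread Only" mode)."""
    if generators < 1:
        raise ValueError("generators must be at least 1")
    users_per_generator = users / generators  # assumption: even distribution
    if mode == "Global":
        per_thread_target = target / generators
    elif mode == "Single Generator":
        per_thread_target = target
    else:
        raise ValueError(f"unknown mode: {mode}")
    return generators * users_per_generator * per_thread_target
```

For 100 users on 2 generators with a target of 100 requests per minute, the function gives 5,000 in Global mode and 10,000 in Single Generator mode, matching the table.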

Step 4: Add monitoring and metadata (optional)

Both features are disabled by default.

| Feature | Description |
|---|---|
| Cloud resource monitoring | View Elastic Compute Service (ECS), Server Load Balancer (SLB), ApsaraDB RDS, and Application Real-Time Monitoring Service (ARMS) metrics in the test report. |
| Additional information | Add a test owner and remarks for tracking purposes. |

Step 5: Start the test

Click Debug Scenario to validate network connectivity, plugin integrity, and script configuration before running at full load. For details, see Debug scenarios.

Click Save and Start. On the Tips page, select Execute Now, check The test is permitted and complies with the applicable laws and regulations, and click Start.

(Optional) Monitor performance data and adjust test speed during the run.

Real-time metrics

| Metric | Description |
|---|---|
| Real-time VUM | Total resources consumed. Unit: Virtual User per Minute (VUM). |
| Request Success Rate (%) | Percentage of successful requests across all agents. |
| Average RT | Average response time (RT) in milliseconds for successful and failed requests, shown separately. |
| TPS | Total requests in a sampling period divided by the period duration. |
| Number of Exceptions | Count of failed requests due to connection timeouts, request timeouts, and other errors. |
| Traffic (request/response) | Bandwidth consumed for sending requests and receiving responses. |
| Concurrency (current/maximum) | Current versus configured maximum concurrency. If the configured maximum concurrency is not reached during the warm-up phase, the current concurrency is used as the configured concurrency after warm-up completes. Click Speed Regulation to adjust concurrency during the test. |
| Total Requests | Cumulative number of requests sent during the test. |

Monitoring data is aggregated from the Backend Listener with a 15-second sampling period. Expect slight data latency.
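As a worked example of the TPS and success-rate definitions, the sketch below computes both values for one 15-second sampling period. The function names are illustrative, and returning 0.0 for a period with no requests is an assumption made for this example.

```python
SAMPLING_PERIOD_SECONDS = 15  # sampling period stated above


def tps(total_requests, period_seconds=SAMPLING_PERIOD_SECONDS):
    """Total requests in a sampling period divided by the period duration."""
    return total_requests / period_seconds


def success_rate(successes, total):
    """Percentage of successful requests; 0.0 when no requests were sent."""
    return 100.0 * successes / total if total else 0.0
```

For instance, 3,000 requests in one sampling period correspond to a TPS of 200, and 297 successes out of 300 requests give a success rate of 99%.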

Test details

Test Details: Click View Chart next to a request to see real-time TPS, success rate, response time, and traffic charts.

Load Generator Performance: View CPU utilization, Load 5, memory utilization, and network traffic for each generator.

Cloud Resource Monitoring: If enabled, view ECS, SLB, RDS, and ARMS metrics per resource.

Sampling logs

On the Sampling Logs tab, filter logs by Sampler, response status, or response time range.

Click View Details in the Actions column to open the Log Details dialog box. On the General tab, view log fields in either Common or HTTP format.

If Embedded Resources from HTML Files is configured in your JMX script, a Sub-request Details tab appears in the log details. Select a sub-request to filter its logs.

Timing Waterfall: Shows time breakdown for all requests and sub-requests.

Trace View: Shows upstream and downstream traces of the tested API.

Step 6: View the test report

After the test stops, PTS automatically generates a report with aggregated metrics and trend charts.

In the PTS console, choose Performance Test > Reports.

On the Report List page, select a scenario, find your report, and click View Report. For details, see View JMeter test reports.

Each data point in the trend chart covers a 15-second sampling period. Report data is retained for up to 30 days. Export the report if you need to keep it longer.

Export a test report (optional)

On the Report Details page, click Export Report.

Select Watermark Version or No Watermark Version to download the report as a PDF.

Sample scenarios

JMeter performance testing on PTS is suitable for the following scenarios:

High-concurrency distributed testing that requires elastic scaling beyond local infrastructure.

Real-time high-precision monitoring during testing, with auto-generated reports after the test completes.

Centralized management of JMeter scripts and dependency files across test environments.