Build and deploy a RAG application in LangStudio to enhance LLM responses with private knowledge bases for finance and healthcare.

Overview

Retrieval-augmented generation (RAG) combines information retrieval with generative AI to deliver accurate and contextually relevant answers. In specialized fields like finance and healthcare, general-purpose generative models often lack domain-specific knowledge and can answer inaccurately. RAG addresses this by retrieving relevant information from private knowledge bases before generating a response.
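The retrieve-then-generate idea can be sketched in a few lines of Python. This is a toy illustration only, not how LangStudio implements retrieval: the `embed` function is a bag-of-words stand-in for a real embedding model, and the function names and sample corpus are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, corpus: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k corpus entries most similar to the question."""
    q = embed(question)
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, corpus: list[str], top_k: int = 2) -> str:
    """Retrieve first, then place the retrieved text ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, corpus, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical mini knowledge base mixing healthcare and finance entries.
corpus = [
    "Hypertension is a condition in which blood pressure is persistently elevated.",
    "A bond is a fixed-income instrument that represents a loan to a borrower.",
    "Diabetes is a disease in which blood glucose levels are too high.",
]
prompt = build_prompt("What is hypertension?", corpus, top_k=1)
print(prompt)
```

The prompt that reaches the LLM already contains the most relevant private text, which is what lets the model answer domain questions it was never trained on.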

Prerequisites

- LangStudio supports Faiss or Milvus as the vector database. To use Milvus, first create a Milvus database.

  Note: Use Faiss for staging environments (no database setup required). Use Milvus for production environments (supports larger data volumes).

- The RAG knowledge base corpus has been uploaded to OSS. The following sample corpora are available for finance and healthcare scenarios:

  - Financial news: PDF format, primarily news reports from public news websites.

  - Disease introductions: CSV format, primarily disease introductions from Wikipedia.

Deploy LLM and embedding model (optional)

The RAG application flow requires an LLM service and an embedding model service. Skip this step if you already have model services that support the OpenAI API.

Go to QuickStart > Model Gallery and deploy models for the following scenarios. For more information, see Model deployment and training.

Select an instruction-tuned LLM. Base models cannot correctly follow user instructions to answer questions.

- Set Scenario to AIGC > large-language-model and deploy the DeepSeek-R1-Distill-Qwen-7B model as an example.

- Set Scenario to NLP > embedding and deploy the bge-m3 embedding model as an example.
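To make "supports the OpenAI API" concrete, the sketch below builds the two request shapes that the OpenAI API defines for chat completions and embeddings. It only constructs the URL and JSON body and sends nothing. The base URL and model names are placeholders, and whether a given deployed service exposes exactly these `/v1/...` paths is an assumption to verify against the service's own documentation.

```python
import json


def chat_request(base_url: str, model: str, question: str) -> tuple[str, str]:
    """Return (url, JSON body) for an OpenAI-style chat completion call."""
    url = base_url.rstrip("/") + "/v1/chat/completions"
    body = {"model": model, "messages": [{"role": "user", "content": question}]}
    return url, json.dumps(body)


def embedding_request(base_url: str, model: str, texts: list[str]) -> tuple[str, str]:
    """Return (url, JSON body) for an OpenAI-style embeddings call."""
    url = base_url.rstrip("/") + "/v1/embeddings"
    body = {"model": model, "input": texts}
    return url, json.dumps(body)


# Placeholder endpoint and model names, for illustration only.
llm_url, llm_body = chat_request(
    "https://llm.example.invalid", "my-llm-model", "What is a bond?"
)
emb_url, emb_body = embedding_request(
    "https://embedding.example.invalid", "my-embedding-model", ["What is a bond?"]
)
print(llm_url, llm_body)
print(emb_url, emb_body)
```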

Create connections

LLM and embedding model service connections in this topic are based on model services (EAS services) deployed in QuickStart > Model Gallery. For other connection types, see Connection configuration.

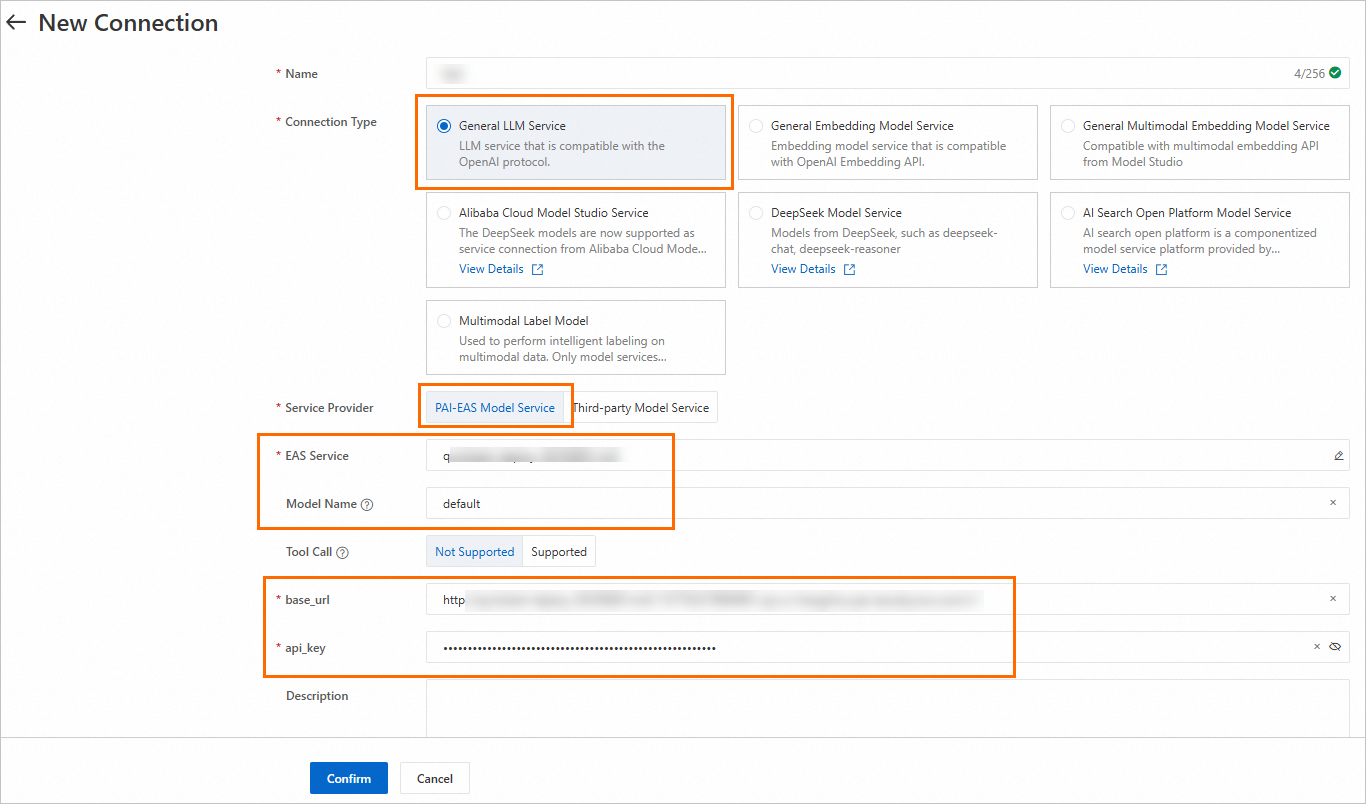

Create LLM service connection

Go to LangStudio, select a workspace, and then on the Model Service tab of the Service Connection Configuration page, click New Connection to create a general-purpose LLM model service connection.

Key parameters:

| Parameter | Description |
| --- | --- |
| Model Name | When deploying a model from Model Gallery, find the method to obtain model name on the model's product page. To access the product page, click the model card on the Model Gallery page. For more information, see Create a model service connection. |
| Service Provider | |

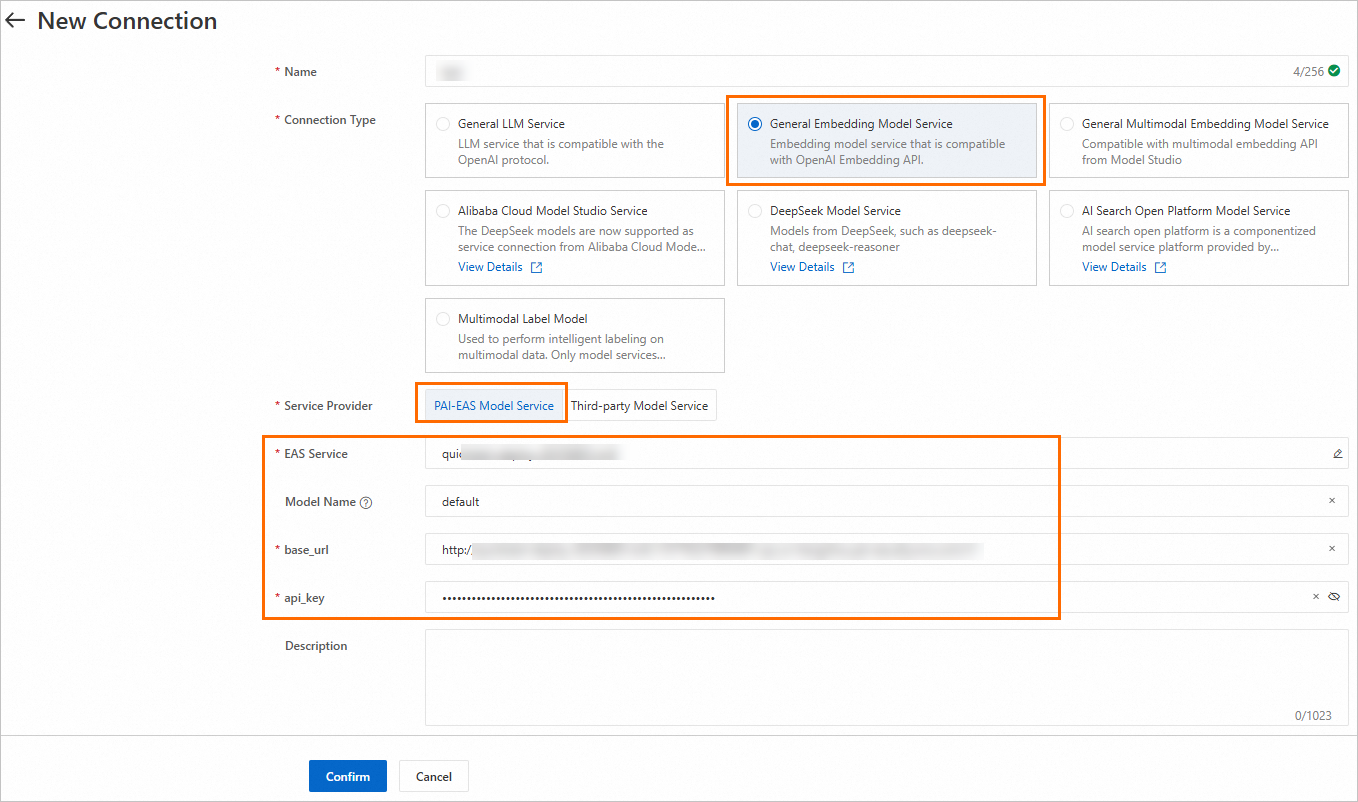

Create embedding model service connection

Create a general-purpose embedding model service connection in the same way as the LLM service connection.

Create vector database connection

On the Database tab of the Service Connection Configuration page, click New Connection to create a Milvus database connection.

Key parameters:

| Parameter | Description |
| --- | --- |
| uri | Milvus instance endpoint. Format: |
| token | Username and password to log on to Milvus instance. Format: |
| database | Database name. The following example uses the default database |

Create knowledge base index

Create a knowledge base index to parse, chunk, and vectorize the corpus. Results are stored in the vector database to build the knowledge base. The following table describes key parameters. For other configurations, see Manage knowledge bases.

| Parameter | Description |
| --- | --- |
| Basic Configurations | |
| Data Source OSS Path | OSS path of RAG knowledge base corpus from the Prerequisites section. |
| Output OSS Path | Path to store intermediate results and index information generated from document parsing. Important: When using Faiss as vector database, the application flow saves the generated manifest to OSS. If using the default PAI role (the instance RAM role set when starting runtime for application flow development), the application flow can access the default storage bucket of your workspace. Set this parameter to any directory in the OSS bucket where workspace default storage path is located. If using a custom role, grant the custom role access permissions to OSS. We recommend granting AliyunOSSFullAccess permission. For more information, see Manage the permissions of a RAM role. |
| Embedding Model and Database | |
| Embedding Type | Select General Embedding Model. |
| Embedding Connection | Select the embedding model service connection created in Create embedding model service connection. |
| Vector Database Type | Select Vector Database Milvus. |
| Vector Database Connection | Select the Milvus database connection created in Create vector database connection. |
| Table Name | Milvus database collection created in the Prerequisites section. |
| VPC Configuration | |
| VPC Configuration | Ensure the configured VPC is the same as Milvus instance VPC, or that the selected VPC is connected to the VPC where Milvus instance is located. |
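The chunking step that the index performs before vectorization can be pictured with a small Python sketch. This is a generic fixed-size chunker with overlap, shown under assumptions: LangStudio's actual chunking strategy is not described here, and `chunk_size` and `overlap` are hypothetical parameters rather than LangStudio settings.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap their neighbors.

    Overlap keeps a sentence that straddles a chunk boundary visible in
    both neighboring chunks, so retrieval can still match it.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
    return chunks


sample = "abcdefghij"
print(chunk_text(sample, chunk_size=4, overlap=2))
```

Each chunk would then be sent to the embedding model, and the resulting vectors stored in the vector database alongside the chunk text.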

Create and run RAG application flow

- Go to LangStudio, select a workspace, and then on the Application Flow tab, click New Application Flow to create a RAG application flow.

- Start runtime: In the upper-right corner, click Create Runtime and configure parameters. Note: Start the runtime before parsing Python nodes or viewing more tools.

  Key parameters:

  VPC Configuration: Select the VPC used when creating the Milvus instance in the Prerequisites section, or ensure the selected VPC is connected to the VPC where the Milvus instance is located.

- Develop the application flow.

  Keep default settings for other configurations in the application flow or configure as needed. Key node configurations:

  - Knowledge Base Retrieval: Retrieves text relevant to the user's question from the knowledge base.

    - Knowledge Base Index Name: Select the knowledge base index created in Create knowledge base index.

    - Top K: Number of best-matching entries to return from the knowledge base.

  - LLM Node: Uses the retrieved documents as context and sends them with the user's question to the LLM to generate a response.

    - Model Settings: Select the connection created in Create LLM service connection.

    - Chat History: Enable chat history to use historical conversation information as an input variable.

  For more information about each node component, see Descriptions of pre-built components.

- Debug/Run: In the upper-right corner, click Run to execute the application flow. For common issues that may occur when running the application flow, see the FAQ.

- View link: Below the generated answer, click View Link to view the trace details or topology.

Deploy application flow

On the application flow development page, click Deploy in the upper-right corner to deploy the application flow as an EAS service. Keep default settings for other deployment parameters or configure as needed. Key parameter configurations:

- Resource Deployment > Number of Instances: Configure the number of service instances. This deployment is for testing only, so set instances to 1. In production, configure multiple service instances to reduce single point of failure risk.

- VPC > VPC: Select the VPC where Milvus instance is located, or ensure the selected VPC is connected to the VPC where Milvus instance is located.

For more information about deployment, see Deploy an application flow.

Call service

After deployment succeeds, you are redirected to the PAI-EAS page. On the Online Debugging tab, configure and send a request. The key in the request body must match the "Chat Input" field in the "Start" node of the application flow. The following example uses the default field question.
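A request body with the default field can be produced with a short Python sketch like the one below. The sample question and the `build_request_body` helper are hypothetical; only the `question` key comes from the text above, and it should be changed if the "Chat Input" field in the "Start" node has a different name.

```python
import json

# Default "Chat Input" field name of the "Start" node, per the text above.
CHAT_INPUT_FIELD = "question"


def build_request_body(user_input: str, field: str = CHAT_INPUT_FIELD) -> str:
    """Return a JSON request body whose key matches the Chat Input field."""
    return json.dumps({field: user_input}, ensure_ascii=False)


body = build_request_body("What are the common symptoms of diabetes?")
print(body)
```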

For other ways to call the service, such as using an API, see Call a service.