Select, deploy, and fine-tune pre-trained models from Model Gallery using domain filters, resource configurations, and training parameters.

Select a model

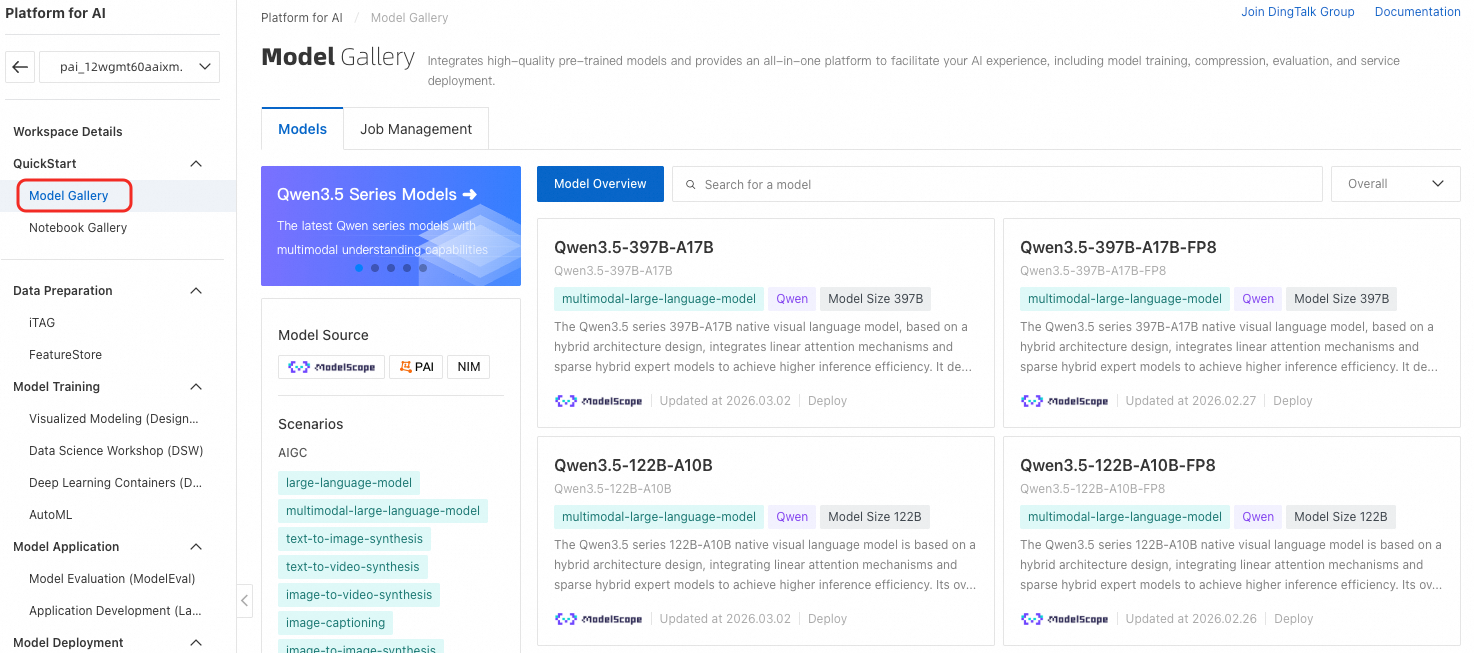

Model Gallery provides models for a variety of business scenarios. Consider the following factors when selecting a model:

- Domain and task: Filter models by application domain and task type to match your requirements.
- Pre-training dataset: A model generally performs better when its pre-training data resembles your use case. Check the dataset details on each model's product page.
- Model size: Larger models typically perform better but require more compute resources, such as GPU memory, for deployment and fine-tuning.
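The three selection factors above can be combined into a simple shortlist. The sketch below assumes a hypothetical, simplified catalog record (`ModelCard`) and a hypothetical `shortlist` helper; it is not a PAI API, and the model names and sizes are illustrative only. It filters by domain and task, drops models above a size budget, and ranks the rest largest first, reflecting the trade-off that larger models usually perform better but cost more to run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCard:
    # Hypothetical, simplified view of a Model Gallery entry; not a PAI API type.
    name: str
    domain: str
    task: str
    params_billions: float


def shortlist(catalog, domain, task, max_params_billions):
    """Keep models matching the domain and task whose size fits the budget,
    largest first (larger models typically perform better)."""
    matches = [
        m for m in catalog
        if m.domain == domain
        and m.task == task
        and m.params_billions <= max_params_billions
    ]
    return sorted(matches, key=lambda m: m.params_billions, reverse=True)


# Illustrative catalog; names and sizes are made up for this example.
catalog = [
    ModelCard("model-a", "nlp", "text-generation", 0.6),
    ModelCard("model-b", "nlp", "text-generation", 7.0),
    ModelCard("model-c", "cv", "image-classification", 0.1),
    ModelCard("model-d", "nlp", "text-generation", 72.0),
]

for m in shortlist(catalog, "nlp", "text-generation", max_params_billions=8):
    print(m.name, m.params_billions)  # model-b 7.0, then model-a 0.6
```

Ranking by size is only one choice; you could equally rank by how closely each model's pre-training data matches your use case.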

To open Model Gallery and select a model:

- Navigate to Model Gallery:
  - Log on to the PAI console.
  - In the left-side navigation pane, click Workspaces and select your workspace.
  - In the left-side navigation pane, click QuickStart > Model Gallery.
- Select a model that matches your requirements.

After selecting a model, you can deploy it, test inference, and debug it online, or fine-tune it on your own data. See Deploy a model and Fine-tune a model.

Deploy a model

For a deployment example that uses Qwen3-0.6B, see Model Gallery Getting Started - Model Deployment.
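When choosing a resource configuration for deployment, a rough memory estimate helps rule out instance types that are too small. The sketch below applies a common rule of thumb (parameter count × bytes per parameter); the function name is hypothetical, and the `overhead` multiplier for activations and KV cache is an assumed allowance, not a PAI-documented value. Qwen3-0.6B is treated as roughly 0.6 billion parameters, as its name indicates.

```python
# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "int8": 1}


def estimate_weight_memory_gib(num_params: float, dtype: str = "bf16",
                               overhead: float = 1.2) -> float:
    """Rough accelerator-memory estimate in GiB: weights x bytes/param x overhead.

    `overhead` is an ASSUMED allowance for activations and KV cache;
    real usage depends on batch size, sequence length, and the serving engine.
    """
    if dtype not in BYTES_PER_PARAM:
        raise ValueError(f"unknown dtype: {dtype}")
    if overhead < 1.0:
        raise ValueError("overhead must be >= 1.0")
    return num_params * BYTES_PER_PARAM[dtype] * overhead / 2**30


# Qwen3-0.6B in bf16 with the assumed 1.2x overhead.
print(f"{estimate_weight_memory_gib(0.6e9):.2f} GiB")  # 1.34 GiB
```

Treat the result as a lower bound when comparing instance types; long contexts or large batches can need considerably more memory.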

Fine-tune a model

For a fine-tuning example that uses Qwen3-0.6B, see Model Gallery Getting Started - Model Fine-tuning.

The fine-tuning configuration provides a set of training parameters. Available parameters vary by model, so configure them according to the requirements of the specific model you selected.
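Because each model exposes its own set of parameters, it can help to check a planned configuration against that model's parameter list before submitting a job. The sketch below assumes a hypothetical schema format and hypothetical parameter names (`learning_rate`, `num_train_epochs`, `per_device_train_batch_size`); the real names and ranges are those shown on the fine-tuning page of your model.

```python
from typing import Any

# HYPOTHETICAL schema: parameter name -> (expected type, min, max).
# Real parameter names and ranges differ per model; read them from the
# fine-tuning page of the model you selected.
HYPOTHETICAL_SCHEMA: dict[str, tuple[type, float, float]] = {
    "learning_rate": (float, 0.0, 1.0),
    "num_train_epochs": (int, 1, 100),
    "per_device_train_batch_size": (int, 1, 1024),
}


def validate(params: dict[str, Any],
             schema: dict[str, tuple[type, float, float]] = HYPOTHETICAL_SCHEMA) -> list[str]:
    """Return a list of problems; an empty list means every value is accepted."""
    errors: list[str] = []
    for name, value in params.items():
        if name not in schema:
            errors.append(f"{name}: not supported by this model")
            continue
        expected, low, high = schema[name]
        # Accept ints for float fields, but reject bools (bool subclasses int).
        type_ok = isinstance(value, expected) or (expected is float and isinstance(value, int))
        if isinstance(value, bool) or not type_ok:
            errors.append(f"{name}: expected {expected.__name__}")
        elif not low <= value <= high:
            errors.append(f"{name}: {value} outside [{low}, {high}]")
    return errors


print(validate({"learning_rate": 1e-4, "num_train_epochs": 3}))  # []
print(validate({"num_train_epochs": 0, "lora_rank": 8}))
# ['num_train_epochs: 0 outside [1, 100]', 'lora_rank: not supported by this model']
```

Keeping the schema as data rather than code means swapping to another model only requires a different dictionary.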

Billing

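Model Gallery itself is free of charge. You are billed for the Elastic Algorithm Service (EAS) and Deep Learning Containers (DLC) resources that you use for model deployment and training. For details, see Billing for Elastic Algorithm Service (EAS) and Billing for Deep Learning Containers (DLC).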
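To budget a deploy-and-fine-tune workflow, you can sum the resource usage of each service. The sketch below assumes simple pay-as-you-go pricing per instance-hour; the `ResourceUsage` type and every price in it are hypothetical placeholders, so take actual rates and billing rules from the EAS and DLC billing pages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResourceUsage:
    service: str         # "EAS" (deployment) or "DLC" (training)
    hourly_price: float  # HYPOTHETICAL price per instance-hour; look up real rates
    instances: int
    hours: float


def estimate_cost(usages: list[ResourceUsage]) -> float:
    """Sum of price x instances x hours. Model Gallery itself adds nothing."""
    for u in usages:
        if u.service not in ("EAS", "DLC"):
            raise ValueError(f"unexpected service: {u.service}")
    return sum(u.hourly_price * u.instances * u.hours for u in usages)


usages = [
    ResourceUsage("DLC", hourly_price=2.0, instances=1, hours=3.0),   # fine-tuning job
    ResourceUsage("EAS", hourly_price=1.5, instances=2, hours=10.0),  # online inference service
]
print(estimate_cost(usages))  # 36.0
```

A long-running EAS service usually dominates the total compared with a short DLC training job, so scaling down or stopping idle services is where most savings come from.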

When a fine-tuning parameter takes an OSS path, click the selection icon next to it to choose the path. In the Select OSS folder or file dialog box, select an existing file or click Upload file to upload one. For dataset parameters, you can instead select an existing dataset. To create datasets, see the dataset documentation.