This topic describes how to deploy and start Flink Python stream and batch jobs in Realtime Compute for Apache Flink.

By the end of this guide, you will have a running word-frequency counter that reads Shakespeare text, processes it with a Flink Python job, and writes results to Object Storage Service (OSS) — covering both stream and batch deployment modes.

Prerequisites

Before you begin, make sure you have:

The required permissions to access the Flink console. If you use a Resource Access Management (RAM) user or a RAM role, see Permission management.

A Flink workspace. See Activate Realtime Compute for Apache Flink.

Step 1: Prepare the Python code file

Realtime Compute for Apache Flink does not provide a Python development environment. Develop your job locally before uploading it.

The Flink version you use for local development must match the engine version you select in Step 3. For guidance on local development, debugging, and connectors, see Python job development. For dependencies such as custom Python virtual environments, third-party Python packages, JAR packages, and data files, see Use Python dependencies.

This guide uses a sample word-frequency counter job. Download the files below — you'll upload them in Step 2.

Python job files (choose based on your deployment mode):

Stream job: word_count_streaming.py

Batch job: word_count_batch.py

Data file: Shakespeare
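To sanity-check the results you will see later, the word-frequency logic can be approximated locally without Flink. The following is a minimal plain-Python sketch, not the contents of word_count_streaming.py or word_count_batch.py. The function names, the --top option, and the tokenization rule are assumptions made for illustration, and the sample jobs may split words differently.

```python
import argparse
import re
from collections import Counter


def count_words(lines):
    """Count lower-cased words across an iterable of text lines."""
    counts = Counter()
    for line in lines:
        # Treat runs of letters and apostrophes as words (an assumption;
        # the sample job may tokenize differently).
        counts.update(re.findall(r"[a-z']+", line.lower()))
    return counts


def main(argv=None):
    """Print the most frequent words in the local file passed via --input."""
    parser = argparse.ArgumentParser(description="Local word-frequency check")
    parser.add_argument("--input", required=True, help="path to a local text file")
    parser.add_argument("--top", type=int, default=10, help="number of words to print")
    args = parser.parse_args(argv)
    with open(args.input, encoding="utf-8") as text_file:
        counts = count_words(text_file)
    for word, frequency in counts.most_common(args.top):
        print(f"{word}\t{frequency}")
```

For example, calling main(["--input", "Shakespeare"]) from the folder that holds the downloaded data file prints the ten most frequent words, which you can compare loosely against the job output.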

Step 2: Upload the Python file and data file

Log on to the Realtime Compute for Apache Flink console.

Click Console in the Actions column for your workspace.

In the left navigation pane, click File Management.

Click Upload Resource and upload both the Python file and the data file you downloaded in Step 1. For details on file storage paths, see File management.

Step 3: Deploy the Python job

Stream job

On the Job O&M page of the Operation Center, click Deploy Job > Python Job.

Enter the deployment information. For the full list of configuration parameters, see Deploy a job.

Important: Jobs deployed to a session cluster do not support monitoring and alerts (or data curves), monitoring and alert configuration, or auto tuning. Use session clusters for development and testing only. See Job debugging.

| Parameter | Description | Example |
| --- | --- | --- |
| Deployment Mode | Select Stream. | Stream |
| Deployment Name | A name for the Python job. | flink-streaming-test-python |
| Engine Version | The Flink engine version for the job. Use a version tagged Recommended or Stable for better reliability. See Release notes and Engine versions. | vvr-8.0.9-flink-1.17 |
| Python File Path | Select the word_count_streaming.py file you uploaded. If it already exists in File Management, select it directly without uploading again. | - |
| Entry Module | The entry class of the program. Leave blank for .py files. For .zip files, enter the module name, such as word_count. | Not required |
| Entry Point Main Arguments | The input parameters passed to the main method. Enter the OSS path of the Shakespeare data file. Copy the full path from File Management. | --input oss://<your-oss-bucket-name>/artifacts/namespaces/<project-name>/Shakespeare |
| Deployment Target | Select a resource queue or a session cluster (not for production). See Manage resource queues and Create a session cluster. | default-queue |

Click Deploy.

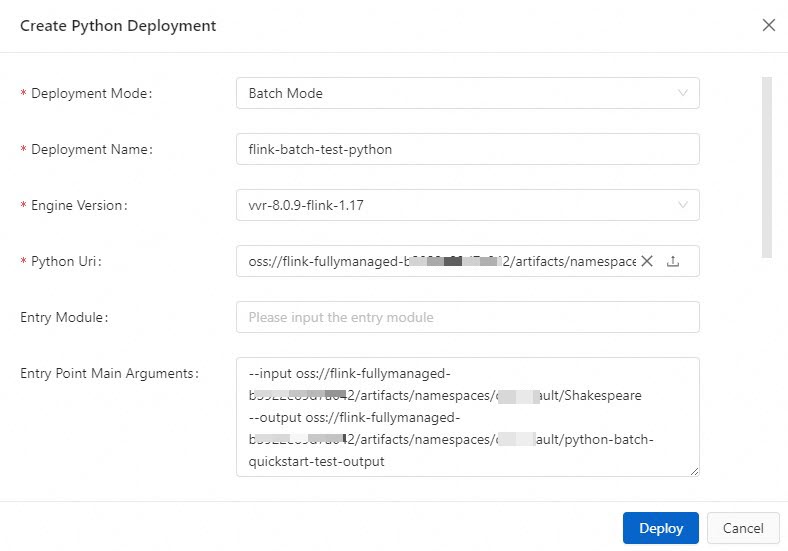

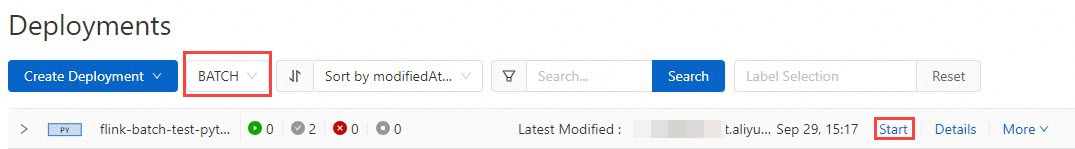

Batch job

On the Job O&M page of the Operation Center, click Deploy Job > Python Job.

Enter the deployment information. For the full list of configuration parameters, see Deploy a job.

Important: Jobs deployed to a session cluster do not support monitoring and alerts, monitoring and alert configuration, or auto tuning. Use session clusters for development and testing only. See Job debugging.

| Parameter | Description | Example |
| --- | --- | --- |
| Deployment Mode | Select Batch. | Batch |
| Deployment Name | A name for the Python job. | flink-batch-test-python |
| Engine Version | The Flink engine version for the job. Use a version tagged Recommended or Stable for better reliability. See Release notes and Engine versions. | vvr-8.0.9-flink-1.17 |
| Python File Path | Select the word_count_batch.py file you uploaded. | - |
| Entry Module | The entry class of the program. Leave blank for .py files. For .zip files, enter the module name, such as word_count. | Not required |
| Entry Point Main Arguments | The input and output paths for the job. Copy the Shakespeare file path from File Management. Specify only the output directory name; the directory is created automatically. The output directory's parent must be the same as the input file's parent. | --input oss://<your-oss-bucket-name>/artifacts/namespaces/<project-name>/Shakespeare --output oss://<your-oss-bucket-name>/artifacts/namespaces/<project-name>/python-batch-quickstart-test-output |
| Deployment Target | Select a resource queue or a session cluster (not for production). See Manage resource queues and Create a session cluster. | default-queue |

Click Deploy.
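The Entry Point Main Arguments rule for the batch job (the output directory must sit next to the input file) is easy to get wrong when pasting paths by hand. The following minimal sketch derives the --output path from the --input path so that both share the same parent. The helper name build_batch_arguments is hypothetical and is not part of Flink or the console, and the bucket and project names in the usage note are placeholders.

```python
OSS_PREFIX = "oss://"


def build_batch_arguments(input_uri, output_dir_name):
    """Return an '--input ... --output ...' string whose output directory
    shares its parent with the input file (hypothetical helper)."""
    if "/" in output_dir_name or not output_dir_name:
        raise ValueError("pass only the output directory name, not a path")
    # Split 'oss://bucket/some/dir/File' into its parent and the file name.
    parent, _, file_name = input_uri.rpartition("/")
    if not parent.startswith(OSS_PREFIX) or len(parent) <= len(OSS_PREFIX) or not file_name:
        raise ValueError(f"expected oss://<bucket>/<path>/<file>, got {input_uri!r}")
    output_uri = f"{parent}/{output_dir_name}"
    return f"--input {input_uri} --output {output_uri}"
```

For example, passing oss://my-bucket/artifacts/namespaces/my-project/Shakespeare and python-batch-quickstart-test-output returns a string that you can paste into the Entry Point Main Arguments field.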

Step 4: Start the Python job and view results

Stream job

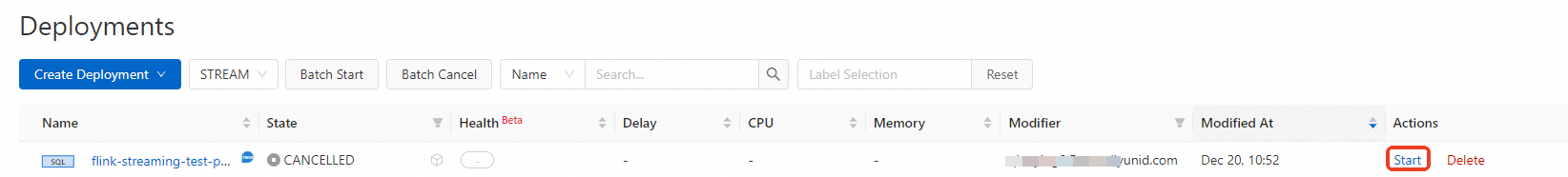

On the Operation Center > Job O&M page, find the target job and click Start in the Actions column.

Select Stateless Start and click Start. For details, see Start a job. After the job starts, its status changes to Running or Finished. For this sample job, the final status is Finished.

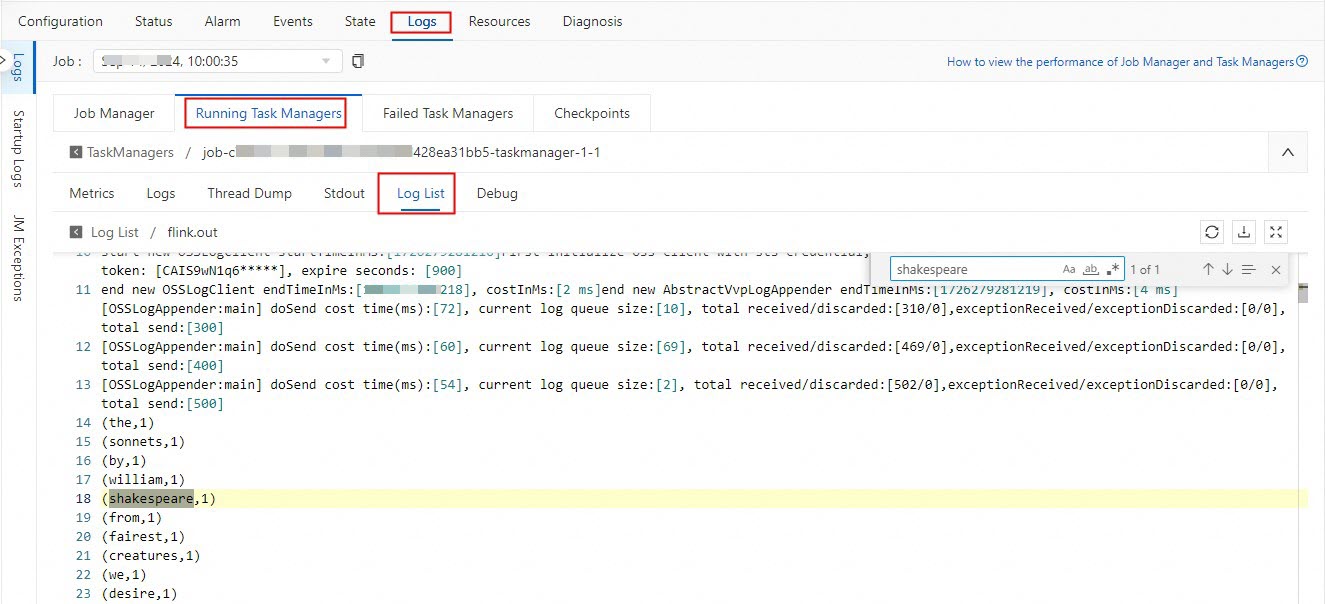

View the compute results while the job status is Running. In the TaskManager, search for shakespeare in the log file ending with .out to view the Flink compute results.

Important: For this sample stream job, results are deleted when the status changes to Finished. View results only when the status is Running.

Batch job

On the Operation Center > Job O&M page, find the target job and click Start.

In the Start Job dialog box, click Start. For details, see Start a job.

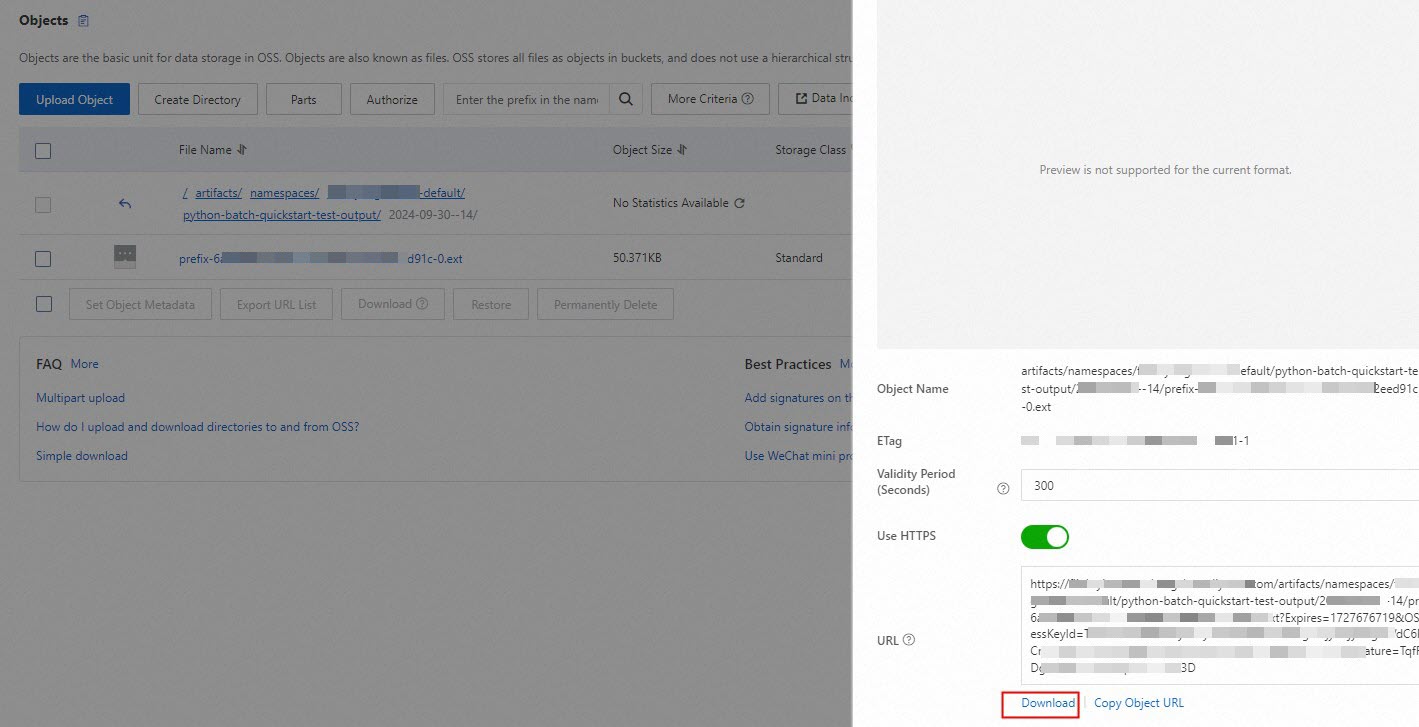

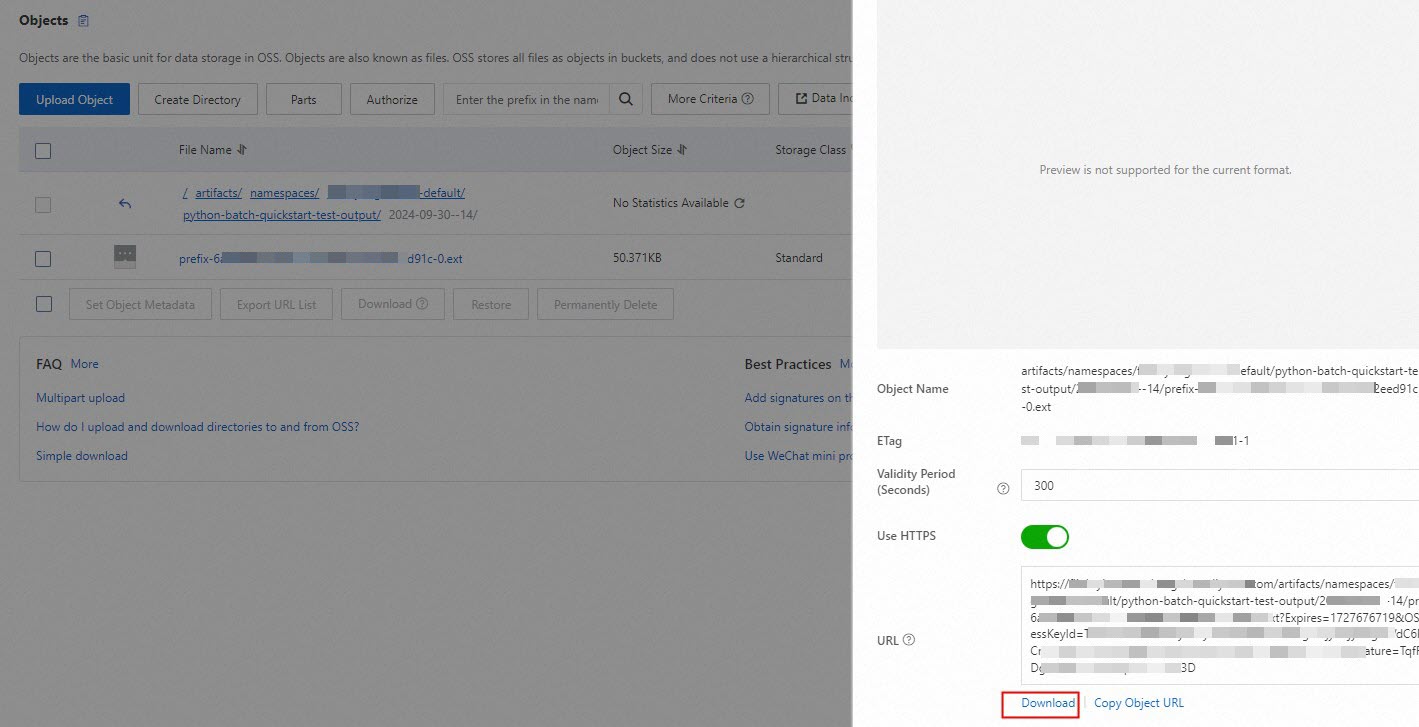

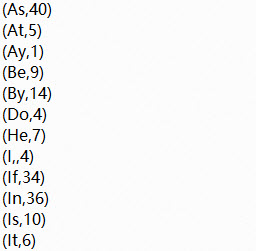

After the job status changes to Finished, retrieve the results from OSS. Log on to the OSS console. Navigate to oss://<your-oss-bucket-name>/artifacts/namespaces/<project-name>/python-batch-quickstart-test-output, open the subfolder named with the job's start date and time, click the object file, and then click Download. The result is an .ext file. Open it with a text editor or Microsoft Office Word to view the word-frequency output.

(Optional) Step 5: Terminate the job

Stop and restart a job when any of the following apply:

You modified the code or changed the job version.

You added or removed WITH parameters.

The job cannot reuse its state.

You are starting a new job.

You updated parameters that do not take effect dynamically.

After modifying a job, redeploy it before restarting. For details, see Stop a job.

What's next

Configure resources: Set job resources before or after the job starts. Two modes are available: basic mode (coarse-grained) and expert mode (fine-grained). See Configure job resources.

Update parameters without restarts: Realtime Compute for Apache Flink supports dynamic updates of Flink job parameters, reducing business interruptions. See Dynamic scaling and parameter updates.

Configure log outputs: Set log levels and specify separate outputs per level. See Configure job log outputs.

Try an SQL job: See Quick start for Flink SQL jobs.

Build data pipelines: Build a real-time data warehouse with Hologres or build a streaming data lakehouse with Paimon and StarRocks.