Before creating a cluster, plan your VPC layout, CIDR block allocation, and CNI plug-in selection. Getting these right upfront prevents IP conflicts, avoids hard-to-fix topology mistakes, and reserves enough capacity for future growth. Three settings cannot be changed after cluster creation: the CNI plug-in, the Service CIDR block, and the container CIDR block (Flannel only).

Network scale planning

Region and zone

VPC count

vSwitch count

Cluster size

Network connectivity planning

CNI plug-in planning

ACK managed clusters support two container network interface (CNI) plug-ins: Terway and Flannel. The CNI plug-in is set at cluster creation and cannot be changed afterward. Your choice determines which network features are available and how CIDR blocks are configured.

Choose a CNI plug-in

Use this table to select the plug-in that fits your requirements:

| | Terway | Flannel |
|---|---|---|
| Best for | Workloads requiring advanced network features: NetworkPolicy, fixed pod IPs, pod-bound elastic IP addresses (EIPs), or inter-cluster access | Simpler setups where these features are not needed |
| Pod IP source | IPs assigned from VPC vSwitches | IPs assigned from a virtual CIDR block |
| IP pool size | Limited by vSwitch CIDR block size | Up to 65,536 pod IPs with a /16 container CIDR block |
| IPv6 | Supported | Not supported |
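The pool sizes in the table follow directly from the prefix length. As a minimal sketch using Python's standard `ipaddress` module, the helper below (`address_count` is an illustrative name, not part of any ACK tooling) returns the raw number of addresses in a block. It does not subtract any addresses the platform may reserve, so treat the result as an upper bound.

```python
import ipaddress


def address_count(cidr: str) -> int:
    """Return the total number of addresses in a CIDR block (no reservations subtracted)."""
    return ipaddress.ip_network(cidr).num_addresses


# CIDR blocks taken from the configuration examples in this topic.
for cidr in ("172.20.0.0/16", "192.168.32.0/19", "172.21.0.0/20"):
    print(f"{cidr}: {address_count(cidr):,} addresses")
# 172.20.0.0/16: 65,536 addresses
# 192.168.32.0/19: 8,192 addresses
# 172.21.0.0/20: 4,096 addresses
```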

Feature comparison

| Feature | Terway | Flannel |
|---|---|---|
| NetworkPolicy | Supported | Not supported |
| IPv4/IPv6 dual-stack | Supported | Not supported |
| Fixed pod IP | Supported | Not supported |
| Pod-bound EIP | Supported | Not supported |
| Inter-cluster access | Supported (when security groups allow the required ports) | Not supported |

ACK uses a modified version of the Flannel plug-in optimized for Alibaba Cloud. It does not track upstream open source changes. For Flannel update history, see Flannel.

For a detailed feature comparison, see Terway vs. Flannel container network plug-ins.

CIDR block planning

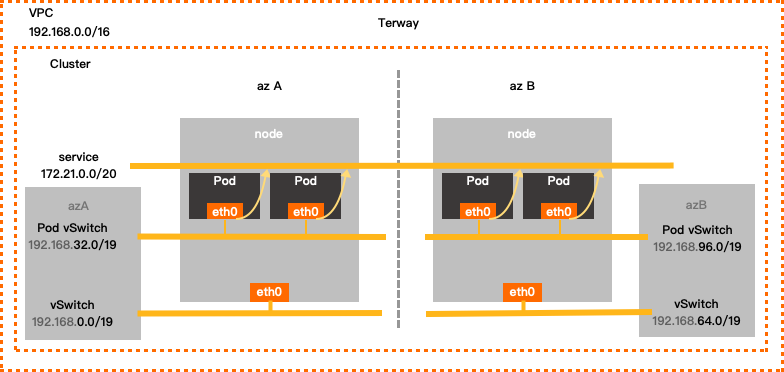

Terway network mode

In Terway mode, pods get IP addresses from dedicated pod vSwitches in your VPC. Plan pod vSwitch CIDR blocks large enough to accommodate all pods across nodes and zones, including headroom for rolling upgrades.

Configuration examples

Single-zone:

| VPC CIDR block | vSwitch CIDR block | Pod vSwitch CIDR block | Service CIDR block | Maximum assignable pod IPs |
|---|---|---|---|---|
| 192.168.0.0/16 | Zone I: 192.168.0.0/19 | 192.168.32.0/19 | 172.21.0.0/20 | 8,192 |

Multi-zone:

| VPC CIDR block | vSwitch CIDR block | Pod vSwitch CIDR block | Service CIDR block | Maximum assignable pod IPs |
|---|---|---|---|---|
| 192.168.0.0/16 | Zone I: 192.168.0.0/19 | 192.168.32.0/19 | 172.21.0.0/20 | 8,192 |
| | Zone J: 192.168.64.0/19 | 192.168.96.0/19 | | |

VPC

Use one of the following RFC 1918 private CIDR blocks, or a subnet of one, as your VPC's primary IPv4 CIDR block: 192.168.0.0/16, 172.16.0.0/12, or 10.0.0.0/8. Valid mask lengths range from /8 to /28 (varies by block). Example: 192.168.0.0/16.

For multi-VPC or hybrid cloud deployments, use subnets of these RFC-standard private CIDR blocks with a mask of /16 or shorter. Ensure no CIDR block overlaps between VPCs or between a VPC and your on-premises data center.
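The no-overlap rule for multi-VPC and hybrid deployments can be checked before any resources are created. The sketch below compares every pair of planned networks with `ipaddress`. The network names and CIDR blocks in `plan` are hypothetical values chosen for illustration, and `find_overlaps` is an illustrative helper, not an ACK API.

```python
import ipaddress
from itertools import combinations


def find_overlaps(named_cidrs: dict[str, str]) -> list[tuple[str, str]]:
    """Return every pair of names whose CIDR blocks overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in named_cidrs.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(nets.items(), 2)
        if net_a.overlaps(net_b)
    ]


# Hypothetical plan: two VPCs plus an on-premises data center.
plan = {
    "vpc-prod": "10.0.0.0/16",
    "vpc-staging": "10.1.0.0/16",
    "on-prem-dc": "10.0.128.0/17",  # falls inside vpc-prod, so it is reported
}
print(find_overlaps(plan))  # [('vpc-prod', 'on-prem-dc')]
```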

VPCs assign IPv6 CIDR blocks automatically when you enable IPv6. To use IPv6 for containers, choose Terway.

To use a public IP range for your VPC CIDR block, request the ack.white_list/supportVPCWithPublicIPRanges quota in the Quota Center.

vSwitch

vSwitches host ECS instances and handle node-to-node traffic. The vSwitch CIDR block must be a subset of the VPC CIDR block.

- ECS instances in the vSwitch get IPs from this CIDR block. Size the vSwitch to have enough addresses for all nodes.
- Multiple vSwitches in one VPC must not have overlapping CIDR blocks.
- Each pod vSwitch must be in the same zone as its corresponding node vSwitch.
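The subset and no-overlap rules above can be expressed as a small pre-flight check. This is a sketch under stated assumptions: `check_vswitches` and the vSwitch labels are illustrative names, the CIDR blocks come from the multi-zone example, and the zone-matching rule is not checked because it is not a property of the CIDR blocks themselves.

```python
import ipaddress
from itertools import combinations


def check_vswitches(vpc_cidr: str, vswitch_cidrs: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the layout passes both rules."""
    vpc = ipaddress.ip_network(vpc_cidr)
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in vswitch_cidrs.items()}
    problems = []
    # Rule 1: every vSwitch CIDR block must be a subset of the VPC CIDR block.
    for name, net in nets.items():
        if not net.subnet_of(vpc):
            problems.append(f"{name} ({net}) is not inside VPC {vpc}")
    # Rule 2: vSwitches in the same VPC must not overlap each other.
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} ({net_a}) overlaps {b} ({net_b})")
    return problems


multi_zone = {
    "node-zone-i": "192.168.0.0/19",
    "pod-zone-i": "192.168.32.0/19",
    "node-zone-j": "192.168.64.0/19",
    "pod-zone-j": "192.168.96.0/19",
}
print(check_vswitches("192.168.0.0/16", multi_zone))  # []
```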

Pod vSwitch

The pod vSwitch assigns IPs to pods and handles pod traffic. Its CIDR block must be a subset of the VPC CIDR block.

- Size the pod vSwitch based on the maximum number of pods you expect to run, plus buffer for upgrades.
- The pod vSwitch CIDR block must not overlap with the Service CIDR block.
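To turn an expected pod count into a prefix length, one rough approach is to add a buffer and round up to the next power of two. The sketch below does that. The 25% default headroom is an assumption for illustration, not a documented recommendation, and the calculation does not subtract any addresses the platform reserves in each vSwitch.

```python
import math


def smallest_prefix_for(pods: int, headroom: float = 0.25) -> int:
    """Return the longest IPv4 prefix whose block holds `pods` plus headroom."""
    needed = math.ceil(pods * (1 + headroom))
    # Smallest number of host bits n such that 2**n >= needed.
    host_bits = max(needed - 1, 0).bit_length()
    return 32 - host_bits


print(smallest_prefix_for(5000))  # 19 -> a /19 (8,192 addresses) covers 6,250
print(smallest_prefix_for(8000))  # 18 -> 10,000 addresses no longer fit in a /19
```

If the result is shorter than the space left in a zone, splitting pods across several pod vSwitches is an alternative to one large block.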

Service CIDR block

The Service CIDR block cannot be modified after cluster creation.

The Service CIDR block defines the IP range for ClusterIP services. Each service gets one IP.

- Service IPs are reachable only from within the cluster, not from outside it.
- The Service CIDR block must not overlap with the vSwitch CIDR block or the pod vSwitch CIDR block.

Service IPv6 CIDR block (when IPv6 dual-stack is enabled)

- Use a Unique Local Address (ULA) in the fc00::/7 range. The prefix length must be between /112 and /120.
- Match the number of usable addresses in the Service CIDR block.
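The IPv6 constraints above can be checked mechanically. In the sketch below, `check_service_ipv6` is an illustrative helper, and the candidate blocks are hypothetical. It also assumes that "match" means the IPv6 block has exactly as many addresses as the IPv4 Service CIDR block; if the platform accepts a looser match, relax that comparison.

```python
import ipaddress

ULA_RANGE = ipaddress.ip_network("fc00::/7")


def check_service_ipv6(ipv6_cidr: str, ipv4_service_cidr: str) -> list[str]:
    """Return a list of rule violations for a candidate Service IPv6 CIDR block."""
    v6 = ipaddress.ip_network(ipv6_cidr)
    v4 = ipaddress.ip_network(ipv4_service_cidr)
    problems = []
    if v6.version != 6 or not v6.subnet_of(ULA_RANGE):
        problems.append(f"{v6} is not a ULA block inside {ULA_RANGE}")
    if not 112 <= v6.prefixlen <= 120:
        problems.append(f"prefix /{v6.prefixlen} is outside /112 to /120")
    # Assumption: "match" is interpreted as an equal address count.
    if v6.num_addresses != v4.num_addresses:
        problems.append(
            f"{v6} has {v6.num_addresses} addresses, {v4} has {v4.num_addresses}"
        )
    return problems


# A /20 IPv4 block has 4,096 addresses, which corresponds to an IPv6 /116.
print(check_service_ipv6("fd00:1::/116", "172.21.0.0/20"))  # []
```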

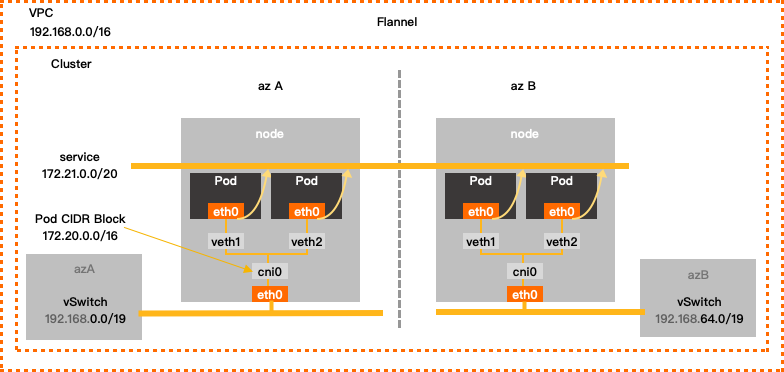

Flannel network mode

In Flannel mode, pod IPs come from a virtual container CIDR block—not tied to any vSwitch. Pod packets route through the VPC, and ACK automatically adds routes to each pod CIDR block in the VPC route table.

Configuration example

| VPC CIDR block | vSwitch CIDR block | Container CIDR block | Service CIDR block | Maximum assignable pod IPs |
|---|---|---|---|---|
| 192.168.0.0/16 | 192.168.0.0/24 | 172.20.0.0/16 | 172.21.0.0/20 | 65,536 |

VPC

Use one of the following RFC 1918 private CIDR blocks, or a subnet of one, as your VPC's primary IPv4 CIDR block: 192.168.0.0/16, 172.16.0.0/12, or 10.0.0.0/8. Valid mask lengths range from /8 to /28 (varies by block). For multi-VPC or hybrid cloud deployments, use a mask of /16 or shorter and ensure no overlaps between VPCs or between a VPC and your on-premises data center.

To use a public IP range for your VPC CIDR block, request the ack.white_list/supportVPCWithPublicIPRanges quota in the Quota Center.

vSwitch

- ECS instances in the vSwitch get IPs from this CIDR block. Size the vSwitch to accommodate all nodes.
- Multiple vSwitches in one VPC must not have overlapping CIDR blocks.

Container CIDR block

The container CIDR block cannot be modified after cluster creation.

This virtual CIDR block assigns pod IPs across the cluster.

- It is not tied to any vSwitch.
- It must not overlap with the vSwitch CIDR block or the Service CIDR block.

For example, if your VPC CIDR block is 172.16.0.0/12, do not use 172.16.0.0/16 or 172.17.0.0/16 for the container CIDR block, because both fall within 172.16.0.0/12.
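The 172.16.0.0/12 example can be reproduced with `ipaddress`. In this sketch, `conflicts_with` is an illustrative helper, and 10.100.0.0/16 is a hypothetical candidate added only to show a block that passes the check.

```python
import ipaddress


def conflicts_with(candidate: str, reserved: str) -> bool:
    """Return True if the candidate CIDR block overlaps the reserved one."""
    return ipaddress.ip_network(candidate).overlaps(ipaddress.ip_network(reserved))


vpc_cidr = "172.16.0.0/12"
for candidate in ("172.16.0.0/16", "172.17.0.0/16", "10.100.0.0/16"):
    verdict = "overlaps" if conflicts_with(candidate, vpc_cidr) else "does not overlap"
    print(f"{candidate} {verdict} {vpc_cidr}")
# 172.16.0.0/16 overlaps 172.16.0.0/12
# 172.17.0.0/16 overlaps 172.16.0.0/12
# 10.100.0.0/16 does not overlap 172.16.0.0/12
```

The same helper works for the Service CIDR block: pass it as `reserved` to confirm that the container CIDR block stays clear of it.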

Service CIDR block

The Service CIDR block cannot be modified after cluster creation.

The Service CIDR block defines the IP range for ClusterIP services.

- Service IPs are only reachable within the cluster.
- The Service CIDR block must not overlap with the vSwitch CIDR block or the container CIDR block.

What's next

- Use VPC secondary CIDR blocks: After using a secondary CIDR block or expanding to a new zone, configure SNAT rules on a NAT Gateway and update security group rules to ensure services in the new CIDR block work correctly.
- Account planning: Plan a unified account structure to support permission and workload isolation as your business grows.
- Security capability planning: Plan security controls based on your network connectivity topology and security requirements.
- Disaster recovery capability planning: Plan disaster recovery based on your business architecture to protect data and ensure continuity.
- Access the API server from the Internet: Expose your cluster API server to the Internet using an elastic IP address (EIP).
- Enable outbound Internet access for the cluster: Configure SNAT for outbound access to public resources such as image registries or dependency libraries.
- Network management FAQ: Common network issues with ACK managed clusters.