After deploying a job, start it on the Job O&M page. You also need to start a job to recover a stopped job or apply updated parameter settings that are not dynamically applied.

Prerequisites

Before you begin, ensure that you have:

- A deployed job. See Deploy a job.
- Access permissions to the target project, if you are using a Resource Access Management (RAM) user, RAM role, or another Alibaba Cloud account. For details, see Grant permissions in the development console. For an overview of permission management, see Permission management.

Limitations

Only stream jobs support start options.

Usage notes

If you start a job from the latest state or a specified state, the system runs a state compatibility check. A job with state incompatibilities may fail to start or produce unexpected results. See Flink state compatibility reference.

Start a job

-

Log on to the Flink development console as a member with the owner role.

-

At the top of the page, select the name of the target project.

-

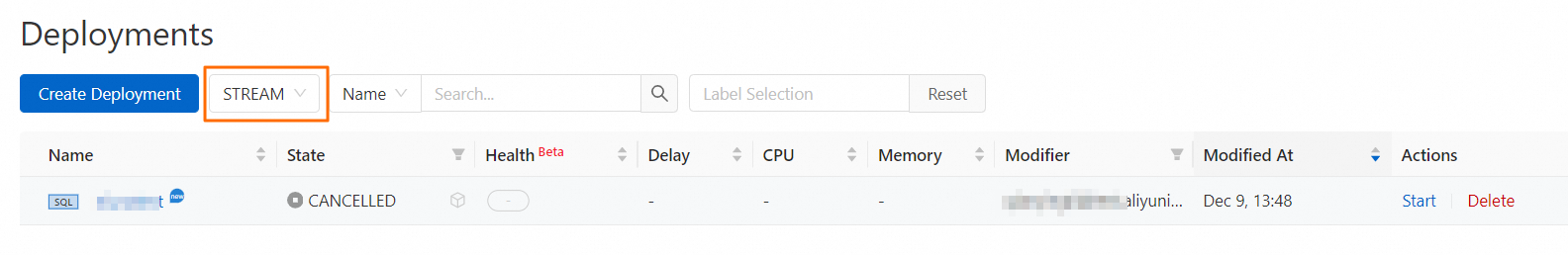

Go to Operation Center > Job O&M, then select Stream Job or Batch Job from the drop-down list.

-

In the Actions column for the target job, click Start.

-

(Optional) For a stream job, configure the start options.

  - Stateless Start: Use for a new job or when you cannot reuse a state.

    | Option | Description |
    | --- | --- |
    | Specify Source Table Start Time | Select Specify Source Table Start Time and specify a time. The read time configured here takes priority over the `startTime` set in the job's Data Definition Language (DDL) code. Supported connectors: Kafka, Simple Log Service (SLS), DataHub, ApsaraMQ for RocketMQ, Hologres, Paimon data lakehouse for streaming, and MySQL. Note: `startTime` takes effect only when you start a job from scratch with `startTime` specified. It does not apply when you start from a system checkpoint or a snapshot. Not all connectors support `startTime`. Check whether the connector's WITH parameters include `startTime`. See Simple Log Service (SLS) WITH parameters for an example. Kafka versions earlier than 0.11 may not be supported due to connector compatibility issues. |
    | Configure Automatic Tuning | Turn on this switch and select a tuning mode. Intelligent tuning automatically adjusts resource allocation based on usage, scaling down when usage is low and scaling up when usage reaches a threshold. See Enable and configure intelligent tuning. Scheduled tuning applies resource configurations on a time-based schedule. A schedule can contain multiple resource-to-time mappings. See Configure and apply a scheduled tuning plan. |

  - Stateful Start: Select a recovery policy and optionally enable automatic tuning.

    | Policy | Description |
    | --- | --- |
    | Recover from the Latest State | Recovers the job from the latest snapshot or system checkpoint. If changes to the SQL code, Flink runtime parameters, or database engine version are detected, click Check next to State Compatibility Check before proceeding. See Compatibility for the meaning of compatibility results and recommended actions. |
    | Recover from a Specified State | Select a specific snapshot to restore from. To create a snapshot before stopping a job, see Manage job state sets. |
    | Recover from Another Job | Specify the target job and its snapshot. Snapshots can be shared between jobs, but the job states must be compatible. See Manage job state sets. |
    | Allow Non-Restored State (JAR jobs only) | By default, the Flink system tries to match the entire snapshot with the job being submitted. If modifications to the job change the operator state, the job may not be recoverable. In that case, turn on this switch so that the system skips states that cannot be matched and the job can start. See Allow Non-Restored State. |
    | Configure Automatic Tuning | Same options as in Stateless Start: intelligent tuning or scheduled tuning. |

- Click Start.

To check the current status of the job, go to Operation Center > Job O&M. See View the running status of a job.
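The precedence rules for the source start time described in the start options can be easy to misread, so the following is a minimal Python sketch of them. It is an illustration only, not the platform's implementation: the `StartRequest` class, its field names, and the `effective_start_time` function are hypothetical names introduced here.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class StartRequest:
    """Hypothetical model of the choices made when starting a stream job."""
    stateful: bool                                 # True: recover from a snapshot or system checkpoint
    console_start_time: Optional[datetime] = None  # value of Specify Source Table Start Time
    ddl_start_time: Optional[datetime] = None      # startTime in the connector's WITH parameters


def effective_start_time(req: StartRequest) -> Optional[datetime]:
    """Return the start time the source would use, or None if no start time applies."""
    if req.stateful:
        # Starting from a system checkpoint or snapshot: startTime does not apply.
        return None
    if req.console_start_time is not None:
        # The time set at start takes priority over the DDL value.
        return req.console_start_time
    # Otherwise fall back to the DDL value, which may itself be unset.
    return req.ddl_start_time
```

A `None` result here means that no explicit start time is in effect: for a stateful start the read position comes from the restored state, and for a stateless start with no time configured it is left to the connector's own default.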

What's next

- Modify runtime parameters after the job starts. See Configure runtime parameters. Some parameters also support dynamic updates to reduce interruptions. See Dynamic scaling and dynamic parameter updates.
- Trace data lineage to locate issues or assess impact. See View data lineage.
- Learn about GeminiStateBackend and how it compares to RocksDBStateBackend. See Introduction to the enterprise-grade state backend.