EMR Shell nodes let you run Shell scripts against your EMR cluster from DataWorks, covering data processing, Hadoop component calls (YARN, Spark, Sqoop), and file management. This topic walks you through creating, developing, scheduling, and deploying an EMR Shell node.

Prerequisites

Before you begin, make sure you have:

An Alibaba Cloud EMR cluster registered to DataWorks. See DataStudio (old version): Associate an EMR computing resource

A serverless resource group purchased and configured (associated with a workspace and with network connectivity set up). See Use serverless resource groups

A workflow created in DataStudio. All node development in DataStudio is organized under workflows. See Create a workflow

(If developing as a RAM user) The RAM user added to the DataWorks workspace as a member with the Develop or Workspace Administrator role assigned. The Workspace Administrator role has more permissions than necessary. Exercise caution when you assign the Workspace Administrator role. See Add workspace members and assign roles to them

(Optional) A custom image prepared, if you need to customize the component environment, for example, to replace Spark JAR packages or include specific libraries. Build your custom image from the official dataworks_emr_base_task_pod image. See Create a custom image and use it in DataStudio.

(If running Python scripts on a resource group) A third-party package installed:

Serverless resource group: use the image management feature. See Custom images

Exclusive resource group for scheduling: use the O&M Assistant feature. See O&M Assistant

Limitations

EMR Shell nodes run on DataWorks resource groups for scheduling, not inside EMR clusters. Use a serverless resource group (recommended) or an exclusive resource group for scheduling. If you need to use a DataStudio image, use a serverless resource group.

EMR Shell nodes cannot run Python scripts. To run Python scripts, use Shell nodes instead.

To reference an EMR resource in a node, upload the resource to DataWorks first. See Create and use an EMR JAR resource.

Do not add comments in your EMR Shell node code.

When using spark-submit, set deploy-mode to cluster, not client.

To manage metadata for a DataLake or custom cluster in DataWorks, configure EMR-HOOK in the cluster first. Without EMR-HOOK, metadata is not displayed in real time, audit logs are not generated, data lineage is not tracked, and EMR governance tasks cannot run. See Use the Hive extension feature to record data lineage and historical access information.
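As a rough illustration of the spark-submit limitation above, the following sketch assembles a submission that uses cluster deploy mode. The class name com.example.MyApp, the JAR path /tmp/my-app.jar, and the run and submit_job helpers are hypothetical and exist only for this example. By default the helper prints the command instead of running it, so the sketch can be tried on a machine without Spark. The comments are for explanation only; because EMR Shell node code must not contain comments, remove them before pasting anything into a node.

```shell
#!/bin/sh
# DRY_RUN=1 (the default here) prints the command instead of executing it.
# Set DRY_RUN=0 to actually invoke spark-submit.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical application class and JAR path; replace with your own.
MAIN_CLASS="com.example.MyApp"
APP_JAR="/tmp/my-app.jar"

# Always submit in cluster mode; client mode is not supported by EMR Shell nodes.
submit_job() {
  run spark-submit --master yarn --deploy-mode cluster \
    --class "$MAIN_CLASS" "$APP_JAR" "$@"
}

submit_job
```

Keeping the deploy mode inside one helper means every submission in the script goes through the same setting, which makes an accidental client-mode submission less likely.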

Step 1: Create an EMR Shell node

Log on to the DataWorks console. In the top navigation bar, select a region. In the left-side navigation pane, choose Data Development and O&M > Data Development. Select a workspace from the drop-down list and click Go to Data Development.

Find the workflow you want to work in. Right-click the workflow name, then choose Create Node > EMR > EMR Shell. Alternatively, hover over the Create icon and choose Create Node > EMR > EMR Shell.

In the Create Node dialog box, configure Name, Engine Instance, Node Type, and Path. Click Confirm. The configuration tab of the EMR Shell node opens.

The node name can contain only letters, digits, underscores (_), and periods (.).
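If you generate node names from a script, a local check along these lines may catch invalid names before you open the Create Node dialog box. This is only a sketch: the is_valid_node_name helper is hypothetical, it assumes that "letters" means ASCII letters, and it treats an empty name as invalid. Any length limit that DataWorks enforces is not covered.

```shell
#!/bin/sh
# Use the C locale so the character ranges below cover ASCII only.
LC_ALL=C
export LC_ALL

# Succeeds when the name is non-empty and contains only
# letters, digits, underscores, and periods.
is_valid_node_name() {
  case "$1" in
    '' | *[!A-Za-z0-9_.]*) return 1 ;;
    *) return 0 ;;
  esac
}

# Sample names for illustration.
for name in "emr_shell.v1" "emr-shell"; do
  if is_valid_node_name "$name"; then
    echo "$name: ok"
  else
    echo "$name: invalid"
  fi
done
```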

Step 2: Develop an EMR Shell task

EMR Shell nodes support two ways to reference external resources. Choose the method that fits your setup:

| | Method 1: Upload a JAR resource (recommended) | Method 2: Reference an OSS resource |
|---|---|---|
| Use when | Your EMR cluster is a DataLake cluster | You need to reference JAR dependencies or scripts already stored in OSS |
| How it works | Upload from your local machine to DataStudio, then insert a resource reference | Point directly to an OSS path using the ossref:// scheme |

Method 1: Upload and reference an EMR JAR resource

Before you can reference a resource, you must upload it from your local machine to DataStudio. If your EMR cluster is a DataLake cluster, perform the following steps to reference an EMR JAR resource. If the EMR Shell node depends on resources that are too large to upload in the DataWorks console, store them in Hadoop Distributed File System (HDFS) and reference them in the code of the EMR Shell node.

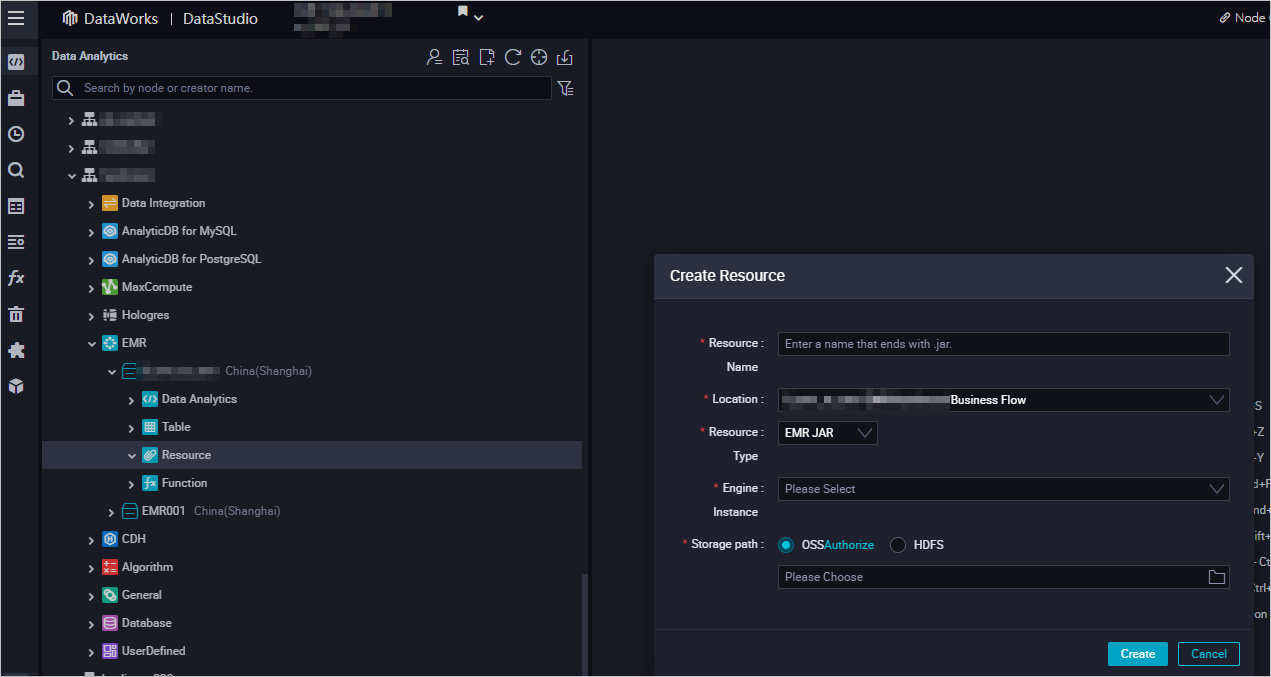

Create an EMR JAR resource. See Create and use an EMR resource. In this example, the JAR package is stored in the emr/jars directory. The first time you use an EMR JAR resource, click Authorize to grant DataWorks access, then click Upload.

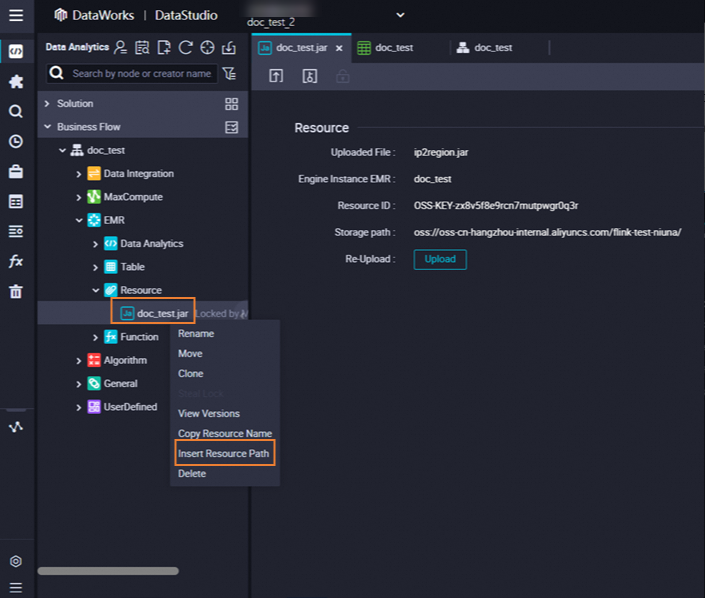

Open the EMR Shell node. In the EMR folder under Resource, right-click the resource you want to reference and select Insert Resource Path. A ##@resource_reference{""} line appears in the editor, confirming the resource is referenced.

Write your task code. Replace the placeholder values with your actual resource name, bucket, and path:

##@resource_reference{"onaliyun_mr_wordcount-1.0-SNAPSHOT.jar"}
onaliyun_mr_wordcount-1.0-SNAPSHOT.jar cn.apache.hadoop.onaliyun.examples.EmrWordCount oss://onaliyun-bucket-2/emr/datas/wordcount02/inputs oss://onaliyun-bucket-2/emr/datas/wordcount02/outputs

Method 2: Reference an OSS resource

Use scheduling parameters

Define variables in your node code using ${Variable} syntax. Assign values to those variables in the Scheduling Parameter section of the Properties tab. DataWorks substitutes the values at runtime.
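To get a feel for the substitution before running a node, you can approximate it locally. The sketch below assumes that the substitution is a plain text replacement of ${Variable} placeholders. The render_param helper and the value 20240101 are hypothetical, and the helper assumes that the value contains no |, &, or backslash characters. Run it on your own machine, not in the node.

```shell
#!/bin/sh
# Local approximation of scheduling-parameter substitution:
# replace every literal ${NAME} in the text read from stdin with VALUE.
# Assumption: VALUE has no '|', '&', or backslash, which sed treats specially.
render_param() {
  name="$1"
  value="$2"
  sed "s|\${${name}}|${value}|g"
}

# Feed sample node code through the helper. Only the ${var} placeholder
# is replaced; the ordinary shell variable $DD is left untouched.
render_param var 20240101 <<'EOF'
DD=`date`;
echo "hello world, $DD"
echo ${var};
EOF
```

The rendered text shows which references are treated as scheduling parameters in this approximation and which remain ordinary shell variables that are resolved when the script runs.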

DD=`date`;
echo "hello world, $DD"
echo ${var};

Supported commands (EMR DataLake clusters)

All commands run on the DataWorks resource group, not inside the EMR cluster directly. The following commands are supported when your cluster is a DataLake cluster:

| Category | Commands |
|---|---|
| Shell | Commands under /usr/bin and /bin, such as ls and echo |
| YARN | hadoop, hdfs, yarn |
| Spark | spark-submit |
| Sqoop | sqoop-export, sqoop-import, sqoop-import-all-tables |

To use Sqoop, add your resource group's IP address to the IP address whitelist of the ApsaraDB RDS instance that stores EMR cluster metadata.
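Because commands run on the resource group rather than in the cluster, it can help to confirm which of the commands listed above are present in the environment where the script runs. The following sketch uses the standard command -v lookup; the check_cmd helper is a hypothetical name. The comments are for explanation only and should be removed before the code is used in an EMR Shell node.

```shell
#!/bin/sh
# Report whether a command can be found in the current environment.
check_cmd() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1: available"
  else
    echo "$1: missing"
  fi
}

# Commands taken from the table of supported commands.
for cmd in ls echo hadoop hdfs yarn spark-submit \
  sqoop-export sqoop-import sqoop-import-all-tables; do
  check_cmd "$cmd"
done
```

On a machine without the EMR client tools, the Hadoop, Spark, and Sqoop entries are expected to be reported as missing.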

Run the task

In the toolbar, click the Run with Parameters icon. In the Parameters dialog box, select a resource group from the Resource Group Name drop-down list and click Run.

To access a computing resource over the Internet or a virtual private cloud (VPC), use the resource group that is connected to that computing resource. See Network connectivity solutions.

Click the Save icon in the top toolbar to save the node.

(Optional) Perform smoke testing on the node in the development environment before committing. See Perform smoke testing.

Step 3: Configure scheduling properties

To have DataWorks run the task on a schedule, click Properties in the right-side navigation pane and configure the scheduling settings.

Before committing the task, configure the Rerun and Parent Nodes parameters on the Properties tab.

If you need to customize the component environment, you can create a custom image based on the official image dataworks_emr_base_task_pod and use the custom image in DataStudio. For example, you can replace Spark JAR packages or include specific libraries, files, or JAR packages when creating the custom image.

For full details on scheduling options, see Overview.

Step 4: Deploy the task

Click the Save icon to save the task.

Click the Submit icon to commit the task. In the Submit dialog box, fill in Change description and decide whether to enable code review.

If you enable code review, the committed code is deployed only after it passes review. See Code review.

If your workspace is in standard mode, deploy the task to the production environment after committing. Click Deploy in the upper-right corner of the configuration tab. See Deploy nodes.

What's next

View the scheduling status of the task in Operation Center. Click Operation Center in the upper-right corner of the node configuration tab. See View and manage auto triggered tasks.

Run Python scripts using Shell nodes: see Use a Shell node to run Python scripts.