Built on the Dataphin platform, data quality provides end-to-end quality assurance for data development and use. It covers quality rule configuration, monitoring, scheduling, intelligent alerting, and validation management.

Prerequisites

You have purchased the data quality value-added service, and the data quality module is enabled for the current tenant.

Background information

As industries place growing demands on big data construction, management, and application, Dataphin is used in increasingly diverse and complex scenarios. To keep data timely, accurate, complete, consistent, and valid, Dataphin standardizes the raw data from business systems, giving data-driven business decisions a reliable foundation.

Data quality process guide

The data quality process guide walks you through the following steps in order: (optional) configure rule templates, add monitored objects, configure quality rules, validate the rules, view validation records and quality reports, and perform quality rectification.

Scenarios for quality rules

Quality rules safeguard data integrity during data development.

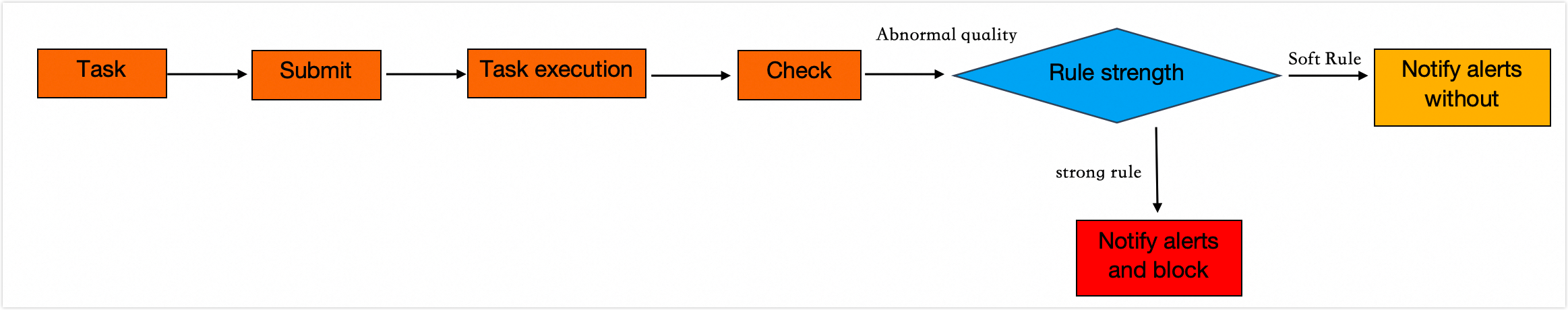

When validation detects a quality anomaly, the strength of the rule (hard or soft) determines whether downstream tasks are blocked, which keeps abnormal data from propagating.

A hard rule triggers an alert and blocks downstream task nodes when the quality rule validation result is abnormal.

A soft rule triggers an alert but does not block downstream task nodes when the quality rule validation result is abnormal.
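To make the difference concrete, the following Python sketch models how a hard or soft rule gates downstream nodes. It is illustrative only: `RuleStrength`, `ValidationOutcome`, `handle_result`, and `downstream_may_run` are hypothetical names, not Dataphin APIs, and they only mirror the behavior described above.

```python
from dataclasses import dataclass
from enum import Enum


class RuleStrength(Enum):
    # Hypothetical model of the two rule strengths described above.
    HARD = "hard"
    SOFT = "soft"


@dataclass(frozen=True)
class ValidationOutcome:
    alert: bool
    block_downstream: bool


def handle_result(strength: RuleStrength, is_abnormal: bool) -> ValidationOutcome:
    """Map one rule's validation result to an alert/block decision."""
    if not is_abnormal:
        # A normal result neither alerts nor blocks, whatever the strength.
        return ValidationOutcome(alert=False, block_downstream=False)
    # Both strengths alert on an abnormal result; only a hard rule blocks.
    return ValidationOutcome(alert=True, block_downstream=strength is RuleStrength.HARD)


def downstream_may_run(results: list[tuple[RuleStrength, bool]]) -> bool:
    """Downstream nodes run only if no rule on the node asks to block them."""
    return not any(handle_result(s, abnormal).block_downstream for s, abnormal in results)


if __name__ == "__main__":
    # A failed soft rule plus a passing hard rule: alert, but downstream still runs.
    print(downstream_may_run([(RuleStrength.SOFT, True), (RuleStrength.HARD, False)]))  # True
    # Any failed hard rule stops downstream nodes.
    print(downstream_may_run([(RuleStrength.HARD, True)]))  # False
```

The sketch treats each rule independently, so a single failed hard rule is enough to stop downstream nodes, while failed soft rules only raise alerts.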

Feature overview

Data quality supports rule validation and rectification for the following objects: Dataphin tables, global tables, metrics, data sources, and real-time meta tables.

For Dataphin tables, data quality supports rule validation and rectification across physical tables, logical fact tables, logical dimension tables, and logical aggregate tables.

For global tables, data quality supports rule validation and rectification across data source types including MaxCompute, Hive, MySQL, Oracle, Microsoft SQL Server, PostgreSQL, SAP HANA, AnalyticDB for PostgreSQL, ClickHouse, IBM DB2, DM, and Hologres.

For metrics, data quality provides monitoring, anomaly alerts, and rectification for the number of field groups, duplicate field values, field stability, and volatility.

For data sources, data quality provides monitoring, anomaly alerts, and rectification for changes in connectivity and table schema.

For real-time meta tables, data quality supports statistical value checks, real-time versus offline comparison, multi-link real-time comparison, anomaly alerts, and rectification.
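For the metric checks listed above, the following Python sketch illustrates two of them in their simplest form: counting duplicate field values and flagging a metric whose fluctuation rate against a baseline exceeds a threshold. The function names, the relative-change formula, and the threshold values are assumptions for illustration only; Dataphin's rule templates may define their own calculations and parameters.

```python
from collections import Counter
from collections.abc import Hashable, Iterable


def duplicate_count(values: Iterable[Hashable]) -> int:
    """Count occurrences that repeat an earlier value (first occurrences excluded)."""
    counts = Counter(values)
    return sum(n - 1 for n in counts.values() if n > 1)


def fluctuation_rate(current: float, baseline: float) -> float:
    """Relative change of a metric against a baseline, such as the previous day's value."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


def is_volatility_abnormal(current: float, baseline: float, threshold: float) -> bool:
    """Flag the metric when the absolute fluctuation rate exceeds the threshold."""
    return abs(fluctuation_rate(current, baseline)) > threshold


if __name__ == "__main__":
    print(duplicate_count([1, 1, 2, 3, 3, 3]))        # 3
    print(is_volatility_abnormal(130.0, 100.0, 0.2))  # True: +30% exceeds 20%
    print(is_volatility_abnormal(95.0, 100.0, 0.2))   # False: -5% is within 20%
```

Using the absolute value means both sudden rises and sudden drops count as abnormal; a one-sided rule would compare the signed rate instead.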

Data quality provides an end-to-end solution for quality validation, monitoring, intelligent alerting, report generation, and initiating rectification for tables, data sources, metrics, and real-time meta tables. This keeps data reliable and valid throughout production and use, so that quality issues do not erode trust in the data or lead to wrong decisions.

Data quality encompasses Quality Overview, Quality Monitoring, and Quality Administration:

Quality Overview shows the number of validated tables, the number of tables with abnormal validation results, and related statistics, so you can quickly find and resolve abnormal results.

Quality Monitoring lets you view the list of quality rules, configure rules, and view validation records and quality reports.

Quality Administration lets you review issues found during data quality validation and take administrative actions such as initiating rectification, ignoring an issue, or sending notifications. This completes the PDCA (Plan-Do-Check-Act) cycle from planning to action and helps improve data quality continuously.