This topic describes how to use the data transmission service to migrate data from a TiDB database to a MySQL tenant of OceanBase Database.

A data migration task remaining in an inactive state for a long time may fail to be resumed depending on the retention period of incremental logs. Inactive states are Failed, Stopped, and Completed. The data transmission service releases data migration tasks remaining in an inactive state for more than 3 days to reclaim related resources. We recommend that you configure alerts for data migration tasks and handle task exceptions in a timely manner.
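As a rough illustration of the release rule above, the following Python sketch flags tasks that have stayed in an inactive state for more than 3 days. The function name, the state strings, and the assumption that you record `inactive_since` yourself are hypothetical; this is not an API of the data transmission service.

```python
from datetime import datetime, timedelta

# Inactive states and the 3-day threshold are taken from the text above.
INACTIVE_STATES = {"Failed", "Stopped", "Completed"}
RELEASE_AFTER = timedelta(days=3)

def at_risk_of_release(state: str, inactive_since: datetime, now: datetime) -> bool:
    """Return True if the task has been inactive for more than 3 days."""
    return state in INACTIVE_STATES and (now - inactive_since) > RELEASE_AFTER
```

A check like this could feed the alerts recommended above, so that a stopped task is handled before its resources are reclaimed.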

Background

The data transmission service allows you to seamlessly migrate the existing business data and incremental data from a TiDB database to a MySQL tenant of OceanBase Database through schema migration, full migration, and incremental synchronization.

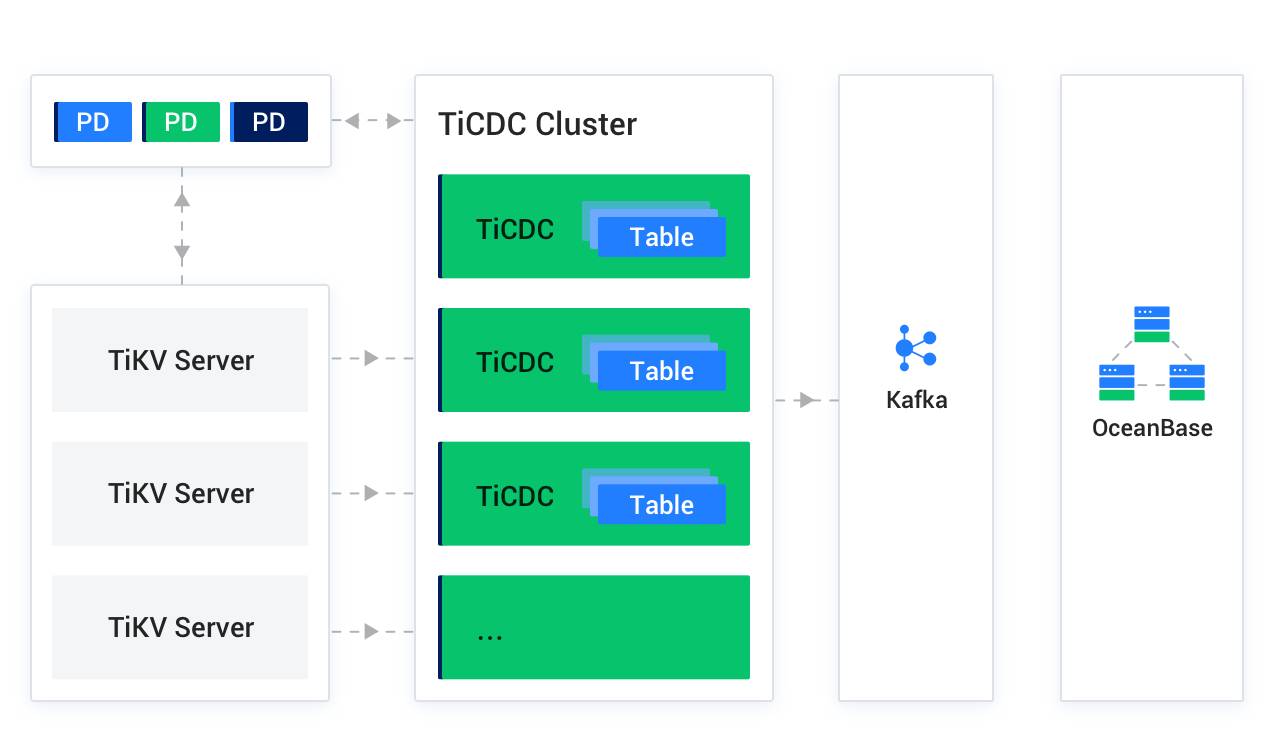

TiDB is an integrated distributed database that supports hybrid transactional and analytical processing (HTAP). You must deploy a TiCDC cluster and a Kafka cluster to synchronize incremental data from a TiDB database to a MySQL tenant of OceanBase Database.

TiCDC is an incremental data synchronization tool for TiDB and provides high availability by using a placement driver (PD) cluster, which is the scheduling module of the TiDB cluster and usually consists of three PD nodes. TiKV Server is a TiKV node in the TiDB cluster. It sends data changes in change logs to the TiCDC cluster. TiCDC runs multiple TiCDC processes to obtain and process data from TiKV nodes, and then synchronizes the data to the Kafka cluster. The Kafka cluster saves the incremental logs of the TiDB database that are converted by TiCDC. During incremental data synchronization, the data transmission service obtains the corresponding data from the Kafka cluster and migrates the data to the MySQL tenant of OceanBase Database in real time.

If you create a TiDB data source without binding it to a Kafka data source, you cannot perform incremental synchronization.

Prerequisites

The data transmission service has the privilege to access cloud resources. For more information, see Grant privileges to roles for data transmission.

You have created dedicated database users for data migration in the source TiDB database and target MySQL tenant of OceanBase Database, and granted corresponding privileges to the users. For more information, see Create a database user.

Limitations

Limitations on the source database

Do not perform DDL operations that modify database or table schemas during schema migration or full migration. Otherwise, the data migration task may be interrupted.

Only TiDB 4.x and 5.x are supported.

The data transmission service does not support DDL synchronization for data migration from a TiDB database to a MySQL tenant of OceanBase Database.

The data transmission service does not support triggers in the target database. If triggers exist in the target database, the data migration may fail.

The data transmission service does not support the migration of tables without primary keys and data with spaces from a TiDB database to a MySQL tenant of OceanBase Database.

Data source identifiers and user accounts must be globally unique in the data transmission system.

The data transmission service supports the migration of an object only when the following conditions are met: the database name, table name, and column name of the object are ASCII-encoded without special characters. The special characters are line breaks, spaces, and the following characters: . | " ' ` ( ) = ; / & \.

The data transmission service supports only the TiCDC Open Protocol. If you use an unsupported protocol, a null pointer error is returned.

When you use TiCDC to synchronize data to a Kafka instance, you must add the `enable-old-value = true` setting to the configuration file. Otherwise, the formats of data synchronization messages may be invalid. For more information, see Task configuration file.
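The object naming limitation above (ASCII-only names without special characters) can be checked before you configure a task. The following is a minimal sketch, assuming you already have the database, table, and column names as Python strings; the function name is hypothetical and the check is not part of the data transmission service.

```python
# Special characters listed in the limitation above, plus spaces and line breaks.
SPECIAL_CHARS = set('.|"\'`()=;/&\\') | {" ", "\n", "\r"}

def is_migratable_name(name: str) -> bool:
    """Return True if the name is non-empty, pure ASCII, and has no listed special character."""
    return bool(name) and name.isascii() and not (set(name) & SPECIAL_CHARS)

for candidate in ["orders_2024", "order items", "db.table"]:
    print(candidate, is_migratable_name(candidate))
```

Running the check over all object names in the source database gives a list of objects to rename or exclude before migration.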

Considerations

If the source contains foreign keys with the same name, an error occurs during schema migration. In this case, you can rename the foreign keys to restore the task.

If the UTF-8 character set is used in the source database, we recommend that you use a compatible character set, such as UTF-8 or UTF-16, in the target database to avoid garbled characters.

Do not write data to the topic that TiCDC uses for synchronization. Otherwise, a null pointer error is returned.

Check whether the migration precision of the data transmission service for columns of data types such as DECIMAL, FLOAT, and DOUBLE is as expected. If the precision of the target field type is lower than that of the source field type, the value with a higher precision may be truncated. This may result in data inconsistency between the source and target fields.
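The effect of a lower-precision target column can be illustrated with Python's `decimal` module. This is only a sketch: the column definitions in the comments are hypothetical, and whether a real target truncates or rounds depends on the database and its SQL mode. Truncation is used here to match the wording above.

```python
from decimal import Decimal, ROUND_DOWN

def store_as(value: Decimal, scale: int) -> Decimal:
    """Truncate value to `scale` fractional digits, as a lower-precision column might."""
    return value.quantize(Decimal(10) ** -scale, rounding=ROUND_DOWN)

source_value = Decimal("123.456789")      # e.g. a DECIMAL(12, 6) source column
target_value = store_as(source_value, 2)  # e.g. a DECIMAL(12, 2) target column

print(target_value)                  # 123.45
print(source_value == target_value)  # False
```

The two values no longer compare equal, which is exactly the kind of difference that shows up as data inconsistency between the source and target.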

If you modify a unique index at the target, you must restart the data migration task to avoid data inconsistency.

If the clocks between nodes or between the client and the server are out of synchronization, the latency may be inaccurate during incremental synchronization or reverse incremental synchronization.

For example, if the clock is earlier than the standard time, the latency can be negative. If the clock is later than the standard time, the latency can be positive.
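The arithmetic behind this can be sketched as follows. The sketch assumes that latency is computed as the observer's current clock reading minus the commit timestamp of a change; that formula and all names are assumptions for illustration, not the service's documented implementation.

```python
from datetime import datetime, timedelta

def observed_latency(commit_time: datetime, observer_now: datetime) -> timedelta:
    """Latency as seen by an observer: its own clock minus the change's commit timestamp."""
    return observer_now - commit_time

commit_time = datetime(2024, 1, 1, 12, 0, 0)   # stamped by a node on standard time
true_now = commit_time + timedelta(seconds=2)  # the change is really 2 s old

slow_clock = true_now - timedelta(seconds=5)   # observer clock 5 s behind
fast_clock = true_now + timedelta(seconds=5)   # observer clock 5 s ahead

print(observed_latency(commit_time, slow_clock).total_seconds())  # -3.0
print(observed_latency(commit_time, fast_clock).total_seconds())  # 7.0
```

With a 5-second skew, a real latency of 2 seconds is reported as either -3 seconds or 7 seconds, so keeping the clocks synchronized (for example, with NTP) is what makes the displayed latency meaningful.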

Take note of the following considerations if you want to aggregate multiple tables:

We recommend that you configure the mappings between the source and target databases by specifying matching rules.

We recommend that you manually create schemas at the target. If you create schemas at the target by using the data transmission service, skip the failed objects in the schema migration step.

A difference between the source and target table schemas may result in data inconsistency. Some known scenarios are described as follows:

When you manually create a table schema in the target, if the data type of any column is not supported by the data transmission service, implicit data type conversion may occur in the target, which causes inconsistent column types between the source and target databases.

If the length of a column at the target is shorter than that in the source database, the data of this column may be automatically truncated, which causes data inconsistency between the source and target databases.

If you selected only Incremental Synchronization when you created the data migration task, the data transmission service requires that the local incremental logs of the source database be retained for at least 48 hours.

If you selected Full Migration and Incremental Synchronization when you created the data migration task, the data transmission service requires that the local incremental logs of the source database be retained for at least 7 days. If the data transmission service cannot obtain incremental logs, the data migration task may fail, or the data in the source and target databases may even be inconsistent after migration.
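The two retention rules above reduce to a small lookup. The sketch below is illustrative only; the function name is hypothetical, and returning 0 when incremental synchronization is not selected is an assumption, because the text states no requirement for that case.

```python
def required_log_retention_hours(full_migration: bool, incremental_sync: bool) -> int:
    """Minimum retention, in hours, for source incremental logs per the rules above."""
    if not incremental_sync:
        return 0  # assumption: no incremental logs are consumed
    return 7 * 24 if full_migration else 48

print(required_log_retention_hours(full_migration=False, incremental_sync=True))  # 48
print(required_log_retention_hours(full_migration=True, incremental_sync=True))   # 168
```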

If the source or target database contains table objects that differ only in letter cases, the data migration results may not be as expected due to case insensitivity in the source or target database.
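Table names that differ only in letter case can be found ahead of time with a simple grouping. The following is a minimal sketch, assuming the table names have already been fetched into a Python list; the function name is hypothetical.

```python
from collections import defaultdict

def case_only_collisions(table_names):
    """Group names that are identical when letter case is ignored."""
    groups = defaultdict(list)
    for name in table_names:
        groups[name.lower()].append(name)
    return [names for names in groups.values() if len(names) > 1]

print(case_only_collisions(["Orders", "orders", "customers", "ORDERS"]))
# [['Orders', 'orders', 'ORDERS']]
```

Each returned group is a set of objects that a case-insensitive database would treat as the same table, so they are worth renaming before the task is created.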

Supported source and target instance types

In the following table, OB_MySQL stands for the MySQL tenant of OceanBase Database.

Source | Target |

TiDB (self-managed database in a VPC) | OB_MySQL (OceanBase cluster instance) |

TiDB (self-managed database with a public IP address) | OB_MySQL (OceanBase cluster instance) |

TiDB (self-managed database in a VPC) | OB_MySQL (serverless instance) |

TiDB (self-managed database with a public IP address) | OB_MySQL (serverless instance) |

Data type mappings

TiDB database | MySQL tenant of OceanBase Database |

INTEGER | INTEGER |

TINYINT | TINYINT |

MEDIUMINT | MEDIUMINT |

BIGINT | BIGINT |

SMALLINT | SMALLINT |

DECIMAL | DECIMAL |

NUMERIC | NUMERIC |

FLOAT | FLOAT |

REAL | REAL |

DOUBLE PRECISION | DOUBLE PRECISION |

BIT | BIT |

CHAR | CHAR |

VARCHAR | VARCHAR |

BINARY | BINARY |

VARBINARY | VARBINARY |

BLOB | BLOB |

TEXT | TEXT |

ENUM | ENUM |

SET | SET |

DATE | DATE |

DATETIME | DATETIME |

TIMESTAMP | TIMESTAMP |

TIME | TIME |

YEAR | YEAR |

Procedure

Log on to the ApsaraDB for OceanBase console and purchase a data migration task. For more information, see Purchase a data migration task.

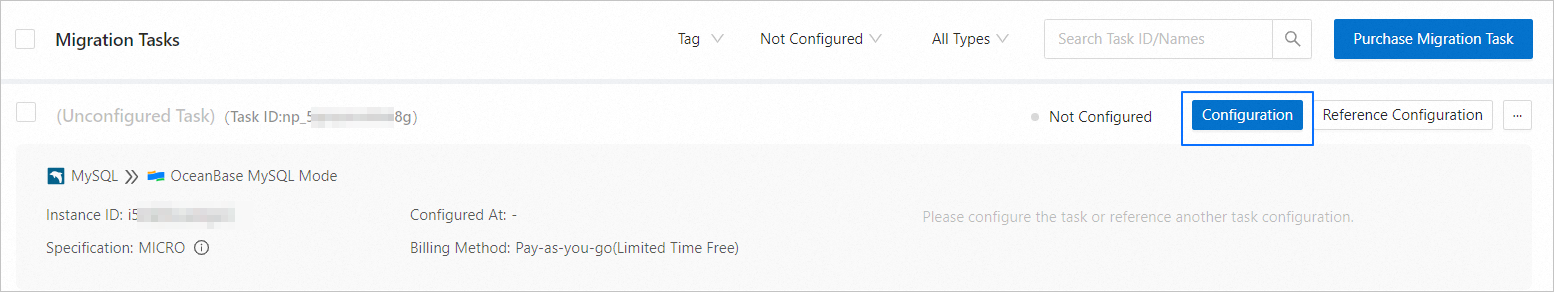

Choose Data Transmission > Data Migration. On the page that appears, click Configuration for the data migration task.

If you want to reference the configurations of an existing task, click Reference Configuration. For more information, see Reference the configuration of a data migration task.

On the Select Source and Target page, configure the related parameters.

Parameter | Description |

Migration Task Name | We recommend that you set it to a combination of digits and letters. It must not contain any spaces and cannot exceed 64 characters in length. |

Source | If you have created a TiDB data source, select it from the drop-down list. Otherwise, click New Data Source in the drop-down list and create one in the dialog box that appears on the right. For more information, see Create a TiDB data source. |

Target | If you have created a data source for the MySQL tenant of OceanBase Database, select it from the drop-down list. Otherwise, click New Data Source in the drop-down list and create one in the dialog box that appears on the right. For more information about the parameters, see Create an OceanBase data source. |

Tag (Optional) | Select a target tag from the drop-down list. You can also click Manage Tags to create, modify, and delete tags. For more information, see Use tags to manage data migration tasks. |
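The naming rules for Migration Task Name can be checked in a script before you open the console. This is a minimal sketch with hypothetical names; it separates the hard rules stated above (no spaces, at most 64 characters) from the recommendation (digits and letters only).

```python
import re

_LETTERS_AND_DIGITS = re.compile(r"[A-Za-z0-9]+")

def check_task_name(name: str) -> list:
    """Return a list of problems with a proposed task name (empty list means OK)."""
    problems = []
    if not name:
        problems.append("name is empty")
    if len(name) > 64:
        problems.append("name exceeds 64 characters")
    if any(ch.isspace() for ch in name):
        problems.append("name contains a space")
    if name and not _LETTERS_AND_DIGITS.fullmatch(name):
        problems.append("recommendation: use only digits and letters")
    return problems

print(check_task_name("tidb2ob01"))   # []
print(check_task_name("tidb to ob"))
```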

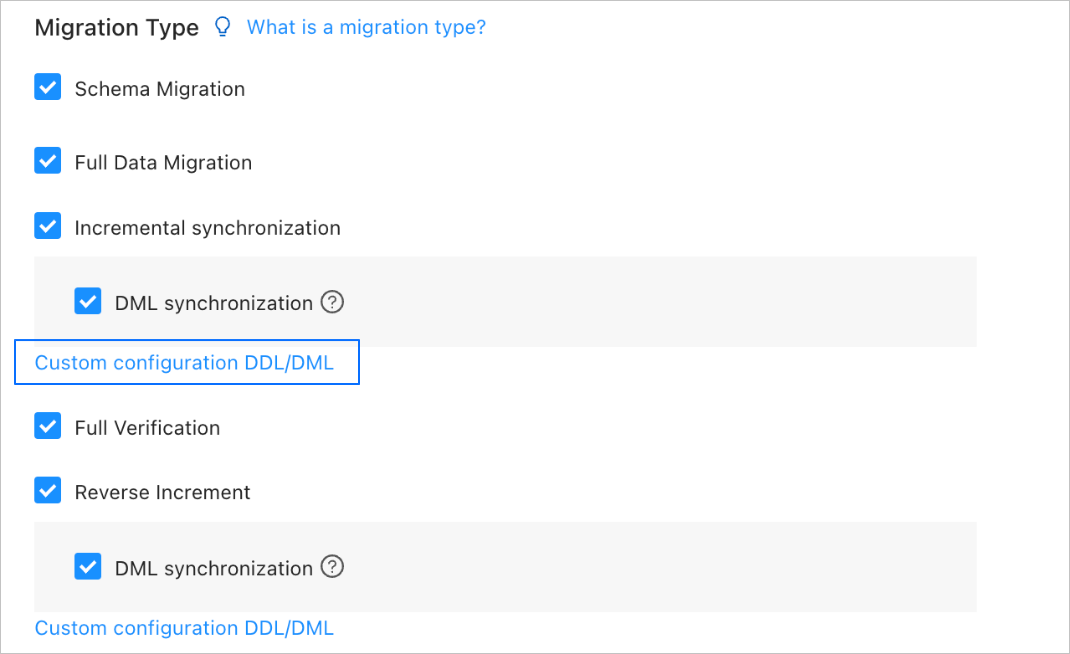

Click Next. On the Select Migration Type page, specify migration types for the current data migration task.

Supported migration types are schema migration, full migration, incremental synchronization, full verification, and reverse incremental synchronization.

Migration type

Description

Schema migration

After a schema migration task is started, the data transmission service migrates the definitions of database objects (such as tables, indexes, constraints, comments, and views) from the source database to the target database and automatically filters out temporary tables.

Full migration

After a full migration task is started, the data transmission service migrates existing data from tables in the source database to corresponding tables in the target database. If you select Full Migration, we recommend that you use the `ANALYZE` statement to collect the statistics of the TiDB database before data migration.

Incremental synchronization

After an incremental synchronization task is started, the data transmission service synchronizes changed data (data that is added, modified, or removed) from the source database to corresponding tables in the target database.

DML Synchronization is supported for Incremental Synchronization. You can select operations as needed. For more information, see Configure DDL/DML synchronization. If you create a TiDB data source without binding it to a Kafka data source, you cannot select Incremental Synchronization.

Full verification

After the full migration and incremental synchronization tasks are completed, the data transmission service automatically initiates a full verification task to verify the tables in the source and target databases.

If you select Full Verification, we recommend that you collect the statistics of the TiDB database and the MySQL tenant of OceanBase Database before full verification.

If you select Incremental Synchronization but do not select all DML statements in the DML Synchronization section, you cannot select Full Verification.

Reverse incremental synchronization

Data changes made in the target database after the business database switchover are synchronized to the source database in real time through reverse incremental synchronization.

Generally, incremental synchronization configurations are reused for reverse incremental synchronization. You can also customize the configurations for reverse incremental synchronization as needed.
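Two of the selection rules described above (Incremental Synchronization needs a Kafka-bound TiDB data source, and Full Verification needs all DML statements when Incremental Synchronization is selected) can be expressed as a small check. The identifiers below, including the DML option names `INSERT`, `UPDATE`, and `DELETE`, are assumptions for illustration and are not taken from the console or any API.

```python
ALL_DML = frozenset({"INSERT", "UPDATE", "DELETE"})  # assumed DML option names

def selection_problems(types, kafka_bound, dml=ALL_DML):
    """Return the rule violations for a set of selected migration types."""
    problems = []
    if "incremental_sync" in types and not kafka_bound:
        problems.append("Incremental Synchronization requires a TiDB data source bound to a Kafka data source")
    if {"incremental_sync", "full_verification"} <= set(types) and set(dml) != ALL_DML:
        problems.append("Full Verification requires all DML statements to be selected")
    return problems

print(selection_problems({"full_migration", "incremental_sync"}, kafka_bound=True))  # []
```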

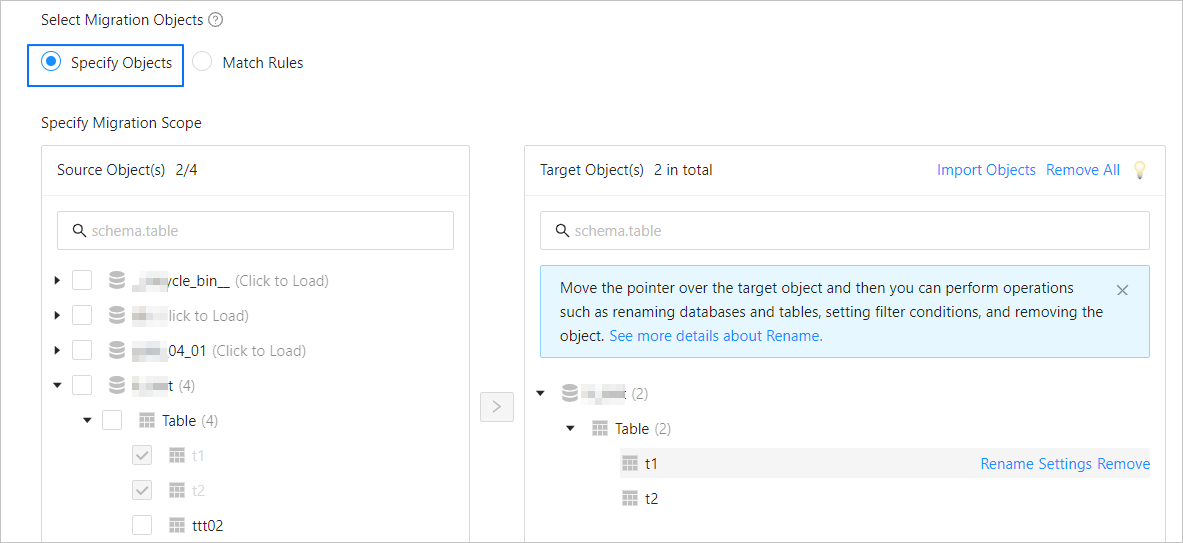

Click Next. On the Select Migration Objects page, specify migration objects for the data migration task.

You can select Specify Objects or Match Rules to specify the migration objects. This topic describes how to specify the migration objects by using Specify Objects. For information about matching rules, see Configure and modify matching rules.

Important: The names of tables to be migrated, as well as the names of columns in the tables, must not contain Chinese characters.

If a database or table name contains double dollar signs ($$), you cannot create the migration task.

In the Select Migration Objects section, select Specify Objects.

In the Source Object(s) list of the Specify Migration Scope section, select the objects to migrate. You can select tables and views of one or more databases.

Click > to add them to the Target Object(s) list.

The data transmission service allows you to import objects from text files, rename target objects, set row filters, view column information, and remove a single or all migration objects.

Note: When you select Match Rules to specify migration objects, object renaming is implemented based on the syntax of the specified matching rules. In the operation area, you can only set filter conditions. For more information, see Configure and modify matching rules.

Operation

Description

Import objects

In the list on the right, click Import Objects in the upper-right corner.

In the dialog box that appears, click OK.

Important: This operation will overwrite previous selections. Proceed with caution.

In the Import Objects dialog box, import the objects to be migrated.

You can import CSV files to rename databases or tables and set row filter conditions. For more information, see Download and import the settings of migration objects.

Click Validate.

After you import the migration objects, check their validity. Column field mapping is not supported at present.

After the validation succeeds, click OK.

Rename objects

The data transmission service allows you to rename migration objects. For more information, see Rename a database table.

Configure settings

The data transmission service allows you to filter rows by using `WHERE` conditions. For more information, see Use SQL conditions to filter data.

You can also view column information of the migration objects in the View Columns section.

Remove one or all objects

The data transmission service allows you to remove a single migration object or all migration objects that are added to the right-side list during data mapping.

Remove a single migration object

In the list on the right, move the pointer over the object that you want to remove, and click Remove to remove the migration object.

Remove all migration objects

In the list on the right, click Remove All in the upper-right corner. In the dialog box that appears, click OK to remove all migration objects.

Click Next. On the Migration Options page, configure the parameters.

Full migration

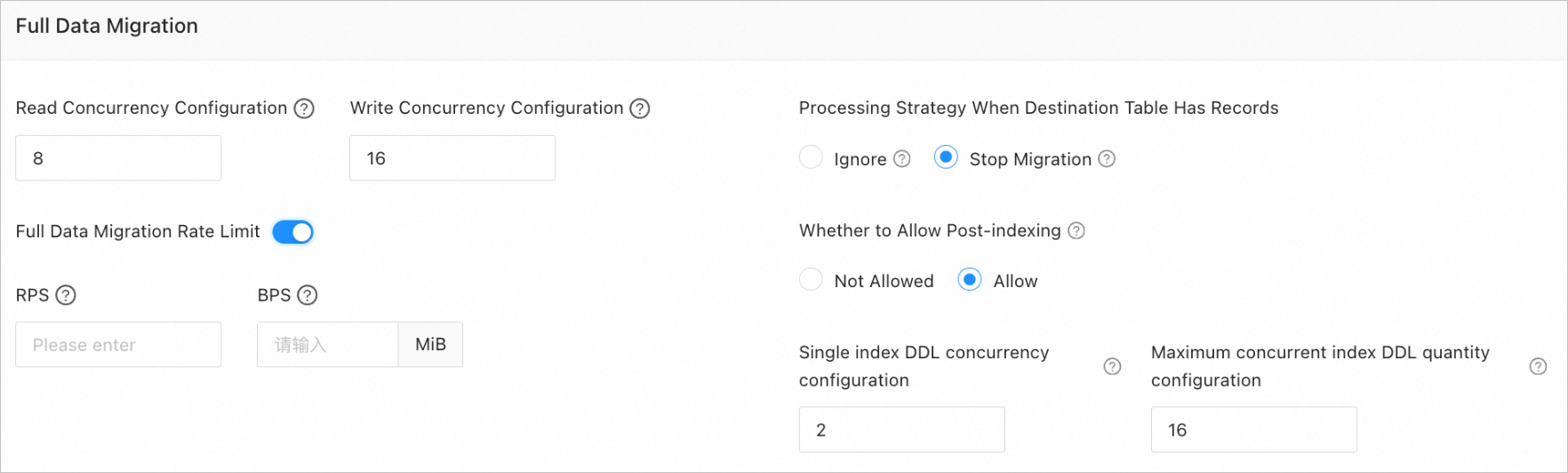

The following table describes the parameters for full migration, which are displayed only if you have selected Full Migration on the Select Migration Type page.

Parameter

Description

Read Concurrency

The concurrency for reading data from the source during full migration. The maximum value is 512. A high read concurrency may incur excessive stress on the source, affecting the business.

Write Concurrency

The concurrency for writing data to the target during full migration. The maximum value is 512. A high write concurrency may incur excessive stress on the target, affecting the business.

Full Migration Rate Limit

You can choose whether to limit the full migration rate as needed. If you choose to limit the full migration rate, you must specify the records per second (RPS) and bytes per second (BPS). The RPS specifies the maximum number of data rows migrated to the target per second during full migration, and the BPS specifies the maximum amount of data in bytes migrated to the target per second during full migration.

Note: The RPS and BPS values specified here are only for throttling. The actual full migration performance is subject to factors such as the settings of the source and target and the instance specifications.

Handle Non-empty Tables in Target Database

Valid values are Ignore and Stop Migration.

If you select Ignore, when the data to be inserted conflicts with existing data of a target table, the data transmission service logs the conflicting data while retaining the existing data.

Important: If you select Ignore, data is pulled in IN mode during full verification. In this case, verification is inapplicable if the target contains data that does not exist in the source, and the verification performance is downgraded.

If you select Stop Migration and a target table contains records, an error indicating that the migration is not supported is reported during full migration. In this case, you must process the data in the target table before continuing with the migration.

Important: If you click Restore in the dialog box that reports the error, the data transmission service ignores this error and continues to migrate data. Proceed with caution.

Post-Indexing

Specifies whether to create indexes after the full migration is completed. Post-indexing can shorten the time required for full migration. For more information about the considerations on post-indexing, see the description below.

Important: This parameter is displayed only if you have selected both Schema Migration and Full Migration on the Select Migration Type page.

Only non-unique key indexes can be created after the migration is completed.

If the target OceanBase database returns the following error during index creation, the data transmission service ignores the error and determines that the index is successfully created, without creating it again.

Error message in an OceanBase database in MySQL-compatible mode: Duplicate key name.

Error message in an Oracle tenant of OceanBase Database: name is already used by an existing object.

If the target is an OceanBase database and you select Allow for this parameter, the following parameters need to be set:

Single Index DDL Concurrency Configuration: the maximum number of concurrent DDL operations allowed for a single index. A larger value indicates higher resource consumption and faster data migration.

Maximum concurrent index DDL quantity configuration: the maximum number of post-indexing DDL operations that the system can call at a time.

If post-indexing is allowed, we recommend that you use a CLI client to modify the following parameters for business tenants based on the hardware conditions of OceanBase Database and your current business traffic:

    -- Specify the limit on the file memory buffer size.
    alter system set _temporary_file_io_area_size = '10' tenant = 'xxx';
    -- Disable throttling in OceanBase Database V4.x.
    alter system set sys_bkgd_net_percentage = 100;

Incremental synchronization

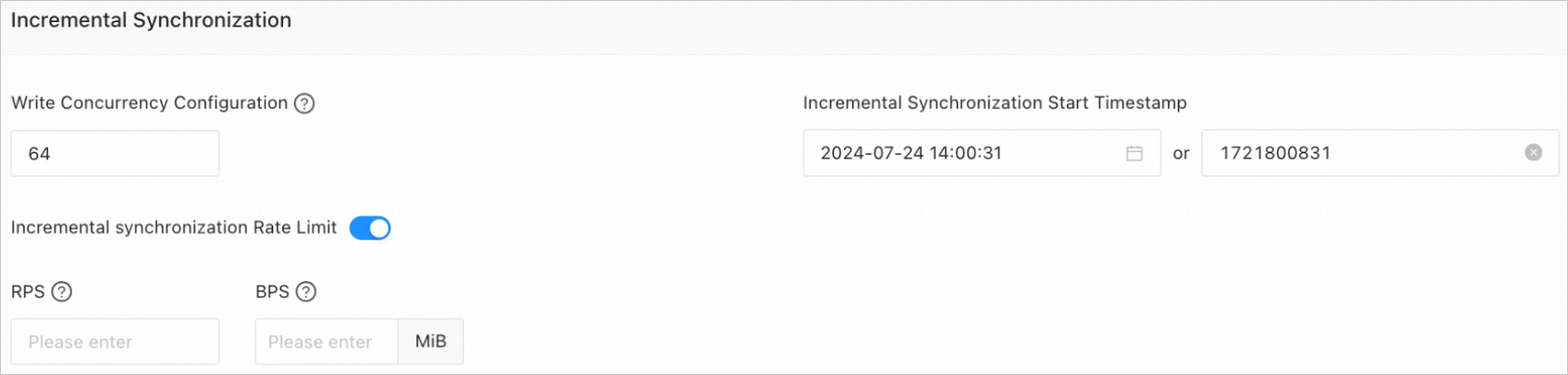

The following table describes the parameters for incremental synchronization, which are displayed only if you have selected Incremental Synchronization on the Select Migration Type page.

Parameter

Description

Write Concurrency

The concurrency for writing data to the target during incremental synchronization. The maximum value is 512. A high write concurrency may incur excessive stress on the target, affecting the business.

Incremental Synchronization Rate Limit

You can choose whether to limit the incremental synchronization rate as needed. If you choose to limit the incremental synchronization rate, you must specify the records per second (RPS) and bytes per second (BPS). The RPS specifies the maximum number of data rows synchronized to the target per second during incremental synchronization, and the BPS specifies the maximum amount of data in bytes synchronized to the target per second during incremental synchronization.

Note: The RPS and BPS values specified here are only for throttling. The actual incremental synchronization performance is subject to factors such as the settings of the source and target and the instance specifications.

Incremental Synchronization Start Timestamp

This parameter is not displayed if you have selected Full Migration on the Select Migration Type page.

If you have selected Incremental Synchronization but not Full Migration, specify a point in time after which the data is to be synchronized. The default value is the current system time. For more information, see Set an incremental synchronization timestamp.

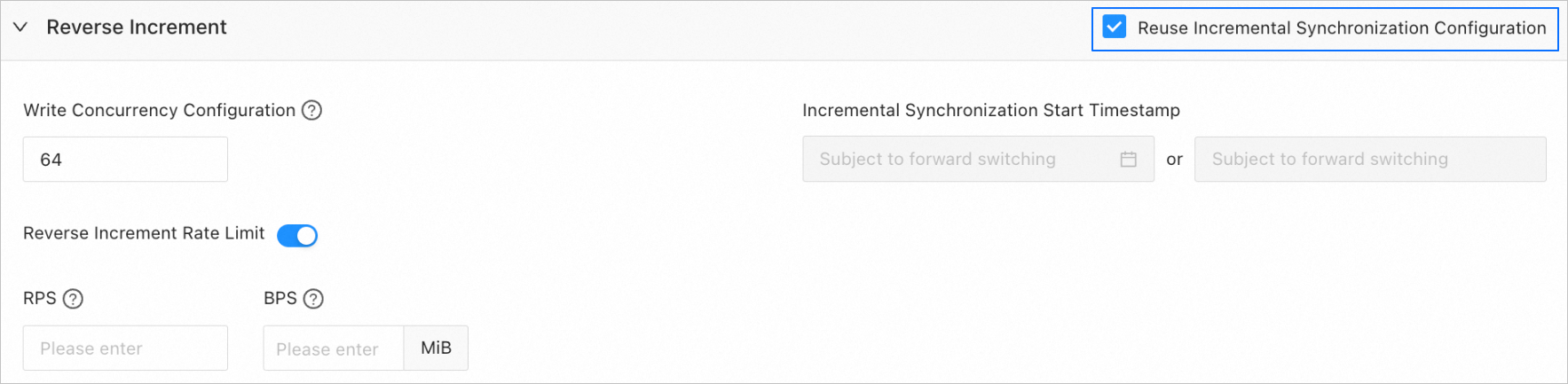

Reverse increment

The following table describes the parameters for reverse increment, which are displayed only if you have selected Reverse Increment on the Select Migration Type page. By default, incremental synchronization configurations are reused for reverse increment.

You can choose not to reuse the incremental synchronization configurations and configure reverse increment as needed.

Parameter

Description

Write Concurrency

The concurrency for writing data to the source during reverse increment. The maximum value is 512. A high concurrency may incur excessive stress on the source, affecting the business.

Reverse Increment Rate Limit

You can choose whether to limit the reverse increment rate as needed. If you choose to limit the reverse increment rate, you must specify the records per second (RPS) and bytes per second (BPS). The RPS specifies the maximum number of data rows synchronized to the source per second during reverse increment, and the BPS specifies the maximum amount of data in bytes synchronized to the source per second during reverse increment.

Note: The RPS and BPS values specified here are only for throttling. The actual reverse increment performance is subject to factors such as the settings of the source and target and the instance specifications.

Incremental Synchronization Start Timestamp

This parameter is not displayed if you have selected Full Migration on the Select Migration Type page.

If you have selected Incremental Synchronization but not Full Migration, the forward switchover start timestamp (if any) is used by default. This parameter cannot be modified.

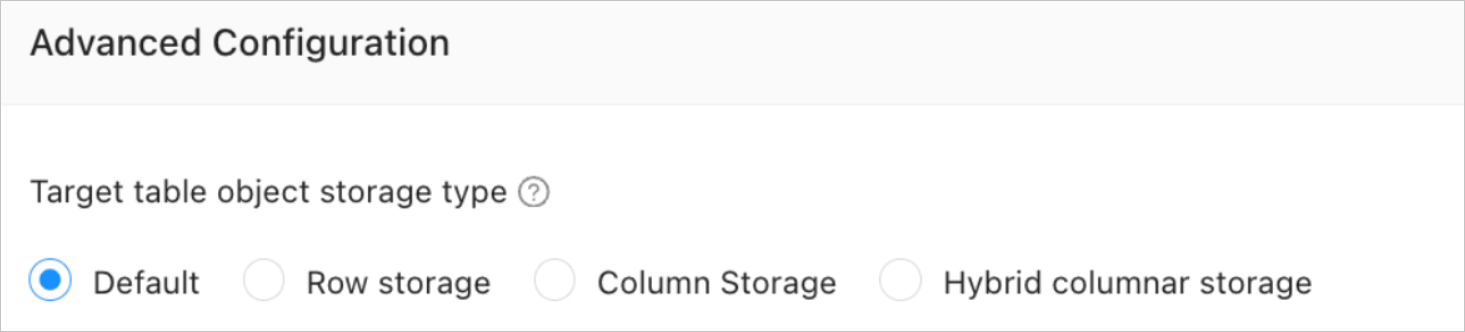
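The RPS and BPS limits in the rate limit parameters above act together: whichever limit is reached first bounds the throughput. The following sketch shows the arithmetic under that assumption. It is not the service's actual throttling algorithm, and the function name is hypothetical.

```python
def min_batch_seconds(rows: int, size_bytes: int, rps: float, bps: float) -> float:
    """Shortest time a batch may take so that neither the RPS nor the BPS limit is exceeded."""
    if rps <= 0 or bps <= 0:
        raise ValueError("rps and bps must be positive")
    return max(rows / rps, size_bytes / bps)

# 1,000 rows totalling 5,000,000 bytes, limited to 500 rows/s and 1,000,000 bytes/s.
print(min_batch_seconds(rows=1000, size_bytes=5_000_000, rps=500, bps=1_000_000))  # 5.0
```

In this example the row limit alone would allow the batch in 2 seconds, but the byte limit stretches it to 5 seconds, so wide rows are throttled by BPS and narrow rows by RPS.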

Advanced parameters

This section is displayed only if the target is an OceanBase database in MySQL-compatible mode V4.3.0 or later and you have selected Schema Migration on the Select Migration Type page.

This parameter specifies the storage type for target table objects during schema migration. The storage types supported for target table objects are Default, Row storage, Column storage, and Hybrid columnar storage. For more information, see default_table_store_format.

Note: The value Default means that other parameters are automatically set based on the parameter configurations of the target. Table objects in schema migration are written to the corresponding schemas based on the specified storage type.

Click Precheck to start a precheck on the data migration task.

During the precheck, the data transmission service checks the read and write privileges of the database users and the network connections of the databases. A data migration task can be started only after it passes all check items. If an error is returned during the precheck, you can perform the following operations:

Identify and troubleshoot the problem and then perform the precheck again.

Click Skip in the Actions column of the failed precheck item. In the dialog box that prompts the consequences of the operation, click OK.

After the precheck is passed, click Start Task.

If you do not need to start the task now, click Save. You can start the task later on the Migration Tasks page or by performing batch operations. For more information about batch operations, see Perform batch operations on data migration tasks.

The data transmission service allows you to modify the migration objects and their row filtering conditions when a migration task is running. For more information, see View and modify migration objects and their filter conditions. After the data migration task is started, it is executed based on the selected migration types. For more information, see View migration details.