By Gao Chao, nicknamed Longduo at Alibaba. Gao Chao is an expert in high-availability architectures, being the head of Alibaba's business check platform. Gao Chao was responsible for system assurance for the Double 11 Shopping Festival over the past four years. He has several years of experience in business data reconciliation and asset loss prevention and control.

Enterprise systems become increasingly complex as the business grows. A complex distributed system architecture is prone to remote call failures, message delivery failures, and concurrency bugs. These problems can cause data inconsistencies between systems, which hurt the experience and interests of users and damage platform assets. It is therefore important for an enterprise to keep its business systems stable. This article gives an overview of best practices for ensuring consistent business data.

A common solution to business data inconsistency is to configure a scheduled task that pulls data from a past period at fixed times and compares it to find errors. This offline approach, typically run as batch MapReduce jobs on Hadoop, is not time-efficient. E-commerce systems and other systems that require real-time performance may suffer great losses when problems are not identified promptly. Therefore, inconsistent data must be identified in real time by using an online check method.
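The scheduled offline comparison described above can be sketched as follows. This is a minimal illustration, not the author's implementation: the row layout (`id`, `status`, `updated_at`) and the function name `reconcile_window` are assumptions, and in-memory lists stand in for the two systems being compared.

```python
from datetime import datetime, timedelta


def reconcile_window(source_rows, target_rows, window_start, window_end):
    """Compare records from two systems that changed inside one time window.

    Each source row is a dict with the hypothetical keys "id", "status",
    and "updated_at". Returns (id, source_status, target_status) tuples
    for every record whose status differs or is missing in the target.
    """
    in_window = [
        r for r in source_rows if window_start <= r["updated_at"] < window_end
    ]
    target_by_id = {r["id"]: r for r in target_rows}
    mismatches = []
    for row in in_window:
        other = target_by_id.get(row["id"])
        other_status = other["status"] if other is not None else None
        if other_status != row["status"]:
            mismatches.append((row["id"], row["status"], other_status))
    return mismatches


# A scheduled task would call this once per window, for example every hour.
now = datetime(2020, 1, 1, 12, 0)
source = [
    {"id": 1, "status": "PAID", "updated_at": now - timedelta(minutes=30)},
    {"id": 2, "status": "PAID", "updated_at": now - timedelta(minutes=10)},
]
target = [{"id": 1, "status": "PAID"}]
print(reconcile_window(source, target, now - timedelta(hours=1), now))
# prints [(2, 'PAID', None)]
```

Record 2 went wrong ten minutes before the run, but it would be reported only when the next scheduled window is processed. That delay is the weakness that online checks are meant to remove.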

During online consistency checks, a data record is compared once upon creation. Consistency checks can be divided into pre-checks and post-checks.

Strictly speaking, post-checks are a quasi-real-time check method because the check lags behind the actual business action. Post-checks are decoupled from the business, so they can find inconsistent data in a quasi-real-time manner without affecting the business itself. They are also well suited to certain special scenarios, such as asynchronous business services. However, post-checks are not as time-efficient as pre-checks.
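One way to picture the decoupling is a delay queue: the business side only submits an event and returns, and the check system later runs every task whose delay has elapsed. The sketch below is an assumption-laden illustration. The class `PostCheckQueue`, its methods, and the event fields are hypothetical, and time is passed in explicitly so the example stays deterministic.

```python
import heapq


class PostCheckQueue:
    """Holds check tasks until their delay has elapsed (hypothetical helper)."""

    def __init__(self, delay_seconds):
        self.delay_seconds = delay_seconds
        self._heap = []  # entries are (due_time, sequence, event)
        self._seq = 0    # tie-breaker so events themselves are never compared

    def submit(self, event, now):
        # Called from the business side; it only enqueues and returns.
        heapq.heappush(self._heap, (now + self.delay_seconds, self._seq, event))
        self._seq += 1

    def run_due(self, now, check):
        # Called by the check system; runs every task that is due at `now`
        # and returns the events for which check(event) is false.
        failures = []
        while self._heap and self._heap[0][0] <= now:
            _, _, event = heapq.heappop(self._heap)
            if not check(event):
                failures.append(event)
        return failures


def amount_is_valid(event):
    # Example rule for this sketch only: amounts must not be negative.
    return event["amount"] >= 0


queue = PostCheckQueue(delay_seconds=5)
queue.submit({"order_id": 1, "amount": 100}, now=0)
queue.submit({"order_id": 2, "amount": -1}, now=1)
print(queue.run_due(now=3, check=amount_is_valid))   # prints []
print(queue.run_due(now=10, check=amount_is_valid))  # prints [{'order_id': 2, 'amount': -1}]
```

Because `submit` does no checking, a slow or failing check cannot block the business call. The cost is that the bad record is reported only after the delay.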

You may wonder what the purpose of a post-check is, since it checks data only after a business action is executed. Unlike pre-checks, post-checks cannot ensure that each data record is correct. However, post-checks are essential in many business scenarios, especially e-commerce, where a new business function moves from the routine and staging environments through the beta test environment to the online environment. In the staging and beta test environments, online traffic is introduced or data is simulated for testing. Only a limited volume of traffic or data is introduced so that any problem does not have a serious, global impact. In these scenarios, post-checks can verify the correctness of functions and data without affecting the online business, because they are not strongly coupled to it. After functions go online, post-checks can promptly detect inconsistent data to prevent errors from spreading.

Offline checking is inexpensive to develop, easy to use, and suitable in scenarios that do not require real-time data checking. Post-checks are suitable in scenarios with moderate requirements for real-time data checking. Pre-checks are suitable in scenarios with high requirements for real-time data checking and high levels of resource investment.

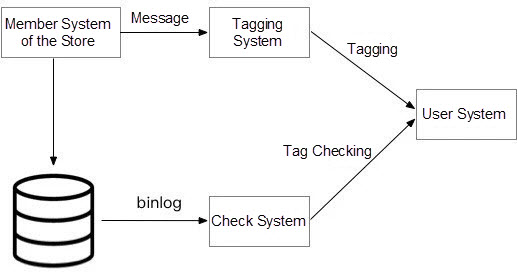

In this example, a store provides a membership service to allow customers to become members or cancel their membership through the store system, tagging system, and membership system, as shown below.

In this business scenario, after a buyer applies to become a member on the membership page of the store, a METAQ message for becoming a member is sent if the application is approved. Then, the downstream business system adds a tag to this buyer based on the METAQ message. When this buyer places an order in the store, the system checks whether the buyer has purchase priority based on the tag. When a buyer applies to cancel their membership, a METAQ message is sent to remove the tag from this buyer. The membership status (member or non-member) in the store system must be consistent with the tag status (tagged or untagged) in the user system. If they are inconsistent, a buyer who has canceled their membership but is still tagged may be able to buy products that are exclusive to members. This may cause losses to the store. Therefore, strong data consistency must be ensured through reconciliation. When inconsistent data is found, the related personnel must be notified to check the data and correct it when necessary.

Select a reconciliation system to meet the following requirements:

In this scenario, the business services are asynchronous in nature. After a buyer initiates a membership application to the membership system, this buyer is not immediately tagged in the user system. This makes real-time pre-check unsuitable in this scenario. The tag of this buyer can be queried only after a period of time. Therefore, post-check is suitable for checking the consistency between the membership status and the tag status.

Finally, real-time binlog data is pulled from the membership database of the store and sent to the check system. The check system parses the log data to retrieve the member ID of the to-be-tagged buyer. Then, after a period of time, the check system queries the tag status of this buyer in the user system and compares it with the membership status recorded in the log. An alert is triggered if they are inconsistent.
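The comparison between membership status and tag status can be sketched as below. Everything specific here is an assumption made for illustration: the parsed event layout (`member_id`, `status`), the status values `MEMBER` and `NON_MEMBER`, and the `is_tagged` and `alert` callables, which stand in for a query to the user system and for the alerting channel. The check system would call the function only after the delay has passed.

```python
def expected_tagged(binlog_event):
    """Derive the expected tag state from a parsed membership change event.

    The event layout and the status values are assumptions of this sketch.
    """
    return binlog_event["status"] == "MEMBER"


def check_membership_tag(binlog_event, is_tagged, alert):
    """Compare the log-recorded membership status with the tag status.

    is_tagged: callable(member_id) -> bool, stands in for the user system.
    alert: callable(message), stands in for the alerting channel.
    Returns True when the two systems agree.
    """
    member_id = binlog_event["member_id"]
    expected = expected_tagged(binlog_event)
    actual = is_tagged(member_id)
    if expected != actual:
        alert(
            f"member {member_id}: "
            f"membership={binlog_event['status']}, tagged={actual}"
        )
        return False
    return True


# buyer-1 canceled the membership, but the tag was never removed.
tags = {"buyer-1"}
alerts = []
event = {"member_id": "buyer-1", "status": "NON_MEMBER"}
print(check_membership_tag(event, lambda m: m in tags, alerts.append))  # prints False
print(alerts)  # prints ['member buyer-1: membership=NON_MEMBER, tagged=True']
```

Passing the tag lookup and the alert channel in as callables keeps the comparison rule separate from the systems it reads, which makes the rule easy to test with recorded events.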

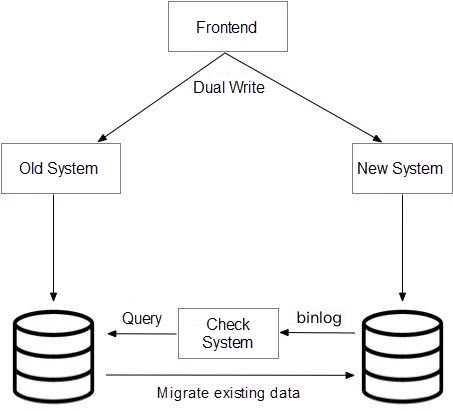

Data migration is often performed when old systems are replaced by new systems. Migration must be a step-by-step process because a large amount of data must be migrated without downtime. The migration process is divided into two subprocesses. The first subprocess is dual write to ensure data consistency between old and new systems. The second subprocess is the migration of existing data by slowly running background tasks. In the migration process, inconsistent data may result from the non-synchronization of some fields and disordered data updates. Therefore, data consistency must be checked during migration to promptly discover and fix data errors or bugs.

A consistency check is triggered when data is written to the new system. If data cannot be synchronized from the old system and, consequently, is never written to the new system, is a consistency check still triggered? The answer depends on the check boundary. Here, we assume that synchronization succeeds, so the check boundary starts at the write to the new system. If synchronization does fail, it is stopped and an error log is printed. Such a failure is outside the scope of the check system and is observed through the synchronization progress or the printed log.

One solution is to receive binlog data, which records database changes, from the new database, parse the log data, query the related data record in the old database by data ID, and compare the data content. In a dual write scenario, a data record may be modified multiple times, so the check must run in real time. Otherwise, the received log data may lag behind later data changes, causing a false positive in which the data queried in the old database appears inconsistent with the data in the new database.
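The lookup-and-compare step can be sketched as follows. Plain dictionaries stand in for queries to the old and new databases, and the function name, the `fields` parameter, and the result labels are hypothetical. The re-read of the current row in the new database is an assumption of this sketch rather than something the article prescribes. It is one simple way to tell a lagging log entry apart from a real inconsistency.

```python
def check_migrated_record(log_record, old_db, new_db, fields):
    """Check one change from the new database against the old database.

    log_record: dict parsed from a change log entry; must contain "id".
    old_db / new_db: dicts mapping id -> row dict, stand-ins for real queries.
    fields: names of the columns that both systems are expected to share.
    Returns "consistent", "stale_log", or "inconsistent".
    """
    def project(row):
        # Keep only the shared fields so that extra columns are ignored.
        return None if row is None else {f: row.get(f) for f in fields}

    old_row = project(old_db.get(log_record["id"]))
    if project(log_record) == old_row:
        return "consistent"
    # The log entry may lag behind later writes, so re-read the current
    # row in the new database before reporting (assumption of this sketch).
    if project(new_db.get(log_record["id"])) == old_row:
        return "stale_log"
    return "inconsistent"


old_db = {7: {"id": 7, "price": 120, "title": "cup"}}
new_db = {7: {"id": 7, "price": 120, "title": "cup"}}
lagging_log = {"id": 7, "price": 100, "title": "cup"}
print(check_migrated_record(lagging_log, old_db, new_db, ["price", "title"]))
# prints stale_log
```

Here the log entry still carries the earlier price of 100, while both databases already hold 120. Comparing the log entry alone would raise a false alert, whereas the re-read shows that the two systems agree.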
