By Zhaofeng Zhou (Muluo)

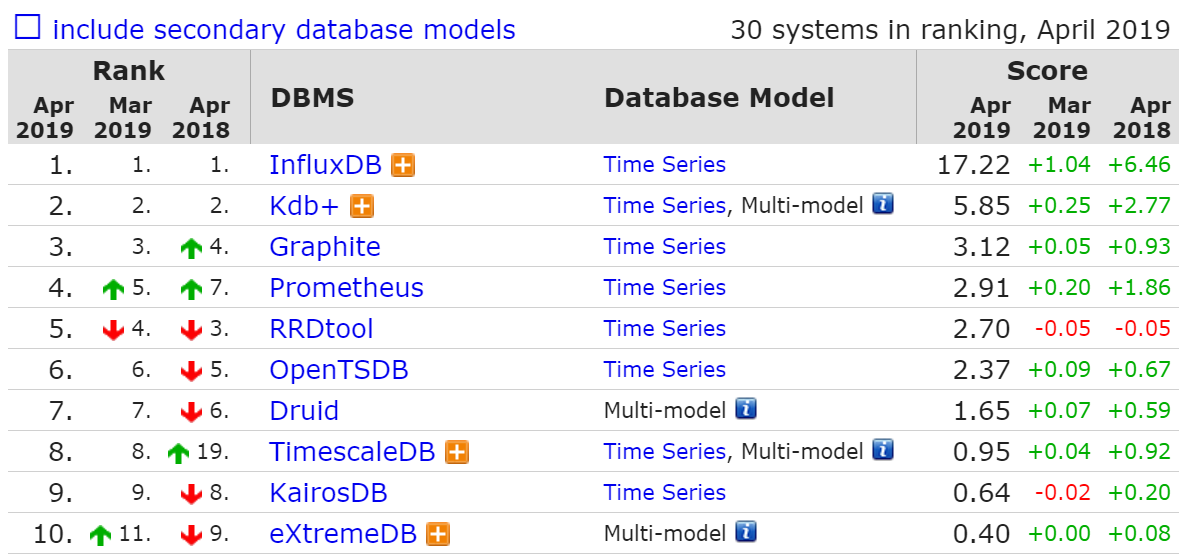

With the growth of IoT in recent years, the volume of time series data has exploded. According to the database category trends tracked by DB-Engines over the past two years, time series databases have grown rapidly. The major open source time series databases are implemented differently, and none of them is perfect. However, their strengths can be combined when designing a better time series database.

Table Store is a distributed NoSQL database developed by Alibaba Cloud. It features a multi-model design that includes the same Wide Column model as Bigtable, as well as a Timeline model for message data. In terms of storage model, data size, and write and query capabilities, it is well suited to time series data scenarios. However, because Table Store is a general-purpose database, time series storage can make full use of the underlying database only if the table schema and the computing integration are designed specifically for it, as OpenTSDB does with its RowKey design and UID encoding on HBase.

This article covers architecture. It focuses on the data model definition and core processing flow for time series data, and on the architecture for building time series data storage on Table Store. A later article on solutions will provide an efficient schema and index design for storing time series data and metadata. A final article on computing will provide several designs for stream computing and time series analysis.

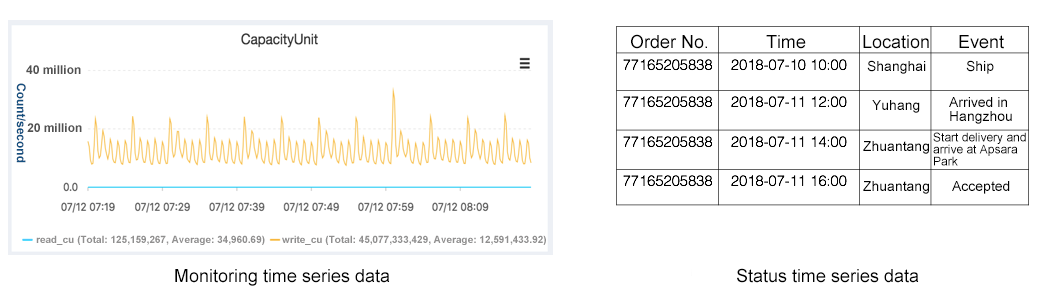

Time series data falls into two main types: 'monitoring' time series data and 'status' time series data. Current open source time series databases all focus on the monitoring type and are optimized for the characteristics of data in that scenario. The two types correspond to different scenarios. As its name suggests, the monitoring type corresponds to monitoring scenarios, while the status type covers other scenarios, such as tracing and abnormal status recording. Package tracking, one of the most familiar examples, produces status time series data.

Both types are classified as 'time series' because they are fully consistent in data model definition, data collection, storage, and computing, so the same database and the same technical architecture can be abstracted to serve both.

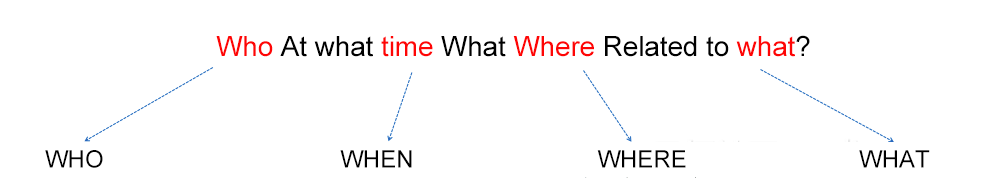

Before defining the time series data model, we first make an abstract representation of the time series data.

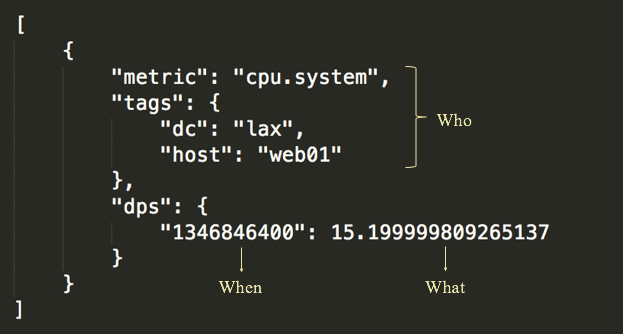

The above is an abstract representation of the time series data. Each open source time series database has its own definition of the time series data model, which defines the time series data for monitoring. Take the OpenTSDB data model as an example:

The monitoring time series data model definition includes:

Monitoring time series data is the most typical type of time series data, and its characteristics determine how these databases store and compute it. Compared with status time series data, it allows specific optimizations in computing and storage. For example, aggregation uses a set of numerical aggregate functions, and storage uses specially optimized compression algorithms. In the data model, monitoring time series data does not usually need to express location, that is, spatiotemporal information. Overall, however, the model is consistent with our unified abstract representation of time series data.

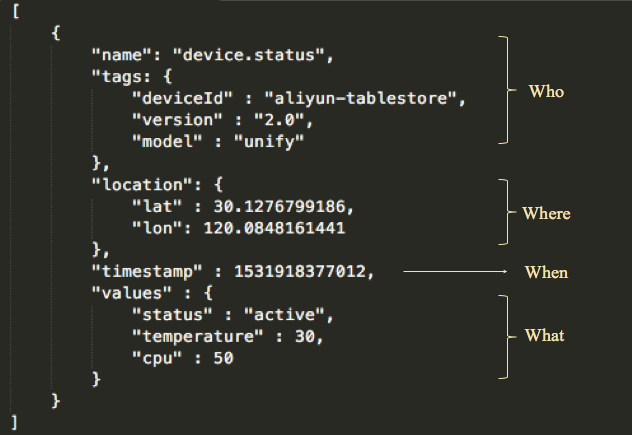

Starting from the monitoring time series data model and following the abstract representation described above, we can define a more complete time series data model:

The definition includes:

This is a more complete time series data model. It differs from OpenTSDB's model for monitoring time series data in two main ways: first, the metadata has one more dimension, location; second, each point can carry more kinds of values.
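The model can be written down as a few small types. This is only a sketch: the class names, the millisecond timestamp unit, and the (latitude, longitude) encoding of location are assumptions made for illustration, not Table Store definitions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple, Union

Value = Union[int, float, str, bool]


@dataclass(frozen=True)
class TimelineMeta:
    """Metadata that identifies one individual: name + tags + location."""
    name: str
    tags: Tuple[Tuple[str, str], ...] = ()
    # Assumed encoding: (latitude, longitude); None when location is not needed.
    location: Optional[Tuple[float, float]] = None

    @staticmethod
    def of(name: str, tags: Optional[Dict[str, str]] = None,
           location: Optional[Tuple[float, float]] = None) -> "TimelineMeta":
        # Sort the tags so that the same tag set always yields the same identity.
        return TimelineMeta(name, tuple(sorted((tags or {}).items())), location)


@dataclass
class Point:
    timestamp: int            # assumed unit: epoch milliseconds
    values: Dict[str, Value]  # several named values per point


@dataclass
class Timeline:
    meta: TimelineMeta
    points: List[Point] = field(default_factory=list)

    def append(self, timestamp: int, **values: Value) -> None:
        self.points.append(Point(timestamp, dict(values)))


# Hypothetical status-type timeline for a tracked vehicle.
truck = TimelineMeta.of("vehicle.status", {"plate": "A123", "fleet": "east"}, (30.27, 120.15))
timeline = Timeline(truck)
timeline.append(1_546_300_800_000, speed=62.5, state="moving")
```

Because the metadata is immutable and hashable, it can serve directly as the key that maps an individual to its timeline.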

Time series data has its own specific query and computing methods, which roughly include the following types:

Timeline retrieval

According to the data model definition, name + tags + location can be used to locate an individual. Each individual has its own timeline, and the points on the timeline are timestamps and values. For time series data queries, the timeline needs to be located first, which is a process of retrieval based on a combination of one or more values of the metadata. You can also drill down based on the association of metadata.
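Retrieval by a combination of metadata values is commonly served by an inverted index. The sketch below is a toy in-memory version, not how Table Store or Elasticsearch implements it; `TagIndex`, its methods, and the reserved `__name__` key are hypothetical names.

```python
from collections import defaultdict
from typing import Dict, Optional, Set, Tuple


class TagIndex:
    """Toy inverted index: (key, value) -> ids of the timelines that carry it."""

    def __init__(self) -> None:
        self._postings: Dict[Tuple[str, str], Set[str]] = defaultdict(set)

    def add(self, timeline_id: str, name: str, tags: Dict[str, str]) -> None:
        # The name is indexed like a tag under a reserved key.
        self._postings[("__name__", name)].add(timeline_id)
        for key, value in tags.items():
            self._postings[(key, value)].add(timeline_id)

    def search(self, name: Optional[str] = None,
               tags: Optional[Dict[str, str]] = None) -> Set[str]:
        terms = [("__name__", name)] if name is not None else []
        terms += list((tags or {}).items())
        if not terms:
            return set()
        # A timeline matches only if it appears in every posting list.
        postings = [self._postings.get(term, set()) for term in terms]
        return set.intersection(*postings)


index = TagIndex()
index.add("t1", "cpu", {"host": "a", "region": "east"})
index.add("t2", "cpu", {"host": "b", "region": "east"})
index.add("t3", "mem", {"host": "a", "region": "east"})
matches = index.search(name="cpu", tags={"region": "east"})
```

Drilling down corresponds to adding one more term to the search, which can only narrow the result set.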

Time range query

After the timeline has been located through retrieval, the timeline is queried. There are very few queries on single time points on a timeline, and queries are usually on all points in a continuous time range. Interpolation is usually done for missing points in this continuous time range.
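As an illustration, a range query with interpolation over one timeline might look like the following. Linear interpolation is an assumed choice here (other fill policies exist), and `range_query` is a hypothetical helper that expects the points sorted by timestamp.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple


def range_query(points: List[Tuple[int, float]], start: int, end: int,
                step: int) -> List[Tuple[int, Optional[float]]]:
    """Return one point per step in [start, end), interpolating missing ones.

    A value is None when the timestamp lies outside the stored points,
    so there is nothing to interpolate between.
    """
    timestamps = [ts for ts, _ in points]
    result: List[Tuple[int, Optional[float]]] = []
    for ts in range(start, end, step):
        i = bisect_left(timestamps, ts)
        if i < len(points) and timestamps[i] == ts:
            result.append((ts, points[i][1]))            # stored point
        elif 0 < i < len(points):
            (t0, v0), (t1, v1) = points[i - 1], points[i]
            ratio = (ts - t0) / (t1 - t0)
            result.append((ts, v0 + (v1 - v0) * ratio))  # linear interpolation
        else:
            result.append((ts, None))                    # outside stored range
    return result


stored = [(0, 10.0), (10, 20.0), (30, 40.0)]  # the point at t=20 is missing
filled = range_query(stored, 0, 40, 10)
```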

Aggregation

A query can be made for a single timeline or multiple timelines. For range queries for multiple timelines, the results are usually aggregated. This aggregation is for values at the same time point on different timelines, commonly referred to as "post-aggregation."

The opposite of "post-aggregation" is "pre-aggregation", which is the process of aggregating multiple timelines into one timeline before time series data storage. Pre-aggregation computes the data and then stores it, while post-aggregation queries the stored data and then computes it.
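The difference between the two is only where the same computation runs. A minimal sketch, with plain dictionaries standing in for the storage layer and `aggregate` as a hypothetical helper:

```python
from collections import defaultdict
from typing import Callable, Iterable, List, Tuple

Points = List[Tuple[int, float]]


def aggregate(timelines: Iterable[Points],
              func: Callable[[List[float]], float] = sum) -> Points:
    """Merge values that share a timestamp across timelines into one timeline."""
    buckets = defaultdict(list)
    for points in timelines:
        for ts, value in points:
            buckets[ts].append(value)
    return [(ts, func(values)) for ts, values in sorted(buckets.items())]


host_a = [(0, 1.0), (60, 2.0)]
host_b = [(0, 3.0), (60, 5.0)]

# Post-aggregation: both timelines are stored, and the query computes the result.
raw_store = {"host_a": host_a, "host_b": host_b}
post = aggregate(raw_store.values())

# Pre-aggregation: the result is computed first, and only the result is stored.
pre_store = {"all_hosts": aggregate([host_a, host_b])}
```

Pre-aggregation makes reads cheap but fixes the grouping at write time, while post-aggregation keeps every timeline available for grouping that was not planned in advance.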

Downsampling

The computational logic of downsampling is similar to that of aggregation. The difference is that downsampling is for a single timeline instead of multiple timelines. It aggregates the data points in a time range on a single timeline. One of the primary purposes of downsampling is to display the data points in a large time range. Another is to reduce storage costs.
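Downsampling can be sketched as grouping the points of one timeline into fixed-width time buckets and reducing each bucket to a single value. The `downsample` helper and the default of averaging are assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean
from typing import Callable, List, Tuple


def downsample(points: List[Tuple[int, float]], interval: int,
               func: Callable[[List[float]], float] = mean) -> List[Tuple[int, float]]:
    """Reduce one timeline to one point per interval-wide bucket."""
    buckets = defaultdict(list)
    for ts, value in points:
        # Align each point to the start of the bucket that contains it.
        buckets[ts - ts % interval].append(value)
    return [(start, func(values)) for start, values in sorted(buckets.items())]


raw = [(0, 1.0), (20, 3.0), (40, 5.0), (60, 7.0), (100, 9.0)]
per_minute = downsample(raw, 60)
```

Five raw points become two stored points, which is where both the display and the storage savings come from.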

Analysis

Analysis is done to extract more value from the time series data. There is a special research field called "time series analysis."

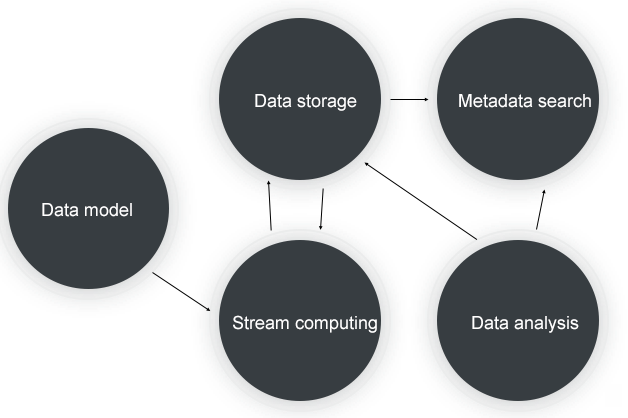

The core procedure of time series data processing is shown above, and includes:

Let us now have a look at the selection of products that can be used in these core processes.

Data storage

Time series data is typical non-relational data. It is characterized by high concurrency, high throughput, large data volume, and a write-heavy, read-light workload. The query mode is usually a range query. These characteristics are well suited to NoSQL databases. Several popular open source time series databases use a NoSQL database as the data storage layer, such as OpenTSDB on HBase and KairosDB on Cassandra. Therefore, in terms of product selection for "data storage", we can select an open source distributed NoSQL database such as HBase or Cassandra, or a cloud service such as Alibaba Cloud's Table Store.

Stream computing

For stream computing, we can use open source products such as JStorm, Spark Streaming, and Flink, or Alibaba's Blink and the cloud product StreamCompute.

Metadata search

The metadata for timelines is also large in volume, so we first consider using a distributed database. In addition, because the query mode needs to support retrieval, the database needs to support an inverted index and a location index, so open source Elasticsearch or Solr can be used.

Data analysis

Data analysis requires a powerful distributed computing engine. We can select the open source software Spark, the cloud product MaxCompute, or a serverless SQL engine such as Presto or the cloud product Data Lake Analytics.

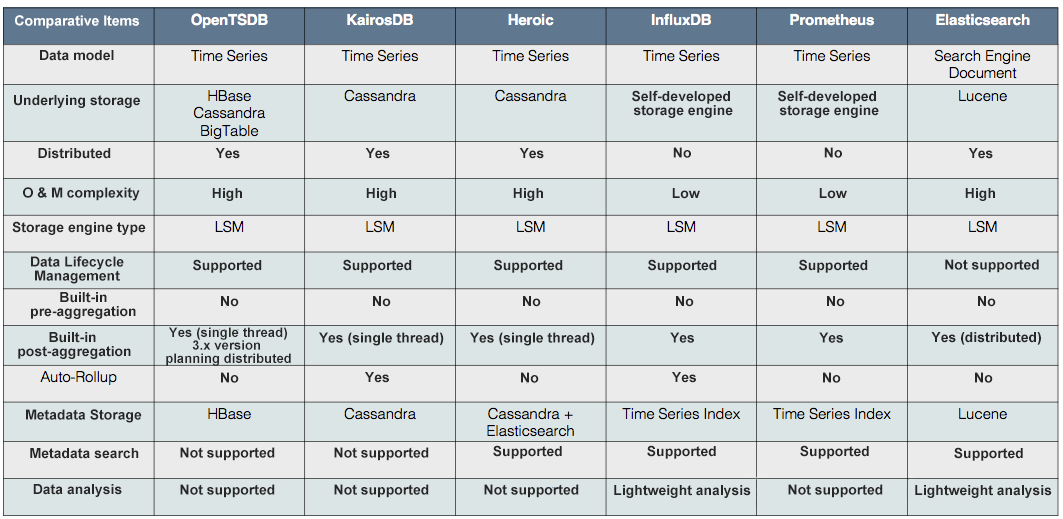

Based on database development trends on DB-Engines, we see that time series databases have developed rapidly over the past two years, and a number of excellent open source time series databases have emerged. Each implementation of major time series databases has its own merits. Below is a comprehensive comparison of these databases from a number of dimensions:

As a distributed NoSQL database developed by Alibaba Cloud, Table Store uses the same Wide Column data model as Bigtable. The product is well suited to time series data scenarios in terms of storage model, data size, and write and query capabilities. It already supports monitoring time series products such as CloudMonitor, status time series products such as AliHealth's drug tracking, and core services such as postal package tracing. There is also a complete computing ecosystem to support the computing and analysis of time series data. Our future plans include specific optimizations for time series scenarios in metadata retrieval, time series data storage, computing and analysis, and cost reduction.

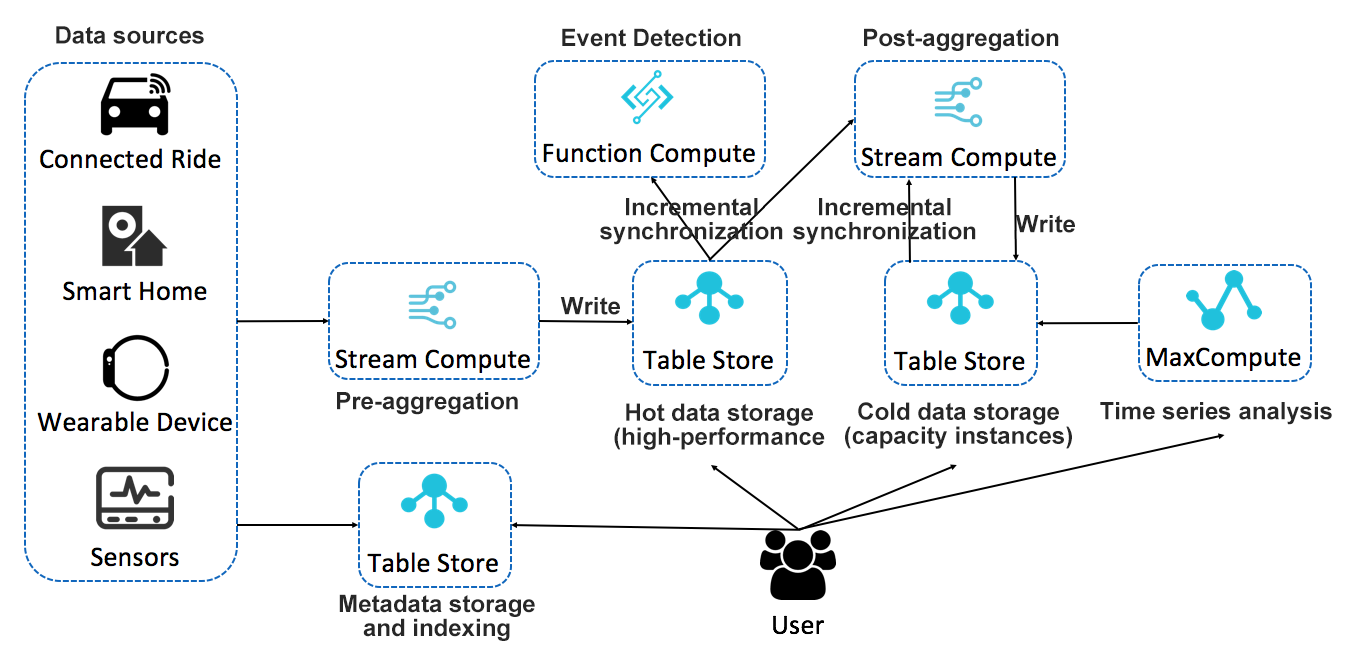

The above is a complete architecture for time series data storage, computing, and analysis based on Table Store. This is a serverless architecture that provides all the features needed for a complete time series scenario by combining cloud products. Each module has a distributed architecture, providing powerful storage and computing capabilities, and resources can be expanded dynamically. Each component can also be replaced with other similar cloud products. The architecture is flexible and has several advantages over open source time series databases. The core advantages are analyzed below:

Separation of storage and compute

Separation of storage and compute is a leading form of technical architecture. Its core advantage is more flexible configuration of computing and storage resources, which brings more flexible costs, better load balancing, and better data management. For users to actually benefit from it, the separation has to be offered as a product in a cloud environment.

Table Store implements separation of storage and compute in both technical architecture and product form, and can freely allocate storage and computing resources at a relatively low cost. This is especially important in time series data scenarios, where computing is relatively constant yet storage volume grows linearly. The primary way to optimize costs is to allocate constant computing resources and infinitely scalable storage, so that storage can grow without paying for extra computing resources.

Separation of cold/hot data

A notable characteristic of time series data is the clear distinction between hot and cold data access, with recently written data being accessed more frequently. Given this characteristic, storing hot data on a medium with higher IOPS greatly improves overall query efficiency. Table Store provides two types of instances, high-performance instances and capacity instances, which correspond to SSD and SATA storage media respectively. This lets users freely allocate tables with different specifications for data of different levels of precision and with different performance requirements for query and analysis. For example, high-performance instances suit high-concurrency, low-latency queries and interactive data analysis that requires higher speed, while capacity instances suit cold data storage, low-frequency queries, time series data analysis, and offline computing.

For each table, the TTL of the data can be freely defined. For example, for a high-precision table, a relatively short TTL can be configured. For a low-precision table, a longer TTL can be configured.
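One way to picture this layout is a small routing table that maps each precision level to a TTL and an instance type, plus a rule for choosing which table serves a query. Everything in this sketch is hypothetical: the table names, precisions, TTLs, and the `pick_table` rule are illustrative assumptions, not Table Store configuration or API.

```python
from dataclasses import dataclass
from typing import List

DAY = 86_400  # seconds


@dataclass(frozen=True)
class TableSpec:
    name: str
    precision_s: int  # seconds between stored points
    ttl_s: int        # how long rows are retained
    instance: str     # "high-performance" (SSD) or "capacity" (SATA)


# Hypothetical layout: the finer the precision, the shorter the TTL.
TABLES = [
    TableSpec("metrics_10s", 10, 7 * DAY, "high-performance"),
    TableSpec("metrics_5m", 300, 90 * DAY, "high-performance"),
    TableSpec("metrics_1h", 3_600, 3 * 365 * DAY, "capacity"),
]


def pick_table(query_start: int, now: int, tables: List[TableSpec] = TABLES) -> TableSpec:
    """Return the highest-precision table whose TTL still covers query_start."""
    age = now - query_start
    candidates = [t for t in tables if t.ttl_s >= age]
    if not candidates:
        raise ValueError("query starts before the oldest retained data")
    return min(candidates, key=lambda t: t.precision_s)


now = 1_000_000_000
recent = pick_table(now - 3_600, now)
last_month = pick_table(now - 30 * DAY, now)
last_year = pick_table(now - 365 * DAY, now)
```

Under these assumed settings, a query over the last hour reads the 10-second table, while a query reaching back a year falls through to the hourly table on a capacity instance.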

The bulk of the storage is for cold data. For this less frequently accessed data, we will further reduce storage costs by using erasure coding and an extreme compression algorithm.

Closed loop of data flow

Stream computing is the core computing scenario for time series data. It performs pre-aggregation and post-aggregation on the data. A common monitoring system architecture places stream computing in front of storage, where all pre-aggregation and downsampling are performed. That is, the data is processed before it is stored, and only the result is stored. No further downsampling is needed after storage, and at most post-aggregation is needed at query time.

Table Store is deeply integrated with Blink and can already be used as a Blink dimension table and result table. Support for use as a source table has been developed and is ready for release. Table Store can then serve as both the source and the sink of Blink, so the entire data stream forms a closed loop and the computing configuration becomes more flexible. Raw data is cleansed and pre-aggregated after entering Blink and is then written to the hot data table. This data can automatically flow back into Blink for post-aggregation, and backtracking over historical data for a certain period of time is supported. The results of post-aggregation can be written to cold storage.

In addition to integrating with Blink, Table Store can also integrate with Function Compute for event programming, and can enable real-time abnormal status monitoring in time series scenarios. It can also read incremental data through Stream APIs to perform custom analyses.

Big data analysis engine

Table Store deeply integrates with distributed computing engines developed by Alibaba Cloud, such as MaxCompute (formerly ODPS). MaxCompute can directly read the data on Table Store to perform an analysis, eliminating the ETL process for data.

The entire analysis process is currently undergoing several optimizations, for example, accelerating queries through indexes and providing more operators that can be pushed down to the storage layer.

Service capabilities

In summary, Table Store's service capabilities are characterized by zero-cost integration, out-of-the-box functionality, global deployment, multi-language SDK, and fully managed services.

Metadata storage and retrieval

Metadata is also a very important component of time series data. It is much smaller than the time series data itself in volume, but its queries are much more complex.

From the definition we provided above, metadata can be divided primarily into 'Tags' and 'Location'. Tags are mainly used for multidimensional retrieval, while Location is mainly used for location retrieval. So, for efficient retrieval from the underlying storage, Tags need an inverted index, while Location needs a location index. The number of timelines in a service-level monitoring system or tracing system is on the order of tens of millions to hundreds of millions, or even higher. Metadata therefore also requires a distributed retrieval system that provides high concurrency and low latency. A common implementation in the industry is to use Elasticsearch for the storage and retrieval of metadata.

Table Store is a general-purpose distributed NoSQL database that supports multiple data models. The data models currently available include Wide Column (BigTable) and Timeline (message data model).

In applications of similar database products in the industry (such as HBase and Cassandra), time series data is a very important field. Table Store is constantly exploring the field of time series data storage. We are also constantly improving in the building of closed loops for stream computing data, data analysis optimization, and metadata retrieval, and we strive to provide a unified time series data storage platform.
