By Li Wei (Apache RocketMQ Committer, RocketMQ Python Client Project Owner, Apache Doris Contributor, Tencent Distributed Message Queue (TDMQ) Senior Development Engineer, and RocketMQ Distributed Message Middleware (Core Principles and Best Practices) Author)

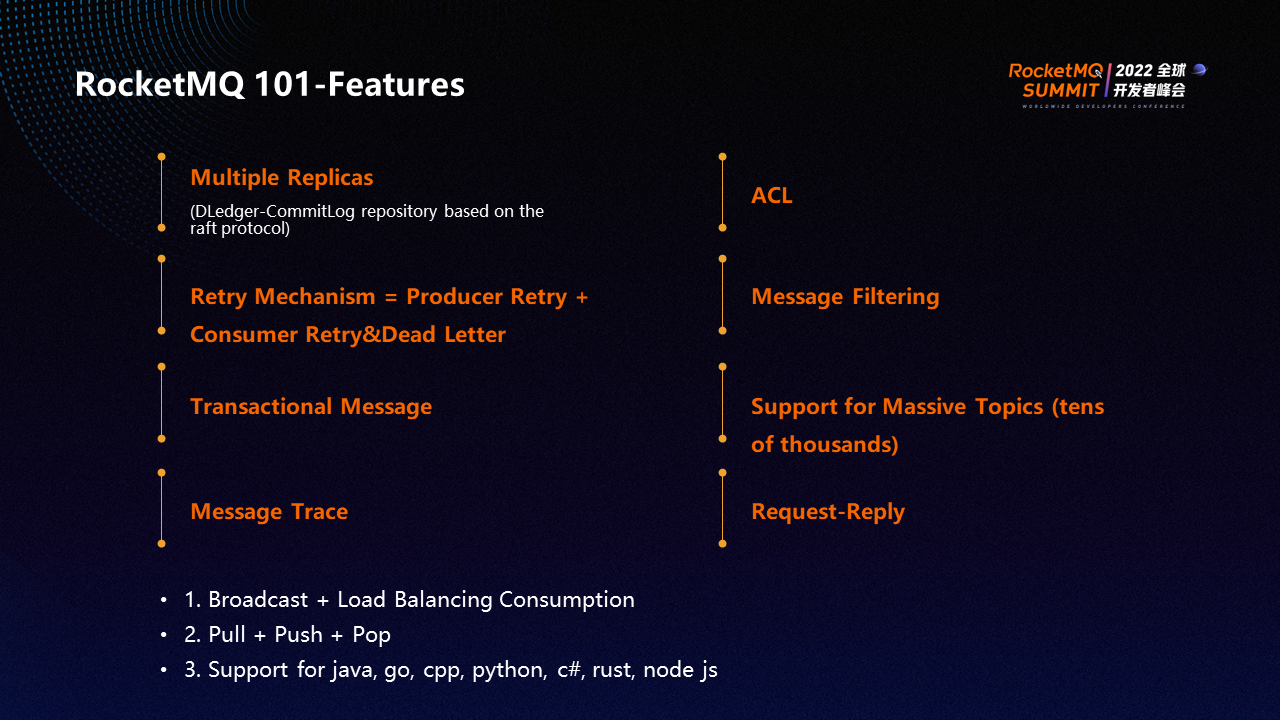

RocketMQ has many outstanding features. At the storage layer, it supports multi-replica storage through DLedger, a commit log repository based on the Raft protocol that keeps multiple replicas consistent.

The ACL authentication mechanism determines which producers can produce messages and which consumer groups can consume them. Message filtering is performed on the server.

A transactional message is a producer-side transaction implemented by RocketMQ. The producer sends a transactional message to the broker and then executes the local transaction. If the local transaction succeeds, the producer sends a commit event to the broker, and the message becomes available to consumers. If the local transaction fails, the producer sends a rollback event, and consumers never see the message.
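The commit and rollback flow can be sketched with a small in-memory model. This is a minimal illustration, not the RocketMQ client API: `ToyBroker`, `send_in_transaction`, and the message IDs are hypothetical names, and the uncommitted message is called a "half" message here, following common RocketMQ terminology.

```python
from enum import Enum


class TxState(Enum):
    COMMIT = "commit"
    ROLLBACK = "rollback"


class ToyBroker:
    """In-memory stand-in for a broker (hypothetical, not the RocketMQ API)."""

    def __init__(self):
        self.half = {}      # msg_id -> body, hidden from consumers
        self.visible = []   # bodies that consumers are allowed to read

    def send_half(self, msg_id, body):
        self.half[msg_id] = body

    def end_transaction(self, msg_id, state):
        body = self.half.pop(msg_id)
        if state is TxState.COMMIT:
            self.visible.append(body)
        # On ROLLBACK the half message is simply discarded.


def send_in_transaction(broker, msg_id, body, local_transaction):
    """Send a half message, run the local transaction, then commit or roll back."""
    broker.send_half(msg_id, body)
    try:
        local_transaction()
        state = TxState.COMMIT
    except Exception:
        state = TxState.ROLLBACK
    broker.end_transaction(msg_id, state)
    return state


def failing_transaction():
    raise RuntimeError("local database write failed")


broker = ToyBroker()
send_in_transaction(broker, "m1", "order-1 created", lambda: None)
send_in_transaction(broker, "m2", "order-2 created", failing_transaction)
print(broker.visible)  # only the committed message is visible
```

The sketch leaves out the case where the commit or rollback never reaches the broker, which a real deployment also has to handle.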

Request-Reply is similar to a synchronous RPC call. The same business logic is implemented with messages: the sender waits until the reply arrives, which reproduces a synchronous RPC call and unifies the logic of calling an API and sending a message.
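One common way to build request-reply on top of messages is to tag each request with a correlation ID and block until a reply with the same ID arrives. The sketch below assumes that design and uses in-process queues in place of topics. `request`, `replier`, and the message layout are hypothetical and do not reflect the RocketMQ client interface.

```python
import queue
import threading
import uuid

request_queue = queue.Queue()   # stands in for the request topic
reply_boxes = {}                # correlation id -> one-slot reply queue


def replier(handler):
    """Consume requests and publish a reply under the same correlation id."""
    while True:
        msg = request_queue.get()
        if msg is None:          # sentinel: stop the loop
            break
        box = reply_boxes.get(msg["correlation_id"])
        if box is not None:      # the requester may have timed out already
            box.put(handler(msg["body"]))


def request(body, timeout=2.0):
    """Send a message and block until the matching reply arrives."""
    correlation_id = str(uuid.uuid4())
    box = queue.Queue(maxsize=1)
    reply_boxes[correlation_id] = box
    request_queue.put({"correlation_id": correlation_id, "body": body})
    try:
        return box.get(timeout=timeout)   # raises queue.Empty on timeout
    finally:
        reply_boxes.pop(correlation_id, None)


worker = threading.Thread(target=replier, args=(str.upper,), daemon=True)
worker.start()
print(request("ping"))
request_queue.put(None)   # stop the replier
worker.join()
```

From the caller's side, `request("ping")` looks like an ordinary function call, even though the work travels through a queue.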

A broadcast message means that after a message is sent, every consumer instance that subscribes to it consumes the message. Load balancing consumption means that, under the default policy, consumers in the same consumer group share the messages evenly. The specific policy can be adjusted. RocketMQ supports three consumption modes: Pull, Push, and Pop. It supports multiple languages (such as Java, Go, C++, Python, and C#).

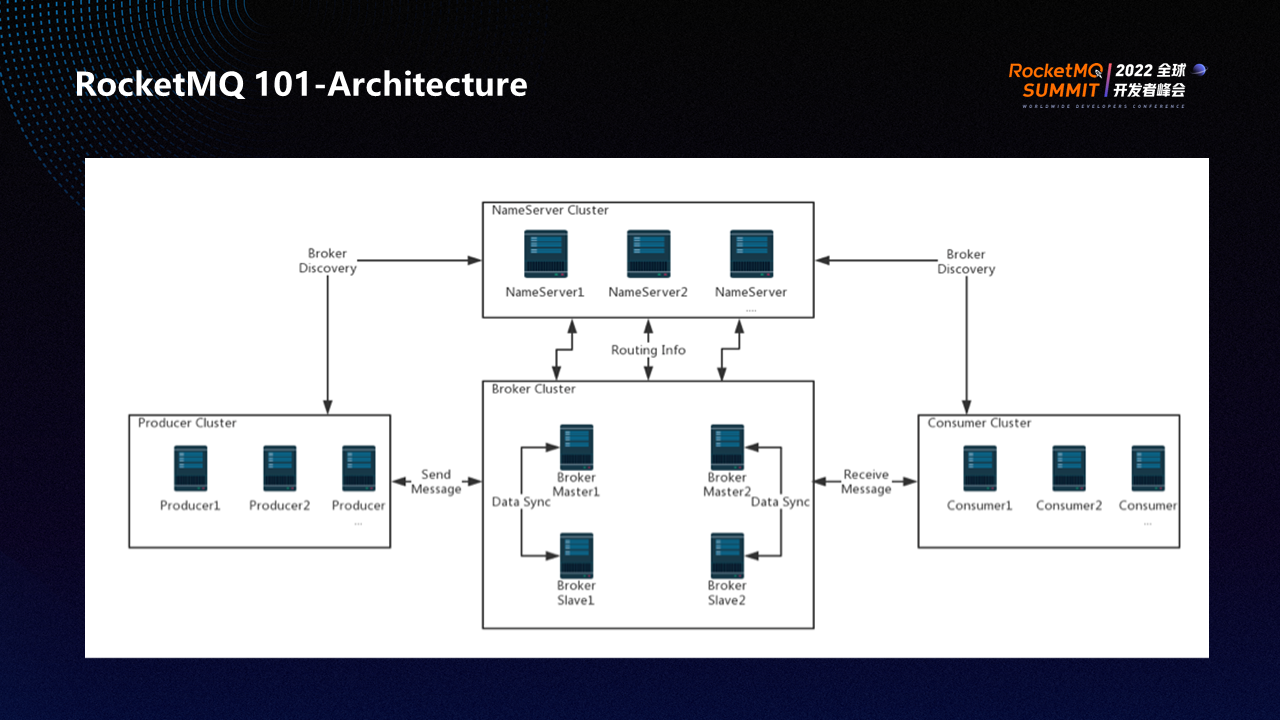

The process of building a RocketMQ cluster is listed below:

Step 1: Install the NameServer cluster. A NameServer cluster contains one or more NameServer nodes. When a NameServer starts, it listens on port 9876 by default. After the NameServer cluster is built, start a broker cluster.

Step 2: Build a broker cluster and use the classic master-slave deployment mode. The master serves read and write operations and synchronizes stored data and metadata to the slave. Data is synchronized through the HA port, 10912.

Step 3: Write the producer code. A producer cluster contains multiple producer instances that send data to the broker through port 10911 and port 10909.

Step 4: The consumer pulls data from the broker through port 10911 and port 10909.

When the producer or consumer instance is started, the NameServer address is configured first. The producer or consumer pulls routing information (such as topics, queues, and brokers) from the NameServer cluster and then sends or pulls messages based on the routing information.

Both the producer and the consumer maintain a channel connection with the broker. If the producer or consumer does not contact the broker for a long time, the broker closes the connection.

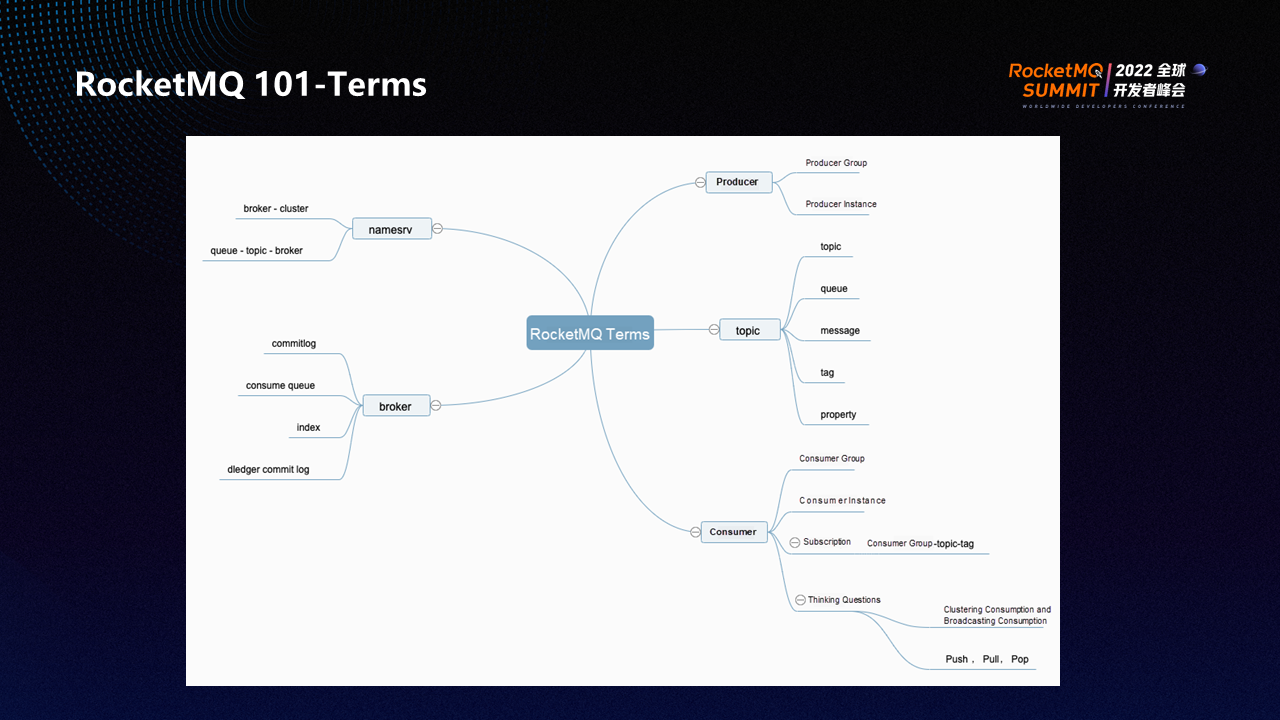

The following explains the basic RocketMQ terms:

Producers include producer groups and producer instances. A producer group is a combination of several producer instances, and RocketMQ expects the instances in the same producer group to behave consistently. The same goes for consumer groups and consumer instances. Consistent behavior means all producer instances produce the same type of message. For example, they all produce order messages, covering steps such as order creation, order shipment, and order deletion. The advantage of consistent behavior is that message production and consumption stay regular and free of confusion.

A topic is a category of messages in the form of a string. You can use a topic to classify all messages in a cluster. The messages of all topics form the full messages. A Tag is a subcategory of a topic.

When a consumer subscribes to messages, it must specify the topic and then the tag. Such a record is called a subscription relationship. If the subscription relationships within a consumer group are inconsistent, consumption becomes unpredictable (for example, repeated consumption, messages that are never consumed, and message accumulation).
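A consistency check over the subscription relationships of one consumer group might look like the following sketch. The function name and the input layout (instance ID mapped to `{topic: tag expression}`) are assumptions for illustration. The `||` separator follows RocketMQ's tag expression syntax.

```python
from collections import Counter


def inconsistent_instances(subscriptions):
    """Return the instance ids whose subscription differs from the group's majority.

    `subscriptions` maps instance id -> {topic: tag expression}, where a tag
    expression such as "TagA || TagB" lists tags separated by "||".
    """
    normalized = {
        instance: frozenset(
            (topic, frozenset(tag.strip() for tag in expression.split("||")))
            for topic, expression in subs.items()
        )
        for instance, subs in subscriptions.items()
    }
    if not normalized:
        return []
    majority, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(i for i, subs in normalized.items() if subs != majority)


group = {
    "consumer-1": {"OrderTopic": "created || shipped"},
    "consumer-2": {"OrderTopic": "shipped||created"},   # same tags, different order
    "consumer-3": {"OrderTopic": "deleted"},            # the odd one out
}
print(inconsistent_instances(group))
```

Tag order and whitespace are normalized before comparing, so only real differences in topics or tags are reported.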

A queue is similar to a partition. It is a logical concept, not a physical storage concept. A property is similar to a header. It carries extended information beyond the main payload (such as the service ID to which the message belongs and the IP address of the sender). You can specify properties when you send a message to a topic.

The NameServer stores the relationship between brokers and clusters and the relationship between topics, queues, and brokers. Together, these make up the routing information.

The broker consists of the following four parts:

① CommitLog (regular file storage): All messages sent to the broker are appended to the CommitLog.

② Consume Queue: A topic contains multiple queues, and each queue is called a consume queue. Each consumer group tracks its own consumption progress for each consume queue.

③ Index: The index lets you query messages by key, for example on the dashboard.

④ DLedger CommitLog: A CommitLog managed by the DLedger repository, which enables multiple replicas.
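The relationship between the CommitLog, the consume queues, and the key index can be illustrated with a toy in-memory model. It is only a sketch: `ToyStore` and its methods are hypothetical, real broker files are binary and laid out very differently, and tracking progress per consumer group is an assumption of this model.

```python
from collections import defaultdict


class ToyStore:
    """Toy model of broker storage (hypothetical, not the real file layout)."""

    def __init__(self):
        self.commit_log = []                      # every message, append-only
        self.consume_queues = defaultdict(list)   # (topic, queue_id) -> commit log positions
        self.key_index = defaultdict(list)        # message key -> commit log positions
        self.progress = defaultdict(int)          # (group, topic, queue_id) -> next entry to read

    def append(self, topic, queue_id, key, body):
        """Append to the shared log, then record the position in the queue and the index."""
        position = len(self.commit_log)
        self.commit_log.append((topic, key, body))
        self.consume_queues[(topic, queue_id)].append(position)
        self.key_index[key].append(position)
        return position

    def pull(self, group, topic, queue_id, max_count=32):
        """Read the next messages for a group and advance that group's progress."""
        start = self.progress[(group, topic, queue_id)]
        positions = self.consume_queues[(topic, queue_id)][start:start + max_count]
        self.progress[(group, topic, queue_id)] = start + len(positions)
        return [self.commit_log[p][2] for p in positions]

    def query_by_key(self, key):
        return [self.commit_log[p][2] for p in self.key_index[key]]


store = ToyStore()
store.append("TopicA", 0, "order-1", "created")
store.append("TopicB", 0, "user-9", "registered")
store.append("TopicA", 0, "order-1", "shipped")
print(store.pull("GroupA", "TopicA", 0))
print(store.query_by_key("order-1"))
```

Messages from all topics share one log, while each consume queue holds only positions into it, so two groups can read the same queue at their own pace.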

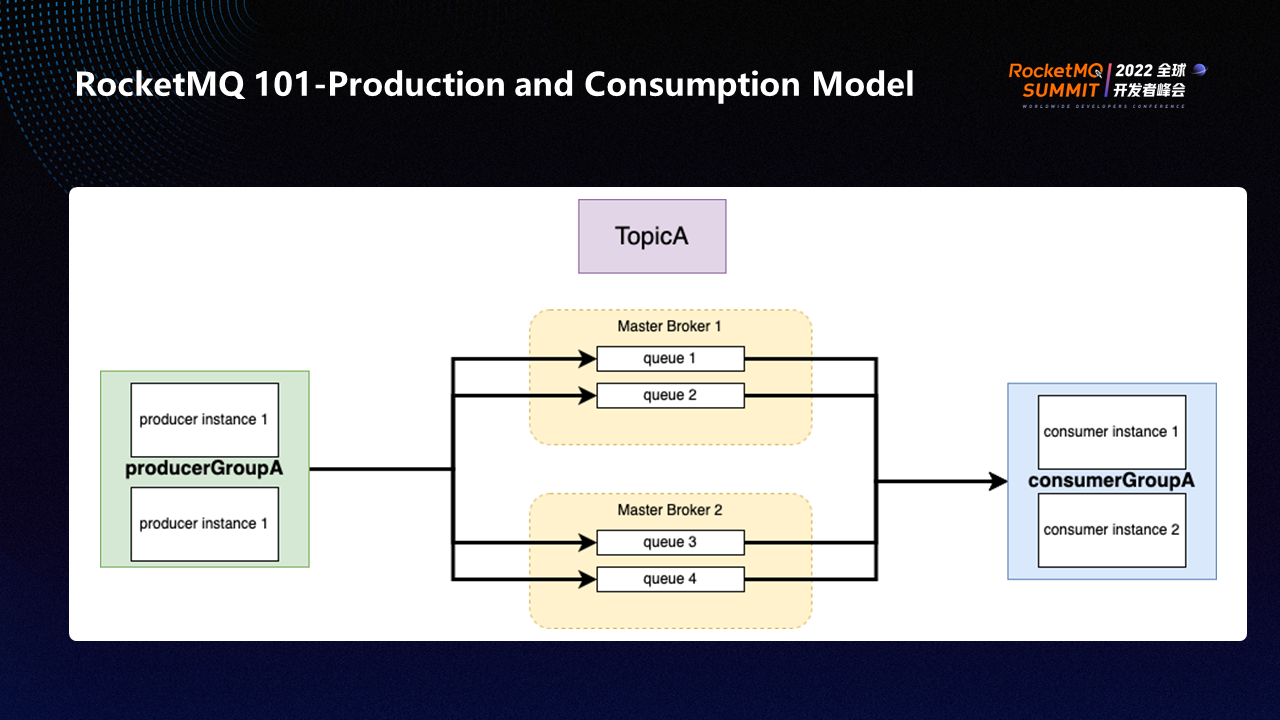

The production and consumption model of RocketMQ is simple. As shown in the preceding figure, Topic A has four queues. Queue 1 and queue 2 are on Master Broker 1, and queue 3 and queue 4 are on Master Broker 2. Producer Group A has two producer instances that send messages to the queues of the two brokers, respectively. Consumer Group A also has two consumers, consumer 1 and consumer 2.

After a producer retrieves the four queues from the routing information, its default policy is to send messages to queue 1, queue 2, queue 3, and queue 4 in turn. This ensures that messages are evenly distributed across the queues.
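Sending to the queues in turn is a round-robin selection. A minimal sketch, assuming a hypothetical `RoundRobinSelector` class and queue names rather than the client's own selector:

```python
import itertools


class RoundRobinSelector:
    """Pick queues in turn so that sends spread evenly (illustrative only)."""

    def __init__(self, queues):
        self._queues = list(queues)
        self._counter = itertools.count()

    def select(self):
        return self._queues[next(self._counter) % len(self._queues)]


selector = RoundRobinSelector(["queue-1", "queue-2", "queue-3", "queue-4"])
print([selector.select() for _ in range(6)])
```

After the fourth send, the selector wraps around to the first queue again.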

There are two consumption patterns of consumers: load balancing consumption and broadcasting consumption.

For example, if there are four queues under the load balancing policy, consumer instance 1 and consumer instance 2 are each assigned two queues. The specific allocation is determined by the allocation algorithm.

In the broadcasting policy, if the topic contains 100 messages, consumer instance 1 and consumer instance 2 will consume 100 messages each, which means each consumer instance in the same consumer group consumes all messages.
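The difference between the two patterns comes down to which queues each instance reads. The sketch below assumes an "average" allocation for load balancing, where each instance receives a contiguous and nearly equal share. The function names are hypothetical, and the client's actual algorithm may differ in detail.

```python
def allocate_averagely(queues, consumers, me):
    """Give consumer `me` a contiguous, nearly equal share of the queues."""
    queues = sorted(queues)
    consumers = sorted(consumers)
    index = consumers.index(me)
    base, extra = divmod(len(queues), len(consumers))
    size = base + 1 if index < extra else base       # the first `extra` consumers get one more
    start = index * base + min(index, extra)
    return queues[start:start + size]


def assigned_queues(queues, consumers, me, broadcasting=False):
    """In broadcasting mode every instance reads every queue."""
    if broadcasting:
        return sorted(queues)
    return allocate_averagely(queues, consumers, me)


queues = ["queue-1", "queue-2", "queue-3", "queue-4"]
consumers = ["consumer-1", "consumer-2"]
for consumer in consumers:
    print(consumer, assigned_queues(queues, consumers, consumer))
    print(consumer, assigned_queues(queues, consumers, consumer, broadcasting=True))
```

If there are more instances than queues, the extra instances receive no queue in this sketch.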

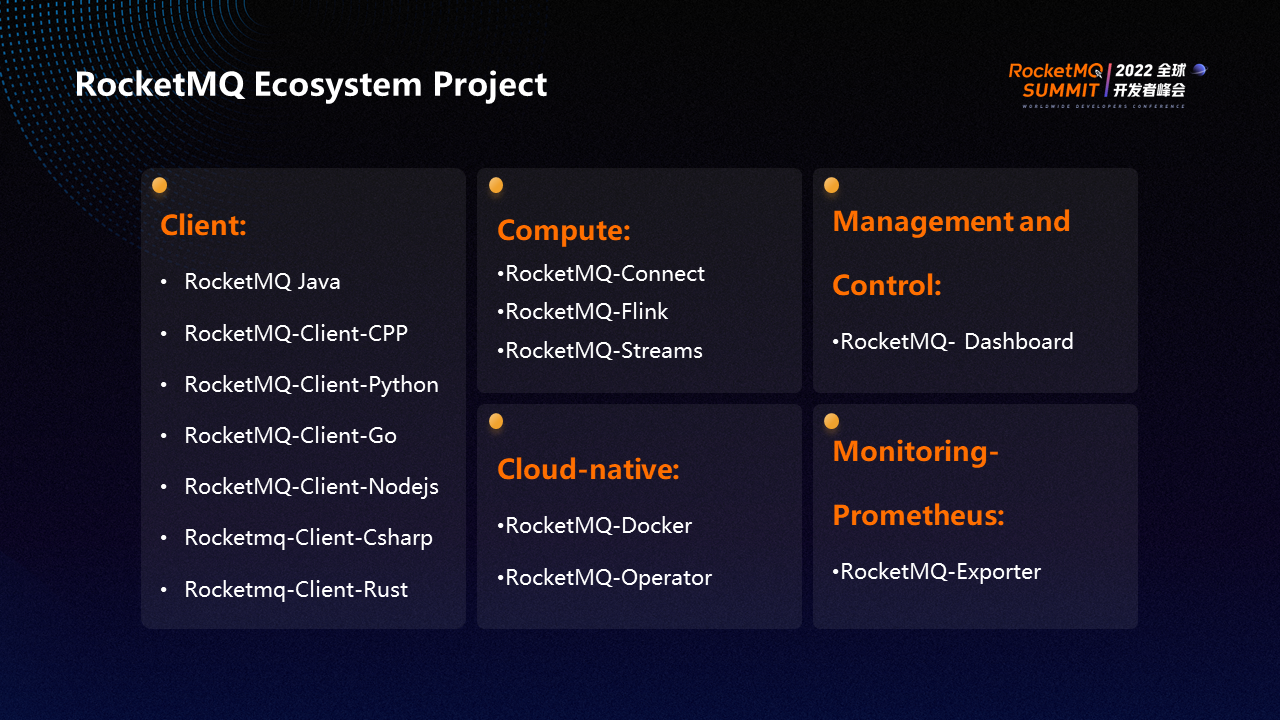

The RocketMQ ecosystem project contains the following parts:

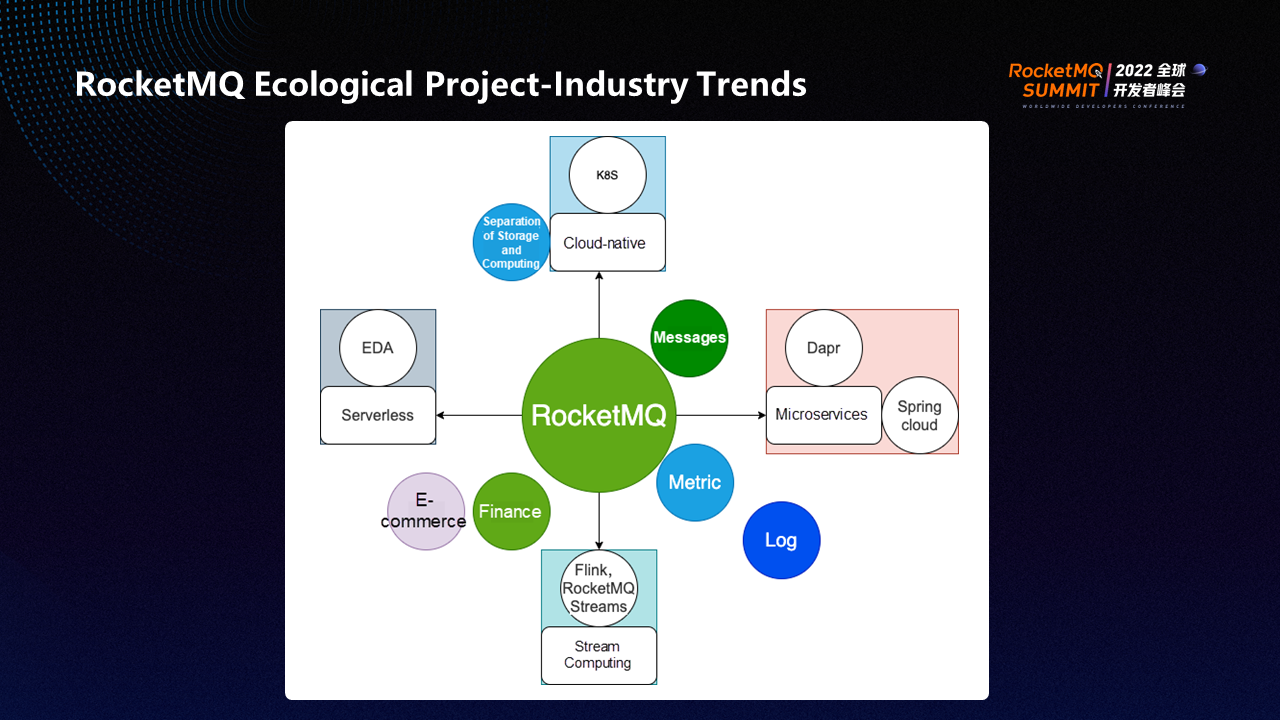

Cloud-native is a trend in the technology industry that reduces costs and simplifies O&M and management. The new version of RocketMQ separates storage from computing and can be deployed on Kubernetes faster and more conveniently. EDA and Serverless technology are also trends. For example, Tencent Cloud's Serverless Cloud Function (SCF) and Alibaba Cloud's EventBridge were developed for Serverless and EDA scenarios and can be directly integrated with RocketMQ. RocketMQ also provides extensive native support for microservices.

Traditional fields (such as e-commerce and finance) are undergoing digital transformation. Requirements (such as message, metrics, and log delivery) can be implemented easily and quickly using RocketMQ.

All in all, RocketMQ can use its powerful ecosystem projects to support enterprises in various forms of data transmission and computing.

The RocketMQ data streams include messages, CDC data streams, monitoring data streams, and data lakehouse streams. CDC data streams record data changes. Monitoring data streams cover business monitoring and routine monitoring.

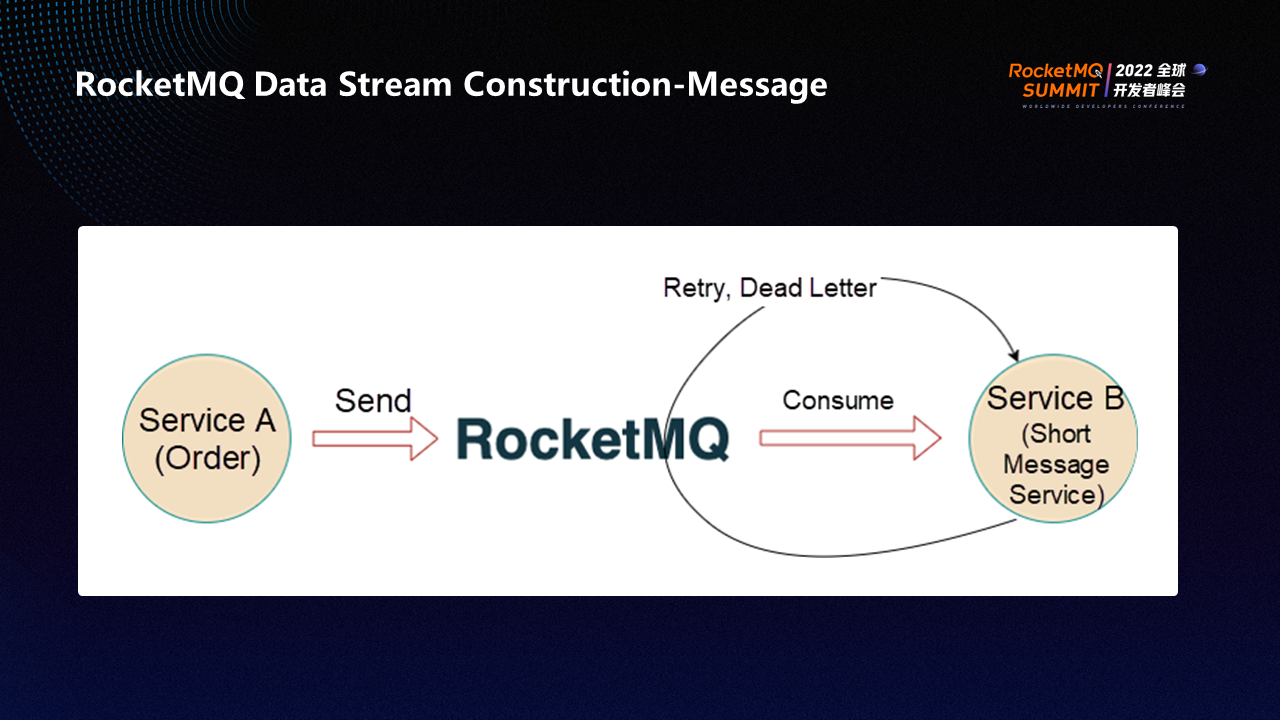

The message is constructed as shown in the preceding figure.

Take the order service as an example. The order service receives a request to create an order. After the order is successfully created, it sends the basic information of the order to Service B through RocketMQ. Assume that Service B is a Short Message Service (SMS). Service B then sends the customer an SMS notification that contains detailed information about the order.

When RocketMQ delivers a message to Service B, it achieves eventual consistency through retries and dead letters to ensure the message eventually reaches the consumer. By default, RocketMQ retries a message up to 16 times at staged, increasing intervals that span several hours.

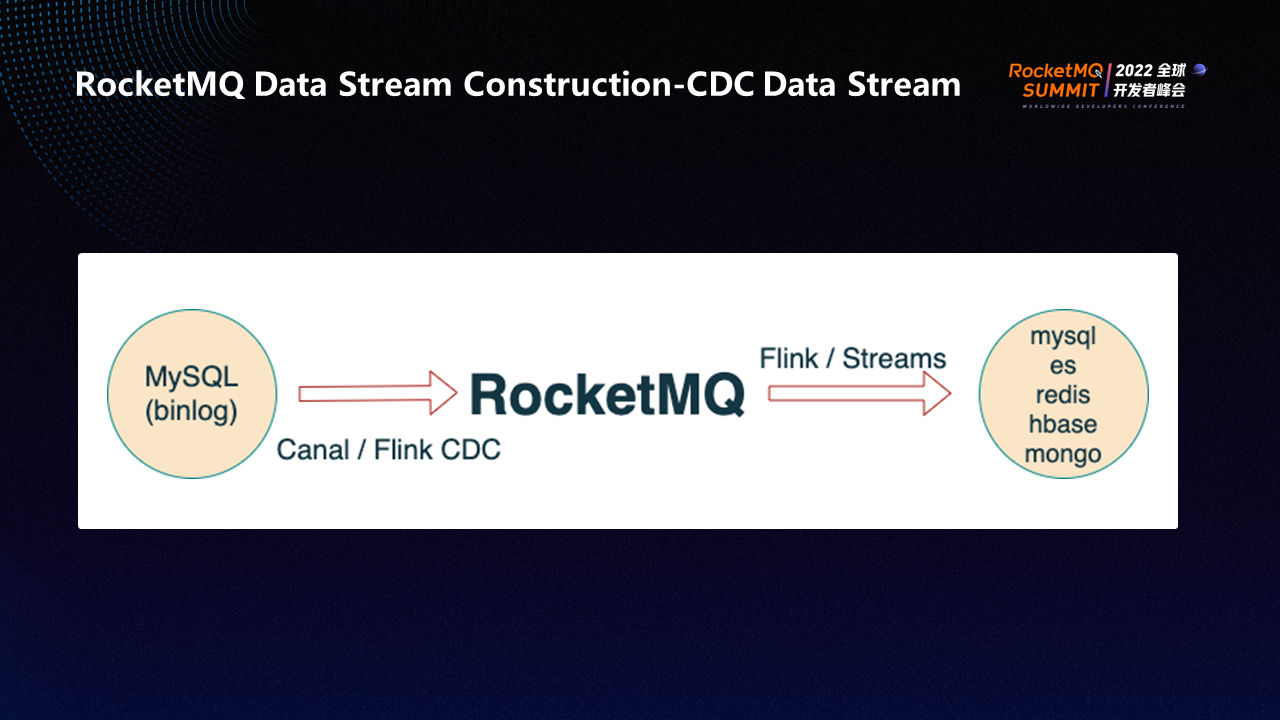

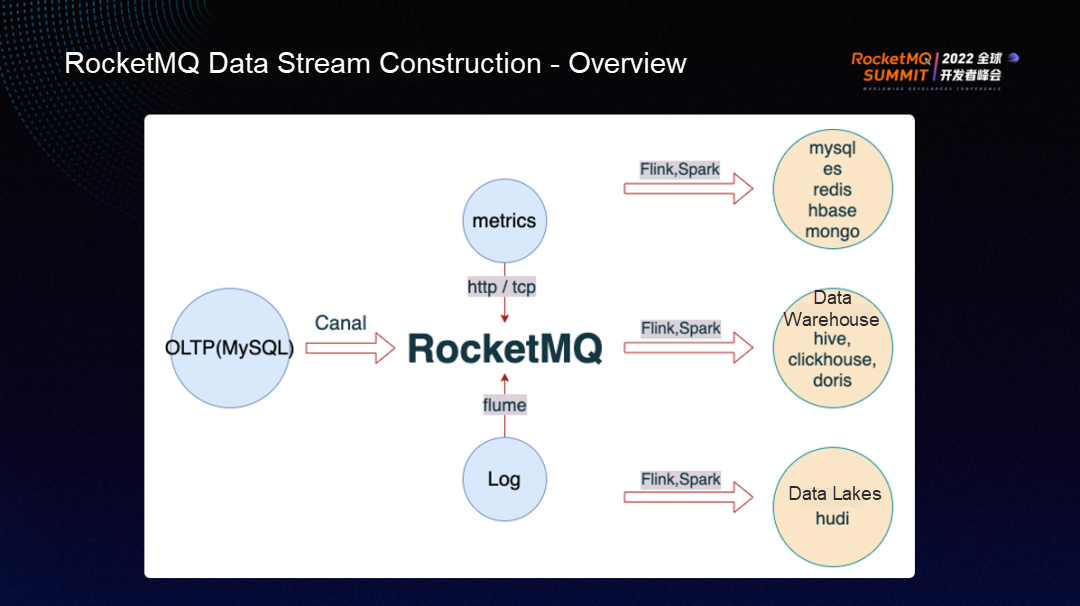

RocketMQ allows you to use Canal/Flink CDC or RocketMQ Collect to provide binary logs and other data to the computing platform. Then, you can use RocketMQ Flink and RocketMQ Streams to perform lightweight computing. After the computing is complete, the system forwards the results to downstream databases (such as MySQL, Elasticsearch (ES), and Redis) for heterogeneous or homogeneous data synchronization.

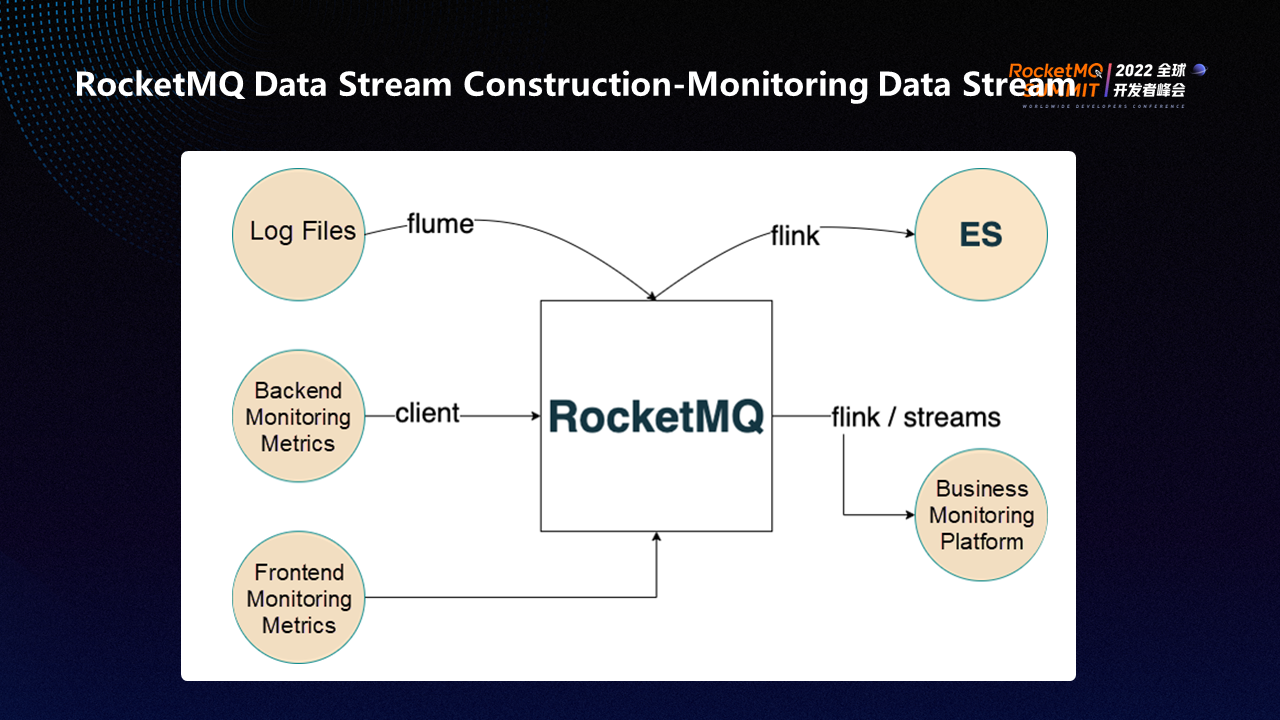

You can use Flume to read log files and send them to RocketMQ. Then, you can use RocketMQ Collect or RocketMQ Flink to consume the log data, perform ETL conversion, or send the log data to ES. Because ES is part of the ELK stack, you can view the logs in Kibana.

In addition to logs, RocketMQ can carry backend monitoring tracking points from business systems. The RocketMQ client sends the monitoring tracking data to RocketMQ. RocketMQ Flink or RocketMQ Streams then consumes the data and sends it to business monitoring platforms or data lakehouses to generate online or real-time reports.

Most of the frontend monitoring data is sent to RocketMQ through HTTP requests. Then, the lightweight computing framework of RocketMQ aggregates the data to different backends (such as ES or self-built platforms).

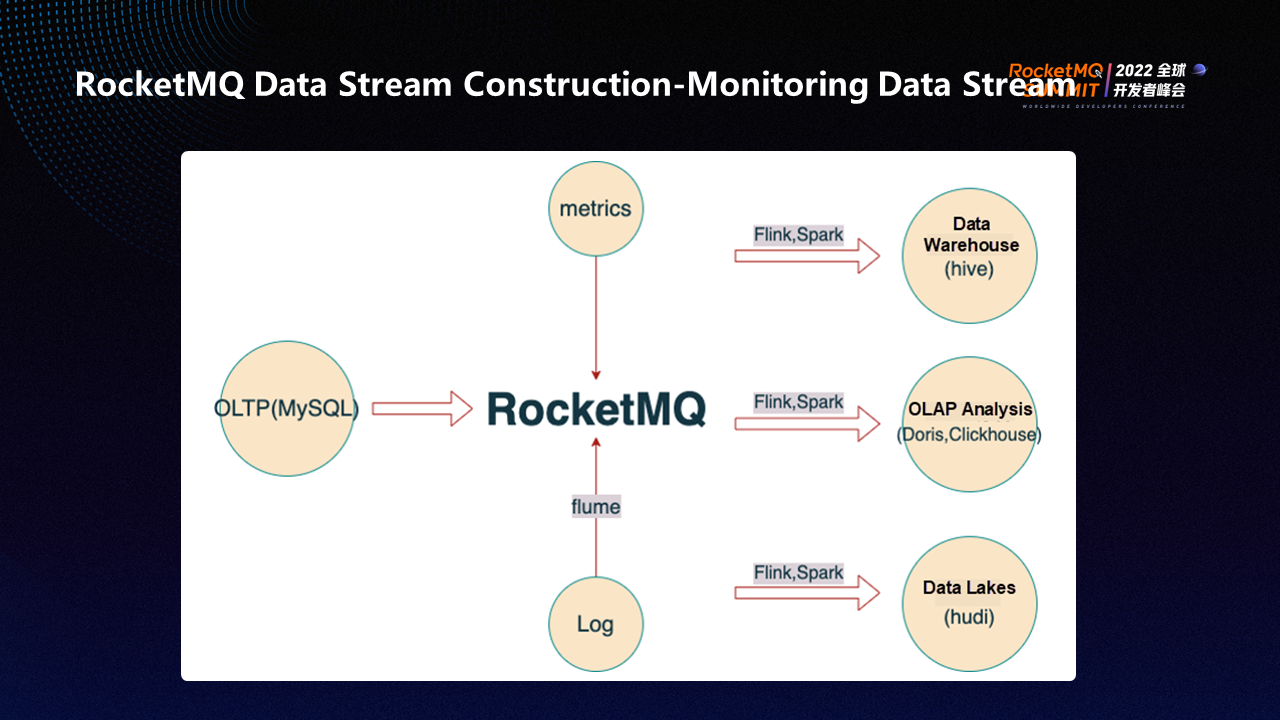

All of this data can be stored in the data lakehouse to meet the demand for data analysis, data mining, or statistical reports. For example, frontend and backend monitoring metrics, business data in TP databases, metric data in log files, or the log files themselves can be sent to RocketMQ through the corresponding tools, processed by the lightweight computing tools provided by RocketMQ, and then sent to downstream databases or data warehouses (such as Hive, Doris, ClickHouse, or Hudi) to produce reports, real-time dashboards, and real-time data tables.

RocketMQ can collect various data (such as metrics, TP data, and log data), which will be processed by the lightweight computing tools provided by RocketMQ and aggregated into homogeneous/heterogeneous databases, data warehouses, or data lakes.

As the core transmission link, can RocketMQ use its features in the data stream construction process to prevent accidental factors from affecting data transmission?

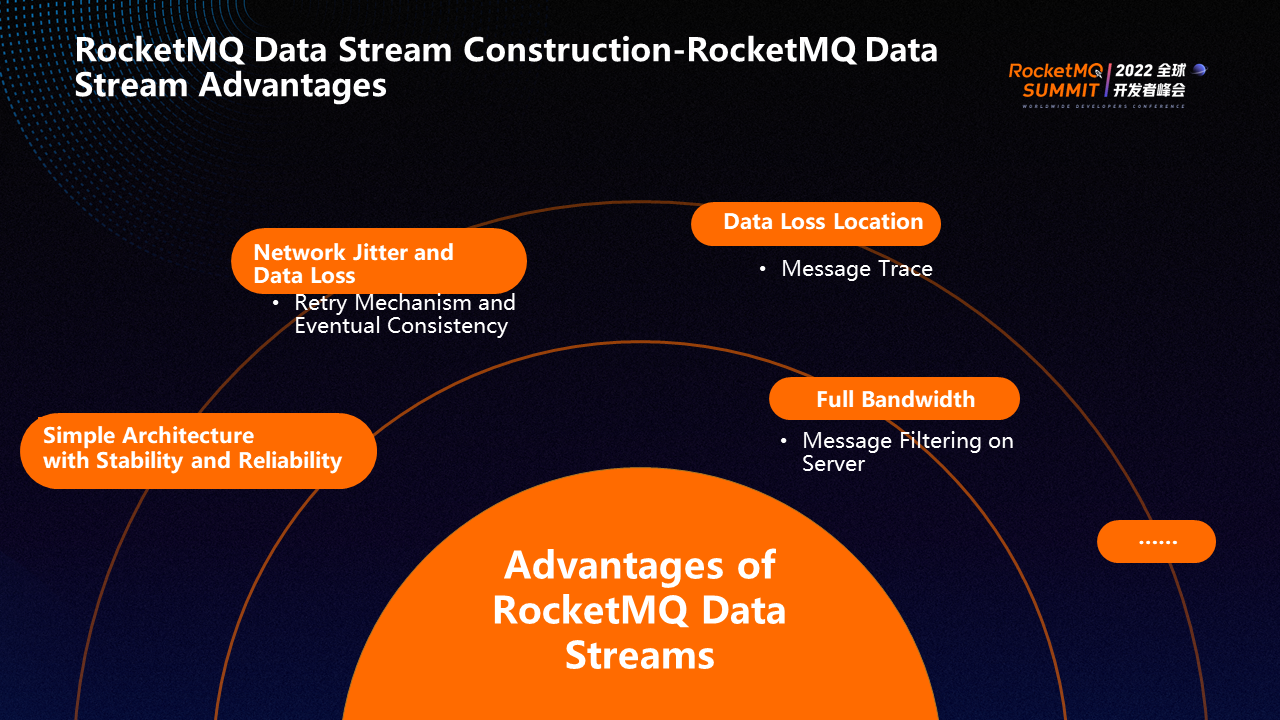

The architecture of RocketMQ is simple, and simplicity also means stability and reliability. Therefore, when RocketMQ is used as the core data link, its stability and reliability can avoid many accidents and reduce uncontrollable factors.

Network jitter is often unavoidable, which may lead to data loss. RocketMQ can ensure the eventual consistency of data through the retry mechanism. For example, network jitter occurs when a message is only half sent. How can we ensure the data can be completely consumed by consumers after the network is restored?

By default, RocketMQ retries a message up to 16 times. The retries are staged, and the retry interval increases gradually to maximize the chance that the data is consumed. If consumption still fails after 16 retries, the message is put into the dead letter queue and processed manually. After a dead-letter message is generated, RocketMQ generates alerts so that problems can be detected and handled quickly.
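The staged retry followed by a dead letter can be simulated as below. The delay values are an assumption: they follow the default delay levels of the open-source broker starting at 10 seconds, and a deployment may configure them differently. `deliver` is a hypothetical helper that only adds up the waiting time instead of sleeping.

```python
# Assumed retry intervals in seconds (open-source broker defaults, starting at 10s).
RETRY_DELAYS_SECONDS = [10, 30, 60, 120, 180, 240, 300, 360,
                        420, 480, 540, 600, 1200, 1800, 3600, 7200]
MAX_RETRIES = 16


def deliver(message, handler, max_retries=MAX_RETRIES):
    """Try to consume `message`; return (outcome, retries_used, seconds_waited)."""
    waited = 0
    for attempt in range(max_retries + 1):        # first delivery plus the retries
        if attempt > 0:
            level = min(attempt, len(RETRY_DELAYS_SECONDS)) - 1
            waited += RETRY_DELAYS_SECONDS[level]
        try:
            handler(message)
            return "consumed", attempt, waited
        except Exception:
            continue                              # schedule the next, longer retry
    return "dead_letter", max_retries, waited


def always_fails(message):
    raise RuntimeError("downstream unavailable")


outcome, retries, waited = deliver("order-1", always_fails)
print(outcome, retries, round(waited / 3600, 2), "hours")
```

Under these assumed intervals, a message that never succeeds reaches the dead letter queue after a little under five hours of waiting.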

For data loss, RocketMQ provides the message trace to help locate the problem quickly. You can check whether the producer sent the message, whether the broker stored it, and whether the consumer consumed it.

Message Queue for Apache RocketMQ provides server-side message filtering to address the problem of saturated bandwidth. Assume a topic contains access logs, and the tag of each message is set to its domain name. A consumer group can subscribe to only the access logs of a specific domain name. The broker filters the messages on the server and sends the consumer group only the messages for that domain name instead of all messages, which can save up to 80-90% of the bandwidth.
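The effect of filtering on the server can be sketched as follows. The tag expression syntax (`*` for all tags, `||` between tags) follows RocketMQ conventions. The functions, the domain names, and the message layout are hypothetical, and the saving depends entirely on how the traffic is split across tags.

```python
def matches(tag_expression, tag):
    """Return True if `tag` is selected by an expression such as "a || b" or "*"."""
    if tag_expression.strip() == "*":
        return True
    wanted = {t.strip() for t in tag_expression.split("||")}
    return tag in wanted


def broker_filter(messages, tag_expression):
    """Keep only the messages the consumer group subscribed to, before sending."""
    return [m for m in messages if matches(tag_expression, m["tag"])]


# Ten access-log messages, two of which belong to the subscribed domain.
access_logs = (
    [{"tag": "shop.example.com", "body": "GET /cart"}] * 2
    + [{"tag": "blog.example.com", "body": "GET /post"}] * 8
)
delivered = broker_filter(access_logs, "shop.example.com")
print(len(delivered), "of", len(access_logs), "messages cross the network")
```

In this made-up split, only two of the ten messages are sent to the consumer group. The rest never leave the broker.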

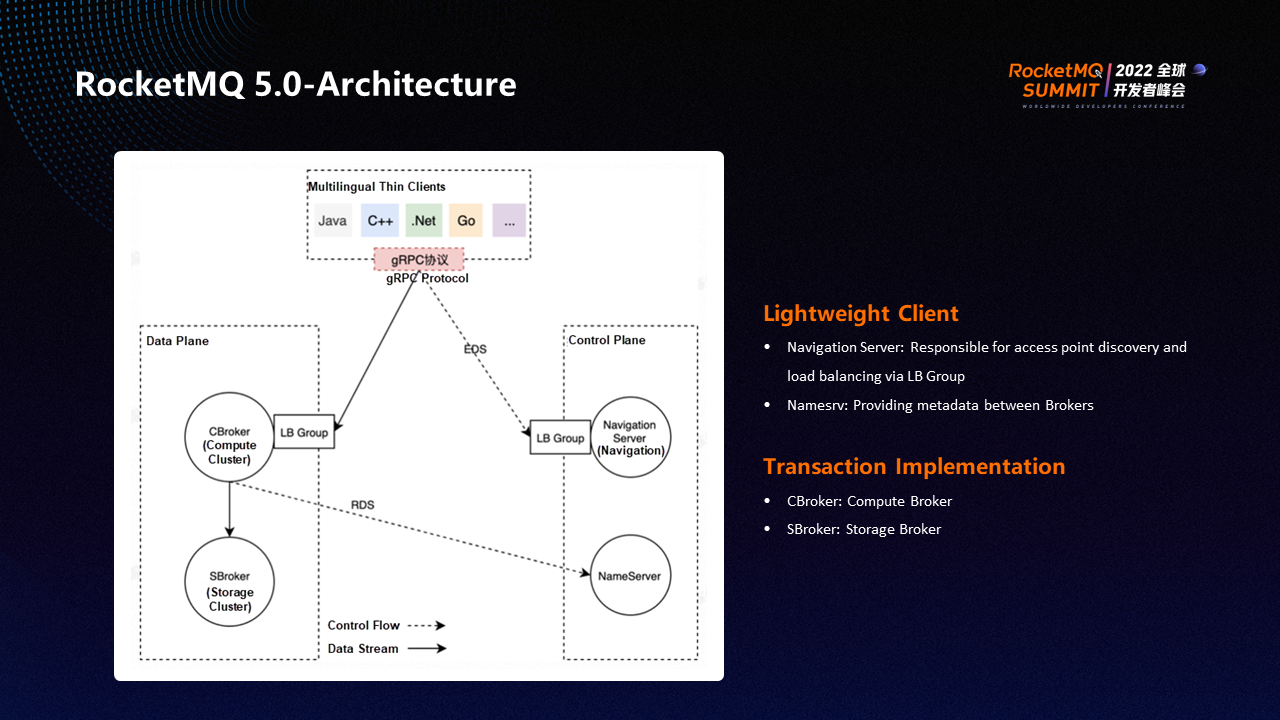

The RocketMQ 5.0 architecture has two major changes: the separation of storage and computing, and a lightweight client.

The separation of storage and computing is mainly at the data level. The broker, which used to handle both storage and computing, is split into storage brokers and computing brokers. Each type of broker is responsible only for its own part.

Previously, the client ecosystem of RocketMQ was rich, but the functions of each client varied significantly, making it difficult to achieve consistency. RocketMQ 5.0 solves this problem with a lightweight, gRPC-based multilingual client. It moves the heavy client logic (such as rebalance) to the computing broker (CBroker), which makes the client lightweight. The client only keeps the interfaces for sending and consuming messages. The logic is therefore easy to unify across languages, compatibility improves, and inconsistencies caused by different implementations disappear.

In addition to the separation of storage and computing, RocketMQ 5.0 also separates the data plane from the control plane. The control plane is mainly responsible for access. Previously, clients could only access a cluster through NameServer. Now, they can also access it through LB Group, which is simpler to use. LB Group also enables logical cluster access (such as deciding which clients connect to which clusters), which is difficult to achieve with NameServer. A group of NameServers manages a physical cluster. A physical cluster can be split into multiple logical clusters, and each logical cluster can be used by a different tenant.

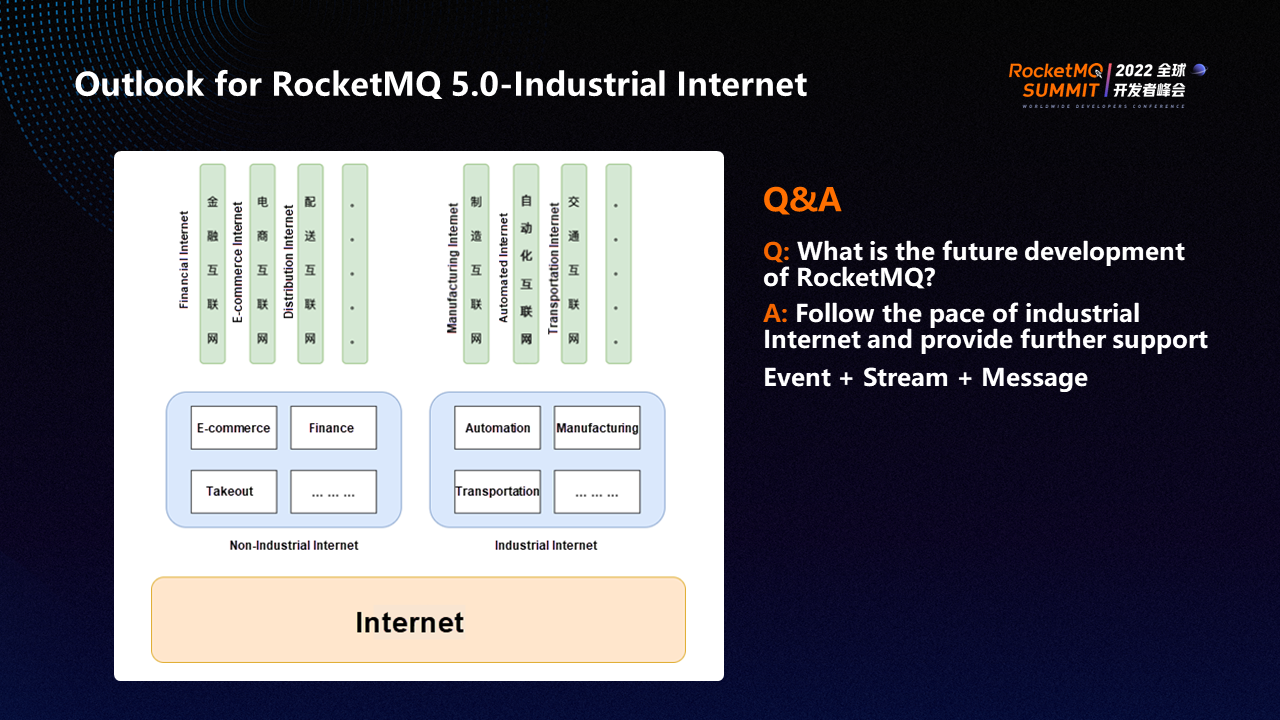

RocketMQ can be regarded as a channel with an upstream and a downstream. The upstream and downstream differ from industry to industry, and so does the data in the channel.

The Internet has reached every field, but its development in vertical fields is still inadequate. For example, Internet distribution involves transportation, and only a deep combination of transportation and Internet technology can bring a far-reaching and extensive impact on the distribution industry.

In the future, we need engineers who work deeply in specific industries and build excellent Internet products tailored to them.

Currently, RocketMQ supports events and streams. A very important element of the industrial Internet industry is IoT events, which may come from various terminals and generate a large number of continuous events every day. These large amounts of data need to be transmitted in queues and sent downstream for computing.

Therefore, the future development of RocketMQ will focus on events and streams.

RocketMQ has launched RocketMQ Collect, RocketMQ Flink, and RocketMQ Streams. It has gradually developed a complete ecosystem for stream computing and can help users quickly build streaming applications. RocketMQ excels at messaging. It can help users process messages in different scenarios conveniently and quickly. RocketMQ has also open-sourced its support for protocols such as MQTT, which makes device access faster and easier.

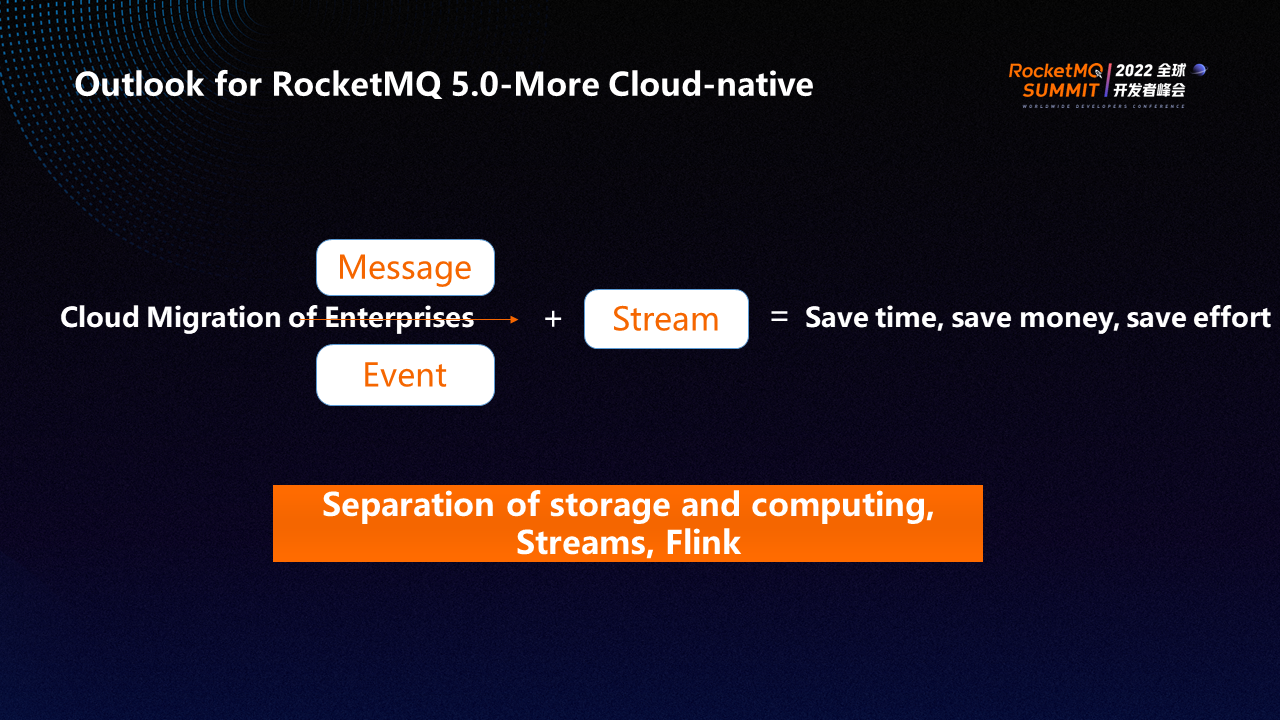

With the release of RocketMQ 5.0, RocketMQ has become unified and powerful in processing messages, events, and streams. The separation of storage and computing also lowers the cost of running RocketMQ. This allows enterprises to move to the cloud with less money, effort, and manpower.
