We are delighted to announce the official release of Qwen3.5, together with the open weights of the first model in the series, Qwen3.5-397B-A17B. As a native vision-language model, Qwen3.5-397B-A17B delivers strong results across a full range of benchmark evaluations, including reasoning, coding, agent capabilities, and multimodal understanding, helping developers and enterprises achieve significantly greater productivity. Built on a hybrid architecture that combines linear attention (via Gated Delta Networks) with a sparse mixture-of-experts, the model attains high inference efficiency: although it comprises 397 billion total parameters, only 17 billion are activated per forward pass, which improves both speed and cost without sacrificing capability. We have also expanded language and dialect support from 119 to 201 languages and dialects, making the model more accessible to users around the world.

Qwen3.5-Plus is the hosted model available via Alibaba Cloud Model Studio, featuring:

- a 1M context window by default

- official built-in tools and adaptive tool use

Below we present a comprehensive evaluation of our models against frontier models across a wide range of tasks and modalities.

| Benchmark | GPT5.2 | Claude 4.5 Opus | Gemini-3 Pro | Qwen3-Max-Thinking | K2.5-1T-A32B | Qwen3.5-397B-A17B |
| --- | --- | --- | --- | --- | --- | --- |
| **Knowledge** | | | | | | |
| MMLU-Pro | 87.4 | 89.5 | 89.8 | 85.7 | 87.1 | 87.8 |
| MMLU-Redux | 95.0 | 95.6 | 95.9 | 92.8 | 94.5 | 94.9 |
| SuperGPQA | 67.9 | 70.6 | 74.0 | 67.3 | 69.2 | 70.4 |
| C-Eval | 90.5 | 92.2 | 93.4 | 93.7 | 94.0 | 93.0 |
| **Instruction Following** | | | | | | |
| IFEval | 94.8 | 90.9 | 93.5 | 93.4 | 93.9 | 92.6 |
| IFBench | 75.4 | 58.0 | 70.4 | 70.9 | 70.2 | 76.5 |
| MultiChallenge | 57.9 | 54.2 | 64.2 | 63.3 | 62.7 | 67.6 |
| **Long Context** | | | | | | |
| AA-LCR | 72.7 | 74.0 | 70.7 | 68.7 | 70.0 | 68.7 |
| LongBench v2 | 54.5 | 64.4 | 68.2 | 60.6 | 61.0 | 63.2 |
| **STEM** | | | | | | |
| GPQA | 92.4 | 87.0 | 91.9 | 87.4 | 87.6 | 88.4 |
| HLE | 35.5 | 30.8 | 37.5 | 30.2 | 30.1 | 28.7 |
| HLE-Verified¹ | 43.3 | 38.8 | 48 | 37.6 | -- | 37.6 |
| **Reasoning** | | | | | | |
| LiveCodeBench v6 | 87.7 | 84.8 | 90.7 | 85.9 | 85.0 | 83.6 |
| HMMT Feb 25 | 99.4 | 92.9 | 97.3 | 98.0 | 95.4 | 94.8 |
| HMMT Nov 25 | 100 | 93.3 | 93.3 | 94.7 | 91.1 | 92.7 |
| IMOAnswerBench | 86.3 | 84.0 | 83.3 | 83.9 | 81.8 | 80.9 |
| AIME26 | 96.7 | 93.3 | 90.6 | 93.3 | 93.3 | 91.3 |
| **General Agent** | | | | | | |
| BFCL-V4 | 63.1 | 77.5 | 72.5 | 67.7 | 68.3 | 72.9 |
| TAU2-Bench | 87.1 | 91.6 | 85.4 | 84.6 | 77.0 | 86.7 |
| VITA-Bench | 38.2 | 56.3 | 51.6 | 40.9 | 41.9 | 49.7 |
| DeepPlanning | 44.6 | 33.9 | 23.3 | 28.7 | 14.5 | 34.3 |
| Tool Decathlon | 43.8 | 43.5 | 36.4 | 18.8 | 27.8 | 38.3 |
| MCP-Mark | 57.5 | 42.3 | 53.9 | 33.5 | 29.5 | 46.1 |
| **Search Agent** | | | | | | |
| HLE w/ tool | 45.5 | 43.4 | 45.8 | 49.8 | 50.2 | 48.3 |
| BrowseComp | 65.8 | 67.8 | 59.2 | 53.9 | --/74.9 | 69.0/78.6 |
| BrowseComp-zh | 76.1 | 62.4 | 66.8 | 60.9 | -- | 70.3 |
| WideSearch | 76.8 | 76.4 | 68.0 | 57.9 | 72.7 | 74.0 |
| Seal-0 | 45.0 | 47.7 | 45.5 | 46.9 | 57.4 | 46.9 |
| **Multilingualism** | | | | | | |
| MMMLU | 89.5 | 90.1 | 90.6 | 84.4 | 86.0 | 88.5 |
| MMLU-ProX | 83.7 | 85.7 | 87.7 | 78.5 | 82.3 | 84.7 |
| NOVA-63 | 54.6 | 56.7 | 56.7 | 54.2 | 56.0 | 59.1 |
| INCLUDE | 87.5 | 86.2 | 90.5 | 82.3 | 83.3 | 85.6 |
| Global PIQA | 90.9 | 91.6 | 93.2 | 86.0 | 89.3 | 89.8 |
| PolyMATH | 62.5 | 79.0 | 81.6 | 64.7 | 43.1 | 73.3 |
| WMT24++ | 78.8 | 79.7 | 80.7 | 77.6 | 77.6 | 78.9 |
| MAXIFE | 88.4 | 79.2 | 87.5 | 84.0 | 72.8 | 88.2 |
| **Coding Agent** | | | | | | |
| SWE-bench Verified | 80.0 | 80.9 | 76.2 | 75.3 | 76.8 | 76.4 |
| SWE-bench Multilingual | 72.0 | 77.5 | 65.0 | 66.7 | 73.0 | 69.3 |
| SecCodeBench | 68.7 | 68.6 | 62.4 | 57.5 | 61.3 | 68.3 |
| Terminal Bench 2 | 54.0 | 59.3 | 54.2 | 22.5 | 50.8 | 52.5 |

| Benchmark | GPT5.2 | Claude 4.5 Opus | Gemini-3 Pro | Qwen3-VL-235B-A22B | K2.5-1T-A32B | Qwen3.5-397B-A17B |
| --- | --- | --- | --- | --- | --- | --- |
| **STEM and Puzzle** | | | | | | |
| MMMU | 86.7 | 80.7 | 87.2 | 80.6 | 84.3 | 85.0 |
| MMMU-Pro | 79.5 | 70.6 | 81.0 | 69.3 | 78.5 | 79.0 |
| MathVision | 83.0 | 74.3 | 86.6 | 74.6 | 84.2 | 88.6 |
| Mathvista(mini) | 83.1 | 80.0 | 87.9 | 85.8 | 90.1 | 90.3 |
| We-Math | 79.0 | 70.0 | 86.9 | 74.8 | 84.7 | 87.9 |
| DynaMath | 86.8 | 79.7 | 85.1 | 82.8 | 84.4 | 86.3 |
| ZEROBench | 9 | 3 | 10 | 4 | 9 | 12 |
| ZEROBench_sub | 33.2 | 28.4 | 39.0 | 28.4 | 33.5 | 41.0 |
| BabyVision | 34.4 | 14.2 | 49.7 | 22.2 | 36.5 | 52.3/43.3 |
| **General VQA** | | | | | | |
| RealWorldQA | 83.3 | 77.0 | 83.3 | 81.3 | 81.0 | 83.9 |
| MMStar | 77.1 | 73.2 | 83.1 | 78.7 | 80.5 | 83.8 |
| HallusionBench | 65.2 | 64.1 | 68.6 | 66.7 | 69.8 | 71.4 |
| MMBenchEN-DEV-v1.1 | 88.2 | 89.2 | 93.7 | 89.7 | 94.2 | 93.7 |
| SimpleVQA | 55.8 | 65.7 | 73.2 | 61.3 | 71.2 | 67.1 |
| **Text Recognition and Document Understanding** | | | | | | |
| OmniDocBench1.5 | 85.7 | 87.7 | 88.5 | 84.5 | 88.8 | 90.8 |
| CharXiv(RQ) | 82.1 | 68.5 | 81.4 | 66.1 | 77.5 | 80.8 |
| MMLongBench-Doc | -- | 61.9 | 60.5 | 56.2 | 58.5 | 61.5 |
| CC-OCR | 70.3 | 76.9 | 79.0 | 81.5 | 79.7 | 82.0 |
| AI2D_TEST | 92.2 | 87.7 | 94.1 | 89.2 | 90.8 | 93.9 |
| OCRBench | 80.7 | 85.8 | 90.4 | 87.5 | 92.3 | 93.1 |
| **Spatial Intelligence** | | | | | | |
| ERQA | 59.8 | 46.8 | 70.5 | 52.5 | -- | 67.5 |
| CountBench | 91.9 | 90.6 | 97.3 | 93.7 | 94.1 | 97.2 |
| RefCOCO(avg) | -- | -- | 84.1 | 91.1 | 87.8 | 92.3 |
| ODInW13 | -- | -- | 46.3 | 43.2 | -- | 47.0 |
| EmbSpatialBench | 81.3 | 75.7 | 61.2 | 84.3 | 77.4 | 84.5 |
| RefSpatialBench | -- | -- | 65.5 | 69.9 | -- | 73.6 |
| LingoQA | 68.8 | 78.8 | 72.8 | 66.8 | 68.2 | 81.6 |
| V* | 75.9 | 67.0 | 88.0 | 85.9 | 77.0 | 95.8/91.1 |
| Hypersim | -- | -- | -- | 11.0 | -- | 12.5 |
| SUNRGBD | -- | -- | -- | 34.9 | -- | 38.3 |
| Nuscene | -- | -- | -- | 13.9 | -- | 16.0 |
| **Video Understanding** | | | | | | |
| VideoMME(w sub.) | 86 | 77.6 | 88.4 | 83.8 | 87.4 | 87.5 |
| VideoMME(w/o sub.) | 85.8 | 81.4 | 87.7 | 79.0 | 83.2 | 83.7 |
| VideoMMMU | 85.9 | 84.4 | 87.6 | 80.0 | 86.6 | 84.7 |
| MLVU (M-Avg) | 85.6 | 81.7 | 83.0 | 83.8 | 85.0 | 86.7 |
| MVBench | 78.1 | 67.2 | 74.1 | 75.2 | 73.5 | 77.6 |
| LVBench | 73.7 | 57.3 | 76.2 | 63.6 | 75.9 | 75.5 |
| MMVU | 80.8 | 77.3 | 77.5 | 71.1 | 80.4 | 75.4 |
| **Visual Agent** | | | | | | |
| ScreenSpot Pro | -- | 45.7 | 72.7 | 62.0 | -- | 65.6 |
| OSWorld-Verified | 38.2 | 66.3 | -- | 38.1 | 63.3 | 62.2 |
| AndroidWorld | -- | -- | -- | 63.7 | -- | 66.8 |
| **Medical VQA** | | | | | | |
| SLAKE | 76.9 | 76.4 | 81.3 | 54.7 | 81.6 | 79.9 |
| PMC-VQA | 58.9 | 59.9 | 62.3 | 41.2 | 63.3 | 64.2 |
| MedXpertQA-MM | 73.3 | 63.6 | 76.0 | 47.6 | 65.3 | 70.0 |

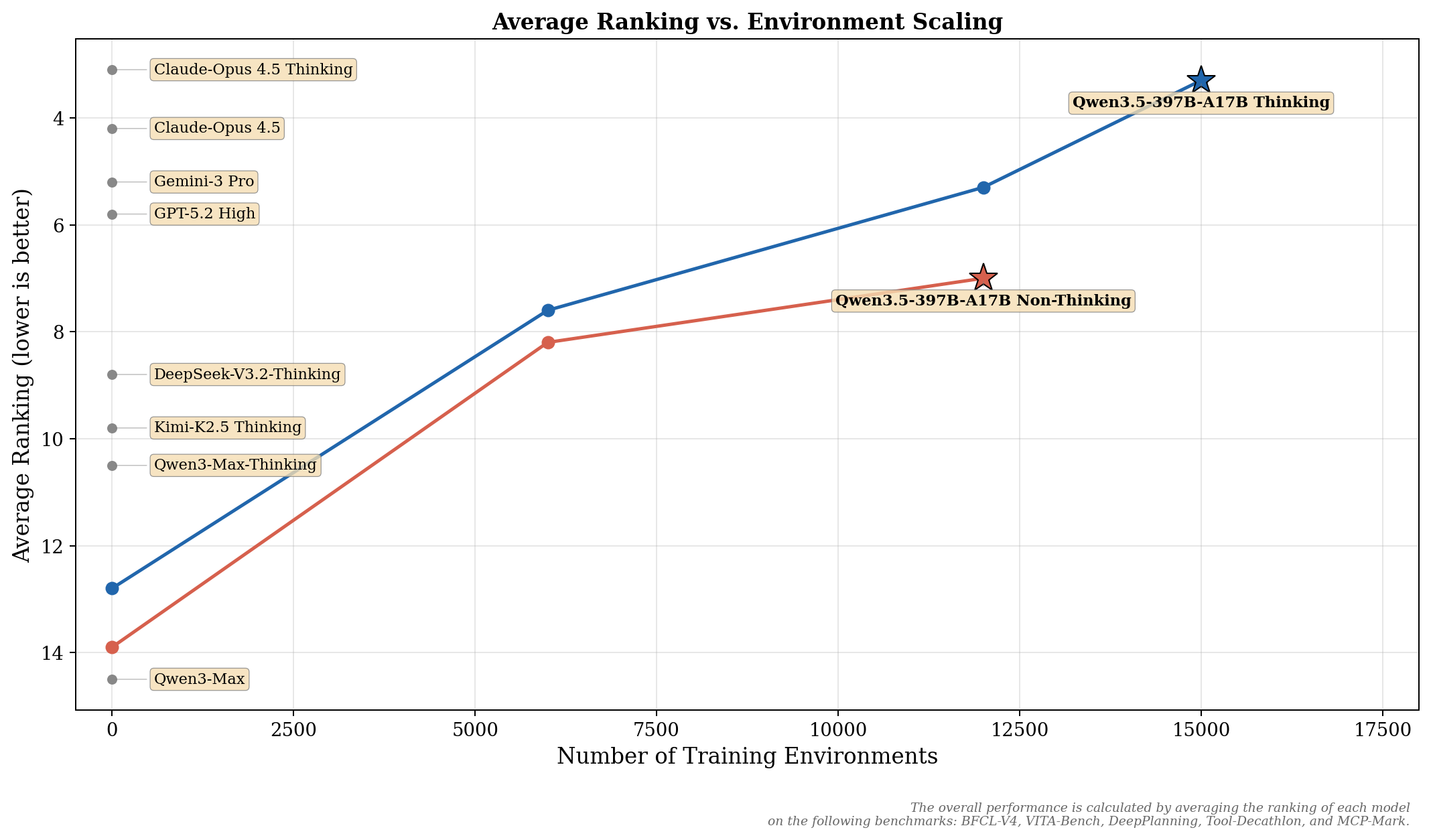

Compared to the Qwen3 series, the post-training performance gains in Qwen3.5 primarily stem from our extensive scaling of virtually all RL tasks and environments we could conceive. Our approach placed strong emphasis on increasing the difficulty and generalizability of RL environments, rather than optimizing for specific metrics or narrow categories of queries. Below, we illustrate the improvements in general agent capabilities resulting from this RL environment scaling. The overall performance is calculated by averaging the ranking of each model on the following benchmarks: BFCL-V4, VITA-Bench, DeepPlanning, Tool-Decathlon, and MCP-Mark. Additional scaling results across a broader range of tasks will be detailed in our upcoming technical report.
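The rank-averaging described above can be sketched in a few lines. This is only an illustration: the post does not specify how ties are handled or which models appear in the figure, so the sketch simply reuses the General Agent scores from the language benchmark table earlier in this post and assumes that tied scores share the better rank.

```python
# Scores copied from the General Agent rows of the benchmark table (higher is better).
MODELS = [
    "GPT5.2", "Claude 4.5 Opus", "Gemini-3 Pro",
    "Qwen3-Max-Thinking", "K2.5-1T-A32B", "Qwen3.5-397B-A17B",
]

SCORES = {
    "BFCL-V4":        [63.1, 77.5, 72.5, 67.7, 68.3, 72.9],
    "VITA-Bench":     [38.2, 56.3, 51.6, 40.9, 41.9, 49.7],
    "DeepPlanning":   [44.6, 33.9, 23.3, 28.7, 14.5, 34.3],
    "Tool Decathlon": [43.8, 43.5, 36.4, 18.8, 27.8, 38.3],
    "MCP-Mark":       [57.5, 42.3, 53.9, 33.5, 29.5, 46.1],
}


def ranks(scores):
    """Rank 1 is the best score; ties share the better rank (an assumption)."""
    return [1 + sum(other > s for other in scores) for s in scores]


def average_rank(table):
    """Average each model's rank over all benchmarks in the table."""
    per_benchmark = [ranks(row) for row in table.values()]
    return [sum(column) / len(column) for column in zip(*per_benchmark)]


avg = dict(zip(MODELS, average_rank(SCORES)))
for name, value in sorted(avg.items(), key=lambda item: item[1]):
    print(f"{name:<20} {value:.1f}")
```

A lower average rank is better. Averaging ranks rather than raw scores keeps a benchmark with a wide score spread, such as DeepPlanning, from dominating the aggregate.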

Qwen3.5 advances pretraining across three dimensions—power, efficiency, and versatility:

Power: Trained on a significantly larger scale of visual-text tokens compared to Qwen3, with enriched Chinese/English, multilingual, STEM, and reasoning data under stricter filtering. This enables cross-generation parity: Qwen3.5-397B-A17B matches the >1T-parameter Qwen3-Max-Base.

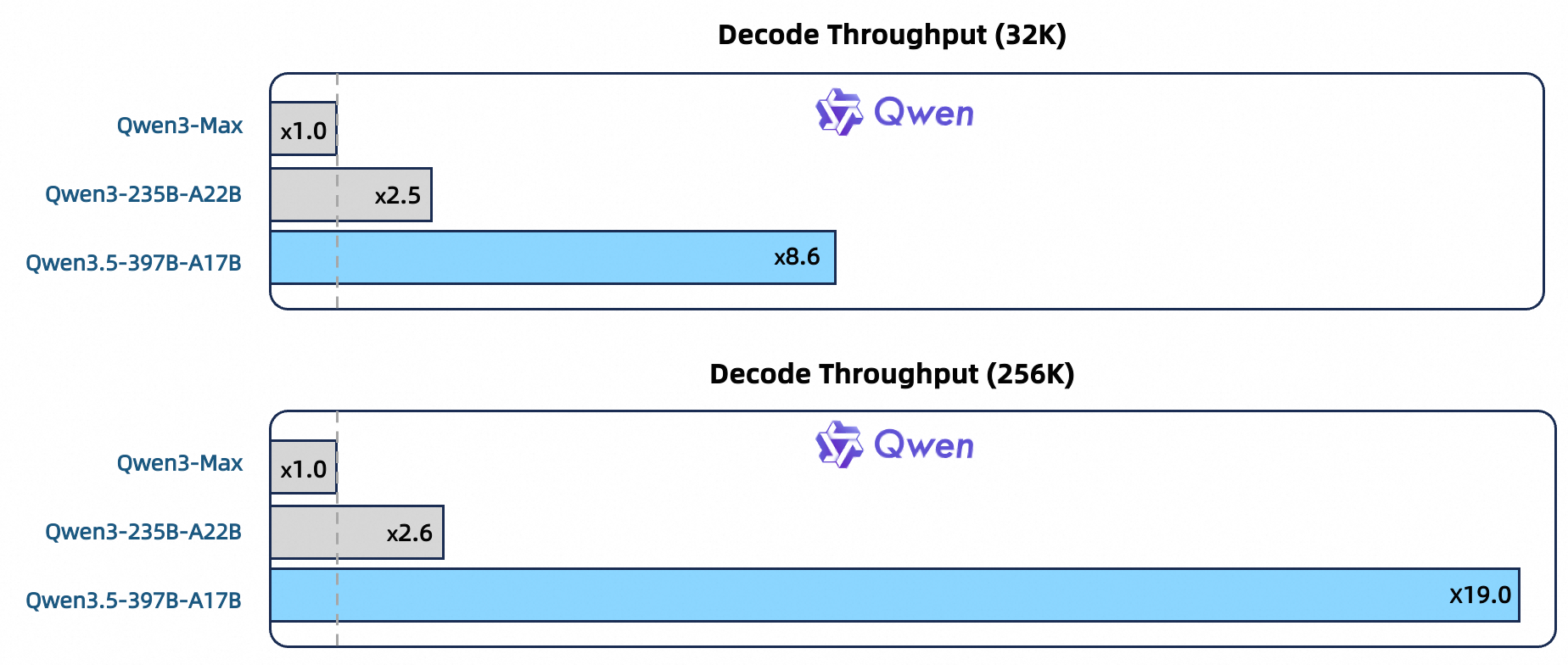

Efficiency: Built on the Qwen3-Next architecture: higher-sparsity MoE, a hybrid of Gated DeltaNet and Gated Attention, stability optimizations, and multi-token prediction. At 32k/256k context lengths, the decoding throughput of Qwen3.5-397B-A17B is 8.6x/19.0x that of Qwen3-Max with comparable performance, and 3.5x/7.2x that of Qwen3-235B-A22B.

Versatility: Natively multimodal via early text-vision fusion and expanded visual/STEM/video data, outperforming Qwen3-VL at similar scales. Multilingual coverage grows from 119 to 201 languages/dialects; a 250k vocabulary (vs. 150k) boosts encoding/decoding efficiency by 10–60% across most languages.
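To make the "higher-sparsity MoE" point under Efficiency concrete, the sketch below computes the fraction of parameters activated per forward pass from the model names. It assumes the `<total>B-A<active>B` naming convention (stated explicitly in this post only for Qwen3.5-397B-A17B) also applies to Qwen3-235B-A22B; the helper names are hypothetical.

```python
import re


def parse_moe_name(name):
    """Extract (total, active) parameter counts in billions from a model name."""
    match = re.search(r"(\d+)B-A(\d+)B", name)
    if match is None:
        raise ValueError(f"no '<total>B-A<active>B' pattern in {name!r}")
    total, active = (int(group) for group in match.groups())
    return total, active


def active_fraction(name):
    """Share of parameters activated per forward pass."""
    total, active = parse_moe_name(name)
    return active / total


fractions = {
    name: active_fraction(name)
    for name in ("Qwen3.5-397B-A17B", "Qwen3-235B-A22B")
}
for name, fraction in fractions.items():
    print(f"{name}: {fraction:.1%} of parameters active")
```

The active fraction is only one factor in decoding throughput. The reported speedups also depend on the hybrid attention design and multi-token prediction, which this arithmetic does not capture.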

Below we present the performance of the base models.

| Benchmark | Qwen3-235B-A22B | GLM-4.5-355B-A32B | DeepSeek-V3.2-671B-A37B | K2-1T-A32B | Qwen3.5-397B-A17B |
| --- | --- | --- | --- | --- | --- |
| **General Knowledge & Multilingual** | | | | | |
| MMLU | 87.33 | 86.56 | 88.11 | 87.38 | 88.61 |
| MMLU-Pro | 67.73 | 65.00 | 62.82 | 67.64 | 76.01 |
| MMLU-Redux | 87.44 | 86.86 | 87.29 | 86.65 | 89.09 |
| SuperGPQA | 42.84 | 44.56 | 43.46 | 44.86 | 57.96 |
| C-Eval | 91.82 | 85.50 | 90.48 | 91.82 | 91.82 |
| MMMLU | 81.27 | 82.26 | 83.20 | 82.26 | 85.82 |
| Include | 75.26 | 73.41 | 76.52 | 72.05 | 79.27 |
| Nova | 66.52 | 60.96 | 60.40 | 61.44 | 67.55 |
| **Reasoning & STEM** | | | | | |
| BBH | 87.95 | 87.68 | 86.03 | 89.11 | 90.98 |
| KoRBench | 50.80 | 52.80 | 54.00 | 53.84 | 54.08 |
| GPQA | 47.47 | 44.63 | 44.16 | 46.78 | 54.64 |
| MATH | 71.84 | 61.84 | 64.40 | 71.50 | 74.14 |
| GSM8K | 91.17 | 89.31 | 89.12 | 92.12 | 93.71 |
| **Coding** | | | | | |
| Evalplus | 77.60 | 69.49 | 62.68 | 71.77 | 79.32 |
| MultiPLE | 65.94 | 62.51 | 61.88 | 70.64 | 79.39 |
| SWE-agentless | 31.77 | 29.23 | 34.67 | 28.54 | 43.26 |
| CRUX-I | 64.25 | 67.63 | 63.25 | 70.50 | 71.13 |
| CRUX-O | 78.88 | 77.13 | 73.88 | 77.13 | 82.38 |

Qwen3.5 enables efficient native multimodal training via a heterogeneous infrastructure that decouples parallelism strategies across vision and language components, avoiding the inefficiencies of a uniform approach. By exploiting sparse activations to overlap computation across components, it achieves nearly 100% of the training throughput of pure-text baselines on mixed text-image-video data. Complementing this, a native FP8 pipeline applies low precision to activations, MoE routing, and GEMM operations, with runtime monitoring preserving BF16 in sensitive layers. This yields a ~50% reduction in activation memory and a >10% speedup while scaling stably to tens of trillions of tokens.
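The post does not describe how the runtime monitor decides which layers stay in BF16. As a purely hypothetical illustration of the idea, the sketch below flags a layer when too many of its sampled values would round to zero after per-tensor scaling into FP8 E4M3 (largest finite value 448, smallest positive value 2^-9). The layer names, the 1% threshold, and the underflow criterion are all assumptions, not the Qwen team's method.

```python
FP8_E4M3_MAX = 448.0                 # largest finite E4M3 value
FP8_E4M3_MIN_SUBNORMAL = 2.0 ** -9   # smallest positive E4M3 value


def underflow_fraction(values):
    """Fraction of nonzero values that would round to zero in scaled FP8 E4M3."""
    magnitudes = [abs(v) for v in values if v != 0.0]
    if not magnitudes:
        return 0.0
    # Per-tensor scaling maps the largest magnitude onto the FP8 maximum.
    scale = FP8_E4M3_MAX / max(magnitudes)
    # Anything below half the smallest subnormal rounds to zero.
    flushed = sum(1 for m in magnitudes if m * scale < FP8_E4M3_MIN_SUBNORMAL / 2)
    return flushed / len(magnitudes)


def choose_precision(values, max_underflow=0.01):
    """Keep a layer in BF16 if FP8 would flush too many values (hypothetical rule)."""
    return "bf16" if underflow_fraction(values) > max_underflow else "fp8"


# Hypothetical sampled activations for two layers.
sampled = {
    "mlp.up_proj": [1.0, -0.5, 0.25, 0.1],
    "moe.router": [1000.0] + [1e-6] * 9,   # one outlier dwarfs the rest
}
plan = {name: choose_precision(values) for name, values in sampled.items()}
print(plan)
```

A production monitor would more likely track statistics over many steps and finer-grained scaling blocks. The sketch only shows why a layer with a very wide dynamic range is a natural candidate for higher precision.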

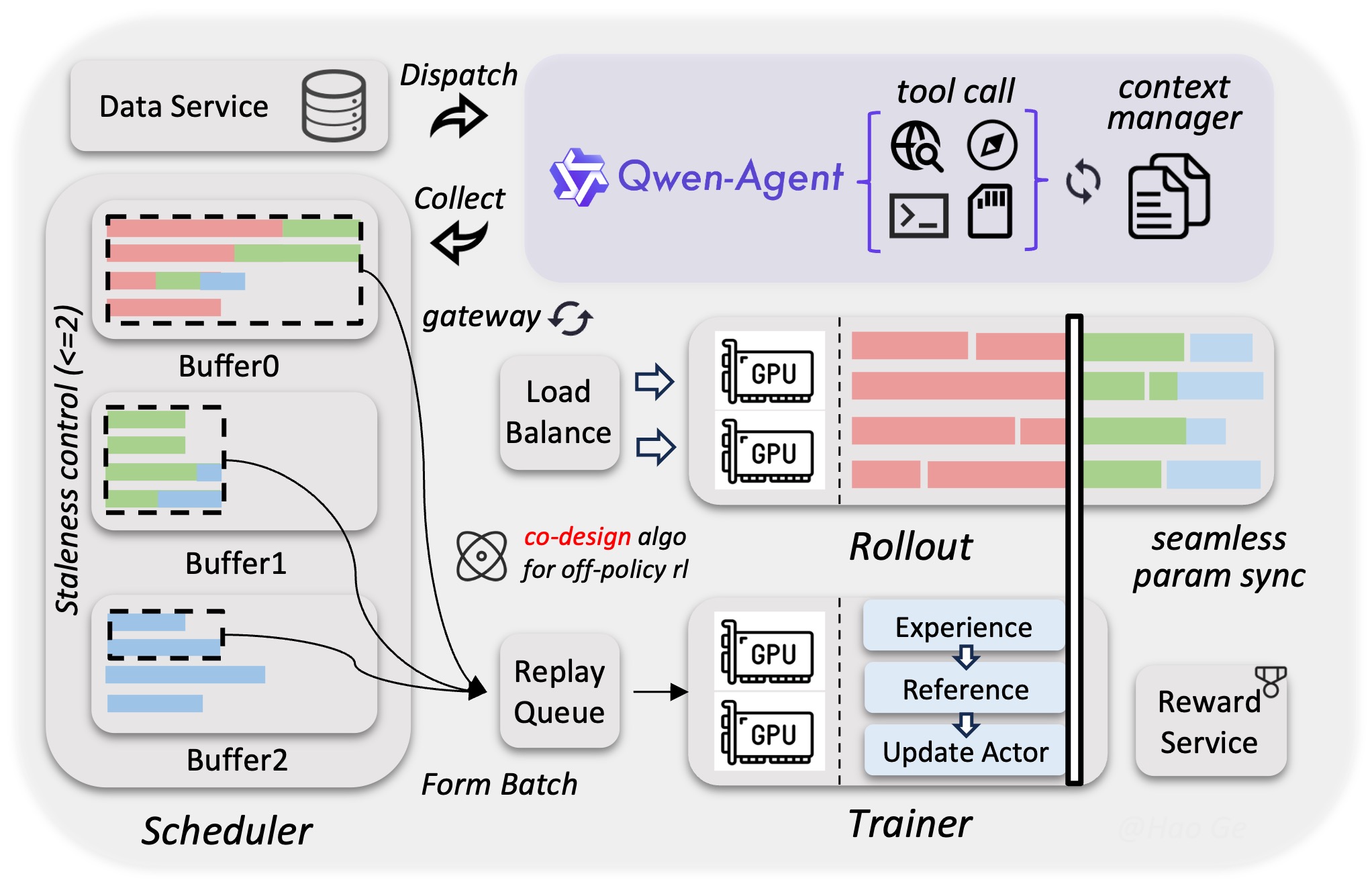

To continuously unleash the power of reinforcement learning, we built a scalable asynchronous RL framework that supports Qwen3.5 models of all sizes, spanning text, multimodal, and multi-turn settings. By adopting a fully disaggregated training-inference architecture, the framework achieves significantly improved hardware utilization, dynamic load balancing, and fine-grained fault recovery. It further optimizes throughput and enhances train–infer consistency via techniques such as FP8 end-to-end training, rollout router replay, speculative decoding, and multi-turn rollout locking. Through tight system-algorithm co-design, the framework effectively bounds gradient staleness and mitigates data skewness, preserving both training stability and performance. Moreover, it natively supports agentic workflows, facilitating seamless multi-turn interactions without framework-induced interruptions. This decoupled design enables the system to accommodate million-scale agent scaffolds and environments, substantially boosting model generalization. Collectively, these optimizations yield a 3×–5× end-to-end speedup, demonstrating superior stability, efficiency, and scalability.
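One generic way to bound staleness in an asynchronous setup is to tag each rollout with the policy version that produced it and have the trainer discard samples that lag too far behind. The sketch below shows that pattern only. The post does not say how the Qwen3.5 framework bounds gradient staleness, and the class, field, and parameter names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Rollout:
    policy_version: int  # version of the weights that generated this sample
    payload: str         # stand-in for the trajectory data


class StalenessBoundedBuffer:
    """FIFO buffer that only hands out rollouts within a version-lag bound."""

    def __init__(self, max_staleness):
        self.max_staleness = max_staleness
        self._queue = deque()

    def put(self, rollout):
        self._queue.append(rollout)

    def take_batch(self, current_version, batch_size):
        """Return (batch, dropped_count) for the trainer at current_version."""
        batch, dropped = [], 0
        while self._queue and len(batch) < batch_size:
            rollout = self._queue.popleft()
            if current_version - rollout.policy_version <= self.max_staleness:
                batch.append(rollout)
            else:
                dropped += 1  # too stale to train on
        return batch, dropped


buffer = StalenessBoundedBuffer(max_staleness=2)
for version in range(5):
    buffer.put(Rollout(policy_version=version, payload=f"trajectory-{version}"))

batch, dropped = buffer.take_batch(current_version=4, batch_size=10)
print([r.policy_version for r in batch], dropped)
```

Dropping stale samples trades some inference compute for stability. Real systems often combine a bound like this with importance-weight corrections rather than discarding data outright.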

Feel free to use Qwen3.5 on Qwen Chat. We provide three modes for users to choose from: Auto, Thinking, and Fast. In “Auto” mode, the model uses adaptive thinking and can call tools, including search and the code interpreter. In “Thinking” mode, the model thinks deeply about hard problems. In “Fast” mode, the model answers questions instantly without spending tokens on thinking.

Users can experience our flagship model, Qwen3.5-Plus, by invoking it through Alibaba Cloud Model Studio. To enable advanced capabilities such as reasoning, web search, and Code Interpreter, pass the corresponding parameters in the request, for example `enable_thinking` and `enable_search` in `extra_body`.

Example code is provided below:

```python
"""
Environment variables (per official docs):
  DASHSCOPE_API_KEY: Your API Key from https://bailian.console.aliyun.com
  DASHSCOPE_BASE_URL: (optional) Base URL for compatible-mode API.
  DASHSCOPE_MODEL: (optional) Model name; override for different models.

DASHSCOPE_BASE_URL:
  - Beijing: https://dashscope.aliyuncs.com/compatible-mode/v1
  - Singapore: https://dashscope-intl.aliyuncs.com/compatible-mode/v1
  - US (Virginia): https://dashscope-us.aliyuncs.com/compatible-mode/v1
"""
from openai import OpenAI
import os

api_key = os.environ.get("DASHSCOPE_API_KEY")
if not api_key:
    raise ValueError(
        "DASHSCOPE_API_KEY is required. "
        "Set it via: export DASHSCOPE_API_KEY='your-api-key'"
    )

client = OpenAI(
    api_key=api_key,
    base_url=os.environ.get(
        "DASHSCOPE_BASE_URL",
        "https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
    ),
)

messages = [{"role": "user", "content": "Introduce Qwen3.5."}]

model = os.environ.get(
    "DASHSCOPE_MODEL",
    "qwen3.5-plus",
)

completion = client.chat.completions.create(
    model=model,
    messages=messages,
    extra_body={
        "enable_thinking": True,
        "enable_search": False
    },
    stream=True
)

reasoning_content = ""  # Full reasoning trace
answer_content = ""  # Full response
is_answering = False  # Whether we have entered the answer phase

print("\n" + "=" * 20 + "Reasoning" + "=" * 20 + "\n")

for chunk in completion:
    if not chunk.choices:
        print("\nUsage:")
        print(chunk.usage)
        continue

    delta = chunk.choices[0].delta

    # Collect reasoning content only
    if hasattr(delta, "reasoning_content") and delta.reasoning_content is not None:
        if not is_answering:
            print(delta.reasoning_content, end="", flush=True)
        reasoning_content += delta.reasoning_content

    # Received content, start answer phase
    if hasattr(delta, "content") and delta.content:
        if not is_answering:
            print("\n" + "=" * 20 + "Answer" + "=" * 20 + "\n")
            is_answering = True
        print(delta.content, end="", flush=True)
        answer_content += delta.content
```

You can easily integrate the Bailian API with third-party coding tools, such as Qwen Code, Claude Code, Cline, OpenClaw, and OpenCode, to enable a seamless “vibe coding” experience.
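If you want to reuse the streaming logic from the example code in your own application, the reasoning/answer split can be factored into a small helper that takes any iterable of chunks. The sketch below is a minimal version under that assumption. The `split_stream` name is hypothetical, and the fake chunks built with `SimpleNamespace` only mimic the attributes the example reads (`choices`, `delta`, `reasoning_content`, `content`, `usage`); they are not SDK classes.

```python
from types import SimpleNamespace as NS


def split_stream(chunks):
    """Collect reasoning text, answer text, and usage from streamed chunks."""
    reasoning_parts, answer_parts = [], []
    usage = None
    for chunk in chunks:
        if not chunk.choices:
            # A chunk without choices carries usage information, if any.
            usage = getattr(chunk, "usage", None)
            continue
        delta = chunk.choices[0].delta
        reasoning = getattr(delta, "reasoning_content", None)
        if reasoning:
            reasoning_parts.append(reasoning)
        content = getattr(delta, "content", None)
        if content:
            answer_parts.append(content)
    return "".join(reasoning_parts), "".join(answer_parts), usage


# Fake chunks for a dry run without network access.
fake_chunks = [
    NS(choices=[NS(delta=NS(reasoning_content="Think ", content=None))]),
    NS(choices=[NS(delta=NS(reasoning_content="hard.", content=None))]),
    NS(choices=[NS(delta=NS(reasoning_content=None, content="Hello"))]),
    NS(choices=[NS(delta=NS(content=" world"))]),  # no reasoning attribute at all
    NS(choices=[], usage={"total_tokens": 7}),
]

reasoning, answer, usage = split_stream(fake_chunks)
print(reasoning)
print(answer)
print(usage)
```

With a real client, the `completion` object returned by `client.chat.completions.create(..., stream=True)` can be passed in place of `fake_chunks`. Keeping printing out of the helper makes it easy to test offline.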

Qwen3.5 provides a strong foundation for universal digital agents through its efficient hybrid architecture and native multimodal reasoning. The next leap requires shifting from model scaling to system integration: building agents with persistent memory for cross-session learning, embodied interfaces for real-world interaction, self-directed improvement mechanisms, and economic awareness to operate within practical constraints. The goal is coherent systems that function autonomously over time, transforming today’s task-bound assistants into persistent, trustworthy partners capable of executing complex, multi-day objectives with human-aligned judgment.

Feel free to cite the following article if you find Qwen3.5 helpful:

```bibtex
@misc{qwen35blog,
    title = {Qwen3.5: Accelerating Productivity with Native Multimodal Agents},
    url = {https://qwen.ai/blog?id=qwen3.5},
    author = {Qwen Team},
    month = {February},
    year = {2026}
}
```
