By Wupeng and Chenzui

This article is part of the Kubernetes Stability Assurance Handbook series. Links to the other parts are listed at the end.

Logs are an important source of information for software users and developers. They show the running status of the software and provide rich information when it does not behave as expected. Logs are also used in the development stage to debug software, which makes problems easier to locate.

The software lifecycle has two stages: development and running. Logs are generated in the development stage, where the logging code is written, and are mostly used in the running stage.

Standardizing logs in the development stage makes it possible, in the running stage, to analyze logs and configure log monitoring and alarms in a standardized way.

In the running stage, using logs in a standardized way helps you understand the runtime behavior of programs at low cost and detect exceptions in time, which in turn speeds up iteration in the development stage.

In the software lifecycle, the running stage lasts much longer than the development stage. In other words, far more time is spent using logs than writing logging code. Applying good logging specifications in the development stage therefore helps the software run normally and iterate quickly throughout its lifecycle:

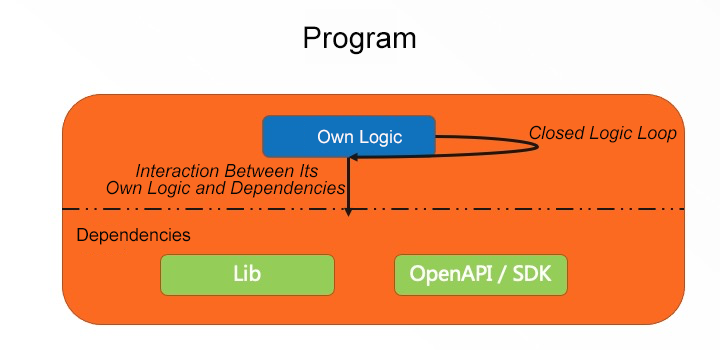

The elements of a program can be abstracted into two parts: its own logic and its dependencies. Correspondingly, there are two types of interaction: the closed logic loop within the program's own logic, and the interaction between the logic and its dependencies.

In the long run, interactions with dependencies are more likely to fail than the program's own logic. Therefore, logging should focus on the interactions between the logic and its dependencies.

In addition, log management needs to take into account who will use the logs. Generally, there are four roles:

Users treat the software as a black box and follow its current running status through logs, focusing on whether it is running normally.

Maintainers, such as site reliability engineers (SREs), also treat the software as a black box. They debug it through logs, detect exceptions in time, and analyze the causes of exceptions from the log context.

Security personnel can detect risks, such as malicious logins and abnormal deletions, by analyzing logs.

Auditors can confirm business and architecture compliance through audit logs and application logs.

Based on these scenarios, logs can be sorted into the following types, which are useful to understand in both the development and running stages:

| Category | Description |
| --- | --- |
| Application Logs | Follow internal changes of the application from a white-box perspective |
| Audit Logs | Follow the application's service status from a black-box perspective |

After understanding the concerns of log users, we recommend using the following best practices when writing logs in the development stage:

For Go, klog can be used as the logger implementation.

Structured logs use the following format, where msg describes a general event and each k=v pair describes a specific attribute of that event:

```
msg k=v k=v ... k=v
```

Example:

```
"Pod status updated" pod="kube-system/kubedns" status="ready"
```

In the development stage, the fixed structure and field semantics of structured logs help developers reason about the state of program logic, which keeps program complexity under control and makes the logic easier to understand.

In the running stage, each key k in a structured log naturally acts as an index, which makes logs easy to query and analyze. In addition, msg can be standardized so that it carries consistent event semantics; restricting what msg may express makes it more useful.

Reducing the number of log levels reduces the burden of use and maintenance.

Debug logs can be merged into the info level (in klog, through verbosity levels such as klog.V(4)).

For users and maintainers, warning and critical are vague levels that usually do not indicate any specific action to take. Like error, these two levels indicate that an unexpected event occurred while the program was running. In that case, the program either handles the event automatically or leaves it to external manual intervention. If the program handles it automatically, users and maintainers can review the logs to confirm that the handling meets the requirement. If external manual intervention is required, the error level is sufficient. In the running stage, the severity of such issues can be judged from the specific error information, such as a Service Unavailable or Unauthorized error.

Fatal combines an error log with terminating the program, much like a panic, which can make execution logic unclear during development. For example, it blurs the decision of whether the exit logic belongs in the top-level logic. If fatal is called below the top level, it can cause problems such as resource leaks and increased program complexity.
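A minimal Go sketch of keeping termination at the top level. The functions `loadConfig` and `run` and the config path are hypothetical. The fact it relies on is that `log.Fatal` (and likewise klog's Fatal functions) ends the process through an exit call, and `os.Exit` does not run deferred functions, so cleanup registered with `defer` below the top level would be skipped.

```go
package main

import (
	"fmt"
	"log/slog"
	"os"
)

// loadConfig is a hypothetical helper. It returns a wrapped error instead of
// calling a Fatal-style function, which would exit the process on the spot.
func loadConfig(path string) ([]byte, error) {
	data, err := os.ReadFile(path)
	if err != nil {
		return nil, fmt.Errorf("load config %q: %w", path, err)
	}
	return data, nil
}

// run holds the program logic. Deferred cleanup runs on every return path,
// which would not happen if loadConfig had exited the process itself.
func run(configPath string) error {
	tmp, err := os.CreateTemp("", "example-*")
	if err != nil {
		return err
	}
	defer os.Remove(tmp.Name()) // runs second: delete the temporary file
	defer tmp.Close()           // runs first: close the file handle

	if _, err := loadConfig(configPath); err != nil {
		return err
	}
	return nil
}

func main() {
	// Only the top level decides how to report the failure. A real binary
	// would typically call os.Exit(1) here, after run's cleanup has finished.
	if err := run("/nonexistent/config.yaml"); err != nil {
		slog.Error("failed to run", "err", err)
	}
}
```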

Log calls built on format strings have the following structure:

```go
klog.V(4).Infof("Got a Retry-After %ds response for attempt %d to %v", seconds, retries, url)
```

This structure couples the generic event with its specific content. It does not help reduce the cost of understanding program logic in the development stage, nor does it support querying and analysis in a standardized way in the running stage. As a result, it increases the cost of using the logs.

One way to improve it is to use a structured call, with keys that contain no spaces:

```go
klog.V(4).InfoS("got a retry-after response when requesting url", "attempt", retries, "afterSeconds", seconds, "url", url)
```

During development, sensitive information such as passwords and tokens may be written to logs. Code review should be strengthened to prevent such leaks. We also recommend configuring filters in the logger to remove sensitive information automatically. For more information, see KEP: Kubernetes System Components Logs Sanitization.

For Go, klog can be used as the logger together with the log sanitization support in kubernetes/component-base.

The running stage describes the use of logs and includes the following four phases:

Since logging services are critical to running and operating the program, we recommend using a hosted logging product, such as Alibaba Cloud Log Service (SLS), to meet the logging requirements of the running stage.

If a cluster is deployed in multiple regions with the same components, make sure that log resources are named by the same rules in every region when using a logging product. Take Alibaba Cloud SLS as an example: if logs from multiple regions are collected separately, the project and logstore names should follow the same rules in every region, so that logs from different clusters can be queried and analyzed in a unified way.
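One way to keep names consistent is to derive them from a single rule rather than typing them per region. The sketch below is hypothetical: the `k8s-stability` prefix, the `<prefix>-<region>` project pattern, and using the component name as the logstore are assumptions, not SLS requirements.

```go
package main

import "fmt"

// logNames applies one hypothetical naming rule in every region: the project
// name varies only by region, and the logstore name depends only on the
// component, so the same query can be reused against any region.
func logNames(prefix, region, component string) (project, logstore string) {
	project = fmt.Sprintf("%s-%s", prefix, region)
	logstore = component
	return project, logstore
}

func main() {
	for _, region := range []string{"cn-hangzhou", "cn-beijing"} {
		project, logstore := logNames("k8s-stability", region, "kube-apiserver")
		fmt.Printf("region=%s project=%s logstore=%s\n", region, project, logstore)
	}
}
```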

Log products generally cover all four phases. For details on using the product, see the Log Service documentation.

Alarms should meet the following objectives:

Based on the log specifications of the development stage, error-level logs can trigger uniform alarms. For instance, route these alarms to a low-priority notification channel, such as a DingTalk group for common alarms.

To detect key alarms promptly, the following aspects need to be addressed:

Defining the features of key alarms is a long-term, continuous effort. Key alarms fall into two groups: general key alarms and business key alarms.

General key alarms are loosely coupled to the business, such as machine-level key alarms for downtime, high memory pressure, and high load. Key alarms for hosted services are also general key alarms, such as panics, OOM events, and high memory pressure on master components. Alarm configurations for general key alarms can be provided as a basic service and delivered as part of the cluster.

Business key alarms are closely tied to the business and must be maintained alongside it over the long term. Focus on alarms for business interactions.

Notification channels typically include phone calls, SMS, IM groups, and Webhooks.

These channels differ in how quickly they reach people. Phone calls are the fastest, followed by SMS and then IM groups. A Webhook is essentially a connector that can deliver alarms to IM groups, SMS, or phone calls.

We recommend the following three alarm levels:

| Alarm Level | Description | Notification Channels |
| --- | --- | --- |
| Level 1 | Immediate processing | IM groups, SMS, and phone calls with level 1 alarms |
| Level 2 | Needs focus but not addressed immediately | IM groups and SMS with level 2 alarms |
| Level 3 | General exceptions, covering exceptions as much as possible, to help with alarm tracing | IM groups with level 3 alarms |
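The table can also be kept as data so that alarm routing stays consistent with it. A hypothetical Go sketch: the channel identifiers (`im-group-level1`, `sms`, `phone`) are placeholders, and in practice each would be wired to a real IM group, SMS, or phone-call integration, for example through a Webhook.

```go
package main

import "fmt"

// AlarmLevel mirrors the three alarm levels in the table above.
type AlarmLevel int

const (
	Level1 AlarmLevel = iota + 1 // immediate processing
	Level2                       // needs focus, not addressed immediately
	Level3                       // general exceptions, for alarm tracing
)

// channelsFor returns the notification channels for a level. Each level has
// its own IM group; SMS and phone calls are added as urgency increases.
// The channel identifiers are placeholders.
func channelsFor(level AlarmLevel) []string {
	if level < Level1 || level > Level3 {
		return nil // unknown level: no routing
	}
	imGroup := fmt.Sprintf("im-group-level%d", level)
	switch level {
	case Level1:
		return []string{imGroup, "sms", "phone"}
	case Level2:
		return []string{imGroup, "sms"}
	default:
		return []string{imGroup}
	}
}

func main() {
	for _, level := range []AlarmLevel{Level1, Level2, Level3} {
		fmt.Printf("level %d -> %v\n", level, channelsFor(level))
	}
}
```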

Alarm configuration is a long-term, iterative process. When configuring each alarm, work through the following table. It standardizes the configuration, makes it easier to iterate on alarm effectiveness, and prompts deeper thought about whether the configuration is effective:

| Key Issues | Analysis | Remarks |
| --- | --- | --- |
| Cluster level? | | |
| Component level? | | |
| Exceptional information source? | | |
| Accurate exception features? | | |
| Fuzzy exception features? | | |
| Blast radius? | | |
| Alert level? | | |
| Scope covered (clusters/components)? | | |

Business-dependent tools, such as OpenAPI, SDKs, and libraries, usually provide error code lists (for example, the Alibaba Cloud API error center and the error definition files in libraries). Based on this information, enumerate the status codes in the OpenAPI or SDK dependencies that have a significant negative impact on the business, such as Service Unavailable, Forbidden, and Unauthorized, and configure hierarchical alarms for them.

You are welcome to leave a comment about the stability assurance issues you encounter while using Kubernetes and the stability assurance tools or services you would like to see.

Kubernetes Stability Assurance Handbook – Part 1: Highlights

Kubernetes Stability Assurance Handbook – Part 3: Observability
