The Internet has changed the way people live, work, learn, and enjoy themselves. The rapid development of technology has driven the cloud computing market to evolve from early physical machines to virtual machines (Bare Metal Instance) and then to containers, while Internet architecture has evolved from centralized to distributed and then to cloud-native architectures.

Cloud-native technologies (architectures) have seen a sharp increase in popularity, but the concept is still interpreted differently by different people, despite the wide-ranging articles and discussions on this topic in the online community and inside Alibaba. In my opinion, we are exploring what it means to be cloud native and trying to understand and put cloud-native technologies into practice. Therefore, there is still no clear or overarching standard definition.

From the birth of the Internet to the present, we have adopted Internet thinking and then Internet+ thinking (which is essentially Internet-native thinking). When enterprises reach a certain stage, they need to develop value thinking (or value-native thinking). Likewise, cloud computing practitioners need to develop cloud-native thinking. In any technological reform or widespread adoption of new methods, abstract paradigms always precede tangible solutions.

Drawing on the definitions given by Pivotal Software and CNCF, I came to the following understanding of what it means to be cloud-native:

Being cloud-native means building an application system that runs on the cloud through both a methodology (such as that from Pivotal Software) and a technical framework (such as that from CNCF). Such an application system breaks away from traditional system building methods and makes full use of the native capabilities of the cloud to maximize its value. It adopts the characteristics of cloud-native architectures in order to rapidly empower businesses.

This abstract interpretation can be broken down into four questions:

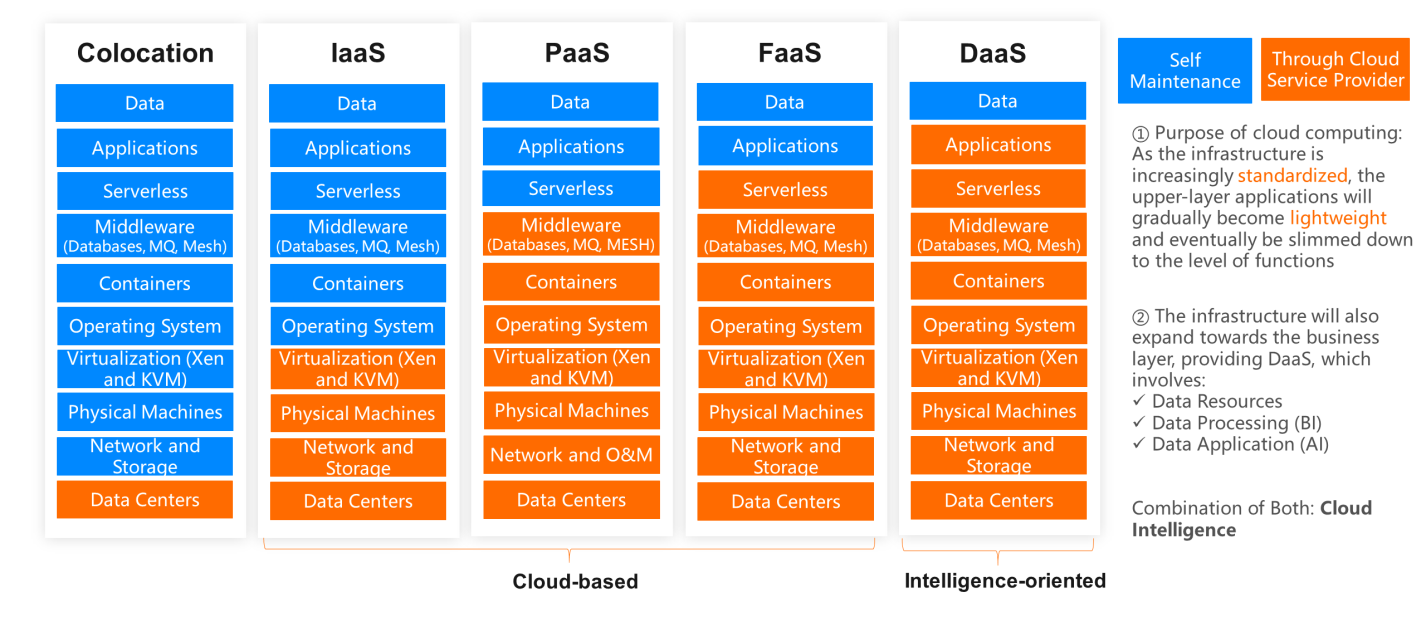

The emergence of cloud computing is closely related to the development and maturity of virtualization technology. Cloud computing is an emerging IT infrastructure delivery method that relies on virtualization to standardize, abstract, and scale IT hardware resources and software components into product-like services billed on a pay-as-you-go basis. In a sense, this reconstructs the supply chain of the IT industry. Its service delivery models include Infrastructure as a Service (IaaS), Platform as a Service (PaaS), Function as a Service (FaaS), and Data as a Service (DaaS).

IaaS

IaaS provides the fundamental, underlying capabilities of cloud computing, such as computing, storage, networking, and security.

PaaS

PaaS generally refers to the high-level domain- or scenario-oriented services that are built on top of the underlying cloud capabilities, such as cloud databases, cloud object storage, middleware (including caches, message queues, load balancing, service mesh, and container platforms), and application services.

Serverless

Serverless computing lets users run applications without purchasing or managing infrastructure, while services scale elastically under pay-as-you-go billing. It is also the most extreme form of PaaS evolution. Currently, three types of solutions are available under this architecture:

DaaS

By offering data as a service, DaaS extends the architecture to upper-layer applications. Combined with AI and other cloud services, it can deliver various high-value services, including big data-based decision making, video and facial recognition, deep learning, and scenario-based semantic understanding. This is also the core strength of the cloud of the future.

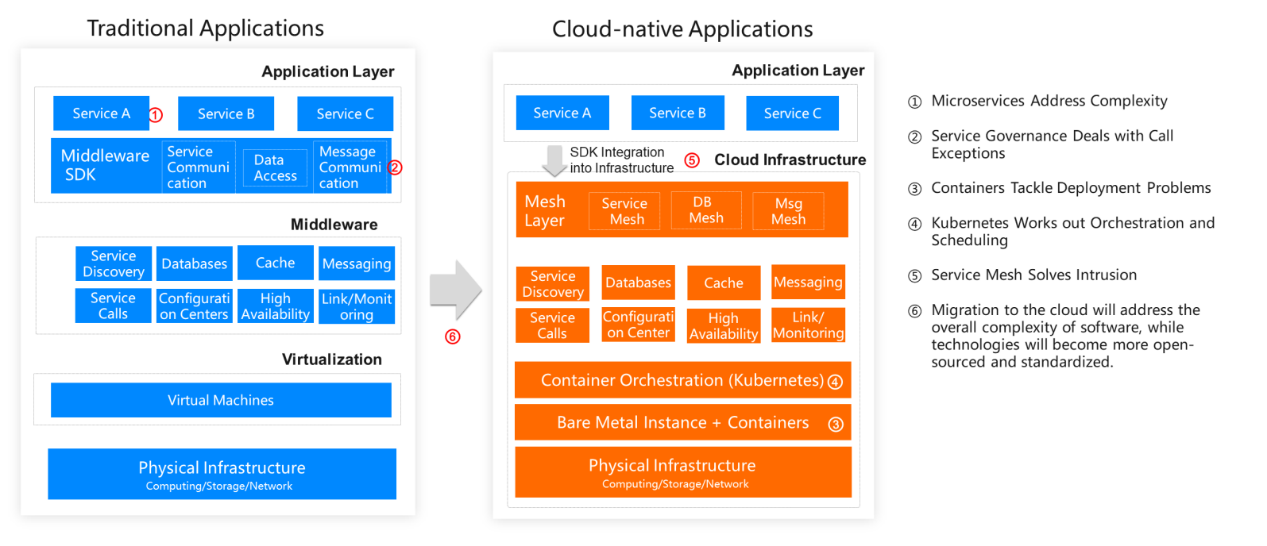

As technologies and open-source solutions continue to develop and cloud service providers offer more products and capabilities, every layer of today's technology architecture, from physical machines, virtual machines, and containers to middleware and the serverless architecture, is gradually being standardized. The more standardized a layer becomes, the more value it can add. Relatively common technologies that are not directly related to business (such as service mesh) have also been standardized and incorporated into the underlying infrastructure. Each time a layer of the technology architecture is standardized, some inefficient and tedious tasks disappear. In addition, the application layer offers emerging technologies such as AI, which help enterprises reduce the cost of exploring suitable solutions, speed up the verification and delivery of new technologies, and truly empower the business.

Meanwhile, users can choose the cloud products that best fit their needs, just like building with LEGO blocks, and use readily available resources to avoid repetitive work. This greatly improves efficiency at each stage of software and service development and accelerates the implementation of applications and architectures. Users who are already on the cloud can realize large cost savings by consuming resources as needed and scaling out at any time.
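To make the cost argument concrete, here is a back-of-the-envelope sketch that compares capacity provisioned for peak load all day with capacity consumed only as needed. The hourly demand profile and the unit price are made-up illustrative numbers, not real cloud prices, and the model ignores factors such as reserved-instance discounts and scaling lag.

```python
# Hypothetical demand profile: number of instances needed in each of 24 hours.
HOURLY_DEMAND = [2] * 8 + [6] * 4 + [10] * 4 + [6] * 4 + [2] * 4
PRICE_PER_INSTANCE_HOUR = 0.10  # assumed illustrative price, not a real quote


def fixed_capacity_cost(demand, price):
    """Cost when capacity is sized for the peak hour and kept all day."""
    return max(demand) * len(demand) * price


def on_demand_cost(demand, price):
    """Cost when each hour pays only for the instances it actually uses."""
    return sum(demand) * price


fixed = fixed_capacity_cost(HOURLY_DEMAND, PRICE_PER_INSTANCE_HOUR)
elastic = on_demand_cost(HOURLY_DEMAND, PRICE_PER_INSTANCE_HOUR)
print(f"fixed: {fixed:.2f}, on demand: {elastic:.2f}, saved: {1 - elastic / fixed:.0%}")
```

With these assumed numbers, paying for 112 instance-hours instead of 240 cuts the bill roughly in half; the wider the gap between peak and average demand, the larger the saving.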

The preceding section discussed the strong capabilities of the cloud. Compared with traditional applications, new cloud applications need to adapt to these capabilities at every stage of the application lifecycle: software architecture design, development, build, deployment, delivery, monitoring, and O&M. I will discuss this process in terms of the issues users must face.

Great architectures are not created all at once; they come into being as systems evolve and progress over time. Therefore, it is meaningless to talk about architectural design in isolation from the problems it solves. The purpose of architectural evolution must be to solve a specific problem. Addressing the problems below helps us better understand the design of the cloud-native architecture.

Individual microservice applications are well suited to the cloud-native architecture: each one has low complexity and can rely on a strong underlying system for a comprehensive set of monitoring, governance, deployment, and scheduling functions. However, from the perspective of the overall system, complexity does not decrease. Instead, enterprises must bear the high cost of building a robust underlying system with strong architectural and O&M capabilities.

In addition, the technology stacks and middleware systems that enterprises use to achieve these functions are closed and highly proprietary, which makes it difficult to meet all business needs (as is the case with Alibaba). Cloud hosting can reduce the overall complexity of such a project: the cloud service provider takes over the complex underlying system and delivers it as a managed service. Projects will eventually evolve toward an infrastructure-free design that uses declarative YAML or JSON code to orchestrate the underlying infrastructure, middleware, and other resources. In this way, the cloud can meet every need of an application, and enterprises will eventually embrace an open and standard cloud technology system.
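Declarative orchestration means describing the desired end state and letting a controller work out the steps to reach it. The following is a minimal sketch of that idea, assuming a hypothetical in-memory inventory that stands in for real cloud resources; it mimics the reconcile loop that tools such as Kubernetes controllers use, but the resource names, fields, and functions are illustrative and not any provider's API.

```python
import json

# Desired state, as it might be written in a JSON (or YAML) manifest.
# Resource names and fields are hypothetical.
DESIRED = json.loads("""
{
  "cache": {"type": "redis", "replicas": 2},
  "queue": {"type": "mq", "replicas": 1}
}
""")


def reconcile(desired, actual):
    """Return the actions needed to turn `actual` into `desired`."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(("create", name, spec))
        elif actual[name] != spec:
            actions.append(("update", name, spec))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name, actual[name]))
    return actions


def apply_actions(actions, actual):
    """Apply the planned actions to the in-memory inventory."""
    for verb, name, spec in actions:
        if verb == "delete":
            del actual[name]
        else:
            actual[name] = dict(spec)


actual = {"cache": {"type": "redis", "replicas": 1}, "old-db": {"type": "mysql", "replicas": 1}}
plan = reconcile(DESIRED, actual)
for step in plan:
    print(step)
apply_actions(plan, actual)
print(reconcile(DESIRED, actual))  # converged: no actions left
```

The user only edits the manifest; running the same reconcile step repeatedly is safe because, once the actual state matches, the plan is empty.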

We introduced DevOps to address the problem of the continuous delivery of applications.

DevOps is a concept everyone is familiar with. I see it as a set of values, principles, methods, practices, and tools designed to achieve fast delivery and continuous optimization. Its core advantages are closing the gap between R&D and O&M, expediting software delivery, and improving software quality. The chart below shows a DevOps pipeline.

The platforms involved in this process include GitHub, Travis CI, Artifactory, Spinnaker, FIAAS, Kubernetes, Prometheus, Datadog, Sumo Logic, and ELK.
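A pipeline like this chains stages so that each one consumes the previous stage's output and any failure stops the release. Here is a minimal fail-fast sketch of that flow; the stage names loosely mirror the kinds of tools listed above, but every function is a hypothetical placeholder, not an integration with GitHub, Travis CI, Spinnaker, or Kubernetes.

```python
from typing import Callable, Dict, List, Tuple

Stage = Tuple[str, Callable[[Dict], bool]]


def run_pipeline(stages: List[Stage], context: Dict) -> List[str]:
    """Run stages in order and stop at the first failure (fail fast).

    Returns the names of the stages that completed successfully.
    """
    completed = []
    for name, step in stages:
        if not step(context):
            context["failed_stage"] = name
            break
        completed.append(name)
    return completed


# Hypothetical stages; each reads and writes the shared context.
def build(ctx):
    ctx["artifact"] = f"app-{ctx['commit']}.tar.gz"
    return True


def unit_test(ctx):
    return ctx.get("tests_pass", True)


def publish(ctx):
    ctx["published"] = ctx["artifact"]
    return True


def deploy(ctx):
    ctx["deployed"] = ctx["published"]
    return True


PIPELINE = [("build", build), ("test", unit_test), ("publish", publish), ("deploy", deploy)]

ok = run_pipeline(PIPELINE, {"commit": "abc123"})
broken = run_pipeline(PIPELINE, {"commit": "def456", "tests_pass": False})
print(ok)      # every stage ran
print(broken)  # stopped after "build" because tests failed
```

Stopping at the first failed stage is what keeps a broken build from ever reaching the deploy step.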

The key to truly implementing and practicing DevOps lies in the answers to the following questions:

In essence, DevOps supports O&M services. By bringing a series of automation tools for new technologies and development into O&M, it moves development closer to the production environment and manages the entire development and O&M process while preserving freedom and innovation. When monitoring, fault prevention, and fault control tools are used together with function switches, they help achieve a balance between user experience and fast delivery.
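Function switches (often called feature flags) let a team ship code quickly while limiting the blast radius of a bad release. The sketch below assumes a hypothetical in-process switch with a percentage rollout and an automatic kill switch driven by an error rate reported by monitoring; the 5% threshold and the SHA-256 bucketing are illustrative choices, not the behavior of any specific product.

```python
import hashlib


class FeatureSwitch:
    """A minimal feature switch with percentage rollout and an automatic kill switch."""

    def __init__(self, name: str, rollout_percent: int, max_error_rate: float = 0.05):
        self.name = name
        self.rollout_percent = rollout_percent
        self.max_error_rate = max_error_rate
        self.killed = False

    def bucket(self, user_id: str) -> int:
        """Map a user to a stable bucket in [0, 100) so the decision never flaps."""
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode()).hexdigest()
        return int(digest, 16) % 100

    def is_enabled(self, user_id: str) -> bool:
        if self.killed:
            return False
        return self.bucket(user_id) < self.rollout_percent

    def report_error_rate(self, error_rate: float) -> None:
        """Fed by monitoring; turns the feature off when errors exceed the limit."""
        if error_rate > self.max_error_rate:
            self.killed = True


switch = FeatureSwitch("new-checkout", rollout_percent=20)
users = [f"user-{i}" for i in range(1000)]
exposed = sum(switch.is_enabled(u) for u in users)
print(f"{exposed} of {len(users)} users see the new feature")
switch.report_error_rate(0.12)  # monitoring detects an error spike
print(any(switch.is_enabled(u) for u in users))  # the feature is now off for everyone
```

Delivery speed comes from shipping the code dark and widening `rollout_percent` gradually; user experience is protected because monitoring can switch the feature off without a redeploy.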

If, in the future, technology professionals need to consider only business solutions and business code, they will need to quickly integrate the abundant technical products and cloud vendor platforms already on the market. This lets them focus on finding solutions and connecting business with technology to satisfy increasingly diverse and complex business needs. In terms of O&M, the cloud hides the complexity of the infrastructure, and O&M work shifts toward an O&M mid-end and toolchain development for large-scale O&M. This allows practitioners to focus on cost, efficiency, and stability while ensuring the steady progress of application development.

- Ease of management: To move from manual to automatic maintenance, applications support automatic exception analysis and diagnosis, with no need to log on to servers.
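Automatic exception analysis can start as simply as grouping error logs centrally instead of reading them on each server. Here is a minimal sketch, assuming logs from all instances have already been shipped to one place (for example, a log platform such as ELK) and that each error line names its exception type; the log format and sample lines are hypothetical.

```python
import re
from collections import Counter
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical log format: "<timestamp> <host> ERROR <ExceptionType>: <message>"
ERROR_LINE = re.compile(r"^\S+ (?P<host>\S+) ERROR (?P<exc>[\w.]+): (?P<msg>.*)$")


def summarize_errors(lines: Iterable[str]) -> List[Tuple[str, int, int]]:
    """Group error lines by exception type.

    Returns (exception type, occurrences, distinct hosts), most frequent first,
    so the most widespread problem is diagnosed first.
    """
    counts: Counter = Counter()
    hosts: Dict[str, Set[str]] = {}
    for line in lines:
        match = ERROR_LINE.match(line)
        if not match:
            continue  # skip INFO/WARN lines and anything unparseable
        exc = match["exc"]
        counts[exc] += 1
        hosts.setdefault(exc, set()).add(match["host"])
    return [(exc, n, len(hosts[exc])) for exc, n in counts.most_common()]


LOGS = [
    "2024-01-01T10:00:00 web-1 INFO request ok",
    "2024-01-01T10:00:01 web-1 ERROR TimeoutError: upstream payment service",
    "2024-01-01T10:00:02 web-2 ERROR TimeoutError: upstream payment service",
    "2024-01-01T10:00:03 web-2 ERROR KeyError: 'user_id'",
    "2024-01-01T10:00:04 web-3 ERROR TimeoutError: upstream payment service",
]

for exc, n, host_count in summarize_errors(LOGS):
    print(f"{exc}: {n} occurrence(s) on {host_count} host(s)")
```

Counting distinct hosts as well as occurrences helps separate a fleet-wide dependency problem (the timeout on three hosts) from a local bug.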

Cloud native computing generally includes the following three dimensions: cloud native infrastructure, software architecture, and delivery and O&M systems. This article will focus on software architecture.

"Software architecture refers to the fundamental structures of a software system and the discipline of creating such structures and systems." — From Wikipedia.

In my understanding, software architecture mainly aims to address these challenges:

1. Complexity control. Because of business complexity, R&D teams need better means to overcome cognitive barriers and to divide labor and collaborate more effectively, which lets them solve the targeted problems better.

2. Uncertainty handling. The demands of a rapidly developing business change constantly. Even a perfect software architecture will inevitably need adjusting as R&D teams change over time. In Design Patterns: Elements of Reusable Object-Oriented Software and Building Microservices, "decoupling" is one of the most frequent words. The authors want readers to focus on separating certainty from uncertainty in the architecture to improve its stability and adaptability.

3. Systemic risk management. Both the certain and the uncertain risks in the system should be well managed, so that we avoid known pitfalls and are prepared for unknown risks.
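In code, separating certainty from uncertainty (the decoupling stressed in point 2) usually means letting the stable business rule depend on an abstraction while the volatile part sits behind it. The sketch below shows this with a `typing.Protocol`; the discount rule and both notification channels are hypothetical examples, not taken from either book.

```python
from typing import List, Protocol


class Notifier(Protocol):
    """Uncertain part: how customers are notified is likely to change."""

    def send(self, customer: str, message: str) -> None:
        ...


class SmsNotifier:
    """One concrete channel; it keeps an outbox instead of calling a real SMS API."""

    def __init__(self) -> None:
        self.outbox: List[str] = []

    def send(self, customer: str, message: str) -> None:
        self.outbox.append(f"SMS to {customer}: {message}")


class EmailNotifier:
    """Another channel that can replace SmsNotifier without touching OrderService."""

    def __init__(self) -> None:
        self.outbox: List[str] = []

    def send(self, customer: str, message: str) -> None:
        self.outbox.append(f"Email to {customer}: {message}")


class OrderService:
    """Certain part: the business rule depends only on the Notifier abstraction."""

    def __init__(self, notifier: Notifier) -> None:
        self.notifier = notifier

    def place_order(self, customer: str, amount: float) -> float:
        # Hypothetical rule: orders of 100 or more get a 10% discount.
        total = round(amount * 0.9, 2) if amount >= 100 else amount
        self.notifier.send(customer, f"order confirmed, total {total}")
        return total


sms = SmsNotifier()
email = EmailNotifier()
OrderService(sms).place_order("alice", 120)
OrderService(email).place_order("bob", 50)
print(sms.outbox)
print(email.outbox)
```

When the notification channel changes, only a new `Notifier` implementation is added; the pricing rule and its tests stay untouched.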

The cloud native application architecture aims to build a loosely coupled, elastic, and resilient distributed application architecture. This allows us to better adapt to the needs of changing and developing business and ensure system stability. In this article, I'd like to share my observations and reflections in this field.
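One common way to make a distributed application resilient, and to prepare for failures nobody predicted, is the circuit breaker pattern: after repeated failures, callers stop hitting the unhealthy dependency for a cool-down period and fail fast instead of piling up requests. This is a minimal sketch of that pattern, not a production library; the failure threshold, cool-down, and injectable clock are illustrative assumptions.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func: Callable, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("dependency marked unhealthy; failing fast")
            # Cool-down elapsed (half-open): allow a trial call; one more failure reopens.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # a success closes the circuit
        return result


# Usage with a fake clock so the example runs instantly.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10, clock=lambda: now[0])


def flaky():
    raise ConnectionError("service down")


for _ in range(2):
    try:
        breaker.call(flaky)
    except ConnectionError:
        pass  # two real failures open the circuit
```

After the second failure, further calls raise `CircuitOpenError` immediately until the cool-down passes, which keeps one failing service from exhausting the threads and connections of its callers.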

New technologies such as cloud computing, big data, and artificial intelligence (AI) are rapidly changing our world. Their enormous influence has shifted from quantitative changes to qualitative changes. For any enterprise to survive in today's world, it must adapt through digital transformation. According to IDC, among the world's 1,000 largest enterprises, 67% have escalated digital transformation to the level of enterprise strategy, and many Chinese enterprises are incorporating digital transformation into their core strategies. As migration to the cloud becomes the trend in the business world, it is time to make full use of open-source technologies and cloud services when building software services. For enterprises, embracing cloud computing and cloud-native technology and using them to accelerate innovation will be key to successful digital transformation.

This article discusses why it is essential for enterprises to embrace cloud computing and cloud-native technology to accelerate innovation and digital transformations.

This article is intended to help you ensure the high availability of your business systems in cloud-native environments through the following three approaches:

Serverless Workflow is a fully managed serverless cloud service used to coordinate the execution of multiple distributed tasks. It simplifies the tedious work required to develop and run business processes, such as task coordination, state management, and error handling, so you can focus on developing business logic.

You can orchestrate distributed tasks to run in sequence, in branches, or in parallel. The service reliably coordinates task execution in the defined order, tracks the state transitions of each task, and runs custom retry logic when necessary to ensure that the workflow completes smoothly.
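The coordination just described (steps in sequence, branches, parallel execution, and retries on failure) can be sketched in a few lines. This is a hypothetical, in-process illustration of the concepts built on Python's `concurrent.futures`; it is not the Serverless Workflow flow definition language or SDK, and the step names and retry settings are assumptions.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

Step = Callable[[Dict], Dict]


def with_retry(step: Step, attempts: int = 3, delay: float = 0.0) -> Step:
    """Wrap a step with simple retry logic (attempts >= 1, fixed delay between tries)."""
    def run(state: Dict) -> Dict:
        for attempt in range(1, attempts + 1):
            try:
                return step(state)
            except Exception:
                if attempt == attempts:
                    raise
                time.sleep(delay)
    return run


def sequence(*steps: Step) -> Step:
    """Run steps one after another, passing the state along."""
    def run(state: Dict) -> Dict:
        for step in steps:
            state = step(state)
        return state
    return run


def choice(predicate: Callable[[Dict], bool], if_true: Step, if_false: Step) -> Step:
    """Branch on the current state."""
    def run(state: Dict) -> Dict:
        return if_true(state) if predicate(state) else if_false(state)
    return run


def parallel(*branches: Step) -> Step:
    """Run branches concurrently on copies of the state and merge their results."""
    def run(state: Dict) -> Dict:
        with ThreadPoolExecutor() as pool:
            results = list(pool.map(lambda branch: branch(dict(state)), branches))
        merged = dict(state)
        for result in results:
            merged.update(result)
        return merged
    return run


# Hypothetical order-processing steps.
calls = {"charge": 0}


def validate(state):
    return {**state, "valid": state["amount"] > 0}


def charge(state):
    calls["charge"] += 1
    if calls["charge"] < 2:  # fail once to exercise the retry logic
        raise ConnectionError("payment gateway timeout")
    return {**state, "charged": True}


def reserve_stock(state):
    return {**state, "reserved": True}


def reject(state):
    return {**state, "rejected": True}


def notify(state):
    return {**state, "notified": True}


workflow = sequence(
    validate,
    choice(lambda s: s["valid"], parallel(with_retry(charge), reserve_stock), reject),
    notify,
)

print(workflow({"amount": 42}))
```

Keeping each step a plain function that takes and returns state is what lets the coordinator own ordering, branching, and retries, so business code never has to.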

Alibaba Cloud Container Service for Kubernetes (ACK) integrates virtualization, storage, networking, and security capabilities. ACK allows you to deploy applications in high-performance and scalable containers and provides full lifecycle management of enterprise-class containerized applications.

For years, IT organizations have maintained development and operations teams as separate entities. Despite sharing the same organizational goals, these teams worked in parallel and were often at odds with each other. Many organizations recognized the urgent need for a new, unified model of software development and delivery, which evolved into the DevOps methodology.

More Posts by Alibaba Clouder