By Guyi and Shimian

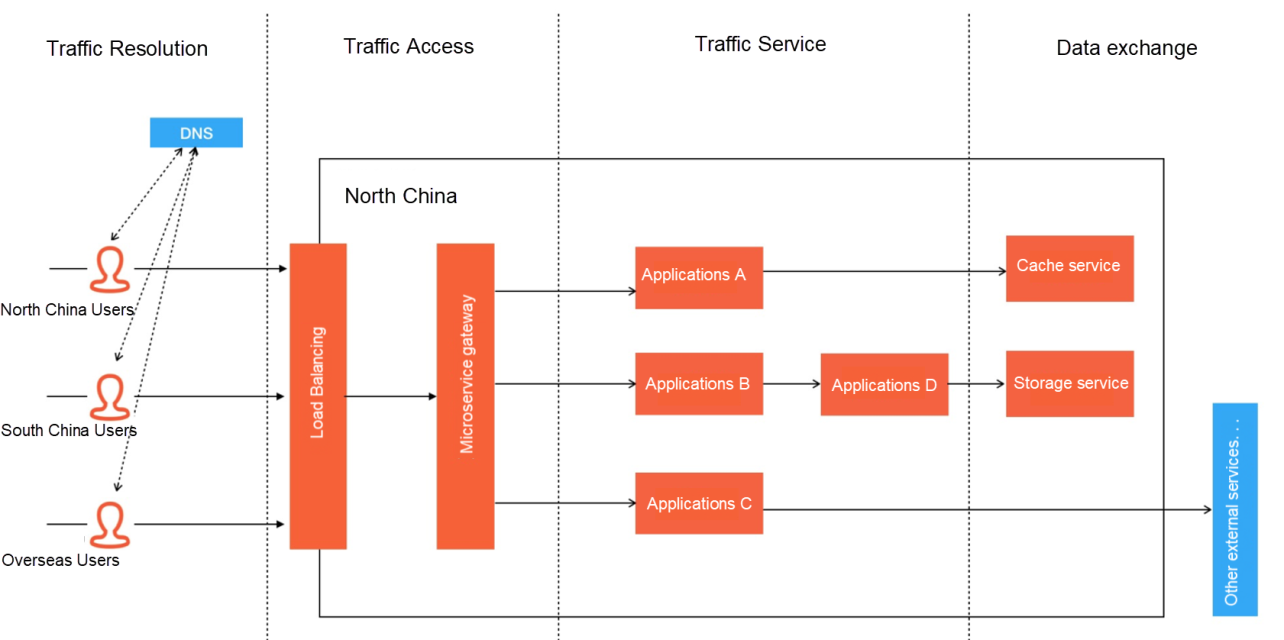

The previous two articles covered traffic analysis, traffic access, and traffic service. This article looks at how data exchange affects online traffic, and then introduces two common preventive measures: comprehensive-procedure stress testing and safe production drills. Let's start with data exchange.

Once traffic has passed through the application cluster, its final destination is usually a data service: reading data from a cache, storing order records in a database, or exchanging transaction data with peripheral payment services. Whenever an application depends on external services for data, those services can become unavailable. Common situations include an avalanche effect caused by heavy dependencies or data overload, and large-scale paralysis caused by an entire data center becoming unavailable. A recent example is the large-scale outage at Meta Platforms, Inc. (formerly Facebook, Inc.), in which a faulty configuration was issued and cut off the backbone routers between data centers.

Internet companies in China handle massive amounts of data. Once a business grows to a certain scale, it runs into cache or database capacity problems. Take MySQL as an example. When a single table holds tens of millions of rows, relational queries against it put pressure on the database's I/O and CPU. At that point, we need to consider database sharding and table partitioning. However, sharding and partitioning are not the whole story: they introduce new problems such as distributed transactions, joint queries, and cross-database joins. Solving each of these by hand quickly gets thorny. Fortunately, there are many excellent frameworks for these problems, such as Sharding-JDBC from the community and Alibaba Cloud's PolarDB-X, which was recently open-sourced.
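To make the routing idea concrete, here is a minimal sketch of hash-based sharding. It is not how Sharding-JDBC or PolarDB-X work internally; the shard counts, the naming scheme (`order_db_N`, `t_order_N`), and the choice of `user_id` as the shard key are all assumptions for illustration.

```python
import zlib
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

DB_COUNT = 4         # hypothetical number of physical databases
TABLES_PER_DB = 8    # hypothetical number of order tables per database


def route_order(user_id: int) -> Tuple[str, str]:
    """Map a shard key to a (database, table) pair."""
    # crc32 is stable across processes, unlike Python's salted str hash.
    slot = zlib.crc32(str(user_id).encode("utf-8")) % (DB_COUNT * TABLES_PER_DB)
    db_index, table_index = divmod(slot, TABLES_PER_DB)
    return f"order_db_{db_index}", f"t_order_{table_index}"


def group_by_shard(user_ids: Iterable[int]) -> Dict[Tuple[str, str], List[int]]:
    """Group keys by shard to show how many shards one query would touch."""
    groups: Dict[Tuple[str, str], List[int]] = defaultdict(list)
    for user_id in user_ids:
        groups[route_order(user_id)].append(user_id)
    return dict(groups)


if __name__ == "__main__":
    print(route_order(10086))
    # A query over many users fans out to several shards, which is where
    # cross-database joins and distributed transactions come from.
    print(len(group_by_shard(range(1000))), "shards touched")
```

A query that filters on the shard key hits exactly one table, while anything that spans users has to be fanned out and merged, which is the work the frameworks above take over.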

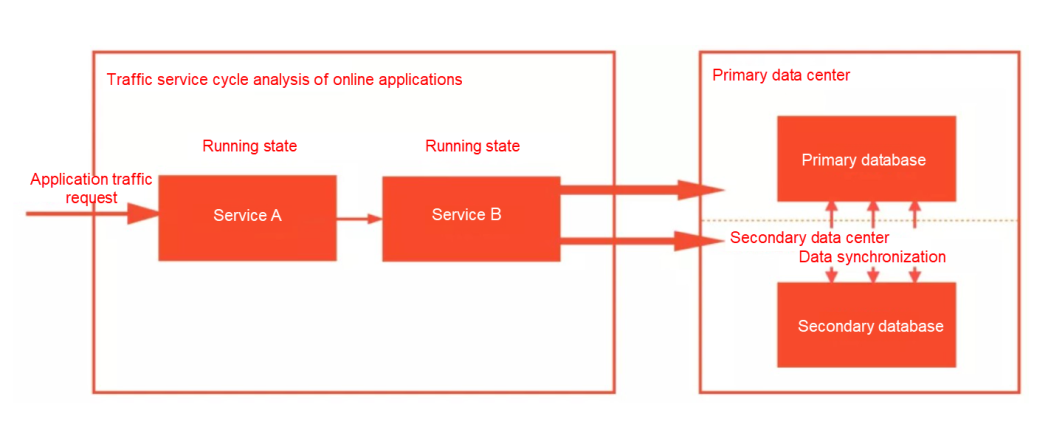

To guard against an entire data center becoming unavailable, a common approach is to build high availability through disaster recovery and multi-active deployment. Disaster recovery at the data center level usually includes zone-disaster recovery and geo-disaster recovery. However, the services deployed in a data center are likely to be distributed services, and the disaster recovery strategy differs slightly for each one. This article uses MySQL as a common example to describe some general ideas.

The core of disaster recovery is balancing two properties in CAP: C (data consistency) and A (service availability). According to CAP theory, when a network partition occurs we can only guarantee either CP or AP, so the strategy has to be chosen according to the business form. For IDC-level zone-disaster recovery, RT between data centers is generally small, so data consistency can be satisfied to the greatest extent. However, if the data center hosting the master node of a Paxos group goes down (Paxos is a consensus algorithm used in some MySQL high-availability deployments), a new master has to be elected. If the cluster is large, the database may be unavailable for tens of seconds during the election.
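The CP side of this trade-off rests on majority quorums. The sketch below is only the arithmetic behind that idea, not MySQL's or Paxos's actual implementation; the function names are hypothetical.

```python
def has_quorum(acks: int, cluster_size: int) -> bool:
    """A write or an election succeeds only with a strict majority of nodes."""
    return acks > cluster_size // 2


def tolerated_failures(cluster_size: int) -> int:
    """Largest number of nodes that can be lost while a majority remains."""
    return (cluster_size - 1) // 2


if __name__ == "__main__":
    # Three replicas spread over three zones: losing one zone keeps a majority.
    print(has_quorum(acks=2, cluster_size=3))
    # Losing two zones does not, so a CP system stops accepting writes.
    print(has_quorum(acks=1, cluster_size=3))
    print(tolerated_failures(5))
```

With three replicas in three zones, one zone can fail without losing consistency, but the surviving nodes still need time to agree on a new master, which is the unavailability window mentioned above.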

For geo-disaster recovery, strong data consistency cannot be guaranteed because the data link is too long. The business therefore has to be transformed so that it is split horizontally at the business level. For example, the South China Data Center serves the South China customer group, and the North China Data Center serves the North China customer group. The sharded data is then brought into consistency across data centers through data synchronization.
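The horizontal split can be pictured as a routing table keyed by customer region. This is a minimal sketch under assumptions: the data center names, the `Request` type, and the failover rule are hypothetical and do not come from any specific product.

```python
from dataclasses import dataclass
from typing import Set

# Hypothetical mapping from a customer's home region to its data center.
REGION_TO_DC = {
    "south": "dc-south-china",
    "north": "dc-north-china",
}


@dataclass
class Request:
    customer_id: str
    home_region: str  # assumed to be known from the customer's profile


def pick_data_center(req: Request, healthy: Set[str]) -> str:
    """Route to the home data center, falling back to another healthy one."""
    primary = REGION_TO_DC[req.home_region]
    if primary in healthy:
        return primary
    # Assumed failover rule: the fallback serves synchronized data, which
    # may lag behind the failed primary because synchronization is async.
    for dc in REGION_TO_DC.values():
        if dc in healthy:
            return dc
    raise RuntimeError("no healthy data center available")


if __name__ == "__main__":
    req = Request(customer_id="c-1", home_region="south")
    print(pick_data_center(req, {"dc-south-china", "dc-north-china"}))
    print(pick_data_center(req, {"dc-north-china"}))
```

In normal operation each customer's reads and writes stay inside one data center, so the long cross-region link is only used for background synchronization.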

So far, we have covered traffic loss in the four core processes of online applications, especially loss caused by architecture design, fragile infrastructure, and similar factors, along with solutions for each scenario. From the perspective of safe production, however, the purpose of all of this work is to prevent accidents before they happen. Unlike traditional software products, Internet systems can apply prevention at the production level, and we recommend two methods: comprehensive-procedure stress testing and online safe production drills (also called fault drills).

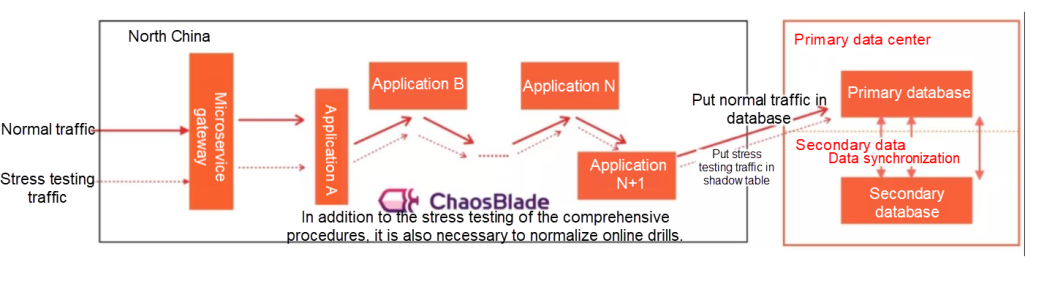

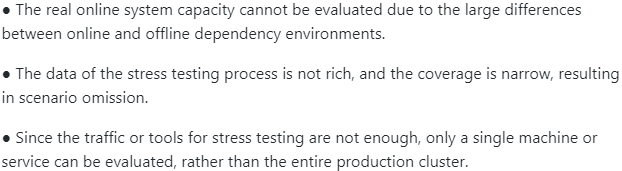

In software production, any system that is about to be released goes through various tests, including stress testing. Stress testing puts the system in a harsh environment to see how it performs. A typical stress test only constructs requests for the relevant interfaces and runs them against services deployed in an offline environment. If nothing goes wrong, the test report looks perfect. However, this kind of stress testing has several problems.

To achieve a comprehensive, systematic evaluation under real traffic, we recommend performing targeted performance stress testing in the production environment. However, many technical bottlenecks have to be overcome to make such comprehensive-procedure stress testing possible.

During implementation, because comprehensive-procedure stress testing affects so much of the system, the work has to proceed step by step before large-traffic stress testing officially starts. The process includes formulating the stress testing plan, pre-run verification, prefetching (warm-up), and the stress test itself. After the stress test, the results need to be analyzed to confirm that the entire system meets the preset goals.
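The final analysis step can be sketched as a comparison between measured results and preset goals. This is an illustration only: the goal names (`min_qps`, `max_p99_ms`, `max_error_rate`) and thresholds are assumptions, and a real report would cover many more dimensions.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def evaluate(latencies_ms: List[float], error_count: int,
             duration_s: float, goals: Dict[str, float]) -> Dict[str, object]:
    """Summarize a run and check it against hypothetical preset goals."""
    total = len(latencies_ms) + error_count
    report: Dict[str, object] = {
        "qps": total / duration_s,
        "p99_ms": percentile(latencies_ms, 99),
        "error_rate": error_count / total,
    }
    report["passed"] = (
        report["qps"] >= goals["min_qps"]
        and report["p99_ms"] <= goals["max_p99_ms"]
        and report["error_rate"] <= goals["max_error_rate"]
    )
    return report


if __name__ == "__main__":
    goals = {"min_qps": 5, "max_p99_ms": 50, "max_error_rate": 0.01}
    latencies = [10.0] * 99 + [200.0]   # one slow outlier in 100 requests
    print(evaluate(latencies, error_count=0, duration_s=10, goals=goals))
```

Checking a tail percentile rather than the average keeps a handful of very slow requests from hiding behind otherwise healthy numbers.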

As with comprehensive-procedure stress testing, we recommend running safe production drills online so that they are as close as possible to the production environment. The purpose of a drill is to test whether the system still behaves robustly in unexpected scenarios, such as unavailable services, infrastructure failure, and dependency failure. The scope of a drill usually ranges from a single application to a service cluster, and even to the infrastructure of an entire data center. Drill scenarios include the intra-process level (such as request timeouts), the process level (such as Full GC), the container level (such as high CPU usage), and the Kubernetes cluster level (such as Pod eviction and etcd failure). Drill scenarios should be chosen according to how resilient the business system needs to be.
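An intra-process drill can be as simple as wrapping a dependency call so that it is delayed or fails on purpose. The sketch below assumes a hypothetical `inject_fault` decorator and `query_inventory` dependency; it is not the API of any particular drill tool.

```python
import random
import time
from functools import wraps


class InjectedFault(Exception):
    """Raised by the drill harness in place of a real dependency failure."""


def inject_fault(failure_rate=0.0, delay_s=0.0, rng=random.random):
    """Decorator that delays a call and fails it with the given probability."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            if delay_s > 0:
                time.sleep(delay_s)  # simulate a slow dependency
            if rng() < failure_rate:
                raise InjectedFault(f"drill: {func.__name__} failed")
            return func(*args, **kwargs)
        return wrapper
    return decorator


def call_with_fallback(func, fallback, *args, **kwargs):
    """What the drill verifies: the caller degrades instead of crashing."""
    try:
        return func(*args, **kwargs)
    except InjectedFault:
        return fallback


@inject_fault(failure_rate=1.0)  # drill switched on: every call fails
def query_inventory(sku):
    return {"sku": sku, "stock": 12}


if __name__ == "__main__":
    print(call_with_fallback(query_inventory, {"stock": None}, "sku-1"))
```

Process, container, and cluster-level faults need tooling outside the application, but the question the drill answers is the same: does the caller degrade gracefully when the dependency misbehaves?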

Many scenarios and technical points in this three-part series come from failures of real online systems. We have productized our solutions for each stage and built them into Enterprise Distributed Application Service (EDAS). EDAS is committed to delivering lossless traffic across the full process of online applications. After six years of continuous R&D, EDAS provides customers with key lossless-traffic capabilities for traffic access and traffic service. Our next goal is to extend these capabilities across the whole application process, so that your application gets full-process lossless traffic by default and your business stays continuous.

Moving forward, we will continue building a complete technical middle platform around development and testing. We are also preparing a free downloadable version so that you can use many of the default lossless-traffic capabilities in any environment. On the delivery side, we will support multi-cluster and multi-application batch delivery, and add delivery across online public clouds, the free offline version, and hybrid clouds. These features will be available soon.

