Landmarks are one of the basic elements of a map: they indicate the names of locations or routes on the map. In the map JS API, the display effect and performance of landmarks are important.

The latest version of AMAP introduces Signed Distance Field (SDF) rendering and restructures the landmark code around it. This new method requires the frontend to compute the offset, avoidance, and triangulation of landmarks, which not only sharply increases the amount of computation, but also consumes a large amount of memory.

For example, in a 3D scenario, a large number of vertex coordinates need to be built. With about 10,000 landmarks with text, the data size is up to 8 (attributes) × 5 (1 icon + 4 words) × 6 (vertices) × 1E4, or about 2.4 million vertex coordinates. When Float32Array is used for storage, the space required is about 2.4E6 × 4 bytes, or roughly 10 MB. This is a massive map marking demo.
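The estimate above can be checked with a few lines of arithmetic. This is only a sketch: the variable names are illustrative, and the reading of "6 (vertices)" as six vertices per quad is an assumption.

```javascript
// Back-of-the-envelope estimate of the landmark vertex data.
const attributes = 8;          // attributes per vertex
const quadsPerLandmark = 5;    // 1 icon + 4 words
const verticesPerQuad = 6;     // assumption: six vertices per quad
const landmarkCount = 1e4;     // about 10,000 landmarks

const valueCount = attributes * quadsPerLandmark * verticesPerQuad * landmarkCount;
const byteCount = valueCount * Float32Array.BYTES_PER_ELEMENT; // 4 bytes per value

console.log(valueCount);                                 // 2400000
console.log((byteCount / 2 ** 20).toFixed(1) + ' MiB');  // 9.2 MiB
```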

High storage usage on the frontend requires that memory be used with caution. This article explores some issues related to frontend memory to help developers make better choices during the development process, reduce memory consumption, and improve program performance.

This section provides an overview of memory structure.

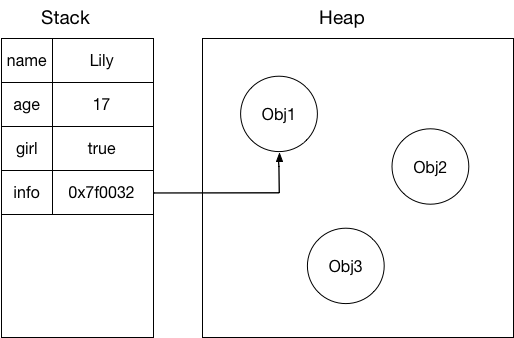

Memory is divided into heap and stack. The heap memory stores complex data types, whereas the stack memory stores simple data types for fast writes and reads. When data is accessed, its storage address is identified in the stack, and then the content that the variable stores in the heap is read based on the obtained address.

In the frontend, the types of data stored in the stack include small number, string, boolean, and complex address index.

Small number data is data of the number type, which is less than 32 bits in length.

Some complex data types, such as Array and Object, are stored in the heap. If we want to obtain object A that has been stored, we first find the storage address of this variable in the stack, and then use this address to find the corresponding data in the heap. The following figure illustrates this process:
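A small sketch can make the difference visible at the language level: assigning a simple value copies the value itself, whereas assigning an object copies only the address that points to the heap data. The variable names are illustrative.

```javascript
// Simple type: the value itself is copied on assignment.
let first = 1;
let second = first;
second = 2;
console.log(first); // 1

// Complex type: only the address is copied, so both names
// refer to the same data in the heap.
const objectA = { count: 1 };
const objectB = objectA;
objectB.count = 2;
console.log(objectA.count);       // 2
console.log(objectA === objectB); // true
```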

As simple data types are stored in the stack, they can be read and written faster than complex types that are stored in the heap. The following demo compares the write performance in the heap and in the stack:

    // Repeatedly write a local variable (stack).
    function inStack(){
        let number = 1E5;
        var a;
        while(number--){
            a = 1;
        }
    }

    var obj = {};

    // Repeatedly write a property of an object (heap).
    function inHeap(){
        let number = 1E5;
        while(number--){
            obj.key = 1;
        }
    }

Experiment environment 1:

mac OS/firefox v66.0.2

Result of the comparison:

Experiment environment 2:

mac OS/safari v11.1(13605.1.33.1.2)

Result of the comparison:

If each function is executed 100,000 times, writes in the stack are faster than in the heap.
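To repeat this comparison on your own platform, a minimal timing harness along the following lines may help. It is a sketch, not the harness used for the results above: `timeIt`, `writeLocal`, and `writeProperty` are hypothetical names, and a modern JIT compiler may optimize such simple loops heavily, so the numbers should be read with care.

```javascript
// Returns the best (smallest) duration in milliseconds over several runs.
function timeIt(fn, runs = 5) {
  const now = () =>
    typeof performance !== 'undefined' ? performance.now() : Date.now();
  let best = Infinity;
  for (let i = 0; i < runs; i++) {
    const start = now();
    fn();
    best = Math.min(best, now() - start);
  }
  return best;
}

// Writes a local variable 100,000 times.
function writeLocal() {
  let remaining = 1e5;
  let a;
  while (remaining--) {
    a = 1;
  }
  return a;
}

// Writes a property of an object 100,000 times.
const target = {};
function writeProperty() {
  let remaining = 1e5;
  while (remaining--) {
    target.key = 1;
  }
}

console.log('local variable :', timeIt(writeLocal), 'ms');
console.log('object property:', timeIt(writeProperty), 'ms');
```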

In JavaScript (JS), you can add and remove any number of properties to and from an object as needed, but the total number of properties of an object cannot exceed 2^32. An array in JS is variable-length and allows you to add and delete array elements as needed. Each element can be of a different type. In addition, you can add any properties to an array as to a common object.

First, we need to understand how JS stores an object.

To store complex data types, JS needs a data structure that features high performance for reads, inserts, and deletes.

Arrays have the fastest read and sequential write speed, but low insert and delete efficiency.

Linked lists are highly efficient in deletes and inserts, but inefficient in reads.

Despite the various advantages of different tree structures, they are more complicated to build and lead to low initialization efficiency.

Therefore, JS uses the hash table, a structure that performs well (although not the best) in all aspects, including initialization, queries, inserts, and deletes.

Hash Table

A hash table is a commonly used data structure for storage. Hash mapping converts an input of any length to an output of fixed length by using a certain hash algorithm.

For a JS object, each property is mapped to a different storage address according to a certain hash mapping function. When we search for a property, we also use this mapping method to find the location where the property is stored. The mapping algorithm cannot be too complex or too simple. A complex algorithm will make mapping inefficient, whereas an overly simple algorithm will cause frequent hash collisions as variables cannot be evenly mapped across a contiguous storage space.
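The trade-off can be illustrated with two toy mapping functions. Both are hypothetical and are not what any JS engine actually uses: `naiveIndex` is deliberately too simple, and `bucketIndex` is a small djb2-style string hash.

```javascript
// Too simple: every key of the same length lands at the same address.
function naiveIndex(key, bucketCount) {
  return key.length % bucketCount;
}

// A djb2-style string hash, reduced to a bucket index.
function bucketIndex(key, bucketCount) {
  let hash = 5381;
  for (let i = 0; i < key.length; i++) {
    // Keep the running hash an unsigned 32-bit integer.
    hash = ((hash * 33) ^ key.charCodeAt(i)) >>> 0;
  }
  return hash % bucketCount;
}

const keys = ['name', 'size', 'type', 'icon'];
console.log(keys.map((key) => naiveIndex(key, 8)));  // [ 4, 4, 4, 4 ]
console.log(keys.map((key) => bucketIndex(key, 8))); // depends on the characters, not only the length
```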

There are many well-known solutions for hash mapping algorithms, which will not be described in detail here.

Hash Collision

A hash collision occurs when variables are mapped to the same address after they are calculated by a certain hash function. To handle hash collisions, we need to process the new variables that are mapped to the same address.

As mentioned earlier, JS objects are variable, allowing properties to be added and deleted at any time (in most cases). To avoid hash collisions, you need to allocate a large contiguous storage space when you initially allocate memory to an object. This can result in great waste of space as most objects do not have many properties.

However, if a small amount of memory was allocated initially, hash collisions are bound to occur as the number of properties increases.

There are several classic methods to handle hash collisions, including open addressing, rehashing, chaining, and a shared overflow area.

Each of these methods has its own advantages and disadvantages. They will not be described further as hash collision is not the focus of this article.

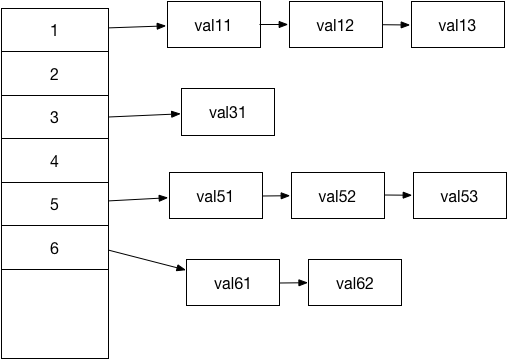

JS uses chaining to handle hash collisions. In the chaining method, values that are mapped to the same address are stored in a linked list. The following figure illustrates this method. The skewed arrows indicate that the memory space for the linked list is dynamically allocated and is not contiguous:

The mapped address space stores a pointer to a linked list. Each unit of the linked list stores the key and value of the property and the pointer to the next element.

The advantage of this storage method is that you do not need to allocate a large storage space at the beginning. Memory will be dynamically allocated to newly added properties.

This method also delivers better performance for index addition and removal.
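The chaining scheme described above can be sketched as a toy hash table. This is an illustration of the technique only, not the engine's real implementation; `ChainedHashTable` and its hash function are hypothetical.

```javascript
class ChainedHashTable {
  constructor(bucketCount = 8) {
    // Each slot holds the head of a linked list (or null).
    this.buckets = new Array(bucketCount).fill(null);
  }

  indexOf(key) {
    let hash = 0;
    for (let i = 0; i < key.length; i++) {
      hash = (hash * 31 + key.charCodeAt(i)) >>> 0;
    }
    return hash % this.buckets.length;
  }

  set(key, value) {
    const index = this.indexOf(key);
    for (let node = this.buckets[index]; node; node = node.next) {
      if (node.key === key) {
        node.value = value; // key already present: overwrite
        return;
      }
    }
    // Allocate a new node on demand and link it at the head of the chain.
    this.buckets[index] = { key, value, next: this.buckets[index] };
  }

  get(key) {
    for (let node = this.buckets[this.indexOf(key)]; node; node = node.next) {
      if (node.key === key) return node.value;
    }
    return undefined;
  }

  delete(key) {
    const index = this.indexOf(key);
    let previous = null;
    for (let node = this.buckets[index]; node; previous = node, node = node.next) {
      if (node.key === key) {
        if (previous) previous.next = node.next;
        else this.buckets[index] = node.next;
        return true;
      }
    }
    return false;
  }
}

// With only two buckets, five keys are forced to share chains.
const table = new ChainedHashTable(2);
['a', 'b', 'c', 'd', 'e'].forEach((key, i) => table.set(key, i));
console.log(table.get('d'));    // 3
console.log(table.delete('d')); // true
console.log(table.get('d'));    // undefined
```

No large block is reserved up front: each new property costs one small node, which matches the advantage described above.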

The preceding description also answers the question of why JS objects are so flexible, as mentioned at the beginning of this section.

Why are JS arrays also more flexible than arrays in other languages? This is because a JS array is a special type of object.

In an array, the key of a property is the index of the property. This object also has the length property and methods such as concat, slice, push, and pop.

This explains many questions, including the following ones:

Why can a JS array have different data types?

A JS array is an object and every data entry can be added to the linked list as a new type.

Why is a JS array variable without a preset length?

The answer to this question is the same as that of the first question.

Why can properties be added to an array as to an object?

A JS array is actually an object.
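These three answers can be observed directly in any JS console. The following lines use only standard language behavior; the variable name is illustrative.

```javascript
const list = [1, 'two', { three: 3 }];  // elements of different types

console.log(typeof list);         // object
console.log(Array.isArray(list)); // true

// A property can be added to an array as to a common object.
list.label = 'mixed';
console.log(Object.keys(list));   // [ '0', '1', '2', 'label' ]
console.log(list.length);         // 3

// No preset length: writing past the end simply grows the array.
list[5] = 'sparse';
console.log(list.length);         // 6
```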

Memory Attacks

Every data storage method has disadvantages. This hash chaining algorithm can incur very high memory consumption in extreme cases.

A good hash mapping algorithm evenly maps data to different addresses. Conversely, if someone constructs a large enough set of keys that all map to the linked list at the same address, resource consumption can become very high: reading or inserting an entry has to traverse an ever longer list, becomes extremely slow, and can eventually crash the program. This is what we call a memory attack.

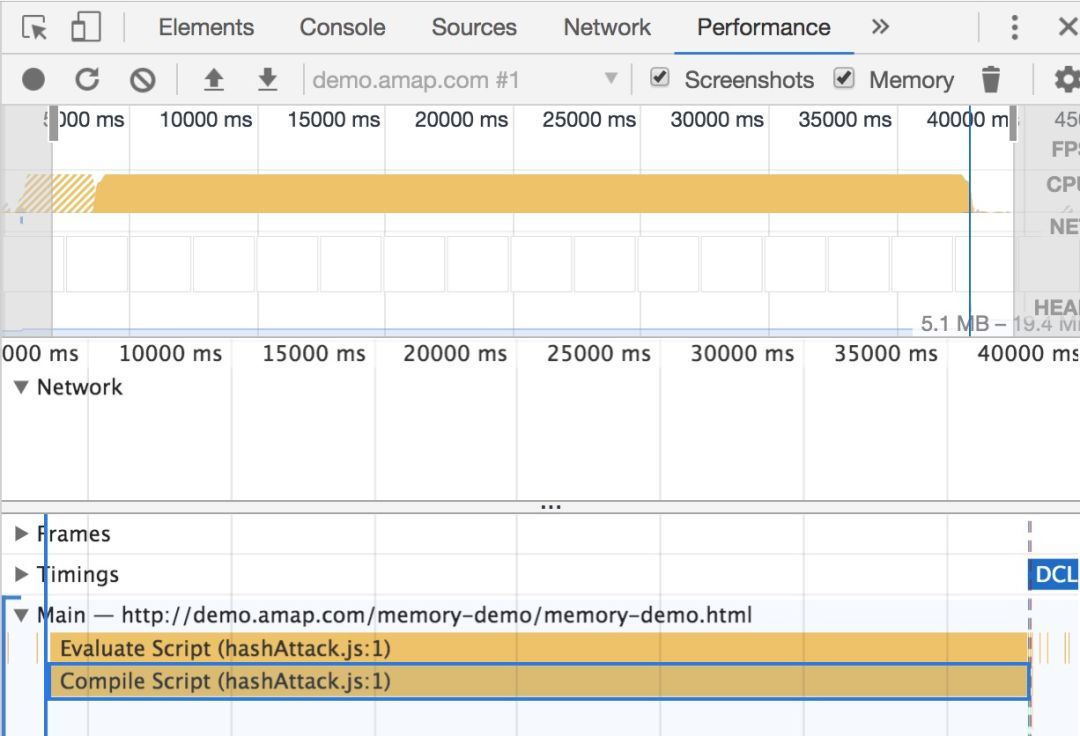

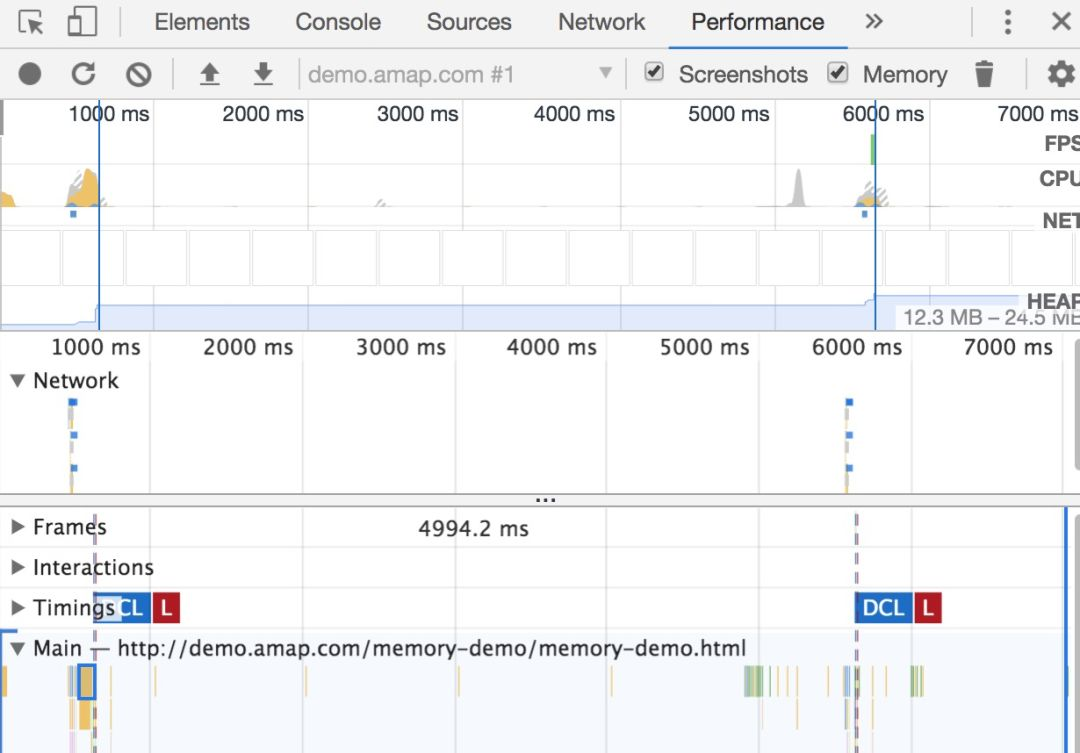

In Figure 1, we construct a JSON object and make keys of the object hit the linked list pointed to by the same address multiple times. The attachment is JS code, which contains only one object that is specially constructed (reference source). Figure 2 shows the screenshot of performance viewed under the Performance tab.

The following figure shows the performance comparison for objects with the same size.

As the screenshot of the performance shows, it took 40 seconds to load an object with 65535 keys. The time for running non-attack data of the same size is negligible.

Attackers might exploit this vulnerability to render service unavailable by building large JSON data to raise server memory usage to 100%. Developers should take care to prevent these risks from happening.

However, this example also demonstrates how objects are stored as mentioned earlier.
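The cost of such colliding keys can be estimated with a toy model of a chained table. This sketch does not reproduce the attack data referenced above and does not use a real engine's hash function; `countChainSteps` and the two mapping functions are hypothetical.

```javascript
// Counts how many chain entries must be scanned while inserting unique keys.
function countChainSteps(hashFn, keys, bucketCount) {
  const buckets = Array.from({ length: bucketCount }, () => []);
  let steps = 0;
  for (const key of keys) {
    const chain = buckets[hashFn(key) % bucketCount];
    steps += chain.length; // an insert first scans the chain for the key
    chain.push(key);
  }
  return steps;
}

const keys = Array.from({ length: 1000 }, (_, i) => i);

const evenly = (key) => key; // every key gets its own address
const colliding = () => 0;   // every key maps to the same address

console.log(countChainSteps(evenly, keys, 1024));    // 0
console.log(countChainSteps(colliding, keys, 1024)); // 499500
```

With n colliding keys the work grows as n(n - 1)/2, which is consistent with non-attack data of the same size loading in negligible time.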

From the preceding introduction and experiments, we know that the arrays we used are actually pseudo-arrays. These pseudo-arrays are convenient to work with. However, they also bring another problem: they cannot reach the raw speed of true array indexing. As mentioned at the beginning of this article, with millions of data entries, memory space needs to be dynamically allocated each time a new entry is added. When data is indexed, the performance cost of traversing the linked list becomes obvious.

Fortunately, in ES6, JS provides a new way to get real arrays: ArrayBuffer, TypedArray, and DataView.

ArrayBuffer is used to represent a fixed-length block of contiguous memory. We cannot directly operate on this memory block. Instead, we manipulate its content through TypedArray and DataView.
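As a brief sketch of this relationship, several views can be created over one ArrayBuffer, and all of them read and write the same bytes. Only standard APIs are used; the variable names are illustrative.

```javascript
const buffer = new ArrayBuffer(4);       // 4 bytes of contiguous memory
const signed = new Int8Array(buffer);    // view 1: signed 8-bit integers
const unsigned = new Uint8Array(buffer); // view 2: unsigned 8-bit integers
const view = new DataView(buffer);       // view 3: explicit offsets and types

signed[0] = -1;
console.log(unsigned[0]); // 255 (same byte, interpreted as unsigned)

view.setUint8(1, 200);
console.log(signed[1]);   // -56 (same byte, interpreted as signed)

console.log(buffer.byteLength); // 4 (fixed at creation)
```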

TypedArray is a general term for Int8Array, Int16Array, Int32Array, Float32Array, and other array types. For more information, see https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/TypedArray

Take Int8Array as an example. The name can be split into three parts: Int, 8, and Array.

The Int8Array object is an array that stores signed integers. Each data entry occupies 8 bits with the first bit as the sign bit. Each element in the array can therefore represent a value from -128 (-2^7) to 127 (2^7 - 1). Values outside this range wrap around.

    // TypedArray
    var typedArray = new Int8Array(10);
    typedArray[0] = 8;
    typedArray[1] = 127;
    typedArray[2] = 128; // out of range: wraps around to -128
    typedArray[3] = 256; // out of range: wraps around to 0
    console.log("typedArray"," -- ", typedArray );
    // Int8Array(10) [8, 127, -128, 0, 0, 0, 0, 0, 0, 0]

Other types can be understood in the same way. The wider the element type, the more memory each element occupies. Therefore, when you use TypedArray, you should know your data well in order to choose the most memory-efficient type possible.
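As a rough illustration of that choice, the sketch below prints the memory needed for one million elements of several types and picks the narrowest integer type for a known value range. `smallestIntArrayType` is a hypothetical helper, not a built-in.

```javascript
const elementCount = 1e6;
for (const Type of [Int8Array, Int16Array, Int32Array, Float32Array, Float64Array]) {
  // BYTES_PER_ELEMENT is a standard static property of every typed array type.
  console.log(Type.name, elementCount * Type.BYTES_PER_ELEMENT, 'bytes');
}

// Hypothetical helper: the narrowest signed integer type that can hold
// every integer in [min, max].
function smallestIntArrayType(min, max) {
  if (min >= -128 && max <= 127) return Int8Array;
  if (min >= -32768 && max <= 32767) return Int16Array;
  if (min >= -2147483648 && max <= 2147483647) return Int32Array;
  return Float64Array; // exact for integers up to 2^53
}

console.log(smallestIntArrayType(0, 100).name);    // Int8Array
console.log(smallestIntArrayType(-200, 200).name); // Int16Array
```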

DataView is more flexible than TypedArray. Each element of the TypedArray object is fixed in length. For example, an Int8Array object can only store data of the Int8 type. DataView, however, can dynamically allocate length for each element after an ArrayBuffer object is passed, which means it can store data of different lengths and types.

    // DataView
    var arrayBuffer = new ArrayBuffer(8 * 10);
    var dataView = new DataView(arrayBuffer);
    dataView.setInt8(0, 2);
    dataView.setFloat32(8, 65535);

    // Obtain different data, starting from the offset.
    dataView.getInt8(0);
    // 2
    dataView.getFloat32(8);
    // 65535

DataView provides more flexible data storage and minimizes memory usage at the cost of some performance. The following example compares the performance of DataView and TypedArray with the same data size:

// Ordinary arrays

function arrayFunc(){

var length = 2E6;

var array = [];

var index = 0;

while(length--){

array[index] = 10;

index ++;

}

}

// dataView

function dataViewFunc(){

var length = 2E6;

var arrayBuffer = new ArrayBuffer(length);

var dataView = new DataView(arrayBuffer);

var index = 0;

while(length--){

dataView.setInt8(index, 10);

index ++;

}

}

// typedArray

function typedArrayFunc(){

var length = 2E6;

var typedArray = new Int8Array(length);

var index = 0;

while(length--){

typedArray[index++] = 10;

}

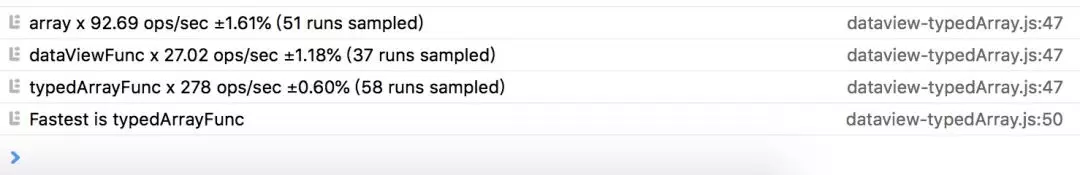

}Experiment environment 1:

mac OS/safari v11.1(13605.1.33.1.2)

Result of the comparison:

Experiment environment 2:

mac OS/firefox v66.0.2

Result of the comparison:

On Safari and Firefox, DataView underperforms ordinary arrays. Therefore, we recommend that you use TypedArray for better performance.

Of course, the comparison results are not fixed. For example, a recent version of Google's V8 engine has resolved the performance problem of DataView operations.

The biggest performance problem of DataView was the overhead of crossing from JS into C++. After the V8 team rewrote this part by using the CodeStubAssembler (CSA) language, TurboFan (V8's optimizing compiler) can be used to avoid the performance loss of this transition.

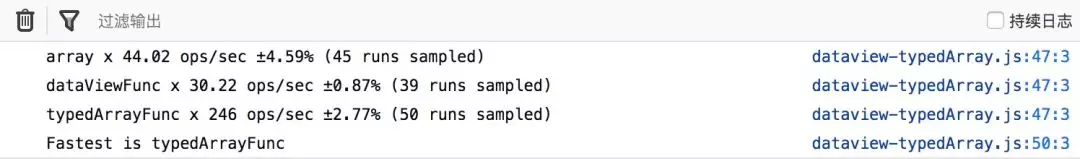

Experiment environment 3:

mac OS / chrome v73.0.3683.86

Result of the comparison:

After the optimization of DataView by Chrome, the performance difference between DataView and TypedArray is small. When variable-length data storage is required, DataView saves more memory than TypedArray.
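The memory saving can be sketched with a packed record layout. The layout below (one unsigned byte followed by two 32-bit floats) and the helper names are hypothetical, chosen only to show fields of different lengths sharing one buffer.

```javascript
// One record: kind (Uint8, 1 byte) + x (Float32, 4 bytes) + y (Float32, 4 bytes).
const RECORD_BYTES = 1 + 4 + 4;

function writeRecord(view, index, kind, x, y) {
  const offset = index * RECORD_BYTES;
  view.setUint8(offset, kind);
  view.setFloat32(offset + 1, x, true); // true = little-endian
  view.setFloat32(offset + 5, y, true);
}

function readRecord(view, index) {
  const offset = index * RECORD_BYTES;
  return {
    kind: view.getUint8(offset),
    x: view.getFloat32(offset + 1, true),
    y: view.getFloat32(offset + 5, true),
  };
}

const recordCount = 1000;
const packed = new DataView(new ArrayBuffer(RECORD_BYTES * recordCount));
writeRecord(packed, 2, 7, 1.5, -2.25);

console.log(readRecord(packed, 2)); // { kind: 7, x: 1.5, y: -2.25 }
console.log(packed.byteLength);     // 9000

// Storing the same three fields in a Float32Array takes 4 bytes per field.
console.log(new Float32Array(3 * recordCount).byteLength); // 12000
```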

For information about the specific performance comparison, see https://v8.dev/blog/dataview

The shared memory mechanism is an important part of what we are discussing when we talk about memory.

By default, all JS tasks run in the main thread. With the views described above, we can improve performance to a certain extent. However, when the program becomes too complex, we want to move the computation to a separate thread by using a Web Worker.

The problem with starting a new thread is communication. The postMessage method of a Web Worker handles this communication, but it has to copy the data from the memory of one thread to the memory of the other. This copy adds overhead.

Shared memory, as the name implies, allows different threads to share the same block of memory. These threads can operate on that memory or read data from it. The overall performance is greatly improved because the data no longer needs to be copied.

Use the traditional postMessage method to transmit data:

main.js

    // main
    var worker = new Worker('./worker.js');

    worker.onmessage = function getMessageFromWorker(e){
        // Compare the modified data with the original data. The result indicates that the data has been cloned.
        console.log("e.data"," -- ", e.data );
        // [2, 3, 4]

        // msg remains unchanged.
        console.log("msg"," -- ", msg );
        // [1, 2, 3]
    };

    var msg = [1, 2, 3];
    worker.postMessage(msg);

worker.js

    // worker
    onmessage = function(e){
        var newData = increaseData(e.data);
        postMessage(newData);
    };

    function increaseData(data){
        for(let i = 0; i < data.length; i++){
            data[i] += 1;
        }
        return data;
    }

The preceding code shows that data in each message is cloned and then transferred in different threads. The larger the data size, the slower the transfer speed.
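The copy made by postMessage follows the structured clone algorithm, which can be observed without a worker. This sketch assumes a runtime that provides the global `structuredClone` function (recent browsers and Node.js 17 or later).

```javascript
const original = [1, 2, 3];

// structuredClone performs the same kind of deep copy that postMessage
// applies to a message before delivering it to the other thread.
const copy = structuredClone(original);
copy[0] = 99;

console.log(original);          // [ 1, 2, 3 ]
console.log(copy);              // [ 99, 2, 3 ]
console.log(copy === original); // false
```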

Use SharedArrayBuffer to transfer messages:

main.js

    var worker = new Worker('./sharedArrayBufferWorker.js');

    worker.onmessage = function(e){
        // Data that has been calculated is transferred back to the main thread.
        console.log("e.data"," -- ", e.data );
        // SharedArrayBuffer(3) {}

        // The original data of the main thread has changed when you use SharedArrayBuffer, which is different from when you use the traditional postMessage method.
        console.log("int8Array-outer"," -- ", int8Array );
        // Int8Array(3) [2, 3, 4]
    };

    var sharedArrayBuffer = new SharedArrayBuffer(3);
    var int8Array = new Int8Array(sharedArrayBuffer);

    int8Array[0] = 1;
    int8Array[1] = 2;
    int8Array[2] = 3;

    worker.postMessage(sharedArrayBuffer);

sharedArrayBufferWorker.js

    onmessage = function(e){
        var arrayData = increaseData(e.data);
        postMessage(arrayData);
    };

    function increaseData(arrayData){
        // Create a view over the shared memory; no data is copied.
        var int8Array = new Int8Array(arrayData);
        for(let i = 0; i < int8Array.length; i++){
            int8Array[i] += 1;
        }
        return arrayData;
    }

For the data passed through the shared memory, after the data is changed in the worker, the original data in the main thread is also changed.
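Because several threads can write the same memory, concurrent updates are usually coordinated with the standard `Atomics` object. The sketch below runs in a single thread purely to show the API: two views stand in for the main thread and a worker. It assumes that `SharedArrayBuffer` is available in the runtime (for example Node.js, or a browser page that meets the requirements for it).

```javascript
const shared = new SharedArrayBuffer(4 * Int32Array.BYTES_PER_ELEMENT);

// Two views over the same shared memory, standing in for two threads.
const mainView = new Int32Array(shared);
const workerView = new Int32Array(shared);

mainView[0] = 1;
console.log(workerView[0]); // 1 (no copy was made)

// Atomics.add performs the read-modify-write as one indivisible step
// and returns the previous value.
const previous = Atomics.add(workerView, 0, 5);
console.log(previous);                  // 1
console.log(Atomics.load(mainView, 0)); // 6
```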

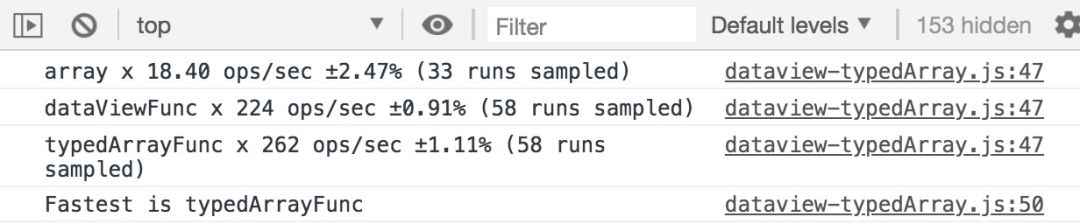

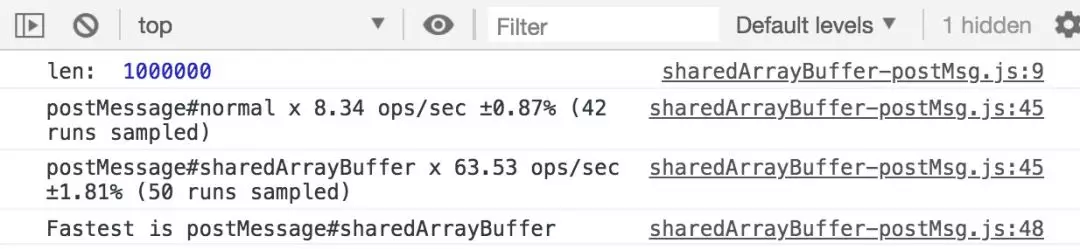

Experiment environment 1:

mac OS/chrome v73.0.3683.86,

100,000 data entries

Result of the comparison:

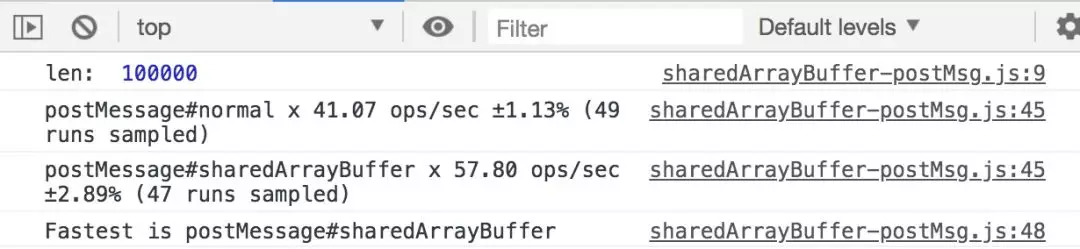

Experiment environment 2:

mac OS/chrome v73.0.3683.86,

1,000,000 data entries

Result of the comparison:

As shown in the figure, in the experiment with 100,000 data entries, sharedArrayBuffer does not have an obvious advantage. However, in the experiment with 1,000,000 data entries, sharedArrayBuffer provides a significantly improved performance.

SharedArrayBuffer can be used not only in Web Workers, but also in wasm. This technique greatly improves performance and keeps data transfer from becoming a performance bottleneck.

However, SharedArrayBuffer has limited browser support. At the time of writing, it is supported by Chrome 68 and later. The latest version of Firefox also supports it, but users must enable it manually. Safari does not support it.

Memory detection and garbage collection are integral parts of memory-related topics. However, this article will not go into details as you can easily find numerous articles on them.

Now that we have covered frontend memory, its performance, and usage optimization, we can look at how to detect memory usage. In Chrome, you can use the Memory panel in DevTools to perform memory detection and analysis.

For how to use memory detection, see https://developers.google.com/web/tools/chrome-devtools/memory-problems/heap-snapshots

Unlike C++, JS does not require you to manually allocate and deallocate memory, and instead has its own set of dynamic garbage collection (GC) policies. There are many types of garbage collection mechanisms that are commonly used.

The frontend uses the Mark-Sweep method, which can resolve circular references. For more information, see https://developer.mozilla.org/en-US/docs/Web/JavaScript/Memory_Management
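Why Mark-Sweep handles circular references can be shown with a toy model: anything that cannot be reached from the roots is garbage, even if the unreachable objects still reference each other. This is a simplified sketch, not how an engine implements GC; `markSweep` and the `refs` field are hypothetical.

```javascript
// Returns the objects in `heap` that are not reachable from `roots`.
function markSweep(heap, roots) {
  // Mark phase: follow references starting from the roots.
  const marked = new Set();
  const pending = [...roots];
  while (pending.length > 0) {
    const current = pending.pop();
    if (marked.has(current)) continue;
    marked.add(current);
    for (const ref of current.refs) pending.push(ref);
  }
  // Sweep phase: everything left unmarked can be reclaimed.
  return heap.filter((object) => !marked.has(object));
}

const root = { name: 'root', refs: [] };
const child = { name: 'child', refs: [] };
const cycleA = { name: 'cycleA', refs: [] };
const cycleB = { name: 'cycleB', refs: [] };

root.refs.push(child);
cycleA.refs.push(cycleB); // cycleA and cycleB reference each other,
cycleB.refs.push(cycleA); // but nothing reachable references them.

const garbage = markSweep([root, child, cycleA, cycleB], [root]);
console.log(garbage.map((object) => object.name)); // [ 'cycleA', 'cycleB' ]
```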

After you have learned about the frontend memory mechanisms, when you create any data types, you can choose the storage method that is most applicable to your business scenarios. For example, you can use the following instructions:

In the case of our map mark revisions, to save the consumption caused by dynamic memory allocation, we use TypedArray to store vast amounts of data. In addition, most data processing is done in workers. In order to reduce GC, we have changed the declaration of a large number of variables inside loops to external one-time declaration, which greatly helps to improve performance.
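The last point can be sketched as follows. The example is illustrative rather than taken from the AMAP code: the function names and the data layout are hypothetical, and engines may optimize away some short-lived allocations on their own, so the benefit should be measured.

```javascript
const POINT_COUNT = 1e5;
const positions = new Float32Array(POINT_COUNT * 2);

// Declares a new array inside the loop: one short-lived object per iteration.
function fillAllocating() {
  for (let i = 0; i < POINT_COUNT; i++) {
    const point = [i * 0.5, i * 0.25];
    positions[2 * i] = point[0];
    positions[2 * i + 1] = point[1];
  }
}

// Declares the scratch buffer once, outside the loop, and reuses it.
const scratch = new Float32Array(2);
function fillReusing() {
  for (let i = 0; i < POINT_COUNT; i++) {
    scratch[0] = i * 0.5;
    scratch[1] = i * 0.25;
    positions[2 * i] = scratch[0];
    positions[2 * i + 1] = scratch[1];
  }
}

fillAllocating();
const expected = positions.slice(); // copy of the first result
fillReusing();
console.log(positions.every((value, i) => value === expected[i])); // true
```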

Finally, the results of these performance tests are not fixed (as the Chrome optimization shows), but the underlying mechanisms are similar. Therefore, it is more reliable to write your own test cases in order to get an accurate performance analysis at different points in time and on different platforms.
